By creating a Hudi data source, you can enable Dataphin to read business data from Hudi or write data to Hudi. This topic describes how to create a Hudi data source.

Background information

Apache Hudi is a general-purpose big data storage system that brings core warehouse and database functionality directly to the data lake and supports inserting, updating, and deleting data at the record level. For more information, see the Apache Hudi official website.

Permissions

Only users with custom global roles that have the Create Data Source permission and users with the super administrator, data source administrator, domain architect, or project administrator role can create data sources.

Procedure

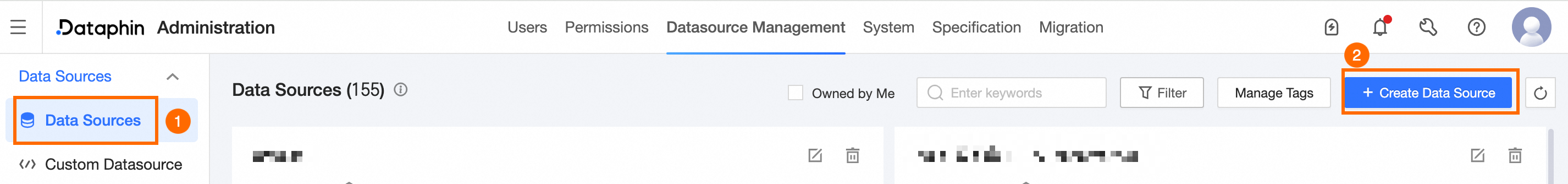

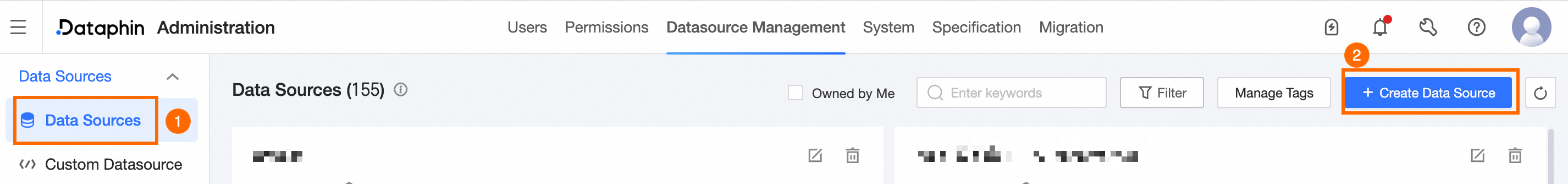

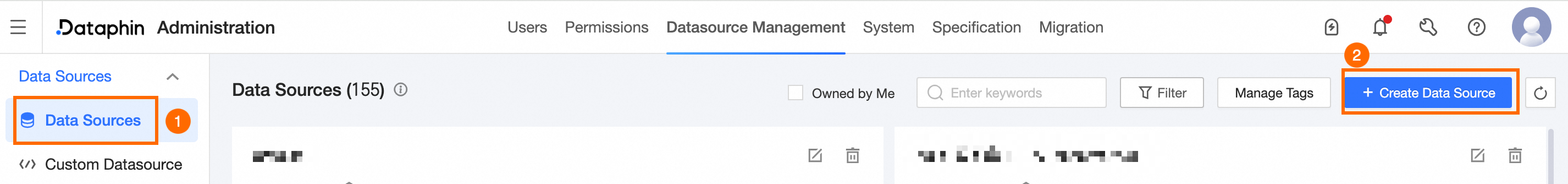

On the Dataphin homepage, click Management Center > Data Source Management in the top navigation bar.

On the Datasource page, click +Create Data Source.

On the Create Data Source page, select Hudi from the Big Data section.

If you have recently used Hudi, you can also select it from the Recently Used section, or find it quickly by entering keywords in the search box.

On the Create Hudi Data Source page, configure the connection parameters.

Configure the basic information of the data source.

Parameter

Description

Data Source Name

The name must meet the following requirements:

It can contain only Chinese characters, letters, digits, underscores (_), and hyphens (-).

It cannot exceed 64 characters in length.

Datasource Code

After you configure the data source code, you can reference tables in the data source in Flink_SQL tasks by using the data_source_code.table_name or data_source_code.schema.table_name format. To automatically access the data source that corresponds to the current environment, use the variable format ${data_source_code}.table or ${data_source_code}.schema.table. For more information, see Dataphin data source table development method.

Important: The data source code cannot be modified after it is configured successfully.

After the data source code is configured successfully, you can preview data on the object details page in the asset directory and asset inventory.

In Flink SQL, only MySQL, Hologres, MaxCompute, Oracle, StarRocks, Hive, and SelectDB data sources are currently supported.

Data Source Description

A brief description of the data source. It cannot exceed 128 characters.

Data Source Configuration

Select the data source that you want to configure:

If your business data source distinguishes between production and development data sources, select Production + Development Data Source.

If your business data source does not distinguish between production and development data sources, select Production Data Source.
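The naming rule for Data Source Name and the reference formats for Datasource Code described above can be sketched in Python. This is a minimal illustration, not Dataphin's own validation logic. The function names are hypothetical, "Chinese characters" is approximated by the CJK Unified Ideographs block (U+4E00 to U+9FFF), "letters" is assumed to mean ASCII letters, and length is counted in Unicode code points.

```python
import re
from typing import Optional

# Assumption: "Chinese characters" = U+4E00..U+9FFF, "letters" = ASCII letters.
_NAME_PATTERN = re.compile(r"[\u4e00-\u9fffA-Za-z0-9_-]+")
MAX_NAME_LENGTH = 64


def is_valid_data_source_name(name: str) -> bool:
    """Return True if the name is non-empty, at most 64 characters long,
    and contains only the allowed characters."""
    return len(name) <= MAX_NAME_LENGTH and _NAME_PATTERN.fullmatch(name) is not None


def table_reference(code: str, table: str, schema: Optional[str] = None,
                    env_aware: bool = False) -> str:
    """Build a table reference from a data source code.

    env_aware=False gives data_source_code.table_name (optionally with a schema).
    env_aware=True gives the ${data_source_code}.table variable form.
    """
    prefix = "${" + code + "}" if env_aware else code
    parts = [prefix, schema, table] if schema else [prefix, table]
    return ".".join(parts)


if __name__ == "__main__":
    # "hudi_ds", "ods", and "orders" are hypothetical names used for illustration.
    print(is_valid_data_source_name("hudi_prod-01"))
    print(table_reference("hudi_ds", "orders"))
    print(table_reference("hudi_ds", "orders", schema="ods", env_aware=True))
```

The env_aware flag only switches between the two documented formats. Resolving ${data_source_code} to the production or development data source is done by Dataphin at run time, not by this helper.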

Configure the connection parameters between the data source and Dataphin.

If you select Production + Development Data Source for Data Source Configuration, you need to configure the connection information for both the production data source and the development data source. If you select Production Data Source, you only need to configure the connection information for the production data source.

Note: Typically, production and development data sources should be configured as separate data sources to isolate the development environment from the production environment and reduce the impact of the development data source on the production data source. However, Dataphin also supports configuring them as the same data source with identical parameter values.

Parameter

Description

Storage Configuration

Supports HDFS or OSS storage.

Storage Path

HDFS Storage: Enter the HDFS storage path. Make sure that the Flink user has permission to access the path. The format is:

hdfs://host:port/path

OSS Storage: Enter the OSS storage path. Example:

oss://dp-oss/hudi/

If you use OSS storage, you also need to enter the OSS Endpoint, AccessKeyID, and AccessKeySecret.

Endpoint: If you use Alibaba Cloud OSS, enter the endpoint that matches the region where the OSS bucket is located and the network type that you use. For more information, see OSS Region and Endpoint reference table for public cloud.

AccessKeyID, AccessKeySecret: The AccessKey ID and AccessKey Secret of the account that owns the OSS bucket. For information about how to obtain them, see Obtain an AccessKey pair.

Metadata Synchronization

When enabled, the schema of Hudi tables will be synchronized to the Hive MetaStore.

If you use HDFS storage configuration and enable metadata synchronization, you also need to configure the following information:

Version: Supports CDH6:2.1.1 and CDP7.1.3:3.1.300.

Synchronization Mode: Supports hms and jdbc. Different parameters need to be configured for each synchronization mode:

hms: The thrift address of the Hive metadata database and the database name to synchronize to Hive.

Important: If you select hms, the metastore server must be enabled in Hive.

jdbc: The jdbc address of the Hive metadata database, the username of the Hive metadata database, the password of the Hive metadata database, and the database name to synchronize to Hive.

If you use OSS storage configuration and enable metadata synchronization, you also need to configure the following information:

Synchronization Mode: Default is hms and cannot be modified.

Metadata Target Database: Default is DLF and cannot be modified.

DLF Service Region Name: Enter the region domain name of the DLF service. For more information, see DLF Region and Endpoint reference table.

DLF Service Endpoint: Enter the Endpoint address of the DLF service. For more information, see DLF Region and Endpoint reference table.

Database Name to Synchronize to Hive: Enter the database name to synchronize to Hive.
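The storage path formats and the parameters that metadata synchronization requires for each storage type, as listed above, can be summarized in a short Python sketch. The function names and parameter labels are illustrative and are not a Dataphin API. The path check is a strict reading of the hdfs://host:port/path format and only inspects the shape of the path, not whether the path is reachable or whether the Flink user can access it.

```python
from typing import List
from urllib.parse import urlparse


def is_valid_storage_path(storage: str, path: str) -> bool:
    """Check that a path has the documented shape for the chosen storage type."""
    parsed = urlparse(path)
    if storage == "HDFS":
        try:
            port = parsed.port  # raises ValueError for a malformed port
        except ValueError:
            return False
        # Expected shape: hdfs://host:port/path
        return (parsed.scheme == "hdfs" and bool(parsed.hostname)
                and port is not None and parsed.path.startswith("/"))
    if storage == "OSS":
        # Expected shape: oss://bucket/path, for example oss://dp-oss/hudi/
        return parsed.scheme == "oss" and bool(parsed.netloc)
    return False


def required_sync_parameters(storage: str, sync_mode: str = "hms") -> List[str]:
    """List the settings to fill in when metadata synchronization is enabled."""
    if storage == "HDFS":
        common = ["Version", "Synchronization Mode"]
        if sync_mode == "hms":
            return common + ["Hive metastore thrift address",
                             "Database name to synchronize to Hive"]
        if sync_mode == "jdbc":
            return common + ["Hive metadata database JDBC address",
                             "Hive metadata database username",
                             "Hive metadata database password",
                             "Database name to synchronize to Hive"]
        raise ValueError("HDFS storage supports only hms or jdbc")
    if storage == "OSS":
        if sync_mode != "hms":
            raise ValueError("OSS storage supports only hms")
        # Synchronization Mode (hms) and Metadata Target Database (DLF) are fixed.
        return ["DLF Service Region Name", "DLF Service Endpoint",
                "Database Name to Synchronize to Hive"]
    raise ValueError("storage must be HDFS or OSS")


if __name__ == "__main__":
    # "namenode" and port 8020 are hypothetical values used for illustration.
    print(is_valid_storage_path("HDFS", "hdfs://namenode:8020/warehouse/hudi"))
    print(is_valid_storage_path("OSS", "oss://dp-oss/hudi/"))
    print(required_sync_parameters("HDFS", "jdbc"))
```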