This topic describes how to connect to Dataphin by using the JDBC driver.

Prerequisites

You must enable the Dataphin OpenAPI feature before using the JDBC driver.

Overview

Two authentication modes: The Dataphin JDBC driver supports two authentication modes: Simple Mode and Proxy Mode. For more information, see Authentication modes.

Consistent execution results: Executing an SQL statement with the Dataphin JDBC driver is equivalent to executing it in the Dataphin console. Therefore, the query results are identical. Configurations in Dataphin, such as permissions, Desensitization Rules, security settings, and code specifications, also apply to executions through the JDBC driver.

Limitations

You cannot connect to Dataphin by using JDBC when the Compute Engine is Databricks, SelectDB, Doris, or Aliyun EMR Serverless Spark.

The Dataphin JDBC driver processes SQL statements through Dataphin for preprocessing, such as SQL translation and data masking. Result sets are also transmitted and forwarded through Dataphin. This process introduces performance overhead and increases query times compared to querying the Compute Engine directly.

The Dataphin JDBC driver submits SQL statements to Dataphin for processing via the OpenAPI before sending them to the underlying Compute Engine. Before using the Dataphin JDBC driver, evaluate your expected call volume and determine if Scaling is necessary for the Dataphin cluster and Compute Engine.

NoteIf you cannot estimate the required capacity, contact the Dataphin operations and maintenance (O&M) team to request a capacity assessment for your Dataphin cluster. This assessment does not include the Compute Engine.

Dataphin does not currently support traffic or concurrency control. Carefully assess the potential impact.

JDBC driver version and download link

To obtain the driver JAR file, contact the Dataphin O&M team.

Connection parameters

When the Dataphin JDBC driver uses a platform-level AccessKey ID and AccessKey Secret (Proxy Mode), you can use the SET statement to specify the user to access resources.

set dp_delegation_uid = 'target_source_user_uid';set dp_delegation_name = 'target_user_name';

JDBC URL format: jdbc:dataphin://host:port/catalog?[tenant_id=TenantID][&ssl=true][&log_level=Log_Level][&user=UserName][&password=PassWord][&delegation_uid=DelegationUid][&account_type=AccountType][&connect_timeout=ConnectTimeout][&engine=engine_type][compute_project=ProjectName]

Parameter names are case-sensitive.

Square brackets

[]indicate optional parameters and should not be included in the final URL.

Parameter | Required | Description | Example |

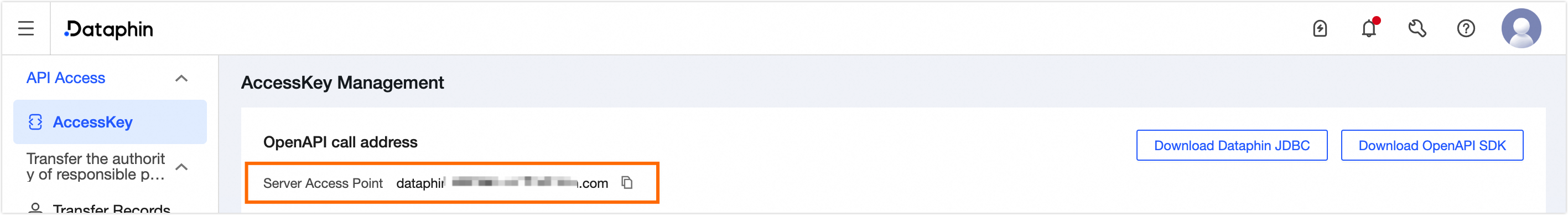

host | Yes | The domain name of the Dataphin OpenAPI. The domain name is listed under OpenAPI Invocation Address on the Personal Center > AccessKey Management page.

| dataphin-openapi.****.aliyun.com |

port | No | The port number depends on whether HTTPS is enabled for the Dataphin OpenAPI. Use | 80 |

catalog | Yes | The default query scope.

Note

| Exprojectname |

tenant_id | Yes | The ID of the tenant to be queried. | 111***111 |

ssl | No | Specifies whether to use HTTPS.

| False |

currentschema | No | The schema of the Data Source.

| information_schema |

compute_project | No | The English name of the project for executing SQL queries. This project and its associated Compute Source must have read permissions for the queried tables.

| Exprojectname |

user | Yes | The AccessKey ID of the user or platform. For Proxy Mode, use the platform's AccessKey ID.

| kIB**********PT0 |

log_level | No | The logging level. Valid values are:

| DEBUG |

password | Yes | The AccessKey Secret for the specified user.

| Cy**************r2T |

delegation_uid | No | Specifies the Dataphin user to impersonate when connecting with a platform AccessKey. The ID provided for the impersonated user must match the specified account_type. Setting this parameter enables the Proxy Mode authentication method. | 999***999 |

account_type | No | For Proxy Mode, you must specify the account type of the user being impersonated.

Note

| USER_ID |

connect_timeout | No | The connection timeout in seconds.

| 10 |

engine | No | Specifies the offline engine for the project (project_name) defined in the session or connection string. If the Compute Source is Hadoop, the default engine is hive, but you can set this parameter to Impala or Spark. The selected engine type must be pre-configured in the Compute Source. If an unsupported engine type is specified for the project, the setting is ignored, and a warning is issued. Valid values are:

Note This parameter is ignored when accessing a Data Source. | MaxCompute |

acceleration_source | No | Specifies the Acceleration Source. You can enter the code of any Acceleration Source within the tenant. | starrocks_code |

acceleration_resource_group | No | The acceleration Resource Group. You can select a configured Resource Group from the specified Acceleration Source. | starrocks_resource_group |

Authentication modes

Simple mode

Set the username to the user's AccessKey ID and the password to the user's AccessKey Secret. The connection is then authenticated as this user. To view your AccessKey, see Manage Dataphin OpenAPI AccessKeys.

When you connect to Dataphin through the JDBC driver, Dataphin authenticates the AccessKey and authorizes the user for the requested resources or SQL statements. Dataphin then executes the SQL queries as the authenticated user.

Proxy mode

To use Proxy Mode, you must contact the Dataphin O&M team to enable and configure this feature.

Proxy Mode is typically used for system-level integrations with Dataphin. This mode avoids the need to distribute or manage individual user AccessKeys. You can specify the user to impersonate in the JDBC connection URL, ensuring that all operations are authorized based on that user's permissions. A platform-level AccessKey has high privileges and can impersonate any user. As shown in the following figure, the client is configured with a platform AccessKey. When userA connects through the client, the delegation_uid=userA parameter is used to impersonate userA. All permission checks are then performed based on userA's functional and data permissions.

Dataphin driver API reference

com.aliyun.dataphin.jdbc.DataphinDriver

Interface | Description | Syntax |

connect | Establishes a database connection. | |

acceptsURL | Checks whether the driver can handle a given URL. | |

com.aliyun.dataphin.jdbc.DataphinConnection

Interface | Description | Syntax |

createStatement | Creates a Statement object. | |

prepareStatement | Creates a PreparedStatement object. | |

com.aliyun.dataphin.jdbc.DataphinStatement

Interface | Description | Syntax |

executeQuery | Executes an SQL statement and returns a | |

setFetchSize | Fetches result data in batches based on the specified number of rows. If not set or set to 0, the default fetch size is 1,000. | |

cancel | Cancels the execution of the | |

com.aliyun.dataphin.jdbc.DataphinPrepareStatement

Interface | Description | Syntax |

executeQuery | Executes an SQL statement and returns a | |

com.aliyun.dataphin.jdbc.DataphinResultSetMetaData

Interface | Description | Syntax |

getColumnCount | Gets the number of columns in the result schema. | |

getColumnName | Gets the name of a column in the result schema. | |

com.aliyun.dataphin.jdbc.ResultSet

Interface | Description | Syntax |

next | Retrieves SQL query results row by row. | |

com.aliyun.dataphin.jdbc.DatabaseMetaData

Interface | Description | Syntax |

getTables | Retrieves table metadata.

| |

getColumns | Retrieves column metadata for a table.

| |

Examples

Use the Dataphin JDBC driver to retrieve catalog information.

1. Get a list of tables

Gets a list of Physical Tables and Physical Views in a project.

Syntax

SHOW TABLES

[FROM db_name]

[LIKE 'pattern']Parameters

db_name:

Dataphin project name: Lists the Physical Tables in this project.

For development projects and data domains, you must explicitly add the _Dev suffix.

If db_name is not specified, the tables in the project_name set in the URL are listed by default.

Results

Name | Type | Comment |

dim_user | Logical Table | User table. |

ods_user | Physical Table | User source table. |

ods_user_logical_view | Logical View | Logical view. |

ods_user_physical_view2 | Physical View | Physical view. |

2. Get the structure of a table

Gets the field details of a Physical Table or Physical View.

Syntax

{DESCRIBE | DESC} table_name;Only Physical Tables and Physical Views are supported.

Parameters

table_name: The name of the Physical Table or Physical View.

Results

Name | Type | Comment |

ID | BigInt | User ID. |

Name | String | User name. |

DS | String | Partition time. |

Server-side connection control

Maximum connections: The default is 100.

Connection timeout: The default is 288,000s (80 hours). If a connection is idle for this period, it is automatically closed. Subsequent operations on this connection will fail.

JDBC task information in MaxCompute

When a Dataphin JDBC task is submitted to a MaxCompute instance, Dataphin-related information is also passed to the instance.

Parameter | Description |

logical_project | The name of the Dataphin project where the JDBC task is executed. |

EXT_JDBC_TASKRUN_ID | The task ID of the JDBC task. |

EXT_DPN_TENANT_ID | The ID of the Dataphin tenant where the JDBC task is executed. |

EXT_PLATFORM_ID | The ID of the upper-level platform that submitted the task to MaxCompute. The default is Dataphin. |

biz_id | The Dataphin member ID of the user who executed the JDBC task. |

odps.idata.userenv | User environment information, including the Java SDK revision, Java version, IP address, and device MAC address. For example:

|

You can use this information for scenarios such as analyzing MaxCompute bills and viewing job execution times. For more information, see Collect statistics on TOP N cost accounts and time-consuming jobs.