Zero-ETL streams data from ApsaraDB RDS for MySQL into ApsaraDB for ClickHouse in real time without requiring you to build or maintain a data pipeline. The feature is powered by Data Transmission Service (DTS) and is free of charge.

How it works

Zero-ETL handles three phases automatically:

-

Schema synchronization — DTS reads the source schema and creates corresponding tables in ClickHouse. It adds

_sign,_is_deleted, and_versionfields to each destination table. -

Full data synchronization — DTS copies all existing rows from the source to ClickHouse.

-

Incremental data synchronization — DTS reads binary logs from the source MySQL instance and applies INSERT, UPDATE, and DELETE changes to ClickHouse continuously.

When an UPDATE or DELETE runs on the source, ClickHouse appends a new record and uses _sign, _is_deleted, and _version to track the change. The destination database may be larger than the source as a result. To query only current rows, add FINAL after the table name or filter on _sign or _is_deleted.

Supported pipeline

| Source | Destination | Access method | Supported phases |

|---|---|---|---|

| ApsaraDB RDS for MySQL | ApsaraDB for ClickHouse | Alibaba Cloud instance | Schema sync, full sync, incremental sync |

Billing

Zero-ETL synchronization pipelines are free of charge.

Prerequisites

Before you begin, make sure that:

-

The ApsaraDB for ClickHouse cluster and the RDS for MySQL instance are in the same region

-

A database account exists for the source RDS for MySQL instance. See Create an account for an ApsaraDB RDS for MySQL instance

-

A database account exists for the destination ClickHouse cluster. See Create an account for an ApsaraDB for ClickHouse cluster

Limits

Source database (RDS for MySQL)

| Constraint | Details |

|---|---|

| Primary keys | All tables to be synchronized must have primary keys |

| Table rename | RENAME TABLE operations are not synchronized |

| Table-level sync limit | Synchronizing individual tables (not entire databases) supports up to 1,000 tables per task. For more than 1,000 tables, split into multiple tasks or synchronize at the database level |

| DDL during schema sync or full sync | Do not run DDL operations while schema synchronization or full data synchronization is in progress. Doing so causes the task to fail |

pt-online-schema-change |

If you select one or more tables (instead of an entire database) as the objects to be synchronized, do not use pt-online-schema-change or similar tools for DDL operations on those tables during synchronization. Otherwise, data may fail to be synchronized |

| Non-standard DDL | DDL statements on the source that do not comply with standard MySQL syntax may cause the task to fail or result in data loss |

| Binary logging | binlog_row_image must be set to full. Binary logging is enabled by default for ApsaraDB RDS for MySQL. For self-managed MySQL, also set binlog_format=row. For a self-managed MySQL database in a primary/primary architecture, enable log_slave_updates |

| Binary log retention | Retain local binary logs for at least 3 days (7 days recommended) for ApsaraDB RDS for MySQL; at least 7 days for self-managed MySQL. Tasks fail if DTS cannot read the required binary logs, and issues caused by insufficient retention are outside the DTS Service-Level Agreement (SLA) |

| Invisible columns (MySQL 8.0.23+) | Invisible columns are not synchronized and data may be lost. Run ALTER TABLE <table_name> ALTER COLUMN <column_name> SET VISIBLE; to make them visible before starting the task |

| Always-confidential (EncDB) | Full data synchronization is not supported when EncDB is enabled. Transparent Data Encryption (TDE) is supported for schema synchronization, full data synchronization, and incremental data synchronization |

| Read-only instances without transaction logs | Cannot be used as the source (for example, read-only RDS for MySQL 5.6 instances) |

| Heartbeat statement | The zero-ETL task periodically runs CREATE DATABASE IF NOT EXISTS \test\`` on the source to advance the binary log offset |

| Changes not in binary logs | Changes applied through physical backup recovery or cascade operations are not synchronized. If this occurs, remove and re-add the affected object from the synchronization scope |

If you synchronize data from multiple instances to a single ApsaraDB for ClickHouse cluster, make sure that the synchronization objects in different tasks do not overlap.

General limits

| Constraint | Details |

|---|---|

| Max databases per cluster | Up to 256 databases can be synchronized to a single ApsaraDB for ClickHouse cluster |

| Naming conventions | Database, table, and column names must comply with ApsaraDB for ClickHouse naming conventions. See Limits on object naming conventions |

| DATETIME range | DATETIME values in the source must fall within the time ranges supported by the destination cluster. See the Time range section |

Data type mappings

RDS for MySQL and ApsaraDB for ClickHouse data types do not map one-to-one. During schema synchronization, DTS maps source field types to the closest supported type in ClickHouse. For the full mapping table, see Data type mappings for schema synchronization.

Usage notes

Maximum number of zero-ETL tasks per cluster

The maximum number of zero-ETL tasks you can create for a cluster depends on the edition:

-

Enterprise Edition:

ceil(lower CCU limit / 8). For a cluster with a lower ClickHouse Compute Unit (CCU) limit of 22, the calculation isceil(22 / 8) = 3. -

Community Edition:

ceil(total CPU cores / 8). For a two-node cluster where each node has 8 cores, the calculation isceil(16 / 8) = 2.

If a cluster reaches its limit, delete unused tasks or create additional synchronization tasks directly in the DTS console.

Table structure in the destination

During schema synchronization, the zero-ETL feature adds _sign, _is_deleted, and _version fields to each destination table.

For Community-Compatible Edition clusters, the task creates both a local table and a distributed table for each source table:

-

Distributed table: Same name as the source table. Use this table for queries.

-

Local table: Named

<distributed_table_name>_local. Used internally by ClickHouse for shard-level storage.

Scheduling and performance

Run the task during off-peak hours. During full data synchronization, DTS uses read and write resources on both the source and destination, which may increase server load.

Preparations

Before creating a zero-ETL task, set up the required service-linked role and Resource Access Management (RAM) user permissions.

Step 1: Create the service-linked role

Create the AliyunServiceRoleForClickHouseZeroETL service-linked role.

When you select a database instance ID during task configuration, the console prompts you to create this role automatically. Manual creation is not required in that case.

Step 2: Grant RAM user permissions

To allow a RAM user to create zero-ETL tasks, grant the following permissions. See Manage RAM user permissions.

-

Source RDS for MySQL:

AliyunRDSFullAccess -

Destination ClickHouse cluster:

AliyunClickHouseFullAccess -

DTS: Create a custom policy using the following script. See Create a custom permission policy.

{

"Version": "1",

"Statement": [

{

"Action": "dts:*",

"Resource": "*",

"Effect": "Allow"

},

{

"Action": "ram:PassRole",

"Resource": "*",

"Effect": "Allow",

"Condition": {

"StringEquals": {

"acs:Service": "dts.aliyuncs.com"

}

}

}

]

}Create and start a zero-ETL task

The overall flow has five steps: navigate to the zero-ETL page, configure source and destination databases, select objects to synchronize, configure table fields, and run the precheck before starting.

Step 1: Go to the zero-ETL page

-

Log on to the ApsaraDB for ClickHouse console.

-

In the upper-left corner, select the region of your cluster.

-

On the Cluster List page, click the List of Community Edition Instances tab, then click the target cluster ID.

-

In the left navigation pane, click Zero-ETL (Seamless Integration).

Step 2: Configure source and destination databases

-

Click Create Zero-ETL Task.

-

Enter a Task Name.

-

Configure the source and destination databases.

Configure the source and destination databases based on the following parameters. After you complete the configurations, click Next.

Source database

Parameter

Description

Database type

RDS for MySQL (only supported option)

Access method

Alibaba Cloud Instance (only supported option)

Instance region

Region of the source RDS for MySQL instance

RDS instance ID

ID of the source RDS for MySQL instance

Database account

Database account for the RDS for MySQL instance

Database password

Password for the database account of the RDS for MySQL instance

Encryption

Select Non-encrypted or SSL-encrypted. If you select SSL-encrypted, enable SSL encryption for the RDS for MySQL instance first. See Use a cloud certificate to quickly enable SSL link encryption.

Destination database

Parameter

Description

Database type

ClickHouse

Connection type

Alibaba Cloud instance (only supported option)

Instance region

Region of the destination cluster

Cluster ID

ID of the destination cluster

Cluster type

Community Edition or Enterprise Edition

Database account

Database account for the destination cluster

Database password

Password for the database account of the destination cluster

-

Click Test Connectivity and Proceed.

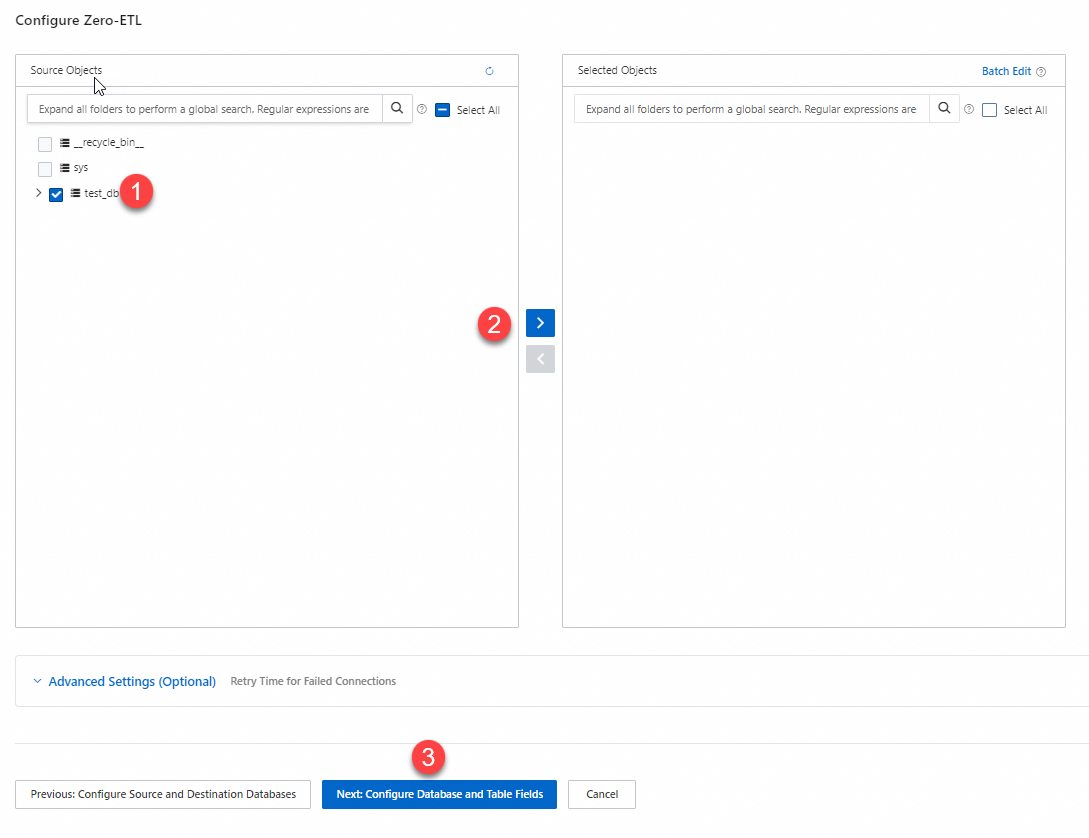

Step 3: Select objects to synchronize

In the Source Objects section, select the databases or tables to synchronize. Click ![]() to move them to the Selected Objects section.

to move them to the Selected Objects section.

Click Next: Configure Database and Table Fields.

Step 4: Configure table fields

On the Configurations for Databases, Tables, and Columns page, set the following for each table:

| Field | Description |

|---|---|

| Type | Table engine type |

| Primary key column | One or more columns forming the primary key. Composite keys are supported |

| Sort key | One or more columns for sorting. Composite keys are supported |

| Distribution key | A single column for data distribution across shards |

| Partition key | (Optional) A non-empty column for partitioning. The column cannot be blank if set |

By default, only tables with undefined configurations are shown. To view all tables, set Definition Status to All. The partition key must be selected from the primary key column. For details on these fields, see CREATE TABLE.

Click Next: Save Task Settings and Precheck. The task is saved regardless of whether the precheck passes.

Step 5: Run the precheck and start the task

When Success Rate reaches 100%, click Start.

If the precheck fails, fix the reported issues in the source or destination, then modify and rerun the precheck from the zero-ETL page.

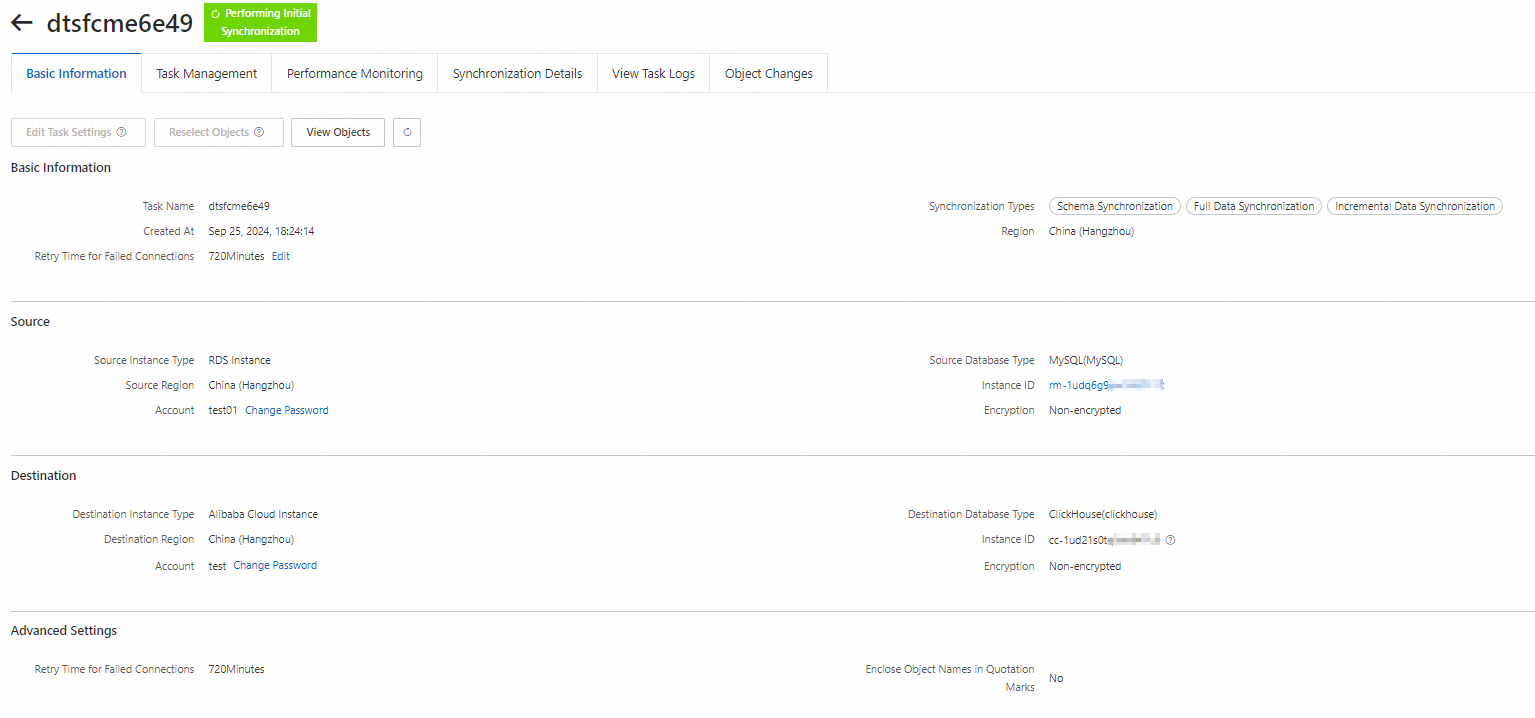

After the task starts, the zero-ETL page shows the task ID/name, source/destination, and status.

Monitor zero-ETL tasks

Use one or more of the following methods to track task health. For ongoing production workloads, configure alerts or event subscriptions so you receive automatic notifications when issues occur.

| Method | Best for | Limitation |

|---|---|---|

| Active viewing in the ClickHouse console | Reviewing replication performance, synchronization details, and task logs | No automatic notifications |

| Synchronization latency alerts in CloudMonitor | Receiving automatic alerts when latency exceeds a threshold | Monitors latency only |

| Event subscription in CloudMonitor | Receiving notifications on task failure and recovery | Monitors failure and recovery only |

Monitor a task in the ApsaraDB for ClickHouse console

-

Log on to the ApsaraDB for ClickHouse console.

-

In the upper-left corner, select the region of your cluster.

-

On the Cluster List page, click the List of Community Edition Instances tab, then click the cluster ID.

-

In the left navigation pane, click Zero-ETL (Seamless Integration).

-

Find the task and click Task Details in the Actions column.

The task details page shows replication performance, synchronization status, and logs.

Set up synchronization latency alerts in CloudMonitor

-

Create an alert rule in CloudMonitor. See Create an alert rule in the CloudMonitor console. Set the following parameters:

Parameter Value Product Clickhouse - ZeroETL Latency Monitoring metrics Synchronization Latency -

To view current latency, log on to the CloudMonitor console. In the ClickHouse - ZeroETL Latency list, click Monitoring Charts in the Actions column for the target cluster.

Subscribe to zero-ETL task events in CloudMonitor

Subscribe to system events to receive automatic notifications when a task fails or recovers. See Manage event subscriptions.

When creating a subscription policy, set the following parameters:

| Event | Parameter | Value |

|---|---|---|

| Task failure | Subscription type | System Event |

| Product | ApsaraDB Clickhouse | |

| Event type | Abnormal | |

| Event name | ZeroETL task abnormal | |

| Task recovery | Subscription type | System Event |

| Product | ApsaraDB Clickhouse | |

| Event type | Restore | |

| Event name | ZeroETLTaskRestore |

FAQ

Why is the destination database larger than the source after synchronization?

ClickHouse does not overwrite rows on UPDATE or DELETE. Instead, it appends a new record and uses _sign, _is_deleted, and _version to track the change. The destination accumulates more rows than the source over time.

To query only the latest data, add FINAL after the table name, or filter on _sign or _is_deleted (the exact field depends on your cluster version). For details, see Field information.

Why do tables named `<table>_local` appear in the destination database?

For Community-Compatible Edition clusters, zero-ETL creates both a local table and a distributed table for each source table. The distributed table (named after the source table) is the one you query. The _local table is used internally by ClickHouse for shard-level storage.

What happens if the binary log retention period is too short?

DTS uses binary logs to apply incremental changes. If the binary logs needed to continue replication have already been purged, the task fails and cannot resume. In this case, remove the affected database or table from the synchronization scope and re-add it to trigger a fresh full synchronization. For ApsaraDB RDS for MySQL, retain binary logs for at least 3 days (7 days recommended). For self-managed MySQL, retain binary logs for at least 7 days.

What causes a zero-ETL task to fail with a binary log offset error?

The task loses its position in the binary log if the log file is purged before DTS reads it, typically due to a short retention period. When this happens, the task cannot resume automatically. Remove the affected table or database from the synchronization scope and re-add it to trigger a fresh full synchronization. To prevent recurrence, for ApsaraDB RDS for MySQL, retain binary logs for at least 3 days (7 days recommended); for self-managed MySQL, retain binary logs for at least 7 days.