When a new pod starts during scale-out, rolling updates, or traffic bursts, it immediately receives a proportional share of traffic. For services that depend on JVM warm-up, cache population, or lazy initialization, this sudden load causes request timeouts, slow responses, and degraded user experience.

The warm-up feature in Service Mesh (ASM) progressively increases traffic to newly started pods over a configurable time window. A new pod starts with minimal traffic and ramps up gradually until the warm-up period ends.

How it works

Configure warm-up through the trafficPolicy.loadBalancer field in a DestinationRule. Two fields control the behavior:

| Field | Description |
|---|---|
| simple | The load balancing policy. Valid values: ROUND_ROBIN and LEAST_REQUEST. |
| warmupDurationSecs | The duration of the warm-up window. During this period, Istio progressively increases traffic to the new endpoint instead of distributing traffic proportionally. |

    apiVersion: networking.istio.io/v1alpha3
    kind: DestinationRule
    metadata:
      name: mocka
    spec:
      host: mocka
      trafficPolicy:
        loadBalancer:
          simple: ROUND_ROBIN
          warmupDurationSecs: 100s

After the warm-up window expires, the endpoint exits warm-up mode and receives traffic according to the configured load balancing policy.
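To get a feel for the ramp, the following sketch estimates what fraction of its normal weight a new endpoint receives at a given moment. It assumes that warmupDurationSecs maps to the slow start window of the underlying Envoy proxy and that Envoy's documented defaults apply (aggression 1.0, minimum weight 10%). ASM may use different values, so treat the numbers as illustrative. The function name warmup_factor is hypothetical.

```shell
# Estimate the weight factor of an endpoint that is still warming up.
# Assumption: Envoy slow start formula with default settings,
#   factor = max(min_weight, max(elapsed, 1) / window), capped at 1
# where min_weight = 0.10 and aggression = 1.0 (linear ramp).
warmup_factor() {
  elapsed="$1"   # seconds since the endpoint became ready
  window="$2"    # warmupDurationSecs, in seconds
  awk -v t="$elapsed" -v w="$window" 'BEGIN {
    if (t < 1) t = 1
    f = t / w
    if (f < 0.10) f = 0.10   # never drop below the minimum weight
    if (f > 1) f = 1         # full weight once the window has passed
    printf "%.2f\n", f
  }'
}

warmup_factor 5 100     # early in the window: held at the minimum weight
warmup_factor 50 100    # halfway through a 100-second window
warmup_factor 120 100   # after the window ends: full weight
```

With a linear ramp, an endpoint halfway through its window carries roughly half the load of a fully warmed endpoint.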

Note: Warm-up requires at least one existing pod replica in the current zone to take effect:

Single-zone cluster (zone A only): Warm-up takes effect starting from the second pod. The first pod has no baseline for comparison.

Multi-zone cluster (zone A and zone B): If only one pod exists in zone A, starting a second pod in zone B does not trigger warm-up because cross-zone scheduling treats it as a separate group. Warm-up takes effect starting from the third pod.

Prerequisites

Before you begin, make sure that you have:

An ASM instance of Enterprise Edition or Ultimate Edition, version 1.14.3 or later. See Create an ASM instance.

A cluster added to the ASM instance. See Add a cluster to an ASM instance.

kubectl connected to the Container Service for Kubernetes (ACK) cluster. See Obtain the kubeconfig file of a cluster and use kubectl to connect to the cluster.

An ingress gateway created. See Create an ingress gateway.

The Bookinfo sample application deployed. This tutorial uses the reviews service. See Deploy an application in an ASM instance.

Step 1: Set up routing rules and generate traffic

Before enabling warm-up, establish a baseline by routing traffic through the ingress gateway without warm-up.

First, scale the reviews-v3 Deployment to zero replicas. You will scale it back up in later steps to observe traffic behavior with and without warm-up.
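One way to scale the Deployment is with kubectl scale. The helper below is a sketch: the function name scale_reviews_v3 is hypothetical, and it assumes that Bookinfo runs in the default namespace unless another namespace is passed as the second argument.

```shell
# Hypothetical helper: scale the reviews-v3 Deployment to a given replica count.
# Assumption: Bookinfo is deployed in the "default" namespace; pass another
# namespace as the second argument if yours differs.
scale_reviews_v3() {
  replicas="$1"
  namespace="${2:-default}"
  kubectl scale deployment reviews-v3 --replicas="$replicas" -n "$namespace"
}

# Usage:
#   scale_reviews_v3 0   # remove the pod before establishing the baseline
#   scale_reviews_v3 1   # bring it back when a later step asks for it
```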

Define the ingress gateway

Create a file named bookinfo-gateway.yaml.

Apply the configuration:

kubectl apply -f bookinfo-gateway.yaml
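This page does not reproduce the contents of bookinfo-gateway.yaml. As a rough sketch only, a file of this kind typically defines an Istio Gateway on port 80 and a VirtualService that routes the /reviews prefix to the reviews service. Everything in the example is an assumption rather than the original file: the resource names, the istio: ingressgateway selector, and port 9080 (the usual Bookinfo service port). The file must exist before you run the apply command.

```shell
# Write a hypothetical bookinfo-gateway.yaml (not the original file).
# Assumptions: gateway pods carry the label "istio: ingressgateway" and
# the reviews service listens on port 9080.
cat > bookinfo-gateway.yaml <<'EOF'
apiVersion: networking.istio.io/v1beta1
kind: Gateway
metadata:
  name: bookinfo-gateway
spec:
  selector:
    istio: ingressgateway
  servers:
  - port:
      number: 80
      name: http
      protocol: HTTP
    hosts:
    - "*"
---
apiVersion: networking.istio.io/v1beta1
kind: VirtualService
metadata:
  name: bookinfo
spec:
  hosts:
  - "*"
  gateways:
  - bookinfo-gateway
  http:
  - match:
    - uri:
        prefix: /reviews
    route:
    - destination:
        host: reviews
        port:
          number: 9080
EOF
```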

Create the DestinationRule for the reviews service (without warm-up)

Create a file named reviews.yaml.

Apply the configuration:

kubectl apply -f reviews.yaml
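The baseline reviews.yaml is not reproduced on this page. A plausible version, inferred from the warm-up configuration in Step 3 with the warmupDurationSecs field left out, might look like the sketch below. Treat it as an assumption and create the file before you run the apply command.

```shell
# Write a baseline reviews.yaml without warm-up.
# Assumption: same DestinationRule as in Step 3, minus warmupDurationSecs.
cat > reviews.yaml <<'EOF'
apiVersion: networking.istio.io/v1beta1
kind: DestinationRule
metadata:
  name: reviews
spec:
  host: reviews
  trafficPolicy:
    loadBalancer:
      simple: ROUND_ROBIN
EOF
```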

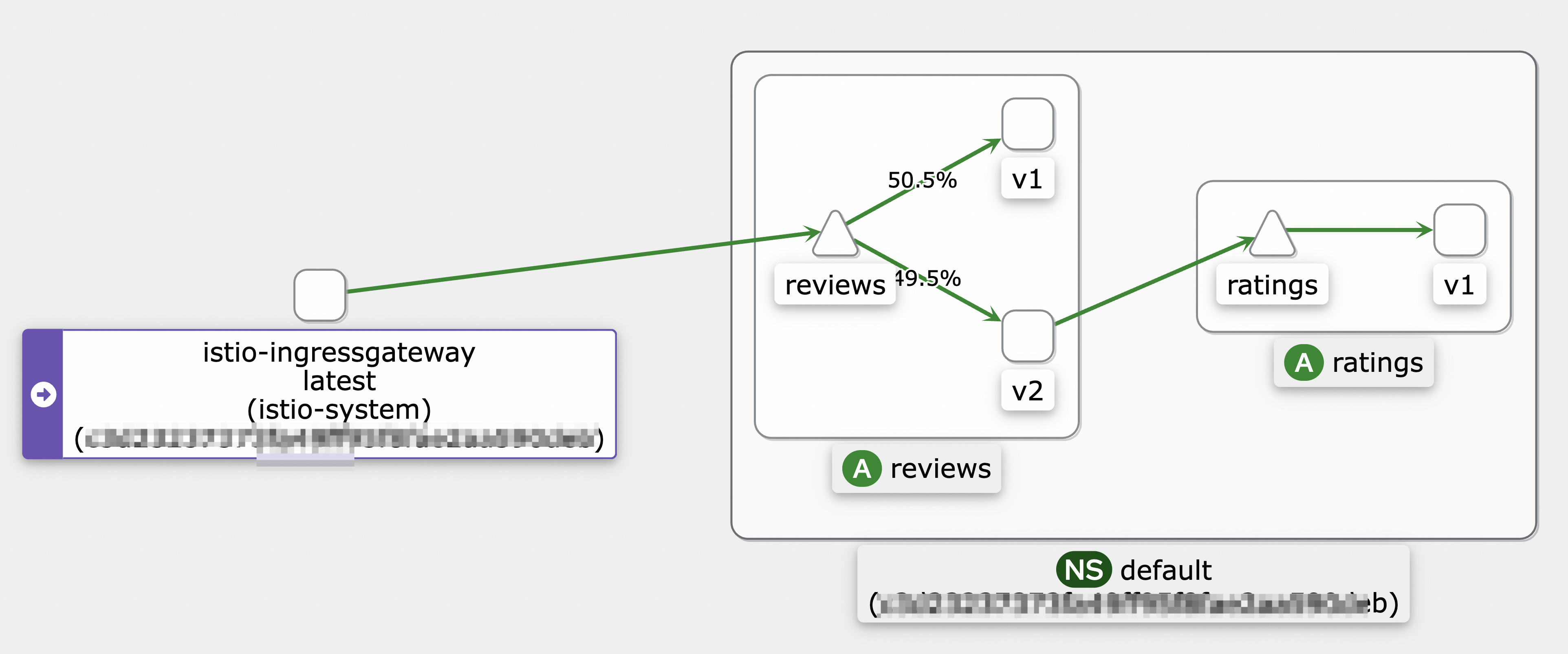

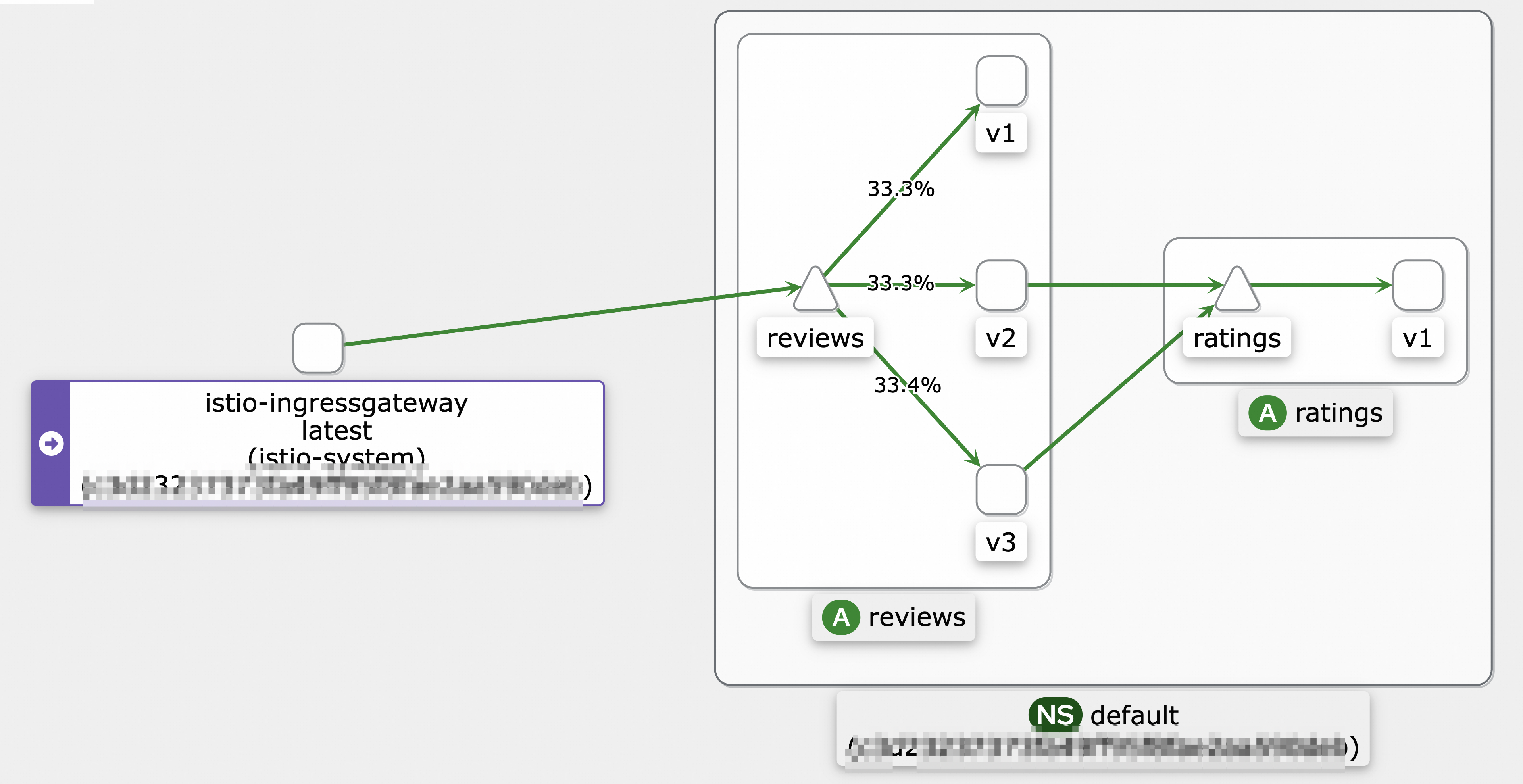

Send traffic and verify the topology

Use the hey load testing tool to send requests for 10 seconds:

hey -z 10s -q 100 -c 4 http://<ingress-gateway-ip>/reviews/0

Replace <ingress-gateway-ip> with the IP address of the ingress gateway.
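If you prefer to look up the IP address from the command line, a helper along these lines may work. It is a sketch that assumes the ingress gateway is exposed by a LoadBalancer Service named istio-ingressgateway in the istio-system namespace; adjust both names if your gateway differs. The function name ingress_gateway_ip is hypothetical.

```shell
# Hypothetical helper: print the external IP of the ingress gateway Service.
# Assumptions: the Service is named "istio-ingressgateway", lives in the
# "istio-system" namespace, and has a load balancer IP assigned.
ingress_gateway_ip() {
  kubectl get service istio-ingressgateway -n istio-system \
    -o jsonpath='{.status.loadBalancer.ingress[0].ip}'
}

# Usage:
#   hey -z 10s -q 100 -c 4 "http://$(ingress_gateway_ip)/reviews/0"
```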

To verify the traffic flow, view the service mesh topology. See Use Mesh Topology to view the topology of an application.

Step 2: Observe traffic distribution without warm-up

This step establishes a baseline: how traffic reaches a new pod when warm-up is disabled.

Log on to the ASM console.

In the left-side navigation pane, choose Service Mesh > Mesh Management.

On the Mesh Management page, click the name of the target ASM instance or click Manage in the Actions column.

In the left-side navigation pane, choose Observability Management Center > Monitoring indicators.

For ASM versions earlier than 1.17.2.35: Click the Monitoring instrument tab, then click the Cloud ASM Istio Service tab and select the reviews service.

For ASM version 1.17.2.35 or later: Click the Cloud ASM Istio Work load tab, select reviews-v3 from the Workload drop-down list, and select source from the Reporter drop-down list.

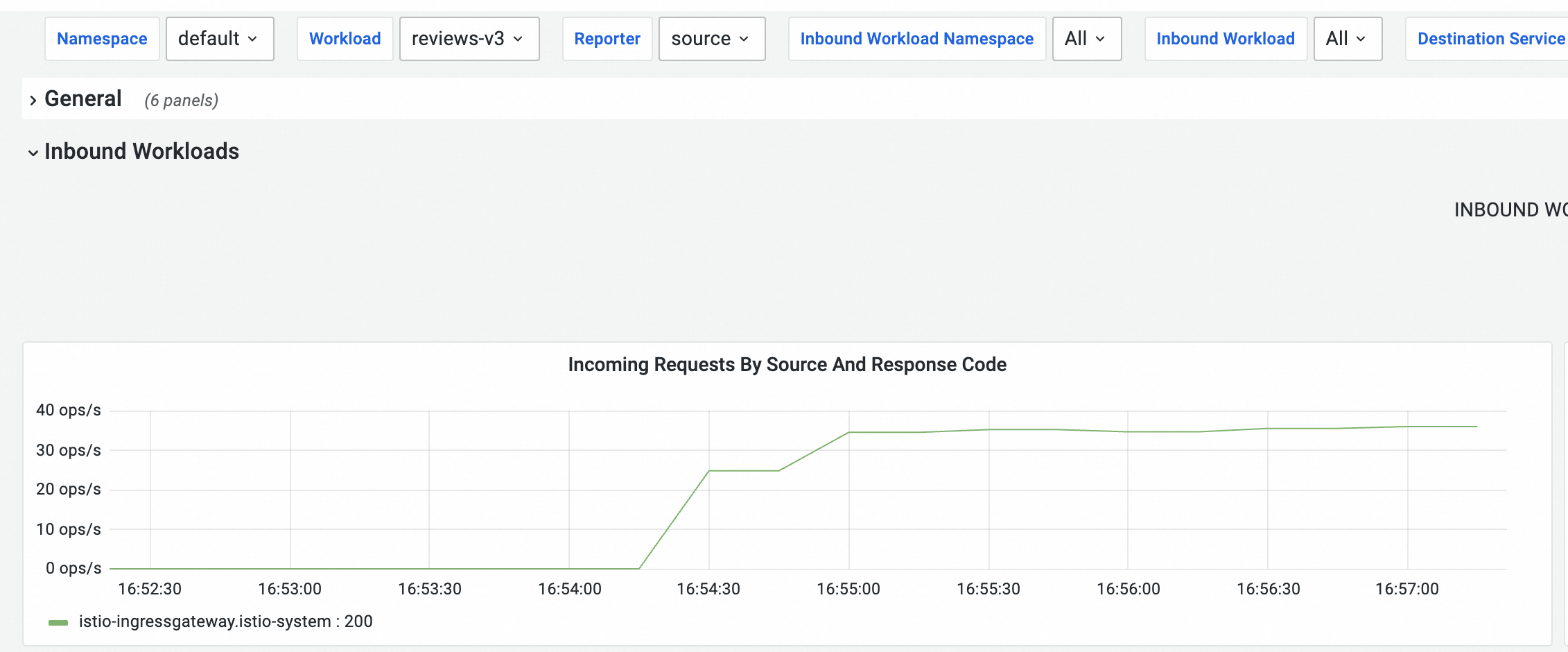

Scale the reviews-v3 Deployment from zero to one replica.

Run a 120-second load test:

hey -z 120s -q 100 -c 4 http://<ingress-gateway-ip>/reviews/0

Observe the monitoring dashboard. Without warm-up, the reviews-v3 pod starts receiving requests at a stable rate approximately 45 seconds after the load test begins. The exact timing depends on the environment.

After observing the baseline, scale the reviews-v3 Deployment back to zero replicas.

Step 3: Enable warm-up

Update reviews.yaml to add the warmupDurationSecs field with a value of 120s:

    apiVersion: networking.istio.io/v1beta1
    kind: DestinationRule
    metadata:
      name: reviews
    spec:
      host: reviews
      trafficPolicy:
        loadBalancer:
          simple: ROUND_ROBIN
          warmupDurationSecs: 120s

Apply the updated configuration:

kubectl apply -f reviews.yaml
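Before moving on, you may want to confirm that the field was accepted. The sketch below reads the value back from the DestinationRule. It assumes that the resource is named reviews, that it lives in the default namespace, and that your kubeconfig can read Istio resources. The function name applied_warmup_duration is hypothetical.

```shell
# Hypothetical helper: print the warm-up duration stored in the DestinationRule.
# Assumptions: the resource is named "reviews" and lives in the "default"
# namespace unless another namespace is passed as the first argument.
applied_warmup_duration() {
  namespace="${1:-default}"
  kubectl get destinationrule reviews -n "$namespace" \
    -o jsonpath='{.spec.trafficPolicy.loadBalancer.warmupDurationSecs}'
}

# Usage:
#   applied_warmup_duration
```

An empty result would suggest that the field was not applied or that the rule lives in a different namespace.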

Step 4: Verify the warm-up effect

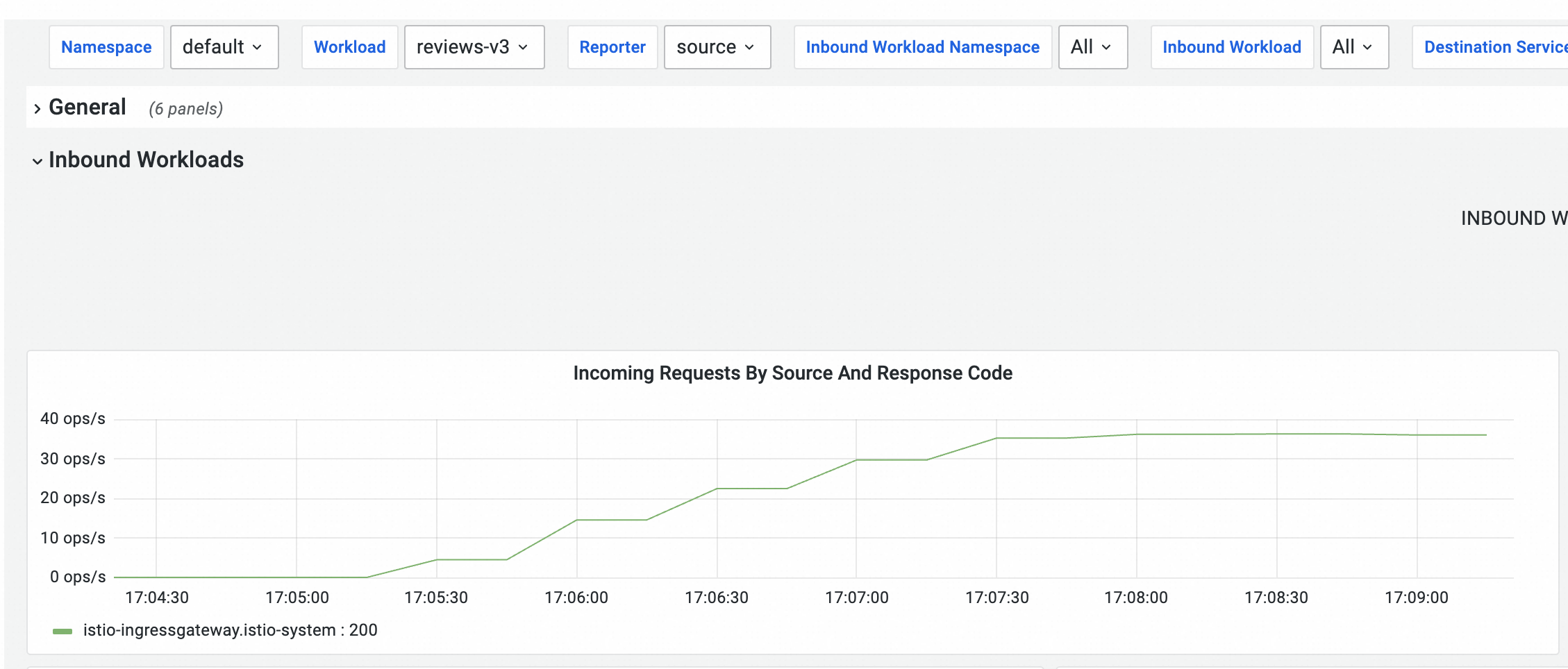

Scale the reviews-v3 Deployment from zero to one replica.

Run a 150-second load test:

hey -z 150s -q 100 -c 4 http://<ingress-gateway-ip>/reviews/0

On the Cloud ASM Istio Service tab, observe the monitoring dashboard. With warm-up enabled, the reviews-v3 pod receives traffic gradually. It reaches a stable request rate approximately 120 seconds after the load test begins, matching the configured warm-up duration.

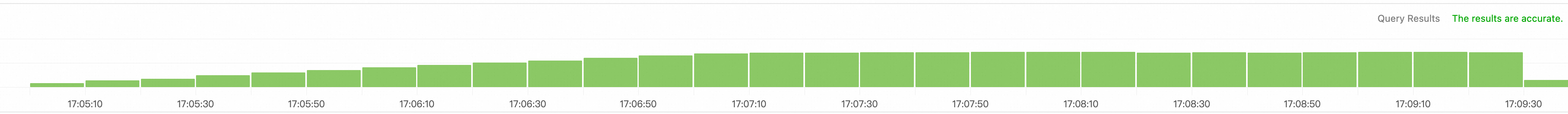

Note: The curve may appear stepped because metrics are collected at intervals. The actual traffic increase is smooth. To view fine-grained traffic data, enable sidecar proxy log collection and search the logs in the Simple Log Service (SLS) console.

After the warm-up window completes, traffic is evenly distributed across reviews-v1, reviews-v2, and reviews-v3. In this example, even distribution is reached approximately 150 seconds after the load test begins.
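As a sanity check on the dashboard, you can estimate the steady-state rate that each version should approach. The sketch assumes that hey applies the -q limit per worker, so -q 100 -c 4 caps the total at about 400 requests per second, and that each of the three versions runs a single pod. The result is an upper bound, because the achieved rate depends on response latency. The function name expected_rate_per_version is hypothetical.

```shell
# Estimate the per-version request rate once traffic is evenly distributed.
# Assumptions: hey rate-limits each worker separately, and every version
# runs the same number of pods.
expected_rate_per_version() {
  qps_per_worker="$1"   # value passed to hey -q
  workers="$2"          # value passed to hey -c
  versions="$3"         # number of reviews versions receiving traffic
  echo $(( qps_per_worker * workers / versions ))
}

expected_rate_per_version 100 4 3   # integer estimate in requests per second
```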

See also

Configure local throttling in Traffic Management Center and Configure global throttling for inbound traffic: Keep traffic within configured thresholds to maintain service availability.

Use ASMAdaptiveConcurrency for adaptive concurrency control: Dynamically adjust the maximum concurrent requests allowed for a service based on sampled request data.

Configure the connectionPool field for circuit breaking: Protect services from cascading failure during overload.