When you need to serve multiple machine learning models, dedicating separate pods to each model wastes resources and complicates operations. ModelMesh solves this by letting multiple models share a single pool of serving pods. Built on KServe ModelMesh, it dynamically loads and unloads models based on request traffic -- frequently accessed models stay in memory while idle models are evicted to free resources.

This guide walks through enabling ModelMesh on Service Mesh (ASM), configuring the networking layer, and deploying a sample scikit-learn model to verify the setup end to end.

How it works

When ModelMesh receives an inference request for a specific model:

It routes the request to a pod in the shared serving pool.

If the model is not already in memory, ModelMesh selects an optimal pod, loads the model, and caches it.

When memory pressure increases, ModelMesh evicts the least recently used models to make room.

This approach reduces resource costs compared to deploying each model on its own set of pods, and it simplifies operations because you manage one shared deployment rather than many individual ones.
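The load-on-demand and least-recently-used eviction behavior described above can be illustrated with a small sketch. This is not ModelMesh's implementation: the `ModelCache` class, the `fake_load` loader, and the fixed 40 MB model size are all hypothetical, chosen only to show how a recently used model survives eviction while an idle one does not.

```python
from collections import OrderedDict


class ModelCache:
    """Toy LRU cache of loaded models for a single serving pod (illustrative only)."""

    def __init__(self, capacity_mb, load_fn):
        self.capacity_mb = capacity_mb
        self.load_fn = load_fn        # hypothetical loader: name -> (model, size_mb)
        self.models = OrderedDict()   # name -> (model, size_mb), least recently used first
        self.used_mb = 0

    def get(self, name):
        """Return the model, loading it first on a cache miss."""
        if name in self.models:
            self.models.move_to_end(name)  # mark as most recently used
            return self.models[name][0]
        model, size_mb = self.load_fn(name)
        # Evict least recently used models until the new one fits.
        while self.models and self.used_mb + size_mb > self.capacity_mb:
            _, (_, evicted_mb) = self.models.popitem(last=False)
            self.used_mb -= evicted_mb
        self.models[name] = (model, size_mb)
        self.used_mb += size_mb
        return model


def fake_load(name):
    # Stand-in for pulling a model from storage; every model is 40 MB here.
    return f"<{name}>", 40


cache = ModelCache(capacity_mb=100, load_fn=fake_load)
cache.get("a")
cache.get("b")
cache.get("a")  # "a" becomes the most recently used model
cache.get("c")  # does not fit, so "b" (least recently used) is evicted
print(list(cache.models))  # ['a', 'c']
```

In this sketch the third model does not fit into the 100 MB budget, so the cache drops `b`, the model that was touched least recently, and keeps `a`.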

| Feature | Description |

|---|---|

| Cache management | Optimizes pod memory based on access frequency and recency. Loads and unloads model copies based on traffic volume. |

| Intelligent placement | Balances model placement by cache age and request load. Queues concurrent model loads to minimize impact on live traffic. |

| Resiliency | Retries failed model loads automatically on different pods. |

| Rolling updates | Handles model updates automatically without downtime. |
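As a rough illustration of the placement and resiliency rows, the following sketch tries pods from least to most loaded and moves on to another pod when a load fails. It is a simplification under stated assumptions: the `place_model` function, the `try_load` callback, and the per-pod load numbers are hypothetical, and the real placement logic also weighs cache age, which is ignored here.

```python
def place_model(model, pods, try_load):
    """Try pods from least to most loaded and return the first one that loads the model.

    `pods` maps pod name -> current request load (a hypothetical metric).
    `try_load(pod, model)` is a hypothetical callback that returns True on success.
    """
    for pod in sorted(pods, key=pods.get):
        if try_load(pod, model):
            return pod
    raise RuntimeError(f"no pod could load {model!r}")


load_by_pod = {"pod-a": 12, "pod-b": 3, "pod-c": 7}
broken = {"pod-b"}  # pretend the least-loaded pod fails to load the model
attempts = []


def try_load(pod, model):
    attempts.append(pod)
    return pod not in broken


chosen = place_model("sklearn-mnist", load_by_pod, try_load)
print(chosen, attempts)  # pod-c ['pod-b', 'pod-c']
```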

Prerequisites

Before you begin, make sure that you have:

A Container Service for Kubernetes (ACK) cluster added to your ASM instance (version 1.18.0.134 or later). For details, see Add a cluster to an ASM instance.

An ingress gateway created for the cluster. For details, see Create an ingress gateway.

This guide uses an ASM ingress gateway with the default name ingressgateway, port 8008 enabled, and the HTTP protocol.

Step 1: Enable KServe and ModelMesh on ASM

Log on to the ASM console. In the left-side navigation pane, choose Service Mesh > Mesh Management.

On the Mesh Management page, click the name of your ASM instance. In the left-side navigation pane, choose Ecosystem > KServe on ASM.

On the KServe on ASM page, click Enable KServe on ASM.

Note: KServe depends on CertManager. Enabling KServe automatically installs CertManager in the cluster. If you already run a self-managed CertManager, clear Automatically install the CertManager component in the cluster.

After KServe is enabled, connect to the ACK cluster with kubectl and verify that the ServingRuntime resources are available:

    kubectl get servingruntimes -n modelmesh-serving

Expected output:

    NAME             DISABLED   MODELTYPE     CONTAINERS   AGE
    mlserver-1.x                sklearn       mlserver     1m
    ovms-1.x                    openvino_ir   ovms         1m
    torchserve-0.x              pytorch-mar   torchserve   1m
    triton-2.x                  keras         triton       1m

A ServingRuntime defines the pod template for serving a particular model format. ModelMesh automatically provisions pods based on the framework of the deployed model.

Supported runtimes and model formats

| ServingRuntime | Supported frameworks |

|---|---|

| mlserver-1.x | scikit-learn, XGBoost, LightGBM |

| ovms-1.x | OpenVINO, ONNX |

| torchserve-0.x | PyTorch (MAR) |

| triton-2.x | TensorFlow, PyTorch, ONNX, TensorRT |

For the full list, see Supported model formats. To add runtimes not listed here, see Create a custom model serving runtime with ModelMesh.
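When you script deployments, the table above can be kept as a small lookup. The sketch below copies the framework lists from the table; the `runtimes_for` helper is hypothetical. Note that the table lists framework names, while the `modelFormat.name` field of an InferenceService uses the runtime's own format identifier (for example, `sklearn` in the sample later in this guide).

```python
# Framework support per runtime, copied from the table in this guide.
RUNTIME_FRAMEWORKS = {
    "mlserver-1.x": ["scikit-learn", "XGBoost", "LightGBM"],
    "ovms-1.x": ["OpenVINO", "ONNX"],
    "torchserve-0.x": ["PyTorch (MAR)"],
    "triton-2.x": ["TensorFlow", "PyTorch", "ONNX", "TensorRT"],
}


def runtimes_for(framework):
    """Return the runtimes whose table entry lists the framework (case-insensitive)."""
    wanted = framework.lower()
    return [
        runtime
        for runtime, frameworks in RUNTIME_FRAMEWORKS.items()
        if any(name.lower() == wanted for name in frameworks)
    ]


print(runtimes_for("onnx"))          # ['ovms-1.x', 'triton-2.x']
print(runtimes_for("scikit-learn"))  # ['mlserver-1.x']
```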

Step 2: Configure ASM networking

ModelMesh natively communicates over gRPC. To send REST/JSON inference requests through the ASM ingress gateway, you need to configure an Istio gateway, a virtual service, and a gRPC-JSON transcoder. The transcoder automatically converts incoming JSON requests to gRPC and returns JSON responses.

Create the Istio gateway and virtual service

Synchronize the modelmesh-serving namespace from the ACK cluster to the ASM instance. For details, see Synchronize namespace labels from a Kubernetes cluster to ASM.

Create an Istio gateway that listens on port 8008 for gRPC traffic:

    kubectl apply -f - <<EOF
    apiVersion: networking.istio.io/v1beta1
    kind: Gateway
    metadata:
      name: grpc-gateway
      namespace: modelmesh-serving
    spec:
      selector:
        istio: ingressgateway
      servers:
        - hosts:
            - '*'
          port:
            name: grpc
            number: 8008
            protocol: GRPC
    EOF

Create a virtual service that routes traffic from the gateway to the ModelMesh serving endpoint:

    kubectl apply -f - <<EOF
    apiVersion: networking.istio.io/v1beta1
    kind: VirtualService
    metadata:
      name: vs-modelmesh-serving-service
      namespace: modelmesh-serving
    spec:
      gateways:
        - grpc-gateway
      hosts:
        - '*'
      http:
        - match:
            - port: 8008
          name: default
          route:
            - destination:
                host: modelmesh-serving
                port:
                  number: 8033
    EOF

Verify that both resources exist:

    kubectl get gateway,virtualservice -n modelmesh-serving

Confirm that grpc-gateway and vs-modelmesh-serving-service appear in the output.

Configure the gRPC-JSON transcoder

Deploy the transcoder that converts REST/JSON requests to gRPC:

    kubectl apply -f - <<EOF
    apiVersion: istio.alibabacloud.com/v1beta1
    kind: ASMGrpcJsonTranscoder
    metadata:
      name: grpcjsontranscoder-for-kservepredictv2
      namespace: istio-system
    spec:
      builtinProtoDescriptor: kserve_predict_v2
      isGateway: true
      portNumber: 8008
      workloadSelector:
        labels:
          istio: ingressgateway
    EOF

Increase the connection buffer limit so the gateway can handle larger inference responses:

    kubectl apply -f - <<EOF
    apiVersion: networking.istio.io/v1alpha3
    kind: EnvoyFilter
    metadata:
      labels:
        asm-system: "true"
        manager: asm-voyage
        provider: asm
      name: grpcjsontranscoder-increasebufferlimit
      namespace: istio-system
    spec:
      configPatches:
        - applyTo: LISTENER
          match:
            context: GATEWAY
            listener:
              portNumber: 8008
            proxy:
              proxyVersion: ^1.*
          patch:
            operation: MERGE
            value:
              per_connection_buffer_limit_bytes: 100000000
      workloadSelector:
        labels:
          istio: ingressgateway
    EOF

Verify the transcoder and EnvoyFilter:

    kubectl get asmgrpcjsontranscoders -n istio-system
    kubectl get envoyfilter grpcjsontranscoder-increasebufferlimit -n istio-system

Confirm that both resources are listed.
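To get a feel for how a JSON payload compares with the 100000000-byte buffer limit configured in the EnvoyFilter, you can measure a serialized request locally. The sketch below is only a rough gauge: the helper names are hypothetical, the input is zero-filled (real values usually serialize to more characters), and it assumes the request layout used in the inference example of this guide.

```python
import json

# Value of per_connection_buffer_limit_bytes in the EnvoyFilter from this guide.
BUFFER_LIMIT_BYTES = 100_000_000


def infer_request_bytes(batch_size, features):
    """Size in bytes of a JSON inference request with a zero-filled FP32 input."""
    payload = {
        "inputs": [{
            "name": "predict",
            "shape": [batch_size, features],
            "datatype": "FP32",
            "contents": {"fp32_contents": [0.0] * (batch_size * features)},
        }]
    }
    return len(json.dumps(payload).encode("utf-8"))


def fits_buffer(batch_size, features, limit=BUFFER_LIMIT_BYTES):
    """True if the serialized request is no larger than the buffer limit."""
    return infer_request_bytes(batch_size, features) <= limit


print(infer_request_bytes(1, 64))  # size of a single 8x8 image request
print(fits_buffer(1, 64))          # True
```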

Step 3: Deploy a sample model

Deploy an MNIST handwritten digit recognition model trained with scikit-learn to verify the end-to-end setup.

Set up persistent storage

Create a NAS-backed StorageClass. For details, see Mount a dynamically provisioned NAS volume.

Log on to the ACK console. In the left-side navigation pane, click Clusters.

On the Clusters page, click the name of your cluster. In the left-side navigation pane, choose Volumes > StorageClasses.

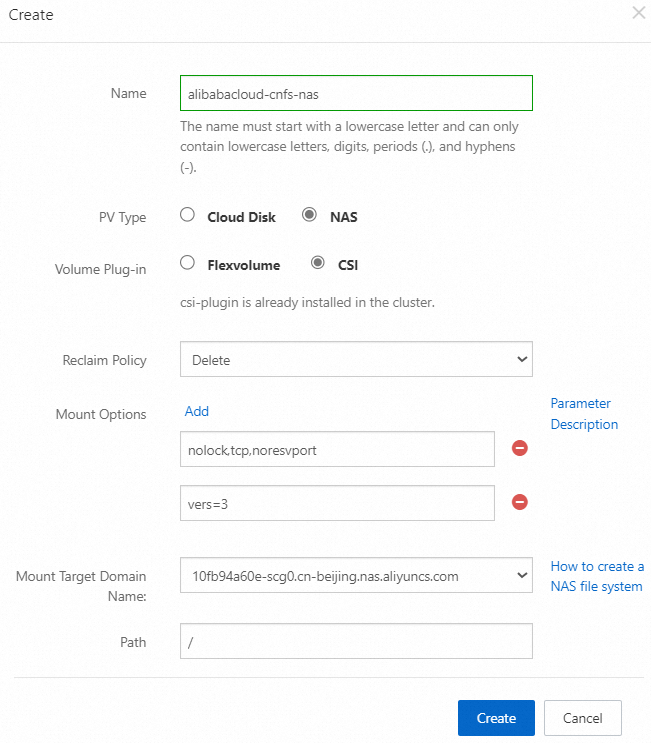

In the upper-right corner of the StorageClasses page, click Create, configure the parameters as shown below, and then click Create.

Create a Persistent Volume Claim (PVC) in the modelmesh-serving namespace:

    kubectl apply -f - <<EOF
    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: my-models-pvc
      namespace: modelmesh-serving
    spec:
      accessModes:
        - ReadWriteMany
      resources:
        requests:
          storage: 1Gi
      storageClassName: alibabacloud-cnfs-nas
      volumeMode: Filesystem
    EOF

Verify that the PVC is bound:

    kubectl get pvc -n modelmesh-serving

Expected output:

    NAME            STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS            AGE
    my-models-pvc   Bound    nas-379c32e1-c0ef-43f3-8277-9eb4606b53f8   1Gi        RWX            alibabacloud-cnfs-nas   2h

Upload the model file

Create a temporary pod that mounts the PVC:

    kubectl apply -n modelmesh-serving -f - <<EOF
    apiVersion: v1
    kind: Pod
    metadata:
      name: pvc-access
    spec:
      containers:
        - name: main
          image: ubuntu
          command: ["/bin/sh", "-ec", "sleep 10000"]
          volumeMounts:
            - name: my-pvc
              mountPath: /mnt/models
      volumes:
        - name: my-pvc
          persistentVolumeClaim:
            claimName: my-models-pvc
    EOF

Wait for the pod to start:

    kubectl get pods -n modelmesh-serving | grep pvc-access

Expected output:

    pvc-access   1/1   Running   0   51m

Download the mnist-svm.joblib model file from the kserve/modelmesh-minio-examples repository, then copy it into the pod:

    kubectl -n modelmesh-serving cp mnist-svm.joblib pvc-access:/mnt/models/

Verify that the file exists on the persistent volume:

    kubectl -n modelmesh-serving exec -it pvc-access -- ls -alr /mnt/models/

Expected output:

    -rw-r--r-- 1 501 staff 344817 Oct 30 11:23 mnist-svm.joblib

Create the InferenceService

Deploy the sklearn-mnist InferenceService:

    kubectl apply -f - <<EOF
    apiVersion: serving.kserve.io/v1beta1
    kind: InferenceService
    metadata:
      name: sklearn-mnist
      namespace: modelmesh-serving
      annotations:
        serving.kserve.io/deploymentMode: ModelMesh
    spec:
      predictor:
        model:
          modelFormat:
            name: sklearn
          storage:
            parameters:
              type: pvc
              name: my-models-pvc
            path: mnist-svm.joblib
    EOF

Wait for the InferenceService to become ready. This may take a few minutes depending on image pull speed.

    kubectl get isvc -n modelmesh-serving

Expected output:

    NAME            URL                                               READY
    sklearn-mnist   grpc://modelmesh-serving.modelmesh-serving:8033   True
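If you want to script the wait instead of re-running the command by hand, a polling loop along the following lines may help. It is a sketch under assumptions: it presumes that kubectl is on the PATH and already configured for the cluster, and that the READY column reflects a condition of type `Ready` under `.status.conditions` of the InferenceService. The function names, timeout, and polling interval are hypothetical.

```python
import json
import subprocess
import time


def is_ready(isvc):
    """True if the InferenceService object reports a Ready condition with status "True"."""
    conditions = isvc.get("status", {}).get("conditions", [])
    return any(
        c.get("type") == "Ready" and c.get("status") == "True" for c in conditions
    )


def wait_until_ready(name, namespace, timeout_s=600, interval_s=10):
    """Poll kubectl until the InferenceService is ready or the timeout expires."""
    deadline = time.monotonic() + timeout_s
    while time.monotonic() < deadline:
        out = subprocess.run(
            ["kubectl", "get", "isvc", name, "-n", namespace, "-o", "json"],
            capture_output=True, text=True, check=True,
        ).stdout
        if is_ready(json.loads(out)):
            return True
        time.sleep(interval_s)
    return False


# Example call (requires cluster access):
# wait_until_ready("sklearn-mnist", "modelmesh-serving")
```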

Run an inference request

Send a test request to classify a handwritten digit. The fp32_contents array contains 64 grayscale pixel values from an 8x8 image scan.

    MODEL_NAME="sklearn-mnist"
    ASM_GW_IP="<IP-address-of-the-ingress-gateway>"
    curl -X POST -k "http://${ASM_GW_IP}:8008/v2/models/${MODEL_NAME}/infer" \
      -d '{
        "inputs": [{
          "name": "predict",
          "shape": [1, 64],
          "datatype": "FP32",
          "contents": {
            "fp32_contents": [0.0, 0.0, 1.0, 11.0, 14.0, 15.0, 3.0, 0.0, 0.0, 1.0, 13.0, 16.0, 12.0, 16.0, 8.0, 0.0, 0.0, 8.0, 16.0, 4.0, 6.0, 16.0, 5.0, 0.0, 0.0, 5.0, 15.0, 11.0, 13.0, 14.0, 0.0, 0.0, 0.0, 0.0, 2.0, 12.0, 16.0, 13.0, 0.0, 0.0, 0.0, 0.0, 0.0, 13.0, 16.0, 16.0, 6.0, 0.0, 0.0, 0.0, 0.0, 16.0, 16.0, 16.0, 7.0, 0.0, 0.0, 0.0, 0.0, 11.0, 13.0, 12.0, 1.0, 0.0]
          }
        }]
      }'

Replace <IP-address-of-the-ingress-gateway> with the actual IP address of your ASM ingress gateway.
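The same request can also be sent from a script. The following sketch uses only the Python standard library and mirrors the curl command: it builds the request body, posts it to the gateway, and reads the predicted digit from the reply. The function names are hypothetical, and `parse_prediction` assumes the response layout shown in the expected response in this guide, where INT64 values are encoded as JSON strings.

```python
import json
import urllib.request


def build_infer_request(pixels):
    """Build the request body for one flattened 8x8 image (64 values)."""
    if len(pixels) != 64:
        raise ValueError(f"expected 64 pixel values, got {len(pixels)}")
    return {
        "inputs": [{
            "name": "predict",
            "shape": [1, 64],
            "datatype": "FP32",
            "contents": {"fp32_contents": [float(p) for p in pixels]},
        }]
    }


def parse_prediction(response):
    """Extract the predicted digit; int64 values arrive as JSON strings."""
    return int(response["outputs"][0]["contents"]["int64Contents"][0])


def predict(gateway_ip, model_name, pixels, timeout_s=30):
    """POST the image to the ingress gateway and return the predicted digit."""
    url = f"http://{gateway_ip}:8008/v2/models/{model_name}/infer"
    body = json.dumps(build_infer_request(pixels)).encode("utf-8")
    # No Content-Type is set explicitly, so urllib uses its default,
    # as curl does with -d.
    request = urllib.request.Request(url, data=body, method="POST")
    with urllib.request.urlopen(request, timeout=timeout_s) as reply:
        return parse_prediction(json.load(reply))


# Example call (requires a reachable gateway):
# predict("<IP-address-of-the-ingress-gateway>", "sklearn-mnist", pixels)
```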

Expected response -- the model classifies the digit as 8:

    {
      "modelName": "sklearn-mnist__isvc-3c10c62d34",
      "outputs": [
        {
          "name": "predict",
          "datatype": "INT64",
          "shape": ["1", "1"],
          "contents": {
            "int64Contents": ["8"]
          }
        }
      ]
    }

What's next

Custom runtimes: If the built-in runtimes do not meet your requirements, create a custom serving runtime. See Create a custom model serving runtime with ModelMesh.

Large language models: To serve an LLM as an inference service, see Use an LLM as an inference service.

Troubleshooting: If pods fail to start, see Pod troubleshooting.