Filebeat collects logs from servers and forwards them to downstream systems. When Filebeat and your ApsaraMQ for Kafka instance are in the same virtual private cloud (VPC), you can send logs directly to a Kafka topic over the internal network. This keeps log traffic off the public internet and reduces latency.

This guide walks you through the full setup: retrieving the broker endpoint, creating a topic, configuring Filebeat, and verifying message delivery.

Before you begin

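Make sure you have:

An ApsaraMQ for Kafka instance deployed in a VPC.

Filebeat installed on an Elastic Compute Service (ECS) instance in the same VPC as the ApsaraMQ for Kafka instance.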

Step 1: Get the VPC endpoint

Filebeat connects to ApsaraMQ for Kafka through a broker endpoint. Retrieve it from the console.

Log on to the ApsaraMQ for Kafka console.

In the Resource Distribution section of the Overview page, select the region where your instance is deployed.

On the Instances page, click the instance name.

On the Instance Details page, locate the VPC endpoint in the Endpoint Information section. If your instance uses SASL authentication, also note the Username and Password in the Configuration Information section.

ApsaraMQ for Kafka provides several endpoint types (default, SASL, and others). For details on when to use each type, see Comparison among endpoints.
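Filebeat expects one entry per broker under hosts. The following minimal Python sketch splits the VPC endpoint into those entries. It assumes the console shows the endpoint as a single comma-separated string of host:port pairs. The function endpoint_to_hosts and the broker names in the usage example are hypothetical.

```python
def endpoint_to_hosts(endpoint: str) -> list[str]:
    """Split a comma-separated endpoint string into "host:port" entries.

    Assumes the console displays the endpoint as "host1:port,host2:port,...".
    Raises ValueError for an entry without a host or a numeric port.
    """
    hosts = []
    for raw in endpoint.split(","):
        entry = raw.strip()
        if not entry:
            continue  # tolerate trailing commas and stray whitespace
        host, sep, port = entry.rpartition(":")
        if not sep or not host or not port.isdigit():
            raise ValueError(f"expected host:port, got {entry!r}")
        hosts.append(f"{host}:{port}")
    if not hosts:
        raise ValueError("endpoint string contains no broker addresses")
    return hosts


if __name__ == "__main__":
    # Hypothetical broker names, for illustration only.
    sample = "broker-1.example.internal:9092,broker-2.example.internal:9092, broker-3.example.internal:9092"
    for host in endpoint_to_hosts(sample):
        # Print each broker as a YAML list item for the hosts setting.
        print(f'    - "{host}"')
```

Splitting on the last colon with rpartition keeps the port separate even if a hostname contains other punctuation.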

Step 2: Create a topic

Create a topic to receive the messages that Filebeat sends.

The topic must be in the same region as the ECS instance that runs Filebeat. Topics cannot be used across regions. For example, if Filebeat runs on an ECS instance in the China (Beijing) region, create the topic in China (Beijing) as well.

Log on to the ApsaraMQ for Kafka console.

In the Resource Distribution section of the Overview page, select the region where your instance is deployed.

On the Instances page, click the instance name.

In the left-side navigation pane, click Topics.

On the Topics page, click Create Topic.

In the Create Topic panel, configure the following parameters and click OK.

Parameter | Description | Example |

Name | The topic name. | filebeat |

Description | A brief description of the topic. | Filebeat log output |

Partitions | The number of partitions. | 12 |

Storage Engine | The storage engine for messages. Cloud Storage: uses Alibaba Cloud disks with three replicas in distributed mode, which provides low latency and high durability. Local Storage: uses the in-sync replicas (ISR) algorithm of Apache Kafka with three replicas. If you created a Standard (High Write) instance, only Cloud Storage is available. You can choose the storage engine only for non-serverless Professional Edition instances; other instance types use Cloud Storage by default. | Cloud Storage |

Message Type | The message ordering behavior. Normal Message: messages with the same key go to the same partition in the order they are sent, but the order may not be preserved if a broker fails. Selected automatically when Storage Engine is Cloud Storage. Partitionally Ordered Message: messages with the same key go to the same partition in the order they are sent, and the order is preserved if a broker fails, although some partitions become temporarily unavailable. Selected automatically when Storage Engine is Local Storage. | Normal Message |

Log Cleanup Policy | Available only when Storage Engine is Local Storage (Professional Edition). Delete: retains messages for the maximum retention period and deletes the oldest messages when storage usage exceeds 85%. Compact: retains only the latest value for each key, as in Apache Kafka log compaction. Use Compact only for specific components such as Kafka Connect and Confluent Schema Registry. For more information, see aliware-kafka-demos. | Delete |

Tag | Optional tags to attach to the topic. | filebeat |
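The dependencies among the topic parameters can be summarized in a small Python sketch. It is not an ApsaraMQ for Kafka API. It only encodes the rules stated in the parameter table. The function resolve_topic_settings, the engine_selectable flag, and the choice of Delete as the Local Storage default are assumptions for illustration.

```python
from typing import Optional

CLOUD_STORAGE = "Cloud Storage"
LOCAL_STORAGE = "Local Storage"


def resolve_topic_settings(storage_engine: str,
                           cleanup_policy: Optional[str] = None,
                           engine_selectable: bool = True) -> dict:
    """Apply the Create Topic rules described in the parameter table.

    engine_selectable is a hypothetical flag: True for non-serverless
    Professional Edition instances, the only type that can choose an engine.
    """
    if not engine_selectable and storage_engine != CLOUD_STORAGE:
        raise ValueError("this instance type supports only Cloud Storage")
    if storage_engine == CLOUD_STORAGE:
        # Cloud Storage implies Normal Message and has no cleanup policy option.
        if cleanup_policy is not None:
            raise ValueError("Log Cleanup Policy requires Local Storage")
        return {"storage_engine": storage_engine, "message_type": "Normal Message"}
    if storage_engine == LOCAL_STORAGE:
        policy = cleanup_policy or "Delete"  # assumed default, matching the table's example
        if policy not in ("Delete", "Compact"):
            raise ValueError(f"unknown Log Cleanup Policy: {policy!r}")
        return {
            "storage_engine": storage_engine,
            "message_type": "Partitionally Ordered Message",
            "cleanup_policy": policy,
        }
    raise ValueError(f"unknown storage engine: {storage_engine!r}")


if __name__ == "__main__":
    print(resolve_topic_settings(CLOUD_STORAGE))
    print(resolve_topic_settings(LOCAL_STORAGE, "Compact"))
```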

After the topic is created, it appears on the Topics page.

Step 3: Configure and start Filebeat

Create a Filebeat configuration file that sends output to your ApsaraMQ for Kafka topic, then start Filebeat.

Write the configuration file

Go to the Filebeat installation directory.

Create a file named output.yml with the following content:

filebeat.inputs:
  - type: stdin

output.kafka:
  # Replace with your VPC endpoint addresses (default endpoint or SASL endpoint).
  hosts:
    - "<endpoint-1>:9092"
    - "<endpoint-2>:9092"
    - "<endpoint-3>:9092"
  topic: "<your-topic-name>"
  required_acks: 1
  compression: none
  max_message_bytes: 1000000
  # If your instance uses SASL authentication, uncomment the following lines.
  # username: "<your-username>"
  # password: "<your-password>"

Replace the following placeholders with your actual values:

Placeholder | Description | Example |

<endpoint-1>, <endpoint-2>, <endpoint-3> | VPC endpoint addresses from Step 1 |  |

<your-topic-name> | Topic name from Step 2 | filebeat |

<your-username> | Username from the Configuration Information section (SASL only) | -- |

<your-password> | Password from the Configuration Information section (SASL only) | -- |
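If you set up several servers, you may prefer to generate the configuration file rather than edit placeholders by hand. The following Python sketch renders a file equivalent to the example configuration, without its comments. It adds username and password only when both are supplied. The helper render_filebeat_config is hypothetical. It relies on the fact that a JSON string literal is also a valid YAML double-quoted string.

```python
import json
from typing import Optional, Sequence


def render_filebeat_config(hosts: Sequence[str], topic: str,
                           username: Optional[str] = None,
                           password: Optional[str] = None) -> str:
    """Render a Filebeat configuration with the placeholders filled in."""
    if not hosts:
        raise ValueError("at least one broker address is required")
    if (username is None) != (password is None):
        raise ValueError("set both username and password, or neither")
    lines = [
        "filebeat.inputs:",
        "  - type: stdin",
        "",
        "output.kafka:",
        "  hosts:",
    ]
    # json.dumps produces double-quoted, escaped strings that YAML also accepts.
    lines += [f"    - {json.dumps(h)}" for h in hosts]
    lines += [
        f"  topic: {json.dumps(topic)}",
        "  required_acks: 1",
        "  compression: none",
        "  max_message_bytes: 1000000",
    ]
    if username is not None:
        # SASL credentials, only for instances that use SASL authentication.
        lines += [f"  username: {json.dumps(username)}",
                  f"  password: {json.dumps(password)}"]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical values; substitute your own endpoint addresses and topic.
    print(render_filebeat_config(["broker-1.example.internal:9092"], "filebeat"), end="")
```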

The following table describes each output.kafka parameter:

Parameter | Description | Default |

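hosts | Broker addresses for the VPC endpoint. ApsaraMQ for Kafka supports the default endpoint and the SASL endpoint. | -- |

topic | The target topic name. | -- |

required_acks | The ACK reliability level. 0: no response is required. 1: waits for the leader to commit the message locally. -1: waits for all replicas to commit the message. | 1 |

compression | Compression codec applied to messages. Valid values: none, snappy, lz4, and gzip. | gzip |

max_message_bytes | Maximum size of a single message in bytes. Must be smaller than the maximum message size configured on the ApsaraMQ for Kafka instance. | 1000000 |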
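The constraints on the output.kafka parameters can be checked before you start Filebeat. The Python sketch below is a hypothetical pre-flight check, not part of Filebeat. It accepts only the compression codecs none, snappy, lz4, and gzip. If you use another codec, check the documentation for your Filebeat version. You supply instance_max_bytes yourself from your instance's settings.

```python
VALID_REQUIRED_ACKS = {-1, 0, 1}
VALID_COMPRESSION = {"none", "snappy", "lz4", "gzip"}


def check_kafka_output(required_acks: int, compression: str,
                       max_message_bytes: int, instance_max_bytes: int) -> list[str]:
    """Return a list of problems with the output.kafka settings; empty means OK.

    instance_max_bytes is the maximum message size configured on your
    ApsaraMQ for Kafka instance (look it up; no default is assumed here).
    """
    problems = []
    # bool is a subclass of int, so reject True/False explicitly.
    if isinstance(required_acks, bool) or required_acks not in VALID_REQUIRED_ACKS:
        problems.append(f"required_acks must be -1, 0, or 1, got {required_acks!r}")
    if compression not in VALID_COMPRESSION:
        problems.append(f"unsupported compression codec: {compression!r}")
    if max_message_bytes <= 0:
        problems.append("max_message_bytes must be positive")
    elif max_message_bytes >= instance_max_bytes:
        problems.append("max_message_bytes must be smaller than the instance's maximum message size")
    return problems


if __name__ == "__main__":
    # 2_000_000 is a hypothetical instance limit, for illustration only.
    print(check_kafka_output(1, "none", 1_000_000, 2_000_000))  # prints []
```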

For the full parameter reference, see Kafka output plugin in the Filebeat documentation.

Start Filebeat and send a test message

Run the following command from the Filebeat installation directory:

./filebeat -c ./output.yml

Type test and press Enter. Filebeat sends this input as a message to the specified topic.

Step 4: Verify message delivery

Check the topic partition status to confirm that messages arrived.

Log on to the ApsaraMQ for Kafka console.

In the Resource Distribution section of the Overview page, select the region where your instance is deployed.

On the Instances page, click the instance name.

In the left-side navigation pane, click Topics.

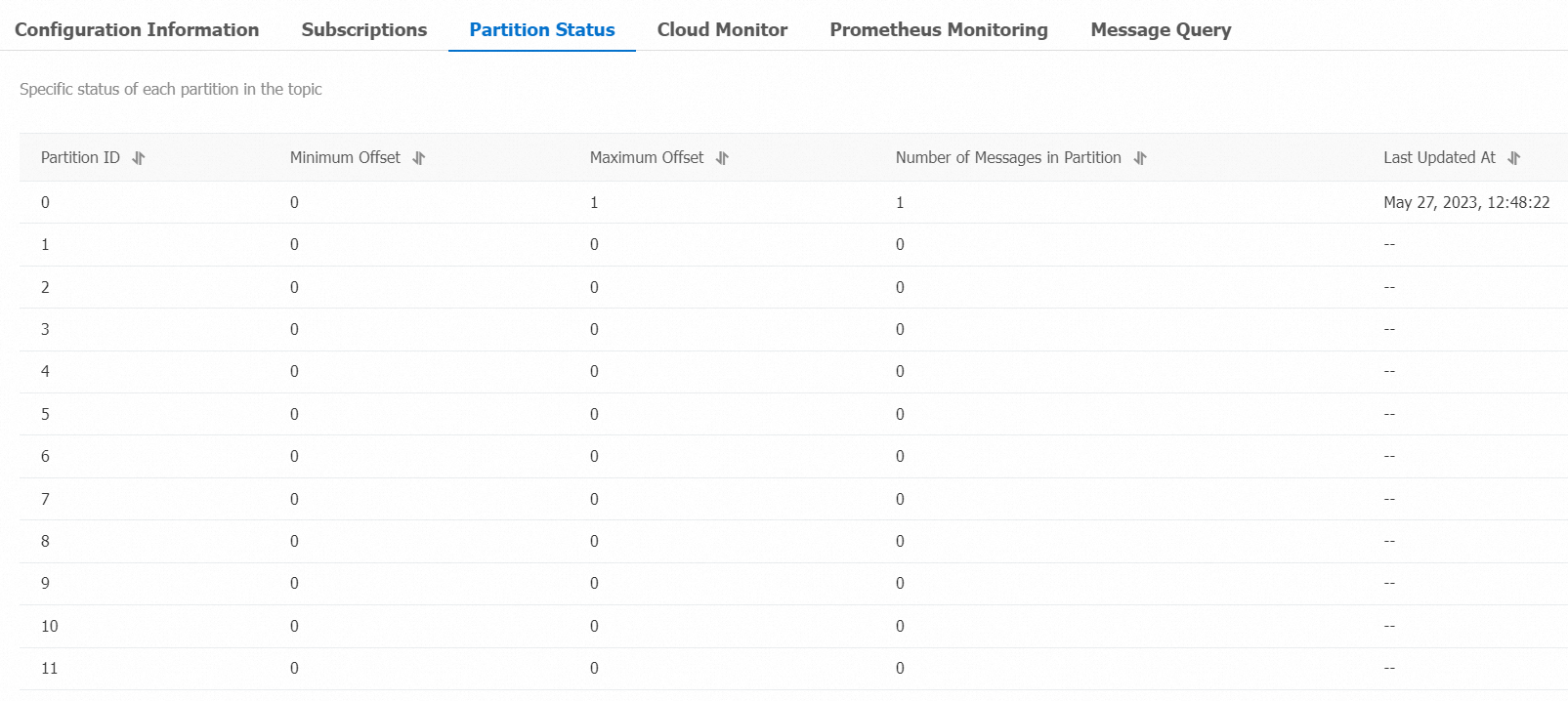

Click the topic name. On the Topic Details page, click the Partition Status tab.

Parameter | Description |

Partition ID | The partition ID. |

Minimum Offset | The earliest offset. |

Maximum Offset | The latest offset. |

Messages | Number of messages in the partition. |

Last Updated At | When the most recent message was stored. |

If Messages is greater than zero for any partition, Filebeat is delivering data to ApsaraMQ for Kafka.
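A single test message lands in exactly one of the topic's partitions, so check every partition rather than only the first one. The sketch below illustrates how the offset columns relate to the message count. It assumes that Maximum Offset is the log-end offset, meaning the offset the next message will receive. This is an assumption about how the console reports offsets, and counts can also differ for compacted topics. The function names are hypothetical.

```python
from typing import Iterable, Tuple


def messages_in_partition(min_offset: int, max_offset: int) -> int:
    """Approximate message count for one partition.

    Assumes Maximum Offset is the log-end offset (the offset the next message
    will receive), so the count is max_offset - min_offset.
    """
    if max_offset < min_offset:
        raise ValueError("max_offset must not be smaller than min_offset")
    return max_offset - min_offset


def delivery_confirmed(partitions: Iterable[Tuple[int, int]]) -> bool:
    """True if any partition, given as (min_offset, max_offset), holds a message."""
    return any(messages_in_partition(lo, hi) > 0 for lo, hi in partitions)


if __name__ == "__main__":
    # Hypothetical readings for a 12-partition topic after one test message.
    readings = [(0, 0)] * 11 + [(0, 1)]
    print(delivery_confirmed(readings))  # prints True
```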

Step 5: Query a message by offset

Look up a specific message by partition and offset to inspect its content.

Log on to the ApsaraMQ for Kafka console.

In the Resource Distribution section of the Overview page, select the region where your instance is deployed.

On the Instances page, click the instance name.

In the left-side navigation pane, click Message Query.

From the Search Method drop-down list, select Search by offset.

Select a Topic and a Partition, enter an Offset value, and click Search. The console returns all messages with an offset greater than or equal to the value you specified. For example, selecting partition 5 with offset 5 returns all messages from partition 5 starting at offset 5.

Parameter | Description |

Partition | The partition from which the message was retrieved. |

Offset | The position of the message within the partition. |

Key | The message key, displayed as a string. |

Value | The message body (content), displayed as a string. |

Created At | The time when the message was produced. If the producer set a custom timestamp on the record, the console displays that timestamp. |

Actions | Download Key: downloads the message key. Download Value: downloads the message body. The console displays up to 1 KB of each message and truncates anything larger, so download the message to view its full content. Each download is limited to 10 MB. |
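The search-by-offset behavior and the console's display limit can be modeled in a few lines of Python. This is a hypothetical sketch of the semantics, not a console or SDK API. search_by_offset returns the records of one partition at or after the given offset. display_value truncates a message body to 1 KB and decodes it as UTF-8. Treating 1 KB as 1,024 bytes and using UTF-8 decoding are both assumptions.

```python
from dataclasses import dataclass
from typing import Iterable, List

DISPLAY_LIMIT_BYTES = 1024  # assumes "1 KB" means 1,024 bytes


@dataclass
class Record:
    partition: int
    offset: int
    value: bytes


def search_by_offset(records: Iterable[Record], partition: int, offset: int) -> List[Record]:
    """Return the records in `partition` whose offset is >= `offset`, in offset order."""
    hits = [r for r in records if r.partition == partition and r.offset >= offset]
    return sorted(hits, key=lambda r: r.offset)


def display_value(value: bytes, limit: int = DISPLAY_LIMIT_BYTES) -> str:
    """Show at most `limit` bytes of a message body as text.

    Decoding as UTF-8 is an assumption; undecodable bytes become U+FFFD.
    """
    return value[:limit].decode("utf-8", errors="replace")


if __name__ == "__main__":
    # Hypothetical records in partition 5, for illustration only.
    records = [Record(5, i, b"log line %d" % i) for i in range(8)]
    for r in search_by_offset(records, partition=5, offset=5):
        print(r.offset, display_value(r.value))
```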