Messages in an ApsaraMQ for Kafka topic may not be evenly distributed across partitions. This can be expected behavior or a sign of a misconfigured producer. This topic explains how partition assignment works, what causes uneven distribution, and how to resolve it.

Symptom

Some partitions receive significantly more messages than others, and some partitions receive no messages at all.

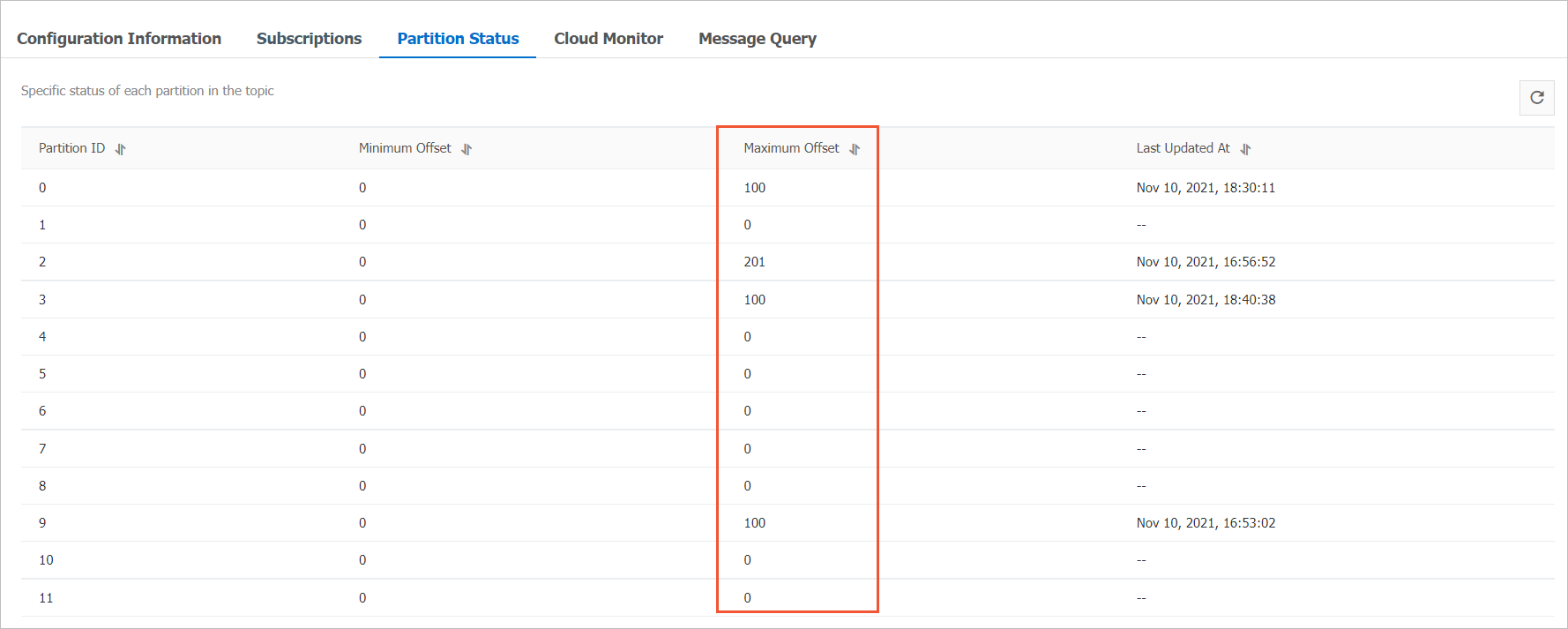

To check the distribution, open the Topic Details page in the ApsaraMQ for Kafka console and click the Partition Status tab. The offset value for each partition indicates how many messages it contains.

In this example, Partition 2 has far more messages than the other partitions, and several partitions have none.

How partition assignment works

When a producer sends a message, one of three mechanisms determines which partition receives it:

| Scenario | Behavior |
|---|---|
| Explicit partition | The producer specifies a partition number directly. The message always goes to that partition. |
| Message key provided | The client hashes the key with the murmur2 algorithm and maps the result to a partition. Messages with the same key always go to the same partition. |
| No partition or key | The default partitioner uses a sticky strategy: it sends consecutive messages to the same partition until a batch is full, then switches to another partition. Over time, messages distribute roughly evenly. |
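The key-based row can be illustrated with a short sketch. The code below is an approximation of the hashing step, written to mirror the murmur2 variant used by the Apache Kafka Java client as commonly documented. The function names `murmur2` and `partition_for_key` are chosen here for illustration, and the output should be verified against your actual client before you rely on it to predict partitions.

```python
MASK32 = 0xFFFFFFFF  # keep intermediate values in 32 bits, as Java int arithmetic does


def murmur2(data: bytes) -> int:
    """32-bit murmur2 hash (unsigned result), modeled on the Kafka Java client's variant."""
    seed = 0x9747B28C
    m = 0x5BD1E995
    r = 24
    length = len(data)
    h = (seed ^ length) & MASK32

    # Mix the input four bytes at a time, read as little-endian integers.
    for i in range(0, length - length % 4, 4):
        k = int.from_bytes(data[i:i + 4], "little")
        k = (k * m) & MASK32
        k ^= k >> r
        k = (k * m) & MASK32
        h = (h * m) & MASK32
        h ^= k

    # Fold in the remaining one to three bytes, if any.
    tail = data[length - length % 4:]
    if len(tail) == 3:
        h ^= tail[2] << 16
    if len(tail) >= 2:
        h ^= tail[1] << 8
    if len(tail) >= 1:
        h ^= tail[0]
        h = (h * m) & MASK32

    # Final avalanche steps.
    h ^= h >> 13
    h = (h * m) & MASK32
    h ^= h >> 15
    return h


def partition_for_key(key: bytes, num_partitions: int) -> int:
    """Clear the sign bit of the hash, then take the remainder by the partition count."""
    return (murmur2(key) & 0x7FFFFFFF) % num_partitions
```

Because the mapping depends only on the key bytes and the partition count, the same key always resolves to the same partition as long as the number of partitions does not change.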

The sticky partitioner (default since Kafka 2.4) intentionally creates short-term unevenness. It batches messages to the same partition to reduce latency. If you check partition offsets at a single point in time, some variation is normal.
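The effect of the sticky strategy on a snapshot can be shown with a small simulation. This is a simplified model, not the client's implementation: it counts a batch in messages (the `batch_size` parameter is an assumption made here, whereas a real producer fills batches by size and time), and it picks the next partition at random.

```python
import random


def simulate_sticky(num_messages, num_partitions, batch_size, seed=0):
    """Return per-partition message counts under a simplified sticky strategy."""
    rng = random.Random(seed)
    counts = [0] * num_partitions
    current = rng.randrange(num_partitions)
    in_batch = 0
    for _ in range(num_messages):
        if in_batch == batch_size:
            # The batch is full: switch to a different, randomly chosen partition.
            current = rng.choice([p for p in range(num_partitions) if p != current])
            in_batch = 0
        counts[current] += 1
        in_batch += 1
    return counts


snapshot = simulate_sticky(150, 12, 100)      # short window: only two partitions are touched
long_run = simulate_sticky(120_000, 12, 100)  # long window: counts are far closer together
```

In the short window, ten of the twelve partitions are still empty, which resembles the symptom described above even though nothing is misconfigured.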

Causes and solutions

Cause 1: Messages are sent to a hardcoded partition

If the producer explicitly sets the partition field when creating a message record, all messages go to that partition. Other partitions receive nothing.

Solution:

Remove the explicit partition assignment from the producer code. Let the client determine partitions based on the message key or the default partitioner.

If you must target specific partitions, distribute messages across all intended partitions rather than a single one.
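If explicit targeting is unavoidable, one possible approach is to rotate through the intended partitions instead of fixing one. The helper name `make_partition_picker` is hypothetical, and the sketch shows only the selection logic, not the producer call that would use the result.

```python
from itertools import cycle


def make_partition_picker(target_partitions):
    """Return a function that rotates through the given partition numbers."""
    rotation = cycle(target_partitions)
    return lambda: next(rotation)


pick = make_partition_picker([0, 1, 2])
assigned = [pick() for _ in range(6)]  # [0, 1, 2, 0, 1, 2]
```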

Cause 2: Skewed message key distribution

When all messages share the same key or a small set of keys, the hash-based assignment concentrates messages in a few partitions.

Solution:

Review the key space. Choose keys that distribute evenly across the expected message volume. For example, use a user ID, order ID, or other high-cardinality identifier instead of a fixed category name.

If partition affinity is not required, omit the message key. The default partitioner distributes messages across all partitions automatically.
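A quick way to review the key space is to count how often each key appears in a sample of messages. The sketch below assumes you can export a list of keys from your producer or logs. The function name `key_skew_report` and the sample data are made up for illustration.

```python
from collections import Counter


def key_skew_report(keys, num_partitions):
    """Summarize how concentrated a sample of message keys is."""
    counts = Counter(keys)
    total = sum(counts.values())
    top_key, top_count = counts.most_common(1)[0]
    return {
        "distinct_keys": len(counts),
        # Each key maps to exactly one partition, so this is an upper bound.
        "max_partitions_used": min(len(counts), num_partitions),
        "top_key": top_key,
        "top_key_share": top_count / total,
    }


# Hypothetical sample keyed by a fixed category name.
sample = ["electronics"] * 80 + ["books"] * 15 + ["toys"] * 5
report = key_skew_report(sample, num_partitions=12)
# report["max_partitions_used"] is 3 and report["top_key_share"] is 0.8
```

With three distinct keys, at most three of the twelve partitions can receive messages, and the partition that holds the most frequent key takes at least 80% of the traffic.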

Cause 3: Custom partitioner logic defect

If the producer uses a custom partitioner.class implementation, a bug in the partition selection logic can route most or all messages to a subset of partitions.

Solution:

Check the partitioner.class setting in the producer configuration. If it points to a custom class, review the implementation for logic errors.

Test the partitioner with a representative message sample and verify that it maps to all partitions.

If even distribution is the goal and no custom logic is needed, remove the partitioner.class setting to revert to the default partitioner.
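The coverage test can be sketched as a small harness that feeds sample keys through the selection logic and reports the partitions that were never chosen. The sketch assumes the partitioner logic can be expressed as a function of the key and the partition count. Both `unused_partitions` and `buggy_partitioner` are hypothetical names, and the bug shown is an invented example.

```python
def unused_partitions(partition_fn, sample_keys, num_partitions):
    """Return the partitions that no sample key maps to."""
    hit = {partition_fn(key, num_partitions) for key in sample_keys}
    invalid = sorted(p for p in hit if not 0 <= p < num_partitions)
    if invalid:
        raise ValueError(f"partitioner returned out-of-range partitions: {invalid}")
    return sorted(set(range(num_partitions)) - hit)


def buggy_partitioner(key, num_partitions):
    # Hypothetical defect: routes by key length, so keys of equal length collide.
    return len(key) % num_partitions


keys = [f"order-{i:04d}" for i in range(1000)]  # every key is 10 characters long
missing = unused_partitions(buggy_partitioner, keys, 12)
# missing lists every partition except 10
```

An empty result means that the sample reached every partition. A non-empty result points at the partitions the logic never selects.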

The RoundRobinPartitioner has a known issue (KAFKA-9965) that can cause uneven distribution when new batches are created. If you use this partitioner, consider switching to the default sticky partitioner.

When unevenness is expected

Not all uneven distribution is a problem. Consider these scenarios before taking action:

Short-term snapshots: The sticky partitioner batches messages to one partition at a time. A point-in-time snapshot of partition offsets almost always shows some variation. Check the distribution over a longer period to determine whether it evens out.

Key-based routing by design: If messages are keyed by a business identifier (such as a tenant ID), partitions that correspond to high-traffic tenants naturally receive more messages. This is expected when partition affinity matters more than even distribution.

Low message volume: With a small number of messages spread across many partitions, statistical variation alone can produce uneven distribution.
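The low-volume case can be quantified with a rough estimate. The calculation below assumes that each message lands on a partition independently and uniformly at random, which is an idealization and does not describe any specific partitioner. Under that assumption, the expected number of empty partitions is n × (1 − 1/n)^m for n partitions and m messages.

```python
def expected_empty_partitions(num_messages, num_partitions):
    """Expected number of partitions with zero messages under uniform random assignment."""
    return num_partitions * (1 - 1 / num_partitions) ** num_messages


few = expected_empty_partitions(12, 12)     # about 4.2 of 12 partitions stay empty
many = expected_empty_partitions(1200, 12)  # effectively zero
```

With as many messages as partitions, roughly a third of the partitions are expected to be empty by chance alone.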