Alibaba Cloud AI Containers (AC2) provides a set of AI container images that are optimized for and deeply integrated with Alibaba Cloud infrastructure, such as Elastic Compute Service (ECS) and Container Service for Kubernetes (ACK). These images can significantly reduce the cost of deploying AI application environments. This topic describes how to configure the runtime environment on an ECS instance and run an AC2 container for PyTorch training.

Purchase and connect to an ECS instance

To use AC2 on an ECS instance, you must first purchase and connect to an ECS instance. For more information, see Create and manage an ECS instance in the console (express version).

When you purchase an instance, you can choose from a wide selection of images. We recommend the Alibaba Cloud Linux 3.2104 LTS 64-bit image to ensure full service support.

Configure the ECS instance

Install Docker

Before you can use AC2, you must set up the Docker runtime environment. The installation steps for Docker vary depending on the operating system. For more information, see Install and use Docker and Docker Compose. The following steps show how to set up the Docker runtime environment on Alibaba Cloud Linux 3.

Add the Docker Community Edition (Docker-CE) software repository and install a compatible plugin.

sudo dnf config-manager --add-repo=https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo sudo dnf install -y --repo alinux3-plus dnf-plugin-releasever-adapterInstall Docker-CE.

sudo dnf install -y docker-ceAfter the installation is complete, run the following command to view the Docker version number and check whether the installation was successful.

docker -vStart the Docker service and enable it to start on system startup.

sudo systemctl start docker # Start the Docker service sudo systemctl enable docker # Set the Docker service to start on system startupCheck the status of the Docker service.

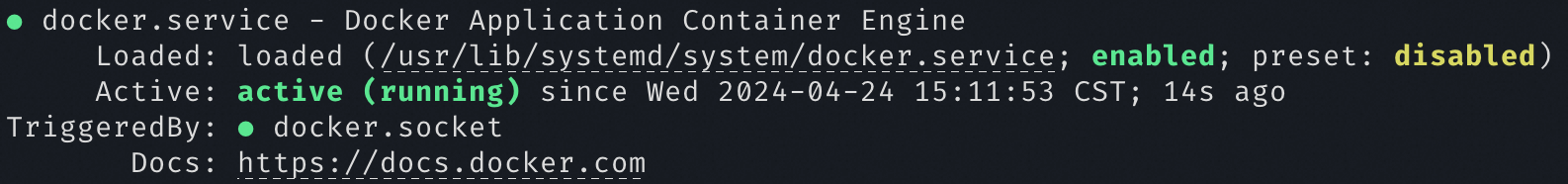

systemctl status dockerThe output in the following figure indicates that the Docker service has started successfully.
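If you prepare several instances, you can fold the manual checks above into a small shell function. The following is a minimal sketch and is not part of AC2. `docker_ready` is a hypothetical helper name, and the function assumes a systemd-based host such as Alibaba Cloud Linux 3. It only reads state and changes nothing.

```shell
#!/usr/bin/env bash
# Minimal sketch: docker_ready is a hypothetical helper, not an AC2 or Docker tool.
# Returns 0 and prints the client version when the Docker CLI is installed
# and the docker systemd service is active; otherwise prints a hint and returns 1.
docker_ready() {
  # Check that the docker client binary is on PATH.
  if ! command -v docker >/dev/null 2>&1; then
    echo "docker CLI not found; install docker-ce first" >&2
    return 1
  fi
  # systemctl is-active --quiet exits with 0 only when the unit is active.
  if ! systemctl is-active --quiet docker; then
    echo "docker service is not active; run: sudo systemctl start docker" >&2
    return 1
  fi
  # Print the client version, the same output as the docker -v step above.
  docker -v
}
```

For example, after you source the function, `docker_ready && echo "Docker is ready"` prints the version followed by the confirmation message.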

Install the NVIDIA Container Toolkit (for GPU-accelerated instances)

For GPU-accelerated instances, you must install the NVIDIA Container Toolkit to enable GPU support for containers. The NVIDIA Container Toolkit is supported on Alibaba Cloud Linux 3. Run the following commands to complete the configuration on a GPU-accelerated instance.

Add the NVIDIA CUDA repository, enable a driver module stream, and install the driver. To use a different driver version, enable a different module stream, such as 570-open or open-dkms. Run `dnf module list nvidia-driver` to view all available streams. For information about the recommended driver versions for different instances, see Automatically install or load a Tesla driver when you create a GPU-accelerated instance.

```shell
sudo dnf config-manager --add-repo=https://developer.download.nvidia.com/compute/cuda/repos/rhel8/x86_64/cuda-rhel8.repo
sudo dnf module enable nvidia-driver:570-open
sudo dnf install nvidia-driver-cuda kmod-nvidia-open-dkms
```

To uninstall the driver or switch to another version, run the following commands. They remove the installed driver and reset the module configuration.

```shell
sudo dnf module remove --all nvidia-driver
sudo dnf module reset nvidia-driver
```

Install the NVIDIA Container Toolkit.

```shell
sudo dnf install -y nvidia-container-toolkit
```

Restart the Docker daemon so that containers can access GPUs.

```shell
sudo systemctl restart docker
```

After the service restarts, Docker can pass GPUs through to containers. When you create a container, add the `--gpus <gpu-request>` parameter to specify which GPUs to pass through.
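The `<gpu-request>` value can take several forms. You can pass `all`, a GPU count such as `2`, or specific devices such as `device=0`. A list of several device indexes must keep its own double quotes, for example `--gpus '"device=0,1"'`, because Docker splits the value into comma-separated fields. The following sketch wraps these forms in `gpu_request`, a hypothetical helper used here for illustration. It is not an AC2 tool.

```shell
#!/usr/bin/env bash
# Minimal sketch: gpu_request is a hypothetical helper that prints a value for
# "docker run --gpus". Usage:
#   gpu_request all              -> all             (every GPU on the instance)
#   gpu_request count N          -> N               (any N GPUs)
#   gpu_request device ID[,ID]   -> "device=ID,ID"  (specific GPU indexes or UUIDs)
gpu_request() {
  case "$1" in
    all)
      printf '%s\n' all
      ;;
    count)
      # A GPU count must be a positive integer.
      if [[ ! "$2" =~ ^[1-9][0-9]*$ ]]; then
        echo "count must be a positive integer" >&2
        return 1
      fi
      printf '%s\n' "$2"
      ;;
    device)
      if [ -z "$2" ]; then
        echo "device needs at least one GPU index or UUID" >&2
        return 1
      fi
      # Literal double quotes keep a comma-separated list as one field.
      printf '"device=%s"\n' "$2"
      ;;
    *)
      echo "usage: gpu_request all | count N | device ID[,ID...]" >&2
      return 1
      ;;
  esac
}
```

For example, `sudo docker run --rm --gpus "$(gpu_request device 0,1)" <ac2-pytorch-gpu-image> nvidia-smi` should list only GPUs 0 and 1. This assumes that `nvidia-smi` is available inside the container.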

After the ECS instance is configured, you can pull AC2 images for development and deployment. For more information, see Alibaba Cloud AI Containers image list.
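GPU images are large. On a GPU-accelerated instance, you may want to confirm that the driver and the NVIDIA Container Toolkit installed earlier are usable before you pull one. The following minimal sketch assumes that the driver packages provide `nvidia-smi` and that the toolkit installs the `nvidia-ctk` command. `gpu_preflight` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Minimal sketch: gpu_preflight is a hypothetical helper, not an AC2 tool.
# Assumes nvidia-smi comes with the NVIDIA driver and nvidia-ctk with the
# NVIDIA Container Toolkit. Prints the driver version on success.
gpu_preflight() {
  local versions first
  # Query one driver version line per GPU. A failure means that the driver is not usable.
  if ! versions=$(nvidia-smi --query-gpu=driver_version --format=csv,noheader 2>/dev/null); then
    echo "nvidia-smi failed; check the NVIDIA driver installation" >&2
    return 1
  fi
  if ! command -v nvidia-ctk >/dev/null 2>&1; then
    echo "nvidia-ctk not found; install nvidia-container-toolkit" >&2
    return 1
  fi
  # All GPUs on one host use the same driver, so report the first line only.
  read -r first <<< "$versions"
  echo "NVIDIA driver $first"
}
```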

Use AC2 for training

AC2 provides images for many training frameworks, such as PyTorch and TensorFlow. These images integrate various AI runtime frameworks and contain verified runtime drivers and acceleration libraries for different platforms, such as NVIDIA drivers and CUDA. This can save developers significant time during environment deployment.

PyTorch framework images

Run a PyTorch CPU image to train a model

Run the CPU container image. Replace `<ac2-pytorch-cpu-image>` with the address of the PyTorch CPU image.

```shell
sudo docker run -it --rm <ac2-pytorch-cpu-image>
```

Download the sample code.

```shell
git clone https://github.com/pytorch/examples.git
```

Run the PyTorch MNIST sample code. The `--no-cuda` parameter makes the training task run on the CPU.

```shell
python3 examples/mnist/main.py --no-cuda
```
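You can also run the interactive steps above as a single non-interactive command, which is convenient in scripts. The following minimal sketch has several assumptions. The image must provide `sh`, `git`, and `python3`; the interactive steps already rely on the last two. The sketch also uses the `--epochs` option of the MNIST example to shorten the run. `run_mnist_cpu` and its `DRY_RUN` switch are hypothetical. Option names can change between revisions of the pytorch/examples repository, so if a flag is rejected, check `python3 examples/mnist/main.py --help`.

```shell
#!/usr/bin/env bash
# Minimal sketch: run_mnist_cpu is a hypothetical helper, not an AC2 tool.
# Usage: run_mnist_cpu IMAGE [EPOCHS]   (set DRY_RUN=1 to only print the command)
run_mnist_cpu() {
  local image="$1" epochs="${2:-1}"
  if [ -z "$image" ]; then
    echo "usage: run_mnist_cpu IMAGE [EPOCHS]" >&2
    return 1
  fi
  # EPOCHS is embedded in the sh -c string, so accept digits only.
  if [[ ! "$epochs" =~ ^[1-9][0-9]*$ ]]; then
    echo "EPOCHS must be a positive integer" >&2
    return 1
  fi
  # No -it: the container runs the script and exits, and --rm removes it afterwards.
  local cmd=(docker run --rm "$image" sh -c
    "git clone https://github.com/pytorch/examples.git && python3 examples/mnist/main.py --no-cuda --epochs $epochs")
  if [ "${DRY_RUN:-0}" = 1 ]; then
    # Print the command, with arguments joined by spaces, instead of running it.
    printf '%s\n' "${cmd[*]}"
    return 0
  fi
  "${cmd[@]}"
}
```

The function calls `docker` directly, so run it as root or as a user in the docker group.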

Run a PyTorch GPU image to train a model

Run the GPU container image. The `--gpus all` parameter allows the container to use all GPUs. Replace `<ac2-pytorch-gpu-image>` with the address of the PyTorch GPU image.

```shell
sudo docker run -it --rm --gpus all <ac2-pytorch-gpu-image>
```

Download the sample code.

```shell
git clone https://github.com/pytorch/examples.git
```

Run the PyTorch MNIST sample code. By default, training runs on the GPU.

```shell
python3 examples/mnist/main.py
```
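Before a long training run, you can confirm that PyTorch inside the GPU image actually detects the GPUs passed through by `--gpus all`. The following minimal sketch uses the standard `torch.cuda.is_available()` and `torch.cuda.device_count()` calls. `check_torch_gpu` is a hypothetical helper name, and the image address is a placeholder as above.

```shell
#!/usr/bin/env bash
# Minimal sketch: check_torch_gpu is a hypothetical helper, not an AC2 tool.
# Usage: check_torch_gpu IMAGE
# Prints "<cuda available> <device count>" and returns 0 only if CUDA is usable.
check_torch_gpu() {
  local image="$1" out
  out=$(docker run --rm --gpus all "$image" \
    python3 -c 'import torch; print(torch.cuda.is_available(), torch.cuda.device_count())') || return 1
  echo "$out"
  # The first field is "True" only when PyTorch can use CUDA in the container.
  [ "${out%% *}" = "True" ]
}
```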

Clean up the runtime environment

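After the training tasks are complete, follow these steps to clean up the container environment. Containers started with the `--rm` option, as in the preceding examples, are removed automatically when they exit. The container steps therefore apply only to containers that you started without that option.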

Run the following command to list all containers.

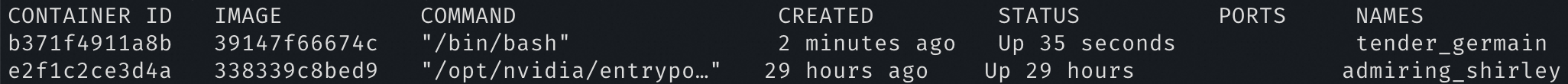

sudo docker ps -aThe command output lists information such as the container ID (CONTAINER ID), image (IMAGE), status (STATUS), and container name (NAMES).
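You may have started several containers from the same image, for example without `--rm`. In that case, you can apply the stop-and-remove procedure in the next step to all of them at once. The minimal sketch below uses the `ancestor` filter of `docker ps`. `remove_containers_of` is a hypothetical helper. Like the manual procedure, it stops containers before removing them.

```shell
#!/usr/bin/env bash
# Minimal sketch: remove_containers_of is a hypothetical helper, not an AC2 tool.
# Usage: remove_containers_of IMAGE   (run as root or as a member of the docker group)
remove_containers_of() {
  local image="$1"
  local -a ids
  # -a includes stopped containers, -q prints only IDs, and the ancestor
  # filter keeps containers created from IMAGE.
  mapfile -t ids < <(docker ps -aq --filter "ancestor=$image")
  if [ "${#ids[@]}" -eq 0 ]; then
    echo "no containers created from $image"
    return 0
  fi
  # Stop first (this succeeds for containers that are already stopped), then remove.
  docker stop "${ids[@]}" >/dev/null && docker rm "${ids[@]}"
}
```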

Run the following commands to stop and then remove a container. Replace `<CONTAINER ID>` with the ID of the container that you want to remove. The `docker rm` command does not remove a running container, so stop the container first.

```shell
sudo docker stop <CONTAINER ID>  # Stop the container
sudo docker rm <CONTAINER ID>    # Remove the container
```

Run the following command to list the downloaded images.

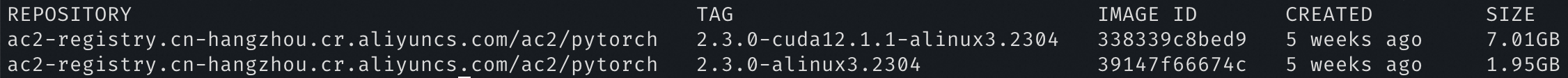

sudo docker imagesThe output shows information such as the image repository, image tag, and image ID.

Run the following command to delete the specified image.

Replace `<IMAGE ID>` with the ID of the image that you want to delete.

```shell
sudo docker rmi <IMAGE ID>
```
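To delete every local tag of one repository instead of one image ID at a time, you can remove the images by `REPOSITORY:TAG` reference and then prune dangling images. This is a minimal sketch, and `remove_images_of_repo` is a hypothetical helper. Note that `docker image prune -f` deletes all dangling (untagged) images on the host, not only those from AC2.

```shell
#!/usr/bin/env bash
# Minimal sketch: remove_images_of_repo is a hypothetical helper, not an AC2 tool.
# Usage: remove_images_of_repo REPOSITORY   (run as root or as a member of the docker group)
remove_images_of_repo() {
  local repo="$1" ref
  local -a refs=()
  # List every local REPOSITORY:TAG of the repository.
  while IFS= read -r ref; do
    # Skip untagged entries such as "repo:<none>".
    [[ "$ref" == *':<none>' ]] || refs+=("$ref")
  done < <(docker images --format '{{.Repository}}:{{.Tag}}' "$repo")
  if [ "${#refs[@]}" -gt 0 ]; then
    # Removing the last tag of an image deletes the image, provided no container uses it.
    docker rmi "${refs[@]}" || return 1
  else
    echo "no tagged images found for $repo"
  fi
  # Delete dangling (untagged) images on the host; -f skips the confirmation prompt.
  docker image prune -f
}
```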