The Application Load Balancer (ALB) multi-cluster gateway of Distributed Cloud Container Platform for Kubernetes (ACK One) lets you implement zone-disaster recovery across availability zones (AZs) — routing traffic by weight in steady state and failing over automatically when a cluster goes down. This topic walks you through the end-to-end setup using a Fleet instance, GitOps, and Ingress weight annotations.

How it works

A typical business architecture has three layers: the access layer that receives inbound traffic, the application layer that processes it, and the data layer that persists it. Zone-disaster recovery addresses each layer:

- Access layer: ACK One deploys the ALB instance across multiple zones within a region by default, so the entry point is inherently highly available.
- Application layer: An ALB multi-cluster gateway distributes traffic across clusters in different AZs using weight-based Ingress rules. When a cluster fails, ALB detects unhealthy pods and reroutes traffic to the remaining cluster automatically.
- Data layer: Disaster recovery and data synchronization have middleware dependencies (for example, ApsaraDB RDS). This topic does not cover data-layer configuration.
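The application-layer behavior can be illustrated with a small simulation. This is a minimal sketch, not ALB's actual routing algorithm: the function names, the cluster names, and the 20/80 weights are illustrative assumptions. It picks a cluster in proportion to its weight and skips clusters that have no healthy pods, so all traffic moves to the surviving cluster when one fails.

```python
import random
from collections import Counter


def pick_cluster(weights, healthy, rng):
    """Pick a cluster name in proportion to its weight, ignoring unhealthy clusters.

    weights: dict of cluster name -> integer weight (hypothetical names).
    healthy: set of cluster names that currently have healthy pods.
    rng:     a random.Random instance, passed in so runs are reproducible.
    """
    candidates = {name: w for name, w in weights.items() if name in healthy and w > 0}
    if not candidates:
        raise RuntimeError("no healthy cluster available")
    names = list(candidates)
    return rng.choices(names, weights=[candidates[n] for n in names], k=1)[0]


def simulate(weights, healthy, requests=1000, seed=0):
    """Return a Counter of how many simulated requests each cluster received."""
    rng = random.Random(seed)
    return Counter(pick_cluster(weights, healthy, rng) for _ in range(requests))


if __name__ == "__main__":
    weights = {"cluster-1": 20, "cluster-2": 80}
    # Steady state: both clusters are healthy, so traffic follows the weights.
    print(simulate(weights, healthy={"cluster-1", "cluster-2"}))
    # Failure: cluster-2 has no healthy pods, so cluster-1 receives everything.
    print(simulate(weights, healthy={"cluster-1"}))
```

In the steady-state run the counts land near a 20/80 split. In the failure run every request goes to `cluster-1`, which mirrors the failover described for the application layer.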

Zone-disaster recovery compared to other approaches

| Approach | Network latency | Scope of protection | Complexity |
|---|---|---|---|
| Zone-disaster recovery (this topic) | Low (same region) | Zone-level failures (power outage, network interruption, fire) | Low |
| Active geo-redundancy | Higher (cross-region) | Region-level disasters (flood, earthquake) | High |
| Three data centers across two zones | Low within a region, higher across regions | Zone-level and region-level combined | Highest |

ALB multi-cluster gateway vs. DNS-based traffic distribution

DNS-based traffic distribution requires a separate load balancer IP address for each cluster, and failover is delayed by client-side DNS caching, which causes temporary service interruptions. The ALB multi-cluster gateway approach:

- Uses a single IP address for the region, with multi-zone deployment by default
- Supports Layer 7 request forwarding and weight-based routing
- Fails over to backend pods in another cluster without client-side DNS cache delays
- Lets you manage all traffic rules from the Fleet instance, with no Ingress controller needed in each cluster

Architecture

This topic uses a web application (a Deployment and a Service) to demonstrate zone-disaster recovery in the China (Hong Kong) region:

- Cluster 1 runs in AZ 1; Cluster 2 runs in AZ 2.
- ACK One GitOps distributes the `web-demo` application to both clusters.
- An AlbConfig on the Fleet instance creates an ALB multi-cluster gateway that routes traffic across both clusters.
- Ingress weight annotations control the traffic split. When one cluster is unhealthy, ALB automatically shifts traffic to the other.
- Data synchronization (ApsaraDB RDS) has middleware dependencies and is outside the scope of this topic.

Prerequisites

Before you begin, ensure that you have:

- The Fleet management feature enabled. See Enable multi-cluster management.
- Two ACK clusters associated with the Fleet instance, both in the same virtual private cloud (VPC) as the Fleet instance. See Manage associated clusters.
- The security group inbound rules of each associated cluster configured to allow traffic from all IP addresses and ports within the vSwitch CIDR block (required for the ALB instance to reach backend pods)
- The kubeconfig file of the Fleet instance downloaded from the ACK One console and a kubectl client connected to the Fleet instance
- The latest version of Alibaba Cloud CLI installed and configured

Step 1: Distribute the application to multiple clusters

Use GitOps to deploy the web-demo application to both associated clusters. You can also use the multi-cluster application distribution feature — see Create a multi-cluster application and Get started with application distribution.

- Log on to the ACK One console. In the left-side navigation pane, choose Fleet > Multi-cluster Applications.
- In the upper-left corner of the Multi-cluster Applications page, click the icon to the right of the Fleet instance name and select your Fleet instance from the drop-down list.
- Choose Create Multi-cluster Application > GitOps to go to the Create Multi-cluster Application - GitOps page.

  If GitOps is not enabled for the Fleet instance, enable it first. See Enable GitOps for the Fleet instance. To allow internet access to GitOps, see Enable public access to Argo CD.
- On the Create from YAML tab, paste the following ApplicationSet and click OK.

  This ApplicationSet deploys `web-demo` to every cluster associated with the Fleet instance. The Quick Create tab provides a form-based alternative. Changes made there sync automatically to the YAML on the Create from YAML tab.

  ```yaml
  apiVersion: argoproj.io/v1alpha1
  kind: ApplicationSet
  metadata:
    name: appset-web-demo
    namespace: argocd
  spec:
    template:
      metadata:
        name: '{{.metadata.annotations.cluster_id}}-web-demo'
        namespace: argocd
      spec:
        destination:
          name: '{{.name}}'
          namespace: gateway-demo
        project: default
        source:
          repoURL: https://github.com/AliyunContainerService/gitops-demo.git
          path: manifests/helm/web-demo
          targetRevision: main
          helm:
            valueFiles:
              - values.yaml
            parameters:
              - name: envCluster
                value: '{{.metadata.annotations.cluster_name}}'
        syncPolicy:
          automated: {}
          syncOptions:
            - CreateNamespace=true
    generators:
      - clusters:
          selector:
            matchExpressions:
              - values:
                  - cluster
                key: argocd.argoproj.io/secret-type
                operator: In
              - values:
                  - in-cluster
                key: name
                operator: NotIn
    goTemplateOptions:
      - missingkey=error
    syncPolicy:
      preserveResourcesOnDeletion: false
    goTemplate: true
  ```
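The `generators` selector in the ApplicationSet determines which clusters receive the application. As a rough illustration of standard Kubernetes label-selector semantics for the `In` and `NotIn` operators, the sketch below evaluates the same two expressions against made-up label sets. The function name `matches` and the sample labels are hypothetical; Argo CD performs this matching internally, and the labels it actually sees in your environment may differ.

```python
def matches(labels, expressions):
    """Return True if a label set satisfies every matchExpressions entry.

    Only the In and NotIn operators are handled, following Kubernetes
    label-selector semantics: In requires the key to be present with one of
    the listed values; NotIn also matches when the key is absent.
    """
    for expr in expressions:
        value = labels.get(expr["key"])
        if expr["operator"] == "In":
            if value not in expr["values"]:
                return False
        elif expr["operator"] == "NotIn":
            if value in expr["values"]:
                return False
        else:
            raise ValueError(f"unsupported operator: {expr['operator']}")
    return True


# The two expressions from the ApplicationSet above.
EXPRESSIONS = [
    {"key": "argocd.argoproj.io/secret-type", "operator": "In", "values": ["cluster"]},
    {"key": "name", "operator": "NotIn", "values": ["in-cluster"]},
]

if __name__ == "__main__":
    # Hypothetical label sets for an associated cluster and the local cluster.
    associated = {"argocd.argoproj.io/secret-type": "cluster", "name": "cluster-1"}
    local = {"argocd.argoproj.io/secret-type": "cluster", "name": "in-cluster"}
    print(matches(associated, EXPRESSIONS))  # selected by the generator
    print(matches(local, EXPRESSIONS))       # excluded by the NotIn expression
```

Under these assumptions, the first expression keeps only entries registered as clusters, and the second excludes the entry named `in-cluster`, so the application is generated once per associated cluster.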

Step 2: Create the ALB multi-cluster gateway

Create an AlbConfig on the Fleet instance to provision an ALB instance and associate your clusters with it.

- Get the IDs of two vSwitches in the VPC where the Fleet instance resides.
- Create `gateway.yaml` with the following content. Replace `${vsw-id1}` and `${vsw-id2}` with the vSwitch IDs from the previous step, and replace `${cluster1}` and `${cluster2}` with the IDs of the associated clusters.

  ```yaml
  apiVersion: alibabacloud.com/v1
  kind: AlbConfig
  metadata:
    name: ackone-gateway-demo
    annotations:
      # Cluster IDs to associate with the ALB instance
      alb.ingress.kubernetes.io/remote-clusters: ${cluster1},${cluster2}
  spec:
    config:
      name: one-alb-demo
      addressType: Internet
      addressAllocatedMode: Fixed
      zoneMappings:
        - vSwitchId: ${vsw-id1}
        - vSwitchId: ${vsw-id2}
    listeners:
      - port: 8001
        protocol: HTTP
  ---
  apiVersion: networking.k8s.io/v1
  kind: IngressClass
  metadata:
    name: alb
  spec:
    controller: ingress.k8s.alibabacloud/alb
    parameters:
      apiGroup: alibabacloud.com
      kind: AlbConfig
      name: ackone-gateway-demo
  ```

  Key parameters:

  | Parameter | Required | Description |
  |---|---|---|
  | `metadata.name` | Yes | Name of the AlbConfig. |
  | `metadata.annotations: alb.ingress.kubernetes.io/remote-clusters` | Yes | Comma-separated IDs of the clusters to associate with the ALB instance. These clusters must already be associated with the Fleet instance. |
  | `spec.config.name` | No | Name of the ALB instance. |
  | `spec.config.addressType` | No | Network type: `Internet` (default, public-facing) or `Intranet` (VPC-internal). Internet-facing ALB instances require an elastic IP address (EIP) and incur instance and bandwidth fees. See Pay-as-you-go. |
  | `spec.config.zoneMappings` | Yes | vSwitch IDs for the ALB instance. Specify vSwitches in at least two zones supported by ALB to ensure high availability. See Regions and zones in which ALB is available and Create and manage a vSwitch. |
  | `spec.listeners` | No | Listener port and protocol. The example sets HTTP on port 8001. Keep this configuration, because ALB Ingresses require a listener before they can route traffic. |
- Apply the configuration:

  ```shell
  kubectl apply -f gateway.yaml
  ```
- Wait 1–3 minutes, then verify that the ALB multi-cluster gateway was created:

  ```shell
  kubectl get albconfig ackone-gateway-demo
  ```

  Expected output:

  ```
  NAME                  ALBID      DNSNAME                                PORT&PROTOCOL   CERTID   AGE
  ackone-gateway-demo   alb-xxxx   alb-xxxx.<regionid>.alb.aliyuncs.com                            4d9h
  ```

  Note the `DNSNAME` value. You will use it to send test requests in Step 4.
- Verify that the clusters are connected to the gateway:

  ```shell
  kubectl get albconfig ackone-gateway-demo -ojsonpath='{.status.loadBalancer.subClusters}'
  ```

  The output lists the IDs of the associated clusters.

Step 3: Configure Ingress routing rules

Create a namespace and an Ingress on the Fleet instance. The Ingress weight annotations control how traffic is split across clusters.

- Create the `gateway-demo` namespace on the Fleet instance. This must match the namespace where the application Services are deployed.
- Create `ingress-demo.yaml` with the following content. Replace `${cluster1-id}` and `${cluster2-id}` with the actual cluster IDs.

  The weights in the `alb.ingress.kubernetes.io/cluster-weight.*` annotations must sum to 100.

  ```yaml
  apiVersion: networking.k8s.io/v1
  kind: Ingress
  metadata:
    annotations:
      alb.ingress.kubernetes.io/listen-ports: |
        [{"HTTP": 8001}]
      alb.ingress.kubernetes.io/cluster-weight.${cluster1-id}: "20"
      alb.ingress.kubernetes.io/cluster-weight.${cluster2-id}: "80"
    name: web-demo
    namespace: gateway-demo
  spec:
    ingressClassName: alb
    rules:
      - host: alb.ingress.alibaba.com
        http:
          paths:
            - path: /svc1
              pathType: Prefix
              backend:
                service:
                  name: service1
                  port:
                    number: 80
  ```
- Apply the Ingress:

  ```shell
  kubectl apply -f ingress-demo.yaml -n gateway-demo
  ```
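The rule that the cluster weights must sum to 100 can be checked before the Ingress is applied. The following is a minimal sketch under stated assumptions: the helper `cluster_weight_annotations` and the placeholder cluster IDs are hypothetical, and the per-weight check (non-negative integers) is an assumption that goes beyond the sum rule stated in this topic.

```python
ANNOTATION_PREFIX = "alb.ingress.kubernetes.io/cluster-weight."


def cluster_weight_annotations(weights):
    """Build the cluster-weight annotations for an Ingress.

    weights: dict of cluster ID -> integer weight.
    Raises ValueError if a weight is not a non-negative integer (an assumed
    constraint) or if the weights do not sum to 100.
    """
    for cluster_id, weight in weights.items():
        if not isinstance(weight, int) or weight < 0:
            raise ValueError(f"weight for {cluster_id} must be a non-negative integer")
    total = sum(weights.values())
    if total != 100:
        raise ValueError(f"cluster weights must sum to 100, got {total}")
    # Annotation values are strings, as in the Ingress manifest.
    return {ANNOTATION_PREFIX + cluster_id: str(w) for cluster_id, w in weights.items()}


if __name__ == "__main__":
    # Placeholder IDs; substitute the real IDs of the associated clusters.
    for key, value in cluster_weight_annotations({"cluster1-id": 20, "cluster2-id": 80}).items():
        print(f'{key}: "{value}"')
```

A call with weights such as 30 and 80 raises `ValueError`, which catches a bad split before it reaches the Fleet instance.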

Step 4: Verify zone-disaster recovery

Verify weighted traffic distribution

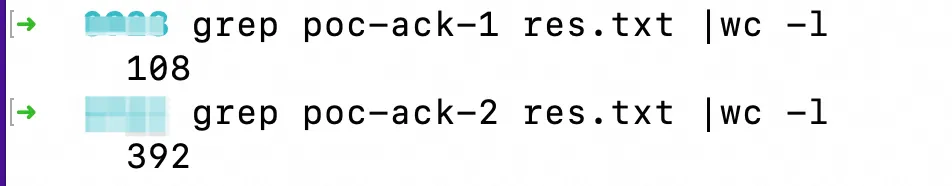

Send 500 requests to confirm the 20/80 traffic split between clusters.

Replace alb-xxxx.<regionid>.alb.aliyuncs.com with the DNSNAME from Step 2.

for i in {1..500}; do curl -H "host: alb.ingress.alibaba.com" alb-xxxx.<regionid>.alb.aliyuncs.com:8001/svc1; done > res.txtThe results show approximately 20% of responses from Cluster 1 (poc-ack-1) and 80% from Cluster 2 (poc-ack-2):
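The split recorded in `res.txt` can also be tallied with a short script. This is a sketch that assumes each response line contains the name of the cluster that served it (`poc-ack-1` or `poc-ack-2`). The exact response text is not shown in this topic, so the sample lines and the helper `tally_responses` are hypothetical.

```python
from collections import Counter


def tally_responses(text, cluster_names):
    """Count response lines that mention each cluster and compute percentages.

    Returns a dict of cluster name -> (count, percentage of matched lines).
    Lines that mention none of the names are ignored.
    """
    counts = Counter()
    for line in text.splitlines():
        for name in cluster_names:
            if name in line:
                counts[name] += 1
                break
    total = sum(counts.values())
    return {
        name: (counts[name], round(100 * counts[name] / total, 1) if total else 0.0)
        for name in cluster_names
    }


if __name__ == "__main__":
    # Made-up sample responses; in practice, pass the contents of res.txt.
    sample = "served by poc-ack-1\n" + "served by poc-ack-2\n" * 4
    for name, (count, percent) in tally_responses(sample, ["poc-ack-1", "poc-ack-2"]).items():
        print(f"{name}: {count} responses ({percent}%)")
```

With a 20/80 weight split and 500 requests, the counts should land near 100 and 400, with some variation from run to run.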

Simulate a cluster failure and verify automatic failover

-

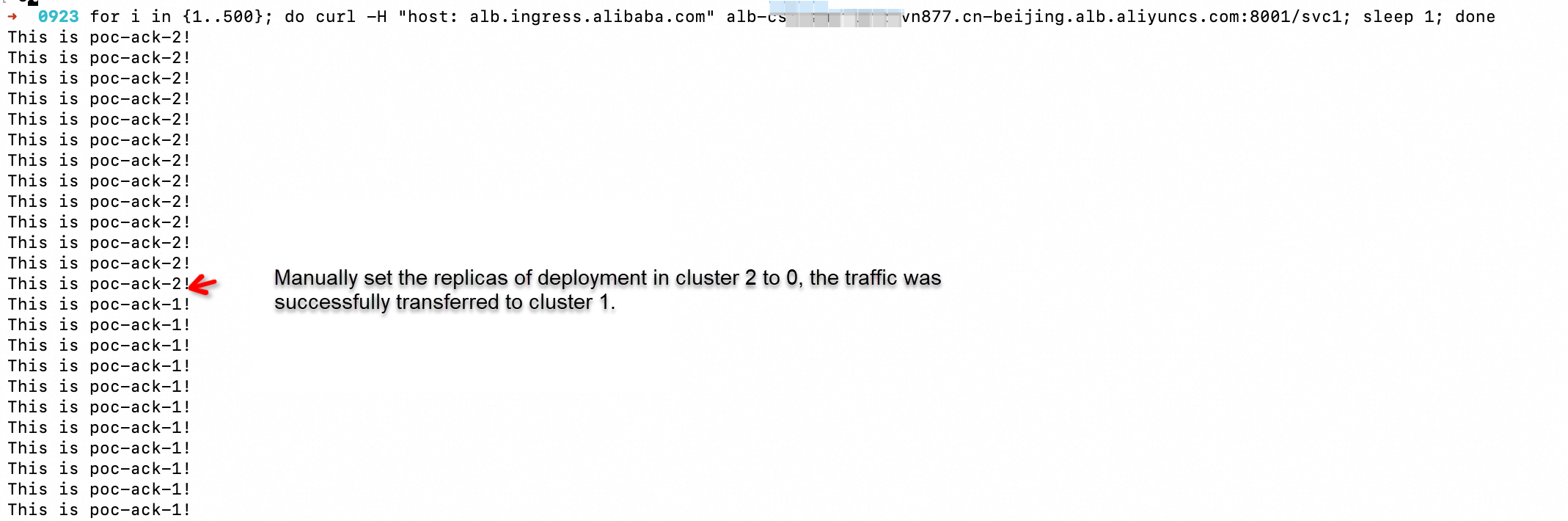

Start a continuous request stream:

for i in {1..500}; do curl -H "host: alb.ingress.alibaba.com" alb-xxxx.<regionid>.alb.aliyuncs.com:8001/svc1; sleep 1; done -

While requests are running, scale the application Deployment in Cluster 2 to 0 replicas.

-

Observe the output: after the change takes effect, all traffic shifts to Cluster 1 automatically in a seamless manner.

ALB detects that no healthy pods are available in Cluster 2 and routes all subsequent requests to Cluster 1 seamlessly.