This topic describes how to update expiring certificates for ACK dedicated clusters. You can update all node certificates using the console or kubectl, or you can manually update the certificates for master nodes and worker nodes.

ACK automatically updates master node certificates in ACK managed clusters. Manual intervention is not required.

Prerequisites

Before you begin, ensure that you have:

- An ACK dedicated cluster with certificates expiring within approximately two months
- kubectl installed and connected to the cluster. For more information, see Connect to a Kubernetes cluster using kubectl.
- Access to the ACK console
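To confirm how close a certificate is to expiry before you start, one option is to read its notAfter date with openssl on a master node. The following is a minimal sketch and not part of the ACK tooling: the helper name `days_left` is hypothetical, the certificate path `/etc/kubernetes/pki/apiserver.crt` is an assumption based on the common kubeadm layout and may differ on your nodes, and the function relies on GNU date.

```shell
#!/usr/bin/env bash
# days_left NOT_AFTER [NOW_EPOCH]
# Prints the whole number of days between NOW_EPOCH (default: the current
# time) and the given expiry date. NOT_AFTER uses the format that openssl
# prints, for example "Mar 10 12:00:00 2030 GMT". Requires GNU date (-d).
days_left() {
  local not_after="$1"
  local now="${2:-$(date +%s)}"
  local end
  end=$(date -u -d "$not_after" +%s) || return 1
  echo $(( (end - now) / 86400 ))
}

# Hypothetical usage on a master node (the certificate path is an assumption):
#   not_after=$(openssl x509 -enddate -noout -in /etc/kubernetes/pki/apiserver.crt | cut -d= -f2)
#   days_left "$not_after"
```

A result of roughly 60 or less corresponds to the two-month window described above.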

Update all node certificates using the console

-

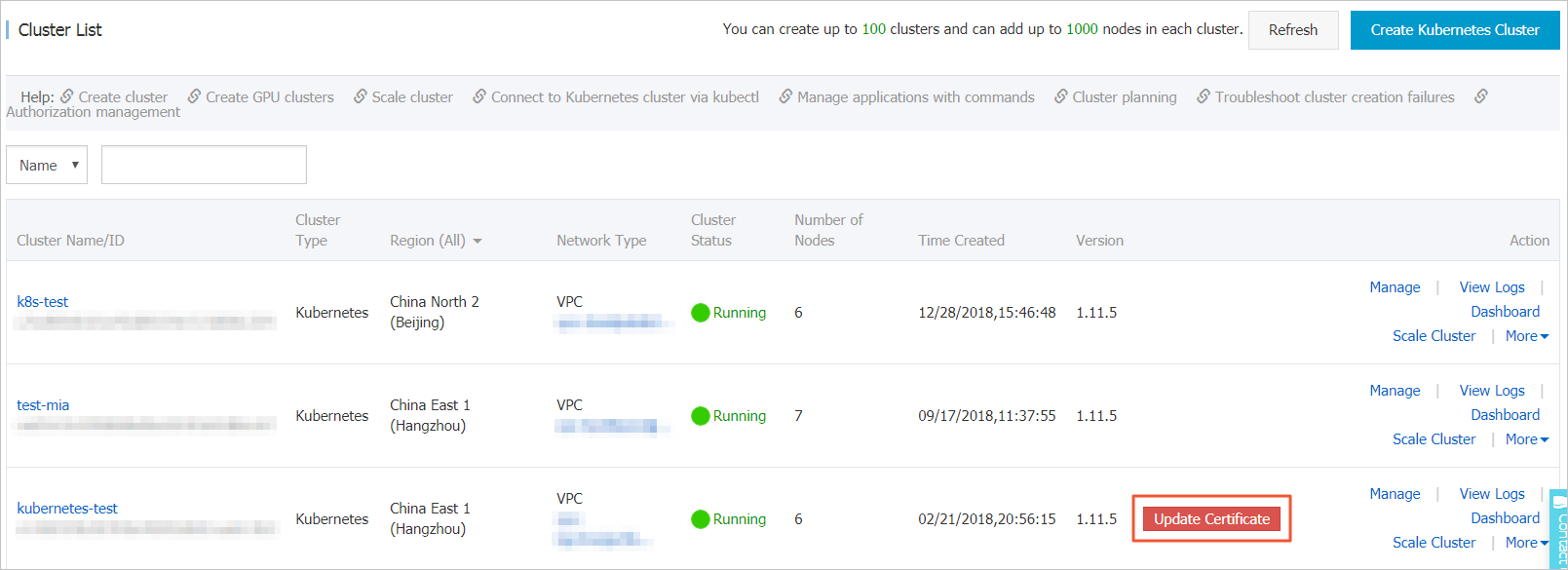

Log on to the ACK console. In the left navigation pane, click Clusters.

-

To the right of the cluster whose certificates are expiring, click Update Certificate. The Update Certificate page appears.

NoteThe Update Certificate option appears if the cluster certificates are due to expire within approximately two months.

-

On the Update Certificate page, click Update Certificate. Follow the on-screen instructions to update the certificates. After the certificates are updated:

-

The Update Certificate page shows The certificate has been updated.

-

The Update Certificate prompt is no longer displayed for the cluster on the Clusters page.

-

Update all node certificates using kubectl

Run the following command on any master node in the cluster to update certificates for all nodes.

    curl http://aliacs-k8s-cn-hangzhou.oss-cn-hangzhou.aliyuncs.com/public/cert-update/renew.sh | bash

Verify the update

-

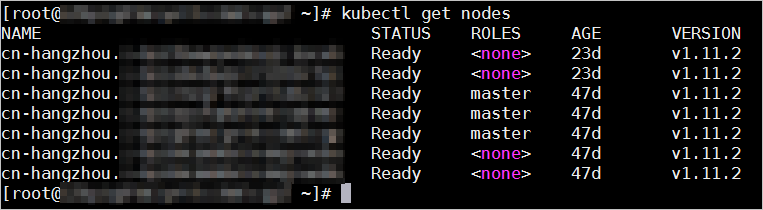

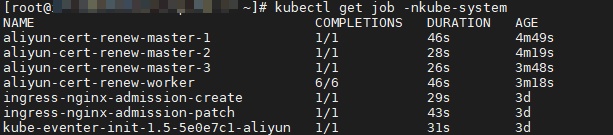

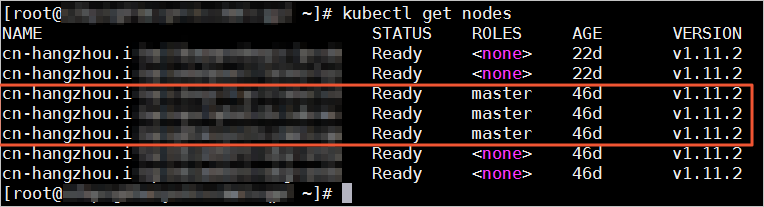

Run the following command to view the status of the master nodes and worker nodes.

kubectl get nodes

-

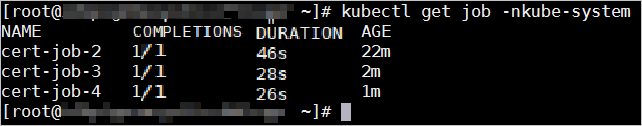

Run the following command to check the Job completion status. The certificates are updated when the

COMPLETIONSvalue for master nodes is1and theCOMPLETIONSvalue for worker nodes matches the number of worker nodes in the cluster.kubectl -n kube-system get job
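The COMPLETIONS check can also be scripted instead of read by eye. The following is a minimal sketch under stated assumptions: the helper name `jobs_complete` is hypothetical, it expects the default table output of `kubectl get job` (NAME in the first column, COMPLETIONS in the second), and the Job name prefix is something you supply, because the names of the Jobs created by renew.sh are not stated here.

```shell
#!/usr/bin/env bash
# jobs_complete PREFIX
# Reads the table printed by `kubectl -n kube-system get job` on stdin.
# Succeeds only if at least one Job name starts with PREFIX and every such
# Job shows N/N in the COMPLETIONS column. Other Jobs are ignored.
jobs_complete() {
  awk -v prefix="$1" '
    NR > 1 && index($1, prefix) == 1 {
      seen = 1
      split($2, c, "/")
      if (c[1] != c[2]) incomplete = 1
    }
    END { exit (incomplete || !seen) }
  '
}

# Hypothetical usage (the prefix "cert-" is an assumption):
#   kubectl -n kube-system get job | jobs_complete cert- && echo "certificates updated"
```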

Manually update master node certificates

Step 1: Create the Job manifest

In any directory, create a file named job-master.yml with the following content:

    apiVersion: batch/v1
    kind: Job
    metadata:
      name: ${jobname}
      namespace: kube-system
    spec:
      backoffLimit: 0
      completions: 1
      parallelism: 1
      template:
        spec:
          activeDeadlineSeconds: 3600
          affinity:
            nodeAffinity:
              requiredDuringSchedulingIgnoredDuringExecution:
                nodeSelectorTerms:
                - matchExpressions:
                  - key: kubernetes.io/hostname
                    operator: In
                    values:
                    - ${hostname}
          containers:
          - command:
            - /renew/upgrade-k8s.sh
            - --role
            - master
            image: registry.cn-hangzhou.aliyuncs.com/acs/cert-rotate:v1.0.0
            imagePullPolicy: Always
            name: ${jobname}
            securityContext:
              privileged: true
            volumeMounts:
            - mountPath: /alicoud-k8s-host
              name: ${jobname}
          hostNetwork: true
          hostPID: true
          restartPolicy: Never
          schedulerName: default-scheduler
          securityContext: {}
          tolerations:
          - effect: NoSchedule
            key: node-role.kubernetes.io/master
          volumes:
          - hostPath:
              path: /
              type: Directory
            name: ${jobname}

Step 2: Get master node information

Get the number and names of master nodes in the cluster using one of the following methods:

- kubectl: Run kubectl get nodes.
- Console: Log on to the ACK console. On the Clusters page, click the cluster name or click View Details in the Actions column. In the left navigation pane of the cluster details page, choose Nodes > Nodes to view the number of master nodes, their names, IP addresses, and instance IDs.
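With the kubectl method, the master node names can be pulled out of the table rather than copied by hand. The following is a minimal sketch: the helper name `master_names` is hypothetical, and it assumes the default `kubectl get nodes` columns (NAME, STATUS, ROLES, AGE, VERSION) with the word master appearing in the ROLES column of master nodes.

```shell
#!/usr/bin/env bash
# master_names
# Reads the table printed by `kubectl get nodes` on stdin and prints the
# name of every node whose ROLES column (third column) contains "master".
master_names() {
  awk 'NR > 1 && $3 ~ /master/ { print $1 }'
}

# Hypothetical usage:
#   kubectl get nodes | master_names          # one name per line
#   kubectl get nodes | master_names | wc -l  # number of master nodes
```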

Step 3: Set variables and create the Job

-

Replace the

${jobname}and${hostname}variables injob-master.yml, then save the output tojob-master2.yml.sed 's/${jobname}/cert-job-2/g; s/${hostname}/hostname/g' job-master.yml > job-master2.ymlReplace the placeholders with the following values:

Placeholder Value ${jobname}The Job name. Set this to cert-job-2.${hostname}The master node name obtained in step 2. -

Create the Job.

kubectl create -f job-master2.yml -

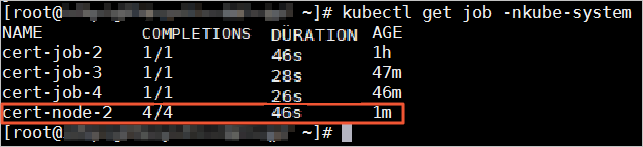

Check the Job status. The certificate update is complete when the

COMPLETIONSvalue is1.kubectl get job -nkube-system -

Repeat steps 1–3 for each master node in the cluster.
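Because the same substitution is repeated for every master node, it can be wrapped in a small function. The following is a minimal sketch, not an official procedure: the helper name `render_master_job` and the per-node Job names in the usage comment are hypothetical, and it assumes node names contain no `/` or `&` characters.

```shell
#!/usr/bin/env bash
# render_master_job TEMPLATE JOBNAME NODE
# Prints TEMPLATE with every ${jobname} replaced by JOBNAME and every
# ${hostname} replaced by NODE. The template file itself is not modified.
render_master_job() {
  local template="$1" jobname="$2" node="$3"
  # \$ keeps the placeholder literal; the second ${...} is the shell value.
  sed "s/\${jobname}/${jobname}/g; s/\${hostname}/${node}/g" "$template"
}

# Hypothetical usage, one Job per master node with a unique name:
#   i=1
#   for node in master-node-a master-node-b master-node-c; do
#     render_master_job job-master.yml "cert-job-$i" "$node" > "job-master-$i.yml"
#     kubectl create -f "job-master-$i.yml"
#     i=$((i + 1))
#   done
```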

Manually update worker node certificates

Step 1: Create the Job manifest

In any directory, create a file named job-node.yml with the following content:

    apiVersion: batch/v1
    kind: Job
    metadata:
      name: ${jobname}
      namespace: kube-system
    spec:
      backoffLimit: 0
      completions: ${nodesize}
      parallelism: ${nodesize}
      template:
        spec:
          activeDeadlineSeconds: 3600
          affinity:
            podAntiAffinity:
              requiredDuringSchedulingIgnoredDuringExecution:
              - labelSelector:
                  matchExpressions:
                  - key: job-name
                    operator: In
                    values:
                    - ${jobname}
                topologyKey: kubernetes.io/hostname
          containers:
          - command:
            - /renew/upgrade-k8s.sh
            - --role
            - node
            - --rootkey
            - ${key}
            image: registry.cn-hangzhou.aliyuncs.com/acs/cert-rotate:v1.0.0
            imagePullPolicy: Always
            name: ${jobname}
            securityContext:
              privileged: true
            volumeMounts:
            - mountPath: /alicoud-k8s-host
              name: ${jobname}
          hostNetwork: true
          hostPID: true
          restartPolicy: Never
          schedulerName: default-scheduler
          securityContext: {}
          volumes:
          - hostPath:
              path: /
              type: Directory
            name: ${jobname}

If worker nodes have taints, add tolerations to job-node.yml so that the Job can be scheduled on the tainted nodes. Insert the following block between securityContext: {} and volumes:, with one list entry under tolerations: for each distinct taint:

tolerations:

- effect: NoSchedule

key: ${key}

operator: Equal

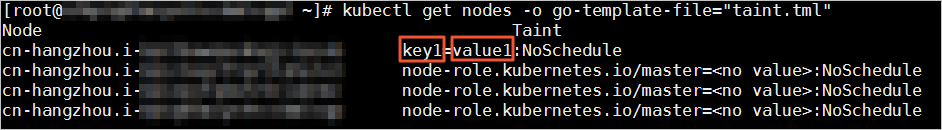

value: ${value}To get the taint key and value for each worker node:

- Create a file named taint.tml with the following content:

      {{printf "%-50s %-12s\n" "Node" "Taint"}}
      {{- range .items}}
          {{- if $taint := (index .spec "taints") }}
              {{- .metadata.name }}{{ "\t" }}
              {{- range $taint }}
                  {{- .key }}={{ .value }}:{{ .effect }}{{ "\t" }}
              {{- end }}
              {{- "\n" }}
          {{- end}}
      {{- end}}

- Run the following command to query the taint keys and values for each worker node:

      kubectl get nodes -o go-template-file="taint.tml"
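Each taint in that listing has the form key=value:effect, which maps directly onto one tolerations entry. The following is a minimal sketch of that mapping: the helper name `taint_to_toleration` and the example taint in the usage comment are hypothetical, and it assumes the taint has a value (a taint printed without a value would need `operator: Exists` instead).

```shell
#!/usr/bin/env bash
# taint_to_toleration TAINT
# Converts one taint written as key=value:effect into a YAML list entry
# that can be placed under tolerations: in job-node.yml.
taint_to_toleration() {
  local taint="$1"
  local key="${taint%%=*}"    # text before the first "="
  local rest="${taint#*=}"    # text after the first "="
  local value="${rest%:*}"    # text before the last ":"
  local effect="${rest##*:}"  # text after the last ":"
  printf '%s\n' \
    "- effect: ${effect}" \
    "  key: ${key}" \
    "  operator: Equal" \
    "  value: ${value}"
}

# Hypothetical usage:
#   taint_to_toleration "dedicated=infra:NoSchedule"
```

Indent the printed entry to match the tolerations: block before you paste it into the manifest.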

Step 2: Get the cluster CA key

Run the following command on a master node to get the cluster CA key:

    sed '1d' /etc/kubernetes/pki/ca.key | base64 -w 0

Step 3: Set variables and create the Job

- Replace the ${jobname}, ${nodesize}, and ${key} variables in job-node.yml, then save the output to job-node2.yml. Before you run the command, replace nodesize and key in it with the actual values. The key substitution uses | as the sed delimiter because Base64 output can contain the / character:

      sed 's/${jobname}/cert-node-2/g; s/${nodesize}/nodesize/g; s|${key}|key|g' job-node.yml > job-node2.yml

  The placeholders take the following values:

  | Placeholder | Value |
  | --- | --- |
  | ${jobname} | The Job name. Set this to cert-node-2. |
  | ${nodesize} | The number of worker nodes in the cluster. |
  | ${key} | The CA key obtained in step 2. |

- Create the Job.

      kubectl create -f job-node2.yml

- Check the Job status. The certificate update is complete when the COMPLETIONS value matches the number of worker nodes in the cluster.

      kubectl get job -n kube-system
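The worker substitution is easier to get right when the values are passed as arguments rather than edited into the sed expression. The following is a minimal sketch under stated assumptions: the helper name `render_node_job` is hypothetical, and it uses `|` as the delimiter for the key substitution because a Base64-encoded key can contain `/` but never `|`.

```shell
#!/usr/bin/env bash
# render_node_job TEMPLATE JOBNAME NODESIZE CAKEY
# Prints TEMPLATE with ${jobname}, ${nodesize}, and ${key} replaced by the
# given values. The template file itself is not modified.
render_node_job() {
  local template="$1" jobname="$2" nodesize="$3" cakey="$4"
  # \$ keeps each placeholder literal; the key uses "|" as the delimiter
  # so that "/" characters in the Base64 text do not end the expression.
  sed "s/\${jobname}/${jobname}/g; s/\${nodesize}/${nodesize}/g; s|\${key}|${cakey}|g" "$template"
}

# Hypothetical usage on a master node (3 is an example worker count):
#   cakey=$(sed '1d' /etc/kubernetes/pki/ca.key | base64 -w 0)
#   render_node_job job-node.yml cert-node-2 3 "$cakey" > job-node2.yml
```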