kube-apiserver exposes RESTful APIs that external clients and internal components use to interact with a Container Service for Kubernetes (ACK) cluster. This topic describes the monitoring metrics exposed by kube-apiserver, explains the dashboard panels, and provides guidance for diagnosing common metric anomalies.

Usage notes

Access method

For more information, see View cluster control plane component monitoring dashboards.

Metrics

Each metric reflects a specific aspect of kube-apiserver's status or behavior. The following table describes the metrics supported by kube-apiserver.

| Metric | Type | Description |
| --- | --- | --- |
| apiserver_request_duration_seconds_bucket | Histogram | The latency between a request sent to the API server and the response returned. Requests are classified by the following dimensions: Histogram buckets: |
| apiserver_request_total | Counter | The total number of requests received by the API server, classified by verb, group, version, resource, scope, component, HTTP content type, HTTP status code, and client. |
| apiserver_request_no_resourceversion_list_total | Counter | The number of LIST requests sent to the API server without the |
| apiserver_current_inflight_requests | Gauge | The number of requests currently being processed by the API server, split into two types: |
| apiserver_dropped_requests_total | Counter | The number of requests dropped by the API server during throttling. A request is dropped when the API server returns the |
| etcd_request_duration_seconds_bucket | Histogram | The latency between a request sent from the API server and the response returned by etcd, classified by operation and operation type. Histogram buckets: |
| apiserver_flowcontrol_request_concurrency_limit | Gauge | The maximum number of requests a priority queue can process concurrently under API Priority and Fairness (APF) throttling, which is the theoretical maximum for that queue. This helps you understand how the API server distributes capacity across priority queues to keep high-priority requests processing on time. This metric is deprecated in Kubernetes 1.30 and will be removed in Kubernetes 1.31. For clusters that run Kubernetes 1.31 or later, we recommend that you use the apiserver_flowcontrol_nominal_limit_seats metric instead. |
| apiserver_flowcontrol_current_executing_requests | Gauge | The number of requests currently executing in a priority queue, representing the actual concurrent load on that queue. Monitor this alongside the concurrency limit to detect when the queue is approaching saturation. |
| apiserver_flowcontrol_current_inqueue_requests | Gauge | The number of requests currently waiting in a priority queue. A growing backlog here indicates traffic pressure on the API server and possible queue overload. |
| apiserver_flowcontrol_nominal_limit_seats | Gauge | The nominal maximum number of concurrent seats available in the API server under APF, showing how capacity is allocated across priority queues. Unit: seats. |
| apiserver_flowcontrol_current_limit_seats | Gauge | The actual maximum number of concurrent seats allowed in a priority queue after dynamic adjustment. Unlike apiserver_flowcontrol_nominal_limit_seats, this value can be reduced by global throttling policies. |
| apiserver_flowcontrol_current_executing_seats | Gauge | The number of seats consumed by requests currently executing in a priority queue. When this value approaches apiserver_flowcontrol_current_limit_seats, the queue's concurrent capacity is nearly exhausted. To increase capacity, raise the maxMutatingRequestsInflight and maxRequestsInflight parameter values for the API server. For more information, see Customize the parameters of control plane components in ACK Pro clusters. |
| apiserver_flowcontrol_current_inqueue_seats | Gauge | The number of seats occupied by requests waiting in a priority queue, reflecting the seat backlog for pending requests. |
| apiserver_flowcontrol_request_execution_seconds_bucket | Histogram | The actual execution time of requests, measured from when execution starts to when the request completes. Histogram buckets: 0, 0.005, 0.02, 0.05, 0.1, 0.2, 0.5, 1, 2, 5, 10, 15, and 30. Unit: seconds. |
| apiserver_flowcontrol_request_wait_duration_seconds_bucket | Histogram | The time requests spend waiting in a priority queue, measured from queue entry to execution start. Histogram buckets: 0, 0.005, 0.02, 0.05, 0.1, 0.2, 0.5, 1, 2, 5, 10, 15, and 30. Unit: seconds. |
| apiserver_flowcontrol_dispatched_requests_total | Counter | The total number of requests successfully scheduled and processed by the API server under APF. |
| apiserver_flowcontrol_rejected_requests_total | Counter | The number of requests rejected because they exceeded the concurrency limit or the queue capacity. |
| apiserver_admission_controller_admission_duration_seconds_bucket | Histogram | The processing latency of the admission controller, identified by controller name, operation (CREATE, UPDATE, or CONNECT), API resource, operation type (validate or admit), and whether the request was denied. Histogram buckets: |
| apiserver_admission_webhook_admission_duration_seconds_bucket | Histogram | The processing latency of the admission webhook, identified by webhook name, operation (CREATE, UPDATE, or CONNECT), API resource, operation type (validate or admit), and whether the request was denied. Histogram buckets: |
| apiserver_admission_webhook_admission_duration_seconds_count | Counter | The number of requests processed by the admission webhook, identified by webhook name, operation (CREATE, UPDATE, or CONNECT), API resource, operation type (validate or admit), and whether the request was denied. |
| cpu_utilization_core | Gauge | The number of CPU cores in use. Unit: cores. |
| memory_utilization_byte | Gauge | The amount of memory in use. Unit: bytes. |
| up | Gauge | Whether kube-apiserver is available. |

The following resource utilization metrics are deprecated. Remove any alerts and monitoring configurations that depend on these metrics:

- cpu_utilization_ratio: CPU utilization.
- memory_utilization_ratio: Memory utilization.

Dashboard panels

Dashboards are generated from metrics using Prometheus Query Language (PromQL). The kube-apiserver dashboard includes the following panels, listed in the recommended usage sequence:

- Key metrics: Get a quick overview of API server health.
- Overview: Analyze response latency, in-flight request counts, and throttling status.
- Resource analysis: Review resource usage of managed components.
- QPS and latency: Break down QPS and response time by multiple dimensions.
- APF throttling: Analyze request traffic distribution, throttling status, and performance bottlenecks using APF metrics.
- Admission controller and webhook: Analyze admission controller and webhook QPS and response time.
- Client analysis: Break down client QPS by multiple dimensions.

Filters

Filters above the dashboard let you narrow requests by verb and resource, adjust the quantile, and change the PromQL sampling interval.

Use the quantile filter to control which percentile is used for histogram metrics. P90 (0.9) excludes long-tail samples, giving a cleaner signal for typical latency. P99 (0.99) includes long-tail samples and is better for catching worst-case behavior.
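To make the effect of the quantile filter concrete, the following Python sketch estimates a quantile from cumulative histogram buckets by interpolating linearly inside the bucket that contains the target rank. It is a simplified illustration of the idea behind histogram_quantile, not the Prometheus implementation, and the function name, bucket bounds, and counts are hypothetical values chosen for the example.

```python
import math


def estimate_quantile(q, buckets):
    """Estimate quantile q (0..1) from cumulative histogram buckets.

    buckets: list of (upper_bound, cumulative_count) sorted by upper_bound,
    ending with the math.inf bucket and containing at least one finite bucket.
    """
    total = buckets[-1][1]
    if total == 0 or len(buckets) < 2:
        return math.nan
    rank = q * total
    prev_bound, prev_count = 0.0, 0
    for index, (bound, count) in enumerate(buckets):
        if count >= rank:
            if math.isinf(bound):
                # The rank lies beyond the largest finite bound, so the
                # estimate is capped at that bound.
                return buckets[index - 1][0]
            if count == prev_count:
                return bound
            # Interpolate linearly between the bucket's lower and upper bound.
            fraction = (rank - prev_count) / (count - prev_count)
            return prev_bound + (bound - prev_bound) * fraction
        prev_bound, prev_count = bound, count
    return math.nan


# Hypothetical cumulative counts for request latency buckets (seconds).
latency_buckets = [(0.1, 50), (0.5, 80), (1.0, 95), (5.0, 99), (math.inf, 100)]

p90 = estimate_quantile(0.90, latency_buckets)  # lands in the (0.5, 1.0] bucket
p99 = estimate_quantile(0.99, latency_buckets)  # reaches the long-tail bucket
print(f"P90 = {p90:.3f}s, P99 = {p99:.3f}s")
```

With this sample data, the P90 estimate stays inside the sub-second bucket while the P99 estimate is pulled up to the long-tail bucket, which is why the two settings can show very different curves for the same traffic.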

The following filters control the time range and update interval.

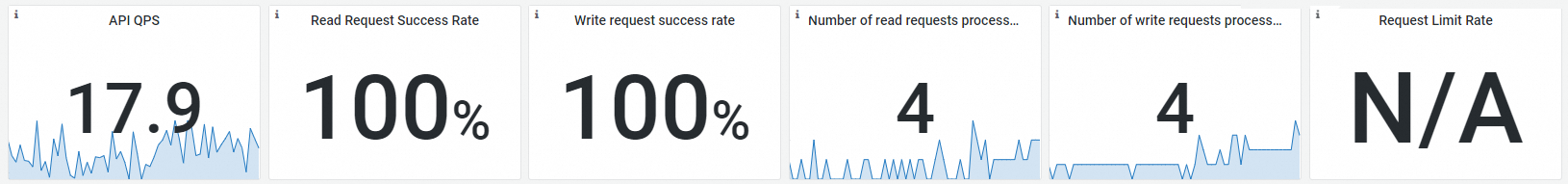

Key metrics

Observability

Feature

| Metric | PromQL | Description |
| --- | --- | --- |
| API QPS | sum(irate(apiserver_request_total\[$interval\])) | The overall QPS of the API server. |
| Read request success rate | sum(irate(apiserver_request_total{code=\~"20.\*",verb=\~"GET\|LIST"}\[$interval\]))/sum(irate(apiserver_request_total{verb=\~"GET\|LIST"}\[$interval\])) | The success rate of read requests (GET and LIST) sent to the API server. |
| Write request success rate | sum(irate(apiserver_request_total{code=\~"20.\*",verb!\~"GET\|LIST\|WATCH\|CONNECT"}\[$interval\]))/sum(irate(apiserver_request_total{verb!\~"GET\|LIST\|WATCH\|CONNECT"}\[$interval\])) | The success rate of write requests sent to the API server. |
| Number of read requests processed | sum(apiserver_current_inflight_requests{request_kind="readOnly"}) | The number of read requests currently being processed by the API server. |
| Number of write requests processed | sum(apiserver_current_inflight_requests{request_kind="mutating"}) | The number of write requests currently being processed by the API server. |
| Request limit rate | sum(irate(apiserver_dropped_requests_total\[$interval\])) | The rate of requests dropped by the API server due to throttling. |

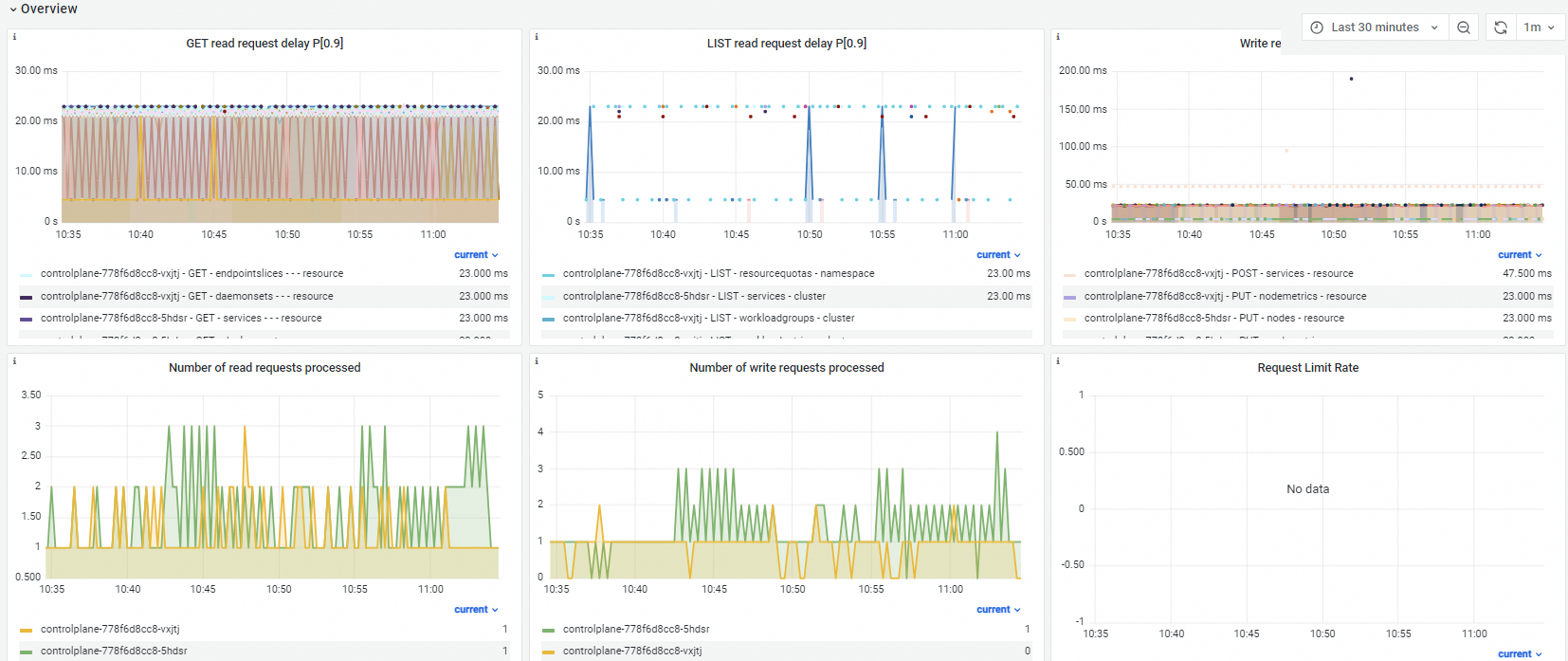

Overview

Observability

Feature

| Metric | PromQL | Description |
| --- | --- | --- |
| GET read request delay P[0.9] | histogram_quantile($quantile, sum(irate(apiserver_request_duration_seconds_bucket{verb="GET",resource!="",subresource!\~"log\|proxy"}\[$interval\])) by (pod, verb, resource, subresource, scope, le)) | The response time of GET requests, broken down by API server Pod, verb, resource, and scope. |
| LIST read request delay P[0.9] | histogram_quantile($quantile, sum(irate(apiserver_request_duration_seconds_bucket{verb="LIST"}\[$interval\])) by (pod_name, verb, resource, scope, le)) | The response time of LIST requests, broken down by API server Pod, verb, resource, and scope. |
| Write request delay P[0.9] | histogram_quantile($quantile, sum(irate(apiserver_request_duration_seconds_bucket{verb!\~"GET\|WATCH\|LIST\|CONNECT"}\[$interval\])) by (cluster, pod_name, verb, resource, scope, le)) | The response time of mutating requests, broken down by API server Pod, verb, resource, and scope. |
| Number of read requests processed | apiserver_current_inflight_requests{request_kind="readOnly"} | The number of read requests currently being processed by the API server. |
| Number of write requests processed | apiserver_current_inflight_requests{request_kind="mutating"} | The number of write requests currently being processed by the API server. |
| Request limit rate | sum(irate(apiserver_dropped_requests_total{request_kind="readOnly"}\[$interval\])) by (name)<br>sum(irate(apiserver_dropped_requests_total{request_kind="mutating"}\[$interval\])) by (name) | The throttling rate of the API server. |

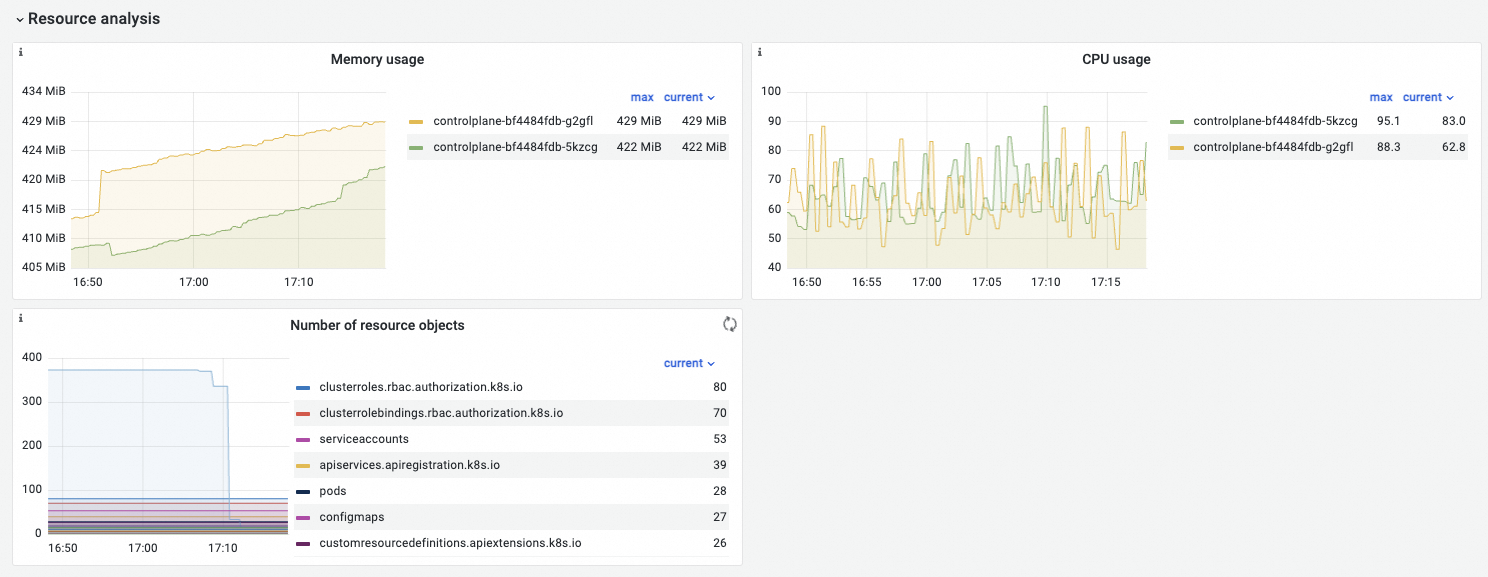

Resource analysis

Observability

Feature

| Metric | PromQL | Description |
| --- | --- | --- |
| Memory usage | memory_utilization_byte{container="kube-apiserver"} | The memory used by the API server. Unit: bytes. |
| CPU usage | cpu_utilization_core{container="kube-apiserver"}\*1000 | The CPU used by the API server. Unit: millicores. |
| Number of resource objects | | Note: For compatibility reasons, both apiserver_storage_objects and etcd_object_counts exist in Kubernetes 1.22. |

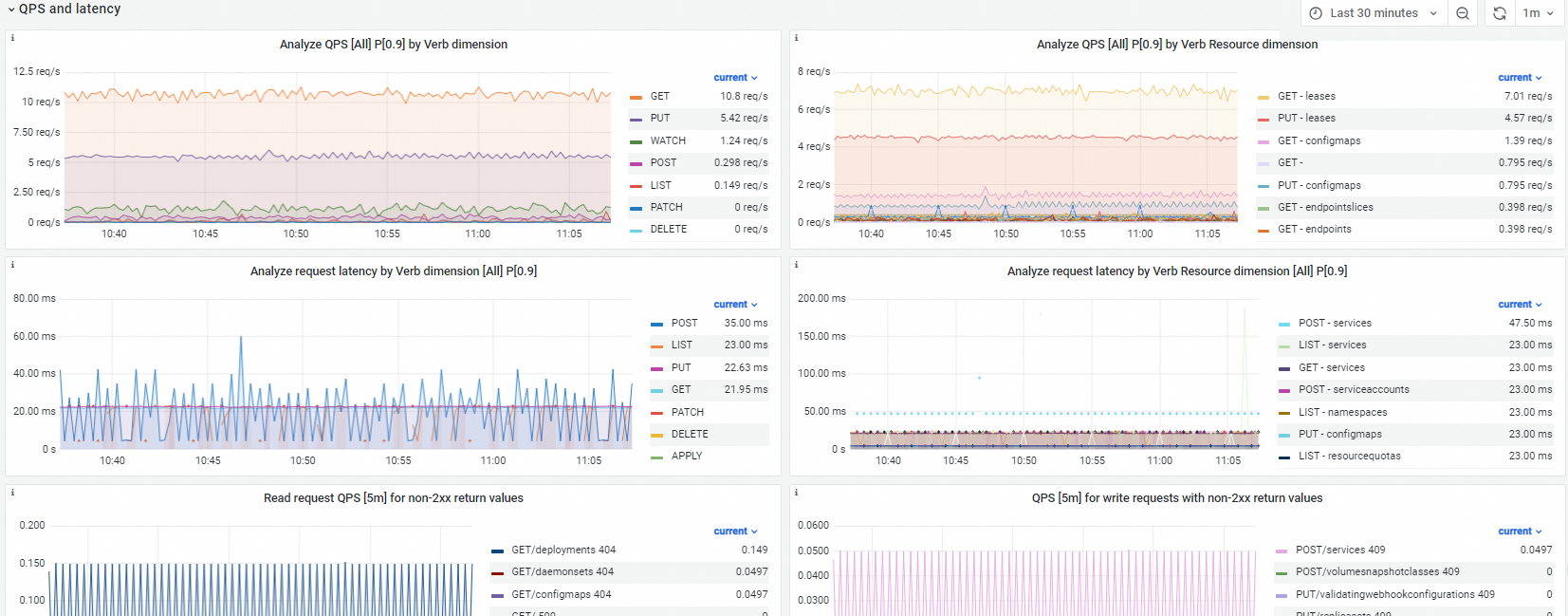

QPS and latency

Observability

Feature

| Metric | PromQL | Description |
| --- | --- | --- |
| Analyze QPS [All] P[0.9] by verb dimension | sum(irate(apiserver_request_total{verb=\~"$verb"}\[$interval\]))by(verb) | QPS broken down by verb. |
| Analyze QPS [All] P[0.9] by verb resource dimension | sum(irate(apiserver_request_total{verb=\~"$verb",resource=\~"$resource"}\[$interval\]))by(verb,resource) | QPS broken down by verb and resource. |
| Analyze request latency by verb dimension [All] P[0.9] | histogram_quantile($quantile, sum(irate(apiserver_request_duration_seconds_bucket{verb=\~"$verb", verb!\~"WATCH\|CONNECT",resource!=""}\[$interval\])) by (le,verb)) | Request latency broken down by verb. |
| Analyze request latency by verb resource dimension [All] P[0.9] | histogram_quantile($quantile, sum(irate(apiserver_request_duration_seconds_bucket{verb=\~"$verb", verb!\~"WATCH\|CONNECT", resource=\~"$resource",resource!=""}\[$interval\])) by (le,verb,resource)) | Request latency broken down by verb and resource. |
| Read request QPS [5m] for non-2xx return values | sum(irate(apiserver_request_total{verb=\~"GET\|LIST",resource=\~"$resource",code!\~"2.\*"}\[$interval\])) by (verb,resource,code) | QPS of read requests that returned non-2xx HTTP status codes (4xx or 5xx). |
| QPS [5m] for write requests with non-2xx return values | sum(irate(apiserver_request_total{verb!\~"GET\|LIST\|WATCH",verb=\~"$verb",resource=\~"$resource",code!\~"2.\*"}\[$interval\])) by (verb,resource,code) | QPS of write requests that returned non-2xx HTTP status codes (4xx or 5xx). |
| API server to etcd request latency [5m] | histogram_quantile($quantile, sum(irate(etcd_request_duration_seconds_bucket\[$interval\])) by (le,operation,type,instance)) | The latency of requests sent from the API server to etcd. |

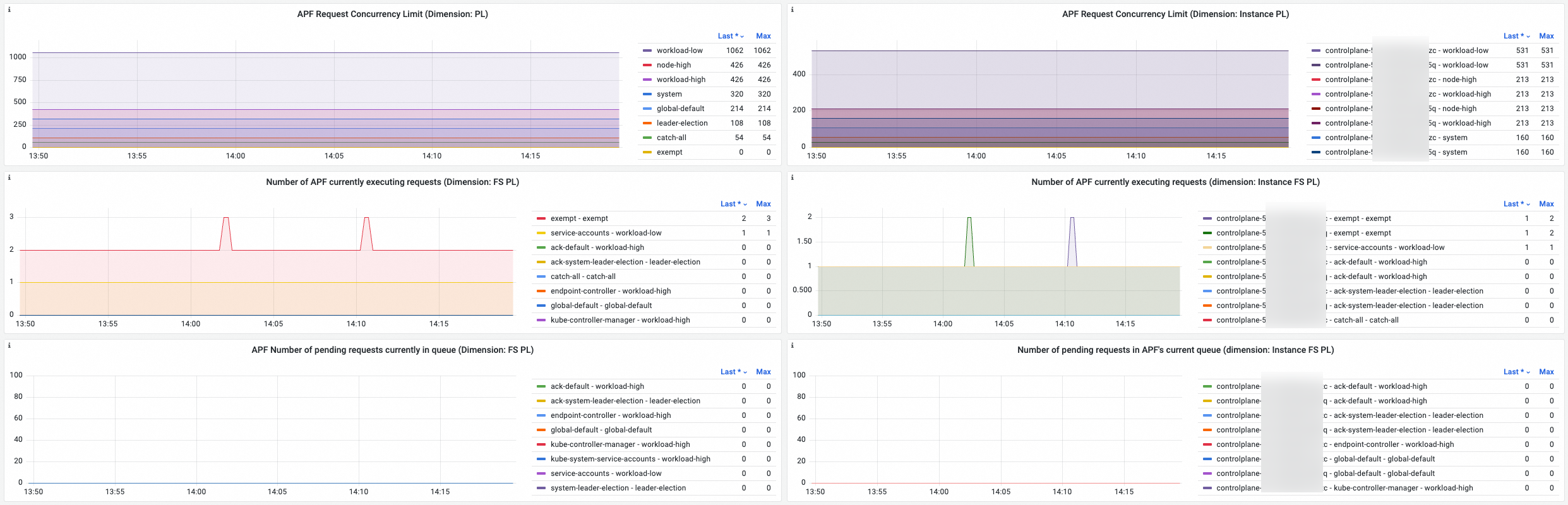

APF throttling

APF throttling metrics monitoring is in canary release.

Clusters running Kubernetes 1.20 or later support APF-related metrics. To upgrade your cluster, see Manually upgrade a cluster.

APF-related metric dashboards also require updated versions of the following components. See Upgrade monitoring components.

Cluster monitoring component: 0.06 or later.

ack-arms-prometheus: 1.1.31 or later.

Managed probe: 1.1.31 or later.

Observability

Feature

APF metrics are reported across three dimensions:

- PL (priority level): statistics per priority level.
- Instance: statistics per API server instance.
- FS (Flow Schema): statistics per request classification.

For more information about APF and these dimensions, see APF.

| Metric | PromQL | Description |
| --- | --- | --- |
| APF request concurrent limit (dimension: PL) | sum by(priority_level) (apiserver_flowcontrol_request_concurrency_limit) | The maximum number of requests a priority queue can process concurrently, aggregated by priority level or by instance and priority level. apiserver_flowcontrol_request_concurrency_limit is deprecated in Kubernetes 1.30 and will be removed in Kubernetes 1.31. For clusters that run Kubernetes 1.31 or later, we recommend that you use the apiserver_flowcontrol_nominal_limit_seats metric instead. |
| APF request concurrent limit (dimension: instance PL) | sum by(instance,priority_level) (apiserver_flowcontrol_request_concurrency_limit) | |
| Number of APF currently executing requests (dimension: FS PL) | sum by(flow_schema,priority_level) (apiserver_flowcontrol_current_executing_requests) | The number of requests currently executing, aggregated by Flow Schema and priority level, or by instance, Flow Schema, and priority level. |
| Number of APF currently executing requests (dimension: instance FS PL) | sum by(instance,flow_schema,priority_level)(apiserver_flowcontrol_current_executing_requests) | |
| APF number of pending requests in queue (dimension: FS PL) | sum by(flow_schema,priority_level) (apiserver_flowcontrol_current_inqueue_requests) | The number of requests waiting in a priority queue, aggregated by Flow Schema and priority level, or by instance, Flow Schema, and priority level. |
| APF number of pending requests in queue (dimension: instance FS PL) | sum by(instance,flow_schema,priority_level) (apiserver_flowcontrol_current_inqueue_requests) | |
| APF nominal concurrency limit seats | sum by(instance,priority_level) (apiserver_flowcontrol_nominal_limit_seats) | APF seat-count metrics aggregated by instance and priority level, covering: |
| APF current concurrency limit seats | sum by(instance,priority_level) (apiserver_flowcontrol_current_limit_seats) | |
| Number of APF seats in execution | sum by(instance,priority_level) (apiserver_flowcontrol_current_executing_seats) | |
| Number of seats in APF queue | sum by(instance,priority_level) (apiserver_flowcontrol_current_inqueue_seats) | |
| APF request execution time | histogram_quantile($quantile, sum(irate(apiserver_flowcontrol_request_execution_seconds_bucket\[$interval\])) by (le,instance, flow_schema,priority_level)) | The time from when a request starts executing to when it completes. |
| APF request wait time | histogram_quantile($quantile, sum(irate(apiserver_flowcontrol_request_wait_duration_seconds_bucket\[$interval\])) by (le,instance, flow_schema,priority_level)) | The time from when a request enters the queue to when it starts executing. |
| APF dispatched request QPS | sum(irate(apiserver_flowcontrol_dispatched_requests_total\[$interval\]))by(instance,flow_schema,priority_level) | QPS of requests successfully scheduled and processed by APF. |
| APF rejected request QPS | sum(irate(apiserver_flowcontrol_rejected_requests_total\[$interval\]))by(instance,flow_schema,priority_level) | QPS of requests rejected due to exceeding the concurrency limit or queue capacity. |

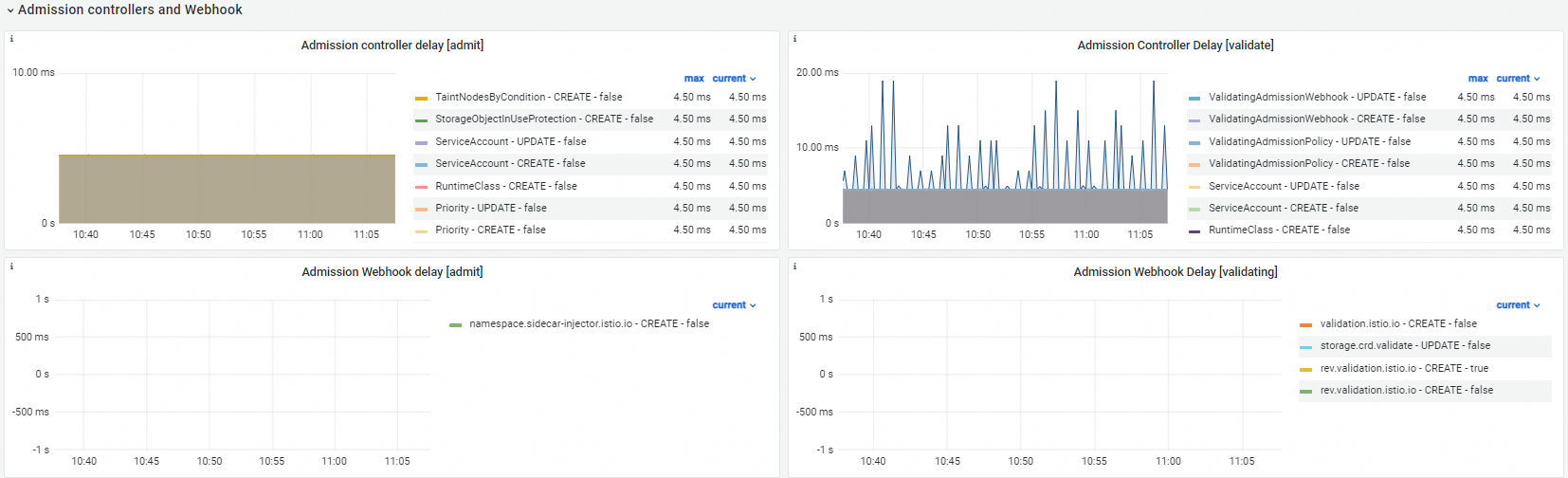
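The seat panels are easiest to read as a ratio: seats in execution divided by the current limit for the same priority level. The following Python sketch shows that comparison on made-up values. The function names, the 0.9 threshold, and the sample numbers are assumptions for illustration only; the priority level names are examples.

```python
def seat_utilization(executing_seats, limit_seats):
    """Return executing/limit ratio per priority level, skipping zero limits."""
    ratios = {}
    for level, limit in limit_seats.items():
        if limit > 0:
            ratios[level] = executing_seats.get(level, 0) / limit
    return ratios


def near_saturation(ratios, threshold=0.9):
    """List priority levels whose seat utilization is at or above threshold."""
    return sorted(level for level, ratio in ratios.items() if ratio >= threshold)


# Hypothetical values as they might be read from
# apiserver_flowcontrol_current_limit_seats and
# apiserver_flowcontrol_current_executing_seats, keyed by priority_level.
current_limit = {"workload-high": 50, "workload-low": 100, "catch-all": 5}
executing = {"workload-high": 48, "workload-low": 20, "catch-all": 0}

ratios = seat_utilization(executing, current_limit)
saturated = near_saturation(ratios)
print(saturated)
```

A priority level that stays in the saturated list while its in-queue seats also grow is a likely candidate for the capacity bottleneck.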

Admission controller and webhook

Observability

Feature

| Metric | PromQL | Description |
| --- | --- | --- |
| Admission controller delay [admit] | histogram_quantile($quantile, sum by(operation, name, le, type, rejected) (irate(apiserver_admission_controller_admission_duration_seconds_bucket{type="admit"}\[$interval\])) ) | Latency statistics for the admit phase of admission controllers, broken down by controller name, operation, whether the request was denied, and duration. Histogram buckets: |
| Admission controller delay [validate] | histogram_quantile($quantile, sum by(operation, name, le, type, rejected) (irate(apiserver_admission_controller_admission_duration_seconds_bucket{type="validate"}\[$interval\])) ) | Latency statistics for the validate phase of admission controllers, broken down by controller name, operation, whether the request was denied, and duration. Histogram buckets: |
| Admission webhook delay [admit] | histogram_quantile($quantile, sum by(operation, name, le, type, rejected) (irate(apiserver_admission_webhook_admission_duration_seconds_bucket{type="admit"}\[$interval\])) ) | Latency statistics for the admit phase of admission webhooks, broken down by webhook name, operation, whether the request was denied, and duration. Histogram buckets: |
| Admission webhook delay [validating] | histogram_quantile($quantile, sum by(operation, name, le, type, rejected) (irate(apiserver_admission_webhook_admission_duration_seconds_bucket{type="validating"}\[$interval\])) ) | Latency statistics for the validating phase of admission webhooks, broken down by webhook name, operation, whether the request was denied, and duration. Histogram buckets: |
| Admission webhook request QPS | sum(irate(apiserver_admission_webhook_admission_duration_seconds_count\[$interval\]))by(name,operation,type,rejected) | The QPS of the admission webhook. |

Client analysis

Observability

Feature

| Metric | PromQL | Description |
| --- | --- | --- |
| Analyze QPS by client dimension | sum(irate(apiserver_request_total{client!=""}\[$interval\])) by (client) | QPS broken down by client, showing which clients are sending requests to the API server and at what rate. |
| Analyze QPS by verb resource client dimension [All] | sum(irate(apiserver_request_total{client!="",verb=\~"$verb", resource=\~"$resource"}\[$interval\]))by(verb,resource,client) | QPS broken down by verb, resource, and client. |
| Analyze LIST request QPS by verb resource client dimension (no resourceVersion field) | sum(irate(apiserver_request_no_resourceversion_list_total\[$interval\]))by(resource,client) | |

Common metric anomalies

When kube-apiserver metrics become abnormal, use the following sections to diagnose the root cause.

Read/write request success rate

Case description

| Normal | Abnormal | Description |
| --- | --- | --- |
| Read request success rate and Write request success rate are close to 100%. | Read request success rate or Write request success rate drops below 90%. | A large number of requests are returning non-2xx HTTP status codes. |

Recommended solution

Check Read request QPS [5m] for non-2xx return values and QPS [5m] for write requests with non-2xx return values to identify the request types and resources causing non-2xx responses. Evaluate whether those requests are expected and optimize accordingly. For example, if GET/deployment 404 appears, GET Deployment requests are returning 404, which lowers the read request success rate.
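The triage described above amounts to grouping request rates by verb, resource, and status code, then ranking the non-2xx groups. The following Python sketch illustrates that on hypothetical per-second rates; the function names and sample numbers are assumptions, and the success criterion mirrors the 20x code match used by the success rate panels.

```python
READ_VERBS = {"GET", "LIST"}


def read_success_rate(rates):
    """rates maps (verb, resource, code) to requests per second."""
    total = sum(v for (verb, _, _), v in rates.items() if verb in READ_VERBS)
    ok = sum(
        v
        for (verb, _, code), v in rates.items()
        if verb in READ_VERBS and code.startswith("20")
    )
    return ok / total if total else None


def top_non_2xx(rates, limit=3):
    """Return the non-2xx (verb, resource, code) groups with the highest rate."""
    failing = [(key, v) for key, v in rates.items() if not key[2].startswith("2")]
    return sorted(failing, key=lambda item: item[1], reverse=True)[:limit]


# Hypothetical rates resembling the GET/deployment 404 example.
rates = {
    ("GET", "deployments", "200"): 80.0,
    ("GET", "deployments", "404"): 15.0,
    ("LIST", "pods", "200"): 4.0,
    ("LIST", "pods", "500"): 1.0,
    ("POST", "pods", "201"): 10.0,
}

rate = read_success_rate(rates)
worst = top_non_2xx(rates)
print(f"read success rate = {rate:.2f}, top offender = {worst[0][0]}")
```

In this sample, the 404 responses on GET Deployment requests account for most of the drop, so that request path is the first one to review.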

Latency of GET/LIST requests and write requests

Case description

| Normal | Abnormal | Description |
| --- | --- | --- |
| Latency varies with cluster size and the number of resources accessed. Typical values: GET read request delay P[0.9] and Write request delay P[0.9] are under 1 second; LIST read request delay P[0.9] can exceed 5 seconds under normal conditions. All values are acceptable as long as workloads are not adversely affected. | | Increased latency is often caused by a slow or unresponsive admission webhook, or a spike in client requests accessing cluster resources. |

Recommended solution

- Check GET read request delay P[0.9], LIST read request delay P[0.9], and Write request delay P[0.9] to identify which request types and resources have elevated latency. Evaluate whether the behavior is expected and optimize accordingly. The apiserver_request_duration_seconds_bucket metric has a maximum bucket of 60 seconds, so any response that takes longer than 60 seconds is recorded as 60 seconds. Pod exec requests (POST pod/exec) and log retrieval requests create persistent connections with latencies that routinely exceed 60 seconds. Exclude these requests when you analyze latency anomalies.
- Check whether a slow admission webhook is increasing API server response time. See the Admission webhook latency section.

In-flight requests and dropped requests

Case description

| Normal | Abnormal | Description |
| --- | --- | --- |
| Number of read requests processed and Number of write requests processed are both below 100, and Request limit rate is 0. | | The request queue is full. This can be caused by a temporary request spike or a slow admission webhook. When pending requests exceed queue capacity, the API server throttles traffic, the Request limit rate rises above 0, and cluster stability is affected. |

Recommended solution

- Check the QPS and latency and Client analysis dashboards to identify which requests and clients generate the most traffic. If those requests come from workloads, investigate whether you can reduce their frequency.
- Check whether a slow admission webhook is driving up API server latency and causing queue buildup. See the Admission webhook latency section.
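As a rough illustration of the normal range described in this section, the following Python sketch classifies a single reading of the in-flight and dropped-request panels. The function name and the status labels are hypothetical; the limit of 100 and the zero dropped rate come from the normal case in the table above.

```python
def inflight_status(read_inflight, write_inflight, dropped_rate, inflight_limit=100):
    """Classify one reading of the in-flight and request limit rate panels."""
    if dropped_rate > 0:
        # The API server is already dropping requests.
        return "throttling"
    if read_inflight >= inflight_limit or write_inflight >= inflight_limit:
        # No drops yet, but in-flight requests are outside the normal range.
        return "elevated"
    return "normal"


print(inflight_status(read_inflight=35, write_inflight=12, dropped_rate=0.0))
print(inflight_status(read_inflight=140, write_inflight=12, dropped_rate=0.0))
print(inflight_status(read_inflight=140, write_inflight=90, dropped_rate=2.5))
```

Treating a nonzero dropped rate as the most severe state reflects that throttling affects cluster stability, whereas high in-flight counts alone may be a short-lived spike.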

Admission webhook latency

Case description

| Normal | Abnormal | Description |
| --- | --- | --- |
| Admission webhook delay is below 0.5 seconds. | Admission webhook delay remains above 0.5 seconds. | A slow or unresponsive admission webhook increases API server response latency across all mutating and validating requests. |

Recommended solution

Review the admission webhook logs to check whether the webhook is working as expected. If a webhook is no longer needed, uninstall it.
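Because the abnormal case is a delay that remains above 0.5 seconds rather than a single spike, it can help to check several consecutive samples before acting. The following Python sketch shows one way to do that. The function name and the window of five samples are assumptions for illustration, and the latency values are made up.

```python
def remains_above(samples, threshold=0.5, min_consecutive=5):
    """True if the most recent min_consecutive samples all exceed threshold."""
    if len(samples) < min_consecutive:
        return False
    return all(value > threshold for value in samples[-min_consecutive:])


# Hypothetical webhook latency samples in seconds, oldest first.
single_spike = [0.1, 0.9, 0.1, 0.1, 0.2, 0.1]
sustained = [0.2, 0.6, 0.7, 0.8, 0.9, 1.2]

print(remains_above(single_spike))
print(remains_above(sustained))
```

Only the second series qualifies, because every sample in its most recent window is above the threshold.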

What's next

- For metrics, dashboards, and anomaly guidance for other control plane components, see:
- To query Prometheus monitoring data using the console or API, see Use PromQL to query Prometheus monitoring data.
- To create alert rules using custom PromQL statements in Managed Service for Prometheus, see Create an alert rule for a Prometheus instance.