Kubernetes clusters use etcd as persistent storage for cluster state and metadata. As a distributed key-value store, etcd provides strong consistency and high availability (HA) for cluster data.

Use this topic to:

- Quickly determine whether the etcd cluster is healthy (leader status, member availability).
- Identify performance bottlenecks such as disk I/O latency and Raft proposal backlogs.
- Understand each dashboard panel and know what action to take when a metric is anomalous.

Prerequisites

For instructions on accessing the monitoring dashboard, see View the monitoring dashboard for control plane components.

Metric checklist

Metrics expose the internal status and parameters of a component. The following table lists the active metrics for the etcd component, along with what anomalous values typically indicate.

| Metric | Type | Description |
|---|---|---|
| cpu_utilization_core | Gauge | CPU usage. Unit: cores. |
| etcd_server_has_leader | Gauge | Whether a leader exists among etcd members. etcd uses the Raft consensus algorithm: one member is elected as the Leader (primary node), and the remaining members become Followers (secondary nodes). The Leader periodically sends heartbeats to maintain cluster stability. Value: 1 (leader exists) or 0 (no leader). A value of 0 on any member warrants immediate investigation. |
| etcd_server_is_leader | Gauge | Whether this etcd member is the Leader. Value: 1 (yes) or 0 (no). In a healthy cluster, exactly one member has a value of 1. |
| etcd_server_leader_changes_seen_total | Counter | The cumulative number of leader changes seen by this etcd member. |
| etcd_mvcc_db_total_size_in_bytes | Gauge | The total size of the etcd member database (DB). Unit: bytes. |
| etcd_mvcc_db_total_size_in_use_in_bytes | Gauge | The portion of the etcd member DB that is actually in use. Unit: bytes. |
| etcd_disk_backend_commit_duration_seconds_bucket | Histogram | The latency of backend commits in etcd, that is, the time for data changes to be written to the storage backend and successfully committed. Bucket thresholds (seconds): [0.001, 0.002, 0.004, 0.008, 0.016, 0.032, 0.064, 0.128, 0.256, 0.512, 1.024, 2.048, 4.096, 8.192]. Sustained latency at hundreds of milliseconds or higher indicates a disk I/O problem. |
| etcd_debugging_mvcc_keys_total | Gauge | The total number of keys stored in etcd. |
| etcd_server_proposals_committed_total | Gauge | The number of Raft proposals successfully committed to the Raft log. In Raft, any action that changes system state is submitted as a proposal. |
| etcd_server_proposals_applied_total | Gauge | The number of proposals successfully applied (executed). |
| etcd_server_proposals_pending | Gauge | The number of pending proposals. A non-zero value indicates a backlog; analyze alongside backend commit latency to identify the root cause. |
| etcd_server_proposals_failed_total | Counter | The number of proposals that failed. Any value greater than 0 warrants investigation. |
| memory_utilization_byte | Gauge | Memory usage. Unit: bytes. |

The following metrics are deprecated. Remove any alerts or monitoring that depend on them.

- cpu_utilization_ratio: CPU utilization.
- memory_utilization_ratio: Memory usage.

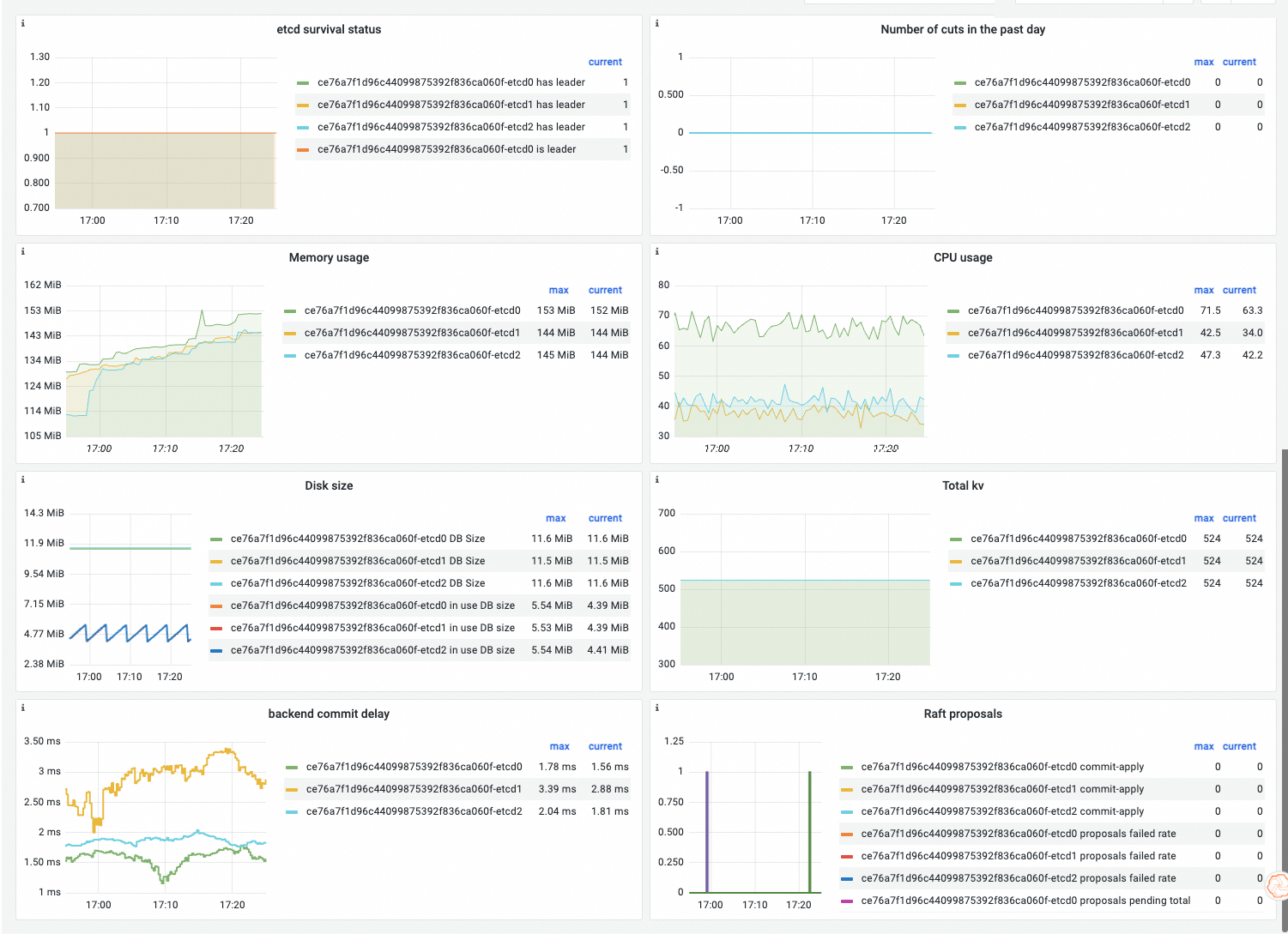

Dashboard guide

The dashboard is built from component metrics and Prometheus Query Language (PromQL) queries. The following sections describe the observability display and the dashboard panels.

Observability display

Panel reference

| Panel | PromQL | What it shows |
|---|---|---|
| etcd Health Status | etcd_server_has_leader<br>etcd_server_is_leader == 1 | Number of etcd members that have a leader (normal: 3); number of members that are the Leader (normal: 1). |
| Leader Changes In The Last Day | changes(etcd_server_leader_changes_seen_total{job="etcd"}[1d]) | How many times the leader has changed in the etcd cluster over the past day. Frequent changes indicate instability. |
| Memory Usage | memory_utilization_byte{container="etcd"} | Memory usage. Unit: bytes. |
| CPU Usage | cpu_utilization_core{container="etcd"}*1000 | CPU usage. Unit: millicores. |
| Disk Size | etcd_mvcc_db_total_size_in_bytes<br>etcd_mvcc_db_total_size_in_use_in_bytes | Total size of the etcd backend DB, and the portion actually in use. |
| Total Key-value Pairs | etcd_debugging_mvcc_keys_total | Total number of key-value (KV) pairs in the etcd cluster. |
| Backend Commit Latency | histogram_quantile(0.99, sum(rate(etcd_disk_backend_commit_duration_seconds_bucket{job="etcd"}[5m])) by (instance, le)) | The p99 time for a proposal to be persistently stored in the etcd database. Normal range: a few milliseconds to tens of milliseconds. |
| Raft Proposal Status | rate(etcd_server_proposals_failed_total{job="etcd"}[1m])<br>etcd_server_proposals_pending{job="etcd"}<br>etcd_server_proposals_committed_total{job="etcd"} - etcd_server_proposals_applied_total{job="etcd"} | Per-second rate of failed proposals, averaged over the last minute; number of pending proposals; and the committed-minus-applied gap. If the gap exceeds 5,000, etcd rejects incoming requests and returns a "too many requests" error until the backlog clears. |

Common metric anomalies

etcd health status

| Normal case | Abnormal case | What it means |
|---|---|---|
| sum(etcd_server_has_leader) = 3; exactly one member has etcd_server_is_leader == 1. | A single member has etcd_server_has_leader != 1. | That member is abnormal. Cluster service is unaffected because the remaining members can still provide services. |
| Same as above. | More than one member has etcd_server_has_leader != 1, or no member has etcd_server_is_leader == 1. | When multiple members have etcd_server_has_leader != 1, the etcd cluster cannot provide services. Also check whether any member has etcd_server_is_leader == 1; if none does, etcd has no leader and cannot provide services. |
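The rules in this table can be sketched as a small classifier. This is a minimal illustration, not part of the dashboard: the function name `classify_etcd_health`, the status labels, and the input shape (a mapping from member name to its `etcd_server_has_leader` and `etcd_server_is_leader` values) are assumptions made for the example. The case where more than one member reports itself as Leader is not covered by the table, so the sketch only flags it for investigation.

```python
def classify_etcd_health(members):
    """Classify cluster health from per-member gauge values.

    members maps a member name (hypothetical) to a tuple
    (etcd_server_has_leader, etcd_server_is_leader).
    """
    without_leader = [m for m, (has, _) in members.items() if has != 1]
    leaders = [m for m, (_, is_leader) in members.items() if is_leader == 1]

    # More than one member without a leader, or no Leader at all:
    # the cluster cannot provide services.
    if len(without_leader) > 1 or not leaders:
        return "unavailable"
    # Assumption: several self-reported Leaders is outside the table,
    # so it is only flagged for a closer look.
    if len(leaders) > 1:
        return "investigate"
    # Exactly one member has lost its leader: that member is abnormal,
    # but the remaining members still serve requests.
    if len(without_leader) == 1:
        return "degraded"
    return "healthy"


sample = {"etcd-a": (1, 1), "etcd-b": (1, 0), "etcd-c": (0, 0)}
print(classify_etcd_health(sample))
```

With the sample above, one member has lost its leader while a Leader still exists, so the sketch reports the degraded case from the first table row.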

Backend commit latency

| Normal case | Abnormal case | What it means |
|---|---|---|
| A few milliseconds to tens of milliseconds. | Latency sustained at hundreds of milliseconds or seconds. | The underlying disk has an I/O problem. Investigate disk throughput and I/O wait times on the etcd node. |
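To make the p99 figure easier to interpret, the following sketch shows roughly how a quantile is estimated from cumulative histogram buckets: find the bucket that contains the target rank, then interpolate linearly inside it. It is a simplified illustration of the idea behind `histogram_quantile`, not Prometheus's implementation, and the sample bucket counts are invented for the example. The same arithmetic applies whether the inputs are raw counts or per-bucket rates.

```python
import math


def histogram_quantile(q, buckets):
    """Estimate the q-quantile from cumulative (upper_bound, count) buckets.

    buckets must include a final bucket with upper bound +Inf.
    """
    if not 0 <= q <= 1:
        raise ValueError("q must be between 0 and 1")
    buckets = sorted(buckets)
    if not buckets or buckets[-1][0] != math.inf:
        raise ValueError("buckets must include a +Inf bucket")
    total = buckets[-1][1]
    if total == 0:
        return math.nan

    rank = q * total
    prev_bound, prev_count = 0.0, 0.0
    for bound, count in buckets:
        if count >= rank:
            # The +Inf bucket has no finite width, and an empty bucket
            # gives nothing to interpolate: fall back to the lower bound.
            if bound == math.inf or count == prev_count:
                return prev_bound
            fraction = (rank - prev_count) / (count - prev_count)
            return prev_bound + (bound - prev_bound) * fraction
        prev_bound, prev_count = bound, count
    return math.nan


# Invented sample: cumulative observation counts per bucket (seconds).
sample = [(0.001, 0), (0.002, 10), (0.004, 60), (0.008, 90),
          (0.016, 98), (0.032, 100), (math.inf, 100)]
p99 = histogram_quantile(0.99, sample)
print(f"p99 = {p99 * 1000:.1f} ms")
```

For this sample the 99th observation falls in the 0.016 to 0.032 second bucket, so the estimate is about 24 ms, which is within the normal range described above. Because the result is interpolated, its precision is limited by the bucket width.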

Raft proposal anomalies

| Metric | Normal | Abnormal | What it means |
|---|---|---|---|
| Failed proposals (rate) | 0 | > 0 | Some Raft proposals failed. If the rate stays high, investigate further. |
| Pending proposals | 0 | > 0 | Proposals are queuing up, usually because the apply speed is slow. Analyze alongside backend commit latency to identify the bottleneck. |
| Committed minus applied | 0 | > 0 | Too many client requests are putting pressure on etcd. If the gap exceeds 5,000, etcd rejects subsequent requests and returns a "too many requests" error until the backlog is processed. |
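The checks in this table can be combined into one routine, sketched below. The function name `check_raft_proposals`, its parameters, and the wording of the findings are hypothetical; only the thresholds (any value above 0, and a gap above 5,000) come from the table.

```python
APPLY_GAP_LIMIT = 5000  # gap above which etcd rejects requests, per the table


def check_raft_proposals(failed_rate, pending, committed, applied):
    """Return a list of findings for one etcd member (empty means normal)."""
    findings = []
    if failed_rate > 0:
        findings.append("failed proposals: investigate if the rate stays high")
    if pending > 0:
        findings.append("pending proposals: compare with backend commit latency")

    gap = committed - applied
    if gap > APPLY_GAP_LIMIT:
        findings.append("apply gap above limit: etcd rejects new requests")
    elif gap > 0:
        findings.append("apply gap: client requests are putting pressure on etcd")
    return findings


for finding in check_raft_proposals(failed_rate=0.0, pending=3,
                                    committed=120500, applied=120000):
    print(finding)
```

In practice the inputs would come from the three queries in the Raft Proposal Status panel; the values here are made up to show a backlog that has not yet reached the rejection threshold.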

What's next

For metrics, dashboard guides, and anomaly analysis for other control plane components, see: