The maximum number of pods a worker node can support depends on the network plug-in and generally cannot be changed. For the Terway network plug-in, the limit is determined by the number of elastic network interfaces (ENIs) supported by the node's ECS instance type. For the Flannel network plug-in, the limit is specified when you create the cluster and cannot be modified later. If you reach the pod limit, we recommend scaling out your node pool to add more nodes, which increases the total number of available pods.

Per-node pod limit

Terway

Maximum container network pods

For more information, see Work with Terway.

| Terway mode | Maximum number of pods per node | Example | Maximum number of pods per node that support static IP addresses, separate vSwitches, and separate security groups |
| --- | --- | --- | --- |
| Shared ENI mode | (Number of ENIs supported by the ECS instance type - 1) × Number of private IP addresses supported by an ENI, that is, (EniQuantity - 1) × EniPrivateIpAddressQuantity. Note: A node can join a cluster only if the maximum number of pods per node is greater than 11. | For example, the general-purpose ecs.g7.4xlarge instance type supports 8 ENIs, and each ENI supports 30 private IP addresses. The maximum number of pods per node is (8 - 1) × 30 = 210. Important: The maximum number of pods that can use ENIs on a node is a fixed value determined by the instance type. Modifying the | 0 |
| Shared ENI + Trunk ENI | Single-node Trunk Pod quota: Total number of network interfaces supported by the ECS instance type - Number of ENIs supported by the ECS instance type, that is, EniTotalQuantity - EniQuantity. | | |
| Exclusive ENI mode | ECS instances: Number of ENIs supported by the ECS instance type - 1, that is, EniQuantity - 1. Lingjun instances: Lingjun ENI quota - 1, that is, LeniQuota - 1 (see Create and manage Lingjun ENIs). Note: A node can join a cluster only if the maximum number of pods per node is greater than 6. | For example, the general-purpose ecs.g7.4xlarge instance type supports 8 ENIs. The maximum number of pods per node is (8 - 1) = 7. | Number of ENIs supported by the ECS instance type - 1, that is, EniQuantity - 1. Note: Lingjun instances are not supported. |
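The formulas in the table can be checked with a few lines of Python. The following is a minimal sketch, not an official tool: the `EniSpec` class and the function names are hypothetical and exist only for illustration, while the field meanings (EniQuantity, EniPrivateIpAddressQuantity, EniTotalQuantity) and the join thresholds (greater than 11 and greater than 6) come from the table.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class EniSpec:
    """ENI figures for one ECS instance type (hypothetical helper type)."""
    eni_quantity: int                          # EniQuantity
    eni_private_ip_quantity: int               # EniPrivateIpAddressQuantity
    eni_total_quantity: Optional[int] = None   # EniTotalQuantity (Trunk ENI only)


def shared_eni_max_pods(spec: EniSpec) -> int:
    # (EniQuantity - 1) x EniPrivateIpAddressQuantity
    return (spec.eni_quantity - 1) * spec.eni_private_ip_quantity


def exclusive_eni_max_pods(spec: EniSpec) -> int:
    # EniQuantity - 1
    return spec.eni_quantity - 1


def trunk_pod_quota(spec: EniSpec) -> int:
    # EniTotalQuantity - EniQuantity
    if spec.eni_total_quantity is None:
        raise ValueError("eni_total_quantity is required for the Trunk Pod quota")
    return spec.eni_total_quantity - spec.eni_quantity


def can_join_cluster(max_pods: int, mode: str) -> bool:
    # Thresholds from the notes in the table: > 11 (shared), > 6 (exclusive).
    threshold = {"shared": 11, "exclusive": 6}[mode]
    return max_pods > threshold


# ecs.g7.4xlarge figures as given in the table: 8 ENIs, 30 private IPs per ENI.
g7_4xlarge = EniSpec(eni_quantity=8, eni_private_ip_quantity=30)
print(shared_eni_max_pods(g7_4xlarge))     # 210
print(exclusive_eni_max_pods(g7_4xlarge))  # 7
```

Both results match the worked examples in the table, and both exceed the respective minimums required for a node to join a cluster.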

Host network pods

The default number of host network pods is 3. Do not change this value. Modifying it may cause IP address allocation failures for new pods. After the node restarts, the per-node pod limit resets to its default value.

Flannel

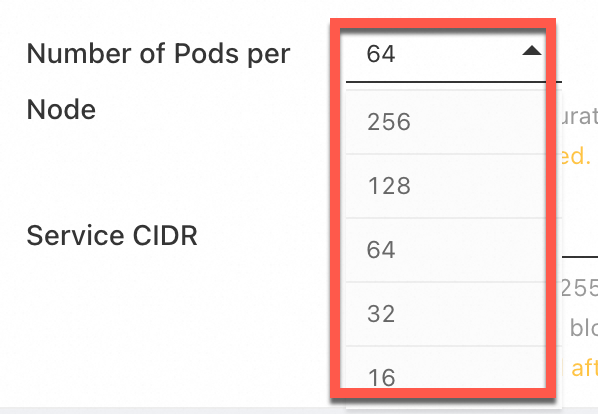

When you use the Flannel network plug-in, the maximum number of pods per node is specified by the Number of Pods per Node setting during cluster creation. You cannot change this value after the cluster is created.

Increase the number of available pods

The method for increasing the number of pods depends on your network plug-in. The following methods increase the total number of available pods in your cluster, but do not necessarily raise the per-node limit.

Scale out a node pool (recommended)

Applicable to: Terway and Flannel.

Manually or automatically scale out a node pool to increase the number of available pods. For more information, see Manually scale a node pool and Node scaling.

Impact: This operation does not affect running services. However, excessively large clusters may affect cluster availability and performance. Plan and use large-scale clusters appropriately. For more information, see Suggestions on using large-scale clusters.
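As a rough planning aid, the number of nodes to add can be estimated from the per-node pod limit. The sketch below assumes that every node in the pool has the same pod limit and that all of that limit is available to workloads; in practice, system components also consume pods, so treat the result as a lower bound. The function name is hypothetical.

```python
import math


def additional_nodes_needed(required_pods: int, current_nodes: int, pods_per_node: int) -> int:
    """Estimate how many nodes to add so the pool can hold required_pods.

    Assumes a homogeneous node pool (same per-node pod limit on every node).
    """
    if pods_per_node <= 0:
        raise ValueError("pods_per_node must be positive")
    total_nodes = math.ceil(required_pods / pods_per_node)
    return max(0, total_nodes - current_nodes)


# Example: 3 nodes with a limit of 210 pods each (630 in total), 1000 pods required.
print(additional_nodes_needed(required_pods=1000, current_nodes=3, pods_per_node=210))  # 2
```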

Upgrade instance types

Applicable to: Terway

Upgrade the instance type of worker nodes to increase the per-node pod limit. For more information, see Upgrade or downgrade the configurations of a worker node. The per-node pod limit does not scale linearly with instance size. It depends on the number of ENIs provided by the ECS instance family.

Impact: Upgrading an instance type requires an ECS instance restart for the change to take effect. This can cause a brief service interruption. Before upgrading, assess your workload and decide if you need to add redundant nodes to handle pod traffic. Drain the node you intend to upgrade and remove it from the ACK cluster. Perform the upgrade during off-peak hours. After the upgrade is complete, add the node back to the cluster. For more information about instance upgrades (including billing) and specific steps, see Overview of instance configuration changes and Upgrade or downgrade the configurations of a worker node. For instructions and important notes on removing and adding nodes, see Remove a node and Add existing ECS instances.

Recreate the cluster

Applicable to: Flannel

Create a new cluster and set Number of Pods per Node to the required value. This setting specifies the maximum number of pods that a single node can support. For more information, see Using the Flannel network plug-in.

Impact: Requires rebuilding your services.

FAQ

Check the maximum container pods in Terway

-

Method 1: When you create a node pool, check the Terway Mode (Supported Pods) column in the Instance Type section to view the maximum number of container network pods supported by an instance type.

-

Method 2: Obtain the required data as described in the following steps, and then use it to manually calculate the number of pods supported by the instance type.

-

Query the number of elastic network interfaces supported by the instance type. For more information, see instance family.

-

Use the OpenAPI Explorer to run the DescribeInstanceTypes operation. Specify the InstanceTypes of your existing nodes and click Initiate Call. In the response,

EniQuantityindicates the maximum number of ENIs supported by the instance type, andEniPrivateIpAddressQuantityindicates the number of private IPs supported by a single ENI.

-

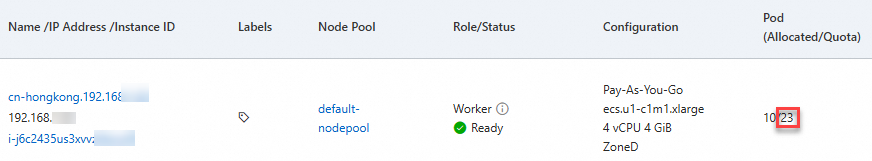
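Method 2 can also be scripted once you have the response body. The sketch below parses a saved DescribeInstanceTypes response and applies the shared ENI formula. The sample payload is trimmed and its nesting (`InstanceTypes.InstanceType[]` with an `InstanceTypeId` field) is an assumption about the response layout, so verify it against the actual output in OpenAPI Explorer. The function name `eni_limits` is hypothetical.

```python
import json

# Trimmed sample payload. The nesting shown here is an assumption;
# compare it with the real response before relying on it.
SAMPLE_RESPONSE = """
{
  "InstanceTypes": {
    "InstanceType": [
      {
        "InstanceTypeId": "ecs.g7.4xlarge",
        "EniQuantity": 8,
        "EniPrivateIpAddressQuantity": 30
      }
    ]
  }
}
"""


def eni_limits(response_text: str) -> dict:
    """Map each instance type ID to (EniQuantity, EniPrivateIpAddressQuantity)."""
    data = json.loads(response_text)
    limits = {}
    for item in data["InstanceTypes"]["InstanceType"]:
        limits[item["InstanceTypeId"]] = (
            item["EniQuantity"],
            item["EniPrivateIpAddressQuantity"],
        )
    return limits


for type_id, (enis, ips_per_eni) in eni_limits(SAMPLE_RESPONSE).items():
    # Shared ENI mode: (EniQuantity - 1) x EniPrivateIpAddressQuantity
    print(type_id, "shared ENI max pods:", (enis - 1) * ips_per_eni)
```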

Check the pod limit of a node

To check the pod limit for an existing node, follow these steps:

- Log on to the ACK console. In the left navigation pane, click Clusters.
- On the Clusters page, click the name of the target cluster. In the navigation pane on the left, go to the Nodes page.
- On the Nodes page, view the Quota for pods. This value is the maximum number of pods supported by the node.
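If you prefer the command line, the same figure is exposed on the Kubernetes Node object under `status.allocatable.pods` (and `status.capacity.pods`). The sketch below reads it from JSON such as the output of `kubectl get node <node-name> -o json`. The sample document is trimmed, and the node name and the value "64" are hypothetical; the assumption that this field matches the console's Quota for pods should be verified on your cluster.

```python
import json

# Trimmed, hypothetical Node object; a real one contains many more fields.
SAMPLE_NODE = """
{
  "metadata": {"name": "example-node"},
  "status": {
    "capacity": {"pods": "64"},
    "allocatable": {"pods": "64"}
  }
}
"""


def node_pod_limit(node_json: str) -> int:
    """Return the pod limit that the kubelet reports for a node."""
    node = json.loads(node_json)
    # Kubernetes serializes resource quantities as strings.
    return int(node["status"]["allocatable"]["pods"])


print(node_pod_limit(SAMPLE_NODE))  # 64
```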

Why is the initial pod usage high?

Cluster components run as pods, consume node resources, and count toward the pod limit. Some components run multiple replicas. If you enable many features when you create a cluster, these components consume a significant share of the available pods. To add capacity, follow the methods in Increase the number of available pods.

Can I manually increase the Terway pod limit?

No. In Terway mode, the number of pods a node can run depends on the number of ENIs provided by its ECS instance type. Even if you manually increase the pod limit, newly created pods that exceed the actual capacity will fail to schedule due to a lack of available IP addresses. This also causes errors during cluster health checks and pre-upgrade checks.

If you have already manually modified the maximum number of pods for a node, remove the node and then add it back to the cluster. For instructions and important notes, see Remove a node and Add existing ECS instances.

Why do similar nodes have different pod limits?

The per-node pod limit is not directly related to CPU or memory. In Terway networking, the limit depends on the number of ENIs provided by the ECS instance family. In Flannel networking, the default maximum is 256 pods per node, but this can be increased for certain cluster types.