Outdated Kubernetes versions expose clusters to known security vulnerabilities and limit access to technical support. ACK Edge uses in-place upgrades to move your clusters to a new Kubernetes version without requiring cluster recreation. This document covers version constraints, the three-phase upgrade procedure, and runtime migration requirements for upgrading to 1.24.

Why upgrade

- Security vulnerabilities: New versions patch known vulnerabilities. ACK Edge does not backport security fixes to outdated versions.
- Limited support: ACK Edge does not guarantee support quality for outdated versions. Upgrading restores full technical support coverage.
- New features: Each release includes improvements that deliver a smoother development and operations experience.

Usage notes

- ACK Edge supports upgrades within the Kubernetes 1.18 to 1.24 range, as well as upgrades to Kubernetes 1.26 and later.
- Upgrades are sequential: you can upgrade only to the next Kubernetes version that ACK Edge offers. For example, to upgrade from 1.18 to 1.22, first upgrade to 1.20, and then upgrade to 1.22.
- Rollback is not supported. After a cluster is upgraded, it cannot be reverted to an earlier Kubernetes version.
- To upgrade from Kubernetes 1.26 to 1.30, submit a ticket to request whitelist access.
- The Kubernetes versions of edge node pools and the control plane can differ by at most two minor versions. For example, if the control plane runs Kubernetes 1.22, edge node pools must run Kubernetes 1.20 or later.
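The two version rules above (sequential upgrades and the two-minor-version skew limit) can be checked with a small POSIX shell sketch. It assumes, as the examples in this topic suggest, that ACK Edge offers only even-numbered minor versions (1.18, 1.20, 1.22, ...), and it does not model the whitelisted 1.26 to 1.30 path. The function names are hypothetical. skew_ok also applies the upstream Kubernetes rule that a node must not run a newer version than the control plane.

```shell
# Sketch only: assumes ACK Edge releases even-numbered minor versions.

# Print the minor number of a version string.
# "1.22", "v1.22.15" and "1.24.6-aliyunedge.1" all yield the second field.
minor_of() {
  echo "$1" | cut -d. -f2
}

# Print each version to pass through, in order, when upgrading from $1 to $2.
upgrade_path() {
  local m to path
  m=$(( $(minor_of "$1") + 2 ))
  to=$(minor_of "$2")
  path=""
  while [ "$m" -le "$to" ]; do
    path="${path:+$path }1.$m"
    m=$((m + 2))
  done
  echo "$path"
}

# Succeed if an edge node pool on version $2 may run against a control
# plane on version $1: not newer, and at most two minor versions older.
skew_ok() {
  local cp pool
  cp=$(minor_of "$1")
  pool=$(minor_of "$2")
  [ "$pool" -le "$cp" ] && [ $((cp - pool)) -le 2 ]
}
```

For example, `upgrade_path 1.18 1.24` prints `1.20 1.22 1.24`, `skew_ok 1.22 1.20` succeeds, and `skew_ok 1.22 1.18` fails.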

How it works

An ACK Edge cluster upgrade runs in three sequential phases:

| Phase | Scope | Estimated duration |
|---|---|---|
| Control plane | kube-apiserver, kube-controller-manager, kube-scheduler | ~5 minutes |
| On-cloud node pools | kubelet, container runtime per node pool | ~5 minutes per batch |
| Edge node pools | All nodes, updated one at a time | Varies by node count |

The control plane uses rolling updates. On-cloud node pools are updated one pool at a time, with nodes updated in batches: the first batch contains one node, and each subsequent batch doubles in size. Edge node pools require running the upgrade command on each node individually.

Prerequisites

Before you begin, ensure that you have:

- Access to the ACK console with cluster management permissions
- Reviewed the release notes for your target version, for example, the release notes for ACK Edge Kubernetes 1.30
- Checked the current Kubernetes version of your cluster in the Version column on the Clusters page of the ACK console

Step 1: Update the control plane

- Log on to the ACK console. In the left-side navigation pane, click Clusters.
- On the Clusters page, click the name of the cluster that you want to upgrade. In the left-side navigation pane, choose Operations > Upgrade Cluster.
- On the Upgrade Cluster page, set Destination Version and click Precheck. After the precheck completes, review the results in the Pre-check Results section:
  - No issues: The cluster passed the precheck. Proceed to the next step.
  - Issues found: The cluster continues to run normally and its status does not change. Fix the issues based on the suggestions in the console before you proceed. For details, see Cluster check items and suggestions on how to fix cluster issues.

  For clusters running Kubernetes 1.20 or later, the precheck also scans for usage of deprecated APIs. These findings are informational only and do not block the upgrade. Review them anyway: an API that is removed in the destination version is no longer served after the upgrade, so requests from workloads that still use it will fail. For details, see Deprecated APIs.
- Click Start Update and follow the on-screen instructions. Track the progress in the update history panel in the upper-right corner of the Upgrade Cluster page. After the update completes, go to the Clusters page and confirm that the Kubernetes version has changed. Nodes added after this point automatically use the new version.
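To cross-check the precheck's deprecated API findings yourself, one option is the upstream kube-apiserver metric apiserver_requested_deprecated_apis (Kubernetes 1.19 and later), which records deprecated APIs that have been requested since the API server last started. The sketch below assumes that your credentials can read the API server's /metrics endpoint; list_deprecated_apis is a hypothetical helper that only parses the metric text, so an empty result does not prove that no deprecated API is in use.

```shell
# Read Prometheus text-format metrics on stdin and print one line per
# deprecated API that has been requested: group/version resource (removal).
list_deprecated_apis() {
  awk '
    index($0, "apiserver_requested_deprecated_apis{") == 1 {
      g = v = r = rel = ""
      # Match each label on its own, so label order does not matter.
      if (match($0, /[{,]group="[^"]*"/))           g   = substr($0, RSTART + 8,  RLENGTH - 9)
      if (match($0, /[{,]version="[^"]*"/))         v   = substr($0, RSTART + 10, RLENGTH - 11)
      if (match($0, /[{,]resource="[^"]*"/))        r   = substr($0, RSTART + 11, RLENGTH - 12)
      if (match($0, /[{,]removed_release="[^"]*"/)) rel = substr($0, RSTART + 18, RLENGTH - 19)
      # The core API group is reported as an empty string.
      if (g == "") g = "core"
      out = g "/" v " " r
      if (rel != "") out = out " (removed in " rel ")"
      print out
    }' | sort -u
}
```

Usage: `kubectl get --raw /metrics | list_deprecated_apis`. Comment lines such as `# HELP` and `# TYPE` are skipped because only lines that start with the metric name are parsed.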

Step 2: Update on-cloud node pools

After the control plane update completes, update your on-cloud node pools during off-peak hours. Each node pool update replaces the kubelet and container runtime on every node.

Node pools are updated sequentially: only one pool is updated at a time. Within each pool, nodes are updated in batches. The first batch contains one node, and each subsequent batch doubles in size until it reaches the maximum batch size, which you set on the Node Pool Upgrade page. The maximum batch size still applies after you resume a paused update. A maximum batch size of 10 is suitable for most clusters.
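The batch sizes that this rule produces for a single node pool can be worked out with a short shell sketch. It is illustrative only: batch_plan is a hypothetical helper, and the scheduling that ACK actually performs may differ, for example after a paused update is resumed.

```shell
# Sketch of the batching rule: the first batch has one node, and each
# later batch doubles in size, capped at the maximum batch size.
# Usage: batch_plan <nodes-in-pool> <max-batch-size>
batch_plan() {
  local remaining cap size plan
  remaining=$1
  cap=$2
  size=1
  plan=""
  if [ "$cap" -lt 1 ]; then
    echo "max batch size must be at least 1" >&2
    return 1
  fi
  while [ "$remaining" -gt 0 ]; do
    if [ "$size" -gt "$cap" ]; then size=$cap; fi
    if [ "$size" -gt "$remaining" ]; then size=$remaining; fi
    plan="${plan:+$plan }$size"
    remaining=$((remaining - size))
    size=$((size * 2))
  done
  echo "$plan"
}
```

For example, `batch_plan 20 10` prints `1 2 4 8 5`: five batches, or roughly 25 minutes at the estimate of about 5 minutes per batch. With 30 nodes the plan is `1 2 4 8 10 5`, which is six batches.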

For detailed steps and update notes, see Update an on-cloud node pool.

Step 3: Update edge node pools

Complete the control plane update before starting this step. An edge node pool is considered fully updated only when all nodes in the pool are updated.

Run the following upgrade command on each node in the edge node pool, one node at a time:

export REGION="" INTERCONNECT_MODE="" TARGET_CLUSTER_VERSION=""; export ARCH=$(uname -m | awk '{print ($1 == "x86_64") ? "amd64" : (($1 == "aarch64") ? "arm64" : "amd64")}') INTERNAL=$( [ "$INTERCONNECT_MODE" = "private" ] && echo "-internal" || echo "" ); wget http://aliacs-k8s-${REGION}.oss-${REGION}${INTERNAL}.aliyuncs.com/public/pkg/run/attach/${TARGET_CLUSTER_VERSION}/${ARCH}/edgeadm -O edgeadm; chmod u+x edgeadm; ./edgeadm upgrade --interconnect-mode=${INTERCONNECT_MODE} --region=${REGION}

Replace the placeholders before running the command:

| Parameter | Description | Example |
|---|---|---|
| TARGET_CLUSTER_VERSION | The Kubernetes version of the updated control plane | 1.24.6-aliyunedge.1. For supported Kubernetes versions, see Release notes. |
| REGION | The region ID of the cluster | cn-hangzhou. For supported regions, see Supported regions. |
| INTERCONNECT_MODE | The network type used to connect nodes: basic (public network) or private (Express Connect circuits) | basic |

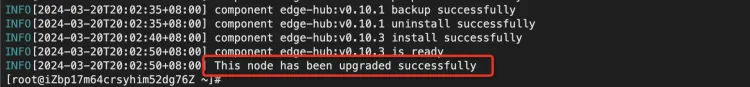

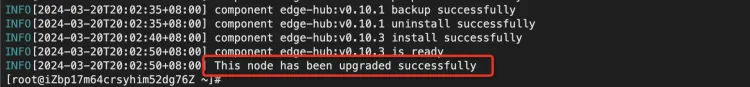

If the upgrade succeeds, the command prints This node has been upgraded successfully.
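The download URL in the upgrade command is assembled from the three placeholders. The following sketch rebuilds the same URL in a hypothetical edgeadm_url function. Unlike the one-liner, it rejects empty values and interconnect modes other than basic and private, so a typo fails before wget runs.

```shell
# Map `uname -m` output to the package architecture, as the one-liner does:
# aarch64 -> arm64, everything else -> amd64.
edgeadm_arch() {
  case "$1" in
    aarch64) echo arm64 ;;
    *) echo amd64 ;;
  esac
}

# Usage: edgeadm_url <REGION> <INTERCONNECT_MODE> <TARGET_CLUSTER_VERSION> <uname -m output>
edgeadm_url() {
  local region mode version arch internal
  region=$1
  mode=$2
  version=$3
  arch=$(edgeadm_arch "$4")
  case "$mode" in
    basic) internal="" ;;
    private) internal="-internal" ;;  # private mode uses the internal OSS endpoint
    *) echo "INTERCONNECT_MODE must be basic or private" >&2; return 1 ;;
  esac
  if [ -z "$region" ] || [ -z "$version" ]; then
    echo "REGION and TARGET_CLUSTER_VERSION must not be empty" >&2
    return 1
  fi
  echo "http://aliacs-k8s-${region}.oss-${region}${internal}.aliyuncs.com/public/pkg/run/attach/${version}/${arch}/edgeadm"
}
```

A possible use: `url=$(edgeadm_url "$REGION" "$INTERCONNECT_MODE" "$TARGET_CLUSTER_VERSION" "$(uname -m)") && wget -O edgeadm "$url"`, followed by the `chmod` and `./edgeadm upgrade` parts of the original command.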

Runtime migration for Docker (upgrading to 1.24)

Kubernetes 1.24 dropped support for the Docker runtime. If any nodes in your cluster use Docker, migrate them to containerd as part of the upgrade to 1.24.
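Before you choose a migration method, you can list the nodes that still run Docker. Each node reports its runtime in the status.nodeInfo.containerRuntimeVersion field as a value such as docker://<version> or containerd://<version>. The filter below is a hypothetical helper that reads node names and runtime versions on stdin.

```shell
# Read "<node-name> <container-runtime-version>" lines on stdin and print
# the names of nodes whose runtime is Docker (reported as docker://...).
docker_nodes() {
  awk '$2 ~ /^docker:\/\// { print $1 }'
}
```

Usage: `kubectl get nodes --no-headers -o custom-columns=NAME:.metadata.name,RUNTIME:.status.nodeInfo.containerRuntimeVersion | docker_nodes`. An empty result means no node needs a runtime migration.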

- (Recommended) Rolling migration: Create a new node pool that uses the containerd runtime and scale it out. Gradually migrate workloads to the new node pool by setting the nodes in the old node pool to unschedulable or by updating the scheduling labels of your workloads. Then take the old node pool offline. For details on creating a node pool, see Edge node pool management. For details on setting nodes to unschedulable, see Node draining and scheduling status.
- In-place update: Drain each node before you update it, and then run the upgrade command on it. All containers on the node restart during the update. Update the nodes in the edge node pool one at a time:
  - Drain the node.
  - Run the following command on the node:

    export REGION="" INTERCONNECT_MODE="" TARGET_CLUSTER_VERSION=""; export ARCH=$(uname -m | awk '{print ($1 == "x86_64") ? "amd64" : (($1 == "aarch64") ? "arm64" : "amd64")}') INTERNAL=$( [ "$INTERCONNECT_MODE" = "private" ] && echo "-internal" || echo "" ); wget http://aliacs-k8s-${REGION}.oss-${REGION}${INTERNAL}.aliyuncs.com/public/pkg/run/attach/${TARGET_CLUSTER_VERSION}/${ARCH}/edgeadm -O edgeadm; chmod u+x edgeadm; ./edgeadm upgrade --interconnect-mode=${INTERCONNECT_MODE} --region=${REGION}

    Replace the placeholders before running the command:

    | Parameter | Description | Example |
    |---|---|---|
    | TARGET_CLUSTER_VERSION | The Kubernetes version of the updated control plane | 1.24.6-aliyunedge.1. For supported Kubernetes versions, see Release notes. |
    | REGION | The region ID of the cluster | cn-hangzhou. For supported regions, see Supported regions. |
    | INTERCONNECT_MODE | The network type used to connect nodes: basic (public network) or private (Express Connect circuits) | basic |

    If the upgrade succeeds, the command prints This node has been upgraded successfully.
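For the in-place path, the kubectl side of each node's migration can be sketched as two hypothetical helpers. prepare_node drains the node before you run the upgrade command on it. finish_node waits for the node to report Ready and then uncordons it, because a drained node stays unschedulable until it is uncordoned. The uncordon step and the 10-minute timeout are assumptions, not part of the procedure above. The --delete-emptydir-data flag discards the emptyDir data of evicted pods. Set DRY_RUN=1 to print the commands instead of running them.

```shell
# Run a command, or only print it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Before the upgrade: evict pods from the node and mark it unschedulable.
# DaemonSet pods are skipped; emptyDir data of evicted pods is deleted.
prepare_node() {
  run kubectl drain "$1" --ignore-daemonsets --delete-emptydir-data
}

# After the upgrade command succeeds on the node: wait until it is Ready,
# then allow pods to be scheduled on it again.
finish_node() {
  run kubectl wait --for=condition=Ready "node/$1" --timeout=10m &&
    run kubectl uncordon "$1"
}
```

Per node, the sequence is `prepare_node <node-name>`, then the upgrade command on that node, then `finish_node <node-name>`, before moving to the next node.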

Verify the upgrade

After all three phases complete, confirm the upgrade succeeded:

- On the Clusters page, verify that the Kubernetes version shown in the Version column matches the target version.
- Check that node pools are running and nodes are in a healthy state.
- Verify that workloads in the cluster are operating as expected.
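The node checks can also be run from the command line. The sketch below is a hypothetical filter over kubectl get nodes output. It assumes that the VERSION column starts with v followed by the Kubernetes version (for example, v1.24.x for 1.24). It also flags nodes shown as Ready,SchedulingDisabled, which are still cordoned, for example after a drain.

```shell
# Read `kubectl get nodes --no-headers` output on stdin
# (columns: NAME STATUS ROLES AGE VERSION) and print every node that is
# not exactly Ready or whose VERSION does not start with v<target>.
# Usage: unhealthy_nodes <target minor version, e.g. 1.24>
unhealthy_nodes() {
  awk -v want="v$1." '
    $2 != "Ready" || index($5, want) != 1 { print $1, $2, $5 }
  '
}
```

Usage: `kubectl get nodes --no-headers | unhealthy_nodes 1.24` prints nothing when every node is Ready and runs 1.24.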

FAQ

Does ACK Edge force cluster upgrades?

No. ACK Edge clusters are upgraded manually only. If you choose not to upgrade, the cluster stays on its current Kubernetes version. We recommend upgrading promptly to maintain access to security patches and full technical support.

What do I do if an edge node fails to upgrade?

If the upgrade command does not return This node has been upgraded successfully, refer to What do I do if an edge node fails to be upgraded when I upgrade an ACK Edge cluster?

What's next

- If your cluster fails a precheck, see Cluster check items and suggestions on how to fix cluster issues.
- To create or manage edge node pools, see Edge node pool management.