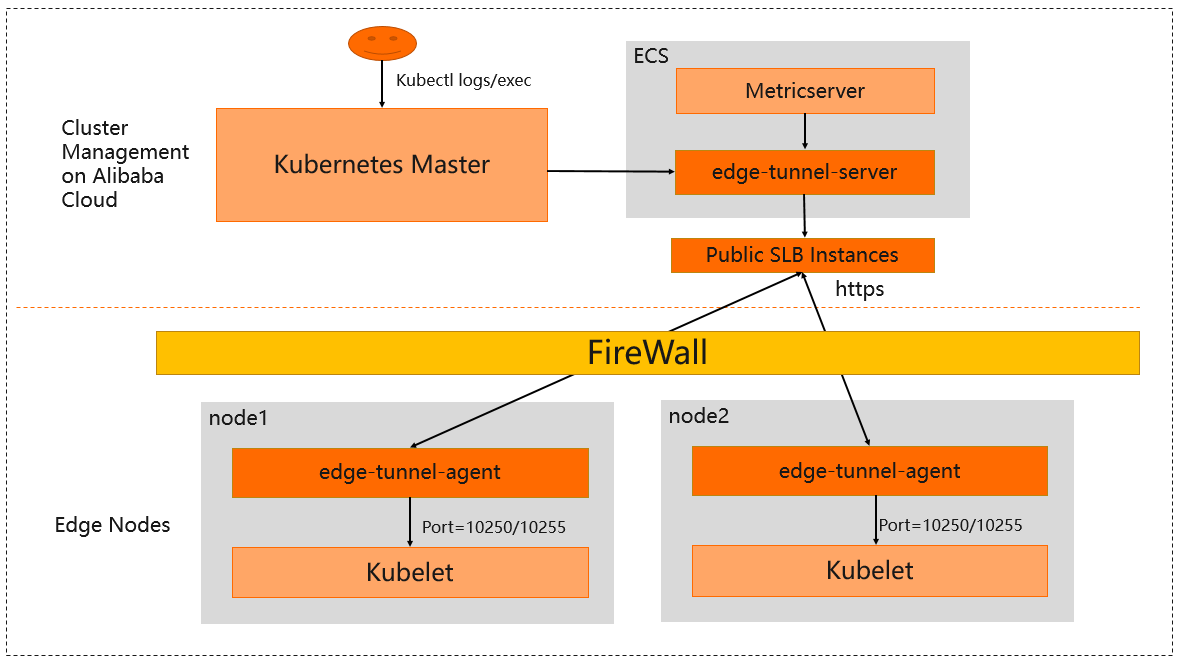

ACK Edge clusters running Kubernetes earlier than 1.26 use edge-tunnel-server and edge-tunnel-agent to establish encrypted tunnels from the cloud to edge nodes. These tunnels let kube-apiserver and metrics-server reach edge nodes in private networks without any changes to those components.

For ACK Edge clusters running Kubernetes 1.26 or later, Raven replaces the tunnel components. For more information, see Work with the cloud-edge communication component Raven.
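To decide which component applies to a given cluster, you can compare its Kubernetes minor version against 1.26. The following POSIX shell sketch assumes version strings in the form used on this page (for example, 1.20.11-aliyunedge.1), optionally prefixed with `v`. The function name `tunnel_component` is hypothetical and is not part of any ACK tooling.

```shell
# Hypothetical helper. Assumes versions such as 1.20.11-aliyunedge.1,
# optionally prefixed with "v". Prints "edge-tunnel" for clusters earlier
# than 1.26 and "raven" for 1.26 or later.
tunnel_component() {
  version=${1#v}                                   # drop an optional leading "v"
  major=$(printf '%s\n' "$version" | cut -d. -f1)  # "1" in 1.20.11-aliyunedge.1
  minor=$(printf '%s\n' "$version" | cut -d. -f2)  # "20" in 1.20.11-aliyunedge.1
  if [ "$major" -gt 1 ] || [ "$minor" -ge 26 ]; then
    echo raven
  else
    echo edge-tunnel
  fi
}

tunnel_component 1.20.11-aliyunedge.1   # prints: edge-tunnel
tunnel_component v1.26.3                # prints: raven
```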

How it works

edge-tunnel-server runs as a Deployment on cloud nodes. edge-tunnel-agent runs as a DaemonSet on every edge node.

When the cluster is created, ACK provisions a Server Load Balancer (SLB) instance in front of edge-tunnel-server. Each edge-tunnel-agent connects outward through the SLB instance to establish an encrypted tunnel over the Internet.

Once the tunnels are up, requests from kube-apiserver or metrics-server that target port 10250 or port 10255 on any edge node are automatically forwarded through edge-tunnel-server. This includes O&M operations such as kubectl logs and kubectl exec, for which kube-apiserver sends requests to the kubelet on port 10250 or port 10255 of the edge node. No component configuration changes are needed.
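The forwarding rule above can be summarized as a simple predicate: a request to an edge node goes through the tunnel when its target port is 10250 or 10255, or one of the extra ports configured as described later on this page. The following shell function is a hypothetical, simplified model for reasoning about that rule; it is not the implementation of edge-tunnel-server, and it ignores the protocol distinctions (HTTP, HTTPS, localhost) that the real configuration makes.

```shell
# Hypothetical model of the forwarding rule, not edge-tunnel-server code.
#   $1: target port on the edge node
#   $2: optional extra ports in the "port1, port2" format, e.g. "9051, 9052"
# Prints "yes" if the request would go through the tunnel, "no" otherwise.
is_tunneled() {
  # Default ports first, then any extra ports with commas turned into spaces.
  for p in 10250 10255 $(printf '%s' "${2:-}" | tr ',' ' '); do
    if [ "$p" = "$1" ]; then
      echo yes
      return 0
    fi
  done
  echo no
}

is_tunneled 10250          # prints: yes (kubelet port used by kubectl logs/exec)
is_tunneled 9051           # prints: no (not forwarded by default)
is_tunneled 9051 "9051"    # prints: yes
```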

If the SLB instance is deleted or stopped, all cloud-edge tunnels stop working. If an edge node loses its connection to the cloud, or the connection becomes unstable, the tunnel to that node may also fail.

Prerequisites

Before you begin, ensure that you have:

- An ACK Edge cluster running Kubernetes earlier than 1.26
- At least one Elastic Compute Service (ECS) instance in the cloud to host edge-tunnel-server

For clusters running 1.16.9-aliyunedge.1, metrics-server must be deployed on the same ECS node as edge-tunnel-server. For clusters running 1.18.8-aliyunedge.1 or later, you can deploy them on separate ECS nodes.

Extend monitoring to non-standard ports

By default, cloud-edge tunnels only forward traffic on ports 10250 and 10255. To collect monitoring data from edge nodes over other ports, configure the edge-tunnel-server-cfg ConfigMap in the kube-system namespace.

The configuration fields and supported protocols depend on your cluster version:

| Cluster version | Supported protocols | Configuration field | Value format |
|---|---|---|---|
| 1.20.11-aliyunedge.1 | HTTP | http-proxy-ports | port1, port2 |
| 1.20.11-aliyunedge.1 | HTTPS | https-proxy-ports | port1, port2 |
| 1.20.11-aliyunedge.1 | localhost endpoints | localhost-proxy-ports | port1, port2 (default: 10250, 10255, 10266, 10267) |
| 1.18.8-aliyunedge.1 | HTTP only | dnat-ports-pair | port=10264 |
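Port lists such as `9052, 8080` are plain strings, so a typo only surfaces after the ConfigMap is applied. The following POSIX shell sketch checks a `port1, port2` value before you put it into the ConfigMap. The function name `check_port_list` is hypothetical, and it only verifies that each entry is an integer from 1 to 65535; it does not check whether anything listens on those ports.

```shell
# Hypothetical pre-flight check for a "port1, port2" value.
# Prints each port on its own line; returns non-zero on the first bad entry.
check_port_list() {
  for p in $(printf '%s' "$1" | tr ',' ' '); do
    case $p in
      *[!0-9]*) echo "invalid port: $p" >&2; return 1 ;;  # non-digit characters
    esac
    if [ "$p" -lt 1 ] || [ "$p" -gt 65535 ]; then
      echo "port out of range: $p" >&2
      return 1
    fi
    echo "$p"
  done
}

# Prints one port per line.
check_port_list "10250, 10255, 10266, 10267, 8080"
```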

Configure additional ports on 1.20.11-aliyunedge.1

The following example enables:

- Port 9051 over HTTP
- Port 9052 and port 8080 over HTTPS
- Access to localhost endpoints, including https://127.0.0.1:8080

Apply the ConfigMap:

```shell
cat <<EOF | kubectl apply -f -
apiVersion: v1
data:
  http-proxy-ports: "9051"
  https-proxy-ports: "9052, 8080"
  localhost-proxy-ports: "10250, 10255, 10266, 10267, 8080"
kind: ConfigMap
metadata:
  name: edge-tunnel-server-cfg
  namespace: kube-system
EOF
```

Configure additional ports on 1.18.8-aliyunedge.1

On 1.18.8-aliyunedge.1, only HTTP is supported for non-standard ports. Use the dnat-ports-pair field with the format <target-port>=10264.

The following example enables access to port 9051 on edge nodes from the cloud:

Apply the ConfigMap:

```shell
cat <<EOF | kubectl apply -f -
apiVersion: v1
data:
  dnat-ports-pair: '9051=10264'
kind: ConfigMap
metadata:
  name: edge-tunnel-server-cfg
  namespace: kube-system
EOF
```
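If you manage several clusters, you may prefer to generate the 1.18.8-aliyunedge.1 manifest from a target port instead of editing the heredoc by hand. The following POSIX shell sketch prints the same ConfigMap as the example above for any single target port, ready to pipe into `kubectl apply -f -`. The function name `render_dnat_cfg` is hypothetical, and it emits exactly one `<target-port>=10264` pair because that is the only value format documented on this page.

```shell
# Hypothetical generator for the 1.18.8-aliyunedge.1 ConfigMap.
# Usage: render_dnat_cfg <target-port> | kubectl apply -f -
render_dnat_cfg() {
  case $1 in
    ''|*[!0-9]*) echo "usage: render_dnat_cfg <target-port>" >&2; return 1 ;;
  esac
  if [ "$1" -lt 1 ] || [ "$1" -gt 65535 ]; then
    echo "port out of range: $1" >&2
    return 1
  fi
  # Unquoted EOF so that $1 is expanded inside the here-document.
  cat <<EOF
apiVersion: v1
data:
  dnat-ports-pair: '$1=10264'
kind: ConfigMap
metadata:
  name: edge-tunnel-server-cfg
  namespace: kube-system
EOF
}

render_dnat_cfg 9051
```

Printing the manifest first, rather than applying it directly, lets you review or diff the output before it reaches the cluster.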