This topic provides answers to frequently asked questions about data development.

Resources

PyODPS

EMR Spark

Nodes and workflows

Tables

Logs and retention

Batch operations

BI integration

API calls

Others

Call third-party packages in PyODPS

You must use an exclusive resource group for scheduling to perform this operation. For details, see Use third-party packages and custom Python scripts in PyODPS nodes.

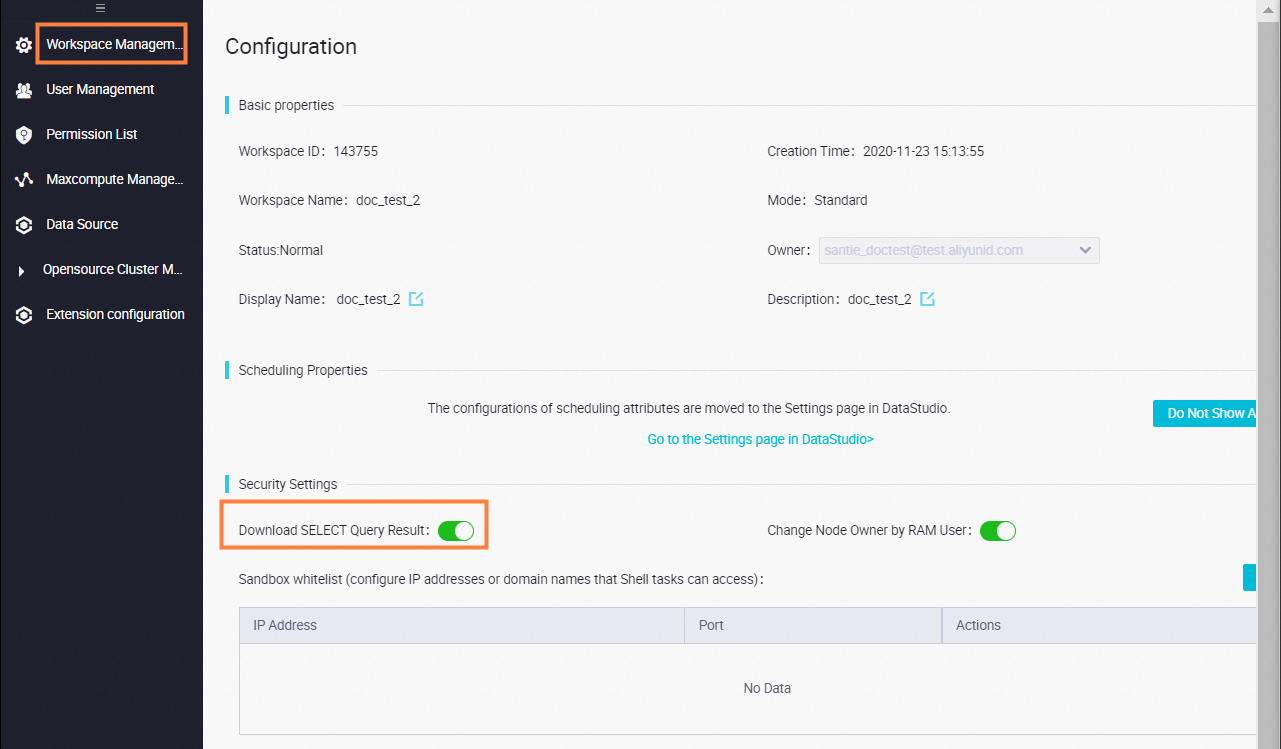

Control table data download

To download data in DataWorks, the download feature must be enabled. If the download option is not visible, the feature is disabled for the current workspace. Contact the workspace owner or administrator to enable it in the workspace management settings.

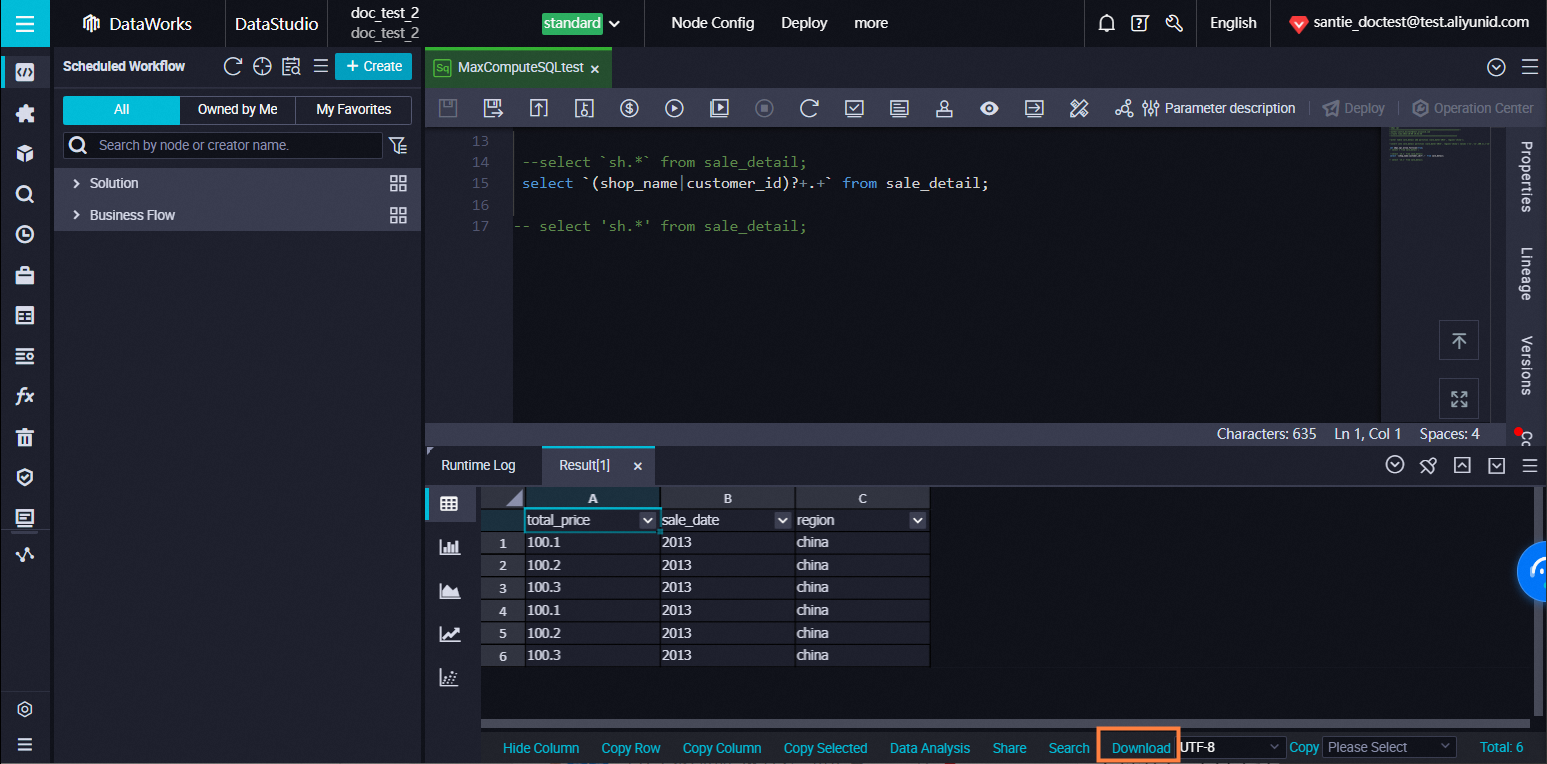

After a query is executed, a download button appears in the bottom-right corner of the result section.

Due to engine limitations, the DataWorks console supports downloading a maximum of 10,000 records.

Download more than 10,000 records

Use the MaxCompute Tunnel command. See Use SQLTask and Tunnel to export a large amount of data.

EMR table creation error

Possible cause: The ECS cluster hosting EMR lacks the required security group rules. You must add security group policies when registering an EMR cluster; otherwise, table creation may fail. Add the following policies:

Authorization Policy: Allow

Protocol Type: Custom TCP

Port Range: 8898/8898

Authorization Object: 100.104.0.0/16

Solution: Check the security group configuration of the ECS cluster where EMR is located and add the required policies.

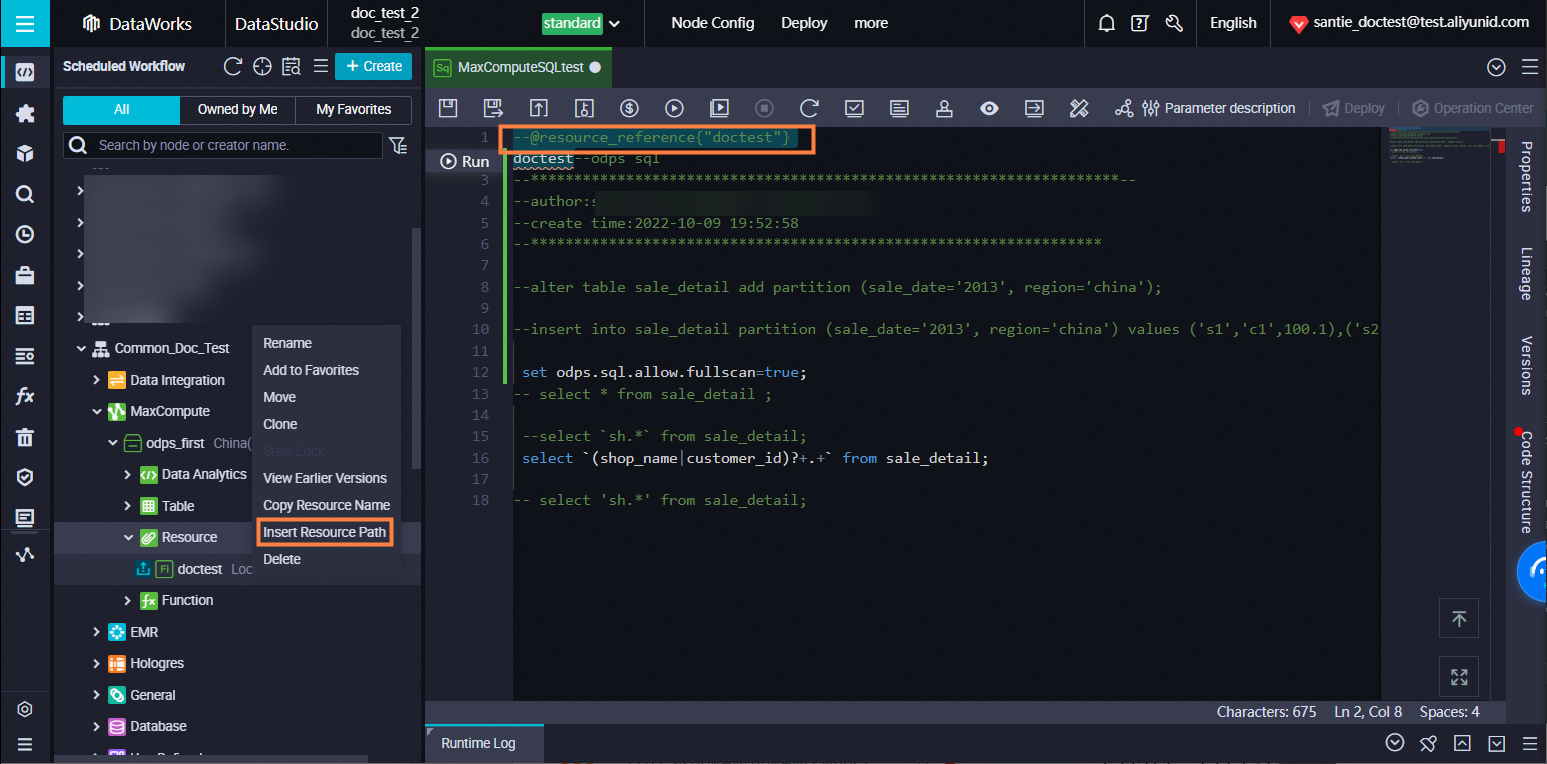
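As a rough illustration of the rule listed above, the following Python sketch checks whether a set of inbound rules already admits TCP port 8898 from 100.104.0.0/16. The dictionary layout (`policy`, `protocol`, `port_range`, `source`) and the function names are hypothetical and chosen only for this example; they are not an ECS API format, so transcribe your real rules into this shape before trying it.

```python
import ipaddress

# Values taken from the policy listed in this section.
REQUIRED_PORT = 8898
REQUIRED_SOURCE = ipaddress.ip_network("100.104.0.0/16")


def rule_allows_dataworks(rule):
    """Return True if one inbound rule admits TCP 8898 from 100.104.0.0/16.

    `rule` is a hypothetical dict with the keys policy, protocol,
    port_range ("low/high") and source (a CIDR block).
    """
    if rule["policy"].lower() != "allow" or rule["protocol"].lower() != "tcp":
        return False
    low, high = (int(part) for part in rule["port_range"].split("/"))
    if not low <= REQUIRED_PORT <= high:
        return False
    source = ipaddress.ip_network(rule["source"], strict=False)
    if source.version != REQUIRED_SOURCE.version:
        return False
    # A broader CIDR block that contains the required block also qualifies.
    return REQUIRED_SOURCE.subnet_of(source)


def has_required_rule(rules):
    """Return True if any rule in the list satisfies the requirement."""
    return any(rule_allows_dataworks(rule) for rule in rules)
```

If `has_required_rule` returns False for the rules of the ECS security group, add the policy described above and retry the table creation.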

Use resources within a node

Right-click the target resource and select Reference Resource.

Download resources uploaded to DataWorks

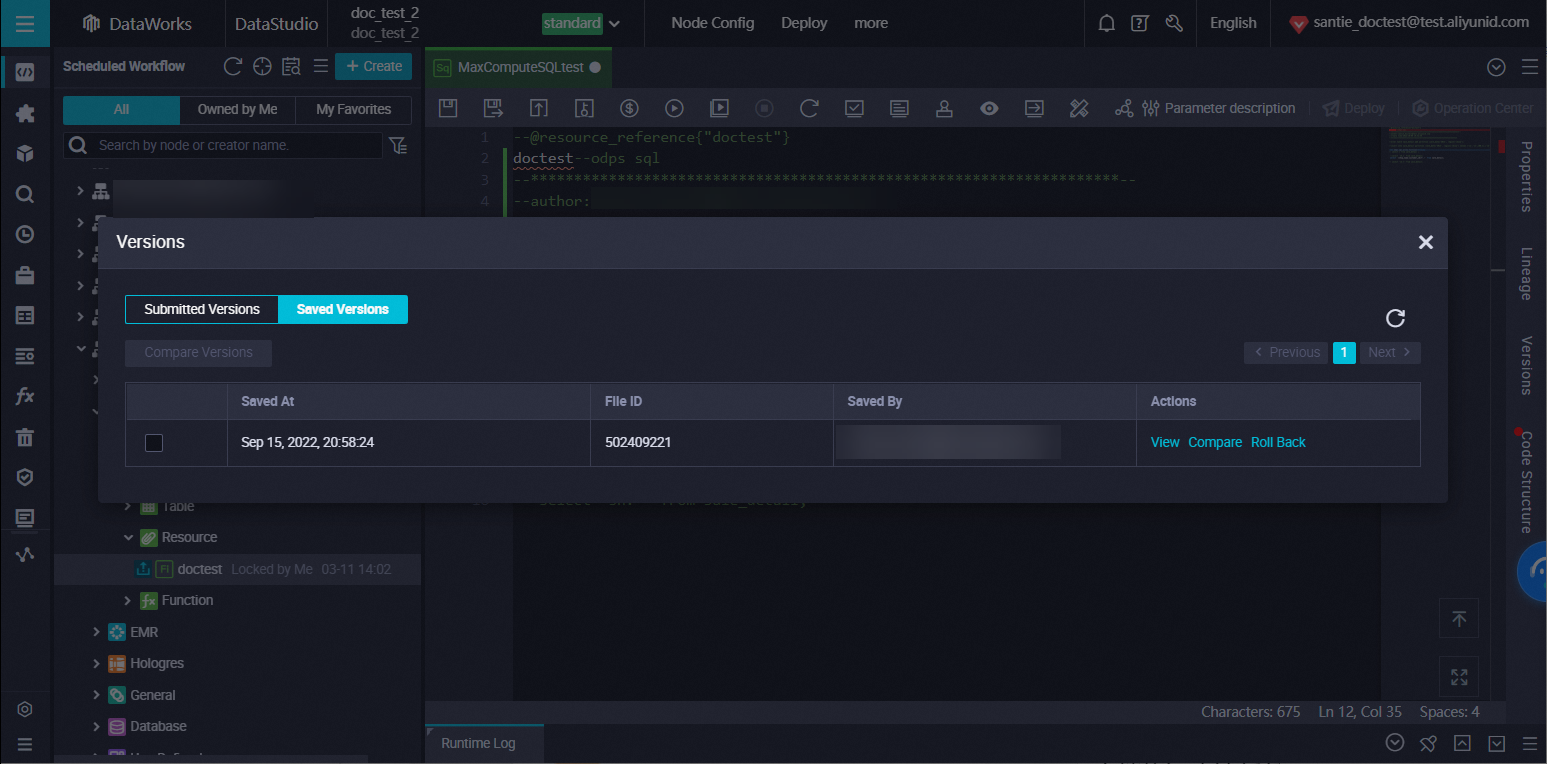

Right-click the target resource and select View Version History.

Upload resources over 30 MB

Resources larger than 30 MB must be uploaded using the Tunnel command. After uploading, add them to DataWorks via the MaxCompute resource feature. See Use Tunnel to upload and download data and Use resources uploaded via odpscmd.

Use resources uploaded via odpscmd

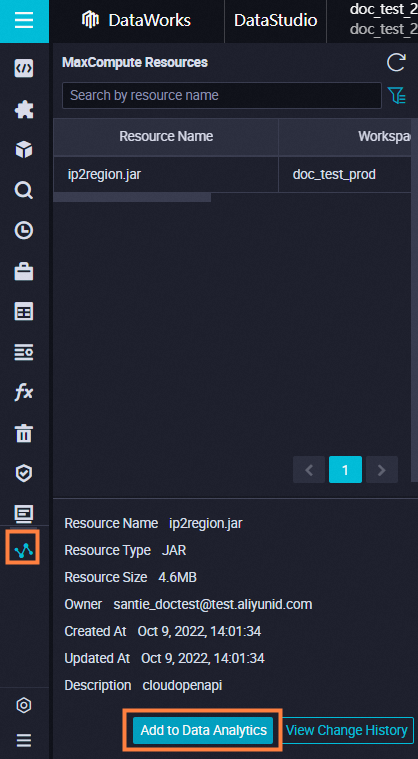

To use resources uploaded via odpscmd in DataWorks, add the resources to the MaxCompute resources section in DataStudio.

Upload and execute local JAR

You must upload the JAR file as a resource in DataStudio. To use the resource in a node, right-click the resource and select Reference Resource. A comment will automatically appear at the top of the node code. You can then execute the resource by its name.

Example: In a Shell node, the code looks like this:

`##@resource_reference{"test.jar"}`

`java -jar test.jar`

Use MaxCompute table resources

DataWorks does not support uploading MaxCompute table resources directly via the console. See Example: Reference a table resource to reference resource tables. To use MaxCompute table resources in DataWorks, follow these steps:

Add the table as a resource in MaxCompute by executing the following SQL statement. For details, see Add resources.

`add table <table_name> [partition (<spec>)] [as <alias>] [comment '<comment>'][-f];`

Create a Python resource in DataStudio. In this example, the resource `get_cache_table.py` iterates through and locates the MaxCompute table resource. For the Python code, see Development Code.

Create a function named `table_udf` in DataStudio and configure it as follows:

Class Name: `get_cache_table.DistCacheTableExample`

Resource List: Select the `get_cache_table.py` file. Add table resources in Script Mode.

After registering the function, you can construct test data and call the function. For details, see Usage Example.

Resource group configuration error

To resolve this issue, go to the Scheduling tab on the right side of the node configuration page. Locate Resource Group and select a resource group from the drop-down list. If no resource group is available, bind one to your workspace:

Log in to the DataWorks console. Select the desired region and click Resource Group in the left-side navigation pane.

Find the target resource group and click in the Actions column.

Find the created DataWorks workspace and click Associate in the Actions column.

After completing the above steps, go to Scheduling on the right side of the node editing page and select the scheduling resource group you want to use from the drop-down list.

Call between Python resources

Yes. A Python resource can call another Python resource provided both reside in the same workspace.

Call custom functions

Yes. In addition to using the test function via the DataFrame map method, PyODPS supports calling custom functions to import third-party packages. For details, see Reference a third-party package in a PyODPS node.

PyODPS 3 Pickle error

If your code contains special characters, compress the code into a ZIP file before uploading it. Then, unzip and use the code within your script.

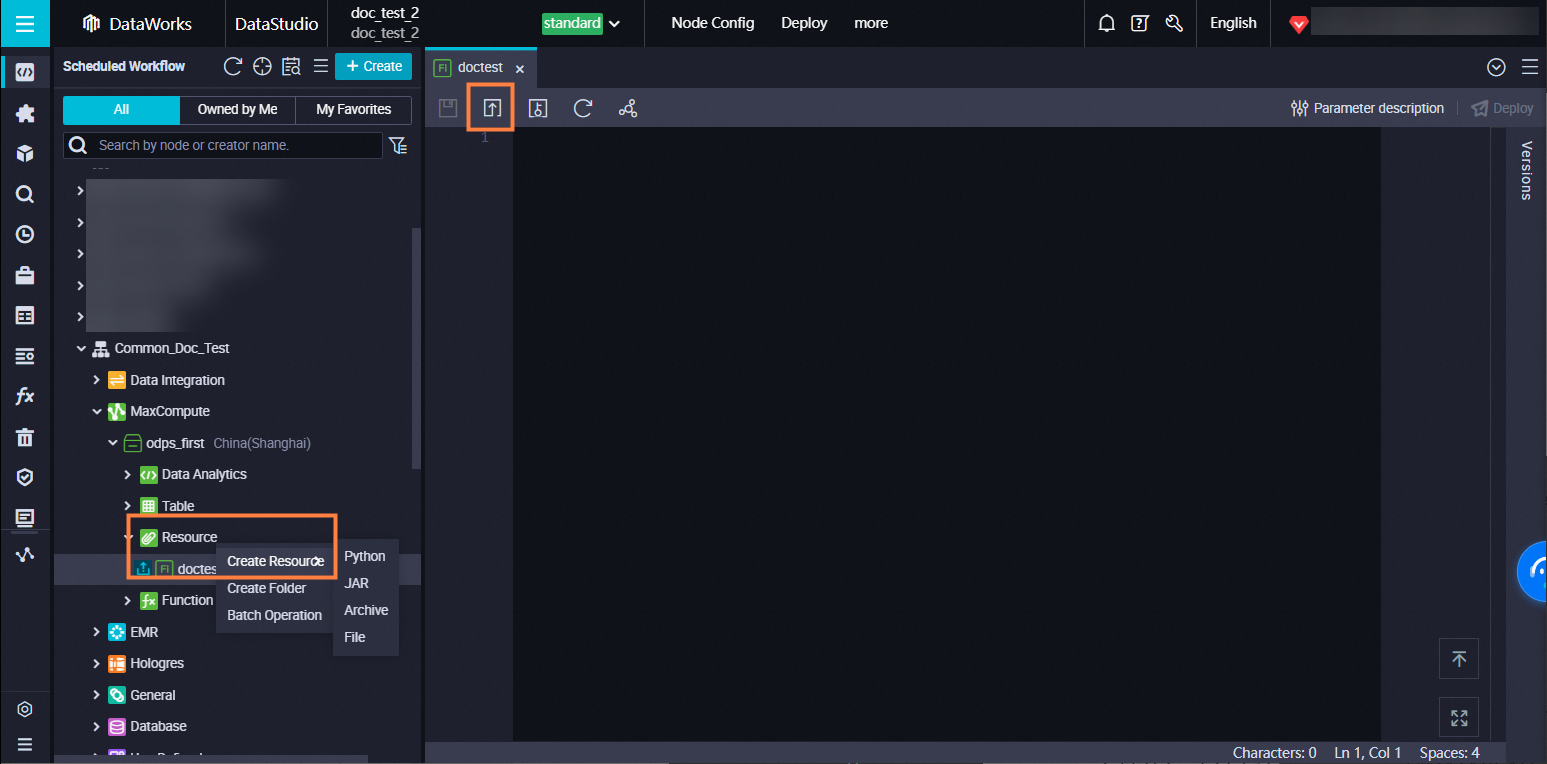

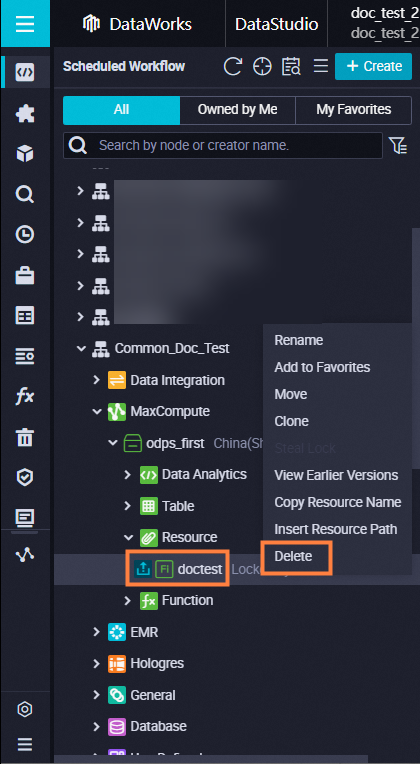

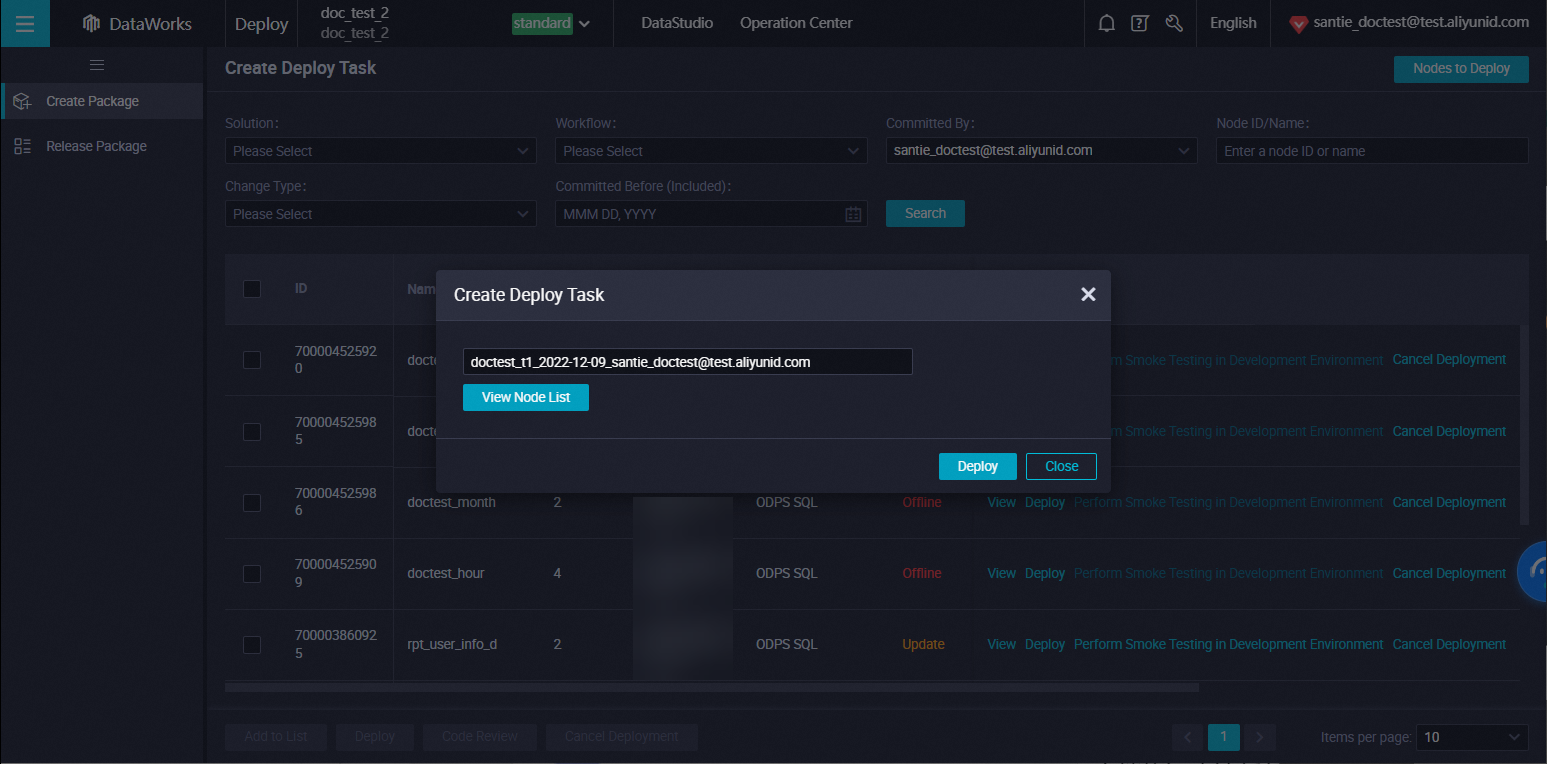
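The unzip step of this workaround can be sketched as follows. This is a minimal example using only the Python standard library, and it assumes the ZIP archive is already readable from the node's working directory. The function name, the `unzipped_code` directory, and the module name passed in are hypothetical.

```python
import importlib
import sys
import zipfile
from pathlib import Path


def load_module_from_zip(zip_path, module_name, extract_dir="unzipped_code"):
    """Extract a trusted ZIP archive and import one module from its contents."""
    target = Path(extract_dir).resolve()
    with zipfile.ZipFile(zip_path) as archive:
        # extractall creates the target directory if it does not exist.
        # Only use it on archives you built yourself.
        archive.extractall(target)
    # Make the extracted files importable.
    if str(target) not in sys.path:
        sys.path.insert(0, str(target))
    importlib.invalidate_caches()
    return importlib.import_module(module_name)
```

For example, if the archive contains a file named `my_code.py` (a made-up name), `load_module_from_zip("my_code.zip", "my_code")` returns the imported module so its functions can be called from the script.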

Delete MaxCompute resources

To delete a resource in Basic Mode, simply right-click the resource and select Delete. In Standard Mode, you must delete the resource in the development environment first, and then delete it in the production environment. The following example demonstrates how to delete a resource in the production environment.

In Standard Mode, deleting a resource in DataStudio only removes it from the development environment. You must publish the deletion operation to the production environment to remove the resource from production.

Delete the resource in the development environment. In the workflow, go to , right-click the resource, and select Delete. Click Confirm.

Delete the resource in the production environment. Go to deploy tasks in DataStudio. Set the change type filter to offline, locate the deletion record, and click Deploy in the Actions column.

Once published, the resource is removed from the production environment.


Spark-submit failure with Kerberos

Error details:

`Class com.aliyun.datalake.metastore.hive2.DlfMetaStoreClientFactory not found`

Cause: After Kerberos is enabled in the EMR cluster, the Driver classpath does not automatically include JAR files from the specified directory when running in YARN-Cluster mode.

Solution: Manually specify DLF-related packages.

Add the `--jars` parameter when submitting a task using `spark-submit` in YARN-Cluster mode. You must include the JAR packages required by your program as well as all JAR packages located in `/opt/apps/METASTORE/metastore-current/hive2`.

To manually specify DLF-related packages in EMR Spark Node Yarn Cluster mode, refer to the following code.

Important: In YARN-Cluster mode, all dependencies in the `--jars` parameter must be separated by commas (,). Specifying directories is not supported.

`spark-submit --deploy-mode cluster --class org.apache.spark.examples.SparkPi --master yarn --jars /opt/apps/METASTORE/metastore-current/hive2/aliyun-java-sdk-dlf-shaded-0.2.9.jar,/opt/apps/METASTORE/metastore-current/hive2/metastore-client-common-0.2.22.jar,/opt/apps/METASTORE/metastore-current/hive2/metastore-client-hive2-0.2.22.jar,/opt/apps/METASTORE/metastore-current/hive2/metastore-client-hive-common-0.2.22.jar,/opt/apps/METASTORE/metastore-current/hive2/shims-package-0.2.22.jar /opt/apps/SPARK3/spark3-current/examples/jars/spark-examples_2.12-3.4.2.jar`

When specifying DLF packages, configure an AccessKey pair with permissions to access DLF and OSS to prevent STS authentication errors. You may encounter one of the following error messages:

`Process Output>>> java.io.IOException: Response{protocol=http/1.1, code=403, message=Forbidden, url=http://xxx.xxx.xxx.xxx/latest/meta-data/Ram/security-credentials/}`

`at com.aliyun.datalake.metastore.common.STSHelper.getEMRSTSToken(STSHelper.java:82)`

Task level: Add the following parameters in the advanced configuration section on the right side of the node. (To apply this globally, configure the Spark global parameters in the cluster service configuration.)

`"spark.hadoop.dlf.catalog.akMode":"MANUAL",`

`"spark.hadoop.dlf.catalog.accessKeyId":"xxxxxxx",`

`"spark.hadoop.dlf.catalog.accessKeySecret":"xxxxxxxxx"`

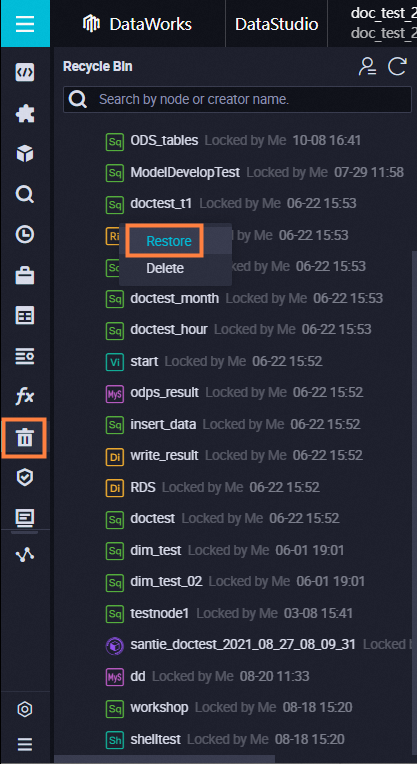
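Because `--jars` accepts only a comma-separated list of files and not a directory, one way to avoid typing every path by hand is to expand the directory programmatically. The sketch below is a minimal, hypothetical helper (the function names are not part of Spark or DataWorks). It lists the `.jar` files in a directory and assembles a `spark-submit` command line using only the options that appear in this section.

```python
import shlex
from pathlib import Path


def build_jars_value(jar_dir, extra_jars=()):
    """Expand a directory into the comma-separated file list that --jars expects."""
    jars = sorted(str(path) for path in Path(jar_dir).glob("*.jar"))
    jars.extend(str(jar) for jar in extra_jars)
    return ",".join(jars)


def build_spark_submit(main_class, app_jar, jar_dir, extra_jars=()):
    """Assemble a YARN-Cluster spark-submit command as a single shell string."""
    args = [
        "spark-submit",
        "--deploy-mode", "cluster",
        "--master", "yarn",
        "--class", main_class,
        "--jars", build_jars_value(jar_dir, extra_jars),
        app_jar,
    ]
    # shlex.join quotes any argument that contains shell-special characters.
    return shlex.join(args)
```

Calling `build_spark_submit("org.apache.spark.examples.SparkPi", "<application JAR>", "/opt/apps/METASTORE/metastore-current/hive2")` on a cluster node would produce a command of the same shape as the one shown in this section. Pass the JAR packages required by your own program through `extra_jars`.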

Restore a deleted node

You can restore deleted nodes from the Recycle Bin.

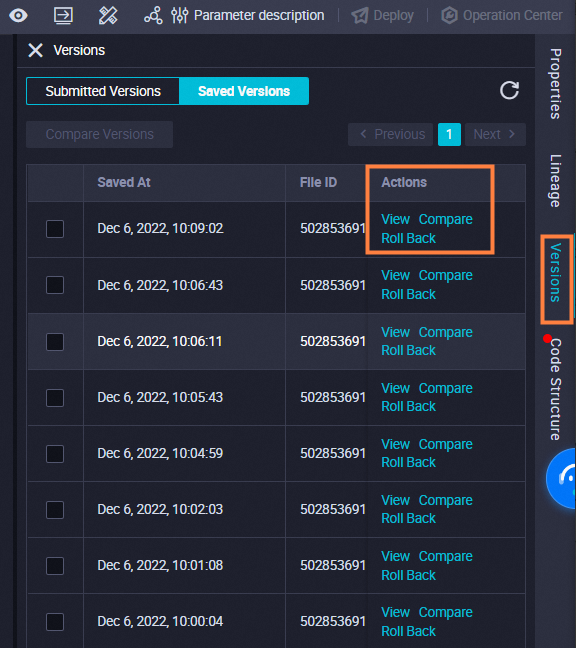

View node version

Open the node configuration tab to view the node version.

Versions are generated only after the node is submitted.

Clone a workflow

You can use the Node Group feature. See Use node groups.

Export workspace node code

You can use the Migration Assistant feature. See Migration Assistant.

View node submission status

To view the submission status, go to DataStudio > workflow and expand the target workflow. If the icon appears to the left of the node name, the node has been submitted. If the icon is absent, the node has not been submitted.


Batch configure scheduling

DataWorks does not support batch scheduling configuration for workflows or nodes. You cannot configure scheduling parameters for multiple nodes at once; you must configure them for each node individually.

Instance impact of deleted nodes

The scheduling system generates instances based on the configured schedule. Deleting a task does not delete its historical instances. However, if an instance of a deleted task is triggered to run, it will fail because the associated code cannot be found.

Overwrite logic for modified nodes

Submitting a modified node does not overwrite previously generated instances. Pending instances will run using the latest code. However, if scheduling parameters are changed, you must regenerate the instance for the changes to take effect.

Visually create a table

You can create tables visually in DataStudio, Table Management, or within the table folder of a workflow.

Add fields to production table

The workspace owner can add fields to a production table on the Table Management page and submit the changes to the production environment.

RAM users must hold the O&M or Project Administrator role to add fields to a production table on the Table Management page and submit the changes to the production environment.

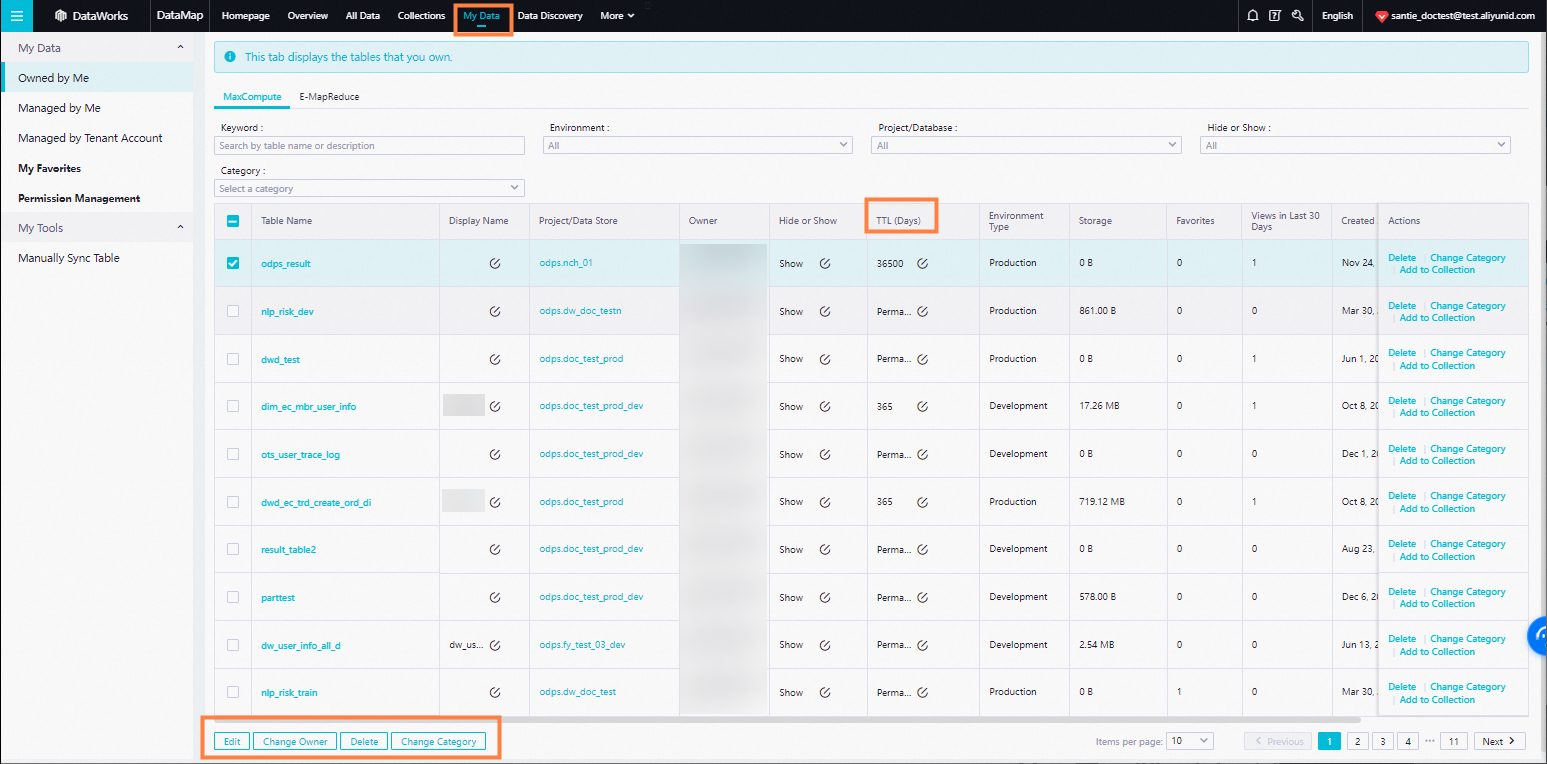

Delete a table

Delete a development table: Delete the table directly in DataStudio.

Delete a production table:

Delete the table from My Data in Data Map.

Alternatively, create an ODPS SQL node and execute a `DROP` statement. For details on creating an ODPS SQL node, see Develop an ODPS SQL task. For the syntax used to delete a table, see Table operations.

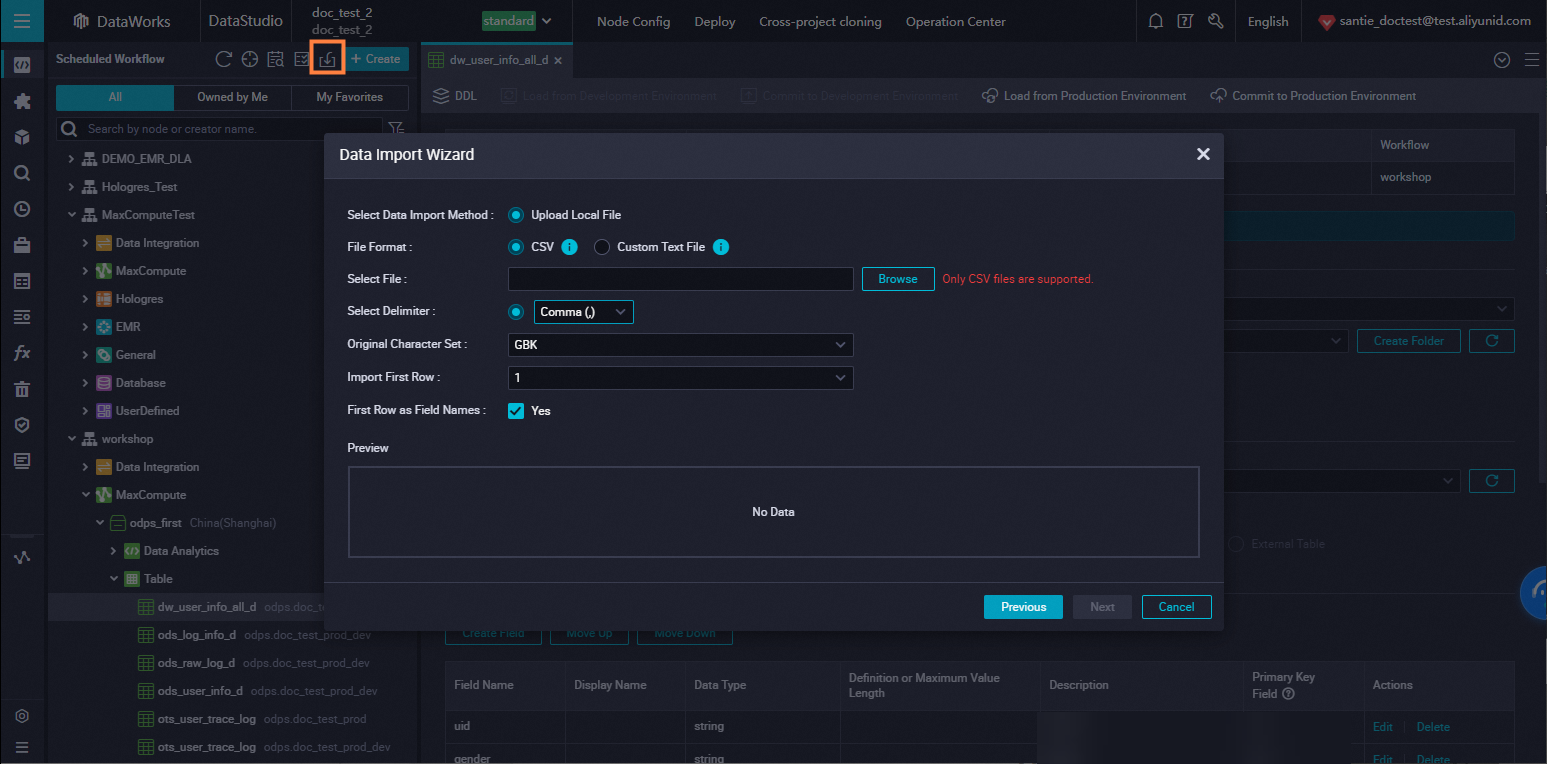

Upload local data to MaxCompute

In DataStudio, use the Import Table feature to upload local data.

EMR table creation exception

Possible cause:

The ECS cluster hosting EMR lacks the required security group rules. Add the following policies:

Authorization Policy: Allow

Protocol Type: Custom TCP

Port Range: 8898/8898

Authorization Object: 100.104.0.0/16

Solution:

Check the security group configuration of the ECS cluster where EMR is located and add the security group policies listed above.

Access production data in dev

In Standard Mode, use the format project_name.table_name to query production data within DataStudio.

If you upgraded from Basic Mode to Standard Mode, you must first apply for Producer role permissions before using project_name.table_name. For details on applying for permissions, please refer to Request permissions on tables.

Obtain historical execution logs

In DataStudio, you can view historical logs in the Operation History pane on the left side.

Data development history retention

The execution history in DataStudio is retained for 3 days.

For the retention period of logs and instances in the Production O&M Center, please refer to: How long are logs and instances retained?

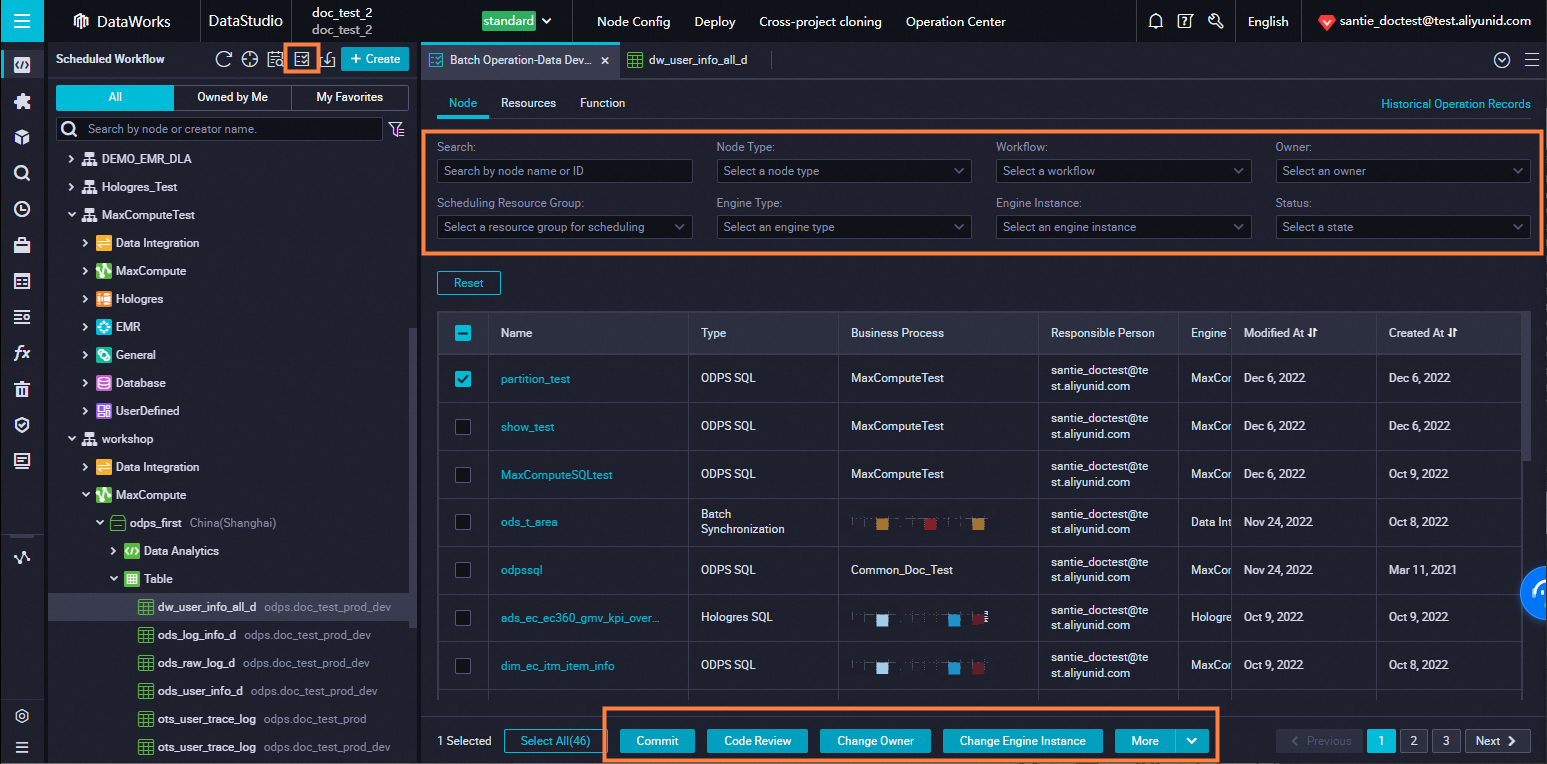

Batch modify attributes

Use the Batch Operation tool in the left-side navigation pane of DataStudio to modify nodes, resources, and functions in bulk. After modification, submit and publish the changes to the production environment.

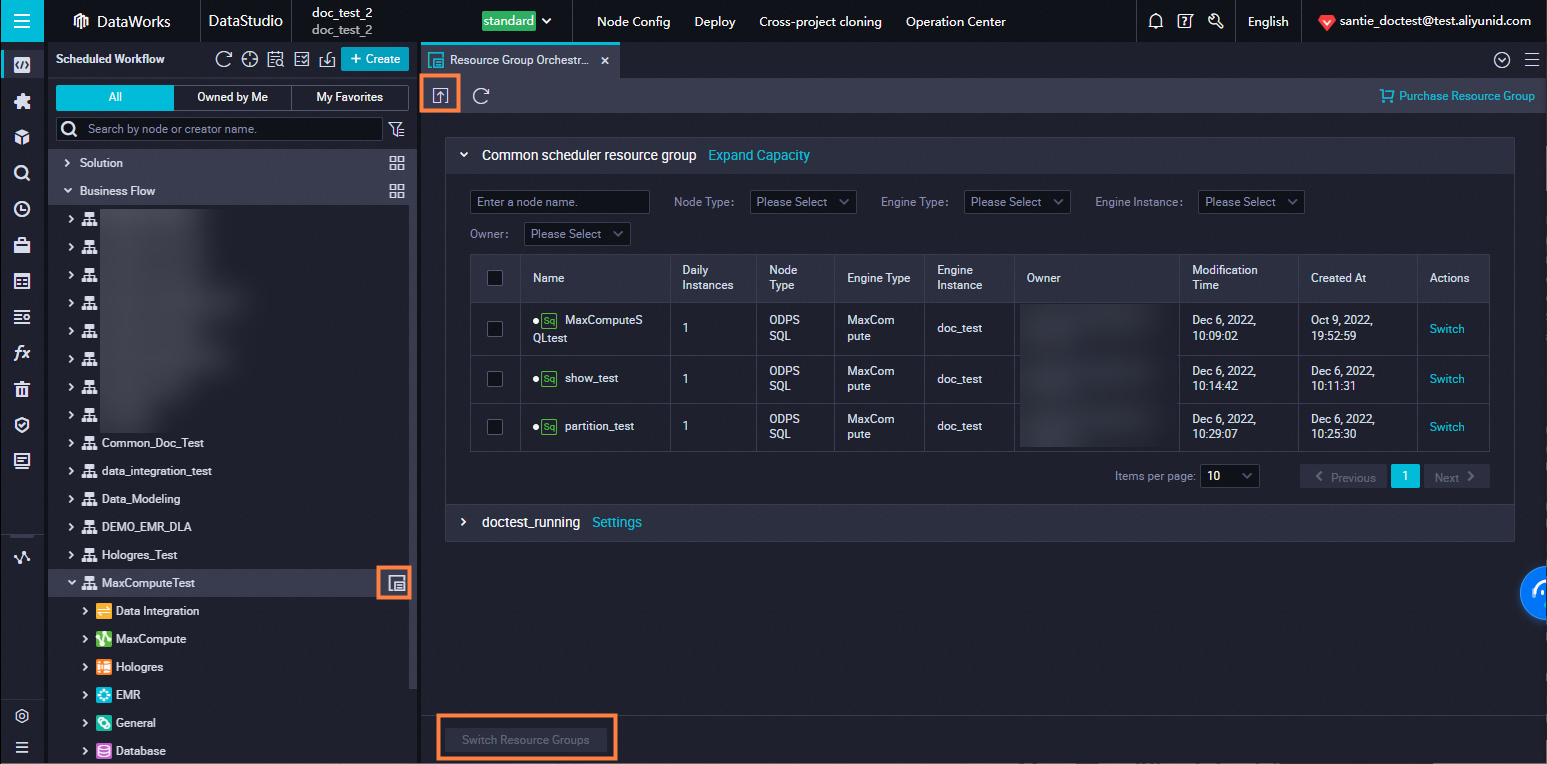

Batch modify scheduling resource groups

Click Resource Group Orchestration next to the workflow name in DataStudio to batch modify scheduling resource groups. Submit and publish the changes to the production environment.

Power BI connection errors

MaxCompute does not support direct connections to Power BI. We recommend using Interactive Analysis (Hologres) instead. For details, please refer to Endpoints.

OpenAPI access forbidden error

OpenAPI availability depends on the DataWorks edition. You must activate DataWorks Enterprise Edition or Flagship Edition. For details, please refer to: Overview

Obtain Python SDK examples

Click Debug on the API page to view Python SDK examples.

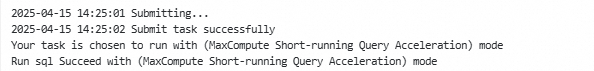

Disable ODPS acceleration mode

To obtain an instance ID, you must disable the acceleration mode.

DataWorks allows downloading up to 10,000 records. To download more records using Tunnel, an instance ID is required.

Add set odps.mcqa.disable=true; in the ODPS SQL node editor and execute it alongside the SELECT statement.
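The two pieces of text involved in this flow can be sketched as follows: the SELECT statement prefixed with the flag, and a Tunnel command that downloads the result of the resulting instance. The helper names are hypothetical. The `tunnel download instance://...` form is an assumption based on the documented MaxCompute client syntax, so verify it against Use SQLTask and Tunnel to export a large amount of data before relying on it.

```python
def with_acceleration_disabled(select_sql):
    """Prefix a SELECT with the session flag so that the run yields an instance ID."""
    statement = select_sql.strip().rstrip(";")
    return "set odps.mcqa.disable=true;\n" + statement + ";"


def tunnel_download_command(instance_id, local_path, project=None):
    """Build a MaxCompute client command that downloads an instance's result.

    Assumed syntax: tunnel download instance://[project/]instance_id path
    """
    target = f"{project}/{instance_id}" if project else instance_id
    return f"tunnel download instance://{target} {local_path};"
```

Run the text returned by `with_acceleration_disabled` in the ODPS SQL node, take the instance ID from the run log, and pass it to `tunnel_download_command` to get the command to execute in the MaxCompute client.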

Exception: [202:ERROR_GROUP_NOT_ENABLE]:group is not available

The error Job Submit Failed! submit job failed directly! Caused by: execute task failed, exception: [202:ERROR_GROUP_NOT_ENABLE]:group is not available occurs during task execution.

Possible Cause: The bound resource group is unavailable.

Solution: Log in to the DataWorks console and go to Resource Groups. Ensure the resource group status is Running. If it is not, restart the resource group or switch to a different one.