AnalyticDB Ray is a fully managed Ray service built on AnalyticDB for MySQL. Running distributed AI workloads in production requires handling cluster operations, resource scheduling, and system stability — complexity that open source Ray leaves to you. AnalyticDB Ray manages these concerns, so you can focus on building AI applications at scale.

Use cases

| Use case | Description |
|---|---|
| Multimodal processing | Process large volumes of mixed media (images, video, audio, and text) in parallel across distributed nodes. |
| Search and recommendation | Run real-time inference and ranking pipelines at scale for search relevance and personalized recommendations. |
| Financial risk control | Execute high-throughput, latency-sensitive risk scoring and fraud detection jobs across massive datasets. |
| Embodied intelligence | One of the validated scenarios for AnalyticDB Ray deployments. |

How it works

Each Ray cluster resource group contains two types of nodes:

Head node — manages Ray metadata, runs the Global Control Store (GCS) service, and schedules tasks. The head node does not execute tasks.

Worker nodes — execute tasks and actors. Worker nodes scale automatically based on job demand.

When you submit a job, AnalyticDB Ray schedules tasks to available worker nodes and stores intermediate data in Ray's distributed object store. If the head node restarts, a Redis-based disaster recovery mechanism recovers the cluster state, actors, and tasks automatically.
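As a rough illustration of the task and actor model described here, the following sketch runs a few stateless tasks and one stateful actor. It uses only the standard Ray Python API (`ray.init`, `ray.remote`, `ray.get`); the names `square_plain`, `Counter`, and `run_demo` are illustrative and do not come from the AnalyticDB Ray documentation.

```python
def square_plain(x):
    """Plain Python function; wrapped as a Ray task inside run_demo()."""
    return x * x


def run_demo(n=4):
    """Run n square tasks and one actor, then return (squares, running total)."""
    import ray  # imported lazily so this module loads even without Ray installed

    # With no explicit address, ray.init() picks up RAY_ADDRESS when it is set;
    # otherwise it starts a local Ray instance.
    ray.init()

    # Wrap the plain function as a remote task that workers can execute.
    square = ray.remote(square_plain)

    @ray.remote
    class Counter:
        """Stateful actor that keeps a running total on one worker."""

        def __init__(self):
            self.total = 0

        def add(self, value):
            self.total += value
            return self.total

    # Each .remote() call returns an object reference immediately; the results
    # live in the distributed object store until ray.get() fetches them.
    refs = [square.remote(i) for i in range(n)]
    squares = ray.get(refs)

    counter = Counter.remote()
    totals = ray.get([counter.add.remote(v) for v in squares])

    ray.shutdown()
    return squares, totals[-1]
```

Saved as a script that calls `run_demo()`, this could be submitted to the cluster as a job. In that case the tasks and the actor run on worker nodes rather than on the head node.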

Prerequisites

Before you begin, ensure that you have:

An AnalyticDB for MySQL cluster (Enterprise Edition, Basic Edition, or Data Lakehouse Edition)

Create a Ray cluster resource group

Log on to the AnalyticDB for MySQL console. In the upper-left corner, select a region. In the left-side navigation pane, click Clusters. Find the cluster and click the cluster ID.

In the left-side navigation pane, choose Cluster Management > Resource Management. Click the Resource Groups tab. In the upper-right corner, click Create Resource Group.

In the Create Resource Group panel, enter a resource group name, set Job Type to AI, and configure the following parameters.

| Parameter | Description |
|---|---|
| Deployment Mode | Select RayCluster. |
| Head Resource Specifications | The head node manages Ray metadata, runs GCS, and schedules tasks. Options: small, m.xlarge, m.2xlarge. CPU core counts match Spark resource specifications. For details, see Spark resource specifications. Select specifications based on the overall scale of your Ray cluster, because the head node handles all scheduling. |
| Worker Group Name | A name for this worker group. One resource group can contain multiple worker groups with different names. |
| Worker Resource Type | CPU: for daily computing, multitasking, or complex logic. GPU: for large-scale data parallel processing, machine learning, or deep learning training. |
| Worker Resource Specifications | CPU: small, m.xlarge, m.2xlarge (same core counts as Spark; see Spark resource specifications). GPU: submit a ticket, because GPU specifications depend on available models and inventory. |
| Worker Disk Storage | Disk space for Ray logs, temporary data, and distributed object store overflow. Unit: GB. Range: 30–2000. Default: 100. Disks are for temporary storage only; do not rely on them for persistent data. |
| Minimum Workers / Maximum Workers | The minimum number of worker nodes in this group (minimum: 1) and the maximum (maximum: 8). If minimum and maximum differ, AnalyticDB Ray scales worker count automatically based on current job load. When multiple worker groups exist, AnalyticDB Ray distributes jobs across groups to avoid overloading or underutilizing any single group. |
| Distribution Unit (GPU workers only) | The number of GPUs allocated to each worker node. Example: 1/3. |

Click OK.
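The documented limits for a worker group (disk storage of 30 to 2000 GB, at least 1 worker, at most 8) can be checked before you fill in the console form. The sketch below is a minimal local check. `WorkerGroupSpec` and `validate_worker_group` are hypothetical names, not part of any AnalyticDB SDK, and the rule that the minimum cannot exceed the maximum is an assumption rather than a documented constraint.

```python
from dataclasses import dataclass


@dataclass
class WorkerGroupSpec:
    """Hypothetical container mirroring the console fields; not an SDK type."""
    name: str
    resource_type: str      # "CPU" or "GPU"
    disk_gb: int = 100      # documented default
    min_workers: int = 1
    max_workers: int = 1


def validate_worker_group(spec):
    """Return a list of problems; an empty list means the spec fits the limits."""
    problems = []
    if not spec.name:
        problems.append("worker group name is required")
    if spec.resource_type not in ("CPU", "GPU"):
        problems.append("resource type must be CPU or GPU")
    if not 30 <= spec.disk_gb <= 2000:
        problems.append("disk storage must be between 30 and 2000 GB")
    if spec.min_workers < 1:
        problems.append("minimum workers must be at least 1")
    if spec.max_workers > 8:
        problems.append("maximum workers must be at most 8")
    # Assumption: the console rejects a minimum larger than the maximum.
    if spec.min_workers > spec.max_workers:
        problems.append("minimum workers cannot exceed maximum workers")
    return problems
```

For example, `validate_worker_group(WorkerGroupSpec("cpu-group", "CPU", disk_gb=10, min_workers=1, max_workers=9))` reports both the disk size and the worker maximum as out of range.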

Connect to and use the Ray service

Step 1: Get the endpoint URLs

In the left-side navigation pane, choose Cluster Management > Resource Management. Click the Resource Groups tab.

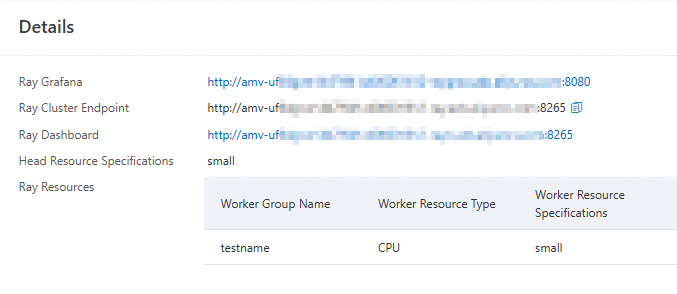

Find the resource group and choose More > Details in the Actions column.

| URL | Description |
|---|---|
| Ray Grafana | Grafana visualization page for monitoring the cluster. |
| Ray Cluster Endpoint | Internal endpoint URL for connecting to the cluster from within the VPC. |
| Ray Dashboard | Public dashboard URL (port 8265). View cluster status and job progress. |

Step 2: Submit jobs

Prerequisites

Python 3.7 or later is installed.

Choose a submission method

Two methods are available. Use the Cloud Task Launcher (CTL) method unless you have a specific reason to run the driver locally.

| Method | How it works | Considerations |
|---|---|---|
| Cloud Task Launcher (CTL) (recommended) | Packages and uploads your script to the Ray cluster. The driver runs in the cluster and consumes cluster resources. | Simpler setup; no version-matching requirements. |
| ray.init | Connects a locally running driver to the Ray cluster. The driver runs on your local machine and does not consume cluster resources. | Local Ray and Python versions must match the Ray cluster version. Update your local environment when the cluster version changes. |

Submit jobs using CTL

Install Ray:

`pip3 install "ray[default]"`

(Optional) Set the RAY_ADDRESS environment variable to avoid specifying the URL in every command:

`export RAY_ADDRESS="RAY_URL"`

Replace RAY_URL with the URL obtained in Step 1 (the Ray Dashboard URL, port 8265).

Submit a job:

If RAY_ADDRESS is set:

`ray job submit --working-dir <working-directory> -- python <script-file>`

If RAY_ADDRESS is not set:

`ray job submit --address <ray-url> --working-dir <working-directory> -- python <script-file>`

| Placeholder | Description |
|---|---|
| `<ray-url>` | The Ray URL from Step 1. Example: http://amv-uf64gwe14****-rayo.ads.aliyuncs.com:8265 |
| `<working-directory>` | Directory containing your script and all its dependencies. Example: /root/Ray |
| `<script-file>` | The Python script to run. Example: scripts.py |

Important: The system uploads everything in `<working-directory>` to the head node. Keep the directory minimal, because large directories can cause upload failures. All dependency scripts must be in this directory.

Example:

`ray job submit --address http://amv-uf64gwe14****-rayo.ads.aliyuncs.com:8265 --working-dir /root/Ray -- python scripts.py`

Check job status:

Run `ray job list` to list all jobs and their statuses.

Or view the Ray Dashboard: in the Resource Groups tab, find the resource group, choose More > Details, and click the Ray Dashboard URL.
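If you script submissions, for example from a CI job, it can help to build the `ray job submit` command line programmatically. The sketch below only builds and prints the argument list, using the `--address` and `--working-dir` options shown in this section. The helper names `build_submit_command` and `render_command` are illustrative.

```python
import shlex


def build_submit_command(working_dir, script, address=None):
    """Build the argument list for `ray job submit`.

    When address is None, the command relies on the RAY_ADDRESS
    environment variable being set in the calling shell.
    """
    argv = ["ray", "job", "submit"]
    if address is not None:
        argv += ["--address", address]
    # Everything after "--" is the entrypoint that runs in the cluster.
    argv += ["--working-dir", working_dir, "--", "python", script]
    return argv


def render_command(argv):
    """Render the argument list as a shell-safe string for logs or copy-paste."""
    return " ".join(shlex.quote(arg) for arg in argv)
```

To run the command, pass the list to `subprocess.run(argv, check=True)`. This avoids shell quoting problems when the working directory contains spaces.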

Submit jobs using ray.init

Install Ray:

`pip3 install ray`

Convert the Ray Dashboard URL to a Ray protocol URL. The dashboard URL uses port 8265. The ray.init() connection requires port 10001 and the ray:// protocol.

| Dashboard URL (from Step 1) | ray.init URL |
|---|---|
| http://amv-uf64gwe14****-rayo.ads.aliyuncs.com:8265 | ray://amv-uf64gwe14****-rayo.ads.aliyuncs.com:10001 |

Connect and run your script:

Option A: set the RAY_ADDRESS environment variable, then run the script directly:

`export RAY_ADDRESS="ray://<host>:10001"`

`python scripts.py`

Option B: specify the address inside the script, then run it:

`ray.init(address="RAY_URL")`

`python scripts.py`

Replace RAY_URL with the converted ray:// URL.

Important: If the Ray URL is incorrect, ray.init() silently starts a local Ray cluster instead of connecting to the remote cluster. Check the output logs to confirm you are connected to the correct cluster.
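Because a wrong address can go unnoticed, one option is to derive the ray:// URL from the dashboard URL in code and refuse to call `ray.init()` unless the address has the expected shape. The sketch below uses only the port numbers stated in this section (8265 and 10001). The helper names are illustrative. The shape check cannot confirm that the host is the right cluster, so checking the logs is still necessary.

```python
from urllib.parse import urlsplit

DASHBOARD_PORT = 8265   # dashboard port stated in this section
CLIENT_PORT = 10001     # ray.init() port stated in this section


def dashboard_to_client_url(dashboard_url):
    """Convert http://<host>:8265 into ray://<host>:10001."""
    parts = urlsplit(dashboard_url)
    if parts.scheme not in ("http", "https") or not parts.hostname:
        raise ValueError(f"not a dashboard URL: {dashboard_url!r}")
    if parts.port != DASHBOARD_PORT:
        raise ValueError(f"expected port {DASHBOARD_PORT} in {dashboard_url!r}")
    return f"ray://{parts.hostname}:{CLIENT_PORT}"


def require_client_url(address):
    """Return address unchanged if it looks like ray://<host>:<port>, else raise."""
    parts = urlsplit(address)
    if parts.scheme != "ray" or not parts.hostname or parts.port is None:
        raise ValueError(f"expected ray://<host>:<port>, got {address!r}")
    return address


def connect(address):
    """Validate the address first, then connect with ray.init()."""
    import ray  # imported lazily so the helpers above work without Ray installed

    return ray.init(address=require_client_url(address))
```

With these helpers, a leftover placeholder such as `"RAY_URL"` or an empty string raises a `ValueError` before `ray.init()` is called.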

Billing

Charges begin when you create a Ray cluster resource group. You are billed for:

| Billing item | Charged by |
|---|---|
| Worker Disk Storage | Storage size specified in the Worker Disk Storage parameter |
| CPU workers | Used AnalyticDB Compute Unit (ACU) elastic resources |
| GPU workers | GPU specifications and quantity |

Usage notes

Worker node restart and deletion

Modifying worker configurations restarts or deletes worker nodes. Schedule these changes during off-peak hours and avoid running jobs on nodes that are about to restart.

When a worker node restarts or is deleted:

Drivers, actors, and tasks running on the affected node fail. Ray automatically redeploys actors and tasks.

Data in Ray's distributed object store is lost. Jobs that depend on data from the restarted node also fail.

Resource group changes

| Change | Effect |
|---|---|
| Delete a resource group | Running tasks are interrupted immediately. |
| Delete a worker group | All worker nodes in the group are deleted. See Worker node restart and deletion. |
| Set Maximum Workers to a value lower than the previous Minimum Workers | Worker nodes are deleted. See Worker node restart and deletion. |
| Change head resource specifications or worker resource type | Head node or worker nodes restart. See Worker node restart and deletion. |

Automatic scaling

Ray clusters scale based on logical resource requirements, not physical utilization. Scaling may be triggered even when physical resource usage is low.

Some third-party applications create as many tasks as possible to saturate available resources. When automatic scaling is enabled, this can quickly scale the cluster to its maximum size. Understand the task-creation behavior of any third-party program before enabling automatic scaling.
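To see why logical demand alone can drive the cluster to its maximum size, consider the toy model below. It is a deliberately simplified estimate, not Ray's actual autoscaling algorithm. The function name `workers_needed` and the figure of 4 CPUs per worker are assumptions made for illustration.

```python
import math


def workers_needed(cpu_requests, cpus_per_worker, min_workers=1, max_workers=8):
    """Estimate worker count from declared (logical) CPU requests only.

    Physical CPU utilization never enters the calculation: a task that
    declares 1 CPU counts as 1 CPU even if it spends its time idle.
    """
    if cpus_per_worker <= 0:
        raise ValueError("cpus_per_worker must be positive")
    demand = sum(cpu_requests)
    wanted = math.ceil(demand / cpus_per_worker)
    # Clamp to the configured Minimum Workers / Maximum Workers.
    return max(min_workers, min(max_workers, wanted))
```

Under this model, 20 pending tasks that each declare 1 CPU ask for 5 workers of 4 CPUs each. A program that queues 1,000 such tasks pins the group at its maximum of 8, regardless of how busy the existing nodes are.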