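Classic Load Balancer (CLB) is deployed in clusters and provides load balancing services at Layer 4 (TCP and UDP) and Layer 7 (HTTP and HTTPS). CLB synchronizes sessions and eliminates single points of failure (SPOFs), which improves redundancy and keeps services stable.

CLB uses CLB clusters to forward client requests to backend servers and receives the responses from the backend servers over the internal network.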

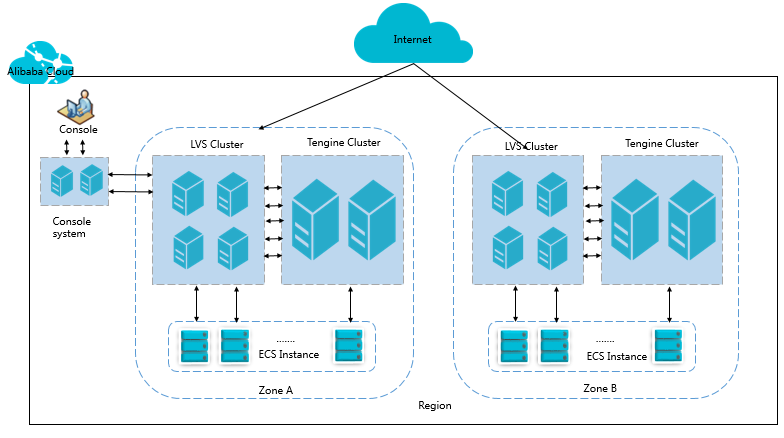

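Basic architecture

CLB provides load balancing services at Layer 4 and Layer 7.

At Layer 4, CLB combines the open source Linux Virtual Server (LVS) with Keepalived to balance loads, with customized optimizations to meet cloud computing requirements.

At Layer 7, CLB uses Tengine to balance loads. Tengine is a web server project launched by Taobao. It is based on NGINX and provides a wide range of advanced features optimized for high-traffic websites.

As shown in the following figure, in each region Layer 4 CLB runs on an LVS cluster that consists of multiple physical servers. This cluster deployment model improves the availability, stability, and scalability of CLB, even when exceptions occur.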

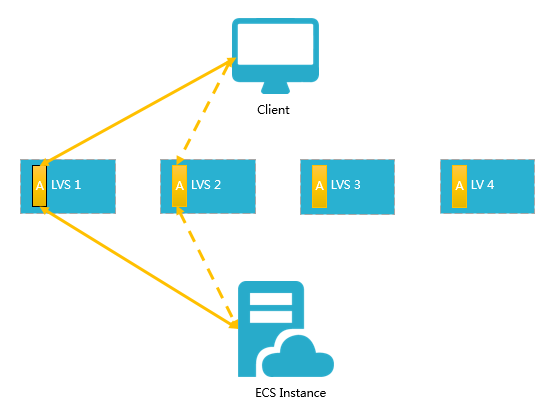

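Each server in the LVS cluster uses multicast packets to synchronize sessions across the cluster. For example, as shown in the following figure, after the client sends three packets to the server, session A established on LVS1 is synchronized to the other servers. Solid lines indicate active connections. Dashed lines indicate that requests are forwarded to another healthy server (LVS2 in this case) if LVS1 fails or is under maintenance. This lets you perform hot upgrades, troubleshoot servers, and take clusters offline for maintenance without affecting the services that your applications provide.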

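If the three-way handshake fails and no connection is established, or if a connection is established but its session is not synchronized during a hot upgrade, the service may be interrupted. In this case, the client must re-initiate the connection request.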
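The failover behavior described above can be illustrated with a small simulation. This is only a minimal sketch, not how LVS is implemented: the `LvsNode` and `LvsCluster` classes are hypothetical names, and multicast synchronization is reduced to copying a session ID to every node.

```python
from dataclasses import dataclass, field


@dataclass
class LvsNode:
    """Hypothetical model of one server in the LVS cluster."""
    name: str
    healthy: bool = True
    sessions: set = field(default_factory=set)


class LvsCluster:
    """Hypothetical cluster that replicates sessions to all nodes."""

    def __init__(self, names):
        self.nodes = [LvsNode(n) for n in names]

    def establish(self, node_name, session_id, synced=True):
        """Record a session on one node and, if synced, on all of its peers."""
        for node in self.nodes:
            if node.name == node_name or synced:
                node.sessions.add(session_id)

    def forward(self, session_id):
        """Return the first healthy node that knows the session, or None."""
        for node in self.nodes:
            if node.healthy and session_id in node.sessions:
                return node.name
        return None  # no healthy node has the session: the client must reconnect


cluster = LvsCluster(["LVS1", "LVS2", "LVS3"])
cluster.establish("LVS1", "A")                # session A is synchronized
cluster.establish("LVS1", "B", synced=False)  # session B is not yet synchronized
cluster.nodes[0].healthy = False              # LVS1 fails or enters maintenance

print(cluster.forward("A"))  # LVS2
print(cluster.forward("B"))  # None
```

Session A survives the loss of LVS1 because it was replicated, while session B corresponds to the unsynchronized case in which the client has to reconnect.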

Inbound traffic flow

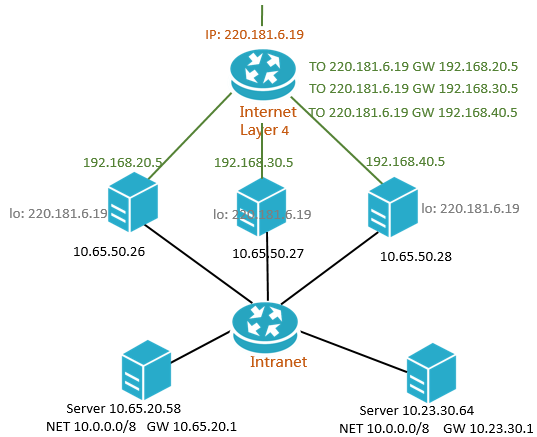

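CLB distributes inbound traffic based on the forwarding rules that you configure in the CLB console or by calling API operations. The following figure shows the inbound traffic flow.

Figure 1. Inbound traffic flow

Inbound traffic that uses TCP, UDP, HTTP, or HTTPS must be forwarded through the Layer 4 cluster.

Large volumes of inbound traffic are distributed evenly across all nodes in the Layer 4 cluster, and the nodes synchronize sessions to ensure high availability.

If a CLB instance uses a Layer 4 listener based on UDP or TCP, the nodes in the Layer 4 cluster distribute requests directly to the backend Elastic Compute Service (ECS) instances based on the forwarding rules configured for the CLB instance.

If a CLB instance uses a Layer 7 listener based on HTTP, the nodes in the Layer 4 cluster first distribute requests to the Layer 7 cluster. The nodes in the Layer 7 cluster then distribute the requests to the backend ECS instances based on the forwarding rules configured for the CLB instance.

If a CLB instance uses a Layer 7 listener based on HTTPS, requests are distributed in the same way as for an HTTP listener. The difference is that, before requests are distributed to the backend ECS instances, the system calls the key server to validate certificates and decrypt data packets.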
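The per-protocol paths described above can be summarized in a short function. This is an illustrative sketch only: `inbound_steps` and the step labels are hypothetical names chosen for this example, not part of any CLB API.

```python
from typing import List


def inbound_steps(listener_protocol: str) -> List[str]:
    """Return the steps a request passes through for a listener protocol."""
    protocol = listener_protocol.upper()
    if protocol not in ("TCP", "UDP", "HTTP", "HTTPS"):
        raise ValueError(f"unsupported listener protocol: {listener_protocol}")

    # All inbound traffic enters through the Layer 4 cluster.
    steps = ["layer-4 cluster"]
    if protocol in ("HTTP", "HTTPS"):
        # Layer 7 listeners add a hop through the Layer 7 cluster.
        steps.append("layer-7 cluster")
    if protocol == "HTTPS":
        # HTTPS additionally calls the key server before reaching the backend.
        steps.append("key server call (validate certificate, decrypt packets)")
    steps.append("backend ECS instance")
    return steps


for proto in ("TCP", "HTTP", "HTTPS"):
    print(proto, "->", " -> ".join(inbound_steps(proto)))
```

TCP and UDP yield two steps, HTTP three, and HTTPS four, which matches the order in which the text introduces the extra hops.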

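Outbound traffic flow

CLB and backend ECS instances communicate over the internal network.

If a backend ECS instance handles only traffic distributed by CLB, you do not need to purchase Internet bandwidth resources such as a public IP address, an Elastic IP Address (EIP), an Anycast EIP, or a NAT Gateway for the ECS instance.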

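Note: ECS instances that were created earlier are assigned public IP addresses directly.

You can run the ipconfig command to view the public IP address. If the ECS instance provides external services only through CLB, no traffic fees are charged for Internet traffic, even if traffic statistics are read on the elastic network interface (ENI). If a backend ECS instance provides external services directly or needs to access the Internet, you must configure or purchase a public IP address, an EIP, an Anycast EIP, or a NAT Gateway for the instance.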

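The following figure shows the outbound traffic flow.

Figure 2. Outbound traffic flow

The general principle of outbound traffic flow is that traffic leaves the way it came in.

Traffic that passes through a CLB instance is throttled or billed on the CLB instance. Internal communication between the CLB instance and backend ECS instances is not billed.

Traffic from an EIP or a NAT Gateway is billed, and you can throttle the traffic rate on the EIP or NAT Gateway. If public bandwidth resources are configured for an ECS instance, traffic from the ECS instance is billed, and you can throttle the traffic rate on the ECS instance.

CLB supports only responsive access to the Internet. A backend ECS instance can access the Internet only when it needs to respond to a request from the Internet. The request is forwarded to the backend ECS instance by the CLB instance. If a backend ECS instance needs to proactively access the Internet, you must associate an EIP with the ECS instance or use a NAT Gateway for it.

Public bandwidth resources configured for ECS instances, EIPs, Anycast EIPs, and NAT Gateways allow ECS instances to access the Internet or be accessed from the Internet, but these resources cannot distribute traffic or balance traffic loads.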
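The rule that traffic leaves the way it came in, together with the billing points listed above, can be written as a simple lookup. This is a sketch under stated assumptions: the entry-point keys and the `billing_point` function are hypothetical names for illustration and do not correspond to a real API.

```python
# Hypothetical entry-point keys mapped to the resource on which
# traffic is throttled or billed, as described in the text.
BILLING_POINT = {
    "clb": "CLB instance",
    "eip": "EIP",
    "nat_gateway": "NAT Gateway",
    "ecs_public_bandwidth": "ECS instance",
}


def billing_point(entry_point: str) -> str:
    """Return where outbound traffic is throttled or billed.

    Outbound traffic exits through the same resource it entered by,
    so the entry point alone determines the answer.
    """
    try:
        return BILLING_POINT[entry_point]
    except KeyError:
        raise ValueError(f"unknown entry point: {entry_point}") from None


print(billing_point("clb"))          # CLB instance
print(billing_point("nat_gateway"))  # NAT Gateway
```

Internal communication between a CLB instance and its backend ECS instances is deliberately absent from the table because it is not billed.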

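FAQ

Is the maximum bandwidth value that I set when purchasing a CLB instance equal to the sum of the maximum inbound bandwidth and the maximum outbound bandwidth?

No.

The maximum bandwidth value applies to inbound traffic and outbound traffic separately. Each direction can reach the specified maximum bandwidth value independently, without affecting the other.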
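A short check makes the difference from a summed limit concrete. This is a minimal sketch: `within_limit` is a hypothetical helper, and the Mbit/s figures are made-up example values.

```python
def within_limit(max_bandwidth_mbps: float,
                 inbound_mbps: float,
                 outbound_mbps: float) -> bool:
    """Check each direction against the same limit independently."""
    return (inbound_mbps <= max_bandwidth_mbps
            and outbound_mbps <= max_bandwidth_mbps)


# Both directions at the limit: allowed, although the sum is twice the limit.
print(within_limit(100, inbound_mbps=100, outbound_mbps=100))  # True

# One direction above the limit: not allowed, although the sum is only 130.
print(within_limit(100, inbound_mbps=120, outbound_mbps=10))   # False
```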

For more information, see "Bandwidth limits".