Alibaba Cloud has supported HTTP/2 since the CDN 6.0 service launched in 2016, and has seen a 68% improvement to access rates. In this article, we will cover four aspects of HTTP/2: history, features, debugging, and performance.

HTTP (HyperText Transfer Protocol) is the most widely used network protocol on the Internet. The purpose of HTTP is to provide a method for publishing and receiving HTML pages. Resources requested through HTTP or HTTPS are identified by URI (Uniform Resource Identifier).

Although HTTP/1.1 has been running stably for more than 10 years, HTTP/2 is the wave of the future, and all engineers should become familiar with it. This article takes a deep dive into the history, features, debugging, and performance of HTTP/2, as shared by Jinjiu, a security technical expert at Alibaba Cloud CDN.

HTTP/0.9, the earliest prototype, was published in 1991 and is very simple. It supports only the GET method, and supports neither MIME types nor HTTP headers.

HTTP/1.0, published in 1996, builds on HTTP/0.9 and adds a variety of methods, HTTP headers, and handling for multimedia objects.

In addition to the GET method, the POST and HEAD methods were also introduced, which enriched interactions between browsers and servers. Using this protocol, you can send contents in any format. The result is that the Internet can transfer not only text, but also images, videos, and binary files. This laid the foundation for the rapid development of the Internet.

The formats of HTTP requests and responses also changed. In addition to the data itself, every communication must include an HTTP header that describes relevant metadata.

Even though HTTP/1.0 was a revolutionary change from HTTP/0.9, it still has some disadvantages. Primarily, each TCP connection can carry only one request, and the connection is closed once the data has been sent. If you want to request other resources, you have to create a new connection. Some browsers worked around this with a non-standard Connection: keep-alive header, but this does not fully solve the problem. Because the header is not standardized, browser and server implementations may differ, so this solution is considered sub-optimal.

Officially published in 1999, HTTP/1.1 is the current mainstream HTTP protocol. It repairs the structural defects in previous HTTP design, makes semantics explicit, adds and deletes some features, and supports more complex Web applications.

After nearly 20 years of development, this version of the HTTP protocol is now very stable. Compared to HTTP/1.0, it adds notable new features such as the Host header, range requests, persistent connections by default, compressed and chunked transfer encoding, cache handling, and many more. These features are still widely used and relied upon by most software.

Although HTTP/1.1 is not as revolutionary a change as HTTP/1.0 to HTTP/0.9, it still had a lot of improvements. Current mainstream browsers are still using HTTP/1.1 by default to this day.

The SPDY (pronounced "speedy") protocol was developed by Google, mainly to solve the problem of low efficiency in HTTP/1.1. It was published in 2009 and discontinued in 2016. Since HTTP/2 had been standardized by the IETF and was being adopted by browsers, Google considered SPDY obsolete.

HTTP/2 is the latest HTTP protocol, which was published in May 2015. It is supported by mainstream browsers such as Chrome, IE11, Safari, and Firefox.

Note that HTTP/2 is not called HTTP/2.0 because the IETF (Internet Engineering Task Force) considers HTTP/2 to be a mature technology that does not require future sub-versions. If significant changes become necessary in the future, they will be published as HTTP/3.

Actually, SPDY is the predecessor of HTTP/2 as it has very similar goals, principles, and implementations. As there are many Google engineers in the IETF committee, it is not surprising that SPDY became the standard for HTTP/2.

HTTP/2 not only improves performance, but also remains compatible with HTTP/1.1 semantics, and its features are similar to those of SPDY. On the wire, however, HTTP/2 is quite different from HTTP/1.1: it is a binary protocol rather than a text protocol. It also uses HPACK to compress HTTP headers, and supports multiplexing, server push, and more.

HTTP/2 transfers data in binary format rather than the text format used in HTTP/1.x. Both header and body are in binary format and are collectively called the "Frame". The basic binary format of the Frame is as follows (from rfc7540#section-4.1):

    +-----------------------------------------------+
    |                 Length (24)                   |
    +---------------+---------------+---------------+
    |   Type (8)    |   Flags (8)   |
    +-+-------------+---------------+-------------------------------+
    |R|                 Stream Identifier (31)                      |
    +=+=============================================================+
    |                   Frame Payload (0...)                      ...
    +---------------------------------------------------------------+

We call it the basic format because every HTTP/2 Frame is packaged according to it, much like the TCP header. There are currently 10 Frame types, distinguished by the Type field, and each type has its own binary format carried in the Frame Payload.

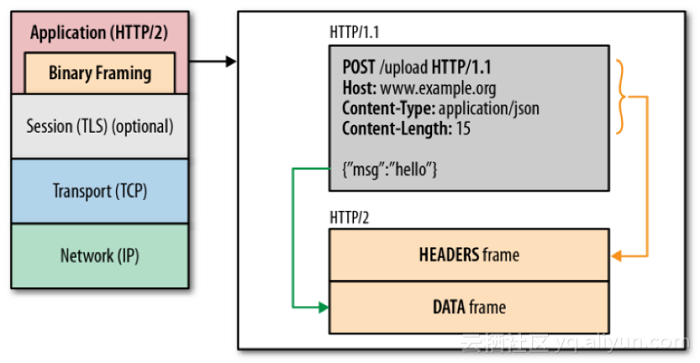
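To make the basic format concrete, here is a minimal Python sketch that decodes the fixed 9-byte header at the start of a frame. The function name `parse_frame_header` and the returned dictionary layout are illustrative choices, not part of any library; the field widths and type codes follow RFC 7540.

```python
# Frame type codes from RFC 7540, section 6.
FRAME_TYPES = {
    0x0: "DATA", 0x1: "HEADERS", 0x2: "PRIORITY", 0x3: "RST_STREAM",
    0x4: "SETTINGS", 0x5: "PUSH_PROMISE", 0x6: "PING", 0x7: "GOAWAY",
    0x8: "WINDOW_UPDATE", 0x9: "CONTINUATION",
}


def parse_frame_header(data: bytes) -> dict:
    """Decode the 9-byte header that precedes every HTTP/2 frame payload."""
    if len(data) < 9:
        raise ValueError("an HTTP/2 frame header is 9 bytes long")
    length = int.from_bytes(data[0:3], "big")   # 24-bit payload length
    type_code = data[3]                         # 8-bit frame type
    flags = data[4]                             # 8-bit flags
    # The top bit of the last 4 bytes is the reserved R bit; mask it off.
    stream_id = int.from_bytes(data[5:9], "big") & 0x7FFFFFFF
    return {
        "length": length,
        "type": FRAME_TYPES.get(type_code, "UNKNOWN"),
        "flags": flags,
        "stream_id": stream_id,
    }


# Header bytes for a SETTINGS frame with an 18-byte payload on stream 0.
print(parse_frame_header(bytes.fromhex("000012040000000000")))
```

The sample bytes describe a SETTINGS frame with length 18 on stream 0. A real parser would go on to read `length` bytes of payload and interpret them according to the frame type.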

Two Frames are especially important: the Headers Frame (Type = 0x1) and the Data Frame (Type = 0x0), which correspond to the Header and Body in HTTP/1.1 respectively. The semantics have not changed significantly; only the encoding has changed from text to binary. The conversion and relationship between Frames are shown in the figure below (from High Performance Browser Networking):

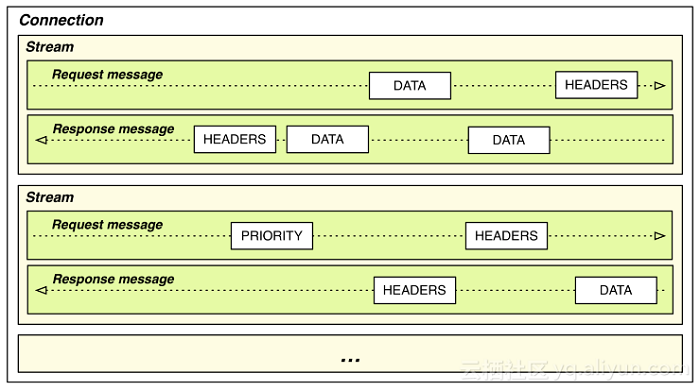
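Each Frame type interprets the Flags byte of the basic format in its own way. As a small illustration, the sketch below decodes the flag bits that RFC 7540 (section 6.2) defines for the Headers Frame. The helper name `decode_headers_flags` is hypothetical.

```python
# Flag bits defined for HEADERS frames in RFC 7540, section 6.2.
HEADERS_FLAGS = {
    0x01: "END_STREAM",
    0x04: "END_HEADERS",
    0x08: "PADDED",
    0x20: "PRIORITY",
}


def decode_headers_flags(flags: int) -> list:
    """Return the names of the flags set in a HEADERS frame, lowest bit first."""
    return [name for bit, name in sorted(HEADERS_FLAGS.items()) if flags & bit]


print(decode_headers_flags(0x25))  # ['END_STREAM', 'END_HEADERS', 'PRIORITY']
```

A value of 0x25 is 0x01 | 0x04 | 0x20, so a request sent with these flags carries its complete header block, includes priority information, and has no body.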

HTTP/2 also introduces the concepts of Stream and Message. A Stream is identified by the Stream Identifier (Stream ID) field: Frames with the same Stream ID belong to the same Stream. A Message is carried within a Stream and corresponds to a Request Message or Response Message in HTTP/1.x. A Message is transferred as one or more Frames; a Response Message is usually larger and may span multiple Data Frames. The relationship of Stream, Message, and Frame in HTTP/2 is shown in the figure below (from High Performance Browser Networking):

Each request and response in HTTP/1.x carries a lot of redundant header information, such as Cookie and User-Agent. The content of these headers rarely changes, yet the browser sends them with every request, which wastes bandwidth and slows down transfers. This happens because HTTP is a stateless protocol, so all information has to be attached to each request, including many repeated headers.

Thus, there is an optimization in HTTP/2 to compress and transfer headers in the HPACK format, and create an index table for the headers. Only the index number has to be sent for the same header, which improves efficiency and transfer rate. The cost is that the client and the server have to maintain an index table, but since memory is not currently as expensive as it used to be, the tradeoff is worthwhile.

For more information on HPACK, see RFC7541.

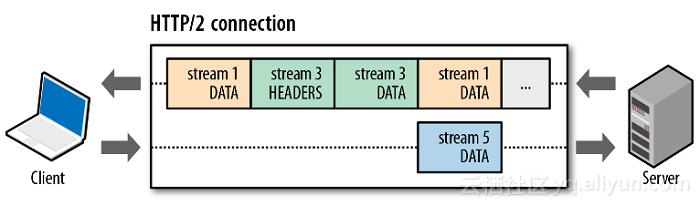
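The index-table idea can be illustrated with a toy Python sketch. This is a deliberately simplified model, not real HPACK: it has no static table, no Huffman coding, no size limit or eviction, and it emits Python tuples rather than bytes. The function names `encode` and `decode` are illustrative only.

```python
def encode(headers, table):
    """Replace header fields already in the sender's table with their index."""
    encoded = []
    for field in headers:
        if field in table:
            encoded.append(("indexed", table.index(field)))
        else:
            table.append(field)           # remember the field for next time
            encoded.append(("literal", field))
    return encoded


def decode(encoded, table):
    """Rebuild header fields, keeping the receiver's table in sync."""
    headers = []
    for kind, value in encoded:
        if kind == "indexed":
            headers.append(table[value])
        else:
            table.append(value)           # mirror the sender's table update
            headers.append(value)
    return headers


sender_table, receiver_table = [], []
request = [("user-agent", "demo/1.0"), ("cookie", "id=42")]

first = encode(request, sender_table)     # both fields sent as literals
second = encode(request, sender_table)    # both fields sent as indexes
print(first)
print(second)
print(decode(first, receiver_table) == decode(second, receiver_table))
```

The first request sends both fields in full, while the repeated request sends only two small integers. Both sides pay for this by keeping a table per connection, which is the memory tradeoff described above.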

Multiplexing means that the client and server can send multiple requests and responses at the same time over a single TCP connection. In HTTP/1.x, responses must be returned in the same order as their requests. In HTTP/2, requests and responses do not have to arrive in matching order, and a stall in any one of the concurrent requests or responses does not affect the rest. This effectively avoids head-of-line blocking at the HTTP layer. This is demonstrated in the figure below (from High Performance Browser Networking):

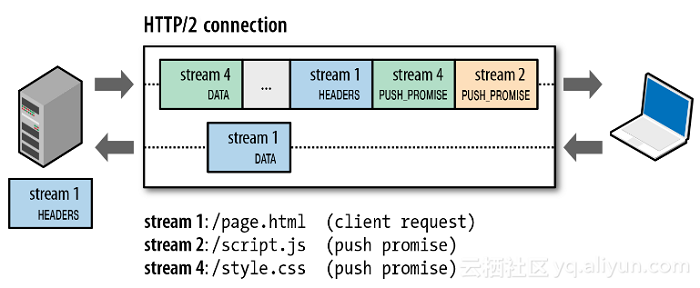
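As a rough sketch of what the receiving side of multiplexing involves, the Python snippet below reassembles interleaved frames into per-stream messages using only the Stream ID. The tuple layout `(stream_id, payload, end_stream)` is an assumption made for illustration; real frames also carry a type and other flags, and flow control is ignored here.

```python
from collections import defaultdict


def reassemble(frames):
    """Group interleaved frames by stream ID and return completed messages.

    Each frame is a (stream_id, payload, end_stream) tuple. A message is
    complete when a frame with end_stream=True arrives on its stream.
    """
    buffers = defaultdict(bytearray)
    completed = {}
    for stream_id, payload, end_stream in frames:
        buffers[stream_id] += payload
        if end_stream:
            completed[stream_id] = bytes(buffers.pop(stream_id))
    return completed


# Frames from streams 1 and 3 arrive interleaved on one connection.
frames = [
    (1, b"he", False),
    (3, b"wor", False),
    (3, b"ld", True),    # stream 3 finishes first
    (1, b"llo", True),
]
print(reassemble(frames))
```

Stream 3 completes before stream 1 even though stream 1 started first, which is the behavior that strict HTTP/1.x ordering does not allow.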

Server Push refers to the ability of an HTTP/2 server to actively push resources to clients without a request. For example, a server can push images, JS, and CSS files to the browser without waiting for the browser to parse the HTML and send the requests. By the time the browser has parsed the HTML, the required resources are already in the browser, which makes web pages load significantly faster. This is demonstrated in the figure below (from High Performance Browser Networking):

A browser initiates a request for the page page.html, which references script.js and style.css. The server pushes script.js and style.css after the response to page.html, so that both files are already available locally when the browser parses page.html. The browser does not have to send a separate request for these files, which saves two request/response round trips and effectively increases the loading speed of the page.

HTTP security is provided by SSL/TLS, i.e. HTTPS. The HTTP/2 specification does not require SSL/TLS, but current mainstream browsers support HTTP/2 only over SSL/TLS. With network hijacking on the rise, HTTPS is the trend of the future, and so is HTTP/2 over HTTPS. The fact that mainstream browsers have supported HTTP/2 only over SSL/TLS from the very beginning shows that security is also an important feature of HTTP/2.

HTTP/2 and SPDY are similar in principles and goals, and the official Nginx code shows that they are also similar in implementation. The SPDY module code has been deleted from the official Nginx code and replaced with the code for the HTTP/2 module (ngx_http_v2_module).

1.1 Add the HTTP/2 module in compile parameters (SSL module already exists by default):

    # git clone https://github.com/alibaba/tengine.git
    # cd tengine
    # ./configure --prefix=/opt/tengine --with-http_v2_module
    # make
    # make install

1.2 Generate test certificate and private key

    # cd /etc/pki/CA/
    # touch index.txt serial
    # echo 01 > serial
    # openssl genrsa -out private/cakey.pem 2048
    # openssl req -new -x509 -key private/cakey.pem -out cacert.pem
    # cd /opt/tengine/conf
    # openssl genrsa -out tengine.key 2048
    # openssl req -new -key tengine.key -out tengine.csr
    ...
    Country Name (2 letter code) [AU]:CN
    State or Province Name (full name) [Some-State]:ZJ
    Locality Name (eg, city) []:HZ
    Organization Name (eg, company) [Internet Widgits Pty Ltd]:Aliyun
    Organizational Unit Name (eg, section) []:CDN
    Common Name (e.g. server FQDN or YOUR name) []:www.tengine.com
    Email Address []:
    Please enter the following 'extra' attributes
    to be sent with your certificate request
    A challenge password []:
    An optional company name []:
    ...
    # openssl x509 -req -in tengine.csr -CA /etc/pki/CA/cacert.pem -CAkey /etc/pki/CA/private/cakey.pem -CAcreateserial -out tengine.crt

1.3 Configure HTTP/2

    server {
        # Enable TLS and HTTP/2 on the same listener
        listen 443 ssl http2;
        server_name www.tengine.com;
        default_type text/plain;

        # Test certificate and key generated in step 1.2
        ssl_protocols TLSv1 TLSv1.1 TLSv1.2;
        ssl_certificate tengine.crt;
        ssl_certificate_key tengine.key;
        ssl_ciphers EECDH+CHACHA20:EECDH+CHACHA20-draft:EECDH+AES128:EECDH+AES256:EECDH+3DES:RSA+3DES:RC4-SHA:ALL:!MD5:!aNULL:!EXP:!LOW:!SSLV2:!NULL:!ECDHE-RSA-AES128-GCM-SHA256;
        ssl_prefer_server_ciphers on;

        # Return a fixed body so the protocol is easy to verify
        location / {
            return 200 "http2 is ok";
        }
    }

1.4 Start tengine:

    # /opt/tengine/sbin/nginx -c /opt/tengine/conf/nginx.conf

1.5 Test

First add the test domain to /etc/hosts:

    127.0.0.1 www.tengine.com

Test with nghttp tool:

jinjiu@j9mac ~/work/pcap$ nghttp 'https://www.tengine.com/' -v

[ 0.019] Connected

[ 0.043][NPN] server offers:

* h2

* http/1.1

The negotiated protocol: h2

[ 0.064] recv SETTINGS frame <length=18, flags=0x00, stream_id=0>

(niv=3)

[SETTINGS_MAX_CONCURRENT_STREAMS(0x03):128]

[SETTINGS_INITIAL_WINDOW_SIZE(0x04):2147483647]

[SETTINGS_MAX_FRAME_SIZE(0x05):16777215]

[ 0.064] recv WINDOW_UPDATE frame <length=4, flags=0x00, stream_id=0>

(window_size_increment=2147418112)

[ 0.064] send SETTINGS frame <length=12, flags=0x00, stream_id=0>

(niv=2)

[SETTINGS_MAX_CONCURRENT_STREAMS(0x03):100]

[SETTINGS_INITIAL_WINDOW_SIZE(0x04):65535]

[ 0.064] send SETTINGS frame <length=0, flags=0x01, stream_id=0>

; ACK

(niv=0)

[ 0.064] send PRIORITY frame <length=5, flags=0x00, stream_id=3>

(dep_stream_id=0, weight=201, exclusive=0)

[ 0.064] send PRIORITY frame <length=5, flags=0x00, stream_id=5>

(dep_stream_id=0, weight=101, exclusive=0)

[ 0.077] send PRIORITY frame <length=5, flags=0x00, stream_id=7>

(dep_stream_id=0, weight=1, exclusive=0)

[ 0.077] send PRIORITY frame <length=5, flags=0x00, stream_id=9>

(dep_stream_id=7, weight=1, exclusive=0)

[ 0.077] send PRIORITY frame <length=5, flags=0x00, stream_id=11>

(dep_stream_id=3, weight=1, exclusive=0)

[ 0.077] send HEADERS frame <length=39, flags=0x25, stream_id=13>

; END_STREAM | END_HEADERS | PRIORITY

(padlen=0, dep_stream_id=11, weight=16, exclusive=0)

; Open new stream

:method: GET

:path: /

:scheme: https

:authority: www.tengine.com

accept: */*

accept-encoding: gzip, deflate

user-agent: nghttp2/1.9.2

[ 0.087] recv SETTINGS frame <length=0, flags=0x01, stream_id=0>

; ACK

(niv=0)

[ 0.087] recv (stream_id=13) :status: 200

[ 0.087] recv (stream_id=13) server: Tengine/2.2.0

[ 0.087] recv (stream_id=13) date: Mon, 26 Sep 2016 03:00:01 GMT

[ 0.087] recv (stream_id=13) content-type: text/plain

[ 0.087] recv (stream_id=13) content-length: 11

[ 0.087] recv HEADERS frame <length=63, flags=0x04, stream_id=13>

; END_HEADERS

(padlen=0)

; First response header

http2 is ok[ 0.087] recv DATA frame <length=11, flags=0x01, stream_id=13>

; END_STREAM

[ 0.087] send GOAWAY frame <length=8, flags=0x00, stream_id=0>

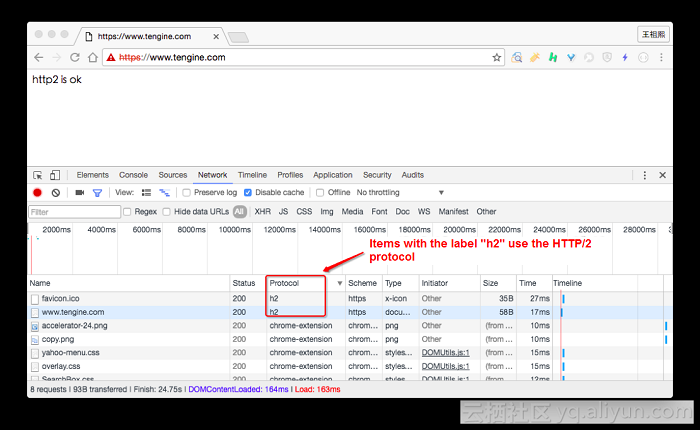

(last_stream_id=0, error_code=NO_ERROR(0x00), opaque_data(0)=[])Test with Chrome:

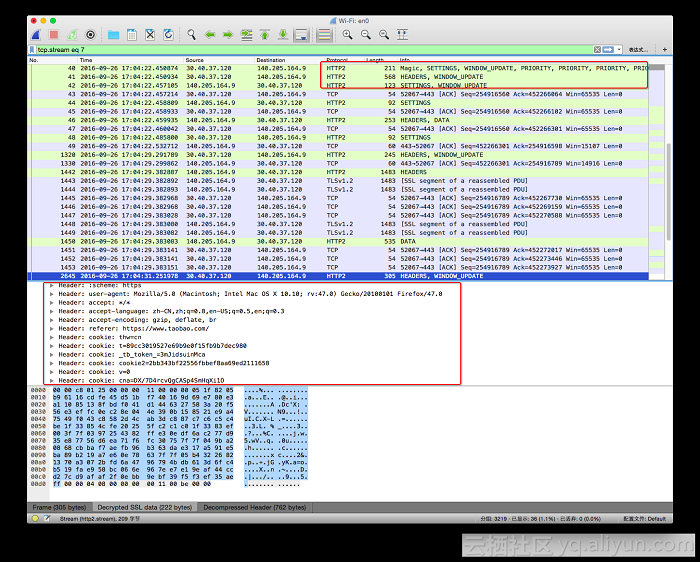
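The protocol negotiation can also be checked from a script. The following is a minimal sketch using Python's standard `ssl` module, under a few assumptions: it offers protocols through ALPN (not NPN, which appears in the nghttp output above), so the server's TLS library must support ALPN; the helper names `build_context` and `negotiated_protocol` are hypothetical; and the `cafile` parameter is only needed for a private CA such as the test CA created earlier. Recent Python versions match hostnames against the certificate's subjectAltName only, so a test certificate that sets just the Common Name may fail verification.

```python
import socket
import ssl


def build_context(cafile=None):
    """Create a verifying TLS context that offers h2 and http/1.1 via ALPN."""
    ctx = ssl.create_default_context(cafile=cafile)
    ctx.set_alpn_protocols(["h2", "http/1.1"])
    return ctx


def negotiated_protocol(host, port=443, cafile=None, timeout=5.0):
    """Connect to host:port and return the ALPN protocol the server selected.

    Returns "h2" when HTTP/2 was negotiated, or None when the server did not
    select any of the offered protocols.
    """
    ctx = build_context(cafile)
    with socket.create_connection((host, port), timeout=timeout) as raw:
        with ctx.wrap_socket(raw, server_hostname=host) as tls:
            return tls.selected_alpn_protocol()
```

For example, `negotiated_protocol("www.tengine.com", cafile="/etc/pki/CA/cacert.pem")` would be expected to return `"h2"` against the test server configured above, provided certificate verification succeeds.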

The best way to learn the HTTP/2 format is to start with packet capturing. Because HTTP/2 runs over HTTPS, the contents of captured packets are encrypted, but you can easily decrypt them with Wireshark.

2.1 First export the environment variable SSLKEYLOGFILE, taking OS X as an example:

    # bash
    # Make sure the log directory exists, then append the export to the profile
    mkdir -p ~/ssl_debug
    printf '\nexport SSLKEYLOGFILE=~/ssl_debug/ssl_pms.log\n' >> ~/.bash_profile && . ~/.bash_profile

2.2 Open Chrome or Firefox

    # chrome
    open /Applications/Google\ Chrome.app/Contents/MacOS/Google\ Chrome
    # firefox
    open /Applications/Firefox.app/Contents/MacOS/firefox

Open an HTTPS website with Chrome or Firefox, for example https://www.taobao.com, and check whether there is content in ~/ssl_debug/ssl_pms.log. If there is, you can use Wireshark to decrypt the HTTPS data.

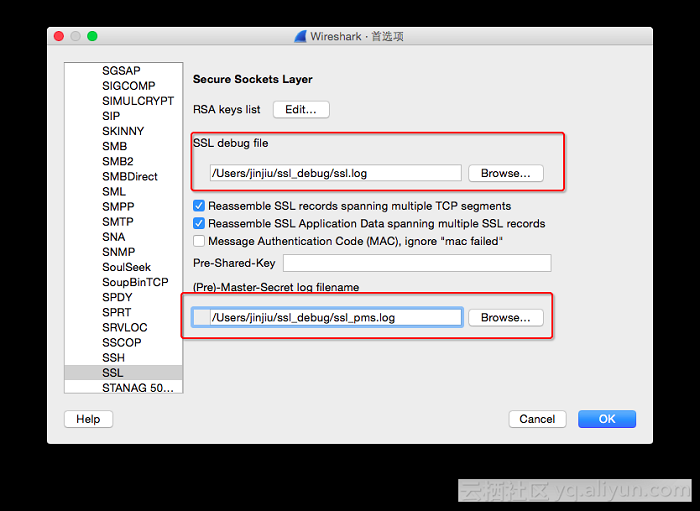
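To confirm that the browser is actually writing secrets, you can inspect the log with a few lines of Python. This sketch assumes the NSS key log format, in which each non-comment line starts with a label such as `CLIENT_RANDOM` followed by hex fields. The function name `summarize_keylog` is hypothetical.

```python
from collections import Counter


def summarize_keylog(text):
    """Count key log entries by label, skipping blank lines and comments."""
    labels = Counter()
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        # The label is the first space-separated token on the line.
        labels[line.split(" ", 1)[0]] += 1
    return labels


# Placeholder values only; real entries contain long hex strings.
sample = "# example key log\nCLIENT_RANDOM aa bb\nCLIENT_RANDOM cc dd\n"
print(dict(summarize_keylog(sample)))
```

To run it against the real file, read the text with `pathlib.Path("~/ssl_debug/ssl_pms.log").expanduser().read_text()` and pass it to `summarize_keylog`. An empty result means the browser was not started with `SSLKEYLOGFILE` set.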

2.3 Wireshark settings

Wireshark->Preferences...->Protocols->SSL, then set the (Pre)-Master-Secret log filename to ~/ssl_debug/ssl_pms.log

Start packet capture

As you can see, we have now obtained the plaintext content of the HTTP/2 packets. As the scope of this article is limited, we won't go into any further explanation of HTTP/2. For more information please see: RFC7540.

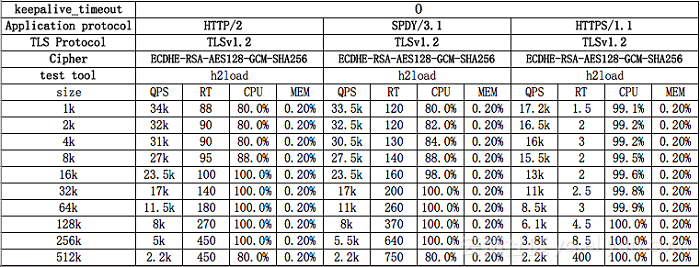

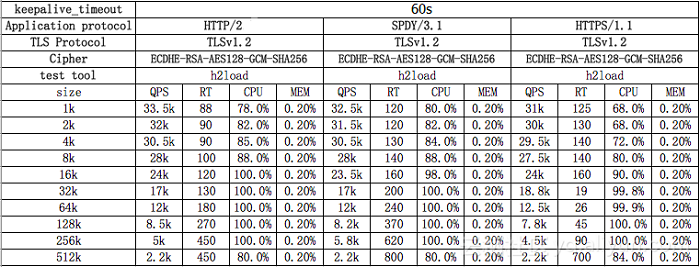

Test machine configuration: cache1.cn1, 115.238.23.13, 16-core Intel(R) Xeon(R) CPU L5630 @ 2.13GHz, 48 GB RAM, 10 G network card

Test tool: h2load

Test result:

With or without keepalive, HTTP/2 performs similarly to SPDY/3.1, and in fact slightly better. In the tests with response sizes of 1 KB, 2 KB, and 4 KB, QPS is relatively high while RT is low, and the CPU is not the bottleneck. QPS increases slightly after adding more load-testing clients. As RT increases, so does the number of 5xx responses (data for this part is not given; it is only a phenomenon observed during the test). In the tests with sizes of 16 KB, 32 KB, 64 KB, 128 KB, and 256 KB, the CPU becomes the bottleneck. As the size increases, QPS drops and RT rises.

CPU profiling suggests that gcm_ghash_clmul is the function consuming the most resources. At a size of 512 KB the network card reaches its bottleneck, while the CPU does not. When keepalive is enabled, there is no significant performance difference between HTTP/1.1 and HTTP/2, but when it is disabled, HTTP/2 performs better.

HTTP/2 features a number of underlying optimizations compared to the previous transfer protocol. These advantages have led numerous companies to begin using the HTTP/2 protocol. Alibaba Cloud has supported HTTP/2 since the CDN 6.0 service launched in 2016, and has seen a 68% improvement in access rates. HTTP/2 may have arrived without much notice, but it has brought us a more secure, reliable, and faster era of the Internet.
