By Xiang Hua

Hago is a flagship product from JOYY for multi-person interaction and socializing. It offers a variety of services, such as interactive games, multi-person voice calls, live streaming, and 3D virtual avatar interaction, with the aim of giving users an efficient, diverse, and immersive social entertainment experience. It has an extensive user base in Southeast Asia, the Middle East, and South America.

At the technical level, Hago relies on self-developed audio and video technology to deliver more stable, efficient, and high-quality digital services. These include 3D hyperrealistic models, live anchor model production, virtual human voice, expression-driven services, natural-sounding text-to-speech (TTS), and mature virtual live streaming capabilities.

For a long time, Hago ran its big data tasks in its own Internet data center (IDC) to support various products. In 2022, Hago decided to migrate its big data services to the cloud and adopted Spark on ACK to run them. This article focuses on the migration process.

Initially, Hago's Spark tasks were executed in Hadoop clusters within the IDC. During this time, Hago encountered several challenges:

To address these challenges, Hago decided to migrate its big data business to the cloud in a cloud-native manner.

Spark on Kubernetes has been generally available since Spark 3.1. As a managed Kubernetes distribution, Alibaba Cloud Container Service for Kubernetes (ACK) offers high performance and stability, which makes it a solid base for running Spark on Alibaba Cloud. To achieve better elasticity, Hago opted for ACK Serverless.
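As a rough sketch of what submitting a Spark job to a Kubernetes cluster involves, the Python helper below assembles a `spark-submit` command line for cluster mode. The API server address, image name, namespace, and application path are placeholders chosen for illustration, not values from Hago's environment.

```python
from typing import Dict, List


def build_spark_submit(api_server: str, image: str, app: str,
                       namespace: str = "spark",
                       executors: int = 4) -> List[str]:
    """Assemble a spark-submit command for cluster mode on Kubernetes."""
    conf: Dict[str, str] = {
        "spark.kubernetes.container.image": image,
        "spark.kubernetes.namespace": namespace,
        "spark.executor.instances": str(executors),
    }
    # The k8s:// prefix tells Spark to use the Kubernetes scheduler backend.
    cmd = ["spark-submit",
           "--master", f"k8s://{api_server}",
           "--deploy-mode", "cluster"]
    for key, value in sorted(conf.items()):
        cmd += ["--conf", f"{key}={value}"]
    cmd.append(app)
    return cmd


if __name__ == "__main__":
    print(" ".join(build_spark_submit(
        api_server="https://example-api-server:6443",  # placeholder
        image="registry.example.com/spark:3.3.0",      # placeholder
        app="local:///opt/spark/app/job.py",           # placeholder
    )))
```

In a serverless cluster the same command applies; the difference is that the driver and executor pods are scheduled without any nodes having been purchased beforehand.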

In an ACK Serverless cluster, you can deploy container applications directly without the need to purchase nodes. You don't have to worry about node maintenance and capacity planning for the cluster. Moreover, you will be charged based on the CPU and memory resources configured for the application. Thanks to the comprehensive Kubernetes compatibility and the simplified usage of ACK Serverless clusters, you can focus on your applications rather than managing the underlying infrastructure.

In addition, pods in ACK Serverless clusters run in a secure and isolated container runtime environment based on Alibaba Cloud Elastic Container Instances (ECI). Each pod container instance is completely isolated by lightweight virtualization security sandbox technology, ensuring that the instances do not affect each other.

ACK Serverless clusters exhibit their elasticity advantages in scenarios with large-scale business spikes and task scheduling, such as Spark. They can deliver thousands of pods within 30 seconds.

However, two issues need to be resolved before Spark can run in this environment: where to store the data, and how to handle shuffle.

Spark tasks don't require computing resources around the clock, but their data must be retained. Building HDFS clusters on virtual machines would tie up a significant amount of resident computing power and waste resources, so Hago chose to separate storage from computing. Data is stored in Object Storage Service (OSS) and exposed through an HDFS-compatible interface by the OSS-HDFS service, which gives Spark tasks convenient access.

For more information, see Overview of the OSS-HDFS service [1].
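A minimal sketch of how a Spark job might be pointed at OSS-HDFS is shown below, in Python. It assumes JindoSDK is on the Spark classpath; the class names are taken from the JindoSDK documentation as an assumption and should be verified against the SDK version in use. The bucket name and endpoint are placeholders, and credentials are deliberately left out.

```python
from typing import Dict


def oss_hdfs_conf(endpoint: str) -> Dict[str, str]:
    """Spark settings that route oss:// paths through JindoSDK.

    Class names are assumed from the JindoSDK documentation; verify them
    against the SDK version you deploy. Credentials are omitted here and
    would be supplied separately.
    """
    return {
        "spark.hadoop.fs.oss.impl":
            "com.aliyun.jindodata.oss.JindoOssFileSystem",
        "spark.hadoop.fs.AbstractFileSystem.oss.impl":
            "com.aliyun.jindodata.oss.OSS",
        "spark.hadoop.fs.oss.endpoint": endpoint,
    }


def oss_hdfs_path(bucket: str, endpoint: str, key: str) -> str:
    """Build a URI of the form oss://<bucket>.<endpoint>/<key>."""
    return f"oss://{bucket}.{endpoint}/{key.lstrip('/')}"


if __name__ == "__main__":
    endpoint = "cn-hangzhou.oss-dls.aliyuncs.com"  # placeholder region
    for key, value in oss_hdfs_conf(endpoint).items():
        print(f"--conf {key}={value}")
    print(oss_hdfs_path("example-bucket", endpoint, "/warehouse/events"))
```

Because the service speaks the HDFS interface, existing jobs would mainly need their input and output paths changed to `oss://` URIs rather than any code changes.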

Shuffle is a fundamental process in Spark and plays a critical role in the performance of Spark applications. The Spark community provides a default shuffle service [2], but there are some issues:

• Spark Shuffle relies on local storage, but in scenarios where storage and computing are decoupled and ECI is used, local disks are unavailable. This requires purchasing and attaching additional disks, which is neither cost-effective nor efficient.

• Spark has implemented dynamic allocation based on ShuffleTracking, but the efficiency of executor reclamation is low.
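To illustrate why reclamation is slow under shuffle tracking, here is a simplified model in Python. It is not Spark's actual implementation: it only captures the rule that an idle executor can be released once none of the shuffles whose output it holds is still needed. The `Executor` class and `releasable` function are hypothetical names used for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Executor:
    """Toy stand-in for an executor (hypothetical, for illustration only)."""
    name: str
    running_tasks: int = 0
    # IDs of shuffles whose map output is stored on this executor's disk.
    shuffle_ids: Set[int] = field(default_factory=set)


def releasable(executors: List[Executor],
               active_shuffles: Set[int]) -> List[str]:
    """Return names of executors that could be removed right now.

    An executor qualifies only if it runs no tasks and none of the shuffle
    output it holds belongs to a shuffle that is still active.
    """
    return [
        e.name
        for e in executors
        if e.running_tasks == 0 and not (e.shuffle_ids & active_shuffles)
    ]


if __name__ == "__main__":
    pool = [
        Executor("exec-1", running_tasks=0, shuffle_ids={7}),
        Executor("exec-2", running_tasks=0, shuffle_ids=set()),
        Executor("exec-3", running_tasks=2, shuffle_ids=set()),
    ]
    # Shuffle 7 is still needed by a downstream stage, so exec-1 stays
    # even though it is idle; only exec-2 can be released.
    print(releasable(pool, active_shuffles={7}))
```

Spark exposes `spark.dynamicAllocation.shuffleTracking.timeout` to cap how long such executors are kept, but releasing them early risks recomputing the shuffle data they held.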

The default shuffle can also run into the following problems:

• Data spills in shuffle write tasks, resulting in write amplification.

• Connection reset due to a large number of small-size network packets in shuffle read tasks.

• High disk and CPU loads caused by a large number of small-size I/O requests and random reads in shuffle read tasks.

• In cases where thousands of mappers (M) and reducers (N) are used, a large number of connections (M × N) are generated, making it nearly impossible for jobs to run.
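The M × N growth described above can be made concrete with a little arithmetic. The Python sketch below counts shuffle blocks and their average size for a given number of mappers and reducers; the figures in the usage example are illustrative only and are not measurements from Hago.

```python
from typing import Tuple


def shuffle_fanout(mappers: int, reducers: int,
                   shuffle_bytes: int) -> Tuple[int, float]:
    """Return (number of shuffle blocks, average block size in bytes).

    With the default shuffle, every reducer fetches one block from every
    mapper, so blocks (and potential connections) grow as M x N.
    """
    blocks = mappers * reducers
    return blocks, shuffle_bytes / blocks


if __name__ == "__main__":
    # Illustrative numbers only: 5,000 mappers, 5,000 reducers,
    # and 1 TiB of shuffle data in total.
    blocks, avg = shuffle_fanout(5000, 5000, 2**40)
    print(f"{blocks:,} blocks, about {avg / 1024:.0f} KiB each")
```

Even a large shuffle is cut into tens of millions of small blocks at this scale, which is where the small random reads and the connection pressure come from.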

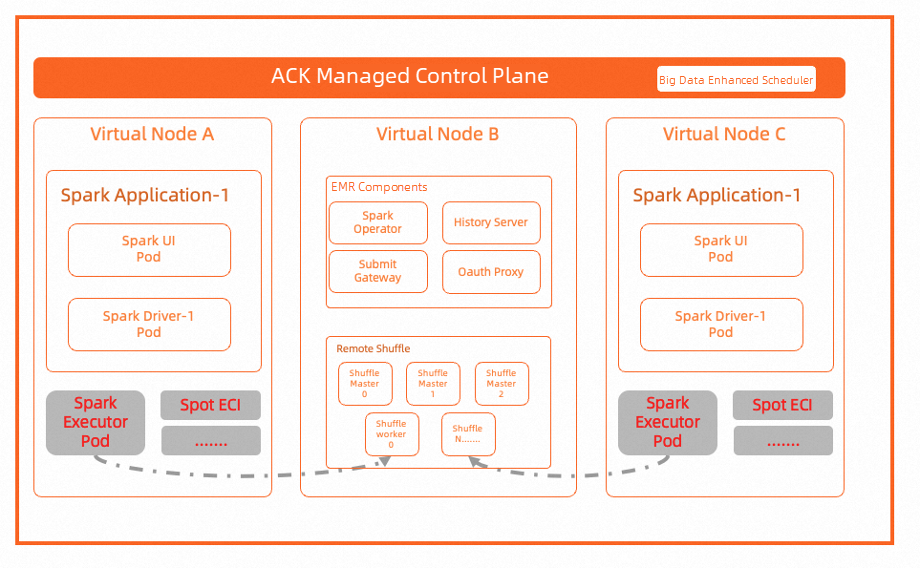

The Remote Shuffle Service (RSS) of E-MapReduce (EMR) addresses these issues with Spark's default shuffle and supports dynamic allocation in the ACK environment.

For more information, see EMR Remote Shuffle Service [3].
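As a hedged sketch of the client side, the Python helper below builds the Spark settings that would switch shuffle over to a remote service. It assumes the Apache Celeborn client, which the linked page documents; earlier EMR RSS releases used different class and property names, and the master address is a placeholder. Dynamic allocation with a remote shuffle service may need further version-specific settings that are not shown here.

```python
from typing import Dict, List


def remote_shuffle_conf(master_endpoints: str) -> Dict[str, str]:
    """Spark settings for a Celeborn-based remote shuffle (assumed names)."""
    return {
        # Replace the built-in sort shuffle with the Celeborn client.
        "spark.shuffle.manager":
            "org.apache.spark.shuffle.celeborn.SparkShuffleManager",
        "spark.celeborn.master.endpoints": master_endpoints,
        # The node-local external shuffle service is not used.
        "spark.shuffle.service.enabled": "false",
        "spark.dynamicAllocation.enabled": "true",
    }


def as_conf_flags(conf: Dict[str, str]) -> List[str]:
    """Render a settings dict as spark-submit --conf arguments."""
    flags: List[str] = []
    for key, value in sorted(conf.items()):
        flags += ["--conf", f"{key}={value}"]
    return flags


if __name__ == "__main__":
    # Placeholder address of the remote shuffle master.
    print(" ".join(as_conf_flags(remote_shuffle_conf("rss-master:9097"))))
```

With shuffle data held by the remote service, executors no longer pin shuffle files on local disks, which is what allows idle executors to be released promptly.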

The overall architecture of this solution is shown in the diagram above. The migration delivered the intended results:

• Scaling out takes only about 30 seconds, so there is no need to prepare resources in advance.

• Tasks no longer need to be queued.

• IDC hardware failures are no longer a concern.

[1] Overview of the OSS-HDFS service

https://www.alibabacloud.com/help/en/oss/user-guide/overview-1

[2] shuffle service

https://github.com/lynnyuan-arch/spark-on-k8s/blob/master/resource-managers/kubernetes/architecture-docs/external-shuffle-service.md

[3] EMR Remote Shuffle Service

https://www.alibabacloud.com/help/en/emr/emr-on-ecs/user-guide/celeborn#task-2184004
