This article describes the current state of core Java technologies in terms of the open-source status of Java SE, the OpenJDK version ecosystem, and OpenJDK technology trends. It then discusses how Java Virtual Machine (JVM) technology is likely to evolve. JVM technology is the foundation that supports Java applications in cloud-native, Artificial Intelligence (AI), and multi-language ecosystems.

Over the past 20 years, Java technology has evolved around productivity and performance, and in many cases Java's design has favored productivity over performance. The garbage collector frees programmers from complex manual memory management, but Java applications in turn suffer from Garbage Collection (GC) pauses. Java compiles to an intermediate bytecode for a stack-based virtual machine, which abstracts away the differences between hardware platforms (such as Intel and ARM), and it relies on the Just-in-Time (JIT) compiler to reach peak performance. However, JIT compilation introduces a warmup cost: a Java application normally has to load its classes and run them in the interpreter before highly optimized machine code is available.
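The warmup cost can be observed informally by timing the same piece of code over several rounds. The sketch below is only an illustration under simple assumptions: `workload` is an arbitrary hypothetical loop, and wall-clock timing like this is noisy (a rigorous measurement would use a harness such as JMH). Running it with the HotSpot flag `-XX:+PrintCompilation` shows the JIT compiling `workload` while the rounds progress.

```java
import java.util.Arrays;

public class JitWarmup {

    static volatile long blackhole; // keeps results observable so the loop is not dead code

    // Illustrative workload: a running sum of squares modulo a prime.
    static long workload(int n) {
        long acc = 0;
        for (int i = 0; i < n; i++) {
            acc = (acc + (long) i * i) % 1_000_000_007L;
        }
        return acc;
    }

    // Times `rounds` repetitions of the same workload; returns nanoseconds per round.
    static long[] measureRounds(int rounds, int n) {
        long[] elapsed = new long[rounds];
        for (int r = 0; r < rounds; r++) {
            long start = System.nanoTime();
            blackhole = workload(n);
            elapsed[r] = System.nanoTime() - start;
        }
        return elapsed;
    }

    public static void main(String[] args) {
        // Early rounds usually run interpreted or in lower-tier compiled code; later rounds
        // typically use fully optimized code. Numbers vary by JVM, hardware, and flags.
        System.out.println(Arrays.toString(measureRounds(10, 2_000_000)));
    }
}
```

On a typical HotSpot JVM the first one or two rounds tend to be noticeably slower than the rest, but on-stack replacement can also optimize the loop partway through the first round, so the pattern is not guaranteed.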

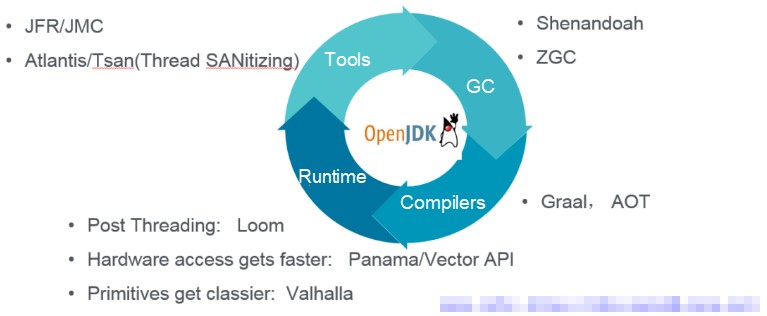

For the JVM, emerging technologies in the OpenJDK community can be grouped into tools, GC, compilers, and runtime:

JFR/JMC

Oracle open-sourced JDK Flight Recorder (JFR), previously a commercial feature, in Java 11. JFR is a powerful tool for troubleshooting and profiling Java applications. Alibaba Cloud is a major contributor and, together with community members including Red Hat, has backported JFR to OpenJDK 8. OpenJDK 8u262, scheduled for release in July 2020, will include JFR by default, so Java 8 users will be able to use JFR for free.
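Besides starting a recording from the command line (for example `java -XX:StartFlightRecording=duration=60s,filename=app.jfr ...`) and opening the file in JMC, JFR exposes a Java API in the `jdk.jfr` module. The sketch below assumes JDK 11 or later; the event name `demo.OrderProcessed` and its `orderId` field are hypothetical. It defines a custom application event, records a few instances, dumps the recording to a temporary file, and reads it back.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.List;

import jdk.jfr.Event;
import jdk.jfr.Label;
import jdk.jfr.Name;
import jdk.jfr.Recording;
import jdk.jfr.consumer.RecordedEvent;
import jdk.jfr.consumer.RecordingFile;

public class JfrSketch {

    // Hypothetical application-level event; the name and field are illustrative only.
    @Name("demo.OrderProcessed")
    @Label("Order Processed")
    public static class OrderProcessed extends Event {
        @Label("Order Id")
        long orderId;
    }

    // Records `count` custom events, dumps them to a temporary .jfr file,
    // and reads the file back to count how many of those events were stored.
    static long recordAndCount(int count) throws Exception {
        Path file = Files.createTempFile("sketch", ".jfr");
        try (Recording recording = new Recording()) {
            recording.enable(OrderProcessed.class);
            recording.start();
            for (int i = 0; i < count; i++) {
                OrderProcessed event = new OrderProcessed();
                event.begin();
                event.orderId = i;
                event.commit(); // commit() also ends the event's timing if end() was not called
            }
            recording.stop();
            recording.dump(file);
        }
        List<RecordedEvent> events = RecordingFile.readAllEvents(file);
        Files.deleteIfExists(file);
        return events.stream()
                .filter(e -> e.getEventType().getName().equals("demo.OrderProcessed"))
                .count();
    }

    public static void main(String[] args) throws Exception {
        System.out.println("Recorded events: " + recordAndCount(3));
    }
}
```

The same `.jfr` file can be opened in JDK Mission Control (JMC), where the custom events appear next to the built-in GC, JIT, and thread events.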

ZGC/Shenandoah

Concurrent copying GC is available both in the Z Garbage Collector (ZGC), which Oracle released with Java 11, and in Shenandoah, which Red Hat has been developing for several years. Concurrent copying solves the problem of long GC pauses on large heaps. In JDK 15, to be released in September 2020, ZGC will move from experimental to production-ready. In AJDK 11 (Alibaba's in-house JDK), the Alibaba JVM team has done a lot of work to backport ZGC improvements from later Java releases to Java 11 and has fixed related problems. During the 2019 Double 11 Shopping Festival, the Alibaba JVM team worked with the Alibaba database team to run database applications on ZGC. With heaps larger than 100 GB, GC pauses stayed below 10 ms.
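Which collector a JVM uses is chosen with launch flags, and the collectors in use can be inspected at run time through the standard `java.lang.management` API. The sketch below is a minimal illustration: the launch commands in the comments use real HotSpot flags, but whether ZGC or Shenandoah is present depends on the JDK version and build, and the collector bean names printed vary between collectors and releases. For precise pause analysis, GC logs (`-Xlog:gc`) or JFR are more reliable than these counters.

```java
import java.lang.management.GarbageCollectorMXBean;
import java.lang.management.ManagementFactory;
import java.util.ArrayList;
import java.util.List;

// Example launch commands (availability of each collector depends on the JDK build):
//   ZGC, JDK 11-14 (experimental): java -XX:+UnlockExperimentalVMOptions -XX:+UseZGC -Xlog:gc GcReport
//   ZGC, JDK 15+:                  java -XX:+UseZGC -Xlog:gc GcReport
//   Shenandoah, JDK 15+:           java -XX:+UseShenandoahGC -Xlog:gc GcReport
public class GcReport {

    static volatile Object sink; // keeps allocations observable so they are not optimized away

    // Allocates short-lived 64 KB arrays to give the collector some work.
    static void churn(int iterations) {
        for (int i = 0; i < iterations; i++) {
            sink = new byte[64 * 1024];
        }
    }

    // One line per collector bean: name, collection count, and accumulated time in ms.
    // For concurrent collectors the reported time may not equal stop-the-world pause time.
    static List<String> report() {
        List<String> lines = new ArrayList<>();
        for (GarbageCollectorMXBean gc : ManagementFactory.getGarbageCollectorMXBeans()) {
            lines.add(gc.getName() + ": count=" + gc.getCollectionCount()
                    + ", time=" + gc.getCollectionTime() + " ms");
        }
        return lines;
    }

    public static void main(String[] args) {
        churn(20_000);
        report().forEach(System.out::println);
    }
}
```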

Graal

Graal is a new-generation JIT compiler written in Java, designed to replace the C1/C2 compilers of the HotSpot JVM. OpenJDK's Ahead-of-Time (AOT) compilation technology is also built on the Graal compiler.
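On OpenJDK releases that bundled Graal as an experimental compiler (roughly JDK 10 to 16; both the experimental JIT and `jaotc` were removed in JDK 17), it could be switched on with JVMCI flags. The sketch below shows those launch commands as comments and prints the name of the JIT compilation system reported through `CompilationMXBean`; the exact name string differs between JVMs and configurations, so treat it as informational only.

```java
import java.lang.management.CompilationMXBean;
import java.lang.management.ManagementFactory;

// Graal as the top-tier JIT on JDK builds that include it (experimental, JDK 10-16):
//   java -XX:+UnlockExperimentalVMOptions -XX:+UseJVMCICompiler JitInfo
// Experimental AOT with jaotc (JDK 9-16), following the usage pattern from JEP 295:
//   jaotc --output libJitInfo.so JitInfo.class
//   java -XX:AOTLibrary=./libJitInfo.so JitInfo
public class JitInfo {

    // Name of the JIT compilation system as reported by the JVM, or a fallback string
    // when the JVM reports no compilation system (getCompilationMXBean() returns null).
    static String compilerName() {
        CompilationMXBean jit = ManagementFactory.getCompilationMXBean();
        return jit == null ? "no JIT compilation system" : jit.getName();
    }

    public static void main(String[] args) {
        System.out.println("JIT: " + compilerName());
        CompilationMXBean jit = ManagementFactory.getCompilationMXBean();
        if (jit != null && jit.isCompilationTimeMonitoringSupported()) {
            System.out.println("Accumulated JIT time: " + jit.getTotalCompilationTime() + " ms");
        }
    }
}
```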

Loom

Loom is the OpenJDK community's coroutine project; its counterpart in AJDK is the Wisp 2.0 implementation.
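Loom's lightweight threads eventually shipped in OpenJDK as virtual threads, finalized in JDK 21 (JEP 444), so the sketch below requires JDK 21 or later. It is a minimal illustration rather than a benchmark: each of thousands of blocking tasks gets its own virtual thread, and a blocking call such as `Thread.sleep` parks only the virtual thread instead of tying up an OS thread. The task body is a hypothetical stand-in for blocking I/O.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

// Requires JDK 21+ (virtual threads, JEP 444).
public class VirtualThreadsSketch {

    // Runs `tasks` blocking tasks, one virtual thread per task, and sums their results.
    static long runBlockingTasks(int tasks) throws Exception {
        // close() at the end of the try block waits for all submitted tasks to finish.
        try (ExecutorService executor = Executors.newVirtualThreadPerTaskExecutor()) {
            List<Future<Integer>> futures = new ArrayList<>();
            for (int i = 0; i < tasks; i++) {
                final int id = i;
                futures.add(executor.submit(() -> {
                    Thread.sleep(10); // stand-in for blocking I/O; parks only the virtual thread
                    return id;
                }));
            }
            long sum = 0;
            for (Future<Integer> f : futures) {
                sum += f.get();
            }
            return sum;
        }
    }

    public static void main(String[] args) throws Exception {
        // Sum of 0..9999, computed by 10,000 concurrently sleeping virtual threads.
        System.out.println(runBlockingTasks(10_000));
    }
}
```

The programming model stays the familiar thread-per-request style, which is the same goal coroutine implementations such as Wisp pursue inside the JVM.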

This article discusses Java's future development from three perspectives: cloud-native, AI, and the multi-language ecosystem. Some of the discussion extends beyond Java.

Language Evolution for Cloud-native

The cloud-native era has fundamentally changed the way software is delivered. Take Java as an example: Java developers used to deliver applications as JAR or WAR files, whereas in the cloud-native era they deliver containers.

In terms of operation, requirements for cloud-native applications are as follows:

Java leads in enterprise computing and internet applications, offering consistency, a rich ecosystem with abundant third-party libraries, and extensive serviceability support. However, as applications move to microservice and serverless architectures in the cloud, Java's startup time and runtime overhead have become a bottleneck for further speed improvements.

In the new cloud-native context, our discussion of language evolution is not limited to the runtime compiler level. A new form of computing must be accompanied by a change in the programming model, which involves a series of changes to a language's libraries, frameworks, and tools. The industry has many emerging projects, such as next-generation programming frameworks designed to work with GraalVM (Quarkus, Micronaut, and Helidon) and Java's static compilation technology, SubstrateVM (SVM). Quarkus puts forward the concept of "container first", and its layered, lightweight uber-jar fits the trend of container-based delivery. The "Checkpoint Restore Fast Start-up" technology, jointly developed by Red Hat's Java and OS teams (Azul proposed a similar idea at the 2019 JVM technology summit), achieves fast Java startup at the level of the underlying technology stack.

We have also carried out related R&D work on cloud-native Java. Java is a static language, but it has many dynamic features, including reflection, class loading, and Bytecode Instrumentation (BCI). These dynamic features fundamentally conflict with the Closed-World Assumption (CWA) that GraalVM and SVM static compilation rely on, which is the main reason traditional Java applications built for the JVM are difficult to compile and run on SVM. The Alibaba JVM team has applied static tailoring to AJDK to establish a clear boundary between Java's static and dynamic features, making static compilation possible for Java at the JDK level. The JVM team also works with Ant Financial's middleware team to define a Java programming model for static compilation, using a programming framework to make Java application development friendly to static compilation. We have statically compiled the meta node application of the service registry, which is built on Ant Financial's open-source middleware SOFAStack. Compared with the traditional version running on the JVM, the statically compiled application starts 17 times faster, its executable file is 3.4 times smaller, and its runtime memory usage is halved.
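The conflict with the closed-world assumption is easiest to see side by side. In the minimal sketch below (`Greeter` is a hypothetical class), the direct call is visible to a static points-to analysis, while the reflective call only names its target through strings. GraalVM Native Image can fold some reflective calls whose arguments are compile-time constants, but names computed at run time must be declared ahead of time, for example in a `reflect-config.json` under `META-INF/native-image`; otherwise the target may be missing from the compiled image.

```java
import java.lang.reflect.Method;

public class ClosedWorldSketch {

    // Hypothetical service class used only for this illustration.
    public static class Greeter {
        public String greet(String who) {
            return "hello, " + who;
        }
    }

    // Statically resolvable: the call target is visible to build-time analysis.
    static String direct() {
        return new Greeter().greet("static");
    }

    // Dynamically resolved: class and method names are plain strings that could come
    // from a config file or the network at run time, so a closed-world compiler
    // cannot discover this target without extra configuration.
    static String reflective(String className, String methodName) throws Exception {
        Class<?> cls = Class.forName(className);
        Object instance = cls.getDeclaredConstructor().newInstance();
        Method m = cls.getMethod(methodName, String.class);
        return (String) m.invoke(instance, "reflective");
    }

    public static void main(String[] args) throws Exception {
        System.out.println(direct());
        System.out.println(reflective(Greeter.class.getName(), "greet"));
    }
}
```

On a regular JVM both calls behave identically; the difference only matters once the whole program must be known at build time.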

AI Emerging as a New Challenge for Programming Languages in Heterogeneous Computing

In 2005, Justin Rattner, then CTO of Intel, said, "We are at the cusp of a transition to multicore, multithreaded architectures." For more than a decade since, the programming language and compiler communities have been exploring optimizations for this parallel architectural paradigm. With the rise of AI in recent years, heterogeneous computing on Field Programmable Gate Arrays (FPGAs) and graphics processing units (GPUs) now poses similar new challenges for programming languages and compilers.

Beyond the automatic parallelization performed by traditional compilers such as IBM XL Compilers and Intel Compilers, compilation optimization based on the polyhedral model has become a hot research topic in the pursuit of ultimate performance, serving as a solution for program parallelization and data locality optimization.
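Loop tiling is one of the transformations a polyhedral optimizer can derive automatically for affine loop nests. The sketch below applies it by hand to matrix multiplication, purely as an illustration of the idea (a polyhedral compiler would choose the schedule and tile sizes itself). The tiled loops visit the same iterations in blocks so that data stays in cache; for each `c[i][j]` the contributions are still added in increasing `k` order, so the results match the naive version exactly.

```java
import java.util.Arrays;

public class LoopTiling {

    // Naive i-k-j matrix multiply: C = A * B for n x n matrices.
    static double[][] multiply(double[][] a, double[][] b) {
        int n = a.length;
        double[][] c = new double[n][n];
        for (int i = 0; i < n; i++)
            for (int k = 0; k < n; k++) {
                double aik = a[i][k];
                for (int j = 0; j < n; j++)
                    c[i][j] += aik * b[k][j];
            }
        return c;
    }

    // Tiled version: iterates over tile x tile blocks so each block is reused while cached.
    // Math.min handles matrix sizes that are not a multiple of the tile size.
    static double[][] multiplyTiled(double[][] a, double[][] b, int tile) {
        int n = a.length;
        double[][] c = new double[n][n];
        for (int ii = 0; ii < n; ii += tile)
            for (int kk = 0; kk < n; kk += tile)
                for (int jj = 0; jj < n; jj += tile)
                    for (int i = ii; i < Math.min(ii + tile, n); i++)
                        for (int k = kk; k < Math.min(kk + tile, n); k++) {
                            double aik = a[i][k];
                            for (int j = jj; j < Math.min(jj + tile, n); j++)
                                c[i][j] += aik * b[k][j];
                        }
        return c;
    }

    // Deterministic small-integer test matrix.
    static double[][] sample(int n, int seed) {
        double[][] m = new double[n][n];
        for (int i = 0; i < n; i++)
            for (int j = 0; j < n; j++)
                m[i][j] = (i * 7 + j * 3 + seed) % 10;
        return m;
    }

    public static void main(String[] args) {
        double[][] a = sample(5, 1), b = sample(5, 2);
        System.out.println(Arrays.deepEquals(multiply(a, b), multiplyTiled(a, b, 2)));
    }
}
```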

For parallel languages, CUDA lowered the barrier to GPU programming for C and C++ developers. However, the fundamental differences between GPUs and CPUs still keep development costs high: developers must learn many low-level hardware details, and high-level languages such as Java face a large gap between their programming model and the underlying hardware model.

In the Java field, AMD presented its Sumatra project as early as the 2014 JVM Technology Summit, in an attempt to let the JVM interact with target hardware based on the Heterogeneous System Architecture (HSA). More recently, the TornadoVM project from the University of Manchester provides a JIT compiler (mapping Java bytecode to OpenCL), an optimized runtime engine, and a memory manager that keeps the Java heap and the heterogeneous device heap consistent. TornadoVM aims to let developers write heterogeneous parallel programs without knowing GPU programming languages or GPU architecture, and it runs transparently on AMD GPUs, NVIDIA GPUs, Intel integrated GPUs, and multi-core CPUs.

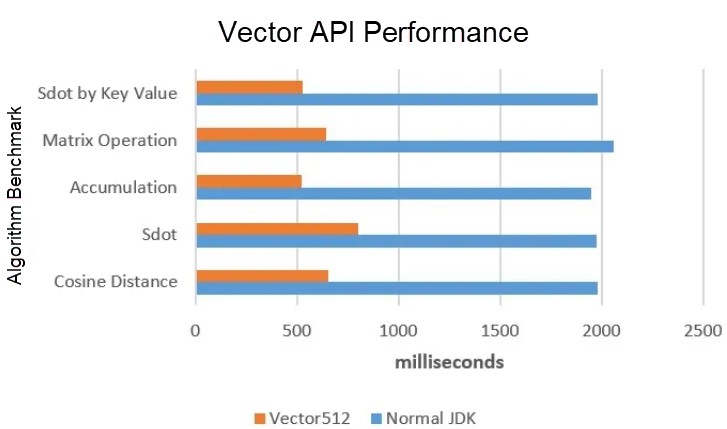

In the general-purpose CPU field, the OpenJDK community's Vector API project (a subproject of Panama) can speed up computation several-fold by using the CPU's single instruction, multiple data (SIMD) instructions. The Vector API is also widely used in big data and AI computing. The Alibaba JVM team has ported the Vector API to AJDK 11 and will open-source it in Alibaba Dragonwell. We obtained the following basic performance data.

The shorter the time (in milliseconds), the better the performance.

Polyglot Programming Connecting Multi-language Ecosystems

Polyglot programming is not a new concept. In the managed runtime field, IBM open-sourced Open Managed Runtime (OMR) in 2017, and Oracle open-sourced the Truffle and Graal technologies in 2018. OMR and Graal let developers build languages at much lower cost. OMR provides GC, JIT, and reliability, availability, and serviceability (RAS) as C and C++ components, and developers build high-performance languages by "gluing" these components together. Truffle is a Java framework for implementing a new language as an abstract syntax tree (AST) interpreter; essentially, it maps the new language onto the JVM world. Languages such as Scala and JRuby are built on the JVM ecosystem and ultimately run as Java bytecode, whereas OMR and Truffle provide production-grade GC, JIT, and RAS support as reusable building blocks, so newly developed languages do not need to re-implement these underlying technologies.
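The AST-interpreter style that Truffle builds on can be sketched in plain Java. The example below is deliberately not the Truffle API: it is a hypothetical tiny expression language whose program is a tree of nodes, each with an `execute` method. Truffle languages are also structured as trees of executable nodes, but Truffle additionally specializes nodes at run time and has Graal partially evaluate the tree into machine code, which this sketch does not attempt.

```java
import java.util.HashMap;
import java.util.Map;

// A tiny AST interpreter in plain Java (no Truffle dependency).
public class TinyAst {

    interface Node {
        long execute(Map<String, Long> env);
    }

    static final class Lit implements Node {
        final long value;
        Lit(long value) { this.value = value; }
        public long execute(Map<String, Long> env) { return value; }
    }

    static final class Var implements Node {
        final String name;
        Var(String name) { this.name = name; }
        public long execute(Map<String, Long> env) {
            Long v = env.get(name);
            if (v == null) throw new IllegalStateException("unbound variable: " + name);
            return v;
        }
    }

    static final class Add implements Node {
        final Node left, right;
        Add(Node left, Node right) { this.left = left; this.right = right; }
        public long execute(Map<String, Long> env) { return left.execute(env) + right.execute(env); }
    }

    static final class Mul implements Node {
        final Node left, right;
        Mul(Node left, Node right) { this.left = left; this.right = right; }
        public long execute(Map<String, Long> env) { return left.execute(env) * right.execute(env); }
    }

    // Builds and evaluates the program (x + 2) * 10 for a given x.
    static long example(long x) {
        Node program = new Mul(new Add(new Var("x"), new Lit(2)), new Lit(10));
        Map<String, Long> env = new HashMap<>();
        env.put("x", x);
        return program.execute(env);
    }

    public static void main(String[] args) {
        System.out.println(example(5)); // prints 70
    }
}
```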

In the industry, domain-specific languages (DSLs) are being migrated to these technologies. For example, Goldman Sachs is working with the Graal community to migrate its DSL to Graal. In addition, projects that connect to other language ecosystems (such as Ruby, OMR, Python, GraalVM JavaScript, and GraalWasm) are developing rapidly.

Graal has been supported since AJDK 8, and mature technologies such as GraalVM JavaScript have been applied to Alibaba's internal businesses.

Over the years, Java has proliferated at Alibaba, where many applications are written in Java: approximately 10,000 Java developers have written more than a billion lines of Java code. Alibaba has customized most of its Java software on top of the vibrant open-source ecosystem. These Java programs support online trading, payments, and logistics operations, and many of them run as microservices in Kubernetes-native environments to serve online requests.

At Alibaba, we use GraalVM's Native Image technology to statically compile microservice applications into ELF executables, giving Java applications the startup times of native code. This fast startup is needed to meet the horizontal scaling challenge of microservice and serverless deployments.
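A minimal way to try this locally is to compile a small class with the `native-image` tool and compare it with the JVM run. The sketch assumes a GraalVM installation with `native-image` on the `PATH`; the class name and output name are illustrative. It relies on the system property `org.graalvm.nativeimage.imagecode`, which Native Image sets to `"runtime"` inside a built executable and which a regular JVM leaves unset, and it prints a coarse time from process start to `main`.

```java
import java.time.Duration;
import java.time.Instant;

// Build steps (assuming GraalVM with native-image on the PATH):
//   javac StartupProbe.java
//   native-image -o startup-probe StartupProbe
//   ./startup-probe
public class StartupProbe {

    // Native Image sets this property to "buildtime" while building the image and
    // "runtime" inside the resulting executable; a regular JVM leaves it unset.
    static boolean inNativeImage() {
        return "runtime".equals(System.getProperty("org.graalvm.nativeimage.imagecode"));
    }

    public static void main(String[] args) {
        String mode = inNativeImage() ? "native image" : "JVM";
        // Coarse time from process start to main(); the OS-reported start time may be imprecise.
        ProcessHandle.current().info().startInstant().ifPresentOrElse(
                start -> System.out.println(mode + ", time to main: "
                        + Duration.between(start, Instant.now()).toMillis() + " ms"),
                () -> System.out.println(mode + ", process start time unavailable"));
    }
}
```

Running `java StartupProbe` and `./startup-probe` one after the other gives a rough feel for the startup gap; a real comparison should measure the full application, including framework initialization.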

In our scenario, the serverless application is built on the SOFABoot framework. Its fat JAR is more than 120 MB and includes many typical Java enterprise components, such as Spring, Spring Boot, Tomcat, and MySQL Connector. We refer to applications built on this framework as SOFABoot applications. SOFABoot applications are designed for a distributed architecture and originally ran on Alibaba Dragonwell (based on OpenJDK); they handle online transactions and communicate with many other applications through RPC.

During the global online shopping festival (also called Double 11, or Nov 11), we deployed a number of SOFABoot applications compiled as native images. They served real online requests in our production environment on the day with the highest transaction volume.

Besides SOFABoot applications, we have also explored bringing statically compiled applications to Alibaba Cloud: we successfully deployed a native image version of the Micronaut demo application on Alibaba Cloud Function Compute.

In this article, we describe the challenges we overcame in using GraalVM Native Image for static compilation to achieve these performance gains in our production environment.
