Bin Fu, a data technology expert at Alibaba Cloud, gave a live talk titled "N Methods for Migrating Data to MaxCompute" in the MaxCompute developer exchange group on DingTalk in 2018. In the talk, he introduced a big data combat demo system developed internally by Alibaba Cloud, which processes both offline and real-time big data automatically and end to end, and he demonstrated how to use it. This article is based on that talk.

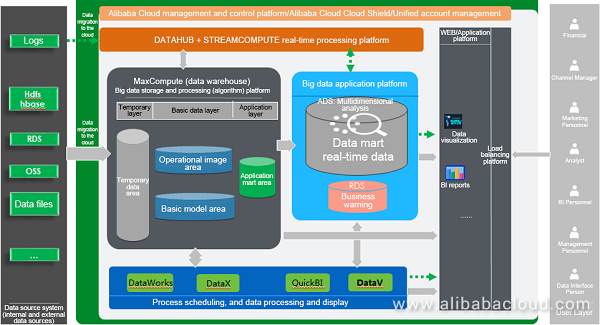

The preceding figure shows the overall architecture of the big data combat demo system, which migrates data to the cloud in offline mode. In order to implement applications, the architecture of the big data combat demo system must include a data source system, a data warehouse, a big data application system platform, a web/application platform, process scheduling, data processing and display, and a real-time processing platform. The data warehouse consists of the temporary layer, basic data layer, and application layer.

Within this architecture, migrating data to the cloud has the highest priority. The migration method depends on the data source.

First, data is uploaded to MaxCompute, a big data storage and processing platform. Then, data is processed and transmitted to the temporary layer. After simple conversion, data is then transmitted to the basic data layer. Finally, data is aggregated at the application layer to provide services.

In this architecture, processes are scheduled through DataWorks and DataX, and real-time data is processed through DataHub + StreamCompute. The processed data is stored on Relational Database Service (RDS) of the big data application platform and displayed through QuickBI and DataV.

There are many methods for migrating data to the cloud. Typical MaxCompute built-in tools for migrating data to the cloud include Tunnel, DataX, and DataWorks:

Then, data can be migrated to MaxCompute.
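As an illustration of the DataX route, the sketch below builds a job description that copies a relational table into a MaxCompute table. It is a minimal sketch, not the configuration used in the talk: the reader and writer names (`mysqlreader`, `odpswriter`) and their parameter keys follow the open-source DataX plugin layout as commonly documented and should be checked against the plugin documentation, and every host, project, table, and credential value is a hypothetical placeholder.

```python
import json


def build_datax_job(source_table, target_table, columns, partition, channels=2):
    """Build a DataX-style job dict copying a MySQL table into MaxCompute.

    All connection values are hypothetical placeholders. The parameter keys
    are an assumption based on the open-source DataX plugins.
    """
    reader = {
        "name": "mysqlreader",
        "parameter": {
            "username": "<source-user>",
            "password": "<source-password>",
            "column": columns,
            "connection": [
                {
                    "table": [source_table],
                    "jdbcUrl": ["jdbc:mysql://<source-host>:3306/<source-db>"],
                }
            ],
        },
    }
    writer = {
        "name": "odpswriter",
        "parameter": {
            "project": "<maxcompute-project>",
            "table": target_table,
            "partition": partition,
            "column": columns,
            # Overwrite the target partition so the job can be rerun safely.
            "truncate": True,
            "accessId": "<access-id>",
            "accessKey": "<access-key>",
            "odpsServer": "<maxcompute-endpoint>",
        },
    }
    return {
        "job": {
            # Number of parallel channels used for the copy.
            "setting": {"speed": {"channel": channels}},
            "content": [{"reader": reader, "writer": writer}],
        }
    }


if __name__ == "__main__":
    job = build_datax_job(
        source_table="store_sales",
        target_table="tmp_store_sales",
        columns=["ss_item_sk", "ss_quantity"],
        partition="ds=20180101",
    )
    print(json.dumps(job, indent=2))
```

Generating the job file from code keeps the column list identical on the reader and writer sides, which is an easy thing to get wrong when such files are edited by hand.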

Logstash is a simple and powerful distributed log collection framework. It is often combined with Elasticsearch and Kibana to form the well-known ELK stack, which is well suited to analyzing log data. Alibaba Cloud StreamCompute provides a DataHub output/input plug-in for Logstash to help users collect more data on DataHub. With Logstash, users can easily access more than 30 data sources from the Logstash open source community. Logstash also supports filters that customize how transmitted fields are processed, among other functions.
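The input, filter, output idea behind such a collection pipeline can be sketched in a few lines. The example below is plain Python for illustration only: it is not Logstash configuration syntax and does not use the DataHub plug-in. The log line layout, the field names, and the `/health` rule are all hypothetical.

```python
import re
from typing import Iterable, List, Optional

# Hypothetical access-log layout: "<ip> <ISO timestamp> <method> <path> <status>"
LINE_PATTERN = re.compile(
    r"(?P<client_ip>\S+) (?P<timestamp>\S+) (?P<method>[A-Z]+) "
    r"(?P<path>\S+) (?P<status>\d{3})"
)


def parse_line(line: str) -> Optional[dict]:
    """Input stage: turn one raw log line into named fields, or None if malformed."""
    match = LINE_PATTERN.fullmatch(line.strip())
    return match.groupdict() if match else None


def apply_filter(event: dict) -> Optional[dict]:
    """Filter stage: drop health checks, rename and cast the fields to keep."""
    if event["path"] == "/health":
        return None
    return {
        "client_ip": event["client_ip"],
        "event_time": event["timestamp"],
        "path": event["path"],
        "status": int(event["status"]),
    }


def collect(lines: Iterable[str]) -> List[dict]:
    """Run every line through parse and filter, returning records ready to ship."""
    records = []
    for line in lines:
        event = parse_line(line)
        if event is None:
            continue  # skip lines that do not match the expected layout
        record = apply_filter(event)
        if record is not None:
            records.append(record)
    return records


if __name__ == "__main__":
    sample = [
        "10.0.0.1 2018-06-01T12:00:00Z GET /index.html 200",
        "10.0.0.2 2018-06-01T12:00:01Z GET /health 200",
        "not a log line",
    ]
    for record in collect(sample):
        print(record)
```

In a real deployment the output stage would hand each record to the stream service rather than collect them in a list.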

Data Transmission Service (DTS) supports data transmission among data sources such as relational databases, NoSQL, and big data (OLAP). It integrates data migration, data subscription, and real-time data synchronization. Compared to third-party data streaming tools, DTS can provide more diversified, high-performance, and highly secure and reliable transmission links. It also offers plenty of convenient functions that greatly facilitate the creation and management of transmission links.

DTS is dedicated to resolving difficulties of long-distance and millisecond-level asynchronous data transmission in public cloud and hybrid cloud scenarios. With the remote multi-active infrastructure that supports the Alibaba Double 11 Shopping Carnival as its underlying data streaming infrastructure, DTS provides real-time data streams for thousands of downstream applications. It has been running online stably for three years.

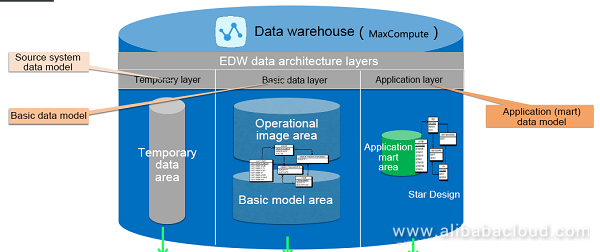

When enterprise data models are implemented across the data architecture, each layer follows its own specifications. After being uploaded to MaxCompute, data is processed and written to the temporary layer, converted with simple transformations into the basic data layer, and finally aggregated at the application layer.

The temporary layer uses the same model as the source system, with unified naming rules. It stores only temporary data and supports extract, transform, and load (ETL) processing. The basic data layer uses a third normal form (3NF) design. It is intended for data integration and long-term storage of historical data, holds detailed data and general summary data, supports ad hoc queries, and provides data sources for the application layer. The application layer is modeled in denormalized or star/snowflake form for one or several applications, and it serves report queries and data mining.
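The three layers can be illustrated on a toy scale. The sketch below uses Python's built-in `sqlite3` in place of MaxCompute purely so that it runs anywhere. The table names (`tmp_sales`, `base_item`, `base_sales`, `app_category_daily`) and columns are hypothetical and are not taken from the demo system.

```python
import sqlite3


def build_layers(conn, source_rows):
    """Load source rows into a temporary layer, then derive the other two layers."""
    cur = conn.cursor()

    # Temporary layer: same shape as the source extract, no modeling applied.
    cur.execute(
        "CREATE TABLE tmp_sales (order_id INTEGER, item_name TEXT, "
        "category TEXT, quantity INTEGER, sold_date TEXT)"
    )
    cur.executemany("INSERT INTO tmp_sales VALUES (?, ?, ?, ?, ?)", source_rows)

    # Basic data layer: normalized, item attributes are stored only once.
    cur.execute(
        "CREATE TABLE base_item (item_id INTEGER PRIMARY KEY, "
        "item_name TEXT UNIQUE, category TEXT)"
    )
    cur.execute(
        "CREATE TABLE base_sales (order_id INTEGER PRIMARY KEY, "
        "item_id INTEGER REFERENCES base_item(item_id), "
        "quantity INTEGER, sold_date TEXT)"
    )
    cur.execute(
        "INSERT INTO base_item (item_name, category) "
        "SELECT DISTINCT item_name, category FROM tmp_sales"
    )
    cur.execute(
        "INSERT INTO base_sales "
        "SELECT t.order_id, i.item_id, t.quantity, t.sold_date "
        "FROM tmp_sales t JOIN base_item i ON i.item_name = t.item_name"
    )

    # Application layer: denormalized summary table built for one report.
    cur.execute(
        "CREATE TABLE app_category_daily AS "
        "SELECT s.sold_date, i.category, SUM(s.quantity) AS total_quantity "
        "FROM base_sales s JOIN base_item i ON i.item_id = s.item_id "
        "GROUP BY s.sold_date, i.category"
    )
    conn.commit()


def category_report(conn):
    """Read the report directly from the application layer."""
    return conn.execute(
        "SELECT sold_date, category, total_quantity "
        "FROM app_category_daily ORDER BY sold_date, category"
    ).fetchall()


if __name__ == "__main__":
    rows = [
        (1, "pen", "office", 3, "2018-06-01"),
        (2, "pencil", "office", 2, "2018-06-01"),
        (3, "apple", "food", 5, "2018-06-01"),
        (4, "pen", "office", 1, "2018-06-02"),
    ]
    connection = sqlite3.connect(":memory:")
    build_layers(connection, rows)
    for line in category_report(connection):
        print(line)
```

The report query never touches the temporary or basic tables, which is the point of the layering: applications read only from tables shaped for them.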

Data sources are generated as follows:

Download the TPC-DS tool from the TPC official website. Use the downloaded tool to generate TPC-DS data files, and then generate HDFS, HBase, OSS, and RDS data sources as follows:
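Whichever store the files end up in, the generated rows have to be parsed first. The following is a minimal sketch under one assumption: that the generator writes one row per line with `|` as the field delimiter and, by default, a trailing delimiter at the end of each line. The column list in the example is an illustrative subset chosen for the demonstration, not the full table definition.

```python
from typing import Dict, Iterable, Iterator, List, Optional


def parse_delimited_rows(
    lines: Iterable[str], column_names: List[str], delimiter: str = "|"
) -> Iterator[Dict[str, Optional[str]]]:
    """Yield one dict per data line, mapping empty fields to None."""
    for line_number, line in enumerate(lines, start=1):
        line = line.rstrip("\r\n")
        if not line:
            continue  # ignore blank lines
        fields = line.split(delimiter)
        # A trailing delimiter produces one extra empty field; drop it.
        if len(fields) == len(column_names) + 1 and fields[-1] == "":
            fields.pop()
        if len(fields) != len(column_names):
            raise ValueError(
                f"line {line_number}: expected {len(column_names)} fields, "
                f"got {len(fields)}"
            )
        yield {
            name: (value if value != "" else None)
            for name, value in zip(column_names, fields)
        }


if __name__ == "__main__":
    # Illustrative columns and rows only.
    columns = ["i_item_sk", "i_item_id", "i_product_name", "i_brand"]
    sample = [
        "1|AAAAAAAABAAAAAAA|pen||\n",
        "2|AAAAAAAACAAAAAAA|pencil|acme|\n",
    ]
    for row in parse_delimited_rows(sample, columns):
        print(row)
```

Mapping empty fields to `None` keeps missing values distinguishable from empty strings when the rows are later written to a typed table.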

The design of the directory structure and naming rules for data migration to the cloud tasks must meet the following requirements:

Note that task types and naming rules vary in script mode and wizard mode.

The development of data migration to the cloud tasks consists of four modules: data source configuration, task development in script mode, task development in wizard mode, and task scheduling attribute configuration. The specific procedures of these four modules are as follows:


Raja_KT March 11, 2019 at 4:22 am

Nice. The high-level architecture is fine, but organizations need optimization at every low level, as the solutions aggregate into the best-optimized system. Performance tuning is needed at many micro-levels: Realtime Compute, Dataphin, analytics choices, DLA, and plenty of other solutions.