Summary: At the Alibaba Cloud MaxCompute session of the 2017 Computing Conference in Hangzhou, Wang Chaoqun, Data Director of ZhongAn Insurance, delivered a speech on how MaxCompute empowers the business expansion of ZhongAn Insurance. This article first introduces the advantages of MaxCompute, then presents the benefits that big data brings to company management, and finally analyzes how ZhongAn Insurance built its data platform, including task scheduling, metadata, and data quality monitoring.

The highlights of the speech are as follows:

As the first Internet insurance company in China, ZhongAn Insurance has used MaxCompute as its computing platform since its founding.

In the early stage, we weighed building our own platform against adopting MaxCompute on five criteria: robustness, interaction with application systems, scalability, data security, and cost effectiveness.

Robustness: 24/7 service and exception recovery time

Interaction with application systems: efficiency and cost of data source acquisition and data delivery

Scalability: elastic computing when data grows exponentially

Data security: protection against exceptional data attacks, multiple sandbox protection, and permission systems

Cost: comparison between a self-built platform and MaxCompute

First, in 2013 few computing platforms offered complete computing capabilities. MaxCompute, which was built for and proven in the production systems of Alibaba Finance, was a good choice: it supported computing across more than 5,000 machines, which met our requirements for both auto scaling and scalability. Second, ZhongAn could rely on the professional expertise of Alibaba Cloud, which holds a leading position in China's computing market. Finally, MaxCompute is more than a computing platform: it also provides analysis and mining tools, as well as ready-to-use IDE development tools (DataWorks, Studio), which reduced our development cost in the initial processing and development phase.

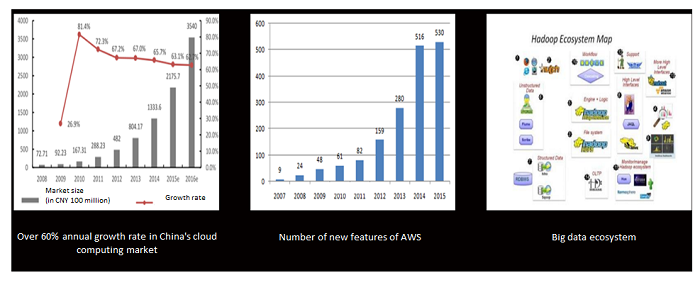

The preceding figure shows how the overall ecosystem of cloud computing and big data has developed. With China's cloud computing market growing by more than 60% a year and AWS releasing a considerable number of new features, cloud computing is increasingly present in our daily lives. In the ten years since the birth of Hadoop, cloud computing products have been greatly enriched and the ecosystem has grown steadily larger.

Big data is strong not only in its tools, platforms, and ecosystems, but also in the way people and scenarios drive the development of the ecosystem. More than 10,000 employees of the Alibaba Group use MaxCompute in their daily work. As more people are empowered to use it, the rich feedback data they generate diversifies big data scenarios. Over ten years of development, the investment in manpower and resources and the positive returns it produced have reinforced each other in the big data sector, forming a closed loop.

ZhongAn is an insurance-centered company that builds cross-ecosystem connections and cooperates with partners in different sub-sectors, including e-commerce, 3C, and autos. Our products interconnect these ecosystem partners and increase our contact with users. By cooperating with more than 300 ecosystem partners, we have accumulated a wealth of user data. We hope ZhongAn can support these ecosystems and expand its own open platform through the accumulation of data, customers, and brand.

By the end of 2016, we had served 492 million users with 7.2 billion insurance policies, providing the initial guarantee for the new generation of Internet companies in China. People under 30 account for approximately 50% of our users, which indicates that ZhongAn Insurance represents a new idea and way of life. These users also have strong earning power, and they are more aware of insurance and more receptive to it. They will be the main force of consumption in the future.

Behind each of these numbers lies the effort of all our staff. So what does the data platform, built on MaxCompute, actually do? How does it support the rapid development of the business?

The data platform consists of platform tools, data monitoring, and data services. The data itself is multi-source and heterogeneous, and its value lies in its mobility and openness: value is generated only after the data has been processed, inspected, and delivered to users. The platform tools include MaxCompute, data synchronization, task scheduling, and compute and storage management. Data monitoring covers early-warning systems, metadata, data lineage (kinship), and data quality. Data services include data portals, self-service data acquisition, and service APIs.

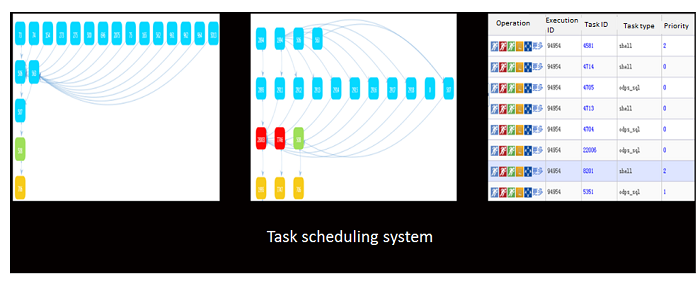

Task scheduling is essentially about driving the data processing workflow through its states to completion. Data processing is a multi-step process. To ensure that data is processed in the correct order, we support scheduling by different periods, such as daily, weekly, and monthly, as well as group priorities, hourly tasks, and user-defined schedules. More than 10,000 tasks run every day.
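
As an illustration of period-based scheduling with priorities, the following is a minimal Python sketch. It is not ZhongAn's scheduler: the `Task` fields, the task names, and the convention that a higher `priority` runs first are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass(frozen=True)
class Task:
    """Hypothetical task definition; field names are illustrative only."""
    name: str
    period: str          # "day", "week", or "month"
    priority: int = 0    # assumption: higher priority is dispatched first
    weekday: int = 0     # weekly tasks: 0 = Monday ... 6 = Sunday
    month_day: int = 1   # monthly tasks: day of the month


def is_due(task: Task, run_date: date) -> bool:
    """Decide whether a task should run on the given date."""
    if task.period == "day":
        return True
    if task.period == "week":
        return run_date.weekday() == task.weekday
    if task.period == "month":
        return run_date.day == task.month_day
    raise ValueError(f"unknown period: {task.period}")


def tasks_for(tasks: List[Task], run_date: date) -> List[Task]:
    """Return the tasks due on run_date, highest priority first."""
    due = [t for t in tasks if is_due(t, run_date)]
    return sorted(due, key=lambda t: (-t.priority, t.name))


if __name__ == "__main__":
    tasks = [
        Task("daily_policy_load", "day", priority=1),
        Task("weekly_report", "week", priority=5, weekday=0),
        Task("monthly_close", "month", priority=9, month_day=1),
    ]
    for t in tasks_for(tasks, date(2017, 10, 2)):
        print(t.name)
```

A real scheduler would also have to respect dependencies between tasks; this sketch only covers the period and priority aspects.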

The task schedule is a directed graph, and each node exposes a lot of information about its source data. Red indicates an error state, blue indicates success, green indicates running, and yellow indicates that the task exists. Because different processing flows draw on multiple data sources, failures can be confusing to diagnose. If a result is wrong, does the error come from the task itself, or is it caused by an upstream data source? How can developers locate the problem faster, reduce development cost, and give a unified explanation? We solve this problem with metadata.
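
The question of whether an error originates in the task itself or upstream can be framed as a walk over the directed graph. The sketch below is one possible way to do it in Python, assuming a hypothetical `upstream` mapping from each task to the tasks it depends on and a `state` mapping with the value `"error"` for failed tasks. It only follows chains of failed tasks, which is a simplification.

```python
from typing import Dict, List, Set


def root_failures(task: str,
                  upstream: Dict[str, List[str]],
                  state: Dict[str, str]) -> Set[str]:
    """Return the failed tasks at the top of every failure chain above `task`.

    A failed task with no failed upstream is treated as a root cause.
    If `task` itself is not in the "error" state, the result is empty.
    """
    roots: Set[str] = set()
    seen: Set[str] = set()
    stack = [task]
    while stack:
        node = stack.pop()
        if node in seen:
            continue
        seen.add(node)
        if state.get(node) != "error":
            continue  # only failed tasks can be root causes
        failed_parents = [p for p in upstream.get(node, [])
                          if state.get(p) == "error"]
        if failed_parents:
            stack.extend(failed_parents)  # the failure was inherited
        else:
            roots.add(node)               # the failure starts here
    return roots


if __name__ == "__main__":
    # Hypothetical task names, for illustration only.
    upstream = {
        "report": ["orders_clean", "users_clean"],
        "orders_clean": ["orders_raw"],
        "users_clean": ["users_raw"],
    }
    state = {
        "report": "error",
        "orders_clean": "error",
        "orders_raw": "error",
        "users_clean": "success",
        "users_raw": "success",
    }
    print(root_failures("report", upstream, state))
```

In this example the failing report is traced back to `orders_raw`, so the developer knows to look at the upstream source rather than at the report task.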

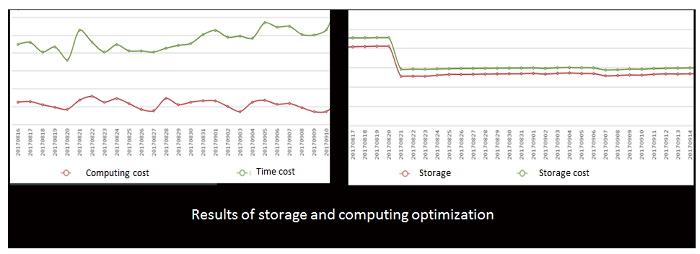

Metadata breaks two kinds of barriers: the barriers between data, which helps with model optimization and exception location, and the barriers between data and people, which helps with cost optimization. The data relationships it records include data dictionary information, lineage (kinship) information, storage and output information, table owner information, and business metadata information. Together they drive the optimization of storage and computing, which reduces the cost of using MaxCompute.

The left diagram shows basic information about the data, along with data output information and lineage. The right diagram shows which source tables a table is built from and which downstream tables will be affected by its output. With this information, we can break the barriers between data, as well as the barriers between people and data.
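
To make the idea of source tables and affected downstream tables concrete, here is a small Python sketch of a lineage lookup. The table names and the edge-list representation are hypothetical; a real metadata system would derive the edges from job definitions or SQL parsing.

```python
from collections import defaultdict, deque
from typing import Dict, Iterable, Set, Tuple


def build_lineage(edges: Iterable[Tuple[str, str]]):
    """Build parent and child maps from (source_table, target_table) pairs."""
    parents: Dict[str, Set[str]] = defaultdict(set)
    children: Dict[str, Set[str]] = defaultdict(set)
    for src, dst in edges:
        children[src].add(dst)
        parents[dst].add(src)
    return parents, children


def reachable(start: str, neighbors: Dict[str, Set[str]]) -> Set[str]:
    """Breadth-first search: every table reachable from `start`."""
    seen: Set[str] = set()
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in neighbors.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


if __name__ == "__main__":
    # Hypothetical tables, for illustration only.
    edges = [
        ("ods_policy", "dwd_policy"),
        ("ods_user", "dwd_policy"),
        ("dwd_policy", "ads_claims_report"),
        ("dwd_policy", "ads_user_profile"),
    ]
    parents, children = build_lineage(edges)
    print("sources of dwd_policy:", sorted(reachable("dwd_policy", parents)))
    print("affected by ods_user:", sorted(reachable("ods_user", children)))
```

Passing the parent map answers "where does this table come from", and passing the child map answers "which tables are affected if this one changes".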

After storage was optimized, cost fell by 30%. Optimizing storage and computing reduces invalid storage and improves computing efficiency.

Data quality monitoring is embedded, in slice mode, in the execution of the task itself, so that each task checks itself and determines its own state. The accuracy of the data is verified against rules and templates, and when verification fails, downstream tasks use 0 KB of the data. This avoids data contamination and exposes errors at the task where they occur, independently of downstream data. Its main characteristics are as follows: it uses the counter collection function of MaxCompute; its rules are counter rules at the table and field levels; a template is a combination of rules, periods, and statistical functions; after-process monitoring is replaced by in-process monitoring; customization by users is supported; key tasks are covered; and the coverage rate reaches 30%.
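
The in-process check can be sketched as a wrapper that runs a task, inspects the counters it reports, and raises before any downstream task can consume the output. This is only an illustration in Python: the counters are a plain dictionary standing in for whatever the platform collects, and `CounterRule`, `DataQualityError`, and the counter names are hypothetical, not part of any MaxCompute API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class CounterRule:
    """Hypothetical rule: a named predicate over one collected counter."""
    name: str
    counter: str                      # e.g. "row_count" (table level)
    check: Callable[[float], bool]    # returns True when the value is valid


class DataQualityError(Exception):
    """Raised when a task's own output fails its quality rules."""


def run_with_checks(task: Callable[[], Dict[str, float]],
                    rules: List[CounterRule]) -> Dict[str, float]:
    """Run the task, then validate its counters before anything downstream runs."""
    counters = task()
    failed = [r.name for r in rules
              if not r.check(counters.get(r.counter, 0))]
    if failed:
        # Failing here keeps bad data from reaching downstream tasks.
        raise DataQualityError(", ".join(failed))
    return counters


if __name__ == "__main__":
    rules = [
        CounterRule("table_not_empty", "row_count", lambda v: v > 0),
        CounterRule("policy_id_not_null", "null_count.policy_id",
                    lambda v: v == 0),
    ]

    def load_policies() -> Dict[str, float]:
        # Stand-in for a real job; returns made-up counter values.
        return {"row_count": 1200, "null_count.policy_id": 0}

    print(run_with_checks(load_policies, rules))
```

Because the check runs inside the task's own execution, a failure surfaces at the task that produced the bad data rather than in a report several steps later.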

What do we need to consider when data is consumed? Data needs to be opened up and circulated, but what should we watch for along the way? Data leakage and insecurity can both bring disaster to a company.

Technically, we grant different access levels based on ACLs and role management. We enforce graded permission control, mask sensitive information, and have established an encrypted approval process for secret-related information. Openness and security rest on technical control and process control, and on the data that each role actually requires. Security control is the basis of openness, process management is the key to it, and we keep a balance between the two.
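
A rough Python sketch of graded permissions with masking follows. The level names, roles, column classifications, and the masking format are all assumptions for illustration; the approval process is reduced to a set of column names that have already been approved for the caller.

```python
from enum import IntEnum
from typing import Any, Dict, FrozenSet


class Level(IntEnum):
    """Hypothetical sensitivity grades, lowest to highest."""
    PUBLIC = 0
    INTERNAL = 1
    SENSITIVE = 2
    SECRET = 3


# Illustrative role clearances and column classifications.
ROLE_CLEARANCE = {"analyst": Level.INTERNAL, "risk_officer": Level.SENSITIVE}
COLUMN_LEVEL = {"policy_id": Level.PUBLIC, "premium": Level.INTERNAL,
                "phone": Level.SENSITIVE}


def mask(value: str) -> str:
    """Hide all but the last four characters."""
    if len(value) <= 4:
        return "*" * len(value)
    return "*" * (len(value) - 4) + value[-4:]


def read_row(row: Dict[str, Any], role: str,
             approved: FrozenSet[str] = frozenset()) -> Dict[str, Any]:
    """Return the row as the given role may see it.

    Unknown roles get PUBLIC clearance and unknown columns are treated
    as SECRET, so the defaults err on the side of security.
    """
    clearance = ROLE_CLEARANCE.get(role, Level.PUBLIC)
    visible = {}
    for column, value in row.items():
        level = COLUMN_LEVEL.get(column, Level.SECRET)
        if level <= clearance or column in approved:
            visible[column] = value
        else:
            visible[column] = mask(str(value))
    return visible


if __name__ == "__main__":
    row = {"policy_id": "P001", "premium": 120, "phone": "13800001234"}
    print(read_row(row, "analyst"))
    print(read_row(row, "analyst", approved=frozenset({"phone"})))
```

Defaulting unknown columns to the most restrictive level reflects the idea that security control is the basis of openness: data is opened deliberately, not by omission.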

Building a data platform means passing through three stages, usability, ease of use, and adaptability, and the system needs multiple iterations to be upgraded along the way. Data is a service, and we need to satisfy users' different data requirements. Data is also infrastructure, and every company faces the task of building and using a data platform.

The richness of the MaxCompute ecosystem, its sharing of resources and tools, and its in-depth research on and support for mining algorithms are strong enough to meet our requirements, which leaves us more time to engage with users and create value for them. The cost of MaxCompute is also decreasing gradually. In the future, we hope MaxCompute can provide more kinds of support, including UDF resource libraries such as IP libraries, as well as Python algorithm packages for mining and artificial intelligence platform support.
