This is the second and concluding part of the blog Low-Latency Distributed Messaging with RocketMQ. We will shed light on the three keys to guaranteeing capacity, the architecture of RocketMQ, and the future of Alibaba Cloud's messaging engine.

Low-latency optimization does not necessarily guarantee that a messaging engine is free of latency. To secure a smooth experience for applications, the messaging engine also requires flexible, capacity-based planning. How can we make the system respond to overwhelming traffic peaks with ease? To tackle this concern, the three keys to guaranteeing capacity, namely degradation, traffic limiting, and fusing, must be addressed. Resources for core services are guaranteed at the cost of degrading or suspending marginal services and components. The system's priority is to avoid failing under a burst of traffic.

From the perspective of architectural stability, with limited resources, the service capability that a system can provide per unit of time is also limited. If the load exceeds this capacity, the entire service may halt and the application may crash. The risk may then transfer to service callers and make services unavailable across the whole system, eventually resulting in an application avalanche. Additionally, according to queuing theory, the average response time of a service rises rapidly as the number of requests increases. To meet service SLAs, it is necessary to control the number of requests per unit of time. This is why traffic limiting is so crucial.

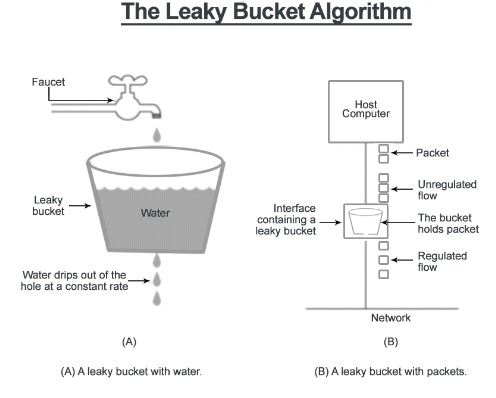

Traffic limiting is known in the academic world as traffic shaping. It originated in the field of network communication, and its typical algorithms are the leaky bucket and the token bucket, explained below.

Figure 1.

The basic idea of the leaky bucket algorithm is a bucket with a leak from which water drips at a constant rate, and a tap above it from which water pours into the bucket. If water pours in faster than it drips out, the bucket eventually overflows. The overflow corresponds to request overload.
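The leaky bucket can be sketched in a few lines. This is a minimal illustration, not RocketMQ code: the class name `LeakyBucket`, its parameters, and the explicit `now` timestamp (passed in so the behavior is deterministic) are all assumptions made for the example.

```python
class LeakyBucket:
    """Hypothetical leaky bucket: water drains at a fixed rate, overflow is rejected."""

    def __init__(self, capacity: float, leak_rate: float):
        self.capacity = capacity    # maximum amount of water the bucket holds
        self.leak_rate = leak_rate  # units of water drained per second
        self.water = 0.0            # current water level
        self.last = 0.0             # timestamp of the previous call

    def offer(self, now: float, amount: float = 1.0) -> bool:
        # Drain whatever has leaked out since the previous call.
        leaked = (now - self.last) * self.leak_rate
        self.water = max(0.0, self.water - leaked)
        self.last = now
        if self.water + amount > self.capacity:
            return False            # bucket would overflow: request overload
        self.water += amount
        return True
```

With a capacity of 2 and a leak rate of 1 per second, two requests arriving at the same instant are accepted, a third is rejected, and another is accepted one second later once a unit of water has drained.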

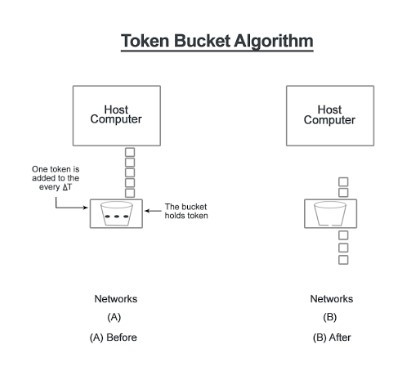

The token bucket algorithm also relates to a bucket, but here tokens enter the bucket at a constant rate, and there is a limit to the number of tokens the bucket can hold. Each request acquires a token. If there are no tokens left in the bucket when a request arrives, request overload results. This happens when a sudden surge in requests drains the tokens faster than they are replenished.
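A token bucket can be sketched in the same style. Again, this is only an illustration under stated assumptions: `TokenBucket`, `refill_rate`, and the explicit `now` argument are hypothetical names chosen for the example, and the bucket is assumed to start full.

```python
class TokenBucket:
    """Hypothetical token bucket: tokens refill at a fixed rate up to a cap."""

    def __init__(self, capacity: float, refill_rate: float):
        self.capacity = capacity        # maximum number of tokens in the bucket
        self.refill_rate = refill_rate  # tokens added per second
        self.tokens = capacity          # assumption: the bucket starts full
        self.last = 0.0                 # timestamp of the previous call

    def try_acquire(self, now: float, tokens: float = 1.0) -> bool:
        # Add the tokens that accumulated since the previous call, up to the cap.
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_rate)
        self.last = now
        if self.tokens >= tokens:
            self.tokens -= tokens
            return True
        return False                    # no token left: request overload
```

Because unused tokens accumulate up to the cap, a short burst of up to `capacity` requests is accepted at once, after which requests are admitted only at the refill rate.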

Figure 2.

In essence, a leaky bucket, a token bucket, or any variant of these algorithms is a form of traffic limiting that controls the rate of traffic. There are also traffic limiting modes that control concurrency, such as semaphores in an operating system and Semaphore in the JDK.
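Concurrency-based limiting can be illustrated with a semaphore. The sketch below uses Python's standard `threading.BoundedSemaphore` as a stand-in for the JDK `Semaphore` mentioned above; the `ConcurrencyLimiter` class and its `try_run` method are hypothetical names for this example.

```python
import threading


class ConcurrencyLimiter:
    """Hypothetical limiter that caps the number of tasks running at once."""

    def __init__(self, max_concurrent: int):
        self._sem = threading.BoundedSemaphore(max_concurrent)

    def try_run(self, task):
        # Non-blocking acquire: reject immediately instead of queuing the caller.
        if not self._sem.acquire(blocking=False):
            return False, None
        try:
            return True, task()
        finally:
            self._sem.release()     # always return the permit
```

Rejecting instead of blocking is a design choice for the sketch: a caller that cannot get a permit learns about it right away rather than piling up behind slow tasks.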

Asynchronous decoupling, peak shaving, and load shifting are basic functions of messaging engines. These functions already address traffic rate, but some traffic control is still necessary. Unlike the approaches mentioned earlier, RocketMQ does not use out-of-the-box rate and traffic control components such as those provided by Guava and Netty. Instead, it draws on queuing theory and applies fault-tolerant processing to slow requests. Slow requests here refer to requests whose wait time in the queue and service time exceed a specified threshold.
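One way to picture fault-tolerant handling of slow requests is a queue that fails requests fast once they have waited too long. This is a simplified sketch and not RocketMQ's implementation: `FailFastQueue` and `max_wait` are hypothetical, and only queue wait time is checked, not service time.

```python
from collections import deque


class FailFastQueue:
    """Hypothetical request queue that rejects requests that waited too long."""

    def __init__(self, max_wait: float):
        self.max_wait = max_wait    # threshold on queue wait time, in seconds
        self._queue = deque()       # entries are (enqueue_time, request)

    def put(self, request, now: float) -> None:
        self._queue.append((now, request))

    def take(self, now: float):
        """Return (next fresh request or None, list of expired requests)."""
        expired = []
        while self._queue:
            enqueued_at, request = self._queue.popleft()
            if now - enqueued_at > self.max_wait:
                expired.append(request)   # slow request: fail it fast
                continue
            return request, expired
        return None, expired
```

The worker spends its time on requests that can still be answered in time, while expired requests are returned so the caller can reply with an error immediately.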

For offline application scenarios, fault-tolerant processing uses a sliding window mechanism: by slowly shrinking the window, it lowers the frequency of pulls from the server and the size of the messages pulled, minimizing the impact on the server. For scenarios such as high-frequency trading and data replication, the system applies fail-fast strategies. These prevent application avalanches caused by call chains exhausting resources and reduce the pressure on the server, which helps guarantee low end-to-end latency.

Service degradation is a typical example of sacrificing something minor for the greater good, and in practice it follows the 80/20 principle. The means of degradation are nothing more than simple and crude operations such as shutting a server down and taking it offline. The selection of degradation targets depends largely on the definition of QoS. Degradation in a messaging engine initially relies on two inputs: collected user data and the QoS settings of engine components. For the former, application QoS data is pushed through the operations & maintenance and management & control systems, which generally produces a table of QoS levels. For the latter, engine components that are not core functions, such as the chain-tracking module used to trace message issues, have a low QoS grade and can be shut down before the peak arrives.

We cannot talk about fusing without bringing up the fuses in a classic power system. In the case of excessive load, circuit faults, or exceptions, the current continues to rise. To prevent the rising current from damaging crucial or expensive components, burning out the circuit, or even causing a fire, the fuse melts and breaks the circuit when the current rises to a certain level and heats it up, protecting the safe operation of the circuit.

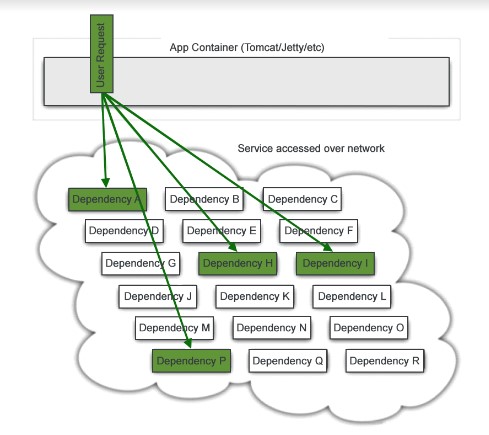

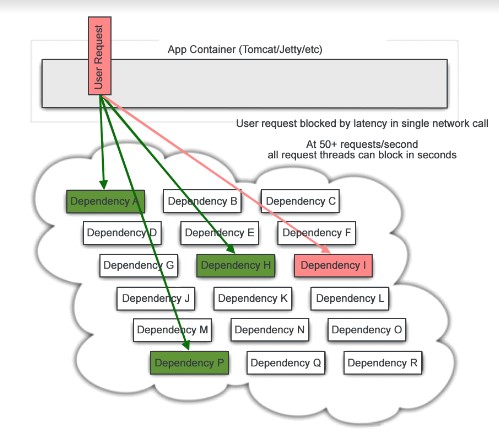

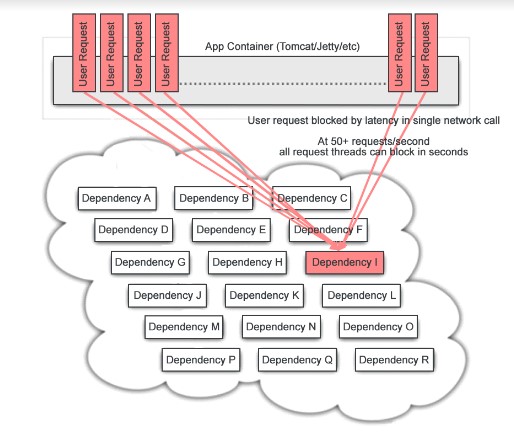

Similarly, in a distributed system, if remote services or resources become unavailable for some reason and there is no overload protection, requests block in the server and are forced to wait, using up system or server resources. In many cases, faults are initially local or small in scale. However, for various reasons, their effects spread and eventually lead to global consequences. The overload protection that prevents this is commonly known as a circuit breaker. To solve this problem, Netflix open-sourced its fusing solution, Hystrix.

Figure 3.

Figure 4.

Figure 5.

The three images above describe how, in a high-concurrency scenario, a system goes from an initially healthy state to being blocked on a key downstream dependency. In practice, this situation can easily induce an avalanche. Introducing the fusing mechanism of Hystrix allows applications to fail fast and avoids the worst-case scenario.
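The fail-fast behavior of a circuit breaker can be sketched generically. The code below is neither the Hystrix API nor Alibaba Cloud's mechanism; `CircuitBreaker`, `CircuitOpenError`, `failure_threshold`, and `reset_timeout` are hypothetical names, and the three-state design (closed, open, half-open) is the commonly described pattern.

```python
class CircuitOpenError(Exception):
    """Raised when the breaker is open and the call is rejected immediately."""


class CircuitBreaker:
    CLOSED, OPEN, HALF_OPEN = "closed", "open", "half_open"

    def __init__(self, failure_threshold: int, reset_timeout: float):
        self.failure_threshold = failure_threshold  # consecutive failures before opening
        self.reset_timeout = reset_timeout          # seconds to wait before a trial call
        self.state = self.CLOSED
        self.failures = 0
        self.opened_at = 0.0

    def call(self, func, now: float):
        if self.state == self.OPEN:
            if now - self.opened_at < self.reset_timeout:
                raise CircuitOpenError("dependency unavailable, failing fast")
            self.state = self.HALF_OPEN             # allow a single trial call
        try:
            result = func()
        except Exception:
            self.failures += 1
            if self.state == self.HALF_OPEN or self.failures >= self.failure_threshold:
                self.state = self.OPEN              # trip the breaker
                self.opened_at = now
            raise
        self.failures = 0                           # success closes the breaker
        self.state = self.CLOSED
        return result
```

While the breaker is open, callers get an error immediately instead of blocking on the failing dependency, which keeps threads and connections free for healthy paths.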

Figure 6.

Drawing on the ideas of Hystrix, the team at Alibaba Cloud developed a set of fusing mechanisms for the messaging engine. During load testing, there were cases where services became unavailable because of hardware failure. With conventional fault-tolerance means, it takes 30 seconds before the unavailable machine is removed from the list. With this set of fusing mechanisms, however, affected services can be identified and isolated within milliseconds, which further improves the availability of the engine.

Although we recommend relying on the three keys to guaranteeing capacity, as messaging engine clusters grow to a certain scale, machine faults in the cluster become more likely. This significantly reduces message reliability and system availability. At the same time, cluster modes deployed across multiple server rooms are exposed to broken networks between server rooms, further compromising the availability of the messaging system.

To tackle this problem, the team at Alibaba Cloud introduced a multi-replica high-availability solution that dynamically identifies disaster scenarios such as machine faults and broken networks in server rooms, and recovers from them automatically. The whole recovery process is transparent to users and requires no intervention from operations & maintenance personnel, which significantly improves the reliability of message storage and the availability of the entire cluster.

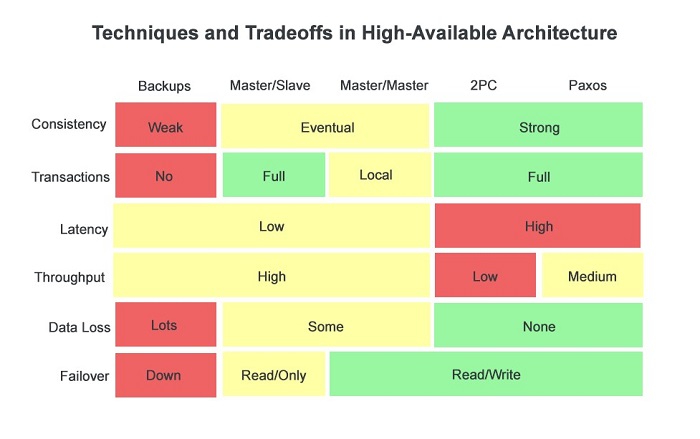

High availability is an important feature that almost every distributed system must consider. According to the CAP principle (Consistency, Availability, and Partition tolerance), a distributed system cannot satisfy all three at the same time. Below are some general high-availability solutions proposed for distributed systems in the industry:

Figure 7.

The rows represent the highly available solutions in distributed systems, including cold backups, master/slave, master/master, 2PC, and Paxos-based solutions. The columns represent the indicators that form the core focus of distributed systems, including data consistency, transaction support level, data latency, system throughput, the possibility of data loss, and the mode of recovery from failover.

As seen from the picture, the degree of support for each indicator varies by solution. Given the CAP principle, it is difficult to design a highly available solution that achieves the optimal value for every indicator. A master/slave system, for example, generally has the following characteristics:

• The number of slaves is set based on the importance of the data.

• Write requests hit the master; read requests hit either the master or the slave.

• After a write request hits the master, data is replicated from the master to the slave synchronously or asynchronously. In synchronous replication mode, both the master and the slave must write successfully before success is returned to the client. In asynchronous replication mode, only the master must write successfully before success is returned to the client.

Because data can be copied from the master to the slave synchronously or asynchronously, the master/slave structure can at least ensure eventual consistency of data. In asynchronous replication mode, success can be returned to the client as soon as the data is written to the master, so the system has lower latency and higher throughput, but data loss is still possible if the master fails.

If data loss from a master fault is not acceptable in asynchronous replication mode, the slave can only serve in read-only mode while waiting for the master to recover, which extends the time of recovery from system faults. Synchronous replication mode, on the other hand, ensures no data loss when a machine breaks down and reduces the recovery time, at the cost of higher write latency and lower system throughput.
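The trade-off between the two replication modes can be made concrete with a toy in-memory model. This is a sketch for illustration only: `Master`, `Slave`, `flush`, and the `sync` flag are hypothetical names, and network and disk I/O are replaced by list operations.

```python
class Slave:
    def __init__(self):
        self.log = []                   # messages persisted on the slave


class Master:
    """Hypothetical master that replicates to one slave in sync or async mode."""

    def __init__(self, slave: Slave, sync: bool):
        self.slave = slave
        self.sync = sync
        self.log = []                   # messages persisted on the master
        self.pending = []               # written on the master, not yet on the slave

    def write(self, msg: str) -> str:
        self.log.append(msg)
        if self.sync:
            self.slave.log.append(msg)  # acknowledge only after the slave has it
        else:
            self.pending.append(msg)    # acknowledge now, replicate later
        return "ack"

    def flush(self) -> None:
        # Background replication step used in async mode.
        self.slave.log.extend(self.pending)
        self.pending.clear()
```

In asynchronous mode, whatever sits in `pending` at the moment the master fails has been acknowledged to the client but never reached the slave, which is exactly the data-loss window described above. In synchronous mode the window is empty, but every write waits for the slave.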

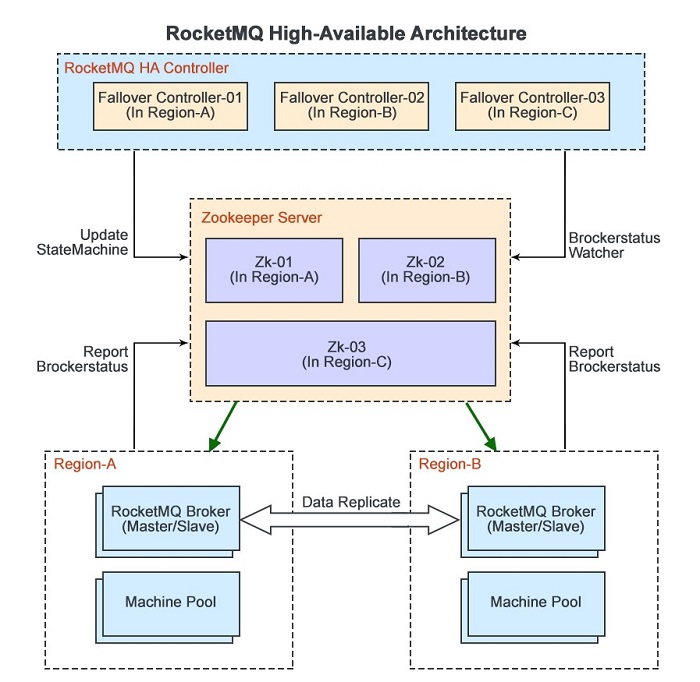

Building on the original deployment across multiple server rooms, the RocketMQ cluster mode uses distributed locks and notification mechanisms, together with a Controller component, to implement a highly available master/slave architecture, as shown below:

Figure 8.

As a distributed coordination framework, Zookeeper must be deployed across at least three server rooms, A, B, and C, to ensure its own availability. It provides the following features for the highly available architecture of RocketMQ:

• Maintain PERSISTENT nodes that save the machine state of the master/slave;

• Maintain EPHEMERAL nodes that save the current state of RocketMQ;

• Notify relevant observers of any change to the current state or to the machine state of the master/slave servers.

RocketMQ achieves peer-based deployment across multiple server rooms with the master/slave structure. Message write requests hit the master and are replicated to the slave for persistent storage in synchronous or asynchronous mode. Message read requests hit the master by priority, and move to the slave when messages build up and put greater pressure on the disk. RocketMQ interacts directly with Zookeeper by:

• Reporting the current state to Zookeeper in the form of EPHEMERAL nodes;

• Monitoring changes in the machine state of the master/slave on Zookeeper as an observer. When the machine state of the master/slave changes, RocketMQ changes its current state according to the new machine state.

The RocketMQ HA Controller is a stateless component of the highly available architecture, deployed separately in server rooms A, B, and C, that reduces the time of recovery from system faults. It mainly serves to:

• Monitor the change in the current state of RocketMQ on Zookeeper as an observer

• Control the switching of the machine state of the master/slave based on the current state of the cluster and report to Zookeeper the new machine state of the master/slave

Considering system complexity and how a messaging engine itself fits the CAP principle, the design of the RocketMQ highly available architecture uses the master/slave structure. While providing messaging services with low latency and high throughput, the system adopts master/slave synchronous replication to avoid message loss due to faults.

During data synchronization, the system maintains an incremental and globally unique SequenceID to ensure strong data consistency. It also introduces a mechanism for automatic recovery from faults to shorten recovery time and improve system availability.

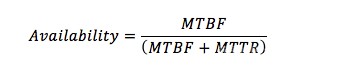

System availability is a measure, used within the information industry, of an information system's ability to provide continuous service. It indicates the probability that the system or a system capability works normally in a specific environment within a given time interval. In short, availability is Mean Time Between Failures (MTBF) divided by the sum of MTBF and Mean Time To Repair (MTTR), namely:

Availability = MTBF / (MTBF + MTTR)

The general practice in the industry is to represent system availability with the number of nines. For example, three nines means the availability is at least 99.9%, indicating that the duration of unavailability throughout the year is within 8.76 hours. Availability of 99.999%, or five nines, indicates that the duration of unavailability throughout the year must be within 5.26 minutes. A system without automatic recovery from faults cannot achieve five nines availability.
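The availability ratio, MTBF divided by the sum of MTBF and MTTR, and the yearly downtime figures quoted above can be checked with a short calculation. The function names are illustrative, and a year is taken as 365 days.

```python
HOURS_PER_YEAR = 365 * 24  # 8760 hours, assuming a 365-day year


def availability(mtbf_hours: float, mttr_hours: float) -> float:
    """Availability = MTBF / (MTBF + MTTR)."""
    return mtbf_hours / (mtbf_hours + mttr_hours)


def yearly_downtime_hours(avail: float) -> float:
    """Maximum unavailable time per year for a given availability."""
    return (1.0 - avail) * HOURS_PER_YEAR


three_nines = yearly_downtime_hours(0.999)          # about 8.76 hours
five_nines = yearly_downtime_hours(0.99999) * 60    # about 5.26 minutes
```

A system that fails on average every 999 hours and takes 1 hour to repair has an availability of 0.999. Keeping the same MTBF, reaching five nines would require an MTTR of roughly 36 seconds, which is why automatic recovery matters.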

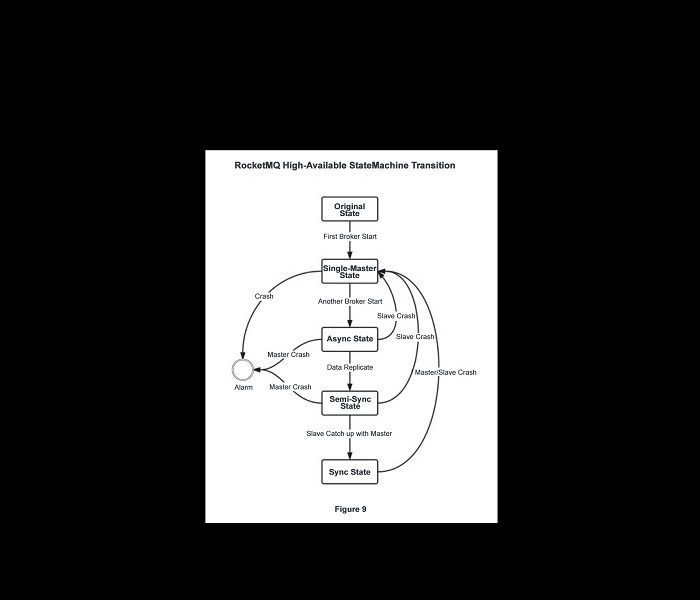

As seen from the availability formula, improving system availability requires not only securing system robustness to extend MTBF, but also enhancing the system's ability to recover automatically from faults to shorten MTTR. RocketMQ's highly available architecture includes a Controller component that drives a finite state machine through the single-master state, the asynchronous replication state, the semi-sync state, and finally the synchronous replication state. In the synchronous replication state, if a fault occurs on either the master or the slave node, the other node can switch to the single-master state within seconds and continue to provide service. Compared with the previous approach of recovering service through manual intervention, RocketMQ's highly available architecture recovers from system faults automatically, substantially shortening MTTR and improving system availability.

The picture below describes the state transitions of the finite state machine in RocketMQ's highly available architecture:

Figure 9

• After the first node starts, the Controller switches the state machine to the single-master state and notifies the started node to provide services as the master.

• When the second node starts, the Controller switches the state machine to the asynchronous replication state. The master replicates data to the slave in asynchronous mode.

• When the data of the slave is about to catch up with that of the master, the Controller switches the state machine to the semi-sync state. In this state, write requests hitting the master are held until the master has replicated all remaining differences to the slave in asynchronous mode.

• In the semi-sync state, when the data of the slave has fully caught up with that of the master, the Controller switches the state machine to the synchronous replication state, and the master starts to replicate data to the slave in synchronous mode. If a fault occurs on any node in this state, the other node can switch to the single-master state within seconds and continue providing service.

The Controller component drives RocketMQ's state machine through the single-master state, the asynchronous replication state, the semi-sync state, and finally the synchronous replication state. The duration of the intermediate states depends on the master/slave data discrepancy and the network bandwidth, but the cluster eventually settles in the synchronous replication state.
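The transitions described above can be sketched as a small finite state machine. This is not the RocketMQ Controller's code: the class, the event methods, and the `catch_up_threshold` parameter (a stand-in for "about to catch up") are hypothetical, and the model covers one master and one slave only.

```python
from enum import Enum


class ReplicationState(Enum):
    SINGLE_MASTER = "single-master"
    ASYNC = "async replication"
    SEMI_SYNC = "semi-sync"
    SYNC = "sync replication"


class Controller:
    """Hypothetical controller for a two-node master/slave pair."""

    def __init__(self, catch_up_threshold: int):
        self.catch_up_threshold = catch_up_threshold  # lag considered "almost caught up"
        self.state = None                             # no node has started yet
        self.nodes = 0

    def on_node_started(self) -> None:
        self.nodes += 1
        if self.nodes == 1:
            self.state = ReplicationState.SINGLE_MASTER
        elif self.nodes == 2 and self.state is ReplicationState.SINGLE_MASTER:
            self.state = ReplicationState.ASYNC

    def on_lag_report(self, lag: int) -> None:
        # lag: amount of data the slave still has to copy from the master
        if self.state is ReplicationState.ASYNC and lag <= self.catch_up_threshold:
            self.state = ReplicationState.SEMI_SYNC   # hold writes, drain the rest
        elif self.state is ReplicationState.SEMI_SYNC and lag == 0:
            self.state = ReplicationState.SYNC

    def on_node_failed(self) -> None:
        self.nodes -= 1
        # The surviving node keeps serving alone; with no nodes there is no state.
        self.state = ReplicationState.SINGLE_MASTER if self.nodes == 1 else None
```

Each transition is triggered by an observed event rather than a timer, which mirrors the description above: how long the intermediate states last depends on how quickly the reported lag shrinks.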

After several years of testing under online working conditions, there is still room to optimize the Alibaba Cloud messaging engine. The team is trying to further reduce the latency of message storage through storage optimization algorithms and cross-language calls. Facing emerging scenarios such as the Internet of Things, big data, and VR, the team has started to build the fourth generation of the messaging engine, featuring multi-level protocol QoS; cross-network, cross-terminal, and cross-language support; lower response time for online applications; and higher throughput for offline applications. In the spirit of taking from open source and giving back to open source, we believe that RocketMQ will develop towards a healthier ecosystem.

