
Today, I would like to share the concepts and implementation of Alibaba's colocation technology with you, covering four aspects.

Colocation technology originated from the need to balance growing business against ever-increasing resource costs. We hope to support more business requirements at the minimum resource cost. Colocation is intended to reuse existing resources to meet new business requirements.

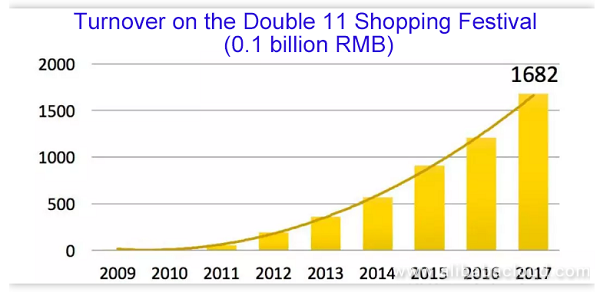

The preceding figure shows the turnover of Alibaba Group over the years since the first Double 11 Shopping Festival in 2009. This curve looks remarkable to business staff, but it means great challenges and huge resource pressure for technical and O&M staff.

As industry peers engaged in e-commerce platform services know, the technical pressure often arrives in the first second after a major promotion activity starts, in the form of a pulse peak.

The peak traffic (expressed in transactions per second) of Alibaba at 00:00 of the Double 11 Shopping Festival is basically consistent with the curve shown in this figure. Since 2012, the peak pressure at 00:00 has basically doubled year over year. This indicates that the rapid growth of online services is mainly driven by promotion activities.

In addition to online services, Alibaba also provides large-scale offline computing services. With the emergence of new technologies such as AI, computing services are also on the rise. By now, Alibaba holds big data at exabyte scale and runs millions of tasks every day.

Services are continuously increasing, and a lot of resources are reserved at the infrastructure layer to meet the online and offline service requirements. Because online services use resources quite differently from offline services, two independent data centers (DCs) were originally designed to provide resources for the online and offline services, respectively. Currently, each of the two DCs has over 10,000 servers.

However, we found that despite the huge amount of resources in the DCs, the usage of some resources is unsatisfactory. In particular, the daily resource usage in the online service DC is only about 10%.

Different services may use resources in different ways and require different resources. On the one hand, the traffic of different services may peak at different times, so resources can be reused over time.

On the other hand, different services have different requirements for response time, so resources can be competed for and preempted by priority. Based on these two aspects, we explore the service colocation technology in different directions.

Colocation means deploying two different types of services on the same server. By sharing the resources of one server, this technology provides two services with resources equivalent to those of two servers.

First, resources are consolidated, that is, services that were physically separated before are now deployed on the same physical server.

Secondly, resources are shared. The resources support both services. From the perspective of either service, only the resources that the service requires are presented.

Finally, services compete for resources in a rational manner. As the resources are shared by two services, competition for resources inevitably exists. Therefore, proper competition measures are necessary for the services to obtain the resources that meet their requirements.

The greatest value of colocation is that resources can be fully utilized by means of sharing, realizing "acquisition of resources out of nothing". Colocation has been designed to ensure the resources for the service with higher priority when resource competition occurs between two services. Therefore, we hope to use the scheduling management and kernel isolation measures to fully share resources and isolate competition.

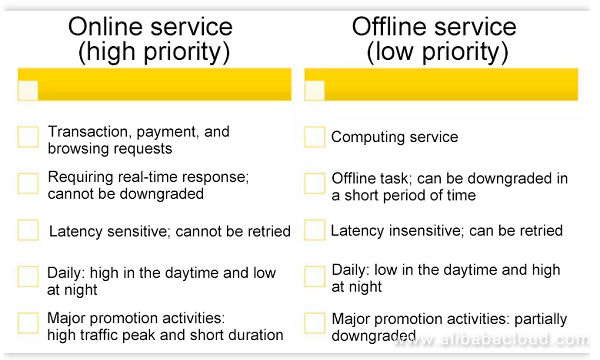

In the colocation technology, online services refer to transactions, payment services, and browsing requests.

Online services are characterized by a rigid requirement for real-time performance, which cannot be downgraded. If a user has to wait for a long time (for example, several seconds) while shopping, the user will probably give up buying the items. If the user is asked to try again later, the user is unlikely to stay on that page in most cases.

The traffic trend is obvious for online services, especially e-commerce. The traffic follows users' daily schedules, high in the daytime and low at night, because most users shop online in the daytime.

Another significant characteristic of the e-commerce platform is that the daily traffic is much lower than the traffic during a major promotion activity. On the very day of a major promotion activity, the number of transactions created in several seconds may be ten times or even hundreds of times the peak trading volume on an ordinary day. This indicates that the traffic of an e-commerce platform is heavily time dependent.

Offline services, such as computing services, algorithm services, statistical reports, and data processing, are latency insensitive. Tasks and jobs submitted by users often take seconds or minutes (or even hours or days) to complete, so a delay of some time before completion is acceptable. In addition, they can be retried. Technically, we are more concerned with who is responsible for retrying these tasks or jobs. Retries by users are unacceptable. The system is expected to perform the retries so that users do not perceive them.
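The idea of system-side retries can be sketched in a few lines. This is only an illustration under assumptions, not the actual mechanism of any Alibaba scheduler: `run_with_retries`, `max_attempts`, and `make_flaky_task` are hypothetical names introduced for the example.

```python
def run_with_retries(task, max_attempts=3):
    """Run an offline task and retry it inside the system.

    `task` is any zero-argument callable. `max_attempts` is an assumed
    policy knob. The caller only ever sees the final result (or one final
    failure), never the intermediate retries.
    """
    last_error = None
    for attempt in range(1, max_attempts + 1):
        try:
            return task(), attempt
        except Exception as err:  # a real system would retry only transient failures
            last_error = err
    raise RuntimeError(f"task failed after {max_attempts} attempts") from last_error


def make_flaky_task(failures_before_success):
    """Build a task that fails a fixed number of times, then succeeds."""
    state = {"calls": 0}

    def task():
        state["calls"] += 1
        if state["calls"] <= failures_before_success:
            raise OSError("interrupted, for example suspended by an online service")
        return "report ready"

    return task


result, attempts = run_with_retries(make_flaky_task(2))
print(result, attempts)
```

The user who submitted the statistical task receives "report ready" once; the two failed attempts stay inside the system.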

Compared to online services, offline services are time independent and can run at any time. Sometimes, offline services show a time characteristic opposite to that of online services: they tend to have low traffic in the daytime and high traffic in the early morning.

This characteristic is associated with user behaviors. For example, a user submits a statistical task, which will be executed after 00:00. The user receives the report when going to work the next morning.

Based on the preceding analysis of service runtime characteristics, we can see that online and offline services can reach their traffic peaks and resource usage peaks alternately.

Online services have higher priority and better resource preemption capability, whereas offline services are more tolerant when resources are insufficient. All these factors contribute to the feasibility of co-locating the online and offline services.

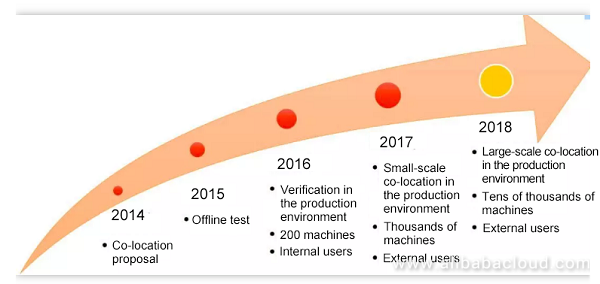

Before elaborating on the colocation technology, we would like to briefly review the course of Alibaba's exploration of colocation.

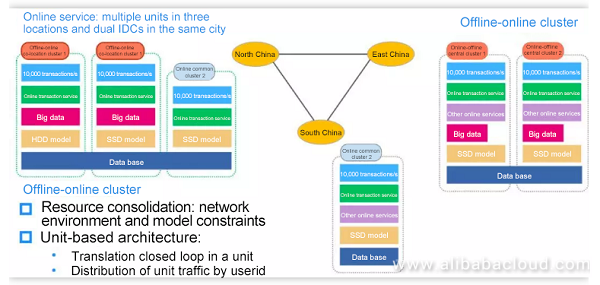

In the initial deployment of colocation, two scenarios exist. In the first scenario, the online cluster provides resources for colocation, and online resources provide extra computing capability for offline services. In the second scenario, the offline cluster provides resources for colocation, and offline resources are used to create online service transactions (mainly to cope with the traffic peaks of online services during major promotion activities).

As a simple convention in Alibaba, we put the services (online or offline) that provide the machines first when naming a colocation scenario. Therefore, we have online-offline colocation, as well as offline-online colocation.

On the Double 11 Shopping Festival in 2017, 375,000 transactions were created per second according to the official announcement. The offline-online colocation cluster created 10,000 transactions per second, and offline resources were used to support the traffic peaks of online services, which reduced the resource overhead for the major promotion activity.

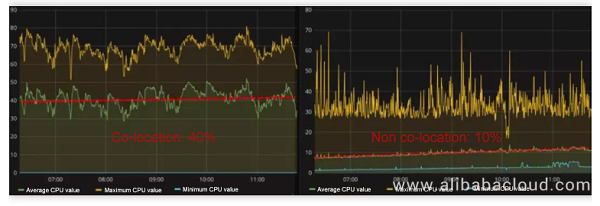

Meanwhile, after the online-offline colocation cluster is deployed, the daily mean resource usage of the native cluster for online services increases from 10% to 40%, and the online services provide the extra daily computing capability for offline services, as shown in the following figure.

The preceding figures show real data from the monitoring system. The right figure shows the non-colocation scenario, covering the period from 07:00 to about 11:00, in which the CPU usage of the online service DC is 10%. The left figure shows the colocation scenario, in which the mean usage is about 40% and the jitter is large because the offline services themselves fluctuate considerably.

With so many resources saved, is the QoS (especially quality of online services) downgraded?

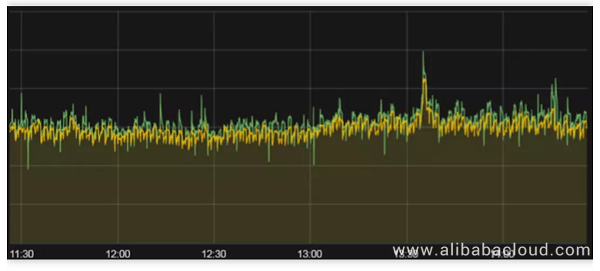

The following figure shows the real-time curves of the online core service that processes transactions. The green curve indicates the real-time performance of the colocation cluster, and the yellow one indicates the real-time performance of the non-colocation cluster. The two curves basically coincide with each other, with a difference of less than 5%. The QoS meets applicable requirements.

The colocation technology is associated with the business system and O&M system of a company. Therefore, this article may briefly mention different technical backgrounds.

This chapter describes the colocation solution, including the overall architecture, service deployment policies, management and distribution of colocation clusters, and the service operation policies.

Colocation is implemented by taking the following measures:

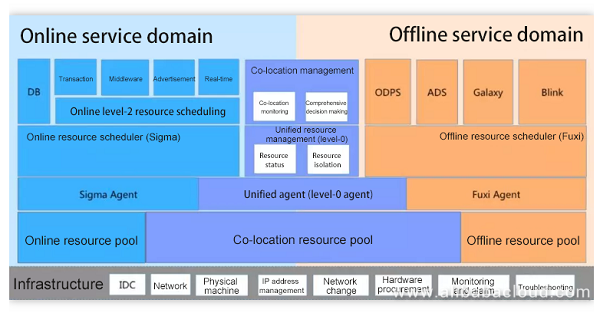

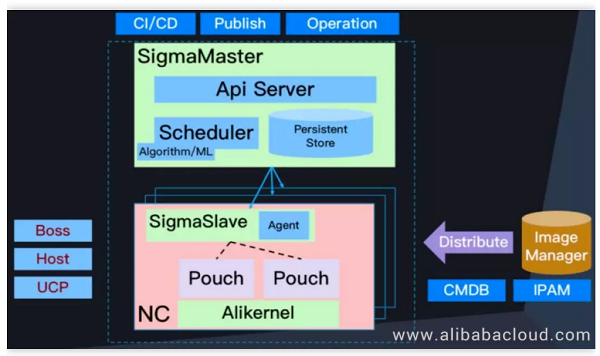

Before colocation was deployed, Alibaba Group already had several resource scheduling platforms, such as Sigma for online services and Fuxi for offline services.

The challenge of colocation is that resources must be allocated properly to different services, and multiple resource scheduling systems must be unified for decision making and arbitration.

The underlying layer is the infrastructure layer. The data centers of Alibaba Group share the same hardware facilities and supporting devices, such as servers and networks, regardless of the ways that these resources are used for the upper layers.

Above the underlying layer is the resource layer. In this layer, resource pools are integrated for centralized management.

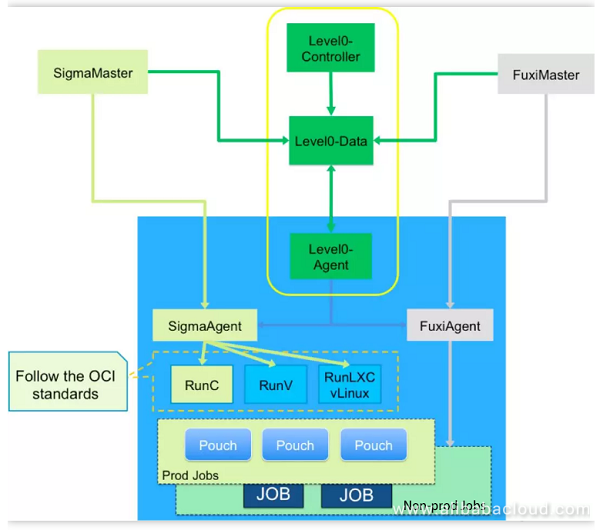

Above the resource layer is the scheduling layer, which is divided into the server end and client end. Sigma is the resource scheduling system for online services, whereas Fuxi is for offline services. Both Sigma and Fuxi are level-1 schedulers serving their own services. In the colocation architecture, a level-0 scheduler is introduced for management and allocation of resources in the two level-1 schedulers. The level-0 scheduler has a dedicated agent.

The uppermost layer is the service-oriented resource scheduling and management layer. Some resources are directly allocated to services by the level-1 schedulers, and some are allocated by level-2 schedulers, for example, Hippo Manager.

The colocation architecture also has a special colocation management layer, which orchestrates and implements service operation, manages configuration of physical resources, monitors services, and makes decisions.

The preceding resource allocation architecture allocates servers and resources to different services. After allocation is completed, how can the service priority and SLA be ensured in the operation process? If online and offline services run on the same physical server, what should the system do if resource competition occurs between services? To solve these problems, the kernel isolation technology is used to ensure resources in the operation process. A lot of kernel features are developed to support isolation, switchover, and downgrading of different types of resources. The kernel-related mechanisms will be introduced later in Chapter 3.

This section describes how to apply the colocation technology to the online services to provide the transaction creation capability for the e-commerce platform.

The colocation technology is new and involves many technical transformations. To avoid risks, it should first be tested at a small scale within a limited and controllable range. Therefore, a service deployment policy was developed based on the unit-based deployment architecture of the Alibaba e-commerce platform (online services) to build an independent transaction unit in the colocation cluster. This not only confines the colocation technology to a local scope so that it does not affect the global system, but also implements closed-loop and independent resource scheduling and management for services within the unit.

In the e-commerce online system, the end-to-end services related to buyers' purchase behaviors are put in a closed-loop service set, which is called a transaction unit. All requests and instructions related to buyers' transaction behaviors are completed in this transaction unit in a closed-loop manner. This is a unit-based deployment architecture with multiple remote backups.

In the implementation of the colocation technology, another constraint comes from hardware resources. Offline and online services have different requirements for hardware resources, and the existing resources of one service may not be suitable for the other. In the colocation implementation process, this adaptation problem is most significant for disks.

Among the native resources of offline services, a lot of low-cost HDDs are available, and offline services use almost all HDDs when running. Therefore, HDDs are unavailable to online services.

To solve the IOPS performance problem of disks, the computing and storage separation technology is introduced, which is another technology that is continuously evolving in Alibaba Group. This technology provides centralized computing and storage services. The compute node is connected to the storage center over a network, which removes the compute node's dependency on local disks.

The storage cluster can provide different storage capabilities. Online services are demanding in terms of storage performance, but their throughput is low. Therefore, the computing and storage separation technology is used to provide them with a remote storage service with IOPS assurance.

Having covered the overall architecture, let's look at resource allocation in the colocation cluster from the resources' point of view, and see how to "create resources out of nothing".

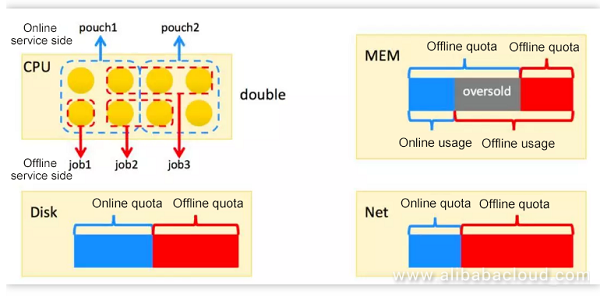

Resources of a single server include the CPU, memory (MEM), disk, and network (Net). The following describes how to obtain extra resources.

In terms of the CPU, the daily CPU usage of a pure online service cluster is about 10%. That is, online services cannot fully utilize the CPU during the daily operations. However, a peak CPU usage will be reached instantly during a major promotion activity.

Offline services are like a sponge that absorbs water. They have a huge business volume and consume whatever CPU computing capability is available. Based on these resource usage characteristics, the colocation technology is developed to split one CPU into two.

CPU cores are allocated to different processes in time slices in a round-robin manner. One CPU core is allocated to both an online service and an offline service, where the online service takes precedence over the offline service. When the online service is idle, the offline service can use the CPU core. When the online service needs to use the CPU core, it preempts the CPU core from the offline service and the offline service is suspended.
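The precedence rule on a shared core can be illustrated with a tiny simulation. This is a user-space sketch under assumptions, not the kernel scheduler itself: `Tick` and `run_core` are hypothetical names, and each list element stands for one time slice.

```python
from dataclasses import dataclass


@dataclass
class Tick:
    online_wants_cpu: bool   # online service has runnable work in this time slice
    offline_wants_cpu: bool  # offline service has runnable work in this time slice


def run_core(ticks):
    """Return who runs on one shared core in each time slice."""
    timeline = []
    for t in ticks:
        if t.online_wants_cpu:
            timeline.append("online")   # online always wins; offline is suspended
        elif t.offline_wants_cpu:
            timeline.append("offline")  # offline soaks up the otherwise idle slice
        else:
            timeline.append("idle")
    return timeline


slices = [Tick(False, True), Tick(True, True), Tick(False, True), Tick(False, False)]
print(run_core(slices))
```

In the second slice both services want the core, and the offline service loses it for exactly that slice.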

As described earlier, two resource schedulers are available, including Sigma for online services and Fuxi for offline services. Online services use the pouch containers as their resource units. A pouch container is bound to a certain number of CPU cores, which are used by a certain online service. Sigma assumes that the entire physical server belongs to online services.

Meanwhile, Fuxi assumes that this physical server belongs to offline services, and therefore allocates the CPU resource of the entire server to offline services. In this way, the CPU resource is doubled.

When the same CPU resource is allocated to two services, resource competition arises and the kernel technologies must be used for CPU isolation and scheduling. These technologies will be mentioned later in this article.

The CPU can be shared by multiple processes based on the time slice. However, the memory and disks are consumable resources. Once allocated to one process, these resources cannot be used by another process. Otherwise, the resource will be preempted by the new process. Therefore, how to reuse the memory resource becomes another priority for research.

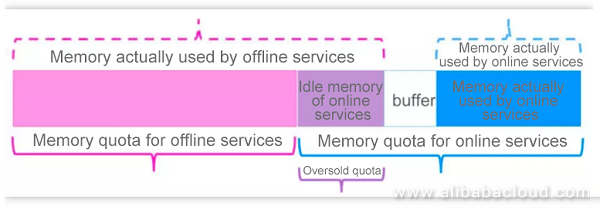

The MEM chart shows the memory overselling in a colocation environment. Above the blocks that indicate the memory sizes, the blue bracket indicates the memory quota that has been allocated to the online service, while the red one indicates that allocated to the offline service. Below the blocks, the blue bracket indicates the memory used by the online service, while the red one indicates the memory used by the offline service.

The chart reveals that the offline service uses the memory quota that has been allocated to the online service. This is known as memory overselling.

Why can the online memory be oversold? This is because the Alibaba online services are mainly developed in Java. One part of the memory allocated to containers is used as the overhead for the Java stack, and the remaining part of the memory is used as the cache.

As a result, a certain amount of idle memory exists in the online container. With the monitoring of the memory usage and protection technologies, the idle memory in the online container can be allocated to offline services. This part of memory belongs to online services, and cannot always be allocated to offline services. Therefore, these resources are allocated only to low-priority offline services that can be downgraded.

In terms of disks, because the disk capacity is sufficient for both online and offline services, few restrictions are imposed. A series of bandwidth limitation measures are set forth to ensure that the maximum IOPS of an offline service is smaller than a certain threshold, preventing offline services from reducing the online service IOPS and system IOPS.
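One common way to cap a request rate is a token bucket, shown below as an illustration of keeping offline IOPS under a threshold. The source does not say which mechanism is used, so the token bucket is a swapped-in technique, and `IopsLimiter`, `max_iops`, and `burst` are hypothetical names. Time is passed in explicitly to keep the example deterministic.

```python
class IopsLimiter:
    """Token bucket: at most `max_iops` I/O operations per second on average."""

    def __init__(self, max_iops, burst):
        self.rate = float(max_iops)   # tokens added per second
        self.capacity = float(burst)  # maximum tokens that can accumulate
        self.tokens = float(burst)
        self.last = 0.0               # timestamp of the previous call, in seconds

    def allow(self, now):
        """Return True if one offline I/O may be issued at time `now`."""
        elapsed = now - self.last
        self.last = now
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False  # over the threshold: the offline I/O must wait


limiter = IopsLimiter(max_iops=100, burst=2)
print([limiter.allow(0.0) for _ in range(3)])  # the third request exceeds the burst
print(limiter.allow(0.5))                      # tokens have refilled by now
```

Only offline I/O passes through the limiter in this sketch; online I/O is left unthrottled, which matches the goal of protecting online IOPS.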

In terms of the network resource of a single server, the capacity is currently sufficient and is not a bottleneck. Therefore, related descriptions are omitted here.

The previous sections describe how to achieve resource sharing and competition isolation on a single server. This section describes how to migrate resources and maximize the resource usage in the entire resource cluster through overall O&M management. Colocation aims to maximize the resource usage and eliminate waste of resources.

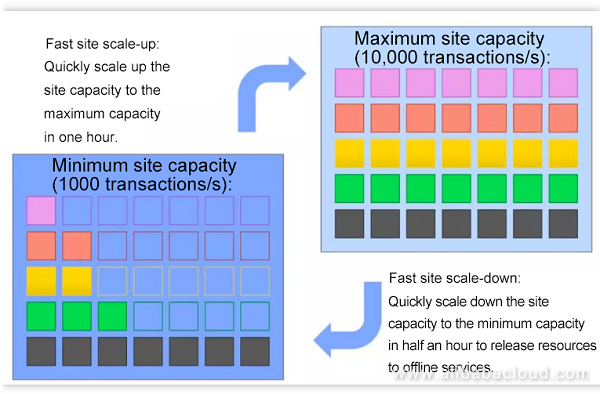

To achieve this objective, the concept of fast site scale-up and scale-down is proposed. This concept is applicable to online services. As described earlier, every colocation cluster is an online transaction unit, which independently supports the transaction behaviors of a small number of users. Therefore, the colocation cluster is treated as a site. The overall capacity of the site can be scaled up or down, which is called fast site scale-up and scale-down, as shown in the following figure.

Online services exhibit huge differences between the daily traffic and the traffic during major promotion activities. The traffic on the Double 11 Shopping Festival may be hundreds of times the daily traffic. This characteristic makes it feasible to implement the fast site scale-up and scale-down solution.

As shown in the preceding figure, each of the two big blocks represents the overall capacity of the online site, each small block represents a container of an online service, and each row represents the total number of containers reserved for an online service. The capacity of the entire site can be planned to switch between the capacity model for daily operations and the capacity model for a major promotion activity to better utilize resources.

In e-commerce, a business objective, such as the number of transactions created per second, is often used as the baseline for evaluating the site capacity. Generally speaking, it is sufficient to reserve a capacity of 1,000 transactions/s for a single site during daily operations. When a major promotion activity approaches, the capacity model of the site is switched to that for a major promotion activity, which is generally 10,000 transactions/s.

In this way, the unused online capacity of the site is scaled down to release resources so that offline services can obtain more physical resources.
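Switching between the two capacity models amounts to simple arithmetic per service. The sketch below assumes, as a simplification, that every service in the unit must sustain the full site target and that each container of a service handles a fixed throughput. The per-container figures and the names `containers_needed`, `plan_site`, and `PER_CONTAINER_TPS` are hypothetical.

```python
def containers_needed(target_tps, tps_per_container):
    """Ceiling division: containers required to sustain `target_tps`."""
    return -(-target_tps // tps_per_container)


def plan_site(target_tps, tps_per_container_by_service):
    """Number of containers to reserve for each online service in the site."""
    return {
        service: containers_needed(target_tps, tps)
        for service, tps in tps_per_container_by_service.items()
    }


# Hypothetical throughput of a single container of each service.
PER_CONTAINER_TPS = {"cart": 50, "order": 20}

daily = plan_site(1_000, PER_CONTAINER_TPS)       # daily capacity model
promotion = plan_site(10_000, PER_CONTAINER_TPS)  # major promotion capacity model
released = sum(promotion.values()) - sum(daily.values())

print(daily, promotion, released)
```

With these made-up numbers, scaling down from the promotion model to the daily model releases 630 of 700 containers for offline services.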

The site scale-up process (from a small capacity to a large capacity) is completed in one hour. The site scale-down process (from a large capacity to a small capacity) is completed in half an hour.

During daily operations, the colocation site supports the traffic of online services using the minimum capacity model. When a major promotion activity or an end-to-end stress test approaches, the capacity of the colocation site is quickly scaled up. After the site runs continuously for several hours, the capacity of the site is quickly scaled down.

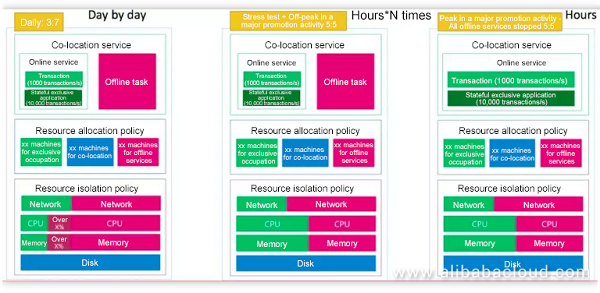

This scheme ensures that the online service occupies few resources most of the time, and more than 90% of resources are fully consumed by the offline service. The following figure shows the resource allocation details in each scale-up or scale-down phase.

In the preceding figure, three rectangle frames show how the resources in a colocation cluster are allocated during daily operations, during a stress test, and during a major promotion activity.

The red blocks indicate offline services, and the green blocks indicate online services. Each rectangle frame has the upper, middle, and lower layers. The upper layer indicates the service operation and weight. The middle layer indicates distribution of resources (host machines), where the blue blocks indicate the colocation resources. The lower layer indicates the resource allocation proportion and running mode at the cluster level.

During daily operations (left rectangle frame), offline services occupy most resources, some of which are obtained by means of allocation, and others are seized from online services (when the online services do not use these resources).

During a stress test (middle rectangle frame) or a major promotion activity (right rectangle frame), offline services give away resources until both the online and offline services occupy 50% of resources. When the traffic of online services is high, offline services do not compete for the oversold resources. In the preparation phase (that is, off-peak hours during a major promotion activity), offline services can still compete for idle resources of online services.
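The phase-dependent rules can be summarized as a small policy table. This is a sketch under assumptions: the 50% split comes from the text, while the 10%/90% daily split is only an illustrative stand-in for "offline services occupy most resources", and `Phase` and `cluster_policy` are hypothetical names.

```python
from enum import Enum


class Phase(Enum):
    DAILY = "daily"
    STRESS_TEST = "stress_test"
    PROMOTION = "promotion"


def cluster_policy(phase, online_traffic_high):
    """Return the allocated shares and whether offline may use idle online resources."""
    if phase is Phase.DAILY:
        online_share, offline_share = 0.1, 0.9  # illustrative values only
    else:
        online_share, offline_share = 0.5, 0.5  # offline gives resources back
    return {
        "online_share": online_share,
        "offline_share": offline_share,
        # Offline competes for oversold resources only while online traffic is low.
        "offline_may_use_idle_online": not online_traffic_high,
    }


print(cluster_policy(Phase.DAILY, online_traffic_high=False))
print(cluster_policy(Phase.PROMOTION, online_traffic_high=True))
```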

On the very day of the Double 11 Shopping Festival, offline services are downgraded to ensure stability of online services.

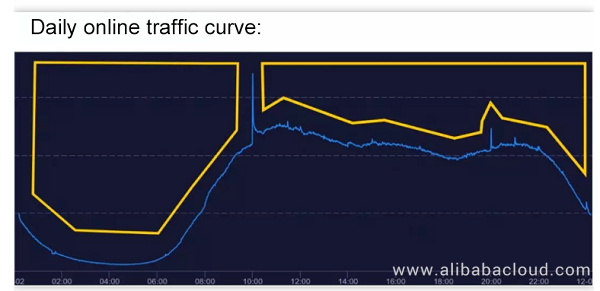

The preceding fast scale-up and scale-down scheme is a process of switching the online site capacity when a major promotion activity approaches. In addition to the significant traffic difference between daily operations and a major promotion activity, online services also regularly exhibit the following characteristic: a traffic peak in the daytime and a traffic valley in the early morning. To further improve the resource usage, a resource concession mechanism, time-based reuse, is proposed for daily operations.

The preceding figure shows the curve of online service traffic on a typical day. The traffic is low in the early morning and high in the daytime. The capacity of every online service is scaled up or down within the day to minimize the amount of resources used by online services and offer the saved resources to offline services.
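Time-based reuse can be sketched as an hour-by-hour plan for one online service. The forecast values, the per-container throughput, and the names `hourly_plan` and `min_online` are assumptions made for the illustration.

```python
def hourly_plan(total_containers, hourly_tps, tps_per_container, min_online=1):
    """For each hour, size the online service and lend the rest to offline services."""
    plan = []
    for hour, tps in enumerate(hourly_tps):
        needed = -(-tps // tps_per_container)  # ceiling division
        online = min(total_containers, max(min_online, needed))
        plan.append({"hour": hour, "online": online, "offline": total_containers - online})
    return plan


# Hypothetical forecast: early morning valley first, daytime peak last.
forecast = [100, 50, 400, 900]
for row in hourly_plan(total_containers=20, hourly_tps=forecast,
                       tps_per_container=50, min_online=2):
    print(row)
```

The `min_online` floor keeps a small online footprint even in the valley, so the service can absorb traffic while it scales back up.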

Key technologies of colocation are divided into the kernel isolation technology and resource scheduling technology. Due to the limited length of this article, the following only lists some technical points without elaborating on the technologies.

Enhanced isolation features have been developed in different dimensions of the kernel resources, including the CPU, I/O, memory, and network dimensions. The online and offline service groups are defined based on the CGroup to differentiate the kernel priorities of the two types of services.

In the CPU dimension, isolation features, such as the hyper-threading pair, scheduler, and level-3 cache, are implemented. In the memory dimension, memory bandwidth isolation and the OOM kill priority are implemented. In the disk dimension, the I/O bandwidth limitation is implemented. In the network dimension, traffic control is implemented on a single server, and end-to-end hierarchical QoS assurance is implemented on the network.
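To make the OOM kill priority concrete, the following user-space sketch picks a victim so that offline processes are always sacrificed before online ones. The real feature lives in the kernel and its exact rules are not described here, so the tie-breaking by memory size and the names `Proc`, `KILL_ORDER`, and `pick_oom_victim` are assumptions.

```python
from dataclasses import dataclass


@dataclass
class Proc:
    pid: int
    group: str    # "online" or "offline", mirroring the two CGroup service groups
    rss_mib: int  # resident memory of the process


# Lower value means killed earlier: offline goes first, online is protected.
KILL_ORDER = {"offline": 0, "online": 1}


def pick_oom_victim(procs):
    """Choose the lowest-priority process; within a group, the largest one."""
    return min(procs, key=lambda p: (KILL_ORDER[p.group], -p.rss_mib))


procs = [Proc(1, "online", 8000), Proc(2, "offline", 500), Proc(3, "offline", 2000)]
print(pick_oom_victim(procs).pid)
```

Even though the online process uses the most memory, the largest offline process is selected first.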

You can search for detailed descriptions about the kernel isolation technologies of colocation. The following only details memory overselling.

Dynamic memory overselling:

As shown in the preceding figure, the solid-line brackets in red and blue represent the memory quotas of the offline and online CGroups, respectively. The sum represents the memory that can be allocated by the entire server, excluding the memory used as the system overhead. The solid-line bracket in purple represents the oversold memory quota for offline services, whose size is determined by the detected size of the idle memory unused by online services in the operation process.

The upper dashed-line brackets represent the actual memory sizes used by offline and online services, respectively. Online services generally do not consume their entire memory quota, and the quota that they leave unused serves as the oversold quota for offline services. To guard against bursts in the memory demand of online services, a certain amount of memory is reserved as a buffer. In this way, offline services can use the oversold memory.
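The relationship between the quotas, the measured usage, and the buffer can be written as a short calculation. This is a sketch of the idea as described, with all sizes in GiB and all numbers invented; `oversold_quota` and `offline_limit` are hypothetical names.

```python
def oversold_quota(online_quota, online_used, reserve_buffer):
    """Idle online memory that may be lent to offline services, minus a safety buffer."""
    idle = online_quota - online_used
    return max(0, idle - reserve_buffer)


def offline_limit(offline_quota, online_quota, online_used, reserve_buffer):
    """Total memory offline services may use: own quota plus the oversold part."""
    return offline_quota + oversold_quota(online_quota, online_used, reserve_buffer)


# Quiet period: online uses 24 of its 64 GiB, and 8 GiB is kept as the buffer.
print(offline_limit(offline_quota=32, online_quota=64, online_used=24, reserve_buffer=8))
# Online burst: usage climbs to 60 GiB, so the oversold quota shrinks to zero.
print(offline_limit(offline_quota=32, online_quota=64, online_used=60, reserve_buffer=8))
```

Because the oversold part is recomputed from the detected idle memory, it is dynamic: offline services holding that memory must be ones that can be downgraded when it shrinks.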

As the second core technology of colocation, resource scheduling can be further divided into the native level-1 resource scheduling (online resource scheduler Sigma and offline resource scheduler Fuxi), and colocation level-0 scheduling.

Online resource scheduler – Sigma

The online resource scheduler schedules and allocates resources properly based on the resource portraits of applications. It involves a series of packing problems, affinity and mutual exclusion rules, and global optimal solutions. The online resource scheduler implements automatic scaling of application capacities and time-based reuse at the global dimension, and fast scale-up and scale-down upon special events.
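A toy version of the packing problem with a mutual exclusion rule is shown below. It uses a first-fit decreasing heuristic, which is a textbook technique chosen for illustration and not a description of Sigma's algorithm. The names `place` and `exclusive`, the application names, and the core counts are all hypothetical.

```python
def place(containers, host_cores, exclusive=frozenset()):
    """Place containers on hosts with first-fit decreasing.

    containers: list of (name, app, cores) tuples.
    exclusive:  apps whose containers must not share a host with each other.
    Returns {container_name: host_index}.
    """
    free = list(host_cores)
    apps_on_host = [set() for _ in host_cores]
    placement = {}
    # Largest containers first, which usually packs hosts more tightly.
    for name, app, cores in sorted(containers, key=lambda c: -c[2]):
        for h in range(len(free)):
            conflict = app in exclusive and app in apps_on_host[h]
            if free[h] >= cores and not conflict:
                free[h] -= cores
                apps_on_host[h].add(app)
                placement[name] = h
                break
        else:
            raise RuntimeError(f"no host can fit {name}")
    return placement


containers = [("trade-1", "trade", 4), ("trade-2", "trade", 4), ("cart-1", "cart", 2)]
print(place(containers, host_cores=[8, 8], exclusive={"trade"}))
```

The two "trade" containers would fit on one host by size alone, but the exclusion rule spreads them across hosts so a single host failure cannot take out both.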

The preceding figure shows the architecture of the online level-1 scheduler Sigma. Sigma is compatible with Kubernetes APIs, uses the Alibaba pouch container technology for scheduling, and has been proven over the years under Alibaba's heavy traffic, including the traffic on the Double 11 Shopping Festival.

Offline resource scheduler – Fuxi

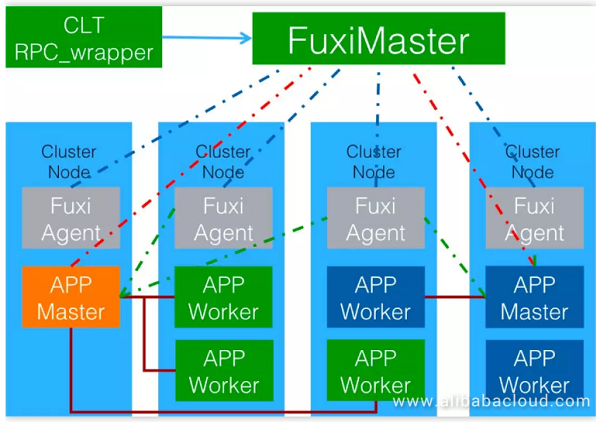

The offline resource scheduler implements hierarchical job scheduling, dynamic memory overselling, and lossy or lossless offline service downgrade solutions.

The preceding figure shows the operation of the offline resource scheduler Fuxi. Fuxi implements scheduling based on jobs and provides a data-driven, multi-level, pipelined, and parallel computing framework for complex applications that require mass data processing and large-scale computing.

Fuxi is compatible with multiple programming models, including MapReduce, Map-Reduce-Merge, Cascading, and FlumeJava. It also features high scalability, supports scheduling of hundreds of thousands of parallel tasks, and can optimize the network overhead based on the data distribution.

Unified resource scheduling – level 0

In a colocation environment, resources are scheduled and allocated for offline and online services by their own schedulers, respectively. A unified resource scheduling level, level 0, is also available under the level-1 schedulers. The level-0 scheduler is responsible for coordination and arbitration of online and offline service resources, and implements monitoring and decision making to allocate resources properly. The following figure shows the overall architecture for colocation resource scheduling.
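As a complement to the architecture view, the arbitration role of level 0 can be illustrated with a minimal rule: when the two level-1 schedulers together ask for more than a server has, the online request is satisfied first and the offline request receives the remainder. The actual decision logic is not described in this article, so this rule and the name `arbitrate` are assumptions that follow the stated principle that online services have higher priority.

```python
def arbitrate(capacity_cores, online_request, offline_request):
    """Split one server's cores between the two level-1 schedulers.

    Online (Sigma) is granted first; offline (Fuxi) receives what is left.
    """
    online_grant = min(online_request, capacity_cores)
    offline_grant = min(offline_request, capacity_cores - online_grant)
    return {"Sigma": online_grant, "Fuxi": offline_grant}


print(arbitrate(capacity_cores=32, online_request=8, offline_request=32))   # daily
print(arbitrate(capacity_cores=32, online_request=28, offline_request=32))  # online peak
```

Each level-1 scheduler may still believe it owns the whole server; the arbiter is the only place where the two views are reconciled.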

The colocation technology will evolve towards three directions in the future: large-scale, diversified, and refined.

Large-scale colocation: In 2018, colocation will be deployed at a scale of tens of thousands of servers. It is expected to become a basic capability for resource delivery within Alibaba Group to save more resource costs.

Diversified colocation: In the future, the colocation technology is expected to support more service types, more hardware resource types, and more complex environments. Even better, colocation can integrate resources over the cloud, Alibaba Cloud, and internal resources of Alibaba Group.

Refined colocation: In the future, colocation is expected to have more detailed service resource portraits, better real-time scheduling performance, higher scheduling precision, more refined kernel isolation, and real-time and more precise monitoring and O&M management.
