Neural networks and deep learning underpin most of today's advanced intelligent applications. In this article, Dr. Sun Fei (Danfeng), a senior algorithm expert from Alibaba's Search Department, gives a brief overview of the evolution of neural networks and discusses the latest approaches in the field. The article is primarily centered on the following five items:

Before we dive into the historical development of neural networks, let's first introduce the concept. A neural network is a computing model that simulates, in highly simplified form, how the human brain works. The model consists of a large number of computational neurons organized in layers and joined by weighted connections. The neurons within a layer can compute in parallel on a large scale, and each layer passes its results on to the next.

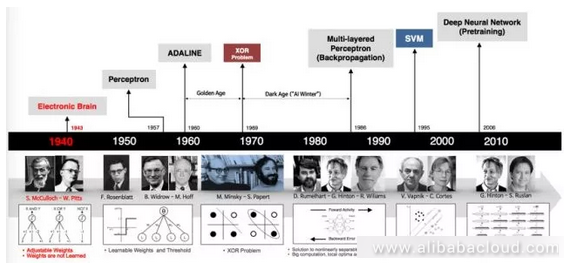

The timeline below shows the evolution of neural networks:

The origin of neural networks goes back to even before the development of computing itself, with the first neural networks appearing in the 1940s. We will go through a bit of history to help everyone gain a better understanding of the basics of neural networks.

The first generation of neurons served as a proof of concept. Their designers simply wanted to confirm that neurons could be assembled into networks that compute. These networks could not be trained and did not learn; they simply acted as logic gate circuits. Their inputs and outputs were binary, and their weights were predefined.

The second phase of neural network development came in the 1950s and 1960s. This phase is associated with Rosenblatt's seminal work on the perceptron and Hebb's work on learning principles.

The perceptron is similar to the neuron model described above, but with some key differences. Its activation function can be either a step function or a sigmoid function, and its input can be a real-valued vector rather than the binary vector used by the neuron model. Unlike the neuron model, the perceptron is capable of learning. Next, we look at some of its special characteristics one by one.

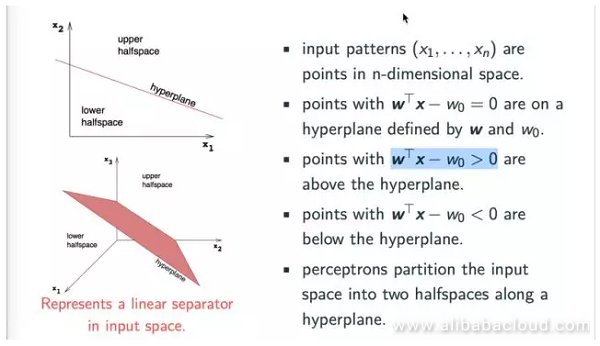

We can think of the input $(x_1, \dots, x_n)$ as a point in $n$-dimensional space, while $w^T x - w_0 = 0$ is a hyperplane in that space. If $w^T x - w_0 < 0$, the point falls on one side of the hyperplane (below it); if $w^T x - w_0 > 0$, it falls on the other side (above it).

The perceptron therefore corresponds to a hyperplane that acts as a classifier, separating different types of points in the input space. As the figure below shows, the perceptron is a linear classifier.

The perceptron can easily classify basic logical operations such as AND, OR, and NOT.

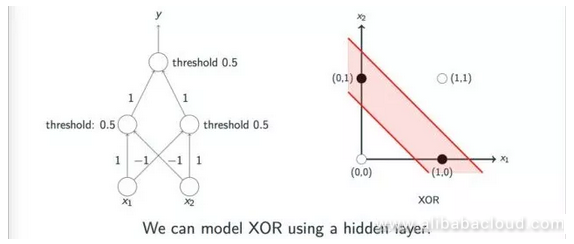
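To make this concrete, here is a minimal sketch of a single linear unit of the form step(w^T x - w0) acting as each of the three gates. The weights and thresholds are hand-picked for illustration (they are assumptions, not values taken from the figure), and the function names are hypothetical.

```python
def step(z):
    """Step activation: 1 if z is positive, otherwise 0."""
    return 1 if z > 0 else 0


def linear_unit(weights, threshold, inputs):
    """Compute step(w^T x - w0) for one input vector."""
    z = sum(w * x for w, x in zip(weights, inputs)) - threshold
    return step(z)


# Hand-picked (not learned) weights and thresholds, chosen for illustration.
def and_gate(a, b):
    return linear_unit([1.0, 1.0], 1.5, [a, b])


def or_gate(a, b):
    return linear_unit([1.0, 1.0], 0.5, [a, b])


def not_gate(a):
    return linear_unit([-1.0], -0.5, [a])


if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, and_gate(a, b), or_gate(a, b))
    print(not_gate(0), not_gate(1))
```

Each gate is just a different hyperplane: changing only the threshold turns AND into OR, which is why one linear unit is enough for these operations.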

Can a perceptron classify all logical operations? Of course not. For example, exclusive OR (XOR) cannot be classified by a single linear perceptron, because no single hyperplane separates its two classes. This is one of the main reasons neural networks entered a low point soon after their first peak. Several authors, including Minsky, discussed this limitation of the perceptron. However, many people misunderstood what they had written.

In reality, authors like Minsky pointed out that exclusive OR could be implemented with multiple layers of perceptrons; however, since the academic world lacked effective methods for training multi-layer perceptrons at the time, the development of neural networks dipped into its first low point.

The figure below shows intuitively how multiple layers of perceptrons can implement exclusive OR:

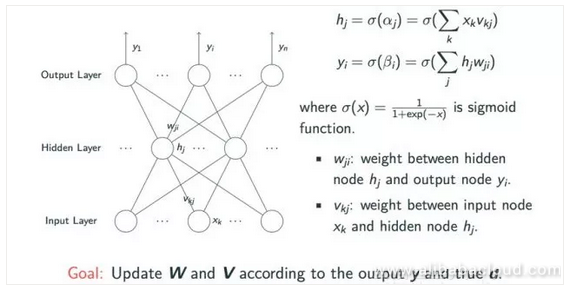
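One possible two-layer construction is sketched below. It assumes the decomposition XOR(a, b) = AND(OR(a, b), NAND(a, b)) with hand-picked weights; the figure may use a different but equivalent arrangement, and the function names are hypothetical.

```python
def linear_unit(weights, threshold, inputs):
    """Compute step(w^T x - w0): 1 if the weighted sum exceeds the threshold."""
    z = sum(w * x for w, x in zip(weights, inputs)) - threshold
    return 1 if z > 0 else 0


def xor_gate(a, b):
    # Hidden layer: two linear units looking at the same inputs.
    h_or = linear_unit([1.0, 1.0], 0.5, [a, b])        # OR
    h_nand = linear_unit([-1.0, -1.0], -1.5, [a, b])   # NAND
    # Output layer: AND of the two hidden values.
    return linear_unit([1.0, 1.0], 1.5, [h_or, h_nand])


if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, xor_gate(a, b))
```

The hidden layer maps the four input points into a space where a single hyperplane can separate them, which is what one layer alone cannot do.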

In the 1980s, because the expressive power of single-layer perceptron networks was limited to linear classification, neural network research moved on to multi-layer perceptrons. The classic multi-layer network is the feed-forward neural network.

We can see from the figure below that it involves an input layer, a hidden layer with an arbitrary number of nodes, and an output layer.

A multi-layer perceptron can express any logical operation, but it introduces the problem of learning the weights between the three layers. Each input $x_k$ is multiplied by the weight $v_{kj}$ on its connection to the hidden layer; the weighted inputs are summed and passed through an activation function such as sigmoid to give the hidden-layer value $h_j$. Likewise, the output-layer value $y_i$ is computed from the $h_j$ values using the weights $w$. Learning means finding the weights in the $w$ and $v$ matrices so that the estimated value $y$ comes as close as possible to the actual value $d$.

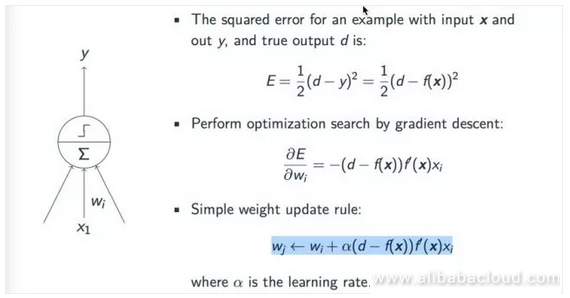
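The forward computation described above can be sketched in a few lines. This is a minimal illustration that assumes sigmoid activations on both layers and omits bias terms for brevity; the index layout `v[k][j]` and `w[j][i]` is an assumption chosen to match the symbols in the text.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(x, v, w):
    """Forward pass of a three-layer feed-forward network.

    x: list of K input values
    v: K x J weights, v[k][j] connects input k to hidden node j
    w: J x I weights, w[j][i] connects hidden node j to output node i
    Returns (h, y): hidden-layer values and output-layer values.
    """
    n_hidden = len(v[0])
    n_out = len(w[0])
    h = [sigmoid(sum(x[k] * v[k][j] for k in range(len(x))))
         for j in range(n_hidden)]
    y = [sigmoid(sum(h[j] * w[j][i] for j in range(n_hidden)))
         for i in range(n_out)]
    return h, y


if __name__ == "__main__":
    hidden, output = forward([1.0, 0.0], [[0.5, -0.5], [0.3, 0.8]], [[1.0], [-1.0]])
    print(hidden, output)
```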

If you have a basic understanding of machine learning, you will know why we use gradient descent to learn a model. The principle behind applying gradient descent to the perceptron is fairly simple, as we can see from the figure below. First we have to define the model's loss.

The example uses a squared-error loss and seeks to close the gap between the estimated value $y$ and the real value $d$. For computational convenience, we usually include a factor of one half, which cancels when differentiating: $E = \frac{1}{2}(d - y)^2 = \frac{1}{2}(d - f(x))^2$.

According to the gradient descent principle, the weight update rule is $w_i \leftarrow w_i + \alpha (d - f(x)) f'(x) x_i$, where $\alpha$ is the learning rate, which we can adjust manually.
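As an illustration of this update rule, the sketch below trains a single sigmoid unit on the AND function. Several details are assumptions made for the example: the sigmoid activation (so that f' = y(1 - y)), a bias handled as a constant first input of 1, and the learning rate and epoch count.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(w, x):
    """Output y = f(w^T x) of a single sigmoid unit."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)))


def train(samples, alpha=0.5, epochs=5000):
    """Apply w_i <- w_i + alpha * (d - y) * f'(z) * x_i once per sample, per epoch."""
    w = [0.0] * len(samples[0][0])
    for _ in range(epochs):
        for x, d in samples:
            y = predict(w, x)
            # For the sigmoid, f'(z) = y * (1 - y).
            term = (d - y) * y * (1.0 - y)
            w = [wi + alpha * term * xi for wi, xi in zip(w, x)]
    return w


# Each input is [1 (bias), x1, x2]; the target d is AND(x1, x2).
AND_SAMPLES = [
    ([1.0, 0.0, 0.0], 0.0),
    ([1.0, 0.0, 1.0], 0.0),
    ([1.0, 1.0, 0.0], 0.0),
    ([1.0, 1.0, 1.0], 1.0),
]

weights = train(AND_SAMPLES)

if __name__ == "__main__":
    for x, d in AND_SAMPLES:
        print(x[1:], d, round(predict(weights, x), 3))
```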

How do we learn all of the parameters in a multi-layer feed-forward neural network? The parameters of the top layer are easy to obtain: compare the model's estimated output with the real value and apply gradient descent to the difference. The problem comes with the hidden layer. Even though we can compute the hidden layer's output, we have no way of knowing what its expected value should be, so we cannot train a multi-layer network this way. This issue plagued researchers for a long time and contributed to the stagnation of neural networks after the 1960s.

Later, in the 1970s, a number of scientists independently introduced the idea of the back-propagation algorithm. The basic idea is actually quite simple. Even though there is no expected value for the hidden layer to update against, the weights into the hidden layer can be updated using the errors passed back from the output layer. When computing a gradient, since each node in the hidden layer is connected to multiple nodes in the output layer, the errors from all of those output nodes are accumulated and processed together.

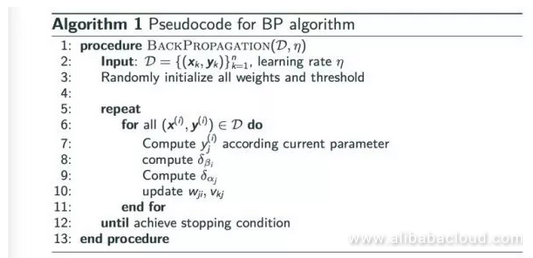

Another advantage of back-propagation is that the gradients and weight updates for nodes in the same layer can be computed at the same time, since they are independent of one another. We can express the entire process of back-propagation in pseudocode as below:

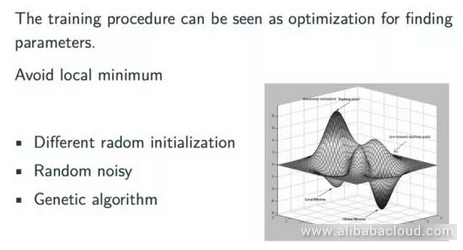
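For readers who prefer runnable code, here is a minimal sketch of one back-propagation step for a three-layer network. It is not a transcription of the pseudocode in the figure: it assumes sigmoid activations, the loss E = 1/2 Σ (d_i - y_i)^2, no bias terms, and hypothetical function names.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(x, v, w):
    """Return hidden values h and outputs y; v is K x J, w is J x I."""
    h = [sigmoid(sum(x[k] * v[k][j] for k in range(len(x))))
         for j in range(len(v[0]))]
    y = [sigmoid(sum(h[j] * w[j][i] for j in range(len(h))))
         for i in range(len(w[0]))]
    return h, y


def loss(x, d, v, w):
    _, y = forward(x, v, w)
    return 0.5 * sum((di - yi) ** 2 for di, yi in zip(d, y))


def backprop(x, d, v, w):
    """Return (grad_v, grad_w), the gradients of the loss for one sample."""
    h, y = forward(x, v, w)
    # Output-layer error: dE/dz_i = -(d_i - y_i) * y_i * (1 - y_i).
    delta_out = [-(d[i] - y[i]) * y[i] * (1.0 - y[i]) for i in range(len(y))]
    # Hidden-layer error: accumulate the errors of every output node
    # that hidden node j feeds into, then apply the sigmoid derivative.
    delta_hid = [
        sum(delta_out[i] * w[j][i] for i in range(len(y))) * h[j] * (1.0 - h[j])
        for j in range(len(h))
    ]
    grad_w = [[h[j] * delta_out[i] for i in range(len(y))] for j in range(len(h))]
    grad_v = [[x[k] * delta_hid[j] for j in range(len(h))] for k in range(len(x))]
    return grad_v, grad_w


def sgd_step(x, d, v, w, alpha=0.1):
    """One gradient descent update of both weight matrices."""
    grad_v, grad_w = backprop(x, d, v, w)
    new_v = [[v[k][j] - alpha * grad_v[k][j] for j in range(len(v[0]))]
             for k in range(len(v))]
    new_w = [[w[j][i] - alpha * grad_w[j][i] for i in range(len(w[0]))]
             for j in range(len(w))]
    return new_v, new_w
```

Note that every entry of `delta_hid` depends only on `delta_out`, not on the other hidden nodes, which is why the nodes of one layer can be processed in parallel.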

Next, let's talk about some other characteristics of back-propagation. Back-propagation is essentially an application of the chain rule, so it generalizes easily to any computation that can be expressed as a graph. Because it follows the gradient, back-propagation yields a locally optimal solution, not necessarily a globally optimal one. In general, however, the solution it finds is satisfactory in practice. The figure below is an intuitive representation of a back-propagation algorithm:

Under most circumstances, back-propagation finds the smallest value within a local region; outside that region there may be an even better value. In practice there are a number of simple and effective ways to address this, for example trying different random initializations. Indeed, for the models commonly used in modern deep learning, the initialization method has a significant influence on the final result. Another way to push the model out of a local optimum is to introduce random noise during training, or to use a genetic algorithm to keep training from stalling at a poor optimum.
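The effect of initialization can be seen even in one dimension. The sketch below is a toy stand-in for a network's loss surface: the function f(x) = x^4 - 3x^2 + x is an assumption chosen because it has one local and one global minimum, and the helper names, step size, and step count are hypothetical.

```python
import random


def f(x):
    """Toy loss with a local minimum near x = 1.13 and a global one near x = -1.30."""
    return x ** 4 - 3 * x ** 2 + x


def grad_f(x):
    return 4 * x ** 3 - 6 * x + 1


def descend(x0, alpha=0.01, steps=2000):
    """Plain gradient descent from the starting point x0."""
    x = x0
    for _ in range(steps):
        x -= alpha * grad_f(x)
    return x


def best_of_restarts(starts):
    """Run gradient descent from every start and keep the lowest-loss result."""
    candidates = [descend(x0) for x0 in starts]
    return min(candidates, key=f)


def random_starts(n, low=-2.0, high=2.0, seed=0):
    """Draw n random starting points; the seed only makes runs repeatable."""
    rng = random.Random(seed)
    return [rng.uniform(low, high) for _ in range(n)]


if __name__ == "__main__":
    print("single start at 2.0:", descend(2.0))
    print("best of 10 random starts:", best_of_restarts(random_starts(10)))
```

A single run started on the right-hand side settles in the shallower minimum, while keeping the best of several starts makes it much more likely that at least one run reaches the deeper one.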

Back-propagation neural networks are an excellent machine learning model, and any discussion of machine learning has to address a basic issue that comes up again and again: overfitting. A common sign of overfitting is that the training loss keeps dropping while the loss and error on the test set rise. There are two typical methods to avoid overfitting:

Even though neural networks were very popular during the 1980s, they entered another low point in the 1990s. A number of factors contributed. For example, Support Vector Machines, a popular model in the 1990s, took the stage at major conferences and found application in a variety of fields. Support Vector Machines rest on a rigorous statistical learning theory and are easy to understand intuitively. They are also very effective and produce near-ideal results.

Amid this shift, the rise of the statistical learning theory behind Support Vector Machines put considerable pressure on neural network research. Meanwhile, from the perspective of neural networks themselves, even though back-propagation can in theory train any neural network, in practice the difficulty of training grows exponentially as the number of layers increases. For example, in the early 1990s, people noticed that networks with a relatively large number of layers commonly suffered from vanishing or exploding gradients.

A simple example of a vanishing gradient: suppose every layer in the network uses a sigmoid activation. During back-propagation, the gradient is then a chain of sigmoid derivatives multiplied together. The derivative of the sigmoid is never larger than 0.25, so when a series of these factors is strung together the gradient becomes smaller and smaller, and in practice it all but disappears after only a few layers. Vanishing gradients cause the parameters in the layers furthest from the output to stop changing, making it very difficult to get meaningful results. This is one of the reasons that a multi-layer neural network can be very difficult to train.

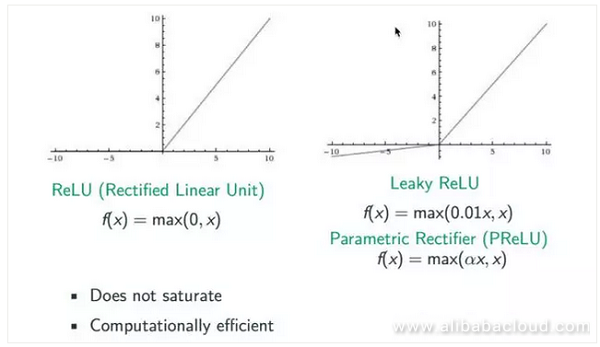
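The shrinking effect is easy to reproduce numerically. The sketch below multiplies sigmoid derivatives along a chain of layers; it assumes, for simplicity, that all weights are 1 so that only the activation derivatives matter, and the function names are hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def sigmoid_grad(z):
    """Derivative of the sigmoid; its maximum value is 0.25, reached at z = 0."""
    s = sigmoid(z)
    return s * (1.0 - s)


def chained_gradient(pre_activations):
    """Product of sigmoid derivatives, one factor per layer (weights assumed 1)."""
    g = 1.0
    for z in pre_activations:
        g *= sigmoid_grad(z)
    return g


if __name__ == "__main__":
    for depth in (1, 2, 5, 10):
        # z = 0 is the best case for the sigmoid, so real networks shrink faster.
        print(depth, chained_gradient([0.0] * depth))
```

Even in the best case, where every factor is 0.25, ten layers scale the gradient by less than one millionth.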

The academic world has studied this issue in depth and concluded that the easiest way to handle it is to change the activation function. The first approach was the rectified linear unit (ReLU), since the sigmoid is an exponential-based function that easily leads to vanishing gradients. ReLU replaces the sigmoid function with max(0, x). From the figure below we can see that the gradient for inputs above 0 is 1, which prevents the gradient from vanishing. However, when the input is below 0 the gradient is 0, so ReLU is not perfect either. Later, a number of improved variants came out, including Leaky ReLU and the Parametric Rectifier (PReLU). When the input x is below 0, these multiply it by a small coefficient, such as a fixed 0.01 or a learned α, so that the gradient is never exactly 0.
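A minimal sketch of these activations and their gradients follows. The function names are hypothetical, the gradient at exactly x = 0 is set to the negative-side value by convention, and PReLU is represented simply by passing a different `slope`, since learning α is outside the scope of this example.

```python
def relu(x):
    return max(0.0, x)


def relu_grad(x):
    """Gradient of ReLU: 1 for positive inputs, 0 otherwise."""
    return 1.0 if x > 0 else 0.0


def leaky_relu(x, slope=0.01):
    """Leaky ReLU; with a learned slope this has the same form as PReLU."""
    return x if x > 0 else slope * x


def leaky_relu_grad(x, slope=0.01):
    """Gradient of Leaky ReLU: 1 for positive inputs, `slope` otherwise."""
    return 1.0 if x > 0 else slope


if __name__ == "__main__":
    for x in (-2.0, 0.5):
        print(x, relu(x), relu_grad(x), leaky_relu(x), leaky_relu_grad(x))
```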

As neural networks developed, a number of methods emerged that address gradient propagation at the structural level. For example, the Metamodel, the LSTM model, and modern image analysis networks use cross-layer connections to propagate gradients more easily.
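Why a cross-layer link helps can be shown with a scalar toy chain. The sketch below assumes an additive skip connection, h_next = h + sigmoid(w * h), as one common form of cross-layer link; the text does not specify which form the models above use, and the function name and parameter values are hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def end_to_end_gradient(h0, w, depth, skip):
    """Gradient of the last layer's value with respect to h0 in a scalar chain.

    Without skip: h_next = sigmoid(w * h)
    With skip:    h_next = h + sigmoid(w * h)
    """
    h, grad = h0, 1.0
    for _ in range(depth):
        s = sigmoid(w * h)
        local = w * s * (1.0 - s)      # derivative of sigmoid(w * h) w.r.t. h
        if skip:
            h = h + s
            grad *= 1.0 + local        # the identity path contributes the 1
        else:
            h = s
            grad *= local
    return grad


if __name__ == "__main__":
    print("plain:", end_to_end_gradient(0.5, 1.0, 20, skip=False))
    print("skip: ", end_to_end_gradient(0.5, 1.0, 20, skip=True))
```

With the skip connection, every layer's local derivative is at least 1 for a positive weight, so the product cannot shrink toward zero the way the plain chain does.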
