Every day, the Alibaba economy serves millions of customers seeking help through Customer Services. Although bots offer powerful capabilities, human customer service agents remain irreplaceable and play an essential role in ensuring a positive customer experience. The CCO Technology Department of the Alibaba New Retail Technology business unit developed an assistant called Alibaba Customer Services Assistant, which implements human-machine collaboration for better customer service.

Facing an economic downturn, enterprises in all industries seek to optimize efficiency, especially in customer service. Employees who are new to the customer service domain generally work less efficiently and perform worse, which in turn degrades the user experience.

The Alibaba intelligent customer services workbench supports the customer services of the majority of Alibaba economy BUs and an increasing number of large external enterprises. Improving efficiency is now a major challenge that technicians must solve. Raising efficiency through better tools and technical means not only cuts costs but also improves the satisfaction of customer service employees.

When it comes to video games, you can boost the strength of your character in two ways, either by using plugins or by training it with more challenges.

This idea also applies to the improvement of customer service.

(1) Build an assistant to implement human-machine collaboration to help new agents with their tasks and reduce their workload.

(2) The major reason why new agents are less productive is that they lack hands-on experience. Therefore, build a training bot that emulates member calls and trains agents in both online and hotline scenarios, so that new agents learn faster.

This article focuses on the first aspect and introduces the smart solution of Customer Services Assistant to enhance the quality of customer service.

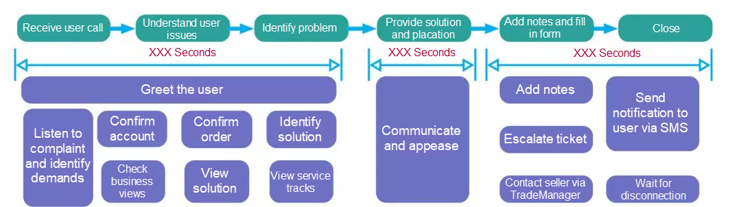

The following figure summarizes the process of a typical customer service call after the observation of over 500 customer service operations in fifteen days.

Phase 1 is the most crucial part since the opening determines the success or failure of a conversation. Observation shows that in 80% of conversations, agents follow three steps:

1) Confirm the name of the member

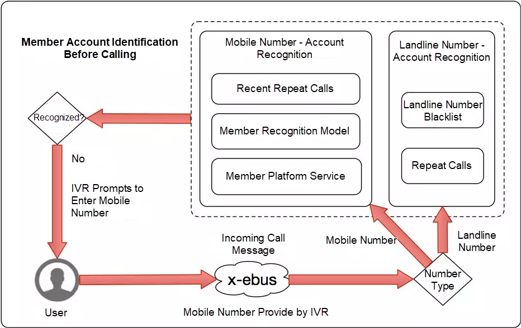

The first task when a member call comes in is to confirm the member's name. The automatic member recognition functionality, which is based on the phone numbers of incoming calls, correctly recognizes only 55% of calling members. First, landline numbers are excluded from automatic recognition because they account for a very small portion of total calls. Second, for mobile numbers, the member platform is invoked to obtain the member information attached to each mobile number. However, the recognition rate is still low for a variety of reasons. For example, a caller may have multiple mobile numbers and member accounts, or may use a family member's phone. When recognition fails, the agent asks the caller for the member name. The confirmation process takes anywhere from seconds to minutes depending on the complexity of the member name, which ranges from a simple combination of letters and digits to obscure Chinese characters.

2) Confirm the order ID

The next step is to confirm the order ID. Most members call about order issues, so agents must locate the right order before they can resolve the problem. With a growing list of orders, finding the right one is very time-consuming, so agents usually ask callers for the order ID. An order ID consists of a long string of digits, will probably grow longer in the future, and is very inconvenient to read out and note down. Things get worse when the mobile phone signal degrades during a call, from 4G to 3G for instance, which makes it take even longer to find the order.

3) Identify demands and find the solution

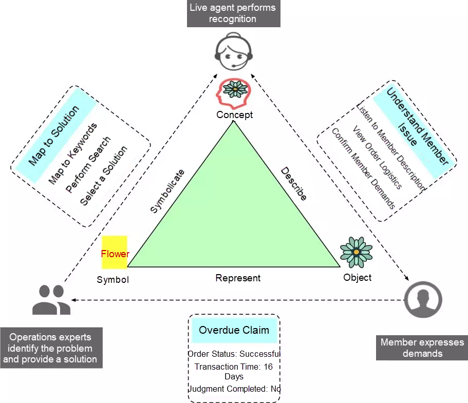

Currently, solutions are configured by operations experts while agents directly interact with members. Relying on experience, agents need to go through two mappings before translating members' concrete descriptions into final solutions, as shown in the following diagram.

Identify Demands: A conversation is a translation of high-dimensional ideas into low-dimensional information. Humans understand the meaning of words by interpreting. If agents only rely on the literal meaning of conversations to infer callers' demands, they may get distorted results. In addition to relying on experience, agents need reference information from multiple channels including various business views to identify callers' real demands.

Find the Solution: After identifying the member's real demands, the agent maps this understanding to keywords, searches with the keywords, and eventually selects the solution that seems most helpful from the search results.

For instance, Taobao businesses are complex and provide numerous data views and solutions. For a newcomer in customer services, the two mappings require considerable time to learn and improve. This makes the process inefficient and leads to wrong solutions that degrade the member experience.

Overlapping with Phase 1 and Phase 3, Phase 2 involves communication as well as reassurance and takes a longer time. In this process, agents need to focus and multitask. It is common for a hotline agent to add notes, chat with the member, look for a solution and placate the member at the same time. Generally lacking this concurrent operations ability, new agents may easily miss some descriptions during multitasking and ask callers to repeat the information, which decreases service efficiency and quality. In the online scenario, agents have to spend a lot of time placating members after they deliver solutions. When an agent concurrently serves several members at different stages, the agent needs to switch frequently between them, which is very costly. Additionally, if the agent fails to give quick responses, the member's experience is negatively impacted.

Phase 3 includes customer service operations such as creating a service summary, creating escalation tickets, and sending SMS notifications. All these operations require a lot of input from agents. Take creating a service summary as an example. Agents need to copy member names, order IDs, and solutions from different locations. When creating an escalation ticket, agents need to scroll through a very long drop-down list to find the appropriate category. These tedious manual operations increase the talk time and agent effort. In online interactions, the agent often waits until the system ends the session on timeout, even though the member left the conversation long before. This wastes manpower and reduces efficiency.

After outlining the core issues in each phase that affect the efficiency of live agents, now, let's understand how Alibaba Customer Services Assistant approaches these problems.

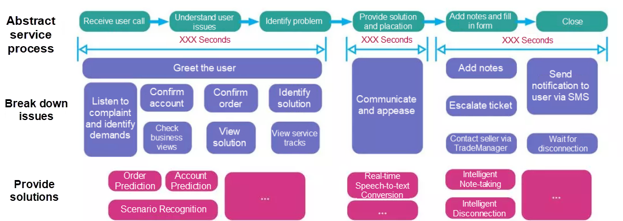

In Phase 1, the major objective of Customer Services Assistant is to help agents quickly identify problems. To that end, it builds the following capabilities:

1) Member Recognition (increases member recognition coverage).

2) Order Recognition (assists agents in identifying orders).

3) Scenario Recognition (assists agents in identifying solutions).

Customer Services Assistant aims to free agents from frantic multitasking so they handle calls with ease and confidence, only having to confirm several pieces of information. This phase is where Customer Services Assistant plays the biggest role.

In Phase 2, Customer Services Assistant aims to make communication and placation easier and enhance the ability of concurrent operations. In the hotline scenario, Customer Services Assistant offers real-time speech-to-text capability to show agents the caller utterances sentence by sentence in real-time, enhancing the concurrent operations ability of hotline agents to avoid repetitive communication.

In Phase 3, the Customer Services Assistant automates note-adding and form-filling (intelligent summary) to make question recording easier. In the online scenario, the Customer Services Assistant provides intelligent disconnection recognition capability to determine whether members left conversations, reducing agents' wait time.

An event-driven Customer Services Assistant framework helps to quickly integrate these intelligent capabilities into the Customer Services Assistant and include them within the existing service systems.
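The article does not describe the framework's internals, so the following is only a minimal sketch of what an event-driven integration could look like: capabilities subscribe to call events, and the workbench publishes events as the call progresses. The class `AssistantEventBus`, the event name `call_started`, and both handler functions are hypothetical names chosen for illustration.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Handler = Callable[[dict], dict]


class AssistantEventBus:
    """Hypothetical minimal event bus: capabilities subscribe to call events."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event_type: str, payload: dict) -> List[dict]:
        # Every subscribed capability receives the payload and returns a suggestion.
        return [handler(payload) for handler in self._handlers[event_type]]


def recognize_member(payload: dict) -> dict:
    # Placeholder capability; a real one would call the recognition models.
    return {"capability": "member_recognition", "phone": payload["phone"]}


def predict_order(payload: dict) -> dict:
    # Placeholder capability for pre-call order prediction.
    return {"capability": "order_recognition", "phone": payload["phone"]}


bus = AssistantEventBus()
bus.subscribe("call_started", recognize_member)
bus.subscribe("call_started", predict_order)
suggestions = bus.publish("call_started", {"phone": "13800000000"})
```

With this shape, adding a new capability means registering one more handler, and the existing service systems only need to publish events.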

This section focuses on how to implement the strategy. Though this article does not elaborate on each feature, as there are many, it describes the solutions for some of the problems described in Phase 1.

For the small set of landline calls, matches are made based on the Historical Repeat Calls data (agents confirm the member name in each call and enter the phone number-member name pair into the database). With this approach, over half of these calls are successfully recognized. In addition, telephone numbers that cause frequent recognition faults, mostly public telephones, are blacklisted and excluded from recognition.

For the majority of mobile calls, first apply the Repeat Calls in 24 Hours data, because callers who call from the same mobile number within 24 hours are very likely to be the same member. But what about the remaining calls? Do we resort to the member platform interface? The answer is no. For such calls, use models to perform filtering. Simply put, use a same-person recognition model to find the member names attached to a mobile number, and then use a member recognition model to identify the member name that is most likely to be the caller.

The model works as follows:

For example, with member names A and B found, the model determines B is more likely to be the caller because A hasn't bought anything within the recent year while B recently placed an order for which a refund is pending. Finally, use the member platform as a last resort.
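The A-versus-B example can be illustrated with a small ranking sketch. The article's member recognition model is a trained model whose features and weights are not given, so the hand-written score below is only a stand-in. The `MemberCandidate` fields and the weights are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class MemberCandidate:
    name: str
    days_since_last_order: Optional[int]  # None if no order is on record
    has_pending_refund: bool


def caller_likelihood(c: MemberCandidate) -> float:
    """Hand-written stand-in score; the real model is learned, not rule-based."""
    score = 0.0
    if c.days_since_last_order is not None and c.days_since_last_order <= 365:
        score += 1.0  # purchased something within the last year
    if c.has_pending_refund:
        score += 2.0  # an open issue is a strong reason to call
    return score


def most_likely_caller(candidates: List[MemberCandidate]) -> Optional[MemberCandidate]:
    """Return the highest-scoring candidate, or None if there are none."""
    if not candidates:
        return None
    return max(candidates, key=caller_likelihood)


a = MemberCandidate("A", days_since_last_order=500, has_pending_refund=False)
b = MemberCandidate("B", days_since_last_order=3, has_pending_refund=True)
best = most_likely_caller([a, b])
```

Here `best` is candidate B, matching the reasoning in the example above.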

After all these measures, a few calls still remain unrecognized. In this case, users are prompted by Interactive Voice Response (IVR) to enter their mobile numbers used for member registration, which is optional. If a mobile number is entered, it will be used as an input parameter. After multiple iterations, the member recognition rate increases to 90% from 55%.
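The recognition steps described above form a fallback chain: each source is tried in priority order and the first match wins. The sketch below shows that control flow only. The in-memory dictionaries stand in for the Repeat Calls data, the model output, and the member platform, and all names and phone numbers are made up.

```python
from typing import Callable, List, Optional, Set

# Each recognizer takes a phone number and returns a member name or None.
Recognizer = Callable[[str], Optional[str]]


def recognize_member(
    phone: str, recognizers: List[Recognizer], blacklist: Set[str]
) -> Optional[str]:
    """Try recognizers in priority order and return the first match."""
    if phone in blacklist:
        return None  # e.g. public telephones that cause frequent recognition faults
    for recognizer in recognizers:
        member = recognizer(phone)
        if member is not None:
            return member
    return None  # caller falls through to the IVR prompt or to the agent


# Hypothetical in-memory stand-ins for the data sources named in the article.
repeat_calls_24h = {"13800000001": "member_x"}
model_predictions = {"13800000002": "member_y"}
member_platform = {"13800000003": "member_z"}

pipeline = [repeat_calls_24h.get, model_predictions.get, member_platform.get]
blacklist = {"02100000000"}
```

Calling `recognize_member("13800000003", pipeline, blacklist)` only reaches the member platform after the two cheaper sources return nothing, which mirrors the "last resort" ordering.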

The objective is to reduce the time spent asking for an order ID. Therefore, as soon as a call comes in, the Customer Services Assistant must predict which order the caller has a problem with and show it to the agent.

AlimeBot has a built-in order recognition feature, which is used as the preferred solution, but it covers only 30% of calls. To improve coverage, we try to get the most out of data, such as the Repeat Calls data from different sources. For example, if a member called yesterday to inquire about an order and calls again today, there is a high probability (over 90%) that the member will ask about the same order. The order recognition model uses this data for training. After multiple iterations, the pre-call order recognition coverage reaches 50% with 90% accuracy.
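The repeat-call signal can be sketched as a simple lookup. In the article this data feeds a trained order recognition model; the rule below only illustrates the underlying idea. The record layout, the function name, and the one-day window are assumptions.

```python
from datetime import datetime, timedelta
from typing import List, Optional, Tuple

# (member_name, order_id, call_time) for past calls; all values are made up.
CallRecord = Tuple[str, str, datetime]


def predict_order_from_repeat_call(
    member: str,
    now: datetime,
    history: List[CallRecord],
    window: timedelta = timedelta(days=1),  # assumed window length
) -> Optional[str]:
    """Return the order from the member's most recent call inside the window."""
    recent = [
        (call_time, order_id)
        for name, order_id, call_time in history
        if name == member and timedelta(0) <= now - call_time <= window
    ]
    if not recent:
        return None
    return max(recent)[1]  # the latest call wins


now = datetime(2020, 1, 22, 10, 0)
history = [
    ("member_x", "order_1", now - timedelta(hours=30)),
    ("member_x", "order_2", now - timedelta(hours=5)),
    ("member_y", "order_3", now - timedelta(hours=1)),
]
predicted = predict_order_from_repeat_call("member_x", now, history)
```

A prediction of `None` means the call is not covered by this signal and another approach has to take over.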

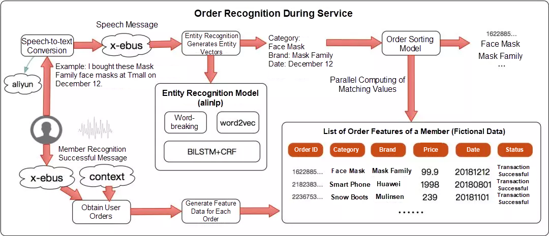

Now consider the calls that are not covered by the recognition feature. Rather than spending effort to get order IDs from callers, it is more convenient to ask for information such as product names, prices, and dates of purchase, which helps identify orders. This enables order recognition during a call.

In practice, Intelligent Speech Interaction of Alibaba's DAMO Academy converts the caller's utterances to text in real time, from which an entity recognition model (BiLSTM + CRF) extracts entity features including categories, brands, and dates. A machine learning ranking model then calculates matching scores against all orders and displays the top-ranking order. After multiple iterations, the order recognition coverage during calls reaches 20% with 89% accuracy.
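The matching step can be sketched as follows, assuming the entities have already been extracted from the transcript. The article uses a trained ranking model; the count-based score here is a toy replacement, and the `Order` fields, entity keys, and sample data are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, List, Optional


@dataclass
class Order:
    order_id: str
    category: str
    brand: str
    purchased_on: date


def match_score(order: Order, entities: Dict[str, object]) -> int:
    """Count how many extracted entities agree with the order (toy scoring)."""
    score = 0
    if entities.get("category") == order.category:
        score += 1
    if entities.get("brand") == order.brand:
        score += 1
    if entities.get("date") == order.purchased_on:
        score += 1
    return score


def top_order(orders: List[Order], entities: Dict[str, object]) -> Optional[Order]:
    """Return the best-matching order, or None when nothing matches at all."""
    if not orders:
        return None
    best = max(orders, key=lambda o: match_score(o, entities))
    return best if match_score(best, entities) > 0 else None


orders = [
    Order("1001", "shoes", "BrandA", date(2020, 1, 10)),
    Order("1002", "phone", "BrandB", date(2020, 1, 15)),
]
entities = {"category": "phone", "brand": "BrandB"}
best = top_order(orders, entities)
```

Returning `None` when no entity matches keeps the assistant from showing the agent an arbitrary order.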

As mentioned earlier in this article, to reconstruct a member's real demands, the agent needs to both interpret literal meanings and refer to various business views. After that, the agent searches with keywords and selects a solution.

To streamline these steps, we developed a scenario recognition engine that monitors corpus information in real time, captures contextual data including orders, tickets, and historical calls, recognizes member intents and data factors, and eventually matches and enables solutions. All of these actions happen automatically while a customer service call or online session is taking place.

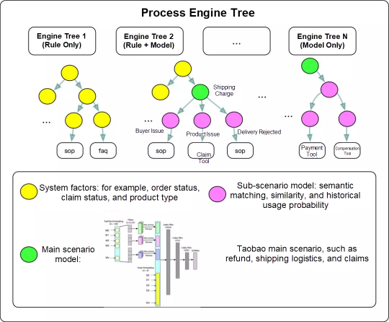

The challenge here is the complexity of the business. In Taobao businesses, agents have hundreds of solutions available, which frequently overlap with each other or have parent-child relationships. As a result, classifying directly over these solutions leads to low recognition accuracy. Beyond Taobao, we hope that economy BUs and external enterprises using the Alibaba intelligent customer service platform can use the engine out of the box. The engine uses a layered scenario recognition model to address the accuracy problem.

The following figure shows the main business scenarios, which are used to train the model to guarantee accuracy. Sub-scenarios (slots) are recognized using simple approaches, including lightweight semantic matching and similarity computing. With a simple configuration of data factors or intent factors specific to tenants or businesses, the engine accurately recognizes scenarios without requiring intervention from developers.
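As a rough illustration of the lightweight second layer, the sketch below picks a sub-scenario by token-overlap (Jaccard) similarity against configured example phrases, assuming the main scenario has already been predicted by the trained model. The article does not specify which similarity measure is used. The configuration layout, the threshold, and all scenario names are hypothetical.

```python
from typing import Dict, List, Optional


def jaccard(a: str, b: str) -> float:
    """Token-overlap similarity between two phrases."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def recognize_sub_scenario(
    utterance: str,
    main_scenario: str,
    config: Dict[str, Dict[str, List[str]]],
    threshold: float = 0.2,  # assumed cut-off below which nothing is suggested
) -> Optional[str]:
    """Pick the configured sub-scenario whose example phrase matches best."""
    best_name, best_score = None, 0.0
    for name, examples in config.get(main_scenario, {}).items():
        score = max((jaccard(utterance, ex) for ex in examples), default=0.0)
        if score > best_score:
            best_name, best_score = name, score
    return best_name if best_score >= threshold else None


# Hypothetical tenant configuration: main scenario -> sub-scenario -> example phrases.
config = {
    "refund": {
        "refund_not_received": ["refund has not arrived", "where is my refund"],
        "refund_amount_wrong": ["refund amount is wrong"],
    }
}
result = recognize_sub_scenario("my refund has not arrived yet", "refund", config)
```

Because the second layer is driven by configuration rather than training, a tenant can add a sub-scenario by adding example phrases, which fits the goal of avoiding developer intervention.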

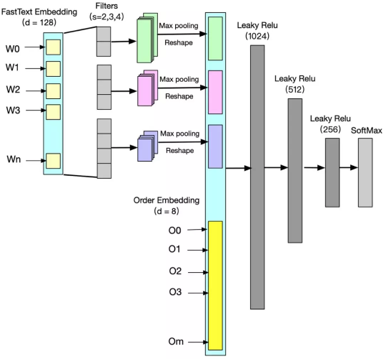

The most basic requirement for the main scenario model is to integrate text features such as real-time speech with business features such as orders. The figure shows one of our early attempts.

After multiple iterations, scenario recognition covers 75% of Taobao businesses with 90% accuracy.

The paradigm shift from human-machine interaction to human-machine collaboration enables the use of Customer Services Assistant across Taobao BUs. Judging from business metrics such as Average Talk Time (ATT), Customer Services Assistant contributes significantly to performance improvement and is quickly approaching the 30% target. It also increased agent satisfaction with platform products by 11%, a record gain.

Instead of replacing human workers, the value of technology lies in coordinating with humans and allowing them to focus on more creative tasks. Going forward, Alibaba will continue to optimize technologies and upgrade products, helping the economy BUs, sellers, and enterprises drive businesses, and making human-machine collaboration an inclusive capability across the service industry.
