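After you connect an application to ARMS Application Monitoring, the ARMS agent automatically instruments common AI frameworks, so trace data is collected without any code changes. If you also want traces to reflect how your business methods execute, you can add loongsuite-util-genai and the OpenTelemetry SDK to your project and add custom instrumentation to your business code. This topic describes how to use loongsuite-util-genai and the OpenTelemetry Python SDK to create custom spans and set custom attributes.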

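For the AI components and frameworks that the ARMS agent supports, see:

Prerequisites

The application is connected to ARMS Application Monitoring.

Add dependencies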

    pip install loongsuite-util-genai

After installation, the opentelemetry.util.genai package and extension interfaces such as ExtendedTelemetryHandler become available. For more information, see the loongsuite-util-genai documentation.

Use loongsuite-util-genai and the OpenTelemetry SDK

With loongsuite-util-genai and the OpenTelemetry SDK, you can:

Create spans that follow GenAI semantics (Entry, Agent, Tool, ReAct Step, and so on).

Create custom spans through OpenTelemetry SDK instrumentation.

Add custom attributes to spans.

Obtain the current trace context and print the traceId.

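Terms

Span: a specific operation within a request, such as one LLM call or one tool execution.

SpanContext: the context of a traced request, which contains information such as the traceId and spanId.

Attribute: an additional field on a span that records key information, such as the model name or token usage.

Handler: the ExtendedTelemetryHandler provided by loongsuite-util-genai, which creates spans that conform to the GenAI semantic conventions.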

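The following table lists all span types that loongsuite-util-genai supports. This topic focuses on Entry, Agent, Tool, and ReAct Step. For details on the other types (Embedding, Retrieval, Rerank, Memory, and so on), see the complete loongsuite-util-genai documentation.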

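| Span type | Operation name | Description |
| --- | --- | --- |
| Entry | | Application entry; carries session_id, user_id, and the complete interaction of the application |
| Agent | | Agent invocation; aggregates token usage |
| Tool | | Tool or function execution |
| Step | | Marks a single ReAct iteration |
| LLM | | LLM chat (usually collected automatically by the agent) |
| Embedding | | Vector embedding |
| Retriever | | Retrieval (RAG) |
| Reranker | | Reranking |
| Memory | | Memory reads and writes |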

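The following steps show how to instrument each type of span, with a standalone code snippet for each step. For complete, runnable sample code, see the appendix at the end of this topic.

Always obtain the Handler instance through get_extended_telemetry_handler() instead of instantiating TelemetryHandler directly. The ARMS agent is adapted only for get_extended_telemetry_handler(); instantiating TelemetryHandler directly may cause environment variable compatibility issues.

When you add custom instrumentation, follow the semantic conventions in the LLM Trace field definitions. The AI application observability features (token statistics, session analysis, and so on) are adapted and rendered based on the definitions in that specification. If span attributes do not conform to it, the related data may not be displayed correctly in the console.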

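1. Obtain the Handler and Tracer

Call get_extended_telemetry_handler() to obtain the singleton Handler of loongsuite-util-genai, and call get_tracer(__name__) to obtain the OpenTelemetry SDK Tracer. The Handler creates GenAI semantic spans, and the Tracer creates custom business spans.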

    from opentelemetry.util.genai.extended_handler import get_extended_telemetry_handler
    from opentelemetry.util.genai.extended_types import (
        ExecuteToolInvocation,
        InvokeAgentInvocation,
    )
    from opentelemetry.util.genai._extended_common import EntryInvocation, ReactStepInvocation
    from opentelemetry.util.genai.types import Error, InputMessage, OutputMessage, Text
    from opentelemetry.trace import get_tracer

    handler = get_extended_telemetry_handler()
    tracer = get_tracer(__name__)

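The Handler can be used in two ways:

Context manager (with handler.entry(inv) and similar): the recommended approach, which manages the span lifecycle automatically.

start/stop/fail API (handler.start_entry(inv) / handler.stop_entry(inv) / handler.fail_entry(inv, error)): for asynchronous, callback, or streaming scenarios where a with statement cannot be used.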
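To make the relationship between the two styles concrete, the following minimal sketch wraps a start/stop/fail trio in a context manager. RecordingHandler and managed_span are hypothetical stand-ins written for this illustration; they are not part of loongsuite-util-genai and do not show how the library implements its own context managers.

```python
from contextlib import contextmanager


class RecordingHandler:
    """Hypothetical stand-in that only records lifecycle calls (not the real Handler)."""

    def __init__(self):
        self.calls = []

    def start(self, name):
        self.calls.append(("start", name))

    def stop(self, name):
        self.calls.append(("stop", name))

    def fail(self, name, error):
        self.calls.append(("fail", name, type(error).__name__))


@contextmanager
def managed_span(handler, name):
    """Start on entry, stop on normal exit, fail and re-raise on error."""
    handler.start(name)
    try:
        yield
    except Exception as exc:
        handler.fail(name, exc)
        raise
    else:
        handler.stop(name)


recorder = RecordingHandler()

# Normal path: start followed by stop.
with managed_span(recorder, "ok"):
    pass

# Error path: start followed by fail; the exception still reaches the caller.
try:
    with managed_span(recorder, "boom"):
        raise ValueError("simulated failure")
except ValueError:
    pass

print(recorder.calls)
```

With the explicit start/stop/fail API, the same three calls are placed by hand, which is what makes that style usable inside generators and callbacks where the span must outlive a single with block.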

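2. Create an Entry Span

Create an Entry Span at the request entry point. It carries session_id and user_id and records the user input in input_messages. After the streaming response completes, concatenate the output, assign it to output_messages, and call stop_entry to end the span. The console then shows the complete input and final output of the request.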

    import json
    import uuid

    # req is the incoming request object, defined by the caller.
    entry_inv = EntryInvocation(
        session_id=req.session_id or str(uuid.uuid4()),
        user_id=req.user_id or "anonymous",
        input_messages=[
            InputMessage(role="user", parts=[Text(content=req.topic)]),
        ],
    )

    def event_generator():
        handler.start_entry(entry_inv)
        output_chunks: list[str] = []
        try:
            for chunk in run_agent_stream(topic=req.topic):
                output_chunks.append(chunk)
                yield f"data: {json.dumps({'content': chunk}, ensure_ascii=False)}\n\n"
            yield "data: [DONE]\n\n"
        except Exception as exc:
            # Mark the Entry Span as failed and report the error to the client.
            handler.fail_entry(entry_inv, Error(message=str(exc), type=type(exc)))
            yield f"data: {json.dumps({'error': str(exc)}, ensure_ascii=False)}\n\n"
            return

        # Streaming finished: attach the concatenated output and end the span.
        entry_inv.output_messages = [
            OutputMessage(
                role="assistant",
                parts=[Text(content="".join(output_chunks))],
                finish_reason="stop",
            ),
        ]
        handler.stop_entry(entry_inv)
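The ordering in the generator above (send every chunk, then assemble the final output once) can be tried in isolation. The sketch below uses only the standard library; sse_stream and its on_complete callback are hypothetical names for this illustration, and the callback marks the point where the real code sets output_messages and ends the Entry Span.

```python
import json
from typing import Callable, Iterable, Iterator


def sse_stream(chunks: Iterable[str], on_complete: Callable[[str], None]) -> Iterator[str]:
    """Yield one SSE frame per chunk, then report the concatenated output once."""
    collected: list[str] = []
    for chunk in chunks:
        collected.append(chunk)
        yield f"data: {json.dumps({'content': chunk}, ensure_ascii=False)}\n\n"
    yield "data: [DONE]\n\n"
    # Reached only after the consumer has pulled the final frame.
    on_complete("".join(collected))


final_outputs: list[str] = []
frames = list(sse_stream(["Hello", ", ", "world"], final_outputs.append))

print(frames[0], end="")
print(final_outputs)
```

Because the code after the last yield runs only when the consumer asks for the next item, a client that stops reading early never triggers the completion step; that is worth keeping in mind when deciding where to end the span.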

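3. Create an Agent Span

Call start_invoke_agent to create an Agent Span that records the agent name, model, and description. The Agent Span is the root GenAI span of the whole call chain: all subsequent ReAct Step, LLM, and Tool spans are its children.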

    invocation = InvokeAgentInvocation(
        provider="dashscope",
        agent_name="TechContentAgent",
        agent_description="Technical content generation assistant",
        request_model="qwen-plus",
    )

    total_input_tokens = 0
    total_output_tokens = 0

    handler.start_invoke_agent(invocation)
    try:
        # ... core agent logic (ReAct loop) ...

        # Write the accumulated token counts before ending the span.
        invocation.input_tokens = total_input_tokens
        invocation.output_tokens = total_output_tokens
        handler.stop_invoke_agent(invocation)
    except Exception as exc:
        # Record the actual exception on the span, then propagate it.
        handler.fail_invoke_agent(invocation, Error(message=str(exc), type=type(exc)))
        raise

After the agent finishes, write the accumulated total_input_tokens and total_output_tokens to the Agent Span so that token usage is aggregated at the agent level.
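How the two totals are accumulated is not shown in the snippet. One possible sketch, assuming each LLM response exposes OpenAI-style usage.prompt_tokens and usage.completion_tokens fields, is given below. accumulate_usage is a hypothetical helper, and the responses are fabricated stand-ins with made-up numbers.

```python
from types import SimpleNamespace


def accumulate_usage(responses):
    """Sum prompt and completion tokens across all rounds of one agent run."""
    total_input_tokens = 0
    total_output_tokens = 0
    for response in responses:
        usage = getattr(response, "usage", None)
        if usage is None:  # a response may carry no usage information
            continue
        total_input_tokens += usage.prompt_tokens
        total_output_tokens += usage.completion_tokens
    return total_input_tokens, total_output_tokens


# Fabricated responses, for illustration only.
rounds = [
    SimpleNamespace(usage=SimpleNamespace(prompt_tokens=120, completion_tokens=30)),
    SimpleNamespace(usage=None),
    SimpleNamespace(usage=SimpleNamespace(prompt_tokens=200, completion_tokens=45)),
]

print(accumulate_usage(rounds))  # (320, 75)
```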

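4. Create a ReAct Step Span

Create a Step Span for each ReAct reasoning iteration and pass in the current round number as round. When the iteration ends, set finish_reason: continue if another iteration is needed, or stop for the final answer. In the example, the LLM call in each iteration is instrumented automatically by the ARMS agent, so no manual span is needed for it.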

    step_inv = ReactStepInvocation(round=iteration + 1)

    handler.start_react_step(step_inv)
    try:
        # The LLM call itself is instrumented automatically by the ARMS agent.
        response = client.chat.completions.create(
            model="qwen-plus",
            messages=messages,
            tools=TOOL_DEFINITIONS,
        )
        # ... process the response ...

        step_inv.finish_reason = "stop"  # or "continue"
        handler.stop_react_step(step_inv)
    except Exception as exc:
        # Record the actual exception on the span, then propagate it.
        handler.fail_react_step(step_inv, Error(message=str(exc), type=type(exc)))
        raise
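The choice between continue and stop usually depends on whether the model asked for tools. A minimal sketch of that decision follows, assuming an OpenAI-style message object with a tool_calls attribute; step_finish_reason is a hypothetical helper and the messages are stand-ins.

```python
from types import SimpleNamespace


def step_finish_reason(message) -> str:
    """Return "continue" when the model requested tool calls, otherwise "stop"."""
    tool_calls = getattr(message, "tool_calls", None)
    return "continue" if tool_calls else "stop"


# Stand-in messages: one requesting a tool, one giving a final answer.
wants_tool = SimpleNamespace(content=None, tool_calls=[SimpleNamespace(id="call_1")])
final_answer = SimpleNamespace(content="Done.", tool_calls=None)

print(step_finish_reason(wants_tool))
print(step_finish_reason(final_answer))
```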

5. Create a Tool Span

When the model returns tool calls, create a Tool Span for each tool_call and record the tool name, call ID, arguments, and result.

    tool_inv = ExecuteToolInvocation(
        tool_name=tool_call.function.name,
        tool_call_id=tool_call.id,
        tool_call_arguments=tool_call.function.arguments,
        tool_type="function",
    )

    handler.start_execute_tool(tool_inv)
    try:
        # Run the tool and record its result on the span.
        result = dispatch_tool(tool_call.function.name, tool_call.function.arguments)
        tool_inv.tool_call_result = result
    except Exception as exc:
        handler.fail_execute_tool(tool_inv, error=Error(message=str(exc), type=type(exc)))
        raise
    else:
        handler.stop_execute_tool(tool_inv)
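dispatch_tool is referenced above but not defined in the snippet. One possible shape, given purely as an assumption, is a registry lookup that parses the JSON argument string and returns a string result. The registry and the two tools (get_weather, add) are hypothetical.

```python
import json
from typing import Any, Callable


def get_weather(city: str) -> str:
    """Hypothetical tool returning a canned answer."""
    return f"Sunny in {city}"


def add(a: int, b: int) -> dict:
    """Hypothetical tool returning structured data."""
    return {"sum": a + b}


TOOL_REGISTRY: dict[str, Callable[..., Any]] = {
    "get_weather": get_weather,
    "add": add,
}


def dispatch_tool(name: str, arguments: str) -> str:
    """Look up a tool by name, call it with JSON-decoded arguments, return text."""
    if name not in TOOL_REGISTRY:
        raise KeyError(f"unknown tool: {name}")
    kwargs = json.loads(arguments) if arguments else {}
    result = TOOL_REGISTRY[name](**kwargs)
    # Non-string results are serialized so they can be recorded and sent back as text.
    return result if isinstance(result, str) else json.dumps(result, ensure_ascii=False)


print(dispatch_tool("get_weather", '{"city": "Hangzhou"}'))
print(dispatch_tool("add", '{"a": 1, "b": 2}'))
```

Raising on an unknown tool name lets the surrounding try/except mark the Tool Span as failed instead of recording an empty result.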

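6. Create custom spans with the OpenTelemetry SDK

In addition to the GenAI semantic spans provided by loongsuite-util-genai, you can call tracer.start_as_current_span() from the OpenTelemetry SDK to create custom business spans and mix them with GenAI spans.

The following examples show two typical uses of custom spans: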

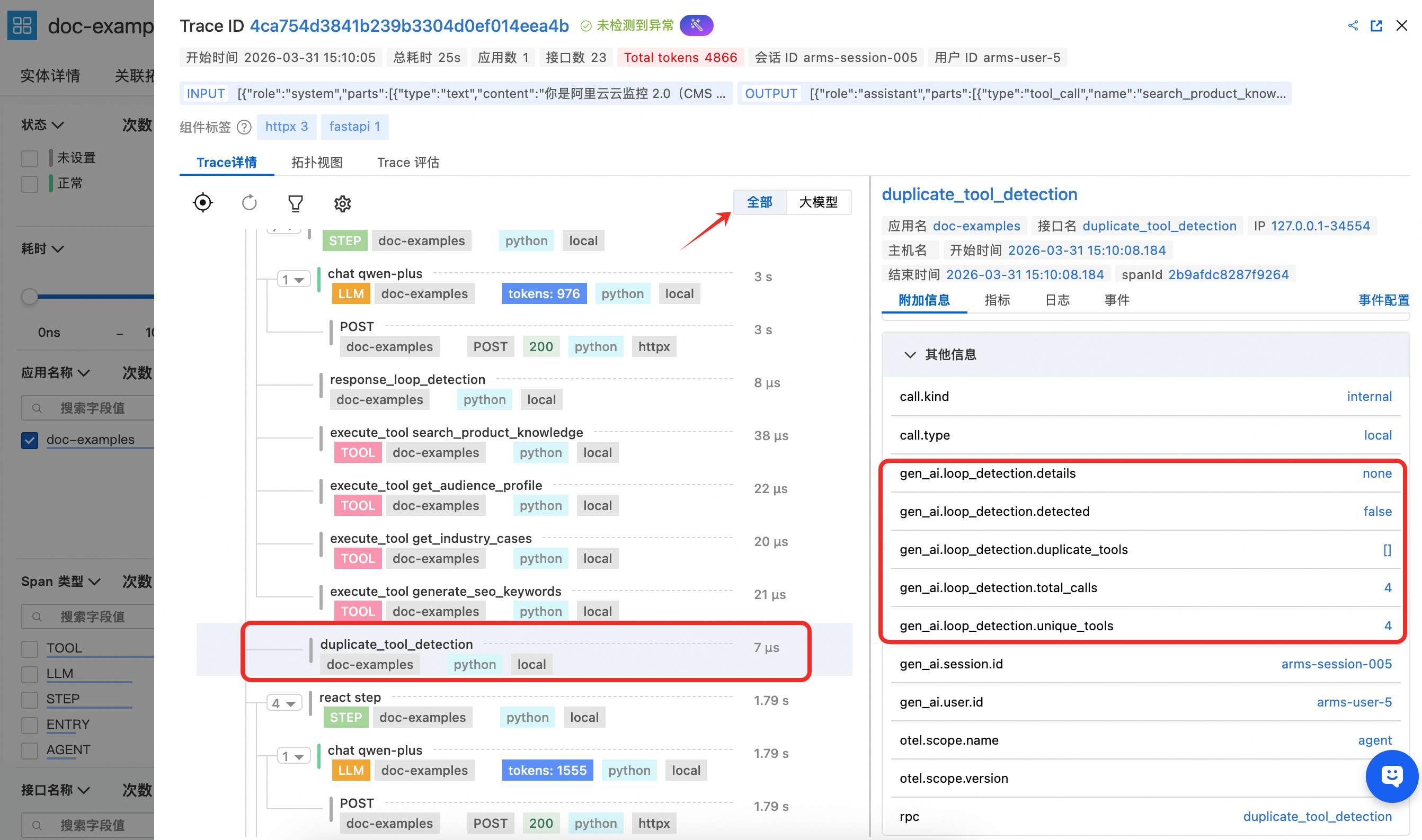

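duplicate_tool_detection: duplicate tool call detection

Runs before each ReAct iteration. It uses a Counter to track how many times each tool has been called and writes the result to gen_ai.loop_detection.* attributes. If duplicates are found, it appends a system prompt to the message list to steer the model away from repeating the call.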

    from collections import Counter
    from typing import Any

    def _check_duplicate_tools(
        tool_usage_counter: Counter,
        messages: list[dict[str, Any]],
    ) -> None:
        # Tools that have been called more than once so far.
        duplicates = [name for name, count in tool_usage_counter.items() if count > 1]
        has_duplicates = len(duplicates) > 0

        with tracer.start_as_current_span("duplicate_tool_detection") as span:
            span.set_attributes({
                "gen_ai.loop_detection.detected": has_duplicates,
                "gen_ai.loop_detection.duplicate_tools": str(duplicates) if has_duplicates else "[]",
                "gen_ai.loop_detection.total_calls": sum(tool_usage_counter.values()),
                "gen_ai.loop_detection.unique_tools": len(tool_usage_counter),
            })

            if has_duplicates:
                # Ask the model to stop repeating the same tool calls.
                details = ", ".join(f"{n} ({tool_usage_counter[n]} calls)" for n in duplicates)
                messages.append({
                    "role": "system",
                    "content": f"[System notice] Duplicate tool calls detected: {details}. Avoid calling the same tool again.",
                })
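The function expects the caller to maintain tool_usage_counter. A small standalone sketch of that bookkeeping, using hypothetical tool names, shows which values end up in the duplicate list and in the total_calls and unique_tools attributes.

```python
from collections import Counter

tool_usage_counter: Counter = Counter()

# Simulated sequence of tool calls made by the agent (names are hypothetical).
for tool_name in ["search_docs", "get_weather", "search_docs"]:
    tool_usage_counter[tool_name] += 1

# Same expressions as in the detection function.
duplicates = [name for name, count in tool_usage_counter.items() if count > 1]
total_calls = sum(tool_usage_counter.values())
unique_tools = len(tool_usage_counter)

print(duplicates, total_calls, unique_tools)
```

Note that this counts calls by tool name only, so two calls to the same tool with different arguments are also reported as duplicates.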

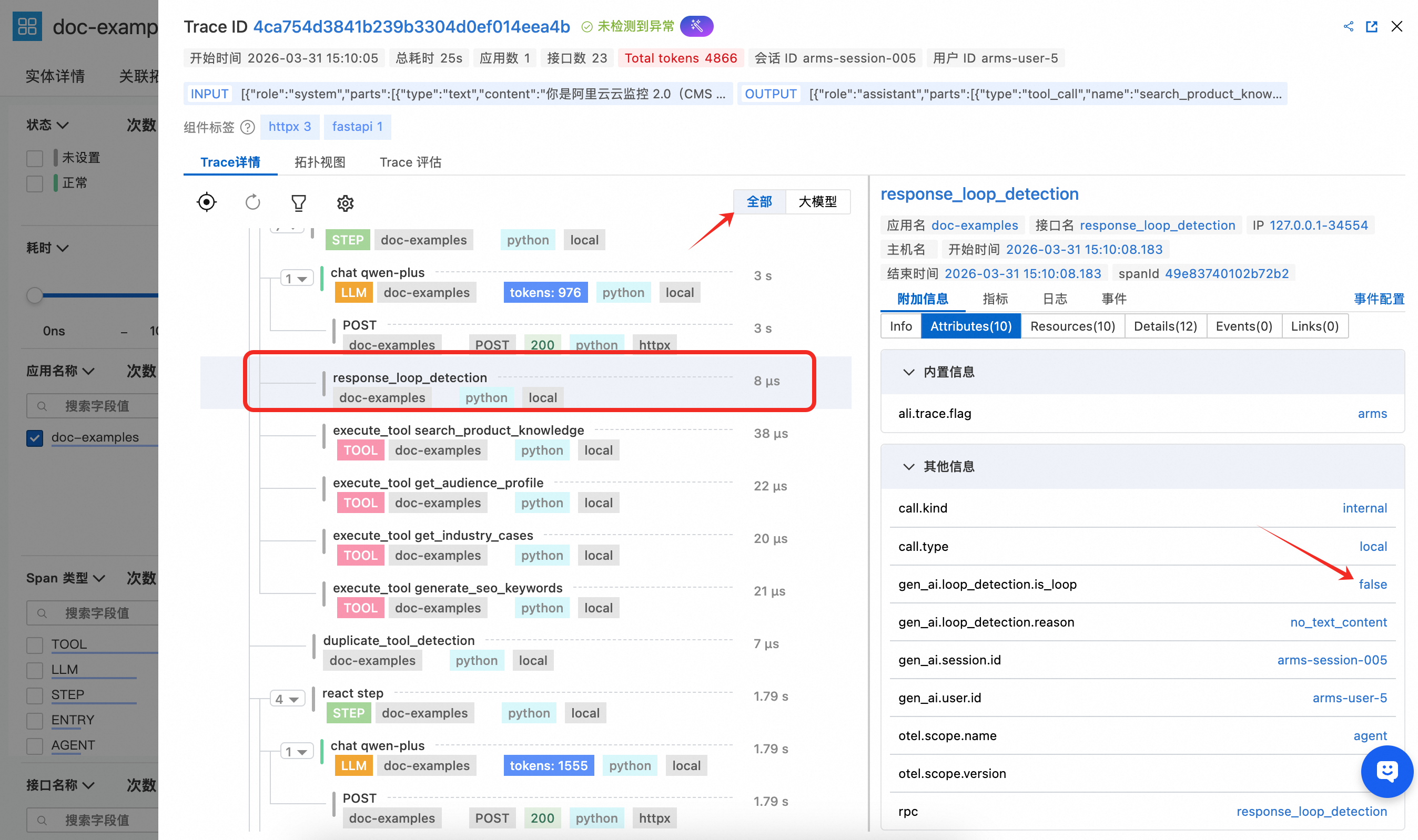

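response_loop_detection: LLM response loop detection

Runs after each LLM response. It compares the text of the current response with that of the previous one and writes metrics such as is_loop and overlap_ratio to span attributes. If a loop is detected (the texts are identical or the overlap ratio exceeds 80%), finish_reason is set to loop_detected and the agent terminates early.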

    def _check_response_loop(
        current_content: str | None,
        previous_content: str | None,
    ) -> bool:
        cur = (current_content or "").strip()
        prev = (previous_content or "").strip()

        with tracer.start_as_current_span("response_loop_detection") as span:
            if not prev or not cur:
                # Nothing to compare, for example on the first round.
                span.set_attributes({
                    "gen_ai.loop_detection.is_loop": False,
                    "gen_ai.loop_detection.reason": "no_text_content",
                })
                return False

            is_identical = cur == prev
            longer = max(len(cur), len(prev))
            # Count positions at which both texts have the same character.
            matching_chars = sum(1 for a, b in zip(cur, prev) if a == b)
            overlap_ratio = matching_chars / longer if longer > 0 else 0.0
            is_loop = is_identical or overlap_ratio > 0.8

            span.set_attributes({
                "gen_ai.loop_detection.is_loop": is_loop,
                "gen_ai.loop_detection.is_identical": is_identical,
                "gen_ai.loop_detection.overlap_ratio": round(overlap_ratio, 2),
                "gen_ai.loop_detection.current_length": len(cur),
                "gen_ai.loop_detection.previous_length": len(prev),
            })

        return is_loop
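The overlap ratio in the check above compares the two texts position by position, which is easy to verify by hand. The sketch below extracts the same arithmetic into a hypothetical standalone function, overlap_ratio, and runs it on three short inputs.

```python
def overlap_ratio(cur: str, prev: str) -> float:
    """Share of positions with the same character, relative to the longer text."""
    longer = max(len(cur), len(prev))
    if longer == 0:
        return 0.0
    matching_chars = sum(1 for a, b in zip(cur, prev) if a == b)
    return matching_chars / longer


# 9 of 10 positions match: above the 0.8 threshold, so this counts as a loop.
print(overlap_ratio("abcdefghij", "abcdefghiX"))  # 0.9

# 4 of 5 positions match: exactly 0.8, which does not exceed the threshold.
print(overlap_ratio("abcde", "abcdX"))  # 0.8

# The same text shifted by one character no longer lines up at any position.
print(overlap_ratio("xabcde", "abcde"))  # 0.0
```

The last case shows the limit of a position-wise comparison: a response that repeats the previous one with a short prefix added is not flagged. If that matters for your agent, a sequence-based similarity measure may be a better fit.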

Because custom spans are not part of the LLM semantic conventions, switch to the All view in the trace view of the console to see them.
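Printing the traceId in application logs makes it easier to look up a specific trace in the console. In OpenTelemetry, trace_id and span_id on a SpanContext are integers that are conventionally shown as 32 and 16 lowercase hex characters. The sketch below demonstrates only that formatting on a stand-in object, so it runs without any dependencies; format_ids is a hypothetical helper and the ID values are sample values.

```python
from types import SimpleNamespace


def format_ids(span_context) -> tuple[str, str]:
    """Render integer trace_id and span_id as zero-padded lowercase hex strings."""
    return format(span_context.trace_id, "032x"), format(span_context.span_id, "016x")


# Stand-in for a SpanContext with sample IDs.
fake_ctx = SimpleNamespace(
    trace_id=0x0AF7651916CD43DD8448EB211C80319C,
    span_id=0x00F067AA0BA902B7,
)

trace_id, span_id = format_ids(fake_ctx)
print(f"traceId={trace_id} spanId={span_id}")
```

In an instrumented application, the real context can be obtained inside any active span with opentelemetry.trace.get_current_span().get_span_context(), and opentelemetry.trace.format_trace_id() produces the same 32-character form.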

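View monitoring details

Log on to the CloudMonitor 2.0 console, select the target workspace, and choose All Features > AI Application Observability in the left-side navigation pane.

On the AI application list page, find the connected application and click its name to view detailed monitoring data.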

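Instrumentation results

1. Entry Span details

The Entry Span shows key attributes such as gen_ai.session.id and gen_ai.user.id. Once set at the function entry, they are propagated automatically to LLM, Tool, and other spans, so sessions and users can be correlated during analysis. The Entry Span also carries gen_ai.input.messages (the user input) and gen_ai.output.messages (the final output), so the complete interaction of the request can be viewed directly in the console.

2. Agent Span details

The Agent Span shows the agent's name and description, together with the agent-level token usage aggregated in the sample code above.

3. Tool Span details

The Tool Span shows the tool name, its input arguments, and the result of the tool call.

4. LLM Span details

The LLM Span is not instrumented manually in the sample code. Because the call goes through openai, the ARMS agent collects it automatically, and the span shows the full context of the LLM call and its token consumption.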

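5. Custom Span details

The sample code creates two custom business spans with the OpenTelemetry SDK to show how custom instrumentation can be mixed with GenAI semantic spans. Because these spans are not part of the LLM semantic conventions, enable the All view to see them.

duplicate_tool_detection: runs before each ReAct iteration to detect whether the agent is stuck calling the same tool repeatedly. The span attributes record whether duplicates were detected, the list of duplicated tools, the total number of calls, and the number of distinct tools, which helps locate tool call loops quickly in ARMS.

response_loop_detection: runs after each LLM response to detect whether the model keeps returning highly similar content. The span attributes record whether a loop was detected, whether the texts are identical, the overlap ratio, and the text lengths of the current and previous responses, which helps troubleshoot cases where the model is stuck repeating its output.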

Related documentation

For details on all span types that loongsuite-util-genai supports (Embedding, Retrieval, Rerank, Memory, and so on), see the complete loongsuite-util-genai documentation.