PASE (PostgreSQL ANN search extension) adds high-performance approximate nearest neighbor (ANN) vector search to PolarDB for PostgreSQL (Compatible with Oracle). It supports two index types — IVFFlat and HNSW (Hierarchical Navigable Small World) — so you can tune the speed-recall tradeoff to fit your workload.

PASE performs similarity search on vectors you supply. It does not extract or generate feature vectors.

Prerequisites

Before you begin, make sure you have:

A PolarDB for PostgreSQL (Compatible with Oracle) cluster

A privileged account to run SQL statements

Quick start

The following example shows the full workflow: install the extension, create a table, build an index, and run a query.

-- Install the extension

CREATE EXTENSION pase;

-- Create a table with a vector column

CREATE TABLE items (id integer, vector float4[]);

-- Build an HNSW index

CREATE INDEX hnsw_idx ON items

USING pase_hnsw(vector)

WITH (dim = 3, base_nb_num = 16, ef_build = 40, ef_search = 200, base64_encoded = 0);

-- Query the nearest neighbors

SELECT id, vector <?> '1,1,1:100:0'::pase AS distance

FROM items

ORDER BY vector <?> '1,1,1:100:0'::pase ASC

LIMIT 10;Choose an index type

| IVFFlat | HNSW | |

|---|---|---|

| Best for | High-precision scenarios, image comparison | Large datasets (tens of millions+ vectors), recommendation recall |

| Query latency target | Up to 100 ms | Under 10 ms |

| Build time | Fast | Slower |

| Memory usage | Lower | Higher (stores proximity graph) |

| 100% recall possible | Yes, if the query vector is in the dataset | No — precision plateaus at a certain level |

| Reconfigurable precision | Yes — adjust the probe count at query time | Limited |

When to use IVFFlat: Choose IVFFlat when you need high precision, can tolerate up to 100 ms query latency, and want fast index builds with low storage overhead.

When to use HNSW: Choose HNSW for large-scale datasets where queries must return within 10 ms. Expect higher memory usage and longer index build times.

How it works

IVFFlat

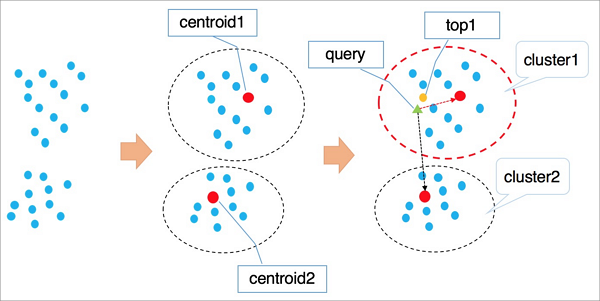

IVFFlat is a simplified version of the IVFADC algorithm. It divides vectors into clusters using k-means, then at query time narrows the search to the clusters nearest to the query vector.

IVFFlat clusters all vectors using k-means. Each cluster has a centroid.

At query time, IVFFlat finds the *n* centroids nearest to the query vector.

IVFFlat searches all vectors in those *n* clusters and returns the *k* nearest.

Searching only *n* clusters speeds up queries but may miss similar vectors in skipped clusters, reducing recall. Increase *n* to improve recall at the cost of more computation. Unlike IVFADC — which uses product quantization to avoid full traversal — IVFFlat uses brute-force search within each cluster to preserve precision.

HNSW

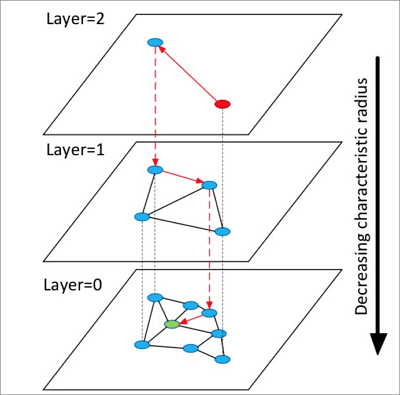

HNSW builds a multi-layer graph using the NSW (Navigable Small World) algorithm. Each layer is a coarser view of the layer below it.

HNSW builds a hierarchical graph with multiple layers.

Search starts at a random entry point in the top layer.

HNSW identifies the neighbors of the current element and adds them to a fixed-length dynamic list, ranked by distance. It then expands to the neighbors of those neighbors, continuously re-sorting the list and keeping only the top *k* candidates. This continues until the list stabilizes, then the top element becomes the entry point for the next layer down.

The bottom layer returns the final nearest neighbors.

HNSW's neighbor-selection approach gives it better query performance than clustering-based algorithms at large scale, but it requires storing the proximity graph, which consumes additional memory.

Create an IVFFlat index

Choose a distance method

| Method | Value | Requires normalization |

|---|---|---|

| Euclidean distance | 0 | No |

| Dot product (inner product) | 1 | Yes |

If you use the dot product or cosine method, normalize your vectors first. For a vector ![]() , it must satisfy

, it must satisfy ![]() . After normalization, the dot product equals the cosine value.

. After normalization, the dot product equals the cosine value.

PolarDB natively supports only Euclidean distance. To use dot product, normalize your vectors and follow the approach in the Appendix.

Create the index

CREATE INDEX ivfflat_idx ON vectors_table

USING pase_ivfflat(vector)

WITH (

clustering_type = 1,

distance_type = 0,

dimension = 256,

base64_encoded = 0,

clustering_params = "10,100"

);To use internal clustering (clustering_type = 1), insert data into the table before creating the index.IVFFlat index parameters

| Parameter | Required | Description | Default |

|---|---|---|---|

clustering_type | Yes | 0 = external clustering (loads a centroid file specified by clustering_params). 1 = internal k-means clustering. Start with 1 if you are new to PASE. | — |

distance_type | No | 0 = Euclidean distance. 1 = dot product (requires normalized vectors; dot product order is opposite to Euclidean order). | 0 |

dimension | Yes | Number of vector dimensions. Maximum: 512. | — |

base64_encoded | No | 0 = use float4[] to represent vectors. 1 = use Base64-encoded float[]. | 0 |

clustering_params | Yes | For external clustering: path to the centroid file. For internal clustering: clustering_sample_ratio,k. | — |

Configuring `clustering_params` for internal clustering:

clustering_sample_ratio: sampling fraction with 1000 as the denominator. Valid range: (0, 1000]. For example,1means a 1/1000 sampling ratio. Keep the total sampled records under 100,000.k: number of centroids. Valid range: [100, 1000]. A larger value improves recall but slows index creation.

Query with an IVFFlat index

The <#> operator activates the IVFFlat index. An ORDER BY clause is required.

SELECT id, vector <#> '1,1,1'::pase AS distance

FROM vectors_ivfflat

ORDER BY vector <#> '1,1,1:10:0'::pase ASC

LIMIT 10;The query string 'vector:probe_count:distance_type' accepts three colon-separated parameters:

| Position | Description | Range |

|---|---|---|

| 1st | Query vector | — |

| 2nd | Probe count — number of clusters to search. Higher values improve recall but reduce query speed. | (0, 1000] |

| 3rd | Distance method: 0 = Euclidean, 1 = dot product (requires normalized vectors) | 0 or 1 |

Create an HNSW index

Create the index

CREATE INDEX hnsw_idx ON vectors_table

USING pase_hnsw(vector)

WITH (

dim = 256,

base_nb_num = 16,

ef_build = 40,

ef_search = 200,

base64_encoded = 0

);HNSW index parameters

| Parameter | Required | Description | Default |

|---|---|---|---|

dim | Yes | Number of vector dimensions. Maximum: 512. | — |

base_nb_num | Yes | Number of neighbors per element. A larger value improves recall but increases build time and memory usage. Recommended range: [16, 128]. | — |

ef_build | Yes | Heap size during index construction. A larger value improves recall but slows index creation. Recommended range: [40, 400]. | — |

ef_search | Yes | Heap size during queries. A larger value improves recall but reduces query speed. Can be overridden at query time. | 200 |

base64_encoded | No | 0 = use float4[] to represent vectors. 1 = use Base64-encoded float[]. | 0 |

Query with an HNSW index

The <?> operator activates the HNSW index. An ORDER BY clause is required.

SELECT id, vector <?> '1,1,1'::pase AS distance

FROM vectors_ivfflat

ORDER BY vector <?> '1,1,1:100:0'::pase ASC

LIMIT 10;The query string 'vector:ef_search:distance_type' accepts three colon-separated parameters:

| Position | Description | Range |

|---|---|---|

| 1st | Query vector | — |

| 2nd | ef_search override — higher values improve recall at the cost of query speed. Start at 40 and increase until you reach the target recall. | (0, ∞) |

| 3rd | Distance method: 0 = Euclidean, 1 = dot product (requires normalized vectors) | 0 or 1 |

Calculate vector similarity

PASE provides two construction methods for calculating vector similarity inline.

PASE-type construction

The <?> operator takes a float4[] on the left and a PASE type on the right.

SELECT ARRAY[2, 1, 1]::float4[] <?> pase(ARRAY[3, 1, 1]::float4[]) AS distance;

SELECT ARRAY[2, 1, 1]::float4[] <?> pase(ARRAY[3, 1, 1]::float4[], 0) AS distance;

SELECT ARRAY[2, 1, 1]::float4[] <?> pase(ARRAY[3, 1, 1]::float4[], 0, 1) AS distance;The PASE constructor accepts up to three arguments: pase(vector, unused_param, distance_method). The second argument has no special purpose — set it to 0. The third argument sets the distance method: 0 for Euclidean, 1 for dot product.

Both vectors must have the same number of dimensions. A mismatch causes an error.

String-based construction

SELECT ARRAY[2, 1, 1]::float4[] <?> '3,1,1'::pase AS distance;

SELECT ARRAY[2, 1, 1]::float4[] <?> '3,1,1:0'::pase AS distance;

SELECT ARRAY[2, 1, 1]::float4[] <?> '3,1,1:0:1'::pase AS distance;The string format is 'vector:unused_param:distance_method', using colons as separators. Parameters have the same meaning as in PASE-type construction.

Usage notes

Index bloat: Check index size with

SELECT pg_relation_size('index_name');. If the index is larger than the table data and queries are slowing down, rebuild the index.Index drift: Frequent data updates can degrade index accuracy. Rebuild the index regularly if you need 100% accuracy.

Parallel queries: Only sequential search is supported for high-dimensional vectors in multi-node elastic parallel queries.

Privileged account: All SQL statements in this topic require a privileged account.

Appendix

Calculate dot product with HNSW

Because PolarDB does not natively support dot product distance, use this function to search by dot product after normalizing your vectors.

CREATE OR REPLACE FUNCTION inner_product_search(

query_vector text,

ef integer,

k integer,

table_name text

)

RETURNS TABLE (id integer, uid text, distance float4) AS $$

BEGIN

RETURN QUERY EXECUTE format('

SELECT a.id, a.vector <?> pase(ARRAY[%s], %s, 1) AS distance

FROM (

SELECT id, vector

FROM %s

ORDER BY vector <?> pase(ARRAY[%s], %s, 0) ASC

LIMIT %s

) a

ORDER BY distance DESC;',

query_vector, ef, table_name, query_vector, ef, k);

END

$$ LANGUAGE plpgsql;The dot product of a normalized vector equals its cosine value, so this function also works for cosine similarity search.

Create an IVFFlat index from an external centroid file

External clustering is an advanced option. Upload a centroid file to the server and set clustering_type = 0 in the index definition.

The centroid file format is:

Number_of_dimensions|Number_of_centroids|Centroid_vector_datasetExample:

3|2|1,1,1,2,2,2References

Product Quantization for Nearest Neighbor Search — Hervé Jégou, Matthijs Douze, Cordelia Schmid.

Efficient and robust approximate nearest neighbor search using Hierarchical Navigable Small World graphs — Yu.A. Malkov, D.A. Yashunin.