When an ApsaraDB for MongoDB instance contains a large number of collections, performance degrades and exceptions occur. The WiredTiger storage engine creates a separate disk file for each collection and for each index, and each open resource uses a corresponding data handle (dhandle) in memory. With thousands of collections, the volume of open dhandles causes lock contention that slows all database operations.

A large number of collections does not always cause problems. The impact depends on your business model and workload. For example, two instances with the same specifications, 10,000 collections, and 100,000 documents can behave very differently:

Accounting software: Most collections store cold data and are rarely accessed. Performance impact is minimal.

Multi-tenant system: Collections are isolated per tenant and most are actively used. Lock contention is severe.

How it works

The WiredTiger storage engine creates a separate disk file for each collection and for each index. Every open resource uses a unique data structure called a dhandle, which tracks checkpoint information, session references, pointers to the in-memory B-tree structure, and data statistics.

As the number of collections grows, more OS files are opened and more dhandles accumulate in memory. A large number of dhandles in memory causes lock contention, which degrades instance performance.

Potential issues

-

Slow queries and high latency caused by handle locks or schema locks

-

Out-of-memory (OOM) errors during synchronization initialization when adding nodes

-

Longer instance restart time

-

Slower data synchronization

-

Slower backup and restore operations

-

Higher physical backup failure rate

-

Longer instance recovery from failures

Diagnosing slow queries

When lock contention from too many collections causes slow queries, the slow query log contains entries similar to the following:

2024-03-07T15:59:16.856+0800 I COMMAND [conn4175155] command db.collections command: count { count: "xxxxxx", query: { A: 1, B: 1 },

$readPreference: { mode: "secondaryPreferred" }, $db: "db" } planSummary: COLLSCAN keysExamined:0 keysExaminedBySizeInBytes:0

docsExamined:1 docsExaminedBySizeInBytes:208 numYields:1 queryHash:916BD9E3 planCacheKey:916BD9E3 reslen:185

locks:{ ReplicationStateTransition: { acquireCount: { w: 2 } }, Global: { acquireCount: { r: 2 } }, Database: { acquireCount: { r: 2 } },

Collection: { acquireCount: { r: 2 } }, Mutex: { acquireCount: { r: 1 } } } storage:{ data: { bytesRead: 304, timeReadingMicros: 4 },

timeWaitingMicros: { handleLock: 40, schemaLock: 134101710 } } protocol:op_query 134268msIn this example, a count operation on a single-document collection took 134,268 ms. The key field is timeWaitingMicros: { handleLock: 40, schemaLock: 134101710 }, which shows that the read request spent almost all its time waiting to acquire handle locks and schema locks in the underlying storage layer—not performing actual work.

The following table describes key fields in a slow query log entry:

| Field | Description |

|---|---|

planSummary |

The query plan used. COLLSCAN indicates a full collection scan with no index; IXSCAN indicates an index scan. |

keysExamined |

The number of index keys scanned. |

docsExamined |

The number of documents scanned. A high value relative to the number of results returned suggests a missing or suboptimal index. |

numYields |

The number of times the operation yielded its lock to allow other operations to proceed. |

timeWaitingMicros.handleLock |

Time spent waiting to acquire a handle lock, in microseconds. |

timeWaitingMicros.schemaLock |

Time spent waiting to acquire a schema lock, in microseconds. A high value is a strong indicator of too many open dhandles. |

protocol:op_query <N>ms |

Total operation duration in milliseconds. |

Use the following commands to diagnose collection and index counts before taking action:

// Count collections in a database

db.getSiblingDB(<dbName>).getCollectionNames().length

// View database statistics (collection count, index count, document count, total size)

db.getSiblingDB(<dbName>).stats()

// View statistics for a specific collection

db.getSiblingDB(<dbName>).<collectionName>.stats()Optimization methods

Choose the method that best fits your situation. Start with the least disruptive option first.

Remove unnecessary collections

Identify collections that are expired or no longer needed, then drop them using dropCollection. For more information, see dropCollection().

Make sure a full backup is available before dropping any collections.

Remove unnecessary indexes

Each index creates a separate disk file in WiredTiger and adds a corresponding dhandle. Reducing index count directly reduces dhandle pressure.

Use the $indexStats aggregation stage to identify underused indexes. Run the following command before making any changes (requires appropriate permissions):

// View access statistics for all indexes in a collection

db.getSiblingDB(<dbName>).<collectionName>.aggregate({"$indexStats":{}})Sample output:

{

"name" : "item_1_quantity_1",

"key" : { "item" : 1, "quantity" : 1 },

"host" : "examplehost.local:27018",

"accesses" : {

"ops" : NumberLong(1),

"since" : ISODate("2020-02-10T21:11:23.059Z")

}

}The following table describes the key fields in the output:

| Field | Description |

|---|---|

name |

Index name |

key |

Index key details |

accesses.ops |

Number of operations that used the index (hit count). Resets when the instance restarts or the index is rebuilt. |

accesses.since |

Timestamp when statistics collection started |

Apply the following rules when deciding which indexes to remove:

-

Invalid indexes: Remove indexes on fields that no queries access.

-

Index prefix redundancy: If both

{a:1}and{a:1,b:1}exist,{a:1}is redundant—{a:1,b:1}covers all queries that would use it. -

Equivalence query order: If both

{a:1,b:1}and{b:1,a:1}exist, remove the one with fewer hits. For equivalence matching, field order does not affect results. -

ESR Rule for range queries: Build compound indexes in Equality, Sort, Range order. For more information, see The ESR (Equality, Sort, Range) Rule.

-

Low-hit indexes: These are often duplicated by a higher-hit index. Evaluate against all query patterns before removing.

If your instance runs MongoDB 4.4 or later, use db.collection.hideIndex() to hide an index before dropping it. Monitor the instance for a period to confirm no queries depend on the index before permanently removing it. For more information, see db.collection.hideIndex().

Example: optimizing indexes in a players collection

A players collection stores player data with the following business rule: every 20 coins automatically convert into one star.

// players collection - document structure

{

"_id": "ObjectId(123)",

"first_name": "John",

"last_name": "Doe",

"coins": 11,

"stars": 2

}The collection currently has these indexes:

-

_id(default) -

{ last_name: 1 } -

{ last_name: 1, first_name: 1 } -

{ coins: -1 } -

{ stars: -1 }

Applying the rules above:

-

Remove `{ coins: -1 }`: No queries access the

coinsfield directly. -

Remove `{ last_name: 1 }`: It is a prefix of

{ last_name: 1, first_name: 1 }and is therefore redundant. -

Keep `{ stars: -1 }`: Even if

$indexStatsshows a low hit count, the end-of-round leaderboard requires sorting players bystarsin descending order. Removing it would force a full collection scan.

After optimization, the collection retains three indexes: _id, { last_name: 1, first_name: 1 }, and { stars: -1 }. This reduces storage consumption and improves write performance.

For further index optimization questions, submit a ticket.

Integrate data from multiple collections

When collections grow over time due to a time-based partitioning pattern, merging them into a single collection eliminates the accumulation problem.

Before optimization — the temperatures database stores daily readings in separate collections:

// temperatures.march-09-2020

{ "_id": 1, "timestamp": "2020-03-09T010:00:00Z", "temperature": 29 }

{ "_id": 2, "timestamp": "2020-03-09T010:30:00Z", "temperature": 30 }

// ... 25 readings total (sensor runs 10:00-22:00, every 30 minutes)

{ "_id": 25, "timestamp": "2020-03-09T022:00:00Z", "temperature": 26 }

// temperatures.march-10-2020

{ "_id": 1, "timestamp": "2020-03-10T010:00:00Z", "temperature": 30 }

// ...Each new day creates a new collection and a new _id index. Cross-day queries require $lookup, which performs worse than queries on a single collection.

After optimization — a single temperatures.readings collection stores all data, one document per day. The default _id index supports date-based queries without additional indexes for each day's readings:

// temperatures.readings

{

"_id": ISODate("2020-03-09"),

"readings": [

{ "timestamp": "2020-03-09T010:00:00Z", "temperature": 29 },

{ "timestamp": "2020-03-09T010:30:00Z", "temperature": 30 },

// ...

{ "timestamp": "2020-03-09T022:00:00Z", "temperature": 26 }

]

}

{

"_id": ISODate("2020-03-10"),

"readings": [

{ "timestamp": "2020-03-10T010:00:00Z", "temperature": 30 },

{ "timestamp": "2020-03-10T010:30:00Z", "temperature": 32 },

// ...

{ "timestamp": "2020-03-10T022:00:00Z", "temperature": 28 }

]

}This eliminates the unbounded collection growth problem and removes the need to create indexes for each day.

For time-series workloads, consider using time series collections (MongoDB 5.0 and later). For more information, see Time Series.

Split the instance

If the total number of collections in an ApsaraDB for MongoDB standalone instance cannot be reduced, split the data across multiple instances.

| Scenario | Splitting solution | Key steps |

|---|---|---|

| Collections are distributed across multiple databases | If multiple applications or services share the same instance and their databases are not closely associated, migrate some databases to a new ApsaraDB for MongoDB instance using Data Transmission Service (DTS). For more information, see Migrate data from an ApsaraDB for MongoDB replica set instance to an ApsaraDB for MongoDB replica set or sharded cluster instance. | Select the required source databases when creating a DTS task. Retain or rename collection names as needed. Run dropDatabase on the source instance after migration is complete. |

| All collections are in a single database | Determine whether collections can be split by a dimension such as region, city, or priority. Use DTS to migrate subsets of collections to separate ApsaraDB for MongoDB instances. | Select the required source database when creating the DTS task. Run the drop command to remove migrated collections from the source. Cross-instance aggregate queries require additional application logic. |

Update your application's business logic and connection configuration before completing the migration cutover.

Example: splitting a multi-tenant platform

A multi-tenant management platform uses one collection per tenant. As the tenant count grew past 100,000, the database reached terabytes in size and queries became slow.

The application split tenants by region: North China, Northeast China, East China, Central China, South China, Southwest China, and Northwest China. DTS migrated tenants to separate ApsaraDB for MongoDB instances deployed in the corresponding regions. Data was also synchronized to a data warehouse for cross-region aggregation and analysis.

After splitting:

-

Each instance holds a fraction of the original collection count, allowing instance specifications to be reduced.

-

Requests are served by the nearest instance, cutting latency to milliseconds.

-

Operations and maintenance complexity per instance is significantly reduced.

Migrate to a sharded cluster using shard tags

If all collections must remain under a single logical instance and cannot be reduced, migrate them to a sharded cluster instance and use shard tags to pin each collection to a specific shard. This distributes the dhandle load across shards without requiring application changes—only the connection string changes.

For example, 100,000 active collections migrated to a 10-shard cluster results in approximately 10,000 collections per shard.

For more information, see sh.addShardTag() and sh.addTagRange().

Steps

-

Purchase a sharded cluster instance. For more information, see Create a sharded cluster instance.

-

Connect to the mongos node of the sharded cluster instance. For more information, see Connect to an ApsaraDB for MongoDB sharded cluster instance by using the mongo shell.

-

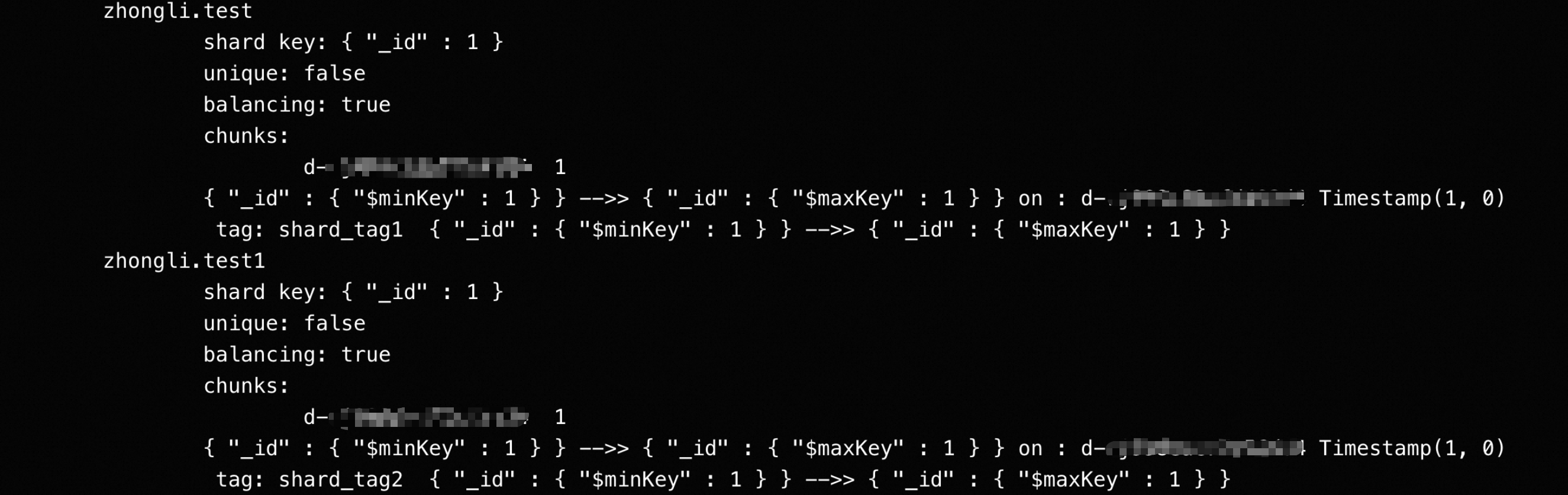

Add shard tags to each shard:

The account must have the required permissions to run these commands. Data Management (DMS) does not support

sh.addShardTag—use mongo shell or mongosh instead.sh.addShardTag("d-xxxxxxxxx1", "shard_tag1") sh.addShardTag("d-xxxxxxxxx2", "shard_tag2") -

Pre-configure tag ranges to pin each collection to a shard. Use

[MinKey, MaxKey]to ensure all data in a collection stays on a single shard:use <dbName> sh.enableSharding("<dbName>") sh.addTagRange("<dbName>.test", {"_id": MinKey}, {"_id": MaxKey}, "shard_tag1") sh.addTagRange("<dbName>.test1", {"_id": MinKey}, {"_id": MaxKey}, "shard_tag2")Replace

_idwith your actual shard key. All queries must include the shard key field. -

Shard each collection:

sh.shardCollection("<dbName>.test", {"_id": 1}) sh.shardCollection("<dbName>.test1", {"_id": 1}) -

Run

sh.status()to confirm that the tag rules are in effect.

-

Migrate data to the sharded cluster instance. For more information, see Migrate data from an ApsaraDB for MongoDB replica set instance to an ApsaraDB for MongoDB replica set or sharded cluster instance.

Because you pre-configured shard collections in step 5, collection metadata already exists on the target. Set the Processing Mode of Conflicting Tables parameter to Ignore Errors and Proceed in the DTS task.

-

After data consistency is verified, switch your application to the sharded cluster instance.

To add shards later, repeat step 3 to assign tags to the new shards.

If new collections are created after migration, repeat steps 4 and 5 for each new collection. Otherwise, the collections exist only in the primary shard, which causes a larger number of collections in the shard. In this case, your instance is always in the stuttering state or encounters an exception.

Migrate to a sharded cluster using zones

Zones work the same way as shard tags but use the newer sh.addShardToZone() and sh.updateZoneKeyRange() commands. For more information, see Manage Shard Zones, sh.addShardToZone(), and sh.updateZoneKeyRange().

Steps

-

Purchase a sharded cluster instance. For more information, see Create a sharded cluster instance.

-

Connect to the mongos node of the sharded cluster instance. For more information, see Connect to an ApsaraDB for MongoDB sharded cluster instance by using the mongo shell.

-

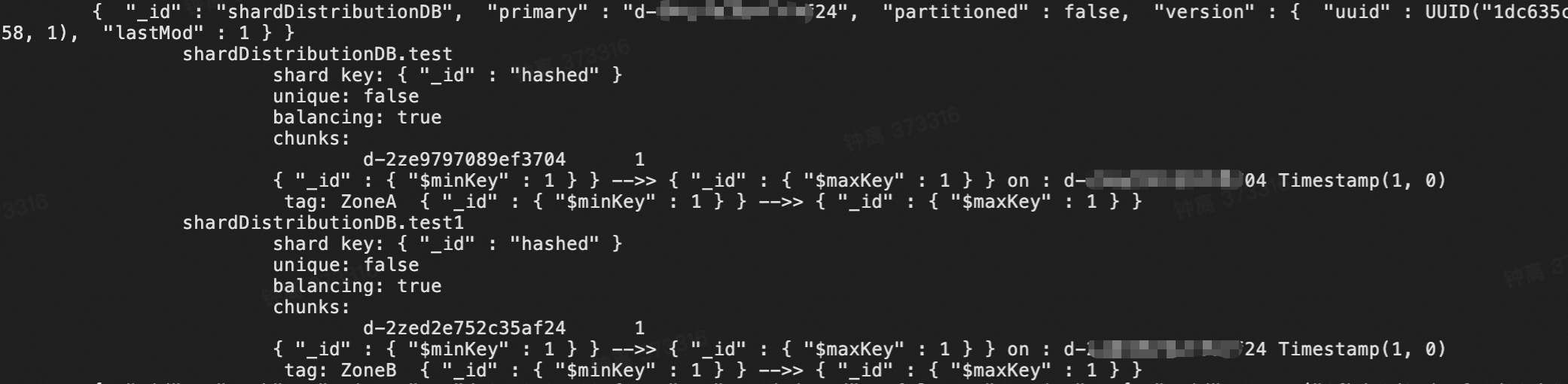

Assign zones to each shard:

The account must have the required permissions. Data Management (DMS) does not support

sh.addShardToZone—use mongo shell or mongosh instead.sh.addShardToZone("d-xxxxxxxxx1", "ZoneA") sh.addShardToZone("d-xxxxxxxxx2", "ZoneB") -

Pre-configure zone key ranges to pin each collection to a zone:

use <dbName> sh.enableSharding("<dbName>") sh.updateZoneKeyRange("<dbName>.test", { "_id": MinKey }, { "_id": MaxKey }, "ZoneA") sh.updateZoneKeyRange("<dbName>.test1", { "_id": MinKey }, { "_id": MaxKey }, "ZoneB")Replace

_idwith your actual shard key. -

Shard each collection:

sh.shardCollection("<dbName>.test", { _id: "hashed" }) sh.shardCollection("<dbName>.test1", { _id: "hashed" }) -

Run

sh.status()to confirm that the zone rules are in effect.

-

Migrate data to the sharded cluster instance. For more information, see Migrate data from an ApsaraDB for MongoDB replica set instance to an ApsaraDB for MongoDB replica set or sharded cluster instance.

Set the Processing Mode of Conflicting Tables parameter to Ignore Errors and Proceed in the DTS task.

-

After data consistency is verified, switch your application to the sharded cluster instance.

To add shards later, repeat step 3 to assign zones to the new shards.

If new collections are created after migration, repeat steps 4 and 5 for each new collection. Otherwise, the collections exist only in the primary shard, which causes a larger number of collections in the shard. In this case, your instance is always in the stuttering state or encounters an exception.

Risks

Avoid running dropDatabase on a database with many collections

When dropDatabase runs, WiredTiger asynchronously removes the metadata and physical files of all collections in the database. This can affect primary-to-secondary replication, causing replication lag to increase continuously. The MongoDB flow control mechanism activates during this process, and all writes with {writeConcern: majority} may be affected.

To avoid this issue, use one of the following approaches:

-

Drop collections individually with a delay between each drop, then run

dropDatabaseafter all collections are removed. -

Use DTS or another migration tool to migrate the databases and collections you want to keep to a new instance, then decommission the original instance after cutover.

Configure replication lag alerts on your instance. If this issue occurs, submit a ticket for technical support.submit a ticketsubmit a ticket

Summary

-

The total number of collections in a replica set instance must not exceed 10,000. If the total number of indexes in any single collection exceeds 15, reduce it.

-

If your business requires many collections—such as multi-tenant isolation by collection—use sharded cluster instances to distribute the load.

-

If your databases have a large number of collections and you need help, submit a ticket.