This feature is in public preview.

Managing data across a data lake and a data warehouse creates three common problems: complex connectivity setup, fragmented governance, and costly data duplication from ETL pipelines. MaxCompute data lake analytics addresses all three by letting you query external data sources—Data Lake Formation (DLF), Object Storage Service (OSS), Hive, and Hologres—directly from MaxCompute using standard SQL, with no data movement required.

How it works

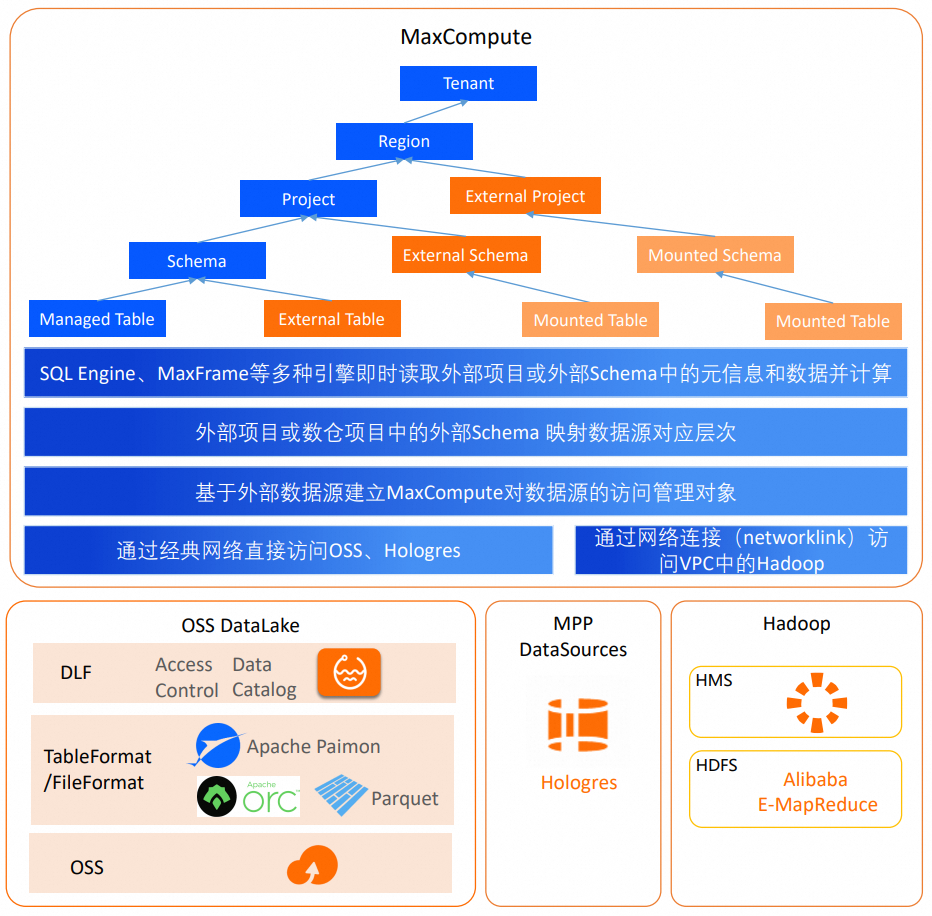

MaxCompute data lake analytics uses three management objects to map external data sources into the MaxCompute namespace:

Foreign Server — stores the credentials, location, and connection protocol for an external data source. A Foreign Server is a tenant-level object created by the tenant administrator.

External Schema — maps to a database or schema in an external data source. Tables within the mapped scope appear as federated foreign tables (also called Mounted Tables) that you query without writing DDL.

External Project — maps to a Catalog or Database in an external data source, exposing the intermediate layer as a Mounted Schema. Tables within each Mounted Schema are accessible as federated foreign tables.

Federated foreign tables do not store metadata in MaxCompute. MaxCompute fetches metadata in real time from the metadata service defined in the Foreign Server. When the source schema or data changes, the federated foreign table reflects the change immediately.
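The live-metadata behavior described above can be illustrated with a small Python model. This is only a conceptual sketch, not MaxCompute code: the `ForeignServer` and `ExternalSchema` classes, the `fetch_columns` callable, and the `source_catalog` dictionary are all hypothetical stand-ins for the real objects and the external metadata service.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class ForeignServer:
    """Hypothetical stand-in: holds how to reach the source's metadata service."""
    name: str
    fetch_columns: Callable[[str], List[str]]  # table name -> current column names


@dataclass
class ExternalSchema:
    """Hypothetical stand-in: maps to one database or schema in the source."""
    name: str
    server: ForeignServer

    def describe(self, table: str) -> List[str]:
        # No local copy of the metadata is kept: every call asks the source again.
        return self.server.fetch_columns(table)


# Simulated external metadata service whose contents change over time.
source_catalog: Dict[str, List[str]] = {"orders": ["id", "amount"]}

server = ForeignServer("demo_server", lambda table: list(source_catalog[table]))
schema = ExternalSchema("demo_ext_schema", server)

before = schema.describe("orders")
source_catalog["orders"].append("currency")  # schema change made at the source
after = schema.describe("orders")

print(before)
print(after)
```

Because `describe` never caches, the second call already sees the column added at the source, which mirrors the "reflects the change immediately" behavior in the text.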

Network connectivity

How MaxCompute connects to an external data source depends on where the source is hosted:

DLF, OSS, and Hologres are in the interconnected Alibaba Cloud network. MaxCompute accesses them directly with no additional configuration.

Data sources in a VPC, such as E-MapReduce (EMR) clusters or ApsaraDB RDS instances (RDS support is available soon), require a Networklink leased line. For configuration details, see Network Connection Flow.
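The rule above amounts to a simple lookup by source type. The following Python sketch encodes it for illustration only; the function name `connection_path` and the short labels `"EMR"` and `"RDS"` are assumptions made for this example and are not MaxCompute identifiers.

```python
# Sources the text describes as reachable over the interconnected Alibaba Cloud network.
DIRECT_SOURCES = {"DLF", "OSS", "Hologres"}

# Sources the text describes as VPC-hosted (RDS is listed as available soon).
VPC_SOURCES = {"EMR", "RDS"}


def connection_path(source: str) -> str:
    """Return 'direct' or 'networklink' for a source type named in the text."""
    if source in DIRECT_SOURCES:
        return "direct"
    if source in VPC_SOURCES:
        return "networklink"
    # The text does not cover other sources, so this sketch refuses to guess.
    raise ValueError(f"connectivity for {source!r} is not described")


print(connection_path("Hologres"))
print(connection_path("EMR"))
```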

Supported data sources

The table below lists the supported data sources, how each management object maps to the source hierarchy, and the authentication method used.

| Data source type | Foreign Server hierarchy | External Schema mapping | External Project mapping | Legacy External Project mapping | Authentication method |
|---|---|---|---|---|---|
| DLF_legacy+OSS | Region-level DLF and OSS services | DLF Catalog.Database | Not supported | DLF Catalog.Database | RAMRole |
| Hive+HDFS | E-MapReduce instance | Hive Database | Not supported | Hive Database | No authentication |
| Hologres | Database of a Hologres instance | Schema | — | Not supported | RAMRole |
| Hologres | Database of a Hologres instance | Not supported | Database | Not supported | SLR and current user identity authentication |
| DLF | Region-level DLF service | Not supported | DLF Catalog | Not supported | SLR and current user identity authentication |
| Filesystem Catalog | Paimon Catalog-level directory on OSS | Not supported | Catalog parsed from a Paimon Catalog-level directory | Not supported | RAMRole |

MaxCompute will support additional authentication methods in future releases, including current user identity authentication for Hologres and Kerberos authentication for Hive.
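When choosing a management object, it can help to treat the table as a lookup keyed by data source type and object kind. The Python sketch below copies the External Schema, External Project, and authentication columns from the table; the `Row` type and the `lookup` function are hypothetical helpers for this example, and the legacy External Project column is left out for brevity.

```python
from typing import List, NamedTuple, Optional, Tuple


class Row(NamedTuple):
    source_type: str
    external_schema: Optional[str]   # None where the table says the mapping is unavailable
    external_project: Optional[str]
    auth: str


SLR_USER = "SLR and current user identity authentication"

# Values copied from the supported data sources table.
ROWS: List[Row] = [
    Row("DLF_legacy+OSS", "DLF Catalog.Database", None, "RAMRole"),
    Row("Hive+HDFS", "Hive Database", None, "No authentication"),
    Row("Hologres", "Schema", None, "RAMRole"),
    Row("Hologres", None, "Database", SLR_USER),
    Row("DLF", None, "DLF Catalog", SLR_USER),
    Row("Filesystem Catalog", None,
        "Catalog parsed from a Paimon Catalog-level directory", "RAMRole"),
]


def lookup(source_type: str, object_kind: str) -> Tuple[str, str]:
    """Return (mapped source level, authentication method) for one combination."""
    if object_kind not in ("external_schema", "external_project"):
        raise ValueError("object_kind must be 'external_schema' or 'external_project'")
    for row in ROWS:
        target = getattr(row, object_kind)
        if row.source_type == source_type and target is not None:
            return target, row.auth
    raise LookupError(f"{source_type} has no {object_kind} mapping in the table")


print(lookup("Hologres", "external_schema"))
print(lookup("Hologres", "external_project"))
```

Hologres appears twice in the table, so the object kind is what selects the authentication method in this sketch.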

Key concepts

Foreign Server

A Foreign Server stores the credentials, location, and connection protocol for an external data source. It is a tenant-level object that the tenant administrator defines once. All projects in the tenant can reference it.

External Schema

An External Schema is a special schema type in a MaxCompute data warehouse project. It maps to:

A Database in the external source (for DLF_legacy or Hive scenarios)

A Schema in the external source (for Hologres scenarios)

Tables within the mapped scope appear as federated foreign tables. Query them using the project name and External Schema name as the namespace—no DDL required. Metadata is fetched in real time, so schema and data changes in the source are immediately visible.
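As a rough illustration of that namespace, the Python sketch below assembles a query string for a federated foreign table. It assumes a three-part `project.external_schema.table` name; the helper names and the `demo_*` identifiers are hypothetical, and the exact SQL accepted by your project should be checked against the MaxCompute SQL reference.

```python
def qualified_name(project: str, external_schema: str, table: str) -> str:
    """Join the three name parts, rejecting anything that is not a plain identifier."""
    parts = [project, external_schema, table]
    for part in parts:
        # Simple guard for this sketch; it is not MaxCompute's own naming rule.
        if not part.isidentifier():
            raise ValueError(f"not a plain identifier: {part!r}")
    return ".".join(parts)


def select_all(project: str, external_schema: str, table: str, limit: int = 10) -> str:
    """Build a SELECT statement that reads a federated foreign table directly."""
    name = qualified_name(project, external_schema, table)
    return f"SELECT * FROM {name} LIMIT {int(limit)};"


sql = select_all("demo_project", "demo_ext_schema", "orders")
print(sql)
```

No `CREATE TABLE` statement appears anywhere in the sketch, which is the point of the External Schema: the table is addressed by name only.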

External Project

An External Project maps to a higher level in the source hierarchy than an External Schema:

DLF scenarios: maps to a DLF Catalog, exposing the Databases under it as Mounted Schemas

Hologres scenarios: maps to a Hologres Database, exposing the Schemas under it as Mounted Schemas

Tables within a Mounted Schema are accessible as federated foreign tables.
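The extra layer that an External Project exposes can be pictured with a small Python model. This is a conceptual sketch only: the `ExternalProject` class, its methods, and the sample `dlf_catalog` contents are hypothetical and do not correspond to a MaxCompute API.

```python
from dataclasses import dataclass
from typing import Dict, List

# Intermediate layer name -> table names, e.g. the databases of a DLF Catalog
# or the schemas of a Hologres Database.
SourceLayer = Dict[str, List[str]]


@dataclass
class ExternalProject:
    """Hypothetical model: one External Project mapped to one Catalog or Database."""
    name: str
    source: SourceLayer

    def mounted_schemas(self) -> List[str]:
        # Each intermediate-layer entry in the source shows up as a Mounted Schema.
        return sorted(self.source)

    def tables(self, mounted_schema: str) -> List[str]:
        # Tables under a Mounted Schema are the federated foreign tables.
        return list(self.source[mounted_schema])


# Made-up sample contents for a DLF Catalog.
dlf_catalog: SourceLayer = {"sales_db": ["orders", "refunds"], "ops_db": ["events"]}
project = ExternalProject("demo_ext_project", dlf_catalog)

print(project.mounted_schemas())
print(project.tables("sales_db"))
```

An External Schema, by contrast, would correspond to a single entry of `dlf_catalog` rather than to the whole dictionary.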

Migrating from Data Lakehouse Solution 1.0: Legacy External Projects used a two-layer model that mapped to a Database or Schema. This model is being phased out. Migrate existing External Projects to External Schemas. For migration details, see Migrate External Projects in Data Lakehouse Solution 1.0 to External Schemas in Data Lakehouse 2.0.