This topic describes the billable items, billing methods, and pricing for Deep Learning Containers (DLC).

Important

The pricing in this topic is for reference only. Actual prices are shown on your bills.

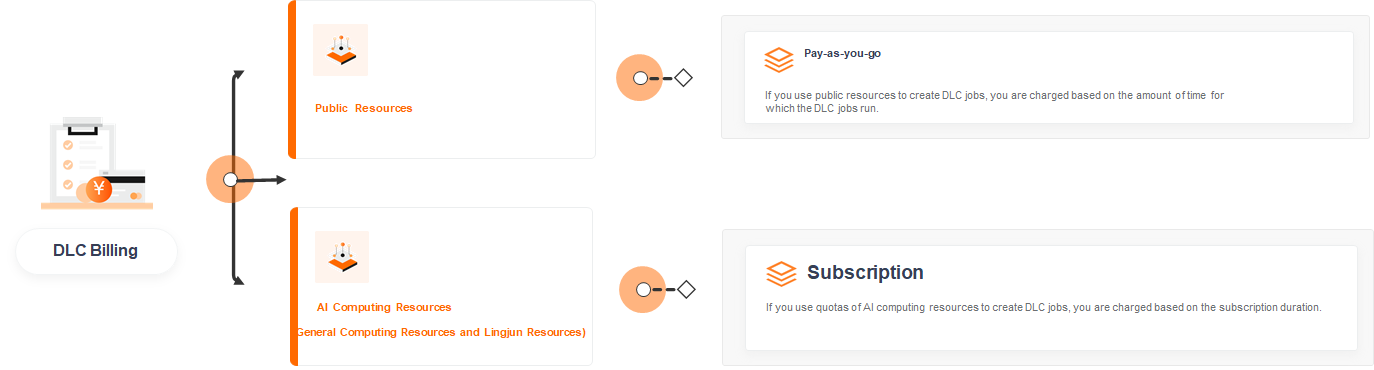

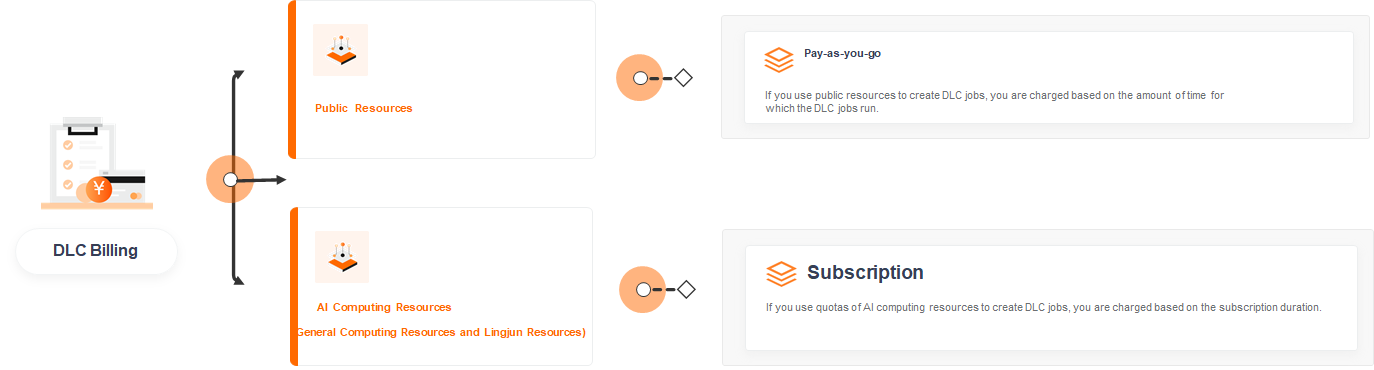

Billable items

The following figures show the billable items:

Billing methods

Select a billing method based on your business needs:

| Billing method | Billable item | Billing entity | Billing rule | Stop billing |
| --- | --- | --- | --- | --- |
| Pay-as-you-go | Public resources | Runtime of DLC jobs (duration of public resource usage) | Charged based on the runtime of DLC jobs that use public resources. | The DLC job completes, or the DLC job is stopped. |
| Subscription | AI computing resources (general computing resources and Lingjun resources) | For more information, see Billing of AI computing resources. | For more information, see Billing of AI computing resources. | Not applicable |

Public resources

Pay-as-you-go

With pay-as-you-go, you are charged based on the runtime of DLC jobs that use public resources.

|

Resource type

|

Billing formula

|

Unit price

|

Billing duration

|

Scaling

|

Notes

|

|

General computing public resources

|

Bill amount = Number of nodes × (Unit price / 60) × Runtime (minutes)

|

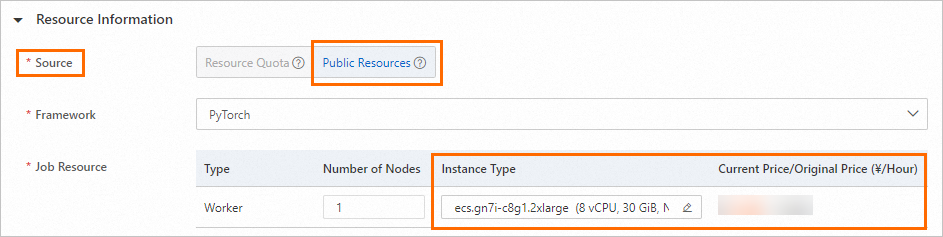

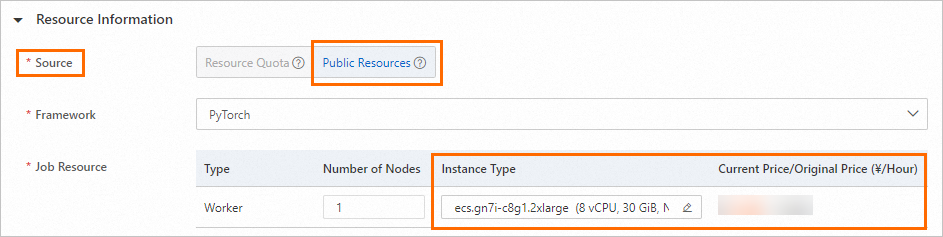

Prices vary by region. To view pricing, go to the DLC create job page. In the Resource Information section, set Resource Source to Public Resources and select a specification. For more information, see Create a job using the console.

|

Based on DLC job runtime.

|

Not applicable

|

None

|

AI computing resources

Subscription

AI computing resources include general computing resources and Lingjun resources. With subscription, you purchase AI computing resources upfront and use the resource quota to submit DLC jobs. For more information, see Billing of AI computing resources.

Billing examples

Important

The following billing examples are for reference only. Actual fees are displayed in the console or on the buy page.

Public resources example

-

Scenario:

You create a training job in the China (Shanghai) region using a general computing public resource with the ecs.g6.2xlarge specification. The job uses one node and runs for 1 minute and 15 seconds.

-

Calculation:

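Bill amount = 1 × (0.6 / 60) × 1.25 = 0.0125 USD

Here, 1 is the number of nodes, 0.6 is the unit price used in this example, and 1.25 is the runtime in minutes (1 minute and 15 seconds).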
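The calculation can also be sketched in Python. This is a minimal illustration, not an official billing tool. The function name `dlc_public_resource_bill` and its parameters are hypothetical. The sketch assumes that the unit price is an hourly price (which the division by 60 in the formula suggests) and that runtime is counted to the second without rounding. The actual metering granularity is not stated in this topic.

```python
from decimal import Decimal


def dlc_public_resource_bill(nodes: int, unit_price_per_hour: str, runtime_seconds: int) -> Decimal:
    """Estimate a pay-as-you-go fee for a DLC job on public resources.

    Implements: Bill amount = Number of nodes x (Unit price / 60) x Runtime (minutes).
    Assumption: the unit price is per hour, so dividing by 60 gives a per-minute price.
    Assumption: runtime is not rounded; real bills may round differently.
    """
    price_per_minute = Decimal(unit_price_per_hour) / Decimal(60)
    runtime_minutes = Decimal(runtime_seconds) / Decimal(60)
    return nodes * price_per_minute * runtime_minutes


# Scenario from the example: one node, unit price 0.6, runtime 1 minute 15 seconds (75 seconds).
amount = dlc_public_resource_bill(nodes=1, unit_price_per_hour="0.6", runtime_seconds=75)
print(f"Estimated bill amount: {amount} USD")
```

With the example inputs, the function returns 0.0125, which matches the calculation above. `Decimal` is used instead of `float` so that small currency amounts are not affected by binary floating-point rounding.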

AI computing resources example

For billing examples of AI computing resources, see Billing of AI computing resources.

Appendix: List of public resource specifications

The following table lists some general computing public resource specifications supported by DLC. For a complete list, see the Resource Information section on the DLC create job page. For more information, see Create a job using the console. Available specifications vary by region. The specifications displayed on the console prevail.

| Resource type | Specification | GPU type |
| --- | --- | --- |
| ecs.g6.xlarge | 4 vCPUs + 16 GB memory | None |
| ecs.c6.large | 2 vCPUs + 4 GB memory | None |
| ecs.g6.large | 2 vCPUs + 8 GB memory | None |
| ecs.g6.2xlarge | 8 vCPUs + 32 GB memory | None |
| ecs.g6.4xlarge | 16 vCPUs + 64 GB memory | None |
| ecs.g6.8xlarge | 32 vCPUs + 128 GB memory | None |
| ecs.r7.large | 2 vCPUs + 16 GB memory | None |
| ecs.r7.xlarge | 4 vCPUs + 32 GB memory | None |
| ecs.r7.2xlarge | 8 vCPUs + 64 GB memory | None |
| ecs.r7.4xlarge | 16 vCPUs + 128 GB memory | None |
| ecs.r7.6xlarge | 24 vCPUs + 192 GB memory | None |
| ecs.r7.8xlarge | 32 vCPUs + 256 GB memory | None |
| ecs.r7.16xlarge | 64 vCPUs + 512 GB memory | None |
| ecs.g5.xlarge | 4 vCPUs + 16 GB memory | None |
| ecs.g7.xlarge | 4 vCPUs + 16 GB memory | None |
| ecs.g7.2xlarge | 8 vCPUs + 32 GB memory | None |
| ecs.g5.2xlarge | 8 vCPUs + 32 GB memory | None |
| ecs.g6.3xlarge | 12 vCPUs + 48 GB memory | None |
| ecs.g7.3xlarge | 12 vCPUs + 48 GB memory | None |
| ecs.g7.4xlarge | 16 vCPUs + 64 GB memory | None |
| ecs.r7.3xlarge | 12 vCPUs + 96 GB memory | None |
| ecs.c6e.8xlarge | 32 vCPUs + 64 GB memory | None |
| ecs.g6.6xlarge | 24 vCPUs + 96 GB memory | None |
| ecs.g7.6xlarge | 24 vCPUs + 96 GB memory | None |
| ecs.g5.4xlarge | 16 vCPUs + 64 GB memory | None |
| ecs.hfc6.8xlarge | 32 vCPUs + 64 GB memory | None |
| ecs.g7.8xlarge | 32 vCPUs + 128 GB memory | None |
| ecs.hfc6.10xlarge | 40 vCPUs + 96 GB memory | None |
| ecs.g6.13xlarge | 52 vCPUs + 192 GB memory | None |
| ecs.g5.8xlarge | 32 vCPUs + 128 GB memory | None |
| ecs.hfc6.16xlarge | 64 vCPUs + 128 GB memory | None |
| ecs.g7.16xlarge | 64 vCPUs + 256 GB memory | None |
| ecs.hfc6.20xlarge | 80 vCPUs + 192 GB memory | None |
| ecs.g6.26xlarge | 104 vCPUs + 384 GB memory | None |
| ecs.g5.16xlarge | 64 vCPUs + 256 GB memory | None |
| ecs.r5.8xlarge | 32 vCPUs + 256 GB memory | None |
| ecs.re6.13xlarge | 52 vCPUs + 768 GB memory | None |
| ecs.re6.26xlarge | 104 vCPUs + 1,536 GB memory | None |
| ecs.re6.52xlarge | 208 vCPUs + 3,072 GB memory | None |
| ecs.g7.32xlarge | 128 vCPUs + 512 GB memory | None |
| ecs.gn7i-c8g1.2xlarge | 8 vCPUs + 30 GB memory | 1 × NVIDIA A10 |
| ecs.gn6v-c8g1.2xlarge | 8 vCPUs + 32 GB memory | 1 × NVIDIA V100 |
| ecs.gn6e-c12g1.24xlarge | 96 vCPUs + 736 GB memory | 8 × NVIDIA V100 |
| ecs.gn6v-c8g1.16xlarge | 64 vCPUs + 256 GB memory | 8 × NVIDIA V100 |
| ecs.gn6v-c10g1.20xlarge | 82 vCPUs + 336 GB memory | 8 × NVIDIA V100 |
| ecs.gn6e-c12g1.12xlarge | 48 vCPUs + 338 GB memory | 4 × NVIDIA V100 |
| ecs.gn6v-c8g1.8xlarge | 32 vCPUs + 128 GB memory | 4 × NVIDIA V100 |
| ecs.gn6i-c24g1.24xlarge | 96 vCPUs + 372 GB memory | 4 × NVIDIA T4 |
| ecs.gn5-c8g1.4xlarge | 16 vCPUs + 120 GB memory | 2 × NVIDIA P100 |
| ecs.gn7i-c32g1.16xlarge | 64 vCPUs + 376 GB memory | 2 × NVIDIA A10 |
| ecs.gn6i-c24g1.12xlarge | 48 vCPUs + 186 GB memory | 2 × NVIDIA T4 |
| ecs.gn6e-c12g1.3xlarge | 12 vCPUs + 92 GB memory | 1 × NVIDIA V100 |
| ecs.gn5-c4g1.xlarge | 4 vCPUs + 30 GB memory | 1 × NVIDIA P100 |
| ecs.gn5-c8g1.2xlarge | 8 vCPUs + 60 GB memory | 1 × NVIDIA P100 |
| ecs.gn5-c28g1.7xlarge | 28 vCPUs + 112 GB memory | 1 × NVIDIA P100 |
| ecs.gn6i-c4g1.xlarge | 4 vCPUs + 15 GB memory | 1 × NVIDIA T4 |
| ecs.gn6i-c8g1.2xlarge | 8 vCPUs + 31 GB memory | 1 × NVIDIA T4 |
| ecs.gn6i-c16g1.4xlarge | 16 vCPUs + 62 GB memory | 1 × NVIDIA T4 |
| ecs.gn6i-c24g1.6xlarge | 24 vCPUs + 93 GB memory | 1 × NVIDIA T4 |
| ecs.gn7i-c32g1.8xlarge | 32 vCPUs + 188 GB memory | 1 × NVIDIA A10 |
| ecs.gn7e-c16g1.4xlarge | 16 vCPUs + 125 GB memory | 1 × GU50 |
| ecs.gn7-c12g1.3xlarge | 12 vCPUs + 95 GB memory | 1 × GU50 |
| ecs.gn7i-c16g1.4xlarge | 16 vCPUs + 60 GB memory | 1 × NVIDIA A10 |
| ecs.gn7-c13g1.26xlarge | 104 vCPUs + 760 GB memory | 8 × GU50 |
| ecs.ebmgn7e.32xlarge | 128 vCPUs + 1,024 GB memory | 8 × GU50 |
| ecs.gn7i-c32g1.32xlarge | 128 vCPUs + 752 GB memory | 4 × NVIDIA A10 |
| ecs.gn7-c13g1.13xlarge | 52 vCPUs + 380 GB memory | 4 × GU50 |
| ecs.gn7s-c32g1.32xlarge | 128 vCPUs + 1,000 GB memory | 4 × NVIDIA A30 |
| ecs.gn7s-c56g1.14xlarge | 56 vCPUs + 440 GB memory | 1 × NVIDIA A30 |
| ecs.gn7s-c48g1.12xlarge | 48 vCPUs + 380 GB memory | 1 × NVIDIA A30 |
| ecs.gn7s-c16g1.4xlarge | 16 vCPUs + 120 GB memory | 1 × NVIDIA A30 |
| ecs.gn7s-c8g1.2xlarge | 8 vCPUs + 60 GB memory | 1 × NVIDIA A30 |
| ecs.gn7s-c32g1.8xlarge | 32 vCPUs + 250 GB memory | 1 × NVIDIA A30 |