Elastic Container Instance does not support DaemonSets. Pods exit immediately after a job stops running. The system may not collect all logs when the pods exit. We recommend that you mount network-attached storage (NAS) file systems to jobs and store log files to the NAS file systems. This topic describes how to collect logs of jobs when elastic container instances are used to run jobs in Container Service for Kubernetes (ACK) clusters.

Prerequisites

An ACK cluster is created, and virtual nodes are deployed on the cluster. For more information, see Create an ACK managed cluster and Deploy the virtual node controller and use it to create Elastic Container Instance-based pods.

The alibabacloud.com/eci=true label is added to the namespaces of the ACK cluster. After the label is added, pods can be scheduled to run on elastic container instances. For more information, see Schedule pods to an x86-based virtual node.

A NAS file system is created, and a mount target is added to the file system. For more information, see Create a NAS file system and Manage mount targets.

Background information

In ACK clusters, the logs of jobs that run on ECS instances (real nodes) can be collected by using a DaemonSet. Jobs that run on elastic container instances (virtual nodes) cannot rely on a DaemonSet, and their pods exit immediately after the jobs stop running. As a result, the system may not collect all logs before the pods exit.

For the preceding scenario, you can perform the following operations to collect the logs of the jobs:

Mount NAS file systems to the jobs and store the log output to the NAS file systems.

Mount the NAS file systems to another pod to obtain the logs of the jobs that are stored in the NAS file systems.

If you use Simple Log Service, you can mount a volume to a job by configuring environment variables and then use the data in Simple Log Service as the logs of pods. For more information, see Collect logs by using environment variables.

Procedure

The following example describes how to collect the logs of a job. In this example, the alibabacloud.com/eci=true label has been added to the namespace named vk, so pods that are deployed in the namespace are scheduled to run on elastic container instances. When you deploy the job, replace vk with the actual name of your namespace.
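For reference, the labeled namespace that this example assumes can be sketched as the following manifest. Only the namespace name vk and the label come from this topic. Declaring the label in a manifest, rather than adding it to an existing namespace, is an assumption made for illustration.

```yaml
# Sketch of the namespace used in this example.
# Pods created in this namespace are expected to be scheduled
# to elastic container instances because of the label.
apiVersion: v1
kind: Namespace
metadata:
  name: vk                          # Replace with your own namespace name.
  labels:
    alibabacloud.com/eci: "true"    # Label values must be strings, so quote true.
```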

Prepare the YAML configuration file of the job.

vim job.yaml

The following sample configuration defines a job that calculates the value of π.

    apiVersion: batch/v1
    kind: Job
    metadata:
      name: pi
    spec:
      template:
        spec:
          containers:
          - name: pi
            image: resouer/ubuntu-bc
            command: ["sh", "-c", "echo 'scale=1000; 4*a(1)' | bc -l > /eci/a.log 2>&1"]  # Redirect the output to the specified file.
            volumeMounts:
            - name: log-volume
              mountPath: /eci
              readOnly: false
          restartPolicy: Never
          volumes:    # Mount a NAS file system to store logs.
          - name: log-volume
            nfs:
              server: 04edd48c7c-****.cn-hangzhou.nas.aliyuncs.com
              path: /
              readOnly: false
      backoffLimit: 4

Deploy the job.

kubectl apply -f job.yaml -n vk

View the status of the pod to check whether the job runs as expected.

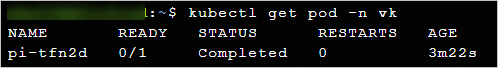

kubectl get pod -n vkThe following output is returned.

Prepare the configuration file of the pod that is used to collect the logs of the job.

vim log-collection.yaml

Sample YAML configuration file:

    apiVersion: v1
    kind: Pod
    metadata:
      name: log-collection
    spec:
      containers:
      - image: nginx:latest
        name: log-collection
        command: ['/bin/sh', '-c', 'echo $(cat /eci/a.log)']  # Print the log file of the job.
        volumeMounts:
        - mountPath: /eci
          name: log-volume
      restartPolicy: Never
      volumes:    # Mount the NAS file system in which the logs of the job are stored.
      - name: log-volume
        nfs:
          server: 04edd48c7c-****.cn-hangzhou.nas.aliyuncs.com
          path: /
          readOnly: false

Deploy the pod.

kubectl apply -f log-collection.yaml -n vk

After the pod stops running, view the log file of the job.

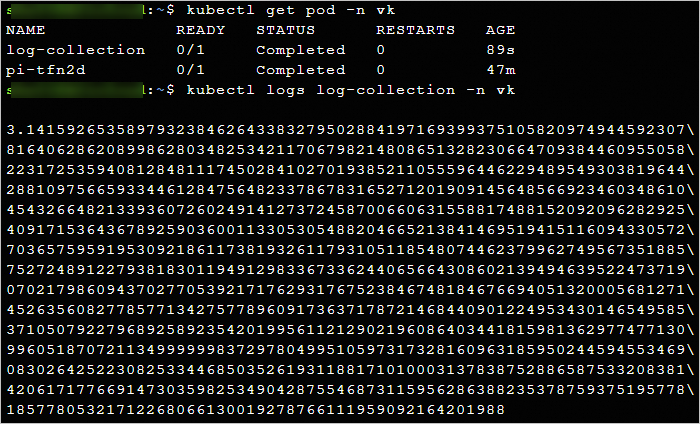

kubectl get pod -n vk kubectl logs log-collection -n vkThe following output is returned.
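The sample job always writes to /eci/a.log, so several jobs that share one NAS file system would overwrite each other's logs. One possible variation, shown here only as a sketch, names the log file after the pod. The job name pi-per-pod-log and the POD_NAME variable are hypothetical, and the sketch assumes that the Downward API field metadata.name is available to pods on your virtual nodes.

```yaml
# Sketch: write each pod's output to its own file on the NAS file system.
apiVersion: batch/v1
kind: Job
metadata:
  name: pi-per-pod-log              # Hypothetical name for illustration.
spec:
  template:
    spec:
      containers:
      - name: pi
        image: resouer/ubuntu-bc
        env:
        - name: POD_NAME            # Hypothetical variable that holds the pod name.
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        # ${POD_NAME} is expanded by the shell in the container, not by Kubernetes.
        command: ["sh", "-c", "echo 'scale=1000; 4*a(1)' | bc -l > /eci/${POD_NAME}.log 2>&1"]
        volumeMounts:
        - name: log-volume
          mountPath: /eci
      restartPolicy: Never
      volumes:
      - name: log-volume
        nfs:
          server: 04edd48c7c-****.cn-hangzhou.nas.aliyuncs.com
          path: /
  backoffLimit: 4
```

With this variation, the log collection pod would read /eci/<pod name>.log instead of /eci/a.log.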