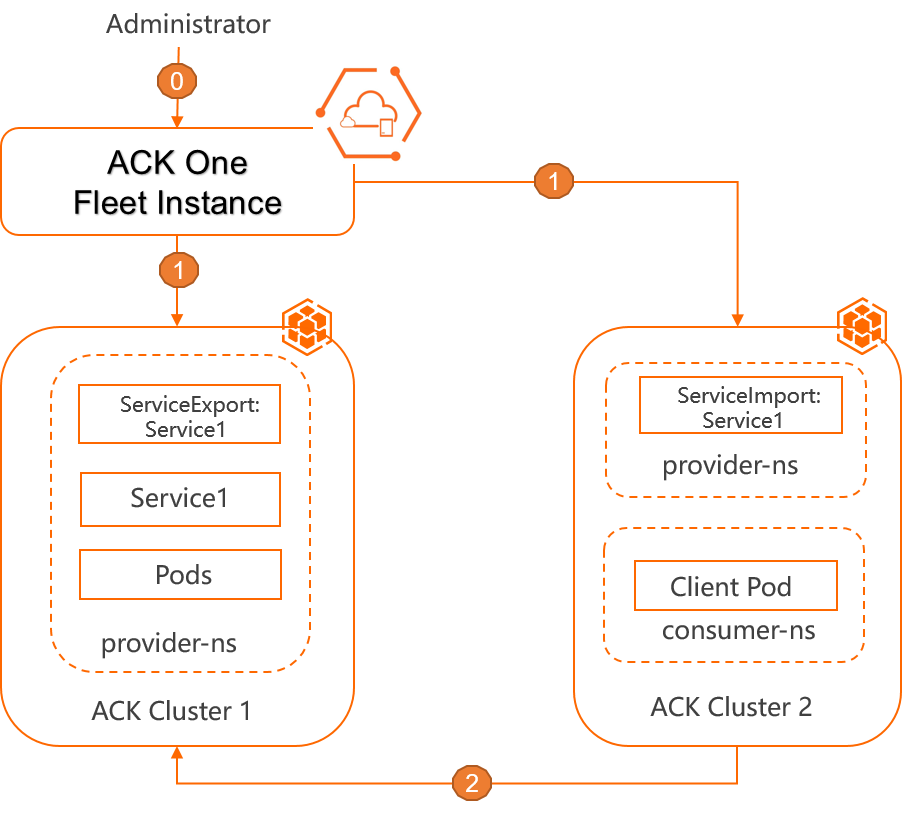

Multi-cluster Services (MCS) lets pods in one ACK cluster access Services running in another cluster without creating a load balancer. You export a Service from the provider cluster using a ServiceExport resource, and the Fleet instance automatically synchronizes endpoint information to the consumer cluster. You then create a ServiceImport in the consumer cluster to make the exported Service accessible.

Prerequisites

Before you begin, ensure that you have:

- Fleet management enabled. See Enable multi-cluster management.
- Two clusters associated with the Fleet instance: a service provider cluster (ACK Cluster 1) and a service consumer cluster (ACK Cluster 2). See Associate clusters with a Fleet instance.
- Kubernetes 1.22 or later on both clusters.
- kubeconfig files for both clusters, with kubectl configured to connect to each. See Obtain the kubeconfig file of a cluster and use kubectl to connect to the cluster.
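
The version requirement can be checked from the command line before you continue. The following is a minimal sketch, not part of the official procedure: `version_ge` and `check_cluster_version` are hypothetical helper names, the context name you pass in is whatever your kubeconfig defines, and the second helper assumes that `jq` is installed.

```shell
# Hypothetical helper: succeeds when dotted version $1 >= dotted version $2.
# A leading "v" is ignored; a suffix such as "-aliyun.1" on a component is
# dropped by awk's numeric conversion.
version_ge() {
  awk -v a="${1#v}" -v b="${2#v}" 'BEGIN {
    na = split(a, x, "."); nb = split(b, y, ".")
    n = (na > nb) ? na : nb
    for (i = 1; i <= n; i++) {
      xi = x[i] + 0; yi = y[i] + 0   # missing or non-numeric parts count as 0
      if (xi > yi) exit 0
      if (xi < yi) exit 1
    }
    exit 0
  }'
}

# Hypothetical helper: reads the server version of one kubeconfig context
# (assumes jq is available) and compares it with the 1.22 minimum.
check_cluster_version() {
  ctx=$1
  server=$(kubectl --context "$ctx" version -o json | jq -r '.serverVersion.gitVersion')
  if version_ge "$server" "1.22"; then
    echo "$ctx: $server OK"
  else
    echo "$ctx: $server is older than 1.22" >&2
    return 1
  fi
}
```

Call `check_cluster_version` once per cluster, passing the context name for ACK Cluster 1 and then the one for ACK Cluster 2.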

How it works

- The administrator deploys namespaces, Deployments, and Services in the provider cluster (ACK Cluster 1) and the consumer cluster (ACK Cluster 2), and creates the MCS resources: a ServiceExport in the provider cluster and a ServiceImport in the consumer cluster.
- The Fleet instance watches the ServiceExport and ServiceImport resources in the associated ACK clusters and synchronizes the MCS endpoint information between them.
- Pods in ACK Cluster 2 can then reach Service 1 in ACK Cluster 1.

Step 1: Set up the provider cluster

Skip this step if the Service and its resources already exist in ACK Cluster 1.

- Create a namespace in ACK Cluster 1:

      kubectl create ns provider-ns

- Create a file named `app-meta.yaml` with the following content:

      apiVersion: v1
      kind: Service
      metadata:
        name: service1
        namespace: provider-ns
      spec:
        ports:
        - name: http
          port: 80
          protocol: TCP
          targetPort: 8080
        selector:
          app: web-demo
        sessionAffinity: None
        type: ClusterIP
      ---
      apiVersion: apps/v1
      kind: Deployment
      metadata:
        labels:
          app: web-demo
        name: web-demo
        namespace: provider-ns
      spec:
        replicas: 2
        selector:
          matchLabels:
            app: web-demo
        template:
          metadata:
            labels:
              app: web-demo
          spec:
            containers:
            - image: acr-multiple-clusters-registry.cn-hangzhou.cr.aliyuncs.com/ack-multiple-clusters/web-demo:0.4.0
              name: web-demo
              env:
              - name: ENV_NAME
                value: cluster1-beijing

- Apply the manifest to ACK Cluster 1:

      kubectl apply -f app-meta.yaml

- Create a file named `service-export.yaml` with the following content:

      apiVersion: multicluster.x-k8s.io/v1alpha1
      kind: ServiceExport
      metadata:
        name: service1          # Must match the name of the Service to export.
        namespace: provider-ns  # Must match the namespace of the Service to export.

- Apply the ServiceExport in ACK Cluster 1:

      kubectl apply -f service-export.yaml
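
Because the ServiceExport must carry the same name and namespace as the Service, one option is to generate the manifest from those two values instead of typing them twice. The following is a minimal sketch under that assumption; `render_service_export` is a hypothetical helper name, not something the Fleet tooling provides.

```shell
# Hypothetical helper: prints a ServiceExport manifest whose name and namespace
# are taken from the arguments, so they cannot drift from the Service's own.
render_service_export() {
  name=$1
  namespace=$2
  cat <<EOF
apiVersion: multicluster.x-k8s.io/v1alpha1
kind: ServiceExport
metadata:
  name: ${name}
  namespace: ${namespace}
EOF
}

# Example (run against ACK Cluster 1):
#   render_service_export service1 provider-ns | kubectl apply -f -
#   kubectl get serviceexport service1 -n provider-ns
```

The `kubectl get serviceexport` line assumes the ServiceExport CRD is registered under its usual resource name in the cluster.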

Step 2: Set up the consumer cluster

- Create the same namespace in ACK Cluster 2:

      kubectl create ns provider-ns

- Create a file named `service-import.yaml` with the following content:

      apiVersion: multicluster.x-k8s.io/v1alpha1
      kind: ServiceImport
      metadata:
        name: service1          # Must match the name of the exported Service.
        namespace: provider-ns  # Must match the namespace of the exported Service.
      spec:
        ports:
        - name: http
          port: 80
          protocol: TCP
        type: ClusterSetIP

  The parameters are described in the following table:

  | Parameter | Description |
  | --- | --- |
  | `metadata.name` | The Service name. Must match the exported Service name. |
  | `metadata.namespace` | The namespace. Must match the exported Service namespace. |
  | `spec.ports.name` | The port name. Must match the exported Service. |
  | `spec.ports.protocol` | The protocol. Must match the exported Service. |
  | `spec.ports.appProtocol` | The application protocol. Must match the exported Service. |
  | `spec.ports.port` | The port number. Must match the exported Service. |
  | `spec.ips` | The virtual IP address. Set by the Fleet instance; leave this blank. |
  | `spec.type` | Valid values: `ClusterSetIP` and `Headless`. Use `Headless` when the source Service has `clusterIP: None`. Use `ClusterSetIP` in all other cases. |
  | `spec.sessionAffinity` | Session affinity. Valid values: `ClientIP` and `None`. Must match the exported Service. |
  | `spec.sessionAffinityConfig` | Session affinity configuration. Must match the exported Service. |

- Apply the ServiceImport in ACK Cluster 2:

      kubectl apply -f service-import.yaml
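
The rule for `spec.type` in the table above can be expressed as a small lookup on the source Service's cluster IP. This is only an illustrative sketch; `service_import_type` is a hypothetical helper name.

```shell
# Hypothetical helper: maps the source Service's spec.clusterIP to the
# ServiceImport spec.type described in the table above.
service_import_type() {
  case "$1" in
    None) echo "Headless" ;;
    *)    echo "ClusterSetIP" ;;
  esac
}

# Example (run against ACK Cluster 1, where the Service lives):
#   cluster_ip=$(kubectl get service service1 -n provider-ns -o jsonpath='{.spec.clusterIP}')
#   service_import_type "$cluster_ip"
```

For the `service1` manifest in Step 1, which is a regular ClusterIP Service, the helper returns `ClusterSetIP`, matching the value used in `service-import.yaml`.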

Step 3: Access the Service across clusters

After the ServiceImport is created, the Fleet instance automatically creates a multi-cluster Service with the amcs- prefix in ACK Cluster 2. You can access Service 1 in ACK Cluster 1 using either the Service name or a domain name.

Method 1: Use the Service name

- Query Services in ACK Cluster 2 to confirm the `amcs-service1` Service was created:

      kubectl get service -n provider-ns

  Expected output:

      NAME            TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)   AGE
      amcs-service1   ClusterIP   172.xx.xx.xx   <none>        80/TCP    26m

- From a pod in ACK Cluster 2, run:

      curl amcs-service1.provider-ns
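
If no client pod is running yet in ACK Cluster 2, the request can be issued from a throwaway pod. The following is a sketch under stated assumptions: `amcs_host` and `curl_from_temp_pod` are hypothetical helper names, and the `curlimages/curl` image and the pod name `mcs-curl-test` are chosen only for illustration.

```shell
# Hypothetical helper: builds the in-cluster host name of the multi-cluster
# Service, using the amcs- prefix described above.
amcs_host() {
  echo "amcs-$1.$2"
}

# Hypothetical helper: runs curl once from a throwaway pod in ACK Cluster 2.
# The curlimages/curl image and the pod name are assumptions for illustration.
curl_from_temp_pod() {
  kubectl run mcs-curl-test --rm -i --restart=Never \
    --image=curlimages/curl -n "$2" -- curl -s "$(amcs_host "$1" "$2")"
}
```

With kubectl pointed at ACK Cluster 2, `curl_from_temp_pod service1 provider-ns` requests `amcs-service1.provider-ns` and removes the pod when the command exits.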

Method 2: Use a domain name

- Install or update CoreDNS in ACK Cluster 2. The CoreDNS version must be 1.9.3 or later. For more information, see CoreDNS and Manage components.

- Open the CoreDNS ConfigMap for editing in ACK Cluster 2:

      kubectl edit configmap coredns -n kube-system

- In the `Corefile` field, add `multicluster clusterset.local` to enable domain name resolution for multi-cluster Services:

      apiVersion: v1
      data:
        Corefile: |
          .:53 {
              errors
              health {
                 lameduck 15s
              }
              ready
              multicluster clusterset.local    # Add this line.
              kubernetes cluster.local in-addr.arpa ip6.arpa {
                pods verified
                ttl 30
                fallthrough in-addr.arpa ip6.arpa
              }
              ...
          }
      kind: ConfigMap
      metadata:
        name: coredns
        namespace: kube-system

- From a pod in ACK Cluster 2, run:

      curl service1.provider-ns.svc.clusterset.local

- (Optional) To use a shorter URL, add `svc.clusterset.local` and `clusterset.local` to the `dnsConfig.searches` field of the client pod:

      apiVersion: apps/v1
      kind: Deployment
      metadata:
        name: client-pod
        namespace: consumer-ns
      spec:
        ...
        template:
          ...
          spec:
            dnsPolicy: "ClusterFirst"
            dnsConfig:
              searches:
              - svc.clusterset.local
              - clusterset.local
              - consumer-ns.svc.cluster.local
              - svc.cluster.local
              - cluster.local
            containers:
            - name: client-pod
              ...

  After applying this configuration, you can use the shorter form:

      curl service1.provider-ns
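
The short form works because the resolver appends each search domain to a name that has fewer dots than the pod's `ndots` setting (5 by default under `ClusterFirst`) and tries the candidates until one resolves. The sketch below only illustrates that expansion for the search list as written in the Deployment; `expand_search` is a hypothetical helper name, and the order in the pod's actual `/etc/resolv.conf` may differ because Kubernetes merges `dnsConfig.searches` with the list generated by the DNS policy.

```shell
# Hypothetical helper: prints the fully qualified names a resolver would try
# for a short name, one per search domain, in the order given.
expand_search() {
  short=$1
  shift
  for domain in "$@"; do
    echo "${short}.${domain}"
  done
}

# Search list as written in the Deployment above; one of the candidates is the
# clusterset.local name served by the multicluster plugin.
expand_search service1.provider-ns \
  svc.clusterset.local clusterset.local \
  consumer-ns.svc.cluster.local svc.cluster.local cluster.local
```

To see the search list a running pod actually uses, inspect `/etc/resolv.conf` inside the pod.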

What's next

You can also configure MCS in the ACK One console. See Use MCS in the ACK One console.