Memory metrics are reported differently depending on where you measure them: at the Linux host level, within a single process, inside a cgroup, or through container tools such as Docker and Kubernetes. When a container gets OOM-killed or a Java application leaks memory, understanding how each layer defines "memory usage" is essential for diagnosing the root cause.

The following sections describe common memory metrics, query commands, and calculation formulas across Linux, cgroups, Docker, Kubernetes, and Java.

Linux memory

The MemTotal value (the total column in the output of the free command) is less than the installed physical RAM capacity, because the BIOS and kernel initialization consume memory during the Linux boot process.

The following dmesg output shows the memory consumed during kernel initialization:

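```
dmesg | grep Memory

Memory: 131604168K/134217136K available (14346K kernel code, 9546K rwdata, 9084K rodata, 2660K init, 7556K bss, 2612708K reserved, 0K cma-reserved)
```

In this sample, 134217136K - 131604168K = 2612968K (about 2.5 GiB) is not available to the system, most of which is reported as reserved.

Query commands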

Query Linux memory with commands such as free and cat /proc/meminfo.

Memory formula

Calculate Linux memory usage with the following formula:

total = used + free + buff/cache   // Total memory = Used memory + Free memory + Cache memory

Used memory includes the memory consumed by the kernel and all processes. Kernel memory usage can be estimated from /proc/meminfo fields:

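kernel used = Slab + VmallocUsed + PageTables + KernelStack + HardwareCorrupted + Bounce + X

X denotes kernel memory that no /proc/meminfo field accounts for, such as pages that kernel code allocates directly with alloc_pages.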
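As a minimal sketch, the following Go program reads /proc/meminfo and applies the two formulas above. It assumes that free computes buff/cache as Buffers + Cached + SReclaimable, which matches some procps-ng releases; other versions of free may compute used differently. Because X has no /proc/meminfo field, the kernel value it prints is only a lower bound. The function names are illustrative.

```go
package main

import (
	"bufio"
	"fmt"
	"os"
	"strconv"
	"strings"
)

// parseMeminfo turns /proc/meminfo text into a map of field name -> value in kB.
func parseMeminfo(text string) map[string]uint64 {
	fields := make(map[string]uint64)
	sc := bufio.NewScanner(strings.NewReader(text))
	for sc.Scan() {
		// Lines look like "MemTotal:       131604168 kB".
		name, rest, ok := strings.Cut(sc.Text(), ":")
		if !ok {
			continue
		}
		parts := strings.Fields(rest)
		if len(parts) == 0 {
			continue
		}
		if v, err := strconv.ParseUint(parts[0], 10, 64); err == nil {
			fields[name] = v
		}
	}
	return fields
}

// buffCache approximates the buff/cache column of free.
// Assumption: Buffers + Cached + SReclaimable, as in some procps-ng releases.
func buffCache(m map[string]uint64) uint64 {
	return m["Buffers"] + m["Cached"] + m["SReclaimable"]
}

// used rearranges total = used + free + buff/cache, clamping at zero.
func used(m map[string]uint64) uint64 {
	notUsed := m["MemFree"] + buffCache(m)
	if notUsed > m["MemTotal"] {
		return 0
	}
	return m["MemTotal"] - notUsed
}

// kernelUsed sums the measurable terms of the kernel formula.
// The X term has no /proc/meminfo field, so the result is a lower bound.
func kernelUsed(m map[string]uint64) uint64 {
	return m["Slab"] + m["VmallocUsed"] + m["PageTables"] +
		m["KernelStack"] + m["HardwareCorrupted"] + m["Bounce"]
}

func main() {
	data, err := os.ReadFile("/proc/meminfo")
	if err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	m := parseMeminfo(string(data))
	fmt.Printf("total=%d kB used=%d kB free=%d kB buff/cache=%d kB\n",
		m["MemTotal"], used(m), m["MemFree"], buffCache(m))
	fmt.Printf("kernel used (without X) >= %d kB\n", kernelUsed(m))
}
```

Comparing its used value with the output of free on the same host is a quick way to check which buff/cache definition your version of free applies.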

Process memory

Process memory consumption consists of two parts:

- The physical memory to which the process's virtual address space is mapped.
- The page cache generated by disk read and write operations.

Physical memory and virtual address mapping

Physical memory: The installed hardware memory (RAM capacity).

Virtual memory: The memory space provided by the operating system for program execution. Programs run in two modes: user mode and kernel mode.

User mode is the non-privileged mode for user programs. User space consists of:

| Segment | Description |
|---|---|
| Stack | The stack used for function calls (local variables and return addresses) |
| Memory mapping segment (mmap) | The area for memory mappings, such as shared libraries and mapped files |
| Heap | Dynamically allocated memory |
| BSS | Uninitialized static variables |
| Data | Initialized static variables |
| Text | Binary executable code |

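In user mode, programs obtain virtual address ranges through system calls such as brk and mmap. The kernel maps these virtual addresses to physical memory through page tables, usually when a page is first accessed.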
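The user-space segments can be observed in /proc/[pid]/maps. The following Go sketch classifies each mapping of its own process with a simple heuristic, which is an assumption rather than a kernel-defined classification: the kernel labels the stack and the brk heap as [stack] and [heap], anonymous mappings have no pathname, and executable file mappings usually hold text.

```go
package main

import (
	"bufio"
	"fmt"
	"os"
	"strings"
)

// classify guesses which segment a /proc/[pid]/maps line belongs to.
// Columns: address perms offset dev inode [pathname]; anonymous mappings have no pathname.
func classify(line string) string {
	f := strings.Fields(line)
	if len(f) < 6 {
		return "anonymous mmap"
	}
	perms, path := f[1], f[5]
	switch {
	case path == "[stack]":
		return "stack"
	case path == "[heap]":
		return "heap"
	case strings.HasPrefix(path, "["):
		return "special " + path // for example [vdso] or [vvar]
	case strings.Contains(perms, "x"):
		return "text (file-backed, executable)"
	default:
		return "file-backed (data, rodata, mapped files)"
	}
}

func main() {
	f, err := os.Open("/proc/self/maps")
	if err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	defer f.Close()

	counts := make(map[string]int)
	sc := bufio.NewScanner(f)
	for sc.Scan() {
		counts[classify(sc.Text())]++
	}
	if err := sc.Err(); err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	for kind, n := range counts {
		fmt.Printf("%-42s %d mappings\n", kind, n)
	}
}
```

Running it on a Go binary typically shows few or no [heap] entries, because the Go runtime obtains heap memory with mmap, so that memory appears as anonymous mappings instead.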

Kernel mode is used when programs need to access operating system kernel data. Kernel space consists of:

| Segment | Description |
|---|---|
| Direct mapping space | Linear mapping of virtual addresses to physical memory |
| Vmalloc | Dynamic mapping space; maps contiguous virtual addresses to non-contiguous physical memory |
| Persistent kernel mapping space | Maps virtual addresses to high memory (physical memory outside the direct mapping) |
| Fixed mapping space | Virtual addresses reserved at compile time for specific mapping purposes |

Shared vs. exclusive memory

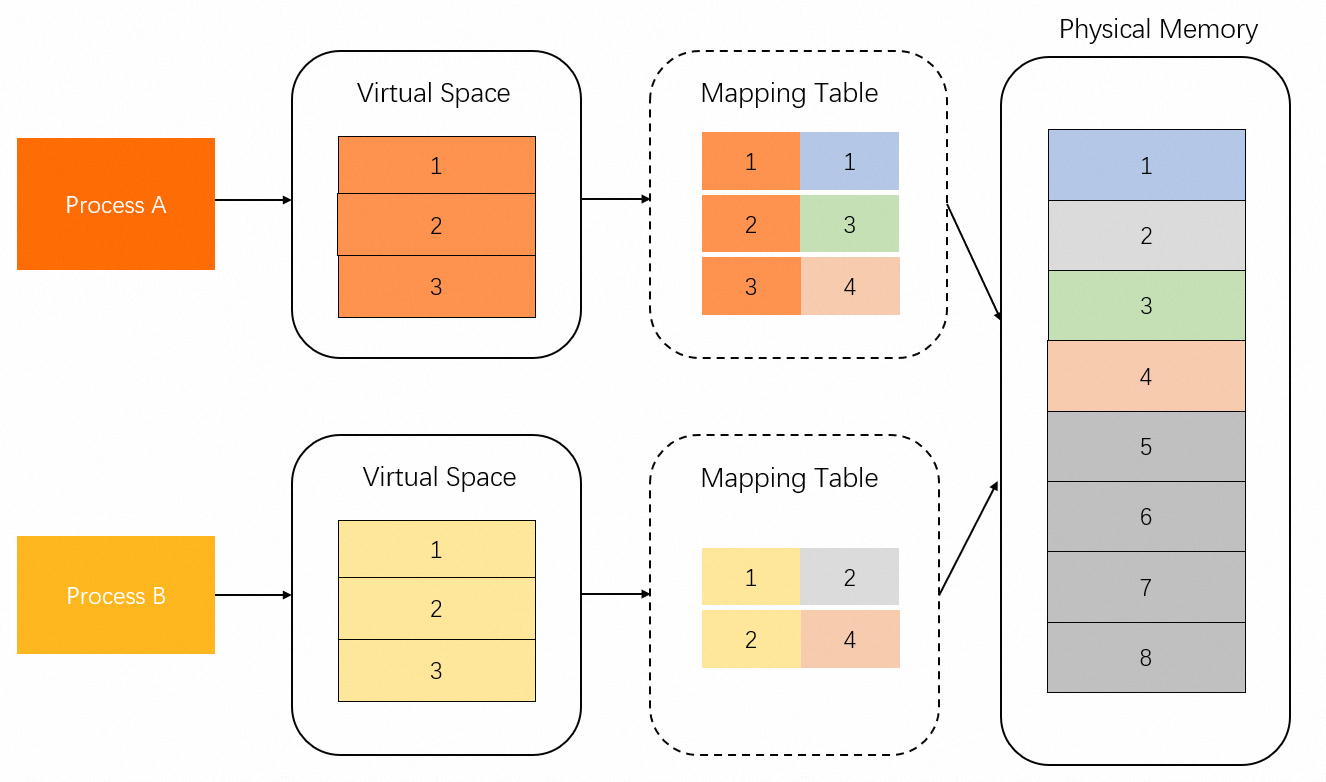

Physical memory mapped from virtual addresses is divided into shared memory and exclusive memory. In the following diagram, Memory 1 and 3 are exclusively occupied by Process A, Memory 2 is exclusively occupied by Process B, and Memory 4 is shared by Processes A and B.

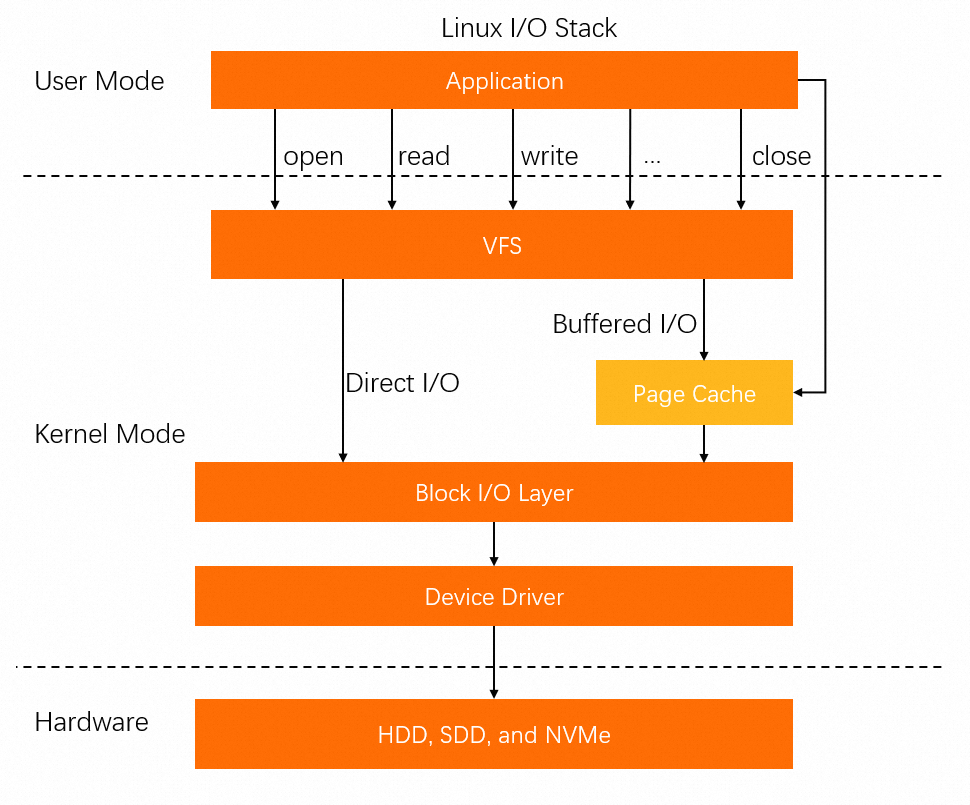

Page cache

A process can access files in two ways: by mapping them directly into its address space with mmap, or by reading and writing them through buffered I/O system calls such as read and write. In either case, the file data is held in the page cache, which occupies additional memory.

Process memory metrics

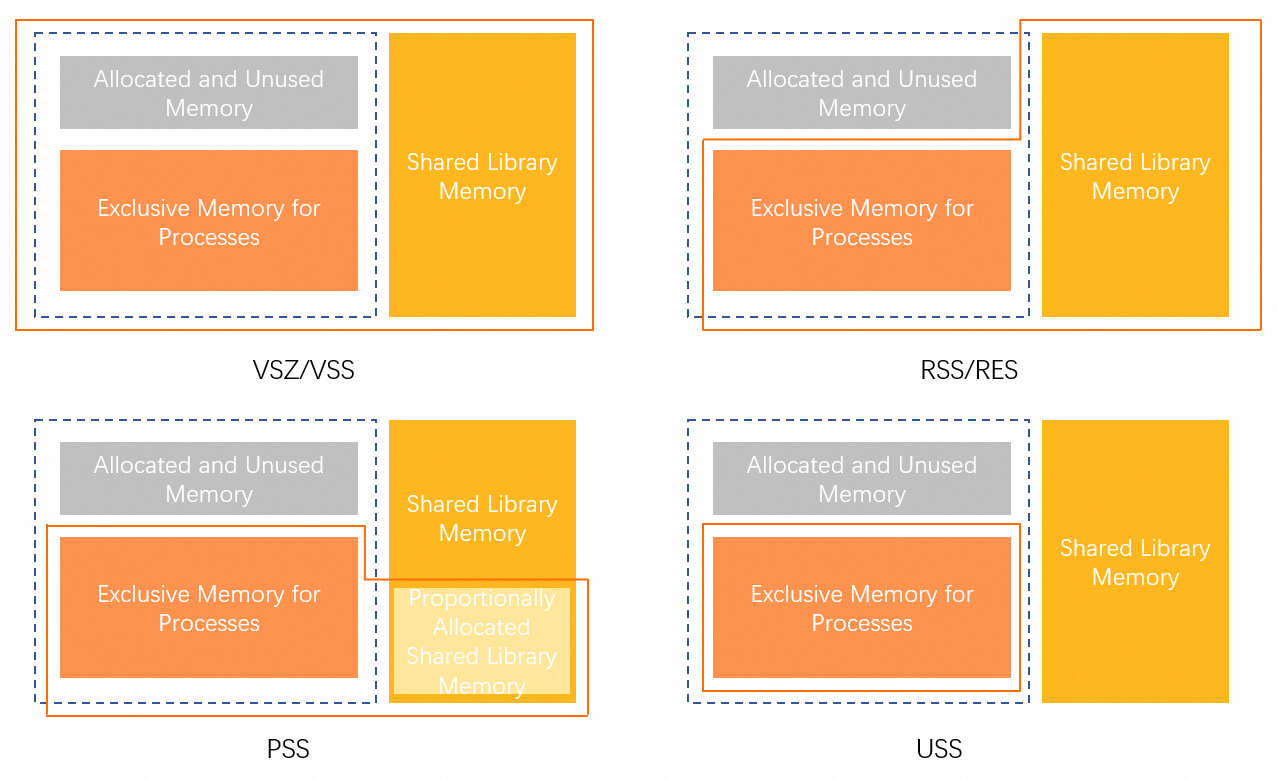

Single-process metrics

Process memory pages fall into two categories:

- Anonymous (anonymous pages): Memory such as the heap and stack that has no corresponding file on disk.
- File-backed (file pages): Memory backed by files on disk, such as executable code, shared libraries, and font files.

The following metrics relate to single-process memory:

| Metric | Description |
|---|---|
| anon_rss (RssAnon) | Resident anonymous memory, usually private to the process |
| file_rss (RssFile) | Resident memory occupied by file-backed pages |
| shmem_rss (RssShmem) | Resident shared memory (shmem), such as System V shared memory, shared anonymous mappings, and tmpfs |
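On Linux 4.5 and later, /proc/[pid]/status reports these three components directly as RssAnon, RssFile, and RssShmem, and VmRSS is their sum. The following Go sketch reads them for a given PID, defaulting to its own process; parseStatus and RSSBreakdown are illustrative names.

```go
package main

import (
	"bufio"
	"fmt"
	"os"
	"strconv"
	"strings"
)

// RSSBreakdown holds the RSS components of one process, in kB.
type RSSBreakdown struct {
	Anon, File, Shmem, VmRSS uint64
}

// parseStatus extracts RssAnon, RssFile, RssShmem, and VmRSS from /proc/[pid]/status text.
func parseStatus(text string) RSSBreakdown {
	var r RSSBreakdown
	targets := map[string]*uint64{
		"RssAnon": &r.Anon, "RssFile": &r.File, "RssShmem": &r.Shmem, "VmRSS": &r.VmRSS,
	}
	sc := bufio.NewScanner(strings.NewReader(text))
	for sc.Scan() {
		name, rest, ok := strings.Cut(sc.Text(), ":")
		dst, wanted := targets[name]
		if !ok || !wanted {
			continue
		}
		if f := strings.Fields(rest); len(f) > 0 {
			if v, err := strconv.ParseUint(f[0], 10, 64); err == nil {
				*dst = v
			}
		}
	}
	return r
}

func main() {
	pid := "self"
	if len(os.Args) > 1 {
		pid = os.Args[1]
	}
	data, err := os.ReadFile("/proc/" + pid + "/status")
	if err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	r := parseStatus(string(data))
	fmt.Printf("anon_rss=%d kB file_rss=%d kB shmem_rss=%d kB (sum=%d kB, VmRSS=%d kB)\n",
		r.Anon, r.File, r.Shmem, r.Anon+r.File+r.Shmem, r.VmRSS)
}
```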

The following table describes the commands used to query these metrics:

| Command | Metric | Description | Formula |
|---|---|---|---|
| top | VIRT | Virtual address space | -- |
| top | RES | Physical memory (RSS mapping) | anon_rss + file_rss + shmem_rss |
| top | SHR | Shared memory | file_rss + shmem_rss |
| top | MEM% | Memory usage percentage | RES / MemTotal |
| ps | VSZ | Virtual address space | -- |
| ps | RSS | Physical memory (RSS mapping) | anon_rss + file_rss + shmem_rss |
| ps | MEM% | Memory usage percentage | RSS / MemTotal |
| smem | USS | Exclusive memory | anon_rss |
| smem | PSS | Proportionally allocated memory | anon_rss + file_rss/m + shmem_rss/n |
| smem | RSS | Physical memory (RSS mapping) | anon_rss + file_rss + shmem_rss |

In the PSS formula, m and n are the numbers of processes that share the file-backed pages and the shared memory pages, respectively. The kernel actually computes PSS page by page, so this formula is a simplification.
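To make the formulas in the table concrete, the following Go sketch evaluates them for one hypothetical process. The values are made up, and, as in the table's simplified model, m and n are the numbers of processes sharing the file-backed and shmem pages; the kernel itself computes PSS page by page.

```go
package main

import "fmt"

// ProcMem holds one process's memory components in kB.
type ProcMem struct {
	AnonRSS, FileRSS, ShmemRSS uint64
	FileSharers                uint64 // m: processes sharing the file-backed pages
	ShmemSharers               uint64 // n: processes sharing the shmem pages
}

func (p ProcMem) RSS() uint64 { return p.AnonRSS + p.FileRSS + p.ShmemRSS } // top RES, ps RSS, smem RSS
func (p ProcMem) SHR() uint64 { return p.FileRSS + p.ShmemRSS }             // top SHR
func (p ProcMem) USS() uint64 { return p.AnonRSS }                          // smem USS

// PSS splits shared pages evenly among the sharing processes (simplified model).
func (p ProcMem) PSS() float64 {
	return float64(p.AnonRSS) +
		float64(p.FileRSS)/float64(atLeastOne(p.FileSharers)) +
		float64(p.ShmemRSS)/float64(atLeastOne(p.ShmemSharers))
}

// atLeastOne guards against division by zero when no sharer count is given.
func atLeastOne(v uint64) uint64 {
	if v == 0 {
		return 1
	}
	return v
}

// memPercent is the MEM% column of top and ps: RSS / MemTotal.
func memPercent(rssKB, memTotalKB uint64) float64 {
	return 100 * float64(rssKB) / float64(memTotalKB)
}

func main() {
	// Hypothetical process and a hypothetical MemTotal of 16000 kB.
	p := ProcMem{AnonRSS: 300, FileRSS: 800, ShmemRSS: 100, FileSharers: 4, ShmemSharers: 2}
	fmt.Printf("RSS=%d SHR=%d USS=%d PSS=%.1f MEM%%=%.2f\n",
		p.RSS(), p.SHR(), p.USS(), p.PSS(), memPercent(p.RSS(), 16000))
}
```

It prints RSS=1200 SHR=900 USS=300 PSS=550.0 MEM%=7.50 (values in kB). The gap between RSS and PSS shows why summing RSS across processes overstates total usage when pages are shared.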

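The memory working set size (WSS), the amount of memory that a process actively accesses over a period of time, is a reasonable measure of the memory required to keep the process running. However, WSS cannot be calculated precisely, because Linux page reclaim only approximates which pages are in active use.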
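One common approximation clears a process's page-referenced bits by writing 1 to /proc/[pid]/clear_refs, waits for an interval, and then sums the Referenced values in /proc/[pid]/smaps_rollup (available since Linux 4.14). The following Go sketch follows that approach. The 5-second window is an arbitrary assumption, the program needs permission to write the target's clear_refs, and clearing the bits may influence the kernel's reclaim decisions for that process, so treat the result as an estimate.

```go
package main

import (
	"bufio"
	"fmt"
	"os"
	"strconv"
	"strings"
	"time"
)

// sumReferencedKB adds up every "Referenced:" value (kB) in smaps or smaps_rollup text.
func sumReferencedKB(smaps string) uint64 {
	var total uint64
	sc := bufio.NewScanner(strings.NewReader(smaps))
	for sc.Scan() {
		f := strings.Fields(sc.Text())
		if len(f) >= 2 && f[0] == "Referenced:" {
			if v, err := strconv.ParseUint(f[1], 10, 64); err == nil {
				total += v
			}
		}
	}
	return total
}

func main() {
	if len(os.Args) != 2 {
		fmt.Fprintln(os.Stderr, "usage: wss <pid>")
		os.Exit(2)
	}
	proc := "/proc/" + os.Args[1]
	interval := 5 * time.Second // hypothetical sampling window

	// Writing "1" clears the referenced bits of all pages of the process.
	if err := os.WriteFile(proc+"/clear_refs", []byte("1"), 0o200); err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	time.Sleep(interval)

	data, err := os.ReadFile(proc + "/smaps_rollup")
	if err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	fmt.Printf("approximate WSS over %s: %d kB\n", interval, sumReferencedKB(string(data)))
}
```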

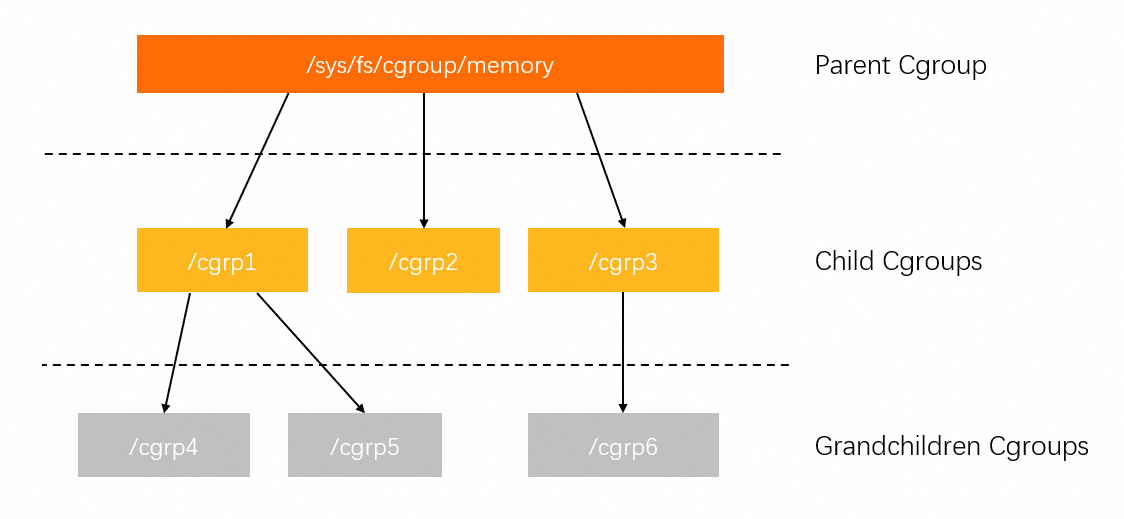

Cgroup metrics

Control groups (cgroups) limit, account for, and isolate the resource usage of Linux processes. For more information, see the Red Hat Enterprise Linux 6 documentation.

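Cgroups are managed hierarchically. Each hierarchy is attached to one or more subsystems and exposes a set of files that contain subsystem metrics. For example, the memory subsystem (memcg) exposes memory metrics through files in each cgroup directory. The file names in this section are from cgroup v1; cgroup v2 uses different names, such as memory.current and memory.max.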

memcg file metrics

A memcg directory contains the following files:

```
cgroup.event_control             # Interface for eventfd-based notifications.
memory.usage_in_bytes            # View the current memory usage.
memory.limit_in_bytes            # Configure or view the memory limit.
memory.failcnt                   # View the number of times memory usage reached the limit.
memory.max_usage_in_bytes        # View the maximum recorded memory usage.
memory.soft_limit_in_bytes       # Configure or view the soft memory limit.
memory.stat                      # View memory statistics of the current cgroup.
memory.use_hierarchy             # Configure or view whether the memory usage of child cgroups is included in the current cgroup.
memory.force_empty               # Reclaim as much memory as possible from the current cgroup.
memory.pressure_level            # Configure memory pressure notifications; used with cgroup.event_control.
memory.swappiness                # Configure or view the swappiness value.
memory.move_charge_at_immigrate  # Configure whether a process's memory charges move with it to another cgroup.
memory.oom_control               # Configure or view OOM control settings.
memory.numa_stat                 # View per-NUMA-node memory statistics.
```

Key metrics to watch

Pay attention to the following metrics:

memory.limit_in_bytes: Configure or view the memory limit of the current cgroup. This metric is similar to the memory limit in Kubernetes and Docker.

memory.usage_in_bytes: View the total memory used by all processes in the current cgroup. The value is approximately equal to rss + cache in the memory.stat file.

memory.stat: View the memory usage of the current cgroup.

memory.stat fields

| Field | Description |
|---|---|
| cache | Page cache memory |
| rss | Sum of the anon_rss memory of all processes in the cgroup |
| mapped_file | Sum of the file_rss and shmem_rss memory of all processes in the cgroup |
| active_anon | Anonymous memory and swap cache on the active least recently used (LRU) list, including tmpfs (shmem) |
| inactive_anon | Anonymous memory and swap cache on the inactive LRU list, including tmpfs (shmem) |
| active_file | File-backed memory on the active LRU list |
| inactive_file | File-backed memory on the inactive LRU list |
| unevictable | Memory that cannot be reclaimed |

All values are in bytes.

Metrics prefixed with total_ cover the current cgroup and all of its child cgroups. For example, total_rss is the rss value of the current cgroup plus the rss values of all child cgroups.
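A minimal Go sketch that reads memory.stat from a cgroup v1 memory directory and compares memory.usage_in_bytes with total_rss + total_cache. The default path /sys/fs/cgroup/memory is an assumption about where the memory controller is mounted; pass another cgroup directory as the first argument.

```go
package main

import (
	"bufio"
	"fmt"
	"os"
	"strconv"
	"strings"
)

// parseMemoryStat converts memory.stat text ("name value" per line) into a map.
func parseMemoryStat(text string) map[string]uint64 {
	stats := make(map[string]uint64)
	sc := bufio.NewScanner(strings.NewReader(text))
	for sc.Scan() {
		f := strings.Fields(sc.Text())
		if len(f) != 2 {
			continue
		}
		if v, err := strconv.ParseUint(f[1], 10, 64); err == nil {
			stats[f[0]] = v
		}
	}
	return stats
}

func main() {
	dir := "/sys/fs/cgroup/memory" // assumption: cgroup v1 memory controller mount point
	if len(os.Args) > 1 {
		dir = os.Args[1]
	}
	statText, err := os.ReadFile(dir + "/memory.stat")
	if err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	usageText, err := os.ReadFile(dir + "/memory.usage_in_bytes")
	if err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	usage, err := strconv.ParseUint(strings.TrimSpace(string(usageText)), 10, 64)
	if err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	s := parseMemoryStat(string(statText))
	fmt.Printf("usage_in_bytes=%d total_rss+total_cache=%d total_mapped_file=%d\n",
		usage, s["total_rss"]+s["total_cache"], s["total_mapped_file"])
}
```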

Single-process vs. cgroup metric comparison

The following table shows how the same metric names have different meanings depending on the context:

| Metric | Single process | cgroup (memcg) |
|---|---|---|
| RSS | anon_rss + file_rss + shmem_rss | anon_rss |
| mapped_file | -- | file_rss + shmem_rss |
| cache | -- | PageCache |

Key differences:

- In a cgroup, rss counts only anon_rss, so it is similar to the USS metric of a single process. Therefore, cgroup mapped_file + cgroup rss equals the sum of the single-process RSS values of the processes in the cgroup.
- For a single process, page cache must be calculated separately, whereas the memcg statistics of a cgroup already include page cache data in the cache field.

Memory statistics in Docker and Kubernetes

Docker and Kubernetes memory statistics are similar to Linux memcg statistics, but they define "memory usage" differently. This difference determines how each system evaluates memory pressure and triggers OOM kills.

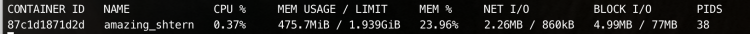

docker stats command

The following figure shows a sample docker stats response:

For more information about the docker stats command, see official documentation.

The docker CLI calculates MEM USAGE with the following function:

```go
func calculateMemUsageUnixNoCache(mem types.MemoryStats) float64 {
	return float64(mem.Usage - mem.Stats["cache"])
}
```

LIMIT is similar to the memory.limit_in_bytes metric of cgroups.

MEM USAGE is similar to memory.usage_in_bytes - memory.stat[total_cache] in cgroups.

kubectl top pod command

The kubectl top command gets the working_set value that cAdvisor reports, through metrics-server (or Heapster in older clusters). This value indicates the memory used by pods, excluding pause containers. The following code shows how metrics-server gets pod memory. For more information, see Kubernetes documentation.

```go
func decodeMemory(target *resource.Quantity, memStats *stats.MemoryStats) error {
	if memStats == nil || memStats.WorkingSetBytes == nil {
		return fmt.Errorf("missing memory usage metric")
	}

	*target = *uint64Quantity(*memStats.WorkingSetBytes, 0)
	target.Format = resource.BinarySI

	return nil
}
```

The following code shows how cAdvisor calculates working_set. For more information, see cAdvisor documentation.

```go
func setMemoryStats(s *cgroups.Stats, ret *info.ContainerStats) {
	ret.Memory.Usage = s.MemoryStats.Usage.Usage
	ret.Memory.MaxUsage = s.MemoryStats.Usage.MaxUsage
	ret.Memory.Failcnt = s.MemoryStats.Usage.Failcnt

	if s.MemoryStats.UseHierarchy {
		ret.Memory.Cache = s.MemoryStats.Stats["total_cache"]
		ret.Memory.RSS = s.MemoryStats.Stats["total_rss"]
		ret.Memory.Swap = s.MemoryStats.Stats["total_swap"]
		ret.Memory.MappedFile = s.MemoryStats.Stats["total_mapped_file"]
	} else {
		ret.Memory.Cache = s.MemoryStats.Stats["cache"]
		ret.Memory.RSS = s.MemoryStats.Stats["rss"]
		ret.Memory.Swap = s.MemoryStats.Stats["swap"]
		ret.Memory.MappedFile = s.MemoryStats.Stats["mapped_file"]
	}
	if v, ok := s.MemoryStats.Stats["pgfault"]; ok {
		ret.Memory.ContainerData.Pgfault = v
		ret.Memory.HierarchicalData.Pgfault = v
	}
	if v, ok := s.MemoryStats.Stats["pgmajfault"]; ok {
		ret.Memory.ContainerData.Pgmajfault = v
		ret.Memory.HierarchicalData.Pgmajfault = v
	}

	workingSet := ret.Memory.Usage
	if v, ok := s.MemoryStats.Stats["total_inactive_file"]; ok {
		if workingSet < v {
			workingSet = 0
		} else {
			workingSet -= v
		}
	}
	ret.Memory.WorkingSet = workingSet
}
```

The memory usage reported by kubectl top pod is calculated as follows:

Memory Usage = Memory WorkingSet = memory.usage_in_bytes - memory.stat[total_inactive_file]

Docker vs. Kubernetes comparison

| Command | Ecosystem | Memory usage calculation |
|---|---|---|
| docker stats | Docker | memory.usage_in_bytes - memory.stat[total_cache] |
| kubectl top pod | Kubernetes | memory.usage_in_bytes - memory.stat[total_inactive_file] |

Expressed in memcg fields, the memory usage reported in each ecosystem maps to the following formulas. Here, rss is the cgroup rss field, which covers only anonymous memory, unlike the process RSS reported by top and ps.

| Ecosystem | Memory usage |
|---|---|
| Memcg | rss + cache (active cache + inactive cache) |
| Docker | rss |
| K8s | rss + active cache |
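The following Go sketch applies the three formulas to the same hypothetical cgroup, to show how one container can report very different numbers. The values are invented, dockerUsage follows the docker stats formula in the comparison table, and workingSet mirrors the clamped subtraction in the cAdvisor code.

```go
package main

import "fmt"

// CgroupMem holds cgroup v1 memory values in bytes.
type CgroupMem struct {
	UsageInBytes uint64            // memory.usage_in_bytes
	Stat         map[string]uint64 // memory.stat fields
}

// sub returns a-b, or 0 if b > a, like the guard in the cAdvisor code.
func sub(a, b uint64) uint64 {
	if b > a {
		return 0
	}
	return a - b
}

// dockerUsage: memory.usage_in_bytes - memory.stat[total_cache].
func dockerUsage(c CgroupMem) uint64 { return sub(c.UsageInBytes, c.Stat["total_cache"]) }

// workingSet: memory.usage_in_bytes - memory.stat[total_inactive_file] (kubectl top pod).
func workingSet(c CgroupMem) uint64 { return sub(c.UsageInBytes, c.Stat["total_inactive_file"]) }

func main() {
	const mib = 1 << 20
	// Hypothetical container: 300 MiB anonymous memory plus 600 MiB page cache.
	c := CgroupMem{
		UsageInBytes: 900 * mib,
		Stat: map[string]uint64{
			"total_rss":           300 * mib,
			"total_cache":         600 * mib,
			"total_active_file":   250 * mib,
			"total_inactive_file": 350 * mib,
		},
	}
	fmt.Printf("memcg usage:  %d MiB\n", c.UsageInBytes/mib)
	fmt.Printf("docker stats: %d MiB\n", dockerUsage(c)/mib)
	fmt.Printf("kubectl top:  %d MiB\n", workingSet(c)/mib)
}
```

With these sample values it reports 900 MiB for memcg, 300 MiB for docker stats (rss only), and 550 MiB for kubectl top (rss plus 250 MiB of active file cache), matching the breakdown in the table.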

Java memory metrics

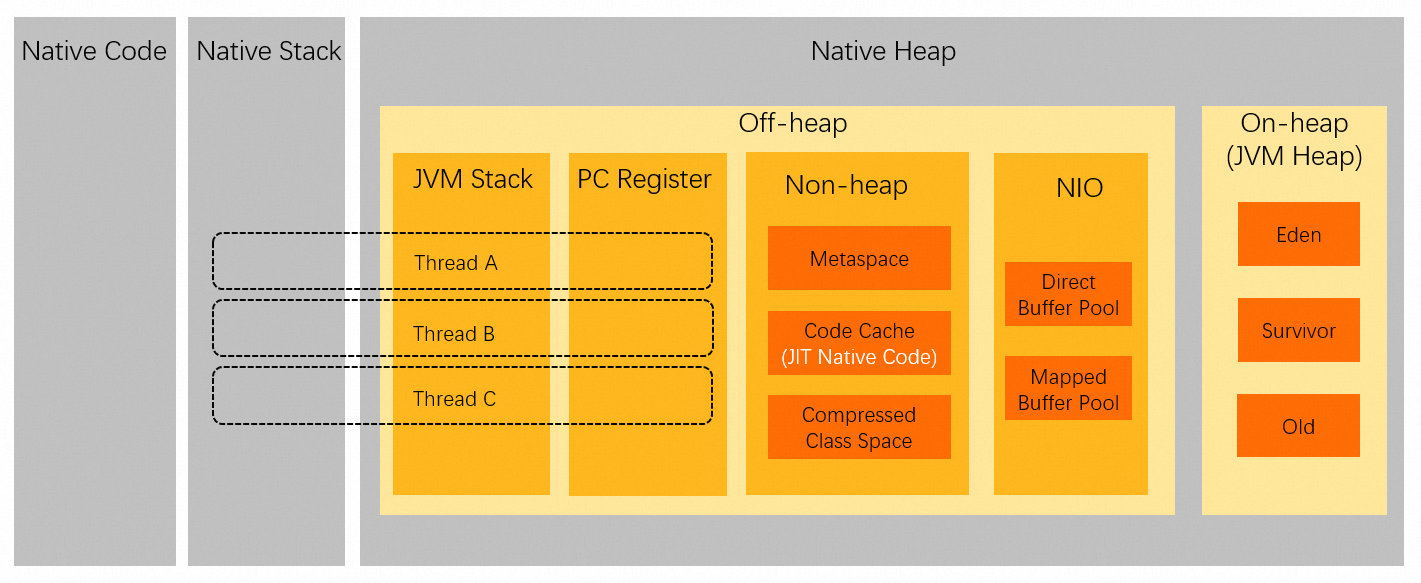

Virtual address spaces of Java processes

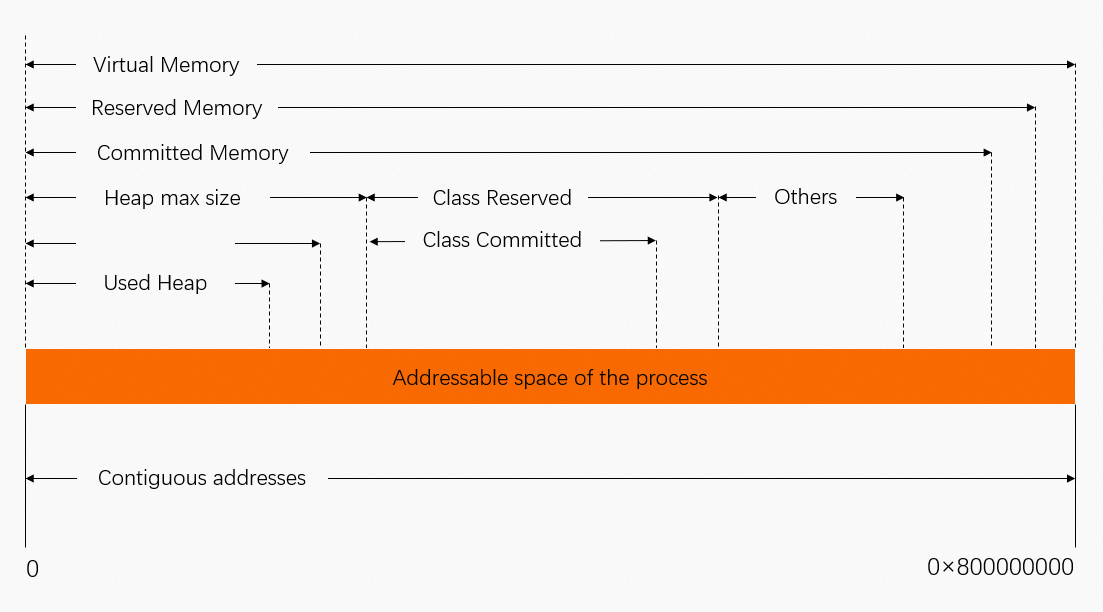

The following diagram shows how data is stored in the virtual address spaces of Java processes:

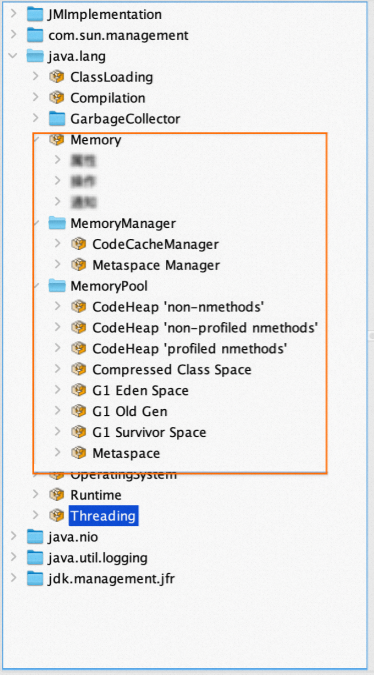

JMX memory metrics

Get memory metrics of Java processes through exposed Java Management Extensions (JMX) data. For example, use JConsole to view memory metrics.

Memory data is exposed through MBeans such as java.lang:type=Memory and java.lang:type=MemoryPool.

Exposed JMX metrics do not contain all memory metrics of JVM processes. For example, thread memory is not included. As a result, the sum of exposed JMX memory usage data does not equal the RSS metric value of JVM processes.

JMX MemoryUsage

JMX exposes MemoryUsage through MemoryPool MBeans. For more information, see Oracle documentation.

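A MemoryUsage object reports init, used, committed, and max values in bytes. The used value indicates the memory currently occupied in the pool, and the committed value is the memory guaranteed to be available to the JVM.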

Native Memory Tracking (NMT)

Java HotSpot VM provides Native Memory Tracking (NMT) for internal memory tracking. For more information, see Oracle documentation.

NMT adds performance overhead (Oracle documentation cites about 5% to 10%), so it is generally not suitable for production environments.

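NMT is disabled by default. Enable it when starting the JVM with -XX:NativeMemoryTracking=summary or -XX:NativeMemoryTracking=detail, and then use jcmd to get a detailed memory breakdown by area. In the following example, 7 is the PID of the Java process: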

```
jcmd 7 VM.native_memory

Native Memory Tracking:

Total: reserved=5948141KB, committed=4674781KB
- Java Heap (reserved=4194304KB, committed=4194304KB)
    (mmap: reserved=4194304KB, committed=4194304KB)
- Class (reserved=1139893KB, committed=104885KB)
    (classes #21183)
    ( instance classes #20113, array classes #1070)
    (malloc=5301KB #81169)
    (mmap: reserved=1134592KB, committed=99584KB)
    ( Metadata: )
    ( reserved=86016KB, committed=84992KB)
    ( used=80663KB)
    ( free=4329KB)
    ( waste=0KB =0.00%)
    ( Class space:)
    ( reserved=1048576KB, committed=14592KB)
    ( used=12806KB)
    ( free=1786KB)
    ( waste=0KB =0.00%)
- Thread (reserved=228211KB, committed=36879KB)
    (thread #221)
    (stack: reserved=227148KB, committed=35816KB)
    (malloc=803KB #1327)
    (arena=260KB #443)
- Code (reserved=49597KB, committed=2577KB)
    (malloc=61KB #800)
    (mmap: reserved=49536KB, committed=2516KB)
- GC (reserved=206786KB, committed=206786KB)
    (malloc=18094KB #16888)
    (mmap: reserved=188692KB, committed=188692KB)
- Compiler (reserved=1KB, committed=1KB)
    (malloc=1KB #20)
- Internal (reserved=45418KB, committed=45418KB)
    (malloc=45386KB #30497)
    (mmap: reserved=32KB, committed=32KB)
- Other (reserved=30498KB, committed=30498KB)
    (malloc=30498KB #234)
- Symbol (reserved=19265KB, committed=19265KB)
    (malloc=16796KB #212667)
    (arena=2469KB #1)
- Native Memory Tracking (reserved=5602KB, committed=5602KB)
    (malloc=55KB #747)
    (tracking overhead=5546KB)
- Shared class space (reserved=10836KB, committed=10836KB)
    (mmap: reserved=10836KB, committed=10836KB)
- Arena Chunk (reserved=169KB, committed=169KB)
    (malloc=169KB)
- Tracing (reserved=16642KB, committed=16642KB)
    (malloc=16642KB #2270)
- Logging (reserved=7KB, committed=7KB)
    (malloc=7KB #267)
- Arguments (reserved=19KB, committed=19KB)
    (malloc=19KB #514)
- Module (reserved=463KB, committed=463KB)
    (malloc=463KB #3527)
- Synchronizer (reserved=423KB, committed=423KB)
    (malloc=423KB #3525)
- Safepoint (reserved=8KB, committed=8KB)
    (mmap: reserved=8KB, committed=8KB)
```

The JVM divides memory into areas with different purposes, such as Java Heap, Class, Thread, and GC, plus additional memory blocks. Exposed JMX data does not include thread memory usage, yet Java programs often run hundreds or thousands of threads whose stacks can consume a significant amount of memory. In the sample above, 221 threads have 35816KB of committed stack memory.
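NMT summaries are easier to compare over time when parsed. The following Go sketch reads jcmd VM.native_memory summary output from standard input and extracts the total and per-area committed values. The regular expressions assume the KB-unit line format shown in the sample output; other JDK versions or scale options may format lines differently, and parseNMT is an illustrative name.

```go
package main

import (
	"bufio"
	"fmt"
	"io"
	"os"
	"regexp"
	"sort"
	"strconv"
	"strings"
)

// Assumed line formats (KB units), as in the sample NMT output.
var (
	totalRe    = regexp.MustCompile(`^Total: reserved=(\d+)KB, committed=(\d+)KB`)
	categoryRe = regexp.MustCompile(`^\s*-\s*(.+?)\s*\(reserved=(\d+)KB, committed=(\d+)KB\)`)
)

// NMTSummary holds committed sizes in KB.
type NMTSummary struct {
	TotalCommittedKB uint64
	CommittedKB      map[string]uint64 // per area, for example "Java Heap" or "Thread"
}

// parseNMT extracts the total and per-area committed values from NMT summary text.
func parseNMT(text string) NMTSummary {
	s := NMTSummary{CommittedKB: make(map[string]uint64)}
	sc := bufio.NewScanner(strings.NewReader(text))
	for sc.Scan() {
		line := sc.Text()
		if m := totalRe.FindStringSubmatch(line); m != nil {
			s.TotalCommittedKB, _ = strconv.ParseUint(m[2], 10, 64)
		} else if m := categoryRe.FindStringSubmatch(line); m != nil {
			v, _ := strconv.ParseUint(m[3], 10, 64)
			s.CommittedKB[m[1]] = v
		}
	}
	return s
}

func main() {
	data, err := io.ReadAll(os.Stdin)
	if err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	s := parseNMT(string(data))

	// Sort areas by committed size, largest first.
	names := make([]string, 0, len(s.CommittedKB))
	for name := range s.CommittedKB {
		names = append(names, name)
	}
	sort.Slice(names, func(i, j int) bool {
		return s.CommittedKB[names[i]] > s.CommittedKB[names[j]]
	})

	fmt.Printf("Total committed: %d KB\n", s.TotalCommittedKB)
	for _, name := range names {
		fmt.Printf("  %-26s %10d KB\n", name, s.CommittedKB[name])
	}
}
```

For example, pipe jcmd 7 VM.native_memory summary into the compiled program (the binary name is up to you).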

For more information about the memory types of HotSpot VM, see official documentation.

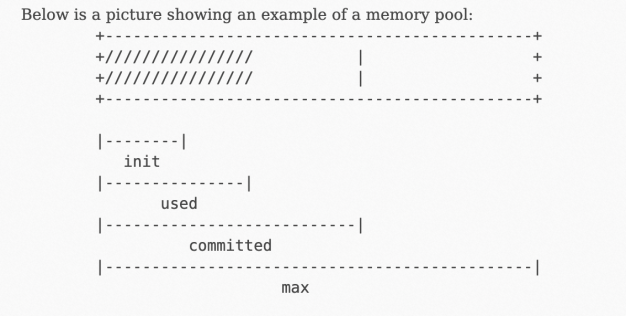

Reserved vs. committed

NMT statistics report reserved and committed metrics. However, neither reserved nor committed maps directly to used physical memory.

The following diagram shows the mapping relationships among the reserved and committed virtual address metrics and physical addresses. The committed value is always greater than or equal to the used value. The used metric is similar to the RSS metric of JVM processes.

What to watch for

JMX exposes some memory pools that can be tracked within JVM. However, the sum of these memory pools cannot be mapped to the RSS metric of JVM processes.

NMT exposes the internal memory usage details of JVM, but reports the committed metric rather than the used metric. The total committed value may be slightly greater than the RSS value.

NMT cannot track memory allocated outside the JVM. For example, if a Java program allocates native memory directly with malloc, such as through JNI libraries, the RSS value will be greater than the memory usage reported by NMT.

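If the NMT committed value and the RSS value differ significantly, a memory leak may have occurred. Investigate further with the following approaches:

- Use NMT baseline and diff to troubleshoot memory issues within JVM areas: record a baseline with jcmd <pid> VM.native_memory baseline, and later compare against it with jcmd <pid> VM.native_memory summary.diff.
- Use NMT together with pmap (for example, pmap -x <pid>) to troubleshoot memory issues outside the JVM.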
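As a rough first check before the deeper investigation, the following Go sketch compares a process's VmRSS with the NMT total committed value that you pass in. The 1.2 factor is an arbitrary, hypothetical threshold, not a documented rule, and the program name rsscheck is illustrative; tune the factor for your workloads.

```go
package main

import (
	"fmt"
	"os"
	"strconv"
	"strings"
)

// vmRSSKB reads VmRSS (in kB) from /proc/<pid>/status.
func vmRSSKB(pid string) (uint64, error) {
	data, err := os.ReadFile("/proc/" + pid + "/status")
	if err != nil {
		return 0, err
	}
	for _, line := range strings.Split(string(data), "\n") {
		if f := strings.Fields(line); len(f) >= 2 && f[0] == "VmRSS:" {
			return strconv.ParseUint(f[1], 10, 64)
		}
	}
	return 0, fmt.Errorf("VmRSS not found for pid %s", pid)
}

// suspectNativeLeak reports whether RSS exceeds NMT committed memory by more than factor.
// RSS slightly below committed is expected, because committed >= used.
func suspectNativeLeak(rssKB, committedKB uint64, factor float64) bool {
	return float64(rssKB) > factor*float64(committedKB)
}

func main() {
	if len(os.Args) != 3 {
		fmt.Fprintln(os.Stderr, "usage: rsscheck <pid> <nmt-total-committed-kB>")
		os.Exit(2)
	}
	committed, err := strconv.ParseUint(os.Args[2], 10, 64)
	if err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(2)
	}
	rss, err := vmRSSKB(os.Args[1])
	if err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
	const factor = 1.2 // hypothetical threshold
	fmt.Printf("VmRSS=%d kB, NMT committed=%d kB, ratio=%.2f\n",
		rss, committed, float64(rss)/float64(committed))
	if suspectNativeLeak(rss, committed, factor) {
		fmt.Println("RSS is well above NMT committed: check native allocations outside the JVM (NMT + pmap)")
	}
}
```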