Pre-install node software packages into a custom image so new ECS instances join your registered cluster's node pool without manual setup—reducing the time for nodes to reach the Ready state.

This guide uses CentOS 7.9 and a Kubernetes 1.28.3 cluster whose node components are installed from binary files. If you already have a custom image, skip to Step 3: Apply the custom image to a node pool.

Prerequisites

Before you begin, make sure you have:

- A registered cluster with a self-managed Kubernetes cluster connected. See Create a registered cluster.

- The self-managed cluster's network connected to the virtual private cloud (VPC) of the registered cluster. See Scenario-based networking for VPC connections.

- Object Storage Service (OSS) activated with a bucket created. See Activate OSS and Create a bucket.

- A kubectl client connected to the registered cluster. See Obtain the kubeconfig file of a cluster and use kubectl to connect to the cluster.

Limitations

- The node created in Step 1 starts in a Failed state. This is expected: the node has no software packages until you configure it manually in Step 2.

- SSH access to the node is required for the manual configuration in Step 2.

- When switching from the default image to a custom image, you must delete residual kubelet certificates from the image before the node can join the cluster. This is handled in Step 4.

Overview

The workflow has five steps:

- Create a cloud node pool: add one node to get a raw ECS instance to configure.

- Configure the node and export a custom image: install containerd, kubelet, and kube-proxy, then create a custom ECS image.

- Apply the custom image to a node pool: update the node pool to use the custom image.

- Update the initialization script: rewrite the script to inject Alibaba Cloud node parameters dynamically.

- Scale out the node pool: add nodes and verify the elastic node pool works.

Step 1: Create a cloud node pool and add nodes to it

This step adds one uninitialized node to the registered cluster. The node serves as the template for the custom image you build in Step 2.

-

In your OSS bucket, create a file named

join-ecs-node.shwith the following content, then upload it. This script logs the Alibaba Cloud node parameters injected at startup so you can verify the values before building the image.echo "The node providerid is $ALIBABA_CLOUD_PROVIDER_ID" echo "The node name is $ALIBABA_CLOUD_NODE_NAME" echo "The node labels are $ALIBABA_CLOUD_LABELS" echo "The node taints are $ALIBABA_CLOUD_TAINTS" -

Get the URL of

join-ecs-node.sh(a signed URL is acceptable) and update the custom configuration script reference in the cluster.-

Edit the

ack-agent-configConfigMap:kubectl edit cm ack-agent-config -n kube-system -

Set

addNodeScriptPathto the URL of your script:apiVersion: v1 data: addNodeScriptPath: https://kubelet-****.oss-cn-hangzhou-internal.aliyuncs.com/join-ecs-nodes.sh kind: ConfigMap metadata: name: ack-agent-config namespace: kube-system

-

-

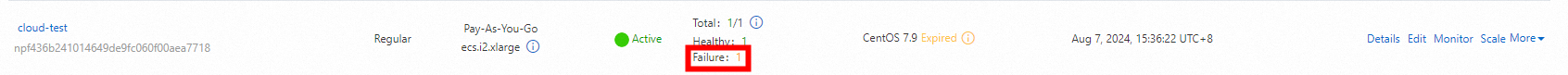

Create a cloud node pool named

cloud-testwith Expected Nodes set to1. See Create and scale out a node pool.ImportantThe new node appears in a Failed state. This is expected—the node has not been initialized with the required software packages yet. Make sure you can log in to the node via SSH before continuing.
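
If you prefer not to edit the ConfigMap interactively, the same field can probably be set with a merge patch. The sketch below only builds the patch payload; the function name build_script_path_patch and the SCRIPT_URL variable are illustrative, and the helper assumes the URL contains no double quotes or backslashes.

```shell
#!/bin/sh
# Hypothetical helper: build a JSON merge patch that sets addNodeScriptPath.
# Assumption: the URL contains no double quotes or backslashes, so no JSON
# escaping is needed.
build_script_path_patch() {
  printf '{"data":{"addNodeScriptPath":"%s"}}' "$1"
}

# Example (not executed here): apply the patch to the ConfigMap from this step.
# SCRIPT_URL is a placeholder for the URL of your own join-ecs-node.sh.
#   kubectl patch configmap ack-agent-config -n kube-system \
#     --type merge -p "$(build_script_path_patch "$SCRIPT_URL")"
```

A merge patch touches only the addNodeScriptPath key, so other keys in ack-agent-config stay as they are.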

Step 2: Configure the node and export a custom image

Log in to the Failed node and install all required components. These components are baked into the custom image so future nodes start pre-configured.

2.1 Verify node parameters

- Log in to the node via SSH and check the initialization log:

      cat /var/log/acs/init.log

  Expected output:

      The node providerid is cn-zhangjiakou.i-xxxxx
      The node name is cn-zhangjiakou.192.168.66.xx
      The node labels are alibabacloud.com/nodepool-id=npf9fbxxxxxx,ack.aliyun.com=c22b1a2e122ff4fde85117de4xxxxxx,alibabacloud.com/instance-id=i-8vb7m7nt3dxxxxxxx,alibabacloud.com/external=true
      The node taints are

  Record the values of ALIBABA_CLOUD_PROVIDER_ID, ALIBABA_CLOUD_NODE_NAME, ALIBABA_CLOUD_LABELS, and ALIBABA_CLOUD_TAINTS. You will need them in the kubelet startup parameters in section 2.4.
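
To avoid copying the four values by hand, the log lines can be turned back into KEY=VALUE pairs. This is a minimal sketch that assumes the log contains exactly the echo lines from join-ecs-node.sh; parse_init_log is an illustrative name, not part of any Alibaba Cloud tooling.

```shell
#!/bin/sh
# Hypothetical helper: read the init log on stdin and print KEY=VALUE pairs.
# Lines that do not match one of the four echo formats are ignored.
parse_init_log() {
  sed -n \
    -e 's/^The node providerid is \(.*\)$/ALIBABA_CLOUD_PROVIDER_ID=\1/p' \
    -e 's/^The node name is \(.*\)$/ALIBABA_CLOUD_NODE_NAME=\1/p' \
    -e 's/^The node labels are \(.*\)$/ALIBABA_CLOUD_LABELS=\1/p' \
    -e 's/^The node taints are *\(.*\)$/ALIBABA_CLOUD_TAINTS=\1/p'
}

# Example (not executed here):
#   parse_init_log < /var/log/acs/init.log
```

An empty taints value is kept as an empty assignment, which matches the sample output above where no taints are set.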

2.2 Configure the base environment

Run the following command to set up the base environment. It performs the following actions:

- Installs required system packages

- Disables firewall, SELinux, and swap (required by Kubernetes)

- Configures network management and time synchronization

- Sets system file descriptor limits

    # Install tool packages.
    yum update -y && yum -y install wget psmisc vim net-tools nfs-utils telnet yum-utils device-mapper-persistent-data lvm2 git tar curl

    # Disable the firewall.
    systemctl disable --now firewalld

    # Disable SELinux.
    setenforce 0
    sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/selinux/config

    # Disable swap partition.
    sed -ri 's/.*swap.*/#&/' /etc/fstab
    swapoff -a && sysctl -w vm.swappiness=0

    # Network configuration.
    systemctl disable --now NetworkManager
    systemctl start network && systemctl enable network

    # Time synchronization.
    ln -svf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime
    yum install ntpdate -y
    ntpdate ntp.aliyun.com

    # Configure ulimit. The soft nofile limit must not exceed the hard limit.
    ulimit -SHn 65535
    cat >> /etc/security/limits.conf <<EOF
    * soft nofile 131072
    * hard nofile 131072
    * soft nproc 655350
    * hard nproc 655350
    * soft memlock unlimited
    * hard memlock unlimited
    EOF

After completing the environment configuration, upgrade the kernel to version 4.18 or later and install ipvsadm.
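
The stock CentOS 7.9 kernel is a 3.10 build, so the upgrade is normally required. One way to confirm the result afterwards is a version comparison like the sketch below. It assumes GNU sort with the -V option is available; version_at_least is an illustrative helper name.

```shell
#!/bin/sh
# Returns 0 when version $2 is at least version $1.
# Assumption: sort supports -V (version sort), as GNU coreutils does.
version_at_least() {
  required="$1"
  actual="$2"
  lowest="$(printf '%s\n%s\n' "$required" "$actual" | sort -V | head -n 1)"
  [ "$lowest" = "$required" ]
}

# Example (not executed here): check the running kernel and ipvsadm.
#   version_at_least 4.18 "$(uname -r)" || echo "kernel is older than 4.18"
#   command -v ipvsadm >/dev/null || echo "ipvsadm is not installed"
```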

2.3 Install containerd

All commands in this section configure containerd as the container runtime, including kernel modules and CNI networking.

- Download the CNI plugins and containerd packages:

      wget https://github.com/containernetworking/plugins/releases/download/v1.3.0/cni-plugins-linux-amd64-v1.3.0.tgz
      mkdir -p /etc/cni/net.d /opt/cni/bin
      # Extract the CNI binary package.
      tar xf cni-plugins-linux-amd64-v*.tgz -C /opt/cni/bin/
      # Download the cri-containerd-cni bundle, which is the archive that the tar command below extracts.
      wget https://github.com/containerd/containerd/releases/download/v1.7.8/cri-containerd-cni-1.7.8-linux-amd64.tar.gz
      tar -xzf cri-containerd-cni-*-linux-amd64.tar.gz -C /

- Create the containerd systemd service file:

      cat > /etc/systemd/system/containerd.service <<EOF
      [Unit]
      Description=containerd container runtime
      Documentation=https://containerd.io
      After=network.target local-fs.target

      [Service]
      ExecStartPre=-/sbin/modprobe overlay
      ExecStart=/usr/local/bin/containerd
      Type=notify
      Delegate=yes
      KillMode=process
      Restart=always
      RestartSec=5
      LimitNPROC=infinity
      LimitCORE=infinity
      LimitNOFILE=infinity
      TasksMax=infinity
      OOMScoreAdjust=-999

      [Install]
      WantedBy=multi-user.target
      EOF

- Configure the kernel modules required by containerd:

      cat <<EOF | sudo tee /etc/modules-load.d/containerd.conf
      overlay
      br_netfilter
      EOF
      systemctl restart systemd-modules-load.service

- Configure the kernel parameters required by containerd:

      cat <<EOF | sudo tee /etc/sysctl.d/99-kubernetes-cri.conf
      net.bridge.bridge-nf-call-iptables = 1
      net.ipv4.ip_forward = 1
      net.bridge.bridge-nf-call-ip6tables = 1
      EOF
      # Load the kernel parameters.
      sysctl --system

- Generate and modify the containerd configuration file:

      mkdir -p /etc/containerd
      containerd config default | tee /etc/containerd/config.toml
      # Modify the containerd configuration file.
      sed -i "s#SystemdCgroup\ \=\ false#SystemdCgroup\ \=\ true#g" /etc/containerd/config.toml
      cat /etc/containerd/config.toml | grep SystemdCgroup
      sed -i "s#registry.k8s.io#m.daocloud.io/registry.k8s.io#g" /etc/containerd/config.toml
      cat /etc/containerd/config.toml | grep sandbox_image
      sed -i "s#config_path\ \=\ \"\"#config_path\ \=\ \"/etc/containerd/certs.d\"#g" /etc/containerd/config.toml
      cat /etc/containerd/config.toml | grep certs.d
      # Configure the registry mirror (accelerator).
      mkdir /etc/containerd/certs.d/docker.io -pv
      cat > /etc/containerd/certs.d/docker.io/hosts.toml << EOF
      server = "https://docker.io"
      [host."https://hub-mirror.c.163.com"]
        capabilities = ["pull", "resolve"]
      EOF

- Enable and start containerd:

      # Reload systemd unit files after adding the service file.
      systemctl daemon-reload
      systemctl enable --now containerd.service
      systemctl start containerd.service
      systemctl status containerd.service

- Configure crictl:

      wget https://mirrors.chenby.cn/https://github.com/kubernetes-sigs/cri-tools/releases/download/v1.28.0/crictl-v1.28.0-linux-amd64.tar.gz
      tar xf crictl-v*-linux-amd64.tar.gz -C /usr/bin/
      # Generate the configuration file.
      cat > /etc/crictl.yaml <<EOF
      runtime-endpoint: unix:///run/containerd/containerd.sock
      image-endpoint: unix:///run/containerd/containerd.sock
      timeout: 10
      debug: false
      EOF
      # Test.
      systemctl restart containerd
      crictl info
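
The three sed edits to config.toml fail silently if the default file does not contain the expected text, so a check after the fact can be useful. The following is a sketch only: check_containerd_config is an illustrative name, and the patterns assume the settings appear one per line in the form produced by the commands above.

```shell
#!/bin/sh
# Prints one line for each expected setting that is not found in the given
# config.toml. Returns 0 only when all three settings are present.
check_containerd_config() {
  config_file="$1"
  missing=0
  grep -Eq '^[[:space:]]*SystemdCgroup[[:space:]]*=[[:space:]]*true' "$config_file" \
    || { echo "SystemdCgroup is not true"; missing=1; }
  grep -Eq '^[[:space:]]*sandbox_image[[:space:]]*=[[:space:]]*"m\.daocloud\.io/' "$config_file" \
    || { echo "sandbox_image does not use the m.daocloud.io mirror"; missing=1; }
  grep -Eq '^[[:space:]]*config_path[[:space:]]*=[[:space:]]*"/etc/containerd/certs\.d"' "$config_file" \
    || { echo "config_path is not /etc/containerd/certs.d"; missing=1; }
  return "$missing"
}

# Example (not executed here):
#   check_containerd_config /etc/containerd/config.toml
```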

2.4 Install kubelet and kube-proxy

All commands in this section install kubelet and kube-proxy using binary files copied from the master node, then configure both components to start on boot.

- Copy the kubelet and kube-proxy binaries from the master node. Run the command on the master node; $NODE is the address of the new node:

      scp /usr/local/bin/kube{let,-proxy} $NODE:/usr/local/bin/

- Create the certificate directory on the new node, then copy the certificates from the master node:

      # On the new node:
      mkdir -p /etc/kubernetes/pki

      # On the master node:
      for FILE in pki/ca.pem pki/ca-key.pem pki/front-proxy-ca.pem bootstrap-kubelet.kubeconfig kube-proxy.kubeconfig; do
        scp /etc/kubernetes/$FILE $NODE:/etc/kubernetes/${FILE}
      done

- Configure the kubelet service. Replace the environment variable values with the node pool parameters you recorded in section 2.1.

      mkdir -p /var/lib/kubelet /var/log/kubernetes /etc/systemd/system/kubelet.service.d /etc/kubernetes/manifests/
      # Configure the kubelet service.
      cat > /usr/lib/systemd/system/kubelet.service << EOF
      [Unit]
      Description=Kubernetes Kubelet
      Documentation=https://github.com/kubernetes/kubernetes
      After=network-online.target firewalld.service containerd.service
      Wants=network-online.target
      Requires=containerd.service

      [Service]
      ExecStart=/usr/local/bin/kubelet \\
          --node-ip=${ALIBABA_CLOUD_NODE_NAME} \\
          --hostname-override=${ALIBABA_CLOUD_NODE_NAME} \\
          --node-labels=${ALIBABA_CLOUD_LABELS} \\
          --provider-id=${ALIBABA_CLOUD_PROVIDER_ID} \\
          --register-with-taints=${ALIBABA_CLOUD_TAINTS} \\
          --bootstrap-kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig \\
          --kubeconfig=/etc/kubernetes/kubelet.kubeconfig \\
          --config=/etc/kubernetes/kubelet-conf.yml \\
          --container-runtime-endpoint=unix:///run/containerd/containerd.sock

      [Install]
      WantedBy=multi-user.target
      EOF

- Create the kubelet configuration file:

      cat > /etc/kubernetes/kubelet-conf.yml <<EOF
      apiVersion: kubelet.config.k8s.io/v1beta1
      kind: KubeletConfiguration
      address: 0.0.0.0
      port: 10250
      readOnlyPort: 10255
      authentication:
        anonymous:
          enabled: false
        webhook:
          cacheTTL: 2m0s
          enabled: true
        x509:
          clientCAFile: /etc/kubernetes/pki/ca.pem
      authorization:
        mode: Webhook
        webhook:
          cacheAuthorizedTTL: 5m0s
          cacheUnauthorizedTTL: 30s
      cgroupDriver: systemd
      cgroupsPerQOS: true
      clusterDNS:
      - 10.96.0.10
      clusterDomain: cluster.local
      containerLogMaxFiles: 5
      containerLogMaxSize: 10Mi
      contentType: application/vnd.kubernetes.protobuf
      cpuCFSQuota: true
      cpuManagerPolicy: none
      cpuManagerReconcilePeriod: 10s
      enableControllerAttachDetach: true
      enableDebuggingHandlers: true
      enforceNodeAllocatable:
      - pods
      eventBurst: 10
      eventRecordQPS: 5
      evictionHard:
        imagefs.available: 15%
        memory.available: 100Mi
        nodefs.available: 10%
        nodefs.inodesFree: 5%
      evictionPressureTransitionPeriod: 5m0s
      failSwapOn: true
      fileCheckFrequency: 20s
      hairpinMode: promiscuous-bridge
      healthzBindAddress: 127.0.0.1
      healthzPort: 10248
      httpCheckFrequency: 20s
      imageGCHighThresholdPercent: 85
      imageGCLowThresholdPercent: 80
      imageMinimumGCAge: 2m0s
      iptablesDropBit: 15
      iptablesMasqueradeBit: 14
      kubeAPIBurst: 10
      kubeAPIQPS: 5
      makeIPTablesUtilChains: true
      maxOpenFiles: 1000000
      maxPods: 110
      nodeStatusUpdateFrequency: 10s
      oomScoreAdj: -999
      podPidsLimit: -1
      registryBurst: 10
      registryPullQPS: 5
      resolvConf: /etc/resolv.conf
      rotateCertificates: true
      runtimeRequestTimeout: 2m0s
      serializeImagePulls: true
      staticPodPath: /etc/kubernetes/manifests
      streamingConnectionIdleTimeout: 4h0m0s
      syncFrequency: 1m0s
      volumeStatsAggPeriod: 1m0s
      EOF

- Enable and start kubelet:

      # Reload systemd unit files after adding the service file.
      systemctl daemon-reload
      systemctl enable --now kubelet.service
      systemctl start kubelet.service
      systemctl status kubelet.service

- Verify the node joined the cluster:

      kubectl get node

- Copy the kube-proxy kubeconfig from the master node:

      scp /etc/kubernetes/kube-proxy.kubeconfig $NODE:/etc/kubernetes/kube-proxy.kubeconfig

- Create the kube-proxy service file:

      cat > /usr/lib/systemd/system/kube-proxy.service << EOF
      [Unit]
      Description=Kubernetes Kube Proxy
      Documentation=https://github.com/kubernetes/kubernetes
      After=network.target

      [Service]
      ExecStart=/usr/local/bin/kube-proxy \\
        --config=/etc/kubernetes/kube-proxy.yaml \\
        --v=2
      Restart=always
      RestartSec=10s

      [Install]
      WantedBy=multi-user.target
      EOF

- Create the kube-proxy configuration file:

      cat > /etc/kubernetes/kube-proxy.yaml << EOF
      apiVersion: kubeproxy.config.k8s.io/v1alpha1
      bindAddress: 0.0.0.0
      clientConnection:
        acceptContentTypes: ""
        burst: 10
        contentType: application/vnd.kubernetes.protobuf
        kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig
        qps: 5
      clusterCIDR: 172.16.0.0/12,fc00:2222::/112
      configSyncPeriod: 15m0s
      conntrack:
        max: null
        maxPerCore: 32768
        min: 131072
        tcpCloseWaitTimeout: 1h0m0s
        tcpEstablishedTimeout: 24h0m0s
      enableProfiling: false
      healthzBindAddress: 0.0.0.0:10256
      hostnameOverride: ""
      iptables:
        masqueradeAll: false
        masqueradeBit: 14
        minSyncPeriod: 0s
        syncPeriod: 30s
      ipvs:
        masqueradeAll: true
        minSyncPeriod: 5s
        scheduler: "rr"
        syncPeriod: 30s
      kind: KubeProxyConfiguration
      metricsBindAddress: 127.0.0.1:10249
      mode: "ipvs"
      nodePortAddresses: null
      oomScoreAdj: -999
      portRange: ""
      udpIdleTimeout: 250ms
      EOF

- Enable and start kube-proxy:

      # Reload systemd unit files after adding the service file.
      systemctl daemon-reload
      systemctl enable --now kube-proxy.service
      systemctl restart kube-proxy.service
      systemctl status kube-proxy.service
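
If kubelet or kube-proxy fails to start, a reasonable first check is whether every file this section copies or creates is actually present. The sketch below lists the ones that are missing. The function name and the optional root-directory argument are illustrative; the argument exists only so the check can be tried against a scratch directory.

```shell
#!/bin/sh
# Prints each expected file that does not exist.
# $1 is an optional prefix directory (hypothetical, for dry runs); when it is
# omitted, the paths are checked as absolute paths on the node.
list_missing_node_files() {
  root="${1:-}"
  for path in \
    /usr/local/bin/kubelet \
    /usr/local/bin/kube-proxy \
    /etc/kubernetes/pki/ca.pem \
    /etc/kubernetes/pki/ca-key.pem \
    /etc/kubernetes/pki/front-proxy-ca.pem \
    /etc/kubernetes/bootstrap-kubelet.kubeconfig \
    /etc/kubernetes/kube-proxy.kubeconfig \
    /etc/kubernetes/kubelet-conf.yml \
    /etc/kubernetes/kube-proxy.yaml
  do
    [ -e "${root}${path}" ] || echo "$path"
  done
}

# Example (not executed here): run on the node; no output means nothing is missing.
#   list_missing_node_files
```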

2.5 Sync the node pool status and export the image

-

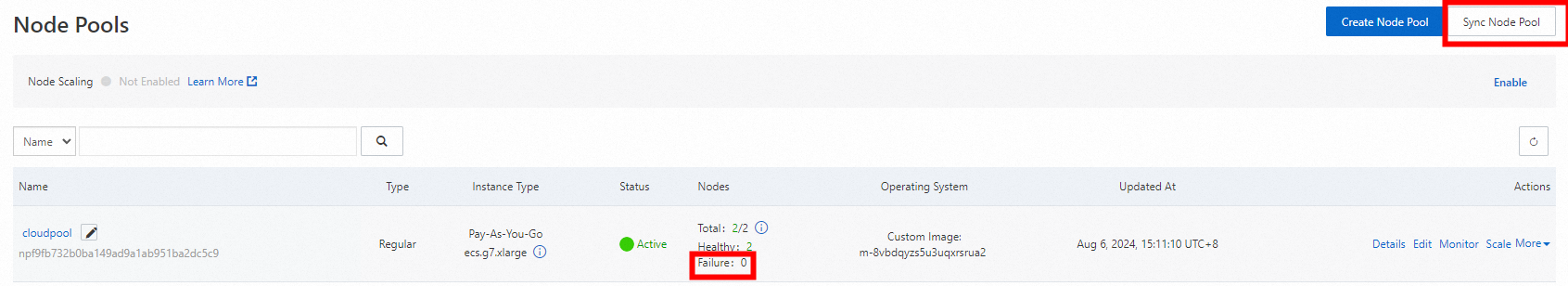

In the ACK console, go to Clusters. Click the cluster name, then go to Nodes > Node Pools.

-

Click Sync Node Pool and wait for the sync to complete. Confirm that no nodes are in the Failed state.

-

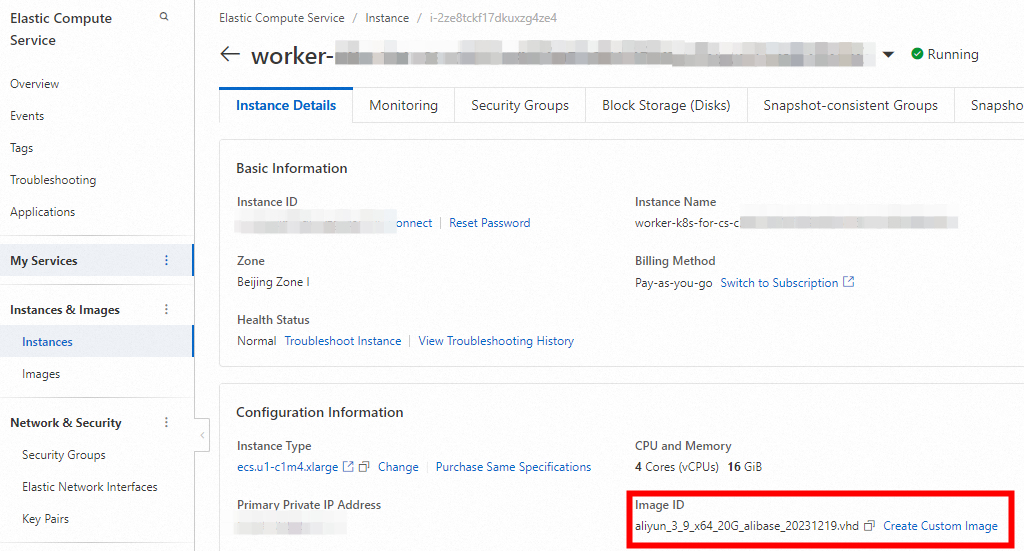

In the ECS console, go to Instances & Images > Instances.

-

Click the Instance ID, go to the Instance Details tab, and click Create Custom Image.

-

Go to Instances & Images > Images. Confirm the custom image appears with Status as Available.

Step 3: Apply the custom image to a node pool

If you already had a custom image and skipped Steps 1 and 2, create a new node pool using the custom image first. See Create and manage a node pool.

-

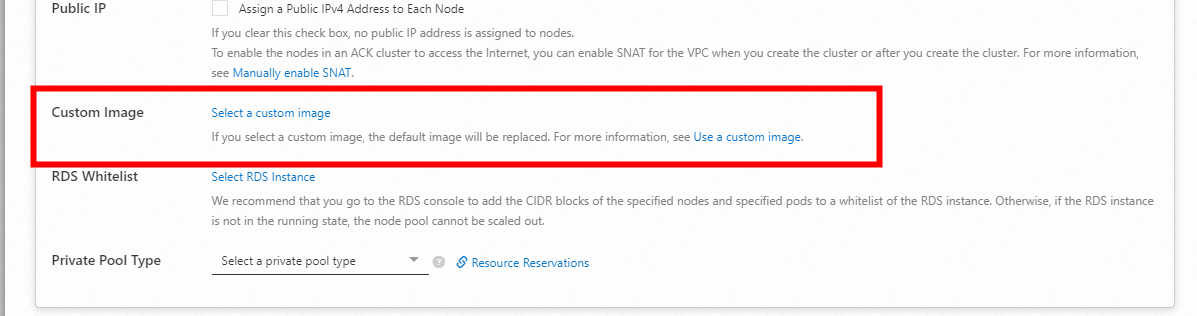

In the ACK console, go to Clusters, click the cluster name, then go to Nodes > Node Pools.

-

Find the node pool, click Edit in the Actions column, expand Advanced Options, and select your custom image next to Custom Image.

-

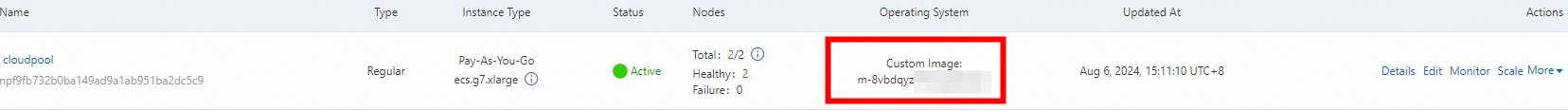

Confirm the Operating System column on the Node Pools page now shows Custom Image.

Step 4: Update the initialization script

The custom image already contains all required software packages. Update the initialization script so that when a new node starts from the custom image, it only needs to receive and apply the Alibaba Cloud node parameters—without reinstalling anything.

- The script must delete residual kubelet certificates from the custom image (see the rm -rf /var/lib/kubelet/pki/* command in the script below). Otherwise, new nodes cannot generate fresh certificates and fail to join the cluster.

- If you have an existing custom node pool, update the addNodeScriptPath URL in ack-agent-config as shown in Step 1.

- Replace the content of join-ecs-node.sh with the following:

      echo "The node providerid is $ALIBABA_CLOUD_PROVIDER_ID"
      echo "The node name is $ALIBABA_CLOUD_NODE_NAME"
      echo "The node labels are $ALIBABA_CLOUD_LABELS"
      echo "The node taints are $ALIBABA_CLOUD_TAINTS"
      systemctl stop kubelet.service

      # Delete the old node certificates baked into the image.
      echo "Delete old kubelet pki"
      rm -rf /var/lib/kubelet/pki/*

      # Configure the kubelet service.
      echo "Add kubelet service config"
      cat > /usr/lib/systemd/system/kubelet.service << EOF
      [Unit]
      Description=Kubernetes Kubelet
      Documentation=https://github.com/kubernetes/kubernetes
      After=network-online.target firewalld.service containerd.service
      Wants=network-online.target
      Requires=containerd.service

      [Service]
      ExecStart=/usr/local/bin/kubelet \\
          --node-ip=${ALIBABA_CLOUD_NODE_NAME} \\
          --hostname-override=${ALIBABA_CLOUD_NODE_NAME} \\
          --node-labels=${ALIBABA_CLOUD_LABELS} \\
          --provider-id=${ALIBABA_CLOUD_PROVIDER_ID} \\
          --register-with-taints=${ALIBABA_CLOUD_TAINTS} \\
          --bootstrap-kubeconfig=/etc/kubernetes/bootstrap-kubelet.kubeconfig \\
          --kubeconfig=/etc/kubernetes/kubelet.kubeconfig \\
          --config=/etc/kubernetes/kubelet-conf.yml \\
          --container-runtime-endpoint=unix:///run/containerd/containerd.sock

      [Install]
      WantedBy=multi-user.target
      EOF
      systemctl daemon-reload

      # Start the kubelet service.
      systemctl start kubelet.service

- Upload the updated join-ecs-node.sh to OSS, replacing the previous version.
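
The dynamic injection works because the heredoc delimiter in the script is unquoted: the shell expands each ${ALIBABA_CLOUD_...} reference when the script runs, and each \\ is written to the unit file as a single backslash line continuation. The shortened sketch below prints the result to stdout instead of writing a unit file; the function name and the two sample values are placeholders for illustration.

```shell
#!/bin/sh
# Render a shortened ExecStart line with the same unquoted heredoc style that
# join-ecs-node.sh uses, but print it to stdout instead of a unit file.
render_kubelet_exec() {
  cat << EOF
ExecStart=/usr/local/bin/kubelet \\
    --hostname-override=${ALIBABA_CLOUD_NODE_NAME} \\
    --provider-id=${ALIBABA_CLOUD_PROVIDER_ID}
EOF
}

# Placeholder values for illustration only; on a real node these variables are
# injected when the initialization script runs.
ALIBABA_CLOUD_NODE_NAME="cn-test.10.0.0.1"
ALIBABA_CLOUD_PROVIDER_ID="cn-test.i-abc"
render_kubelet_exec
```

Quoting the delimiter (<< 'EOF') would disable both behaviors and write the literal ${...} text into the unit file, which is not what this step wants.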

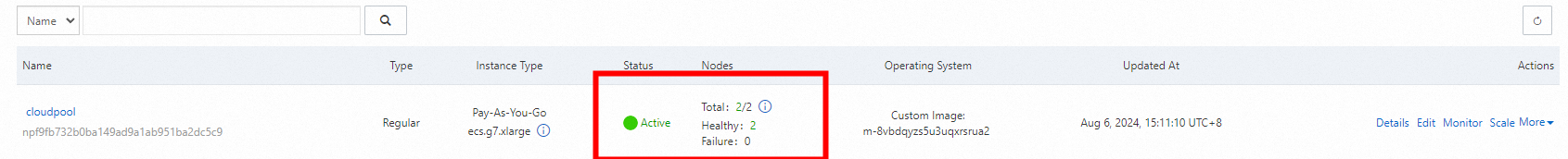

Step 5: Scale out the node pool

- In the ACK console, go to Clusters, click the cluster name, then go to Nodes > Node Pools.

- Find the node pool, click More > Scale in the Actions column, and add a new node.

- Verify that both nodes are in the Ready state:

      kubectl get nodes

  Both nodes in the Ready state confirm that the elastic node pool was built successfully.
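
For a scripted check, the node list can be filtered for anything that is not Ready. This is a minimal sketch that assumes the default column layout of kubectl get nodes (NAME first, STATUS second); list_not_ready_nodes is an illustrative name.

```shell
#!/bin/sh
# Reads `kubectl get nodes --no-headers` output on stdin and prints the names
# of nodes whose STATUS column is not exactly "Ready". A status such as
# "Ready,SchedulingDisabled" is therefore also reported.
list_not_ready_nodes() {
  awk '$2 != "Ready" { print $1 }'
}

# Example (not executed here): empty output means every node is Ready.
#   kubectl get nodes --no-headers | list_not_ready_nodes
```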

What's next

- Configure an auto scaling policy for the node pool. See Configure auto scaling.