This guide walks through an end-to-end deep learning workflow on Container Service for Kubernetes (ACK) using the open-source Fashion-MNIST dataset — from dataset preparation and model development through standalone and distributed training, training acceleration, model evaluation, and inference deployment.

Background

The cloud-native AI component set is a collection of components deployed independently via Helm charts. It supports two roles:

-

Administrators manage users and permissions, allocate cluster resources, configure storage, manage datasets, and monitor resource utilization.

-

Developers submit jobs and use cluster resources. Developers must be created by an administrator and granted permissions before they can use tools such as Arena or Jupyter Notebook.

The following table describes each component and its role in the workflow:

| Component | Role |

|---|---|

| AI Dashboard | Admin control plane — manage datasets and monitor resources |

| AI Developer Console | Developer portal — create notebooks, submit jobs, and manage models |

| Arena | CLI for submitting and monitoring training and inference jobs |

| Fluid | Data caching layer — accelerates dataset reads for training jobs |

| AI job scheduler | GPU topology-aware scheduling — reduces distributed training time |

Prerequisites

Before you begin, make sure the following are in place.

Cluster (completed by an administrator):

-

An ACK managed cluster where each node has at least 300 GB of disk space. See Create an ACK managed cluster.

-

For optimal data acceleration: 4 Elastic Compute Service (ECS) instances, each with 8 V100 GPUs.

-

For optimal topology awareness: 2 ECS instances, each with 2 V100 GPUs.

-

-

All cloud-native AI component set components installed. See Deploy the cloud-native AI suite.

-

AI Dashboard ready for use. See Access AI Dashboard.

-

AI Developer Console ready for use. See Log on to AI Developer Console.

The AI Console (AI Dashboard and AI Developer Console) was rolled out via a whitelist starting January 22, 2025. Existing deployments before this date are unaffected. If you are not whitelisted for a new installation, configure AI Console via the open-source community. See Open-source AI Console.

Dataset and credentials:

-

The Fashion-MNIST dataset downloaded and uploaded to an Object Storage Service (OSS) bucket. See Upload objects.

-

The address, username, and password of the Git repository that stores the training code.

Tooling:

-

A kubectl client connected to the cluster. See Obtain the kubeconfig file and connect kubectl to the cluster.

-

Arena installed. See Configure the Arena client.

Test environment

The cluster used in this guide has the following nodes:

| Host name | IP | Role | GPUs | vCPUs | Memory |

|---|---|---|---|---|---|

| cn-beijing.192.168.0.13 | 192.168.0.13 | Jump server | 1 | 8 | 30580004 KiB |

| cn-beijing.192.168.0.16 | 192.168.0.16 | Worker | 1 | 8 | 30580004 KiB |

| cn-beijing.192.168.0.17 | 192.168.0.17 | Worker | 1 | 8 | 30580004 KiB |

| cn-beijing.192.168.0.240 | 192.168.0.240 | Worker | 1 | 8 | 30580004 KiB |

| cn-beijing.192.168.0.239 | 192.168.0.239 | Worker | 1 | 8 | 30580004 KiB |

Submit Arena commands from a Jupyter Notebook terminal, not from the jump server directly.

What this guide covers

| Step | Task | Role |

|---|---|---|

| Step 1: Create a user and allocate resources | Create a user and allocate resources | Admin |

| Step 2: Create a dataset | Create and accelerate a dataset | Admin |

| Step 3: Develop a model | Develop a model in Jupyter Notebook | Developer |

| Step 4: Train the model | Submit standalone and distributed training jobs | Developer |

| Step 5: Manage the model | Register the trained model | Developer |

| Step 6: Evaluate the model | Evaluate the model | Developer |

| Step 7: Deploy the model as an inference service | Deploy an inference service | Developer |

Step 1: Create a user and allocate resources

Role: Admin

Before developers can submit jobs, the administrator must provision the following:

-

A username and password. See Manage users.

-

Resource quotas. See Manage elastic quota groups.

-

The AI Developer Console endpoint, if developers submit jobs via the console. See Log on to AI Developer Console.

-

The kubeconfig file for cluster access, if developers submit jobs via Arena. See Select a type of cluster credentials.

Step 2: Create a dataset

Role: Admin

Add the Fashion-MNIST dataset

Create a persistent volume (PV) and persistent volume claim (PVC) to mount the OSS bucket that stores the Fashion-MNIST dataset.

-

Create a file named

fashion-mnist.yamlwith the following content. ReplaceAKIDandAKSECRETwith your OSS access credentials.apiVersion: v1 kind: PersistentVolume metadata: name: fashion-demo-pv spec: accessModes: - ReadWriteMany capacity: storage: 10Gi csi: driver: ossplugin.csi.alibabacloud.com volumeAttributes: bucket: fashion-mnist otherOpts: "" url: oss-cn-beijing.aliyuncs.com akId: "AKID" akSecret: "AKSECRET" volumeHandle: fashion-demo-pv persistentVolumeReclaimPolicy: Retain storageClassName: oss volumeMode: Filesystem --- apiVersion: v1 kind: PersistentVolumeClaim metadata: name: fashion-demo-pvc namespace: demo-ns spec: accessModes: - ReadWriteMany resources: requests: storage: 10Gi selector: matchLabels: alicloud-pvname: fashion-demo-pv storageClassName: oss volumeMode: Filesystem volumeName: fashion-demo-pv -

Apply the manifest:

kubectl create -f fashion-mnist.yaml -

Verify that the PV and PVC are in the

Boundstate. Check the PV:kubectl get pv fashion-mnist-jackwgExpected output:

NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS REASON AGE fashion-mnist-jackwg 10Gi RWX Retain Bound ns1/fashion-mnist-jackwg-pvc oss 8hCheck the PVC:

kubectl get pvc fashion-mnist-jackwg-pvc -n ns1Expected output:

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE fashion-mnist-jackwg-pvc Bound fashion-mnist-jackwg 10Gi RWX oss 8hBoth resources should show

Bound.

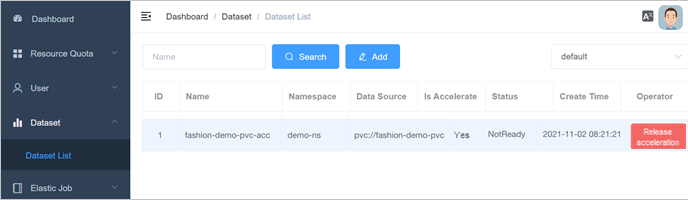

Accelerate the dataset

Accelerate the dataset with Fluid via AI Dashboard so that training jobs read data from a local cache rather than from OSS directly.

-

Access AI Dashboard as an administrator.

-

In the left-side navigation pane, choose Dataset > Dataset List.

-

Find the dataset and click Accelerate in the Operator column.

Step 3: Develop a model

Role: Developer

Use Jupyter Notebook to develop and test the model, then submit training code to a Git repository.

(Optional) Build a custom image

AI Developer Console provides built-in TensorFlow and PyTorch images. To use a custom image instead:

-

Create a

dockerfilewith the following content:FROM tensorflow/tensorflow:1.15.5-gpu USER root RUN pip install jupyter && \ pip install ipywidgets && \ jupyter nbextension enable --py widgetsnbextension && \ pip install jupyterlab && jupyter serverextension enable --py jupyterlab EXPOSE 8888 CMD ["sh", "-c", "jupyter-lab --notebook-dir=/home/jovyan --ip=0.0.0.0 --no-browser --allow-root --port=8888 --NotebookApp.token='' --NotebookApp.password='' --NotebookApp.allow_origin='*' --NotebookApp.base_url=${NB_PREFIX} --ServerApp.authenticate_prometheus=False"]For limits on custom images, see Create and use notebooks.

-

Build the image:

docker build -f dockerfile .Expected output (abbreviated):

Sending build context to Docker daemon 9.216kB Step 1/5 : FROM tensorflow/tensorflow:1.15.5-gpu ---> 73be11373498 ... Successfully built 3692f04626d5 -

Tag and push the image to your container registry:

docker tag ${IMAGE_ID} registry-vpc.cn-beijing.aliyuncs.com/${DOCKER_REPO}/jupyter:fashion-mnist-20210802a docker push registry-vpc.cn-beijing.aliyuncs.com/${DOCKER_REPO}/jupyter:fashion-mnist-20210802a -

Create a Secret to pull the image from the container registry. See Create a Secret based on existing Docker credentials.

kubectl create secret docker-registry regcred \ --docker-server=<your-registry-server> \ --docker-username=<username> \ --docker-password=<password> \ --docker-email=<your-email> -

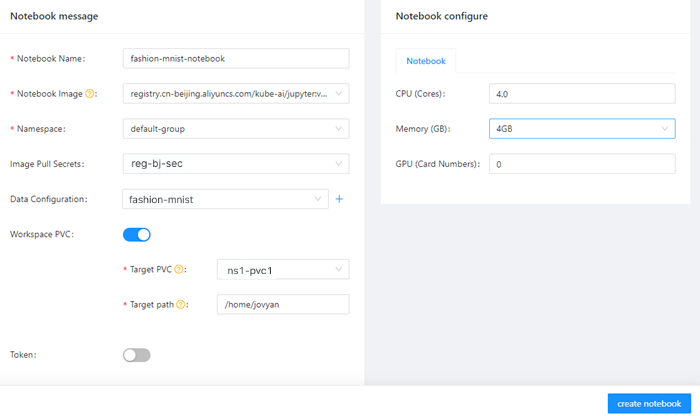

Create a Jupyter Notebook in AI Developer Console using the custom image. See Create and use notebooks.

Develop and test the model

-

In the left-side navigation pane, click Notebook.

-

On the Notebook page, click the notebook in the Running state.

-

Open a CLI launcher and verify the dataset is mounted:

pwd /root/data ls -alhExpected output:

total 30M drwx------ 1 root root 0 Jan 1 1970 . drwx------ 1 root root 4.0K Aug 2 04:15 .. drwxr-xr-x 1 root root 0 Aug 1 14:16 saved_model -rw-r----- 1 root root 4.3M Aug 1 01:53 t10k-images-idx3-ubyte.gz -rw-r----- 1 root root 5.1K Aug 1 01:53 t10k-labels-idx1-ubyte.gz -rw-r----- 1 root root 26M Aug 1 01:54 train-images-idx3-ubyte.gz -rw-r----- 1 root root 29K Aug 1 01:53 train-labels-idx1-ubyte.gz -

Create a notebook cell with the following training code. Set

dataset_pathto the mounted dataset directory andmodel_pathto the output directory.ImportantReplace

dataset_pathandmodel_pathwith the actual paths in your cluster.#!/usr/bin/python # -*- coding: UTF-8 -*- import os import gzip import numpy as np import tensorflow as tf from tensorflow import keras print('TensorFlow version: {}'.format(tf.__version__)) dataset_path = "/root/data/" model_path = "./model/" model_version = "v1" def load_data(): files = [ 'train-labels-idx1-ubyte.gz', 'train-images-idx3-ubyte.gz', 't10k-labels-idx1-ubyte.gz', 't10k-images-idx3-ubyte.gz' ] paths = [] for fname in files: paths.append(os.path.join(dataset_path, fname)) with gzip.open(paths[0], 'rb') as labelpath: y_train = np.frombuffer(labelpath.read(), np.uint8, offset=8) with gzip.open(paths[1], 'rb') as imgpath: x_train = np.frombuffer(imgpath.read(), np.uint8, offset=16).reshape(len(y_train), 28, 28) with gzip.open(paths[2], 'rb') as labelpath: y_test = np.frombuffer(labelpath.read(), np.uint8, offset=8) with gzip.open(paths[3], 'rb') as imgpath: x_test = np.frombuffer(imgpath.read(), np.uint8, offset=16).reshape(len(y_test), 28, 28) return (x_train, y_train),(x_test, y_test) def train(): (train_images, train_labels), (test_images, test_labels) = load_data() # Normalize pixel values to [0.0, 1.0] train_images = train_images / 255.0 test_images = test_images / 255.0 # Reshape for CNN input train_images = train_images.reshape(train_images.shape[0], 28, 28, 1) test_images = test_images.reshape(test_images.shape[0], 28, 28, 1) class_names = ['T-shirt/top', 'Trouser', 'Pullover', 'Dress', 'Coat', 'Sandal', 'Shirt', 'Sneaker', 'Bag', 'Ankle boot'] print('\ntrain_images.shape: {}, of {}'.format(train_images.shape, train_images.dtype)) print('test_images.shape: {}, of {}'.format(test_images.shape, test_images.dtype)) model = keras.Sequential([ keras.layers.Conv2D(input_shape=(28,28,1), filters=8, kernel_size=3, strides=2, activation='relu', name='Conv1'), keras.layers.Flatten(), keras.layers.Dense(10, activation=tf.nn.softmax, name='Softmax') ]) model.summary() epochs = 5 model.compile(optimizer='adam', loss='sparse_categorical_crossentropy', metrics=['accuracy']) logdir = "/training_logs" tensorboard_callback = keras.callbacks.TensorBoard(log_dir=logdir) model.fit(train_images, train_labels, epochs=epochs, callbacks=[tensorboard_callback], ) test_loss, test_acc = model.evaluate(test_images, test_labels) print('\nTest accuracy: {}'.format(test_acc)) export_path = os.path.join(model_path, model_version) print('export_path = {}\n'.format(export_path)) tf.keras.models.save_model( model, export_path, overwrite=True, include_optimizer=True, save_format=None, signatures=None, options=None ) print('\nSaved model success') if __name__ == '__main__': train() -

Click the

icon to run the cell. Expected output (5 epochs, test accuracy ~86.7%):

icon to run the cell. Expected output (5 epochs, test accuracy ~86.7%):TensorFlow version: 1.15.5 train_images.shape: (60000, 28, 28, 1), of float64 test_images.shape: (10000, 28, 28, 1), of float64 Model: "sequential_2" _________________________________________________________________ Layer (type) Output Shape Param # ================================================================= Conv1 (Conv2D) (None, 13, 13, 8) 80 _________________________________________________________________ flatten_2 (Flatten) (None, 1352) 0 _________________________________________________________________ Softmax (Dense) (None, 10) 13530 ================================================================= Total params: 13,610 Trainable params: 13,610 Non-trainable params: 0 _________________________________________________________________ Train on 60000 samples Epoch 1/5 60000/60000 [==============================] - 3s 57us/sample - loss: 0.5452 - acc: 0.8102 Epoch 2/5 60000/60000 [==============================] - 3s 52us/sample - loss: 0.4103 - acc: 0.8555 Epoch 3/5 60000/60000 [==============================] - 3s 55us/sample - loss: 0.3750 - acc: 0.8681 Epoch 4/5 60000/60000 [==============================] - 3s 55us/sample - loss: 0.3524 - acc: 0.8757 Epoch 5/5 60000/60000 [==============================] - 3s 53us/sample - loss: 0.3368 - acc: 0.8798 10000/10000 [==============================] - 0s 37us/sample - loss: 0.3770 - acc: 0.8673 Test accuracy: 0.8672999739646912 export_path = ./model/v1 Saved model success

Push code to a Git repository

-

Install Git:

apt-get update apt-get install git -

Configure Git credentials:

git config --global credential.helper store git pull ${YOUR_GIT_REPO} -

Push the code:

git push origin fashion-testExpected output:

Total 0 (delta 0), reused 0 (delta 0) To codeup.aliyun.com:60b4cf5c66bba1c04b442e49/tensorflow-fashion-mnist-sample.git * [new branch] fashion-test -> fashion-test

Submit a training job via the Arena SDK

Instead of running training inside the notebook, you can use the Arena SDK to submit a TFJob to the cluster.

-

Install the SDK dependency:

!pip install coloredlogs -

Run the following code in a notebook cell. Replace the Git repository URL and credentials with your own values.

-

namespace: The job is submitted to thedemo-nsnamespace. -

with_sync_source: The Git repository URL. -

with_envs: The Git repository username and password.

import os import sys import time from arenasdk.client.client import ArenaClient from arenasdk.enums.types import * from arenasdk.exceptions.arena_exception import * from arenasdk.training.tensorflow_job_builder import * from arenasdk.logger.logger import LoggerBuilder def main(): print("start to test arena-python-sdk") # Submit the job to the demo-ns namespace client = ArenaClient("","demo-ns","info","arena-system") print("create ArenaClient succeed.") print("start to create tfjob") job_name = "arena-sdk-distributed-test" job_type = TrainingJobType.TFTrainingJob try: job = TensorflowJobBuilder().with_name(job_name)\ .witch_workers(1)\ .with_gpus(1)\ .witch_worker_image("tensorflow/tensorflow:1.5.0-devel-gpu")\ .witch_ps_image("tensorflow/tensorflow:1.5.0-devel")\ .witch_ps_count(1)\ .with_datas({"fashion-demo-pvc":"/data"})\ .enable_tensorboard()\ .with_sync_mode("git")\ .with_sync_source("https://codeup.aliyun.com/60b4cf5c66bba1c04b442e49/tensorflow-fashion-mnist-sample.git")\ .with_envs({\ "GIT_SYNC_USERNAME":"USERNAME", \ "GIT_SYNC_PASSWORD":"PASSWORD",\ "TEST_TMPDIR":"/",\ })\ .with_command("python code/tensorflow-fashion-mnist-sample/tf-distributed-mnist.py").build() if client.training().get(job_name, job_type): print("the job {} has been created, to delete it".format(job_name)) client.training().delete(job_name, job_type) time.sleep(3) output = client.training().submit(job) print(output) count = 0 while True: if count > 160: raise Exception("timeout for waiting job to be running") jobInfo = client.training().get(job_name,job_type) if jobInfo.get_status() == TrainingJobStatus.TrainingJobPending: print("job status is PENDING,waiting...") count = count + 1 time.sleep(5) continue print("current status is {} of job {}".format(jobInfo.get_status().value,job_name)) break logger = LoggerBuilder().with_accepter(sys.stdout).with_follow().with_since("5m") print(str(jobInfo)) except ArenaException as e: print(e) main()Key parameters:

-

-

Click the

icon to submit the job. When the job reaches

icon to submit the job. When the job reaches RUNNINGstate, the output includes job details:current status is RUNNING of job arena-sdk-distributed-test { "allocated_gpus": 1, "chief_name": "arena-sdk-distributed-test-worker-0", "duration": "185s", "name": "arena-sdk-distributed-test", "namespace": "demo-ns", "request_gpus": 1, "tensorboard": "http://192.168.5.6:31068", "type": "tfjob" }

Step 4: Train the model

Role: Developer

The following four examples cover standalone training, distributed training, Fluid-accelerated training, and topology-aware GPU scheduling.

Example 1: Standalone TensorFlow training job

Method 1: Arena CLI

arena \

submit \

tfjob \

-n ns1 \

--name=fashion-mnist-arena \

--data=fashion-mnist-jackwg-pvc:/root/data/ \

--env=DATASET_PATH=/root/data/ \

--env=MODEL_PATH=/root/saved_model \

--env=MODEL_VERSION=1 \

--env=GIT_SYNC_USERNAME=<GIT_USERNAME> \

--env=GIT_SYNC_PASSWORD=<GIT_PASSWORD> \

--sync-mode=git \

--sync-source=https://codeup.aliyun.com/60b4cf5c66bba1c04b442e49/tensorflow-fashion-mnist-sample.git \

--image="tensorflow/tensorflow:2.2.2-gpu" \

"python /root/code/tensorflow-fashion-mnist-sample/train.py --log_dir=/training_logs"Method 2: AI Developer Console

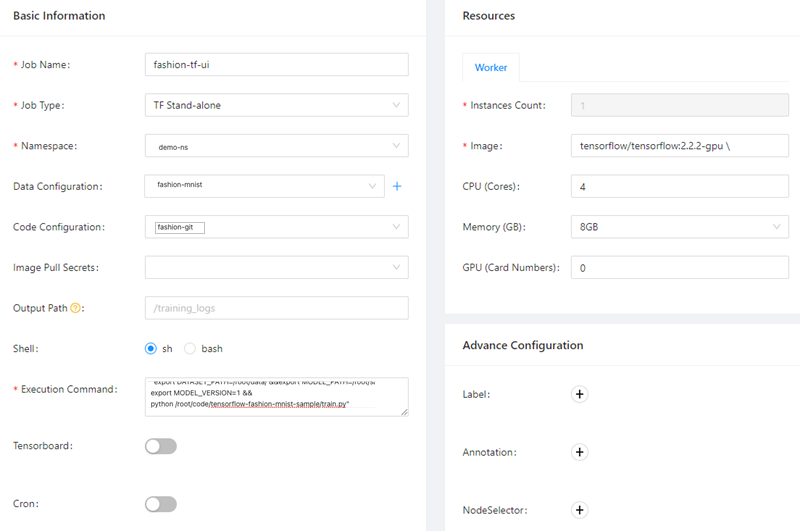

-

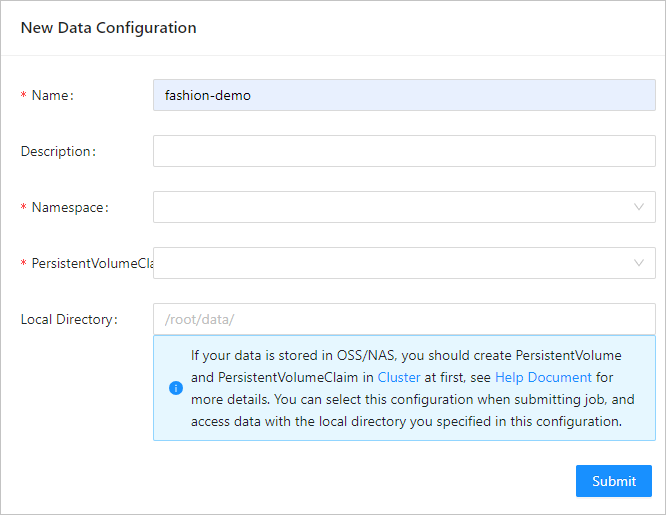

Configure the data source. See Configure a dataset.

Parameter Example Required Name fashion-demo Yes Namespace demo-ns Yes PersistentVolumeClaim fashion-demo-pvc Yes Local Directory /root/data No

-

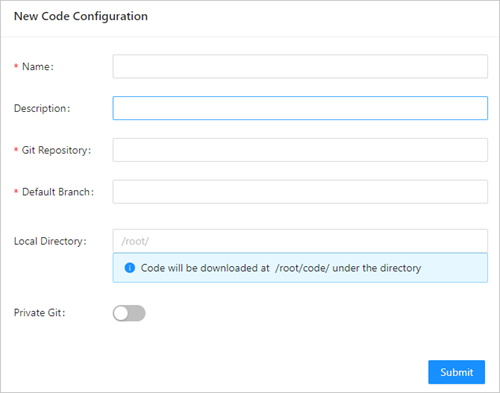

Configure the source code repository. See Configure a source code repository.

Parameter Example Required Name fashion-git Yes Git Repository https://codeup.aliyun.com/60b4cf5c66bba1c04b442e49/tensorflow-fashion-mnist-sample.git Yes Default Branch master No Local Directory /root/ No Git user Your Git username No Git secret Your Git password No

-

Submit the job. See Submit a TensorFlow training job. Key parameters for this example: For Arena CLI reference, see Use Arena to submit a TensorFlow training job.

Parameter Value Job Name fashion-tf-ui Job Type TF Stand-alone Namespace demo-ns Data Configuration fashion-demo Code Configuration fashion-git Code branch master Execution Command "export DATASET_PATH=/root/data/ \&\&export MODEL_PATH=/root/saved_model \&\&export MODEL_VERSION=1 \&\&python /root/code/tensorflow-fashion-mnist-sample/train.py"Instances Count 1 (default) Image tensorflow/tensorflow:2.2.2-gpu CPU (Cores) 4 (default) Memory (GB) 8 (default)

-

View the job log. In the left-side navigation pane, click Job List, click the job name, then on the Instances tab click Log in the Operator column. The log shows 5 training epochs with a final test accuracy of approximately 87.3%:

Epoch 5/5 1875/1875 [==============================] - 3s 2ms/step - loss: 0.3351 - accuracy: 0.8816 313/313 [==============================] - 0s 1ms/step - loss: 0.3595 - accuracy: 0.8733 Test accuracy: 0.8733000159263611 export_path = /root/saved_model/1 Saved model success -

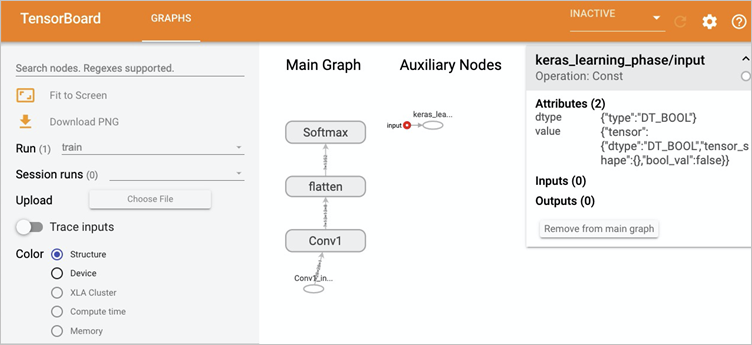

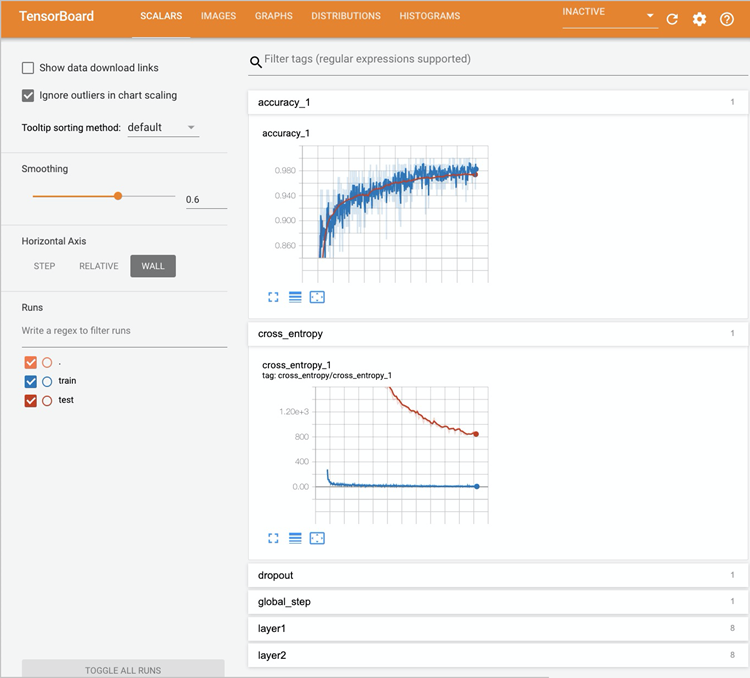

View training metrics on TensorBoard. Get the TensorBoard Service IP:

kubectl get svc -n demo-nsExpected output:

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE tf-dist-arena-tensorboard NodePort 172.16.XX.XX <none> 6006:32226/TCP 80mForward the port to your local machine:

kubectl port-forward svc/tf-dist-arena-tensorboard -n demo-ns 6006:6006Open

http://localhost:6006/in your browser.

Example 2: Distributed TensorFlow training job

Method 1: Arena CLI

arena submit tf \

-n demo-ns \

--name=tf-dist-arena \

--working-dir=/root/ \

--data fashion-mnist-pvc:/data \

--env=TEST_TMPDIR=/ \

--env=GIT_SYNC_USERNAME=kubeai \

--env=GIT_SYNC_PASSWORD=kubeai@ACK123 \

--env=GIT_SYNC_BRANCH=master \

--gpus=1 \

--workers=2 \

--worker-image=tensorflow/tensorflow:1.5.0-devel-gpu \

--sync-mode=git \

--sync-source=https://codeup.aliyun.com/60b4cf5c66bba1c04b442e49/tensorflow-fashion-mnist-sample.git \

--ps=1 \

--ps-image=tensorflow/tensorflow:1.5.0-devel \

--tensorboard \

"python code/tensorflow-fashion-mnist-sample/tf-distributed-mnist.py --log_dir=/training_logs"After the job starts, access TensorBoard the same way as in Example 1:

-

Get the Service IP:

kubectl get svc -n demo-ns -

Forward the port:

kubectl port-forward svc/tf-dist-arena-tensorboard -n demo-ns 6006:6006 -

Open

http://localhost:6006/in your browser.

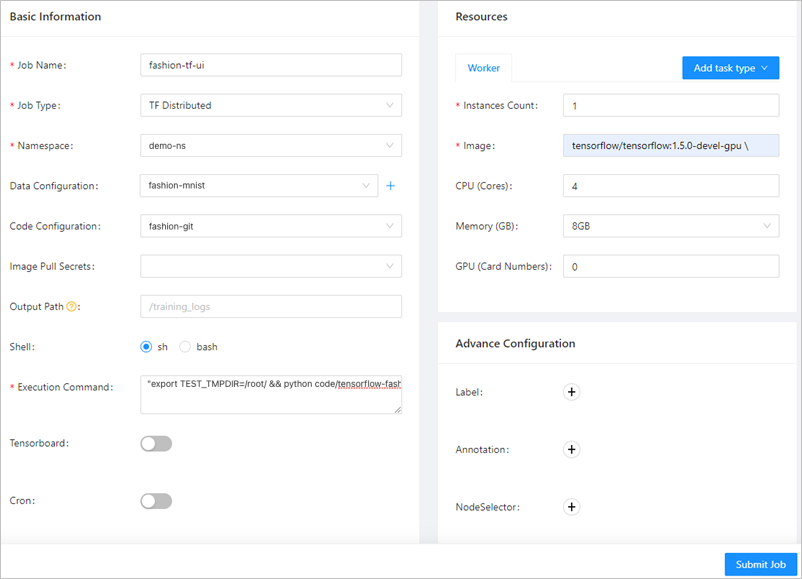

Method 2: AI Developer Console

Reuse the data source (fashion-demo) and source code (fashion-git) configured in Example 1. Key differences in the job configuration:

| Parameter | Value |

|---|---|

| Job Name | fashion-ps-ui |

| Job Type | TF Distributed |

| Namespace | demo-ns |

| Execution Command | "export TEST_TMPDIR=/root/ \&\& python code/tensorflow-fashion-mnist-sample/tf-distributed-mnist.py --log_dir=/training_logs" |

| Image (Worker tab) | tensorflow/tensorflow:1.5.0-devel-gpu |

| Image (PS tab) | tensorflow/tensorflow:1.5.0-devel |

For Arena CLI reference, see Use Arena to submit a TensorFlow training job.

Example 3: Fluid-accelerated training job

Fluid caches the OSS dataset locally on cluster nodes, reducing training time from 3 minutes to 33 seconds — a 5.5x speedup — with no code changes.

If you already accelerated the dataset in Step 2, skip the acceleration step. Otherwise, see Create an accelerated dataset based on OSS.

Submit a training job that reads from the accelerated PVC (fashion-demo-pvc-acc):

arena \

submit \

tfjob \

-n demo-ns \

--name=fashion-mnist-fluid \

--data=fashion-demo-pvc-acc:/root/data/ \

--env=DATASET_PATH=/root/data/fashion-demo-pvc-acc \

--env=MODEL_PATH=/root/saved_model \

--env=MODEL_VERSION=1 \

--env=GIT_SYNC_USERNAME=${GIT_USERNAME} \

--env=GIT_SYNC_PASSWORD=${GIT_PASSWORD} \

--sync-mode=git \

--sync-source=https://codeup.aliyun.com/60b4cf5c66bba1c04b442e49/tensorflow-fashion-mnist-sample.git \

--image="tensorflow/tensorflow:2.2.2-gpu" \

"python /root/code/tensorflow-fashion-mnist-sample/train.py --log_dir=/training_logs"The key difference from a regular job: --data=fashion-demo-pvc-acc:/root/data/ points to the Fluid-accelerated PVC, and DATASET_PATH includes the PVC name as a subdirectory.

Compare both jobs after they complete:

arena list -n demo-nsExpected output:

NAME STATUS TRAINER DURATION GPU(Requested) GPU(Allocated) NODE

fashion-mnist-fluid SUCCEEDED TFJOB 33s 0 N/A 192.168.5.7

fashion-mnist-arena SUCCEEDED TFJOB 3m 0 N/A 192.168.5.8Both jobs run the same code on the same node. The Fluid-accelerated job completes in 33 seconds vs. 3 minutes for the regular job.

Example 4: Topology-aware GPU scheduling

Topology-aware scheduling reduces training time from 120 seconds to 44 seconds, and increases throughput from 225.50 to 1,006.44 images/sec. The AI job scheduler achieves this by optimizing GPU placement based on hardware topology — NVLink and PCIe Switch interconnects, and non-uniform memory access (NUMA) topology.

Submit a job without topology-aware scheduling:

arena submit mpi \

--name=tensorflow-4-vgg16 \

--gpus=1 \

--workers=4 \

--image=registry.cn-hangzhou.aliyuncs.com/kubernetes-image-hub/tensorflow-benchmark:tf2.3.0-py3.7-cuda10.1 \

"mpirun --allow-run-as-root -np "4" -bind-to none -map-by slot -x NCCL_DEBUG=INFO -x NCCL_SOCKET_IFNAME=eth0 -x LD_LIBRARY_PATH -x PATH --mca pml ob1 --mca btl_tcp_if_include eth0 --mca oob_tcp_if_include eth0 --mca orte_keep_fqdn_hostnames t --mca btl ^openib python /tensorflow/benchmarks/scripts/tf_cnn_benchmarks/tf_cnn_benchmarks.py --model=vgg16 --batch_size=64 --variable_update=horovod"Submit a job with topology-aware scheduling:

Add the ack.node.gpu.schedule=topology label to the target node:

kubectl label node cn-beijing.192.168.XX.XX ack.node.gpu.schedule=topology --overwriteSubmit the job with --gputopology=true:

arena submit mpi \

--name=tensorflow-topo-4-vgg16 \

--gpus=1 \

--workers=4 \

--gputopology=true \

--image=registry.cn-hangzhou.aliyuncs.com/kubernetes-image-hub/tensorflow-benchmark:tf2.3.0-py3.7-cuda10.1 \

"mpirun --allow-run-as-root -np "4" -bind-to none -map-by slot -x NCCL_DEBUG=INFO -x NCCL_SOCKET_IFNAME=eth0 -x LD_LIBRARY_PATH -x PATH --mca pml ob1 --mca btl_tcp_if_include eth0 --mca oob_tcp_if_include eth0 --mca orte_keep_fqdn_hostnames t --mca btl ^openib python /tensorflow/benchmarks/scripts/tf_cnn_benchmarks/tf_cnn_benchmarks.py --model=vgg16 --batch_size=64 --variable_update=horovod"Compare results:

arena list -n demo-nsExpected output:

NAME STATUS TRAINER DURATION GPU(Requested) GPU(Allocated) NODE

tensorflow-topo-4-vgg16 SUCCEEDED MPIJOB 44s 4 N/A 192.168.4.XX1

tensorflow-4-vgg16-image-warned SUCCEEDED MPIJOB 2m 4 N/A 192.168.4.XX0Get throughput for the topology-aware job:

arena logs tensorflow-topo-4-vgg16 -n demo-nstotal images/sec: 1006.44Get throughput for the baseline job:

arena logs tensorflow-4-vgg16-image-warned -n demo-nstotal images/sec: 225.50| Training job | Processing time per GPU (ns) | Total GPU throughput (images/sec) | Duration (s) |

|---|---|---|---|

| Topology-aware scheduling enabled | 56.4 | 1006.44 | 44 |

| Topology-aware scheduling disabled | 251.7 | 225.50 | 120 |

To restore regular GPU scheduling on the node, remove the topology label:

kubectl label node cn-beijing.192.168.XX.XX0 ack.node.gpu.schedule=default --overwriteFor more information, see GPU topology-aware scheduling and Enable topology-aware CPU scheduling.

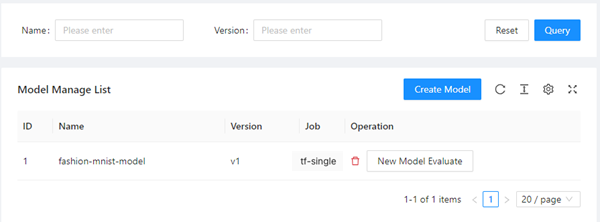

Step 5: Manage the model

Role: Developer

Register the trained model in AI Developer Console to track versions and trigger evaluations.

-

In the left-side navigation pane, click Model Manage.

-

Click Create Model.

-

In the Create dialog box, set the following fields:

-

Model Name: fsahion-mnist-demo

-

Model Version: v1

-

Job Name: tf-single

-

-

Click OK. The model appears in the list.

To evaluate the model immediately, click New Model Evaluate in the Operation column.

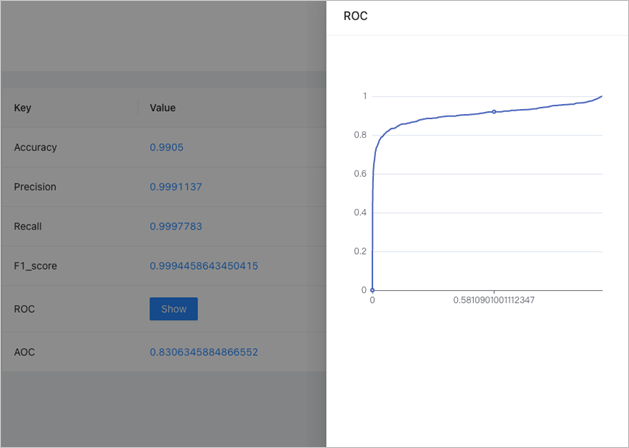

Step 6: Evaluate the model

Role: Developer

Submit an evaluation job that loads the model checkpoint, runs it against the test dataset, and stores metrics in MySQL. You can then compare metrics across model versions in AI Developer Console.

Submit a training job that exports a checkpoint

arena \

submit \

tfjob \

-n demo-ns \

--name=fashion-mnist-arena-ckpt \

--data=fashion-demo-pvc:/root/data/ \

--env=DATASET_PATH=/root/data/ \

--env=MODEL_PATH=/root/data/saved_model \

--env=MODEL_VERSION=1 \

--env=GIT_SYNC_USERNAME=${GIT_USERNAME} \

--env=GIT_SYNC_PASSWORD=${GIT_PASSWORD} \

--env=OUTPUT_CHECKPOINT=1 \

--sync-mode=git \

--sync-source=https://codeup.aliyun.com/60b4cf5c66bba1c04b442e49/tensorflow-fashion-mnist-sample.git \

--image="tensorflow/tensorflow:2.2.2-gpu" \

"python /root/code/tensorflow-fashion-mnist-sample/train.py --log_dir=/training_logs"Build the evaluation image

In the kubeai-sdk directory, build and push the evaluation image:

docker build . -t ${DOCKER_REGISTRY}:fashion-mnist

docker push ${DOCKER_REGISTRY}:fashion-mnistSubmit the evaluation job

-

Get the MySQL Service IP:

kubectl get svc -n kube-ai ack-mysqlExpected output:

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE ack-mysql ClusterIP 172.16.XX.XX <none> 3306/TCP 28h -

Submit the evaluation job using the

CLUSTER-IPfrom the previous step asMYSQL_HOST:arena evaluate model \ --namespace=demo-ns \ --loglevel=debug \ --name=evaluate-job \ --image=registry.cn-beijing.aliyuncs.com/kube-ai/kubeai-sdk-demo:fashion-minist \ --env=ENABLE_MYSQL=True \ --env=MYSQL_HOST=172.16.77.227 \ --env=MYSQL_PORT=3306 \ --env=MYSQL_USERNAME=kubeai \ --env=MYSQL_PASSWORD=kubeai@ACK \ --data=fashion-demo-pvc:/data \ --model-name=1 \ --model-path=/data/saved_model/ \ --dataset-path=/data/ \ --metrics-path=/data/output \ "python /kubeai/evaluate.py"

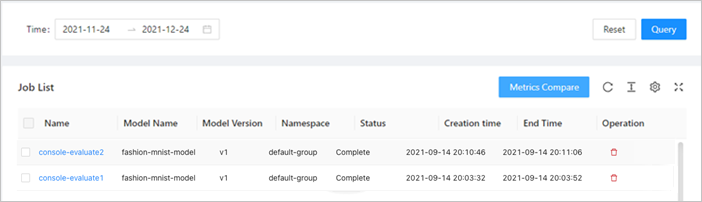

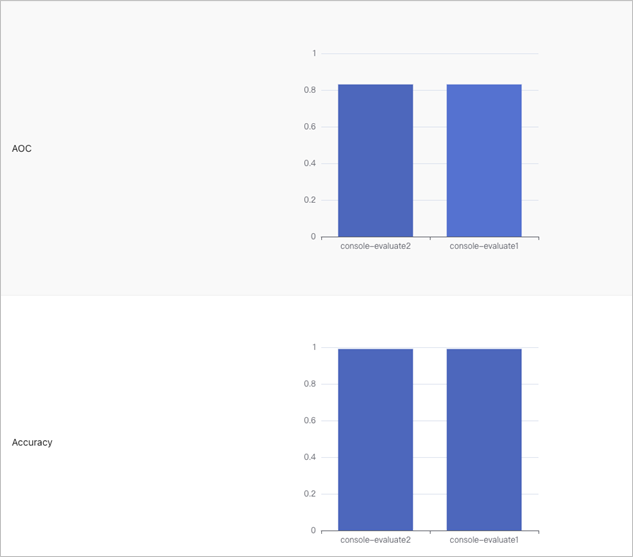

Compare evaluation results

-

In the left-side navigation pane of AI Developer Console, click Model Manage.

-

In the Job List section, click an evaluation job name to view its metrics.

-

Select multiple evaluation jobs to compare their metrics side by side.

Step 7: Deploy the model as an inference service

Role: Developer

Deploy the trained Fashion-MNIST model as a TensorFlow Serving inference service. Arena supports multiple serving frameworks including Triton and Seldon. See Arena serve guide for the full list.

The model is stored in fashion-demo-pvc from Step 2. To use a different storage type, create a PVC for that storage type first.

Deploy the inference service

arena serve tensorflow \

--loglevel=debug \

--namespace=demo-ns \

--name=fashion-mnist \

--model-name=1 \

--gpus=1 \

--image=tensorflow/serving:1.15.0-gpu \

--data=fashion-demo-pvc:/data \

--model-path=/data/saved_model/ \

--version-policy=latestVerify the service

arena serve list -n demo-nsExpected output:

NAME TYPE VERSION DESIRED AVAILABLE ADDRESS PORTS GPU

fashion-mnist Tensorflow 202111031203 1 1 172.16.XX.XX GRPC:8500,RESTFUL:8501 1The service exposes two ports: gRPC on 8500 and REST on 8501. Use the ADDRESS and PORTS values to send requests from within the cluster.

Send inference requests

Use the Jupyter Notebook from Step 3 as a client. Set server_ip to the address from the previous step and server_http_port to 8501.

import os

import gzip

import numpy as np

import random

import requests

import json

server_ip = "172.16.XX.XX" # Replace with the ADDRESS from arena serve list

server_http_port = 8501

dataset_dir = "/root/data/"

def load_data():

files = [

'train-labels-idx1-ubyte.gz',

'train-images-idx3-ubyte.gz',

't10k-labels-idx1-ubyte.gz',

't10k-images-idx3-ubyte.gz'

]

paths = []

for fname in files:

paths.append(os.path.join(dataset_dir, fname))

with gzip.open(paths[0], 'rb') as labelpath:

y_train = np.frombuffer(labelpath.read(), np.uint8, offset=8)

with gzip.open(paths[1], 'rb') as imgpath:

x_train = np.frombuffer(imgpath.read(), np.uint8, offset=16).reshape(len(y_train), 28, 28)

with gzip.open(paths[2], 'rb') as labelpath:

y_test = np.frombuffer(labelpath.read(), np.uint8, offset=8)

with gzip.open(paths[3], 'rb') as imgpath:

x_test = np.frombuffer(imgpath.read(), np.uint8, offset=16).reshape(len(y_test), 28, 28)

return (x_train, y_train),(x_test, y_test)

class_names = ['T-shirt/top', 'Trouser', 'Pullover', 'Dress', 'Coat',

'Sandal', 'Shirt', 'Sneaker', 'Bag', 'Ankle boot']

(train_images, train_labels), (test_images, test_labels) = load_data()

train_images = train_images / 255.0

test_images = test_images / 255.0

# Reshape for model input

train_images = train_images.reshape(train_images.shape[0], 28, 28, 1)

test_images = test_images.reshape(test_images.shape[0], 28, 28, 1)

print('\ntrain_images.shape: {}, of {}'.format(train_images.shape, train_images.dtype))

print('test_images.shape: {}, of {}'.format(test_images.shape, test_images.dtype))

def request_model(data):

headers = {"content-type": "application/json"}

json_response = requests.post('http://{}:{}/v1/models/1:predict'.format(server_ip, server_http_port), data=data, headers=headers)

print('=======response:', json_response, json_response.text)

predictions = json.loads(json_response.text)['predictions']

print('The model thought this was a {} (class {}), and it was actually a {} (class {})'.format(

class_names[np.argmax(predictions[0])], np.argmax(predictions[0]),

class_names[test_labels[0]], test_labels[0]))

data = json.dumps({"signature_name": "serving_default", "instances": test_images[0:3].tolist()})

print('Data: {} ... {}'.format(data[:50], data[len(data)-52:]))

request_model(data)Click the ![]() icon. Expected output:

icon. Expected output:

train_images.shape: (60000, 28, 28, 1), of float64

test_images.shape: (10000, 28, 28, 1), of float64

Data: {"signature_name": "serving_default", "instances": ... [0.0], [0.0], [0.0], [0.0], [0.0], [0.0], [0.0]]]]}

=======response: <Response [200]> {

"predictions": [[7.42696e-07, 6.91237556e-09, 2.66364452e-07, 2.27735413e-07, 4.0373439e-07, 0.00490919966, 7.27086217e-06, 0.0316713452, 0.0010733594, 0.962337255], ...]

}

The model thought this was a Ankle boot (class 9), and it was actually a Ankle boot (class 9)FAQ

How do I install software in the Jupyter Notebook console?

Run apt-get install <software-name> from a terminal in the notebook.

How do I fix garbled characters in the Jupyter Notebook console?

Update /etc/locale with the following content and reopen the terminal:

LC_CTYPE="da_DK.UTF-8"

LC_NUMERIC="da_DK.UTF-8"

LC_TIME="da_DK.UTF-8"

LC_COLLATE="da_DK.UTF-8"

LC_MONETARY="da_DK.UTF-8"

LC_MESSAGES="da_DK.UTF-8"

LC_PAPER="da_DK.UTF-8"

LC_NAME="da_DK.UTF-8"

LC_ADDRESS="da_DK.UTF-8"

LC_TELEPHONE="da_DK.UTF-8"

LC_MEASUREMENT="da_DK.UTF-8"

LC_IDENTIFICATION="da_DK.UTF-8"

LC_ALL=