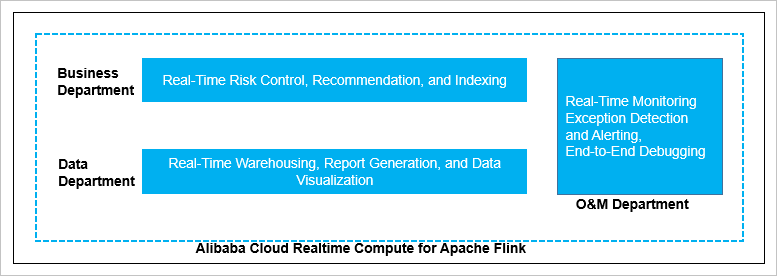

Realtime Compute for Apache Flink covers a wide range of real-time big data processing scenarios, organized by business department and technical field.

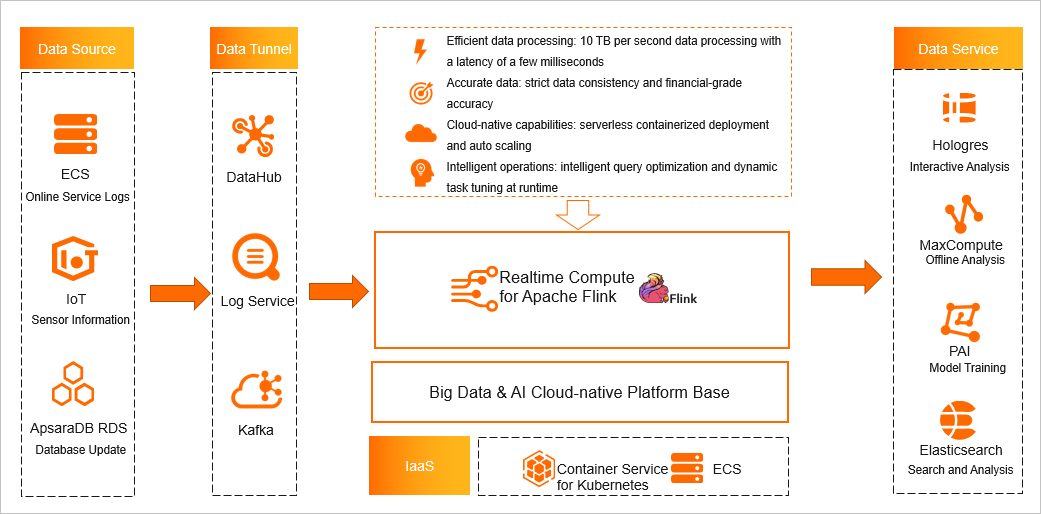

Data sources and integration

As a stream computing engine, Flink is widely used for real-time data processing. Typical data sources include online service logs from Elastic Compute Service (ECS) instances and sensor data from Internet of Things (IoT) scenarios. Flink can also subscribe to binary log (binlog) updates from relational databases such as ApsaraDB RDS (RDS) and PolarDB. Services such as DataHub, Simple Log Service (SLS), and Kafka collect this streaming data and feed it into Realtime Compute for Apache Flink for processing. Results can then be written to downstream services such as MaxCompute, Hologres for interactive analysis, Platform for AI, and Elasticsearch.

Department scenarios

From a business perspective, Realtime Compute for Apache Flink is used in the following scenarios:

- Business departments: real-time risk control, real-time recommendations, and real-time index building for search engines.
- Data departments: real-time data warehousing, real-time reports, and real-time dashboards.
- Operations and maintenance (O&M) departments: real-time monitoring, real-time anomaly detection and alerting, and end-to-end debugging.

Technical fields

From a technical perspective, Realtime Compute for Apache Flink covers four core use cases:

Real-time ETL and data streams

Real-time extract, transform, and load (ETL) and data streams deliver data from one point to another in real time. This process can include data cleaning and integration tasks. Common examples include building a search index in real time and running ETL for a real-time data warehouse.

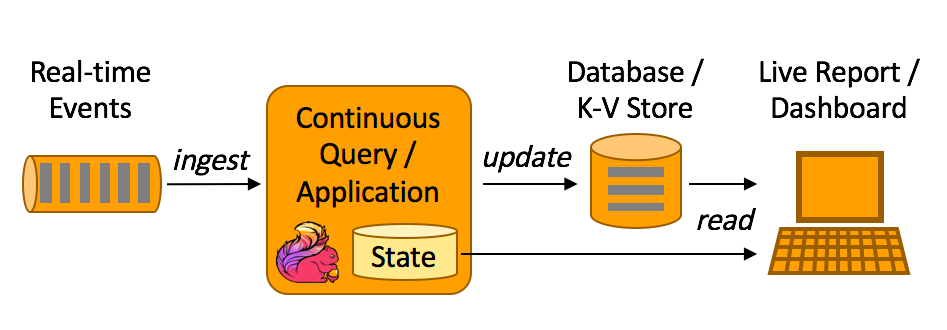
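The cleaning and delivery step described above can be sketched in a few lines. This is a minimal illustration in plain Python, not the Flink API: the field names `user_id` and `url` and the helpers `clean_record` and `etl` are hypothetical, and a list stands in for a real streaming source such as Kafka.

```python
import json
from typing import Iterable, Iterator, Optional


def clean_record(raw: str) -> Optional[dict]:
    """Parse one raw log line; return None if it is malformed or incomplete."""
    try:
        record = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(record, dict):
        return None
    user_id = record.get("user_id")  # hypothetical field name
    url = record.get("url")          # hypothetical field name
    if not user_id or not isinstance(url, str):
        return None
    # Normalize fields so the downstream sink sees a consistent schema.
    return {"user_id": str(user_id).strip(), "url": url.strip().lower()}


def etl(stream: Iterable[str]) -> Iterator[dict]:
    """Lazily transform records one at a time, as a streaming job would."""
    for raw in stream:
        cleaned = clean_record(raw)
        if cleaned is not None:
            yield cleaned
```

Because `etl` is a generator, each record is cleaned and passed on as soon as it arrives instead of waiting for a full batch. In a real job, the sink would be a search index or a data warehouse table.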

Real-time data analytics

Real-time data analytics extracts and integrates information from raw data to support business decisions as events happen. Typical metrics include the top 10 best-selling products per day, average warehouse turnover time, average document click rate, and push notification open rate. Results are surfaced in real-time reports or dashboards.

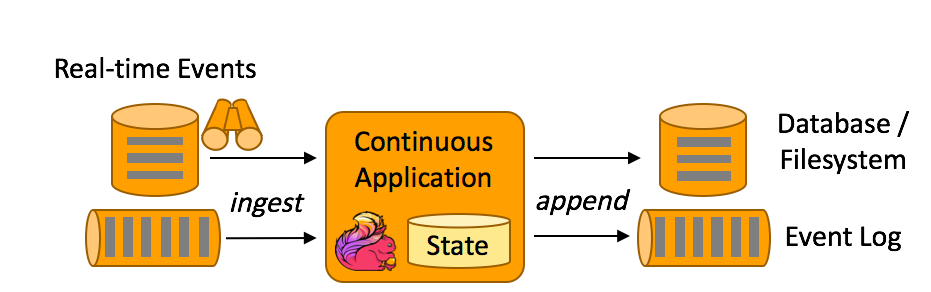

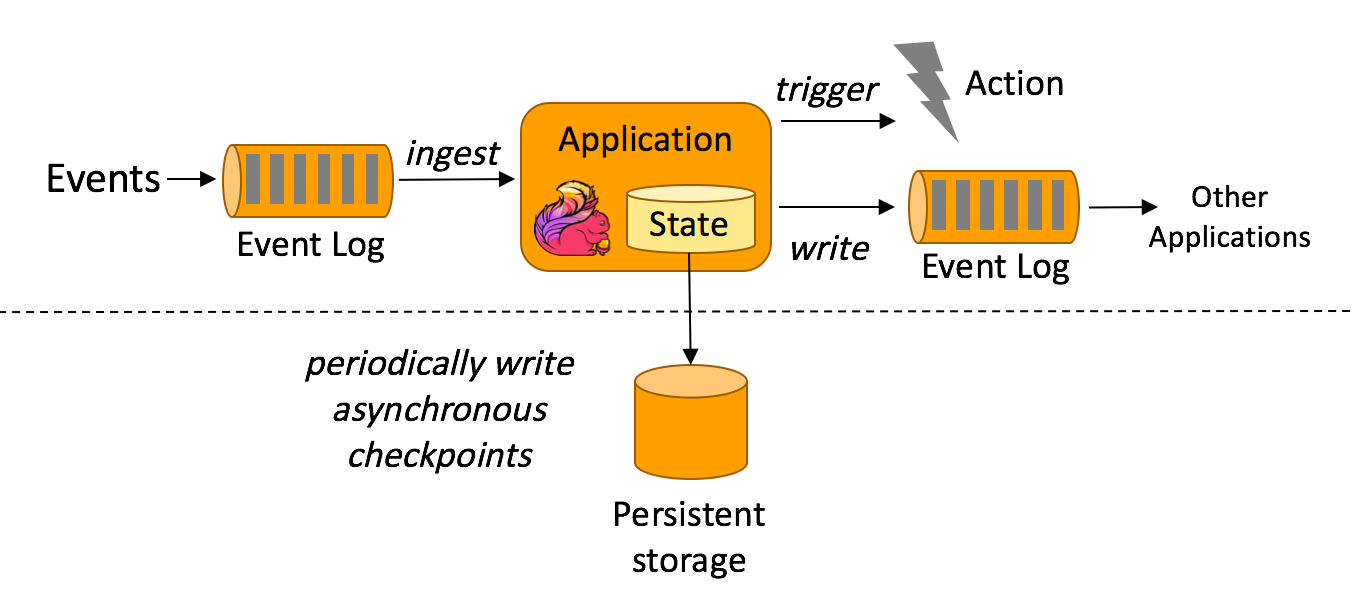
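A metric such as the best-selling products per day amounts to a grouped aggregation over a one-day window. The sketch below shows the idea in plain Python rather than Flink SQL or the DataStream API. The `Sale` type and `top_products_per_day` function are hypothetical names, days are assumed to be UTC calendar days, and the input is assumed to be finite, whereas a streaming job would emit results incrementally as each window closes.

```python
from collections import Counter, defaultdict
from datetime import datetime, timezone
from typing import Iterable, NamedTuple


class Sale(NamedTuple):
    timestamp: float  # event time, seconds since the Unix epoch (UTC)
    product_id: str
    quantity: int


def top_products_per_day(sales: Iterable[Sale], n: int = 10) -> dict:
    """Group sales into one-day tumbling windows and rank products in each."""
    windows = defaultdict(Counter)
    for sale in sales:
        # Assign the event to its window by event time, not arrival time.
        day = datetime.fromtimestamp(sale.timestamp, tz=timezone.utc).date().isoformat()
        windows[day][sale.product_id] += sale.quantity
    # For each day, keep the n products with the highest total quantity.
    return {day: counts.most_common(n) for day, counts in windows.items()}
```

The returned mapping goes from a date string to a ranked list of `(product_id, total_quantity)` pairs, which is the shape a report or dashboard would typically consume.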

Event-driven applications

Event-driven applications process or respond to subscribed events and often maintain internal state across events. When a user action triggers a rule (for example, a risk control rule), the system catches the event, analyzes the user's current and historical behavior, and decides whether to take action. Common examples include fraud detection, risk control systems, and O&M anomaly detection.
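The pattern of comparing a user's current behavior with their history can be sketched as a small stateful handler. This is an illustration in plain Python under stated assumptions, not Flink's keyed state API: the `SpendingRule` class, its rule (flag an amount more than `factor` times the user's historical average), and the default thresholds are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class UserState:
    count: int = 0      # number of past events seen for this user
    total: float = 0.0  # sum of past amounts for this user


class SpendingRule:
    """Hypothetical rule: flag amounts far above the user's own average."""

    def __init__(self, factor: float = 3.0, min_history: int = 3):
        self.factor = factor
        self.min_history = min_history
        # One state object per user, similar in spirit to keyed state.
        self.state = defaultdict(UserState)

    def on_event(self, user_id: str, amount: float) -> bool:
        s = self.state[user_id]
        # Evaluate the rule against history only, before this event is recorded.
        suspicious = (
            s.count >= self.min_history
            and amount > self.factor * (s.total / s.count)
        )
        # Update state so later events see this one as part of the history.
        s.count += 1
        s.total += amount
        return suspicious
```

For example, after three events of 10, 12, and 8 for one user, a fourth event of 100 is flagged because it exceeds three times the running average of 10. Note that this sketch adds flagged amounts to the history as well. A production rule might exclude them.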

Risk control system

Realtime Compute for Apache Flink handles complex stream and batch tasks and provides APIs for advanced mathematical calculations and complex event processing (CEP) rules. This lets businesses analyze data in real time and strengthen risk control, for example by detecting abnormal click patterns in an app or identifying irregular changes in IoT data streams.
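One simple form of abnormal click detection is a per-user sliding-window count. The sketch below is plain Python rather than the Flink CEP library. The `ClickBurstDetector` class and its thresholds are hypothetical, and it assumes that each user's clicks arrive in timestamp order.

```python
from collections import defaultdict, deque


class ClickBurstDetector:
    """Hypothetical detector: too many clicks by one user in a short window."""

    def __init__(self, window_seconds: float = 10.0, max_clicks: int = 5):
        self.window_seconds = window_seconds
        self.max_clicks = max_clicks
        # Recent click timestamps per user, oldest first.
        self.recent = defaultdict(deque)

    def on_click(self, user_id: str, timestamp: float) -> bool:
        window = self.recent[user_id]
        window.append(timestamp)
        # Evict clicks that are window_seconds old or older.
        while window and window[0] <= timestamp - self.window_seconds:
            window.popleft()
        # Flag the click when the window holds more than max_clicks entries.
        return len(window) > self.max_clicks
```

With `window_seconds=10` and `max_clicks=3`, four clicks by the same user within a few seconds trigger a flag on the fourth click, while an isolated click much later does not.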

The flowcharts in the technical fields section above are sourced from the official Apache Flink website.