Intel CPU architectures support unaligned memory access. A Split Lock is an atomic operation that crosses a cache line. In concurrent or high-performance computing (HPC) scenarios, frequent Split Locks can degrade system performance and may cause system stuttering or crashes.

How it works

When the operand of an atomic operation spans two cache lines, the processor locks the bus to ensure the atomicity of the operation. This forces all other cores to pause memory access until the operation is complete.

A Split Lock occurs when two conditions are met:

Atomic operation: An assembly instruction with a LOCK prefix is executed.

Cross-cache-line access: The operand address is not aligned and spans two cache lines.
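The second condition can be expressed as a small address check. The following is a minimal sketch, not part of the original material: the helper names `crosses_cache_line` and `object_crosses_cache_line` are hypothetical, and the 64-byte line size is an assumption that matches the alignment value used later in this document.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Assumed cache-line size in bytes (hypothetical constant for illustration).
constexpr std::uintptr_t kCacheLineSize = 64;

// Returns true when the byte range [addr, addr + size) touches two different
// cache lines, which is the "cross-cache-line access" condition.
inline bool crosses_cache_line(std::uintptr_t addr, std::size_t size) {
    assert(size > 0);
    const std::uintptr_t first_line = addr / kCacheLineSize;
    const std::uintptr_t last_line = (addr + size - 1) / kCacheLineSize;
    return first_line != last_line;
}

// Convenience wrapper for a pointer to an existing object.
inline bool object_crosses_cache_line(const void* p, std::size_t size) {
    return crosses_cache_line(reinterpret_cast<std::uintptr_t>(p), size);
}
```

For instance, an 8-byte operand at offset 56 occupies bytes 56 to 63 and stays within one line, while the same operand at offset 60 occupies bytes 60 to 67 and spans two lines.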

Impact of Split Locks

Global performance degradation: A Split Lock blocks memory access, which degrades the performance of all processes on the system, not just the process that triggered it.

Increased latency: Frequent Split Locks increase memory access latency and cause system performance jitter.

Detect Split Locks

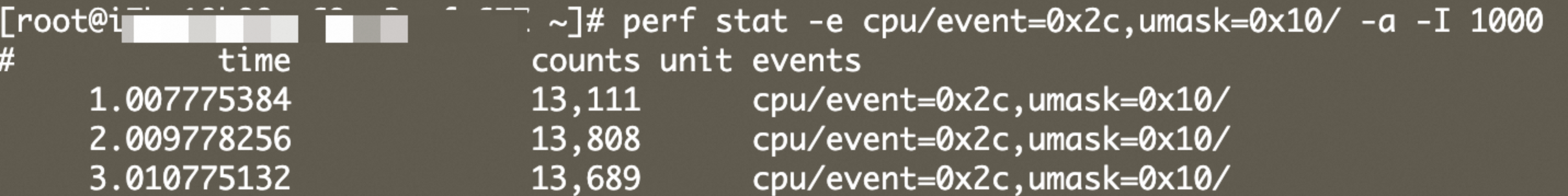

For example, on an ecs.g8i.xlarge instance, a value greater than 0 in the counts column indicates that Split Locks have occurred on the system.

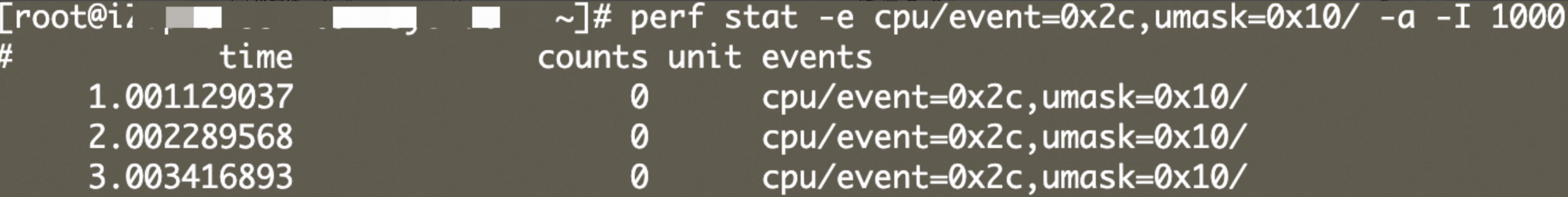

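The following perf command samples the counter on all CPUs (-a) and prints it once every 1000 ms (-I 1000):

    perf stat -e cpu/event=0x2c,umask=0x10/ -a -I 1000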

If a memory access does not cross a cache line, the corresponding counter value is 0.
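To confirm that the counter reacts on a test machine, it can help to generate Split Locks on purpose. The following is a rough sketch under several assumptions: a 64-byte cache line, an x86 target, and a GCC or Clang compiler (for the `__atomic_fetch_add` builtin). The names `straddling_slot` and `trigger_split_locks` are hypothetical. Misaligned atomic access is undefined behavior in standard C++, and depending on kernel configuration the process may be warned, throttled, or terminated, so this should only be run on a disposable test system.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Assumed cache-line size in bytes (hypothetical constant for illustration).
constexpr std::size_t kCacheLine = 64;

// Backing storage that starts on a cache-line boundary and covers two lines.
alignas(kCacheLine) static unsigned char g_buffer[2 * kCacheLine];

// Returns a pointer to a 4-byte slot that starts 2 bytes before the boundary
// between the two lines, so the slot covers bytes 62..65 of g_buffer.
inline std::uint32_t* straddling_slot() {
    return reinterpret_cast<std::uint32_t*>(g_buffer + kCacheLine - 2);
}

// Performs `iterations` atomic increments on the straddling slot.
// WARNING: deliberately misaligned atomic access; undefined behavior in
// standard C++ and intended only as a test-machine reproducer on x86.
inline void trigger_split_locks(int iterations) {
    assert(iterations >= 0);
    std::uint32_t* slot = straddling_slot();
    for (int i = 0; i < iterations; ++i) {
        __atomic_fetch_add(slot, 1u, __ATOMIC_SEQ_CST);  // GCC/Clang builtin
    }
}
```

Calling `trigger_split_locks` from a small test program while the perf command is running should, on hardware that exposes this event, make the counts column rise above 0.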

Avoid Split Locks

Ensure atomic variables are aligned to their natural boundaries.

    // Recommended: Align to 64 bytes to avoid crossing cache lines and false sharing.
    alignas(64) atomic<uint64_t> counter;

    // For 128-bit atomic types, align to 16 bytes.
    alignas(16) atomic<__int128> big_counter;

Avoid placing large atomic variables in the middle of a struct.

    // Not recommended: The atomic variable can be shifted by preceding members,
    // causing it to cross a cache line.
    struct BadExample {
        char a;                 // Occupies 1 byte
        atomic<__int128> val;   // May cross a cache line
    };

    // Recommended: Place the member with the strictest alignment requirement
    // first and declare it explicitly.
    struct GoodExample {
        alignas(16) atomic<__int128> val;
        char a;
    };

Use a combination of smaller atomic types instead of a large one. For scenarios that do not require true 128-bit atomicity, you can split the operation into two 64-bit atomic operations.

    struct PaddedCounter {
        alignas(64) atomic<uint64_t> low;
        alignas(64) atomic<uint64_t> high;
    };

Do not use unaligned pointers for atomic operations.

    // Incorrect: malloc does not guarantee 16-byte alignment, especially with older libc versions.
    void* ptr = malloc(sizeof(atomic<__int128>));
    atomic<__int128>* p = new(ptr) atomic<__int128>;

    // Correct: Use aligned_alloc.
    void* aligned_ptr = aligned_alloc(16, sizeof(atomic<__int128>));
    atomic<__int128>* aligned_p = new(aligned_ptr) atomic<__int128>;

Use static_assert to check alignment.

    static_assert(alignof(atomic<__int128>) >= 16, "128-bit atomic must be 16-byte aligned");

Avoid using atomic types in packed structs.

    #pragma pack(push, 1)
    struct Packed {
        uint8_t flag;
        // Incorrect: Even an 8-byte type can cross a cache line due to the compact layout.
        atomic<uint64_t> counter;
    };
    #pragma pack(pop)
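The layout rules above can also be enforced at build time, so that a later change to a struct cannot silently reintroduce a Split Lock. The following is a minimal sketch, assuming a 64-byte cache line. The `Stats` struct and the helpers `fits_in_one_line` and `is_aligned` are hypothetical names introduced for illustration only.

```cpp
#include <atomic>
#include <cassert>
#include <cstddef>
#include <cstdint>

// Assumed cache-line size in bytes (hypothetical constant for illustration).
constexpr std::size_t kCacheLineBytes = 64;

// True when a member at `offset` with `size` bytes stays inside one cache
// line, assuming the enclosing object starts on a cache-line boundary.
constexpr bool fits_in_one_line(std::size_t offset, std::size_t size) {
    return (offset % kCacheLineBytes) + size <= kCacheLineBytes;
}

// Hypothetical struct: aligning the whole object to the cache line makes the
// member offsets meaningful for the check above.
struct alignas(kCacheLineBytes) Stats {
    char tag;
    std::atomic<std::uint64_t> hits;
    std::atomic<std::uint64_t> misses;
};

static_assert(alignof(Stats) == kCacheLineBytes,
              "Stats must start on a cache-line boundary");
static_assert(fits_in_one_line(offsetof(Stats, hits),
                               sizeof(std::atomic<std::uint64_t>)),
              "hits must not span two cache lines");
static_assert(fits_in_one_line(offsetof(Stats, misses),
                               sizeof(std::atomic<std::uint64_t>)),
              "misses must not span two cache lines");

// Runtime counterpart for heap or externally provided memory.
inline bool is_aligned(const void* p, std::size_t alignment) {
    assert(alignment != 0);
    return reinterpret_cast<std::uintptr_t>(p) % alignment == 0;
}
```

The static_assert checks cost nothing at runtime. `is_aligned` is meant for pointers whose alignment the compiler cannot see, such as memory returned by an allocator, and could be called in a debug assertion before an atomic object is constructed in that memory.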