This article analyzes the source code of the open-source version of ClickHouse v21.8.10.19-lts.

By Fan Zhen (Chen Fan), Head of Open-Source Big Data OLAP at Alibaba Cloud.

The ClickHouse community introduced ClickHouse Keeper in version 21.8. ClickHouse Keeper, referred to as HouseKeeper in this article, is a distributed coordination service that is fully compatible with the ZooKeeper protocol.

Contents

- Background

- Architecture Diagram

- Analysis of Core Flow Chart

- Analysis of Internal Code Flow

- Troubleshooting of NuRaft Key Configuration

- Summary

- References

Background

Note: The code analyzed below is the open-source version of ClickHouse v21.8.10.19-lts. The class diagrams and sequence diagrams do not strictly follow UML specifications. For convenience, function names, function parameters, and other details do not strictly match the original code.

HouseKeeper vs. ZooKeeper

- ZooKeeper is written in Java and inherits the drawbacks of the JVM. Its execution efficiency is lower than that of C++, a large number of Znodes easily causes performance problems, and Full GC becomes more frequent.

- ZooKeeper is complex to operate and maintain and must be deployed as an independent component, which has caused many problems in the past. HouseKeeper supports more deployment modes, such as standalone and integrated modes.

- The ZooKeeper transaction ID (ZXID) can overflow. HouseKeeper does not have this problem.

- HouseKeeper improves read/write performance and supports linearizable reads and writes. See [2] for more information about consistency levels.

- The HouseKeeper code is unified with ClickHouse, so the whole stack can be controlled in-house. It is highly extensible and can serve as the basis for designing and developing a MetaServer in the future. Mainstream MetaServers combine Raft with RocksDB, and this codebase can be used to develop one.
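As background for the ZXID point above: ZooKeeper packs a 32-bit leader epoch and a 32-bit per-epoch counter into one 64-bit ZXID, so the counter half can run out within a single epoch. The sketch below only illustrates that layout. The helper names are hypothetical and are not taken from the ZooKeeper or ClickHouse code.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical helpers illustrating ZooKeeper's 64-bit ZXID layout:
// high 32 bits = leader epoch, low 32 bits = per-epoch transaction counter.
constexpr std::uint64_t makeZxid(std::uint32_t epoch, std::uint32_t counter)
{
    return (static_cast<std::uint64_t>(epoch) << 32) | counter;
}

constexpr std::uint32_t zxidEpoch(std::uint64_t zxid)
{
    return static_cast<std::uint32_t>(zxid >> 32);
}

constexpr std::uint32_t zxidCounter(std::uint64_t zxid)
{
    return static_cast<std::uint32_t>(zxid & 0xFFFFFFFFULL);
}

// True when the next transaction in this epoch would wrap the 32-bit counter.
constexpr bool counterExhausted(std::uint64_t zxid)
{
    return zxidCounter(zxid) == 0xFFFFFFFFU;
}

// Next ZXID within the same epoch; the caller must handle exhaustion first.
inline std::uint64_t nextZxid(std::uint64_t zxid)
{
    assert(!counterExhausted(zxid));
    return zxid + 1;
}
```

With this layout, a busy cluster that stays in one epoch can use up the low 32 bits, which is the overflow the comparison refers to.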

ZooKeeper Client

- The ZooKeeper Client does not need to be modified because HouseKeeper is fully compatible with the ZooKeeper protocol.

- ClickHouse implements its own ZooKeeper Client and does not use libZooKeeper. The client is built up from the TCP layer and follows the ZooKeeper protocol.

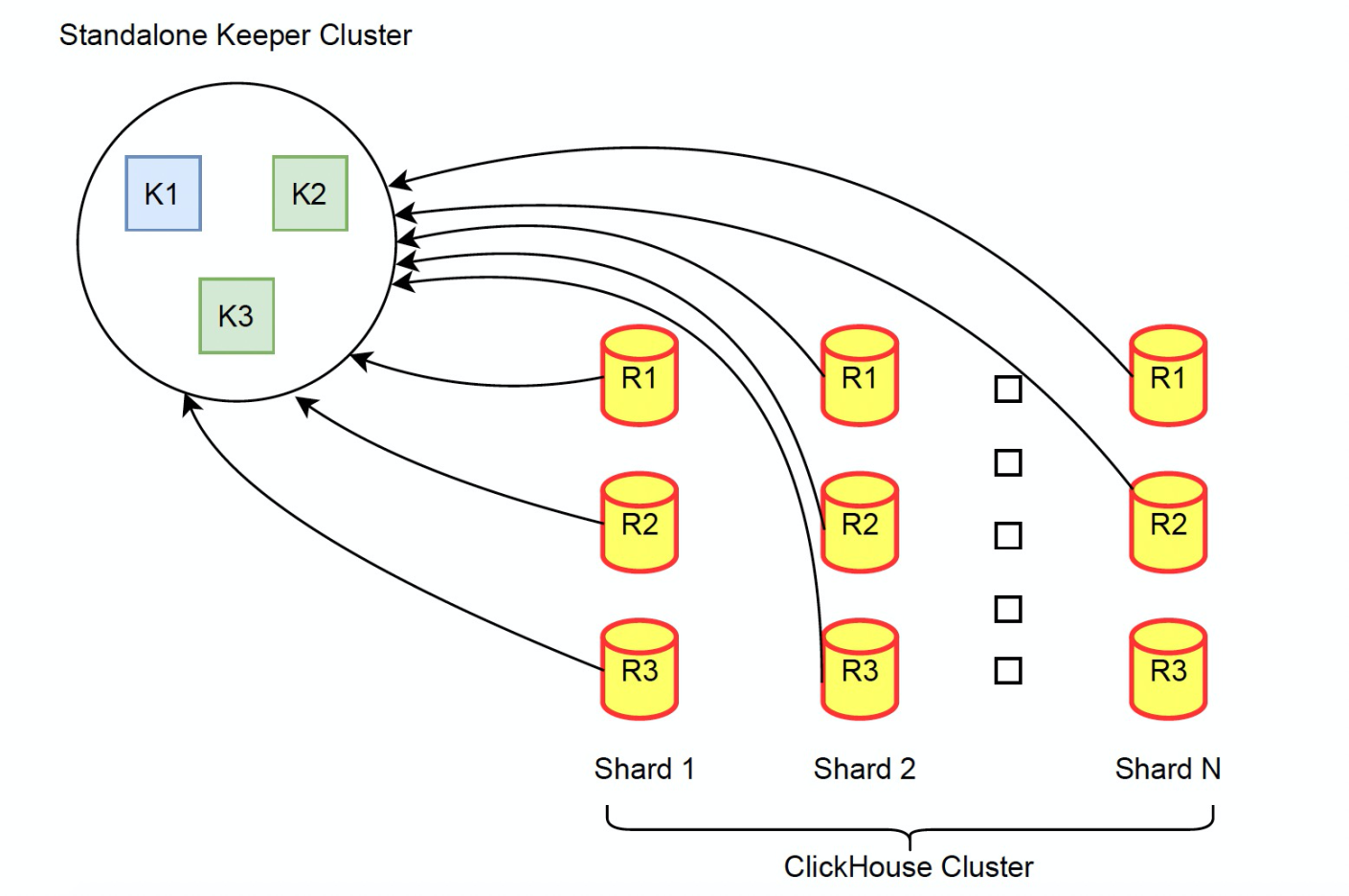

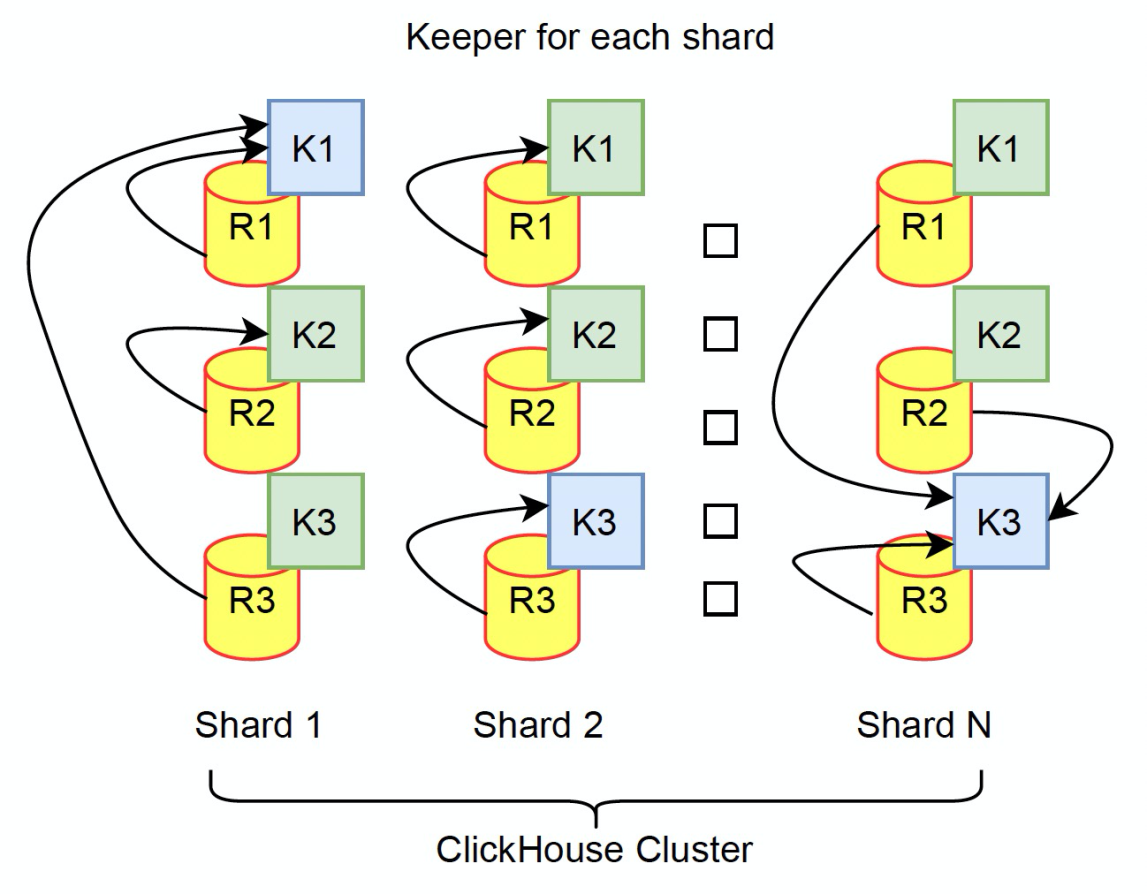

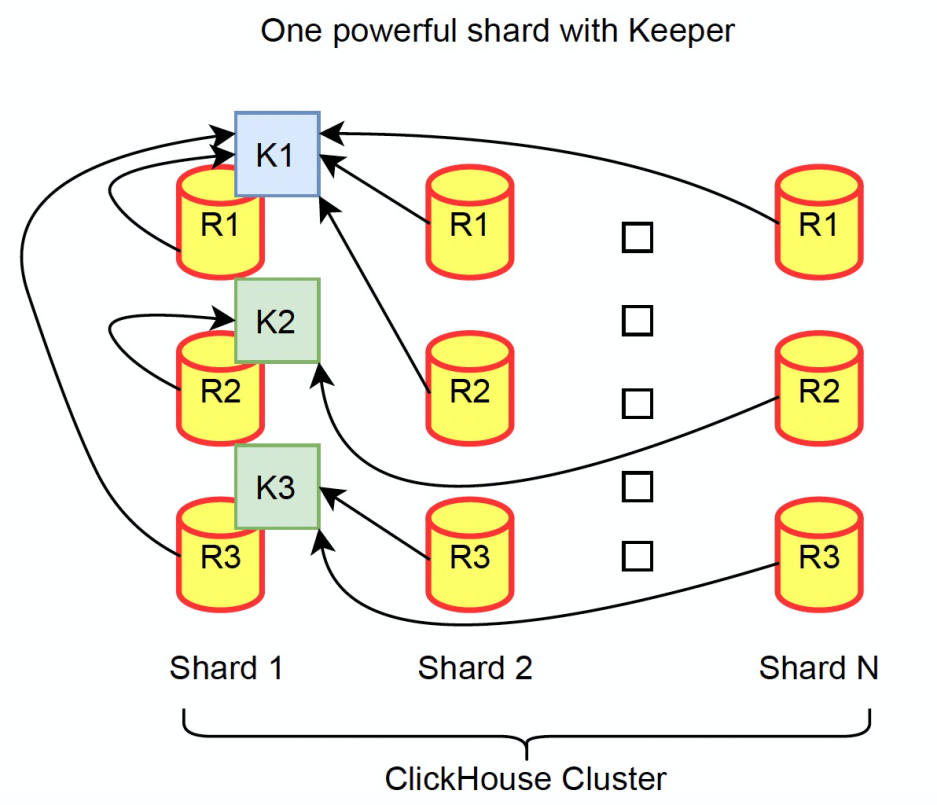

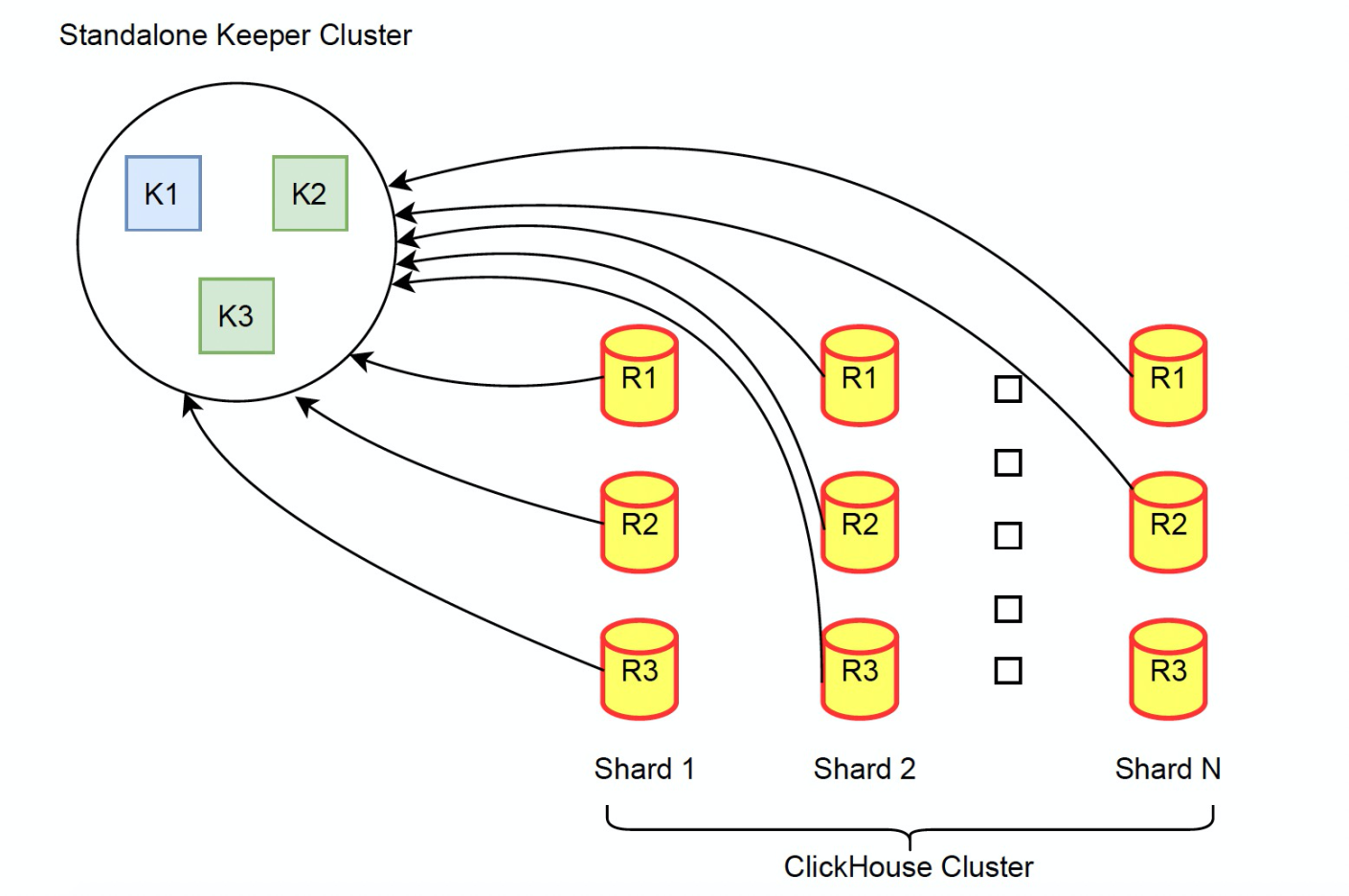

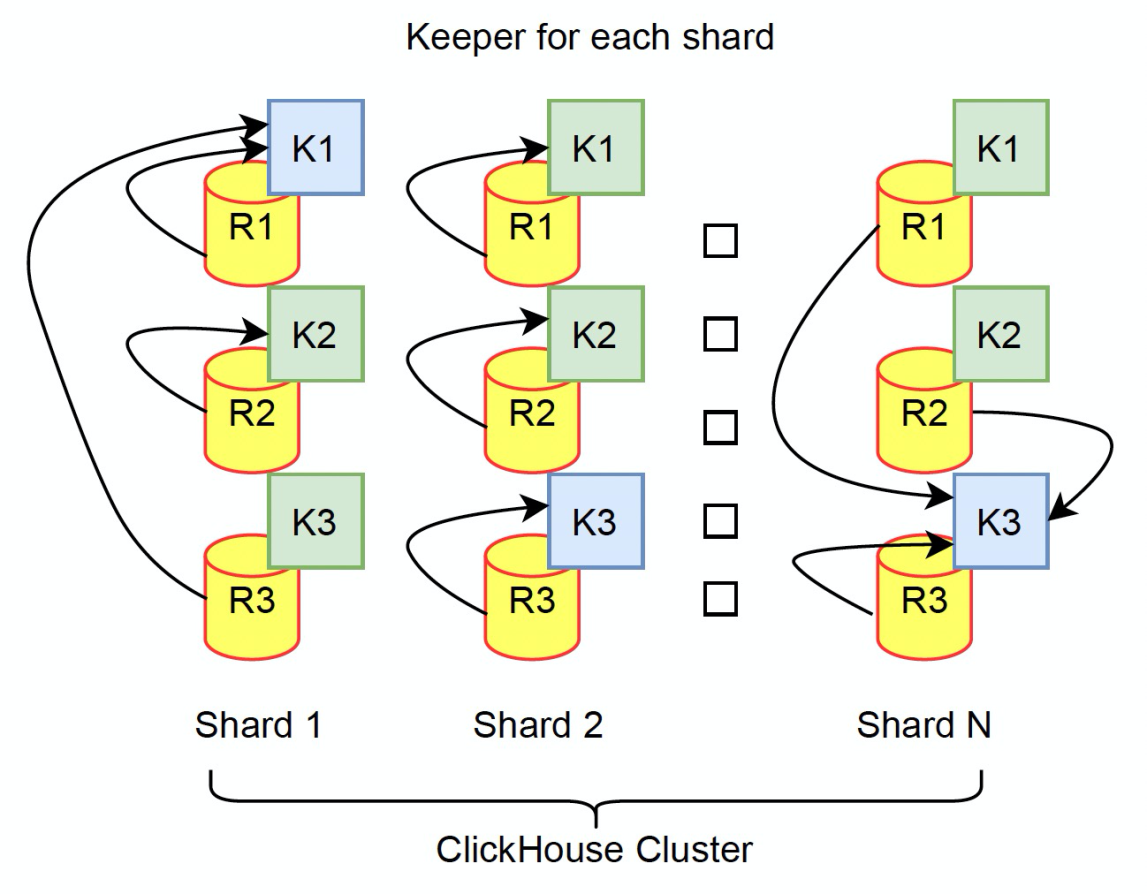

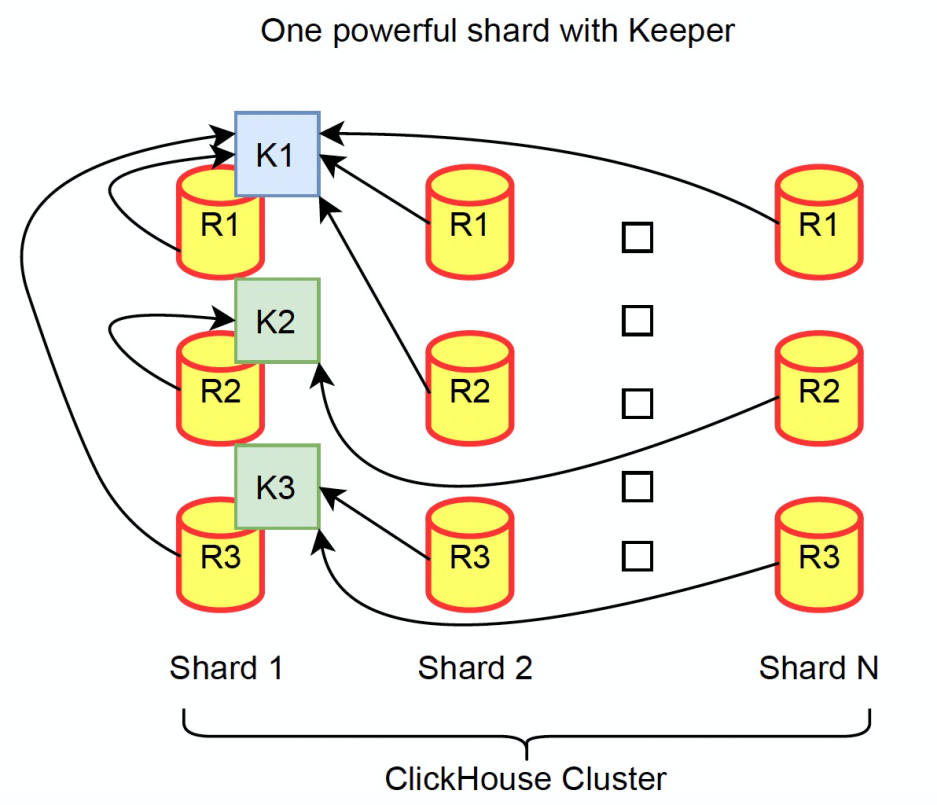

Architecture Diagram

- Of the three deployment modes, we recommend the first one, standalone mode. You can choose small-scale servers with SSD disks to maximize the performance of Keeper.

Analysis of Core Flow Chart

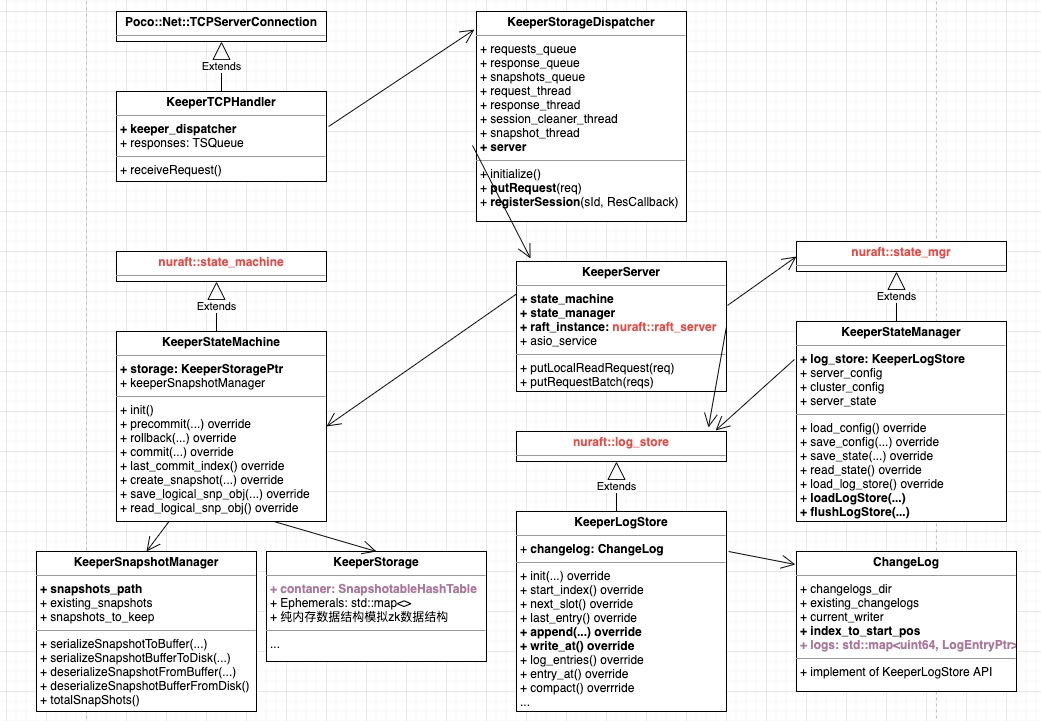

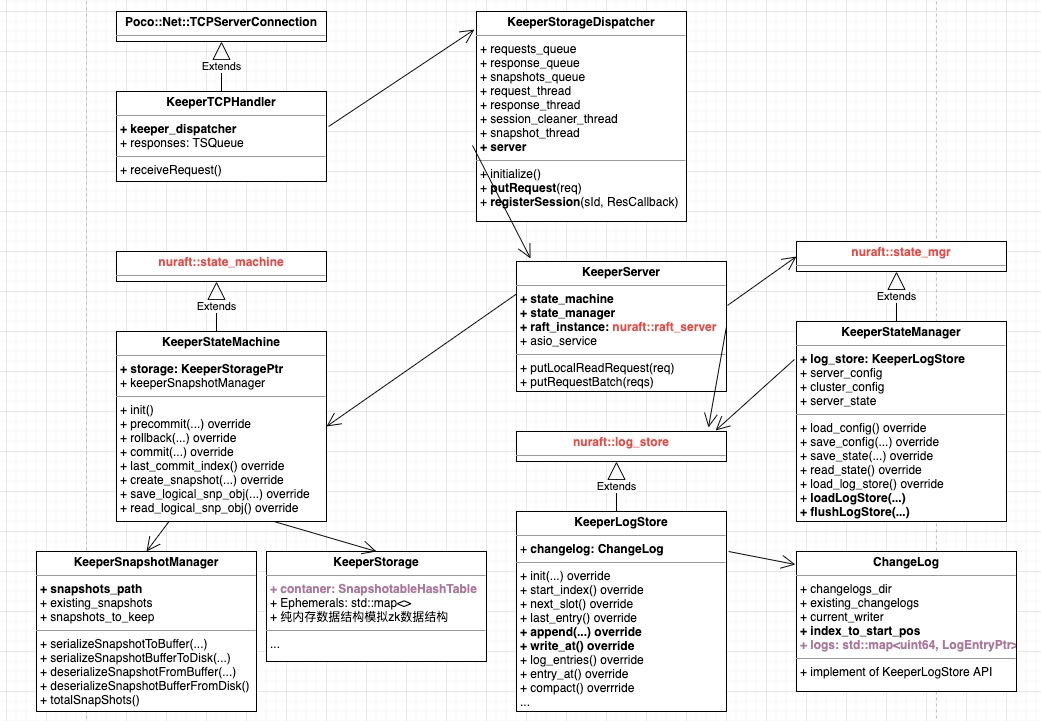

Class Diagram Relationships

The main function does two things:

- Initialize Poco::Net::TCPServer and define the KeeperTCPHandler that processes requests.

- Instantiate keeper_storage_dispatcher and call KeeperStorageDispatcher->initialize().

KeeperStorageDispatcher->initialize() does the following:

- Instantiates the threads shown in the class diagram and the related ThreadSafeQueue objects, which synchronize data between the threads.

- Instantiates the KeeperServer object, the core data structure and the most important part of the entire Raft implementation. KeeperServer consists of state_machine, state_manager, raft_instance, and (indirectly) log_store. Each inherits from the corresponding parent class in the NuRaft library. In general, every Raft-based application is expected to implement these classes.

- Calls KeeperServer::startup(), mainly to initialize state_machine and state_manager. During startup, state_machine->init() and state_manager->loadLogStore(...) are called to load snapshots and logs, respectively. The state machine is restored from the latest Raft snapshot up to the latest committed latest_log_index, which builds the in-memory data structure. (The most important one is the Container, KeeperStorage::SnapshotableHashTable.) Then, each record in the Raft log files is loaded into logs, a std::unordered_map. These two data structures, Container and logs, are the core of the entire HouseKeeper and consume most of its memory, as discussed later.

- The main loop of KeeperTCPHandler reads a request from the socket, passes it to requests_queue via dispatcher->putRequest(req), reads the response via responses.tryPop(res), and writes the response back to the client over the socket. The main loop goes through the following steps:

- Check whether the cluster has a leader, and if so, send the Handshake. Note: HouseKeeper uses the auto_forwarding option of NuRaft. If the node that receives a request is not the leader, it acts as a proxy and forwards the request to the leader, so all read/write requests pass through the proxy.

- Obtain the session_id of the request. For a new connection, the server assigns the session_id by auto-incrementing keeper_dispatcher->internal_session_id_counter.

- Call keeper_dispatcher->registerSession(session_id, response_callback) to bind the session_id to its callback function.

- Pass the request to requests_queue via keeper_dispatcher->putRequest(req).

- Repeatedly call responses.tryPop(res) to read the response and write it back to the client over the socket.
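The session and queue steps above can be sketched in a few lines. This is a simplified, single-threaded stand-in, not the ClickHouse implementation: DispatcherSketch, Request, Response, and processQueue are hypothetical names, and the real dispatcher uses thread-safe queues and background threads.

```cpp
#include <cassert>
#include <cstdint>
#include <functional>
#include <queue>
#include <string>
#include <unordered_map>
#include <utility>

struct Request { std::int64_t session_id; std::string payload; };
struct Response { std::int64_t session_id; std::string payload; };
using ResponseCallback = std::function<void(const Response &)>;

// Hypothetical, single-threaded stand-in for the dispatcher.
class DispatcherSketch
{
public:
    // A new connection gets the next value of an auto-incrementing counter.
    std::int64_t getSessionID() { return ++internal_session_id_counter; }

    // Bind a session to the callback that delivers its responses.
    void registerSession(std::int64_t session_id, ResponseCallback callback)
    {
        session_to_response_callback[session_id] = std::move(callback);
    }

    // Enqueue a request; requests from unregistered sessions are rejected.
    bool putRequest(Request request)
    {
        if (session_to_response_callback.count(request.session_id) == 0)
            return false;
        requests_queue.push(std::move(request));
        return true;
    }

    // Stand-in for the request thread: drain the queue and deliver each
    // response through the callback bound to the originating session.
    void processQueue()
    {
        while (!requests_queue.empty())
        {
            Request request = std::move(requests_queue.front());
            requests_queue.pop();
            auto it = session_to_response_callback.find(request.session_id);
            assert(it != session_to_response_callback.end());
            it->second(Response{request.session_id, "ok:" + request.payload});
        }
    }

private:
    std::int64_t internal_session_id_counter = 0;
    std::queue<Request> requests_queue;
    std::unordered_map<std::int64_t, ResponseCallback> session_to_response_callback;
};
```

In this sketch, a handler would register a callback that pushes into its own responses queue and then poll that queue, mirroring the responses.tryPop(res) loop.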

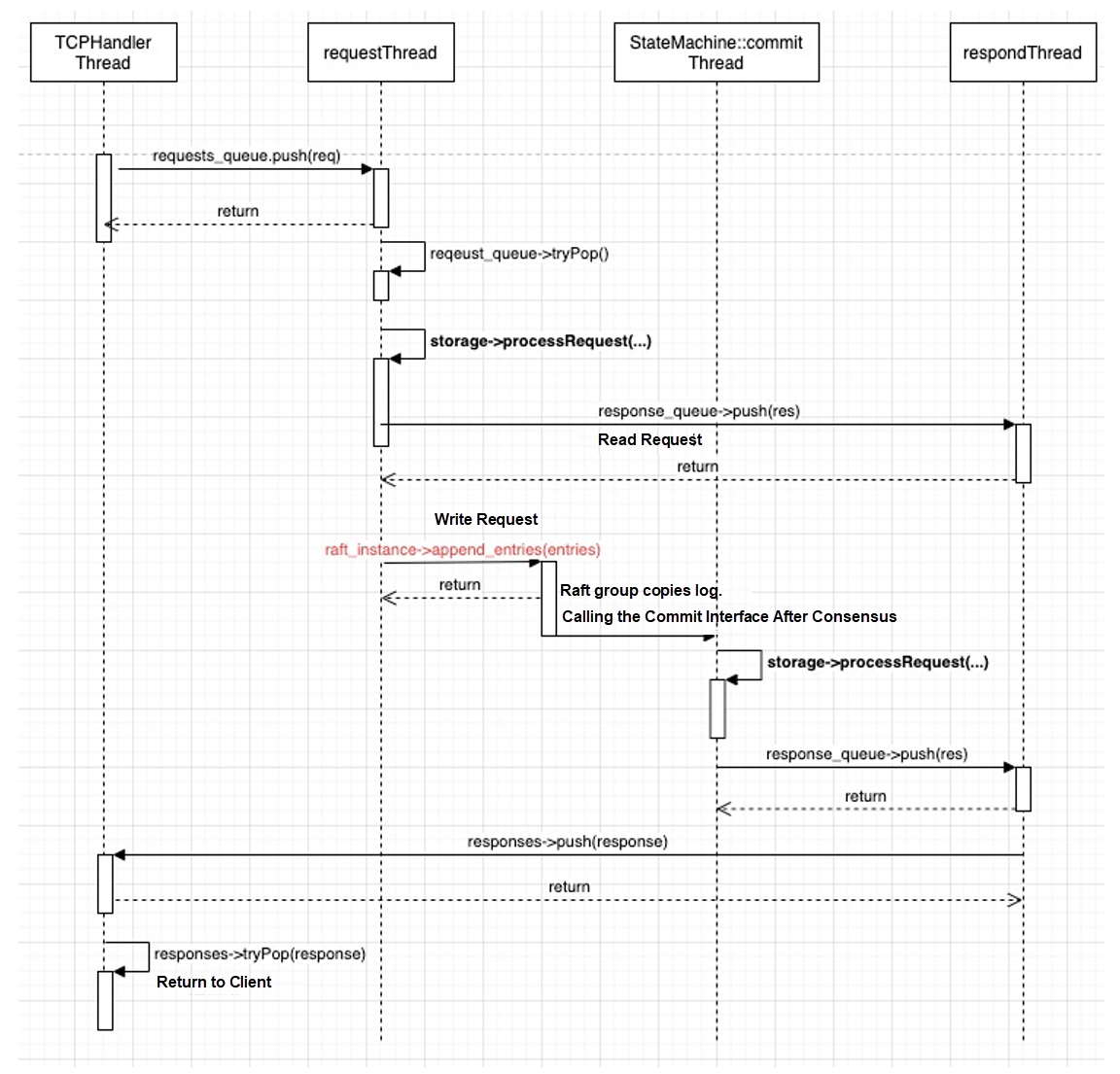

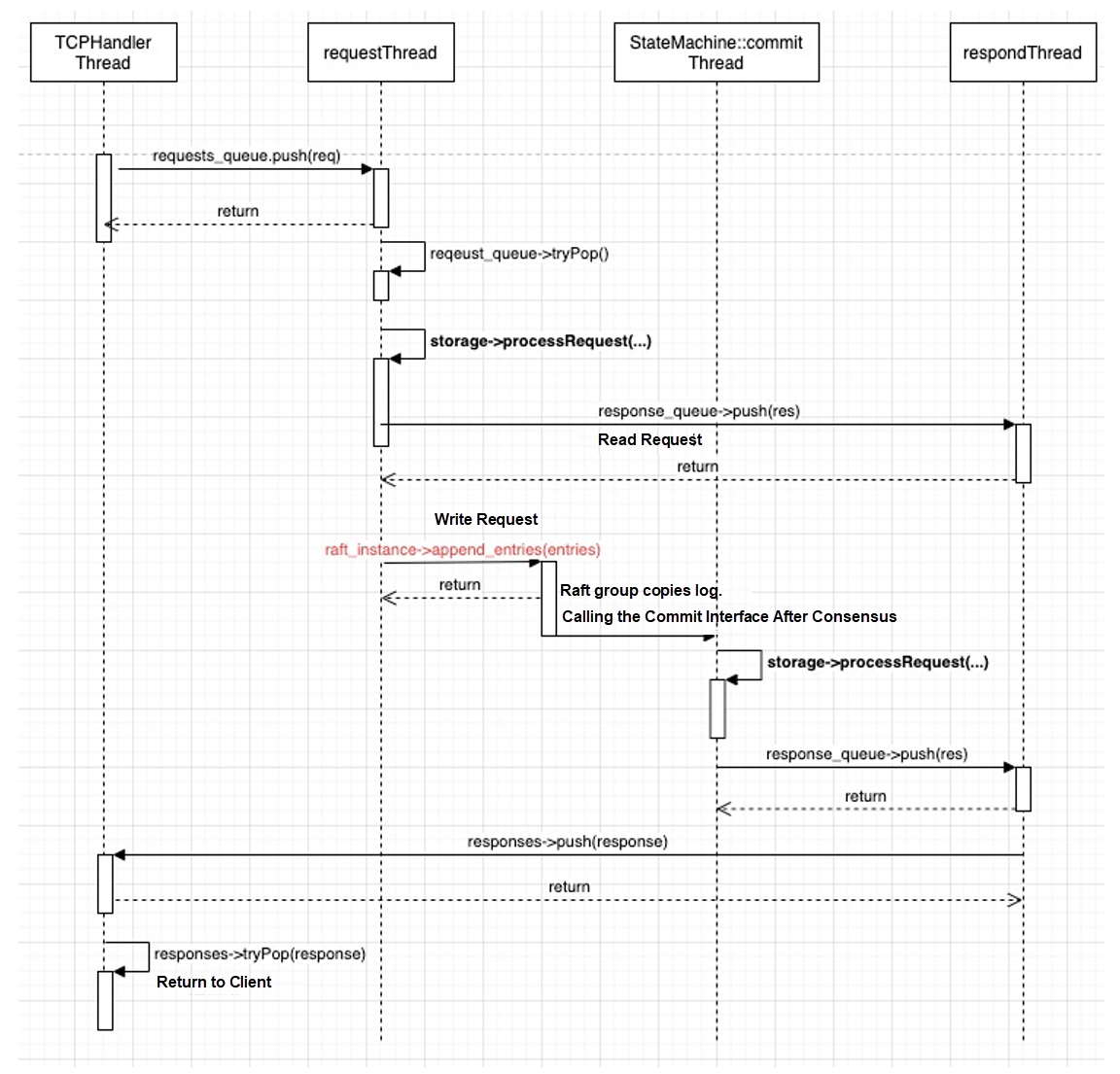

Thread Model for Processing Requests

- Request processing starts in the TCPHandler thread and passes through the different threads shown in the sequence diagram until the request has been fully processed.

- A read request is processed directly by requests_thread, which calls state_machine->processReadRequest. This function calls the storage->processRequest(...) interface.

- A write request is written to the log through raft_instance->append_entries(entries), the user API of the NuRaft library. After consensus is reached, an internal thread of the NuRaft library calls the commit interface, which executes storage->processRequest(...).
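The difference between the read path and the write path can be illustrated with a toy model. The sketch below is an assumption-laden simplification: KeeperServerSketch and commitAll are hypothetical names, consensus is replaced by an explicit call, and storage is a plain map.

```cpp
#include <cassert>
#include <cstddef>
#include <map>
#include <string>
#include <vector>

struct Request { bool is_read; std::string path; std::string data; };

// Hypothetical model: reads hit storage directly, writes wait for commit.
class KeeperServerSketch
{
public:
    // Reads bypass the log and are answered from storage right away.
    // Writes are only appended to the log; storage is untouched until commit.
    std::string putRequest(const Request & request)
    {
        if (request.is_read)
            return processRequest(request);
        log.push_back(request);
        return "queued";
    }

    // Stand-in for the commit callback that runs after consensus is reached.
    void commitAll()
    {
        assert(committed <= log.size());
        for (; committed < log.size(); ++committed)
            processRequest(log[committed]);
    }

private:
    // Single entry point that touches storage, used by both paths.
    std::string processRequest(const Request & request)
    {
        if (request.is_read)
        {
            auto it = storage.find(request.path);
            return it == storage.end() ? "no node" : it->second;
        }
        storage[request.path] = request.data;
        return "ok";
    }

    std::map<std::string, std::string> storage;
    std::vector<Request> log;
    std::size_t committed = 0;
};
```

A read issued between the append and the commit does not see the pending write, which is why the write path has to wait for the commit before a response is produced.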

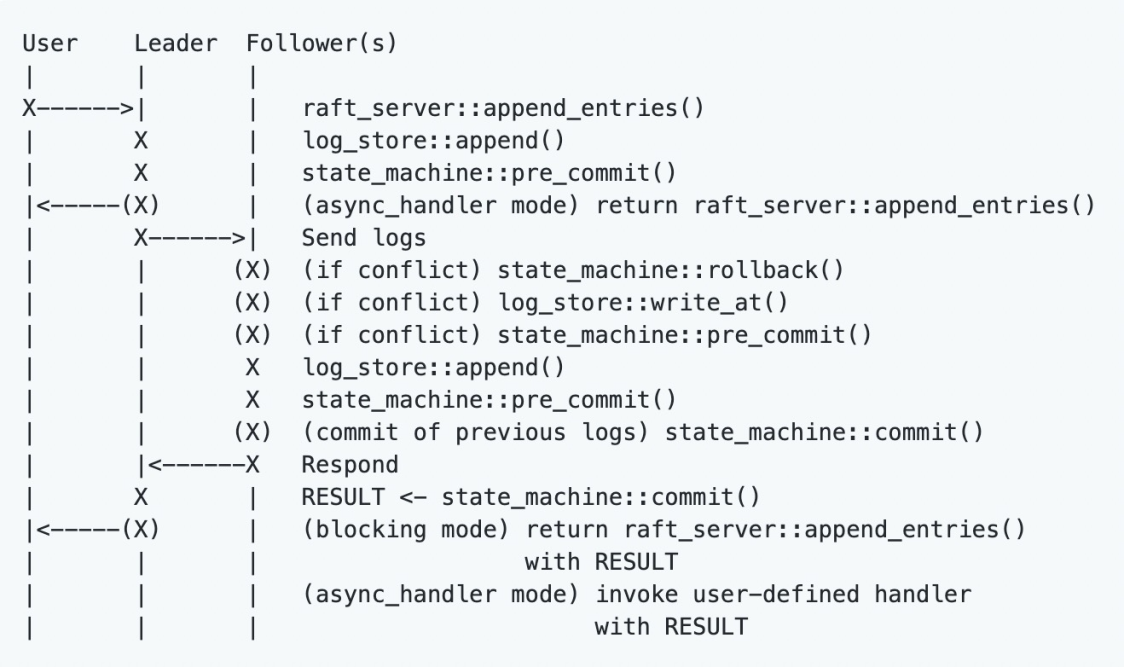

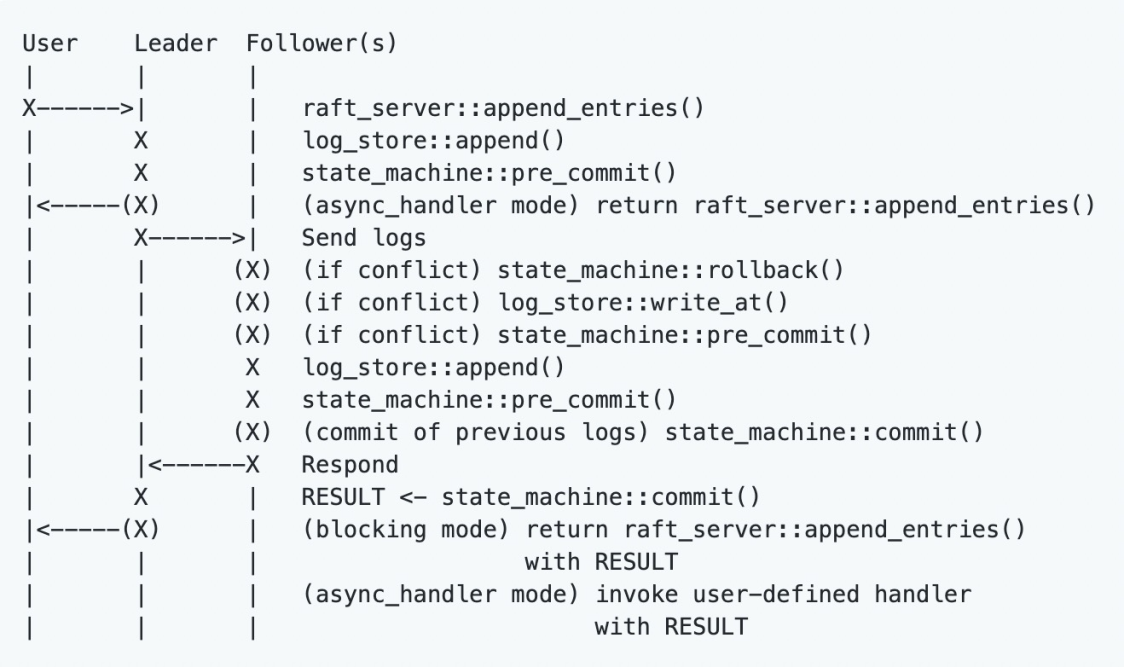

- The following figure shows normal log replication in the NuRaft library:

The NuRaft library internally maintains two core threads (or thread pools):

- raft_server::append_entries_in_bg: The leader checks whether new entries exist in log_store and replicates them to the followers.

- raft_server::commit_in_bg: Every role (leader or follower) checks whether the sm_commit_index of its own state machine lags behind the leader_commit_index of the leader, and if so, runs apply_entries on the state machine.
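The catch-up check performed by the commit thread can be sketched as a loop over the gap between the two indexes. RaftNodeSketch and commitUpTo are hypothetical names; the real NuRaft code runs this in a background thread and handles configuration entries, snapshots, and errors that are omitted here.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>
#include <string>
#include <vector>

// Hypothetical node: a replicated log plus a state machine that trails it.
struct RaftNodeSketch
{
    std::vector<std::string> log;            // entry with Raft index i is log[i - 1]
    std::vector<std::string> state_machine;  // entries applied so far, in order
    std::uint64_t sm_commit_index = 0;       // last index applied to the state machine

    // Apply every entry in (sm_commit_index, leader_commit_index] that is
    // already present in the local log. Returns how many entries were applied.
    std::uint64_t commitUpTo(std::uint64_t leader_commit_index)
    {
        const std::uint64_t target = std::min<std::uint64_t>(leader_commit_index, log.size());
        std::uint64_t applied = 0;
        while (sm_commit_index < target)
        {
            state_machine.push_back(log[sm_commit_index]); // applies index sm_commit_index + 1
            ++sm_commit_index;
            ++applied;
        }
        assert(sm_commit_index <= log.size());
        return applied;
    }
};
```

Calling commitUpTo again with the same leader_commit_index applies nothing, so the check is safe to repeat.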

Analysis of Internal Code Flow

In general, NuRaft provides a programming framework, and the application needs to implement the classes marked in red in the class diagram.

LogStore and Snapshot

LogStore persists the logs and inherits from nuraft::log_store. Its most important interfaces are listed below:

- Write: sequential write KeeperLogStore::append(entry), overwrite (truncating write) KeeperLogStore::write_at(index, entry), and batch write KeeperLogStore::apply_pack(index, pack).

- Read: last_entry(), entry_at(index), and others.

- Log compaction: KeeperLogStore::compact(last_log_index), which is mainly called after a snapshot. When KeeperStateMachine::create_snapshot(last_log_idx) is called and the snapshot has been fully serialized to disk, log_store_->compact(compact_upto) is called, where compact_upto = new_snp->get_last_log_idx() - params->reserved_log_items_. This is a small pitfall: compact_upto is not the latest index covered by the snapshot, because some entries are reserved. The corresponding configuration item is reserved_log_items.

ChangeLog is the pimpl of LogStore and provides all the LogStore (nuraft::log_store) interfaces. ChangeLog mainly consists of current_writer (the log file writer) and logs (a std::unordered_map).

- Each time a log entry is inserted, it is serialized into the file buffer and also inserted into the in-memory logs. As a result, the memory usage of logs keeps growing until a snapshot is taken.

- After a snapshot completes, the entries up to compact_upto, which are now covered by the snapshot on disk, are erased from the in-memory logs. Therefore, two configuration items must be balanced: snapshot_distance and reserved_log_items. Currently, both default to 100,000, which tends to consume a large amount of memory. The recommended values are 10,000 and 5,000, respectively.
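The interaction between snapshot_distance and reserved_log_items can be sketched with a small in-memory model. ChangeLogSketch is a hypothetical class, and it assumes that a snapshot is taken as soon as snapshot_distance entries have accumulated since the previous one, which simplifies the real trigger.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <string>
#include <unordered_map>
#include <utility>

// Hypothetical in-memory model of the logs map and its compaction.
class ChangeLogSketch
{
public:
    ChangeLogSketch(std::uint64_t snapshot_distance_, std::uint64_t reserved_log_items_)
        : snapshot_distance(snapshot_distance_), reserved_log_items(reserved_log_items_)
    {
        assert(snapshot_distance > 0);
    }

    // Every appended entry stays in memory until a snapshot compacts it.
    void append(std::string entry)
    {
        logs[++last_index] = std::move(entry);
        if (last_index - last_snapshot_index >= snapshot_distance)
            snapshotAndCompact();
    }

    std::size_t entriesInMemory() const { return logs.size(); }

private:
    // After a snapshot, drop entries up to compact_upto but keep the
    // newest reserved_log_items entries in memory.
    void snapshotAndCompact()
    {
        last_snapshot_index = last_index;
        if (last_index <= reserved_log_items)
            return; // nothing old enough to drop yet
        const std::uint64_t compact_upto = last_index - reserved_log_items;
        for (std::uint64_t index = first_index; index <= compact_upto; ++index)
            logs.erase(index);
        first_index = compact_upto + 1;
    }

    const std::uint64_t snapshot_distance;
    const std::uint64_t reserved_log_items;
    std::uint64_t first_index = 1;
    std::uint64_t last_index = 0;
    std::uint64_t last_snapshot_index = 0;
    std::unordered_map<std::uint64_t, std::string> logs;
};
```

In this model the number of entries held in memory peaks just before each snapshot and falls back to reserved_log_items right after it, so both settings bound the memory used by logs.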

KeeperSnapshotManager provides a set of interfaces for serialization and deserialization:

- KeeperStorageSnapshot mainly serializes and deserializes between KeeperStorage and a file buffer.

- During initialization, KeeperSnapshotManager deserializes the snapshot file directly to restore the KeeperStorage data structure as of the index indicated by the file name. (For example, snapshot_200000.bin corresponds to index 200000.)

- When KeeperStateMachine::create_snapshot is executed, KeeperSnapshotManager serializes the KeeperStorage data structure to disk based on the provided snapshot metadata (such as index and term).

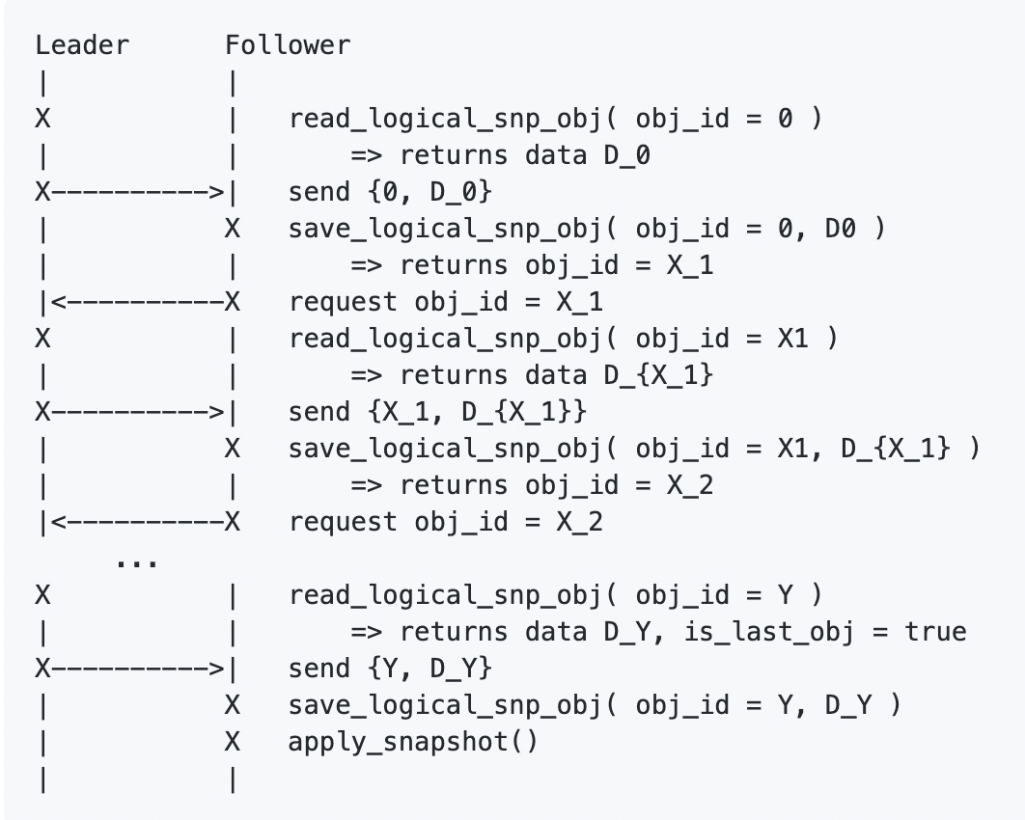

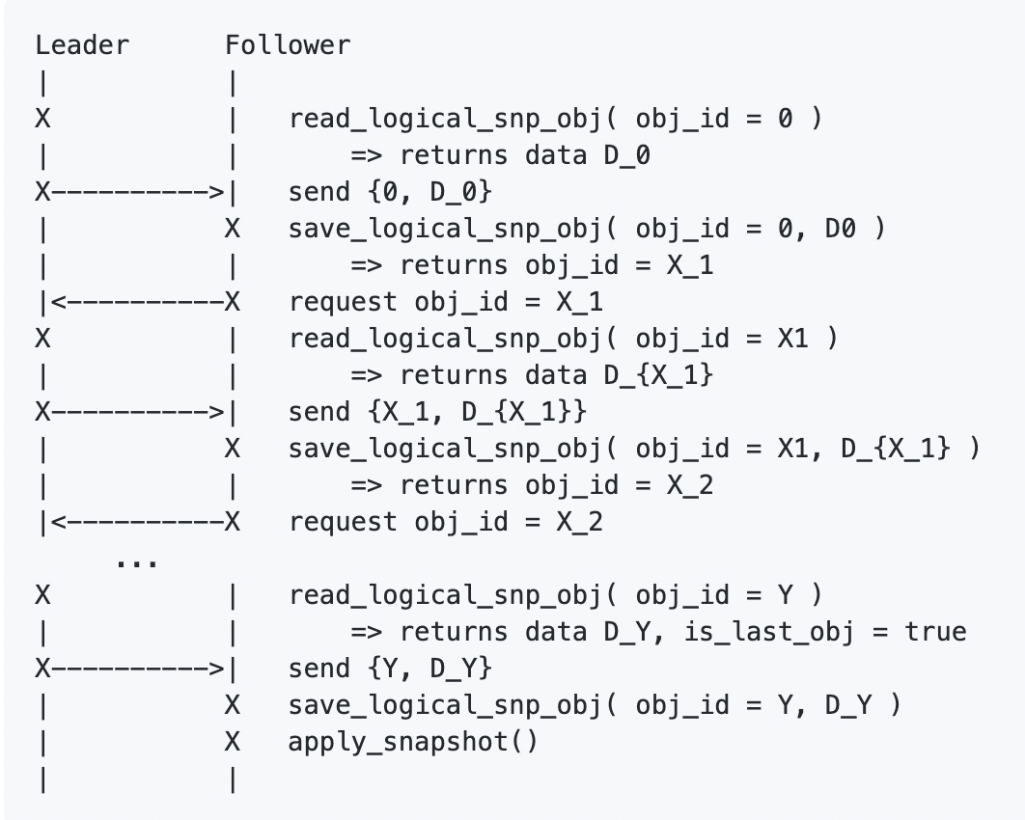

- The NuRaft library supports snapshot transmission. When a follower node is newly added, or when a follower's log lags far behind (beyond the latest log compaction upto_index), the leader actively initiates the InstallSnapshot process, as shown in the following figure:

The NuRaft library provides several interfaces for the InstallSnapshot process, and KeeperStateMachine implements them in a simple way:

- read_logical_snp_obj(...): The leader directly sends latest_snapshot_buf, the latest snapshot held in memory.

- save_logical_snp_obj(...): The follower receives the snapshot, serializes it to disk, and updates its own latest_snapshot_buf.

- apply_snapshot(...): The latest version of storage is rebuilt from latest_snapshot_buf.
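The three callbacks can be modeled as a buffer hand-off between two state machines. The sketch below is illustrative only: StateMachineSketch and its method names are hypothetical, the tab-separated text format is an assumption made for brevity (paths and data must not contain tabs or newlines), and the disk write on the follower is omitted.

```cpp
#include <cassert>
#include <map>
#include <sstream>
#include <string>

// Hypothetical state machine that ships snapshots as an in-memory buffer.
class StateMachineSketch
{
public:
    std::map<std::string, std::string> storage;

    // Leader side after a snapshot is created: keep the serialized form in memory.
    void createSnapshot()
    {
        std::ostringstream out;
        for (const auto & path_and_data : storage)
            out << path_and_data.first << '\t' << path_and_data.second << '\n';
        latest_snapshot_buf = out.str();
    }

    // Leader side of InstallSnapshot: hand out the in-memory buffer as-is.
    const std::string & readLogicalSnpObj() const { return latest_snapshot_buf; }

    // Follower side: keep the received bytes as the latest snapshot.
    void saveLogicalSnpObj(const std::string & received) { latest_snapshot_buf = received; }

    // Follower side: rebuild storage from the latest snapshot buffer.
    void applySnapshot()
    {
        storage.clear();
        std::istringstream in(latest_snapshot_buf);
        std::string line;
        while (std::getline(in, line))
        {
            const std::string::size_type tab = line.find('\t');
            assert(tab != std::string::npos);
            storage[line.substr(0, tab)] = line.substr(tab + 1);
        }
    }

private:
    std::string latest_snapshot_buf;
};
```

Because the leader always sends its most recent buffer, a follower that applies it ends up with exactly the storage state captured by that snapshot.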

KeeperStorage

This class emulates the functionality of ZooKeeper.

The core data structure is the Znode storage:

- using Container = SnapshotableHashTable:

std::unordered_map and std::list are combined to implement a lock-free data structure. The key is the ZooKeeper path, and the value is the ZooKeeper Znode (including the stat metadata of the Znode). Node is defined below:

```cpp
struct Node
{
    String data;
    uint64_t acl_id = 0; /// 0 -- no ACL by default
    bool is_sequental = false;
    Coordination::Stat stat{};
    int32_t seq_num = 0;
    ChildrenSet children{};
};
```

- The map in SnapshotableHashTable always holds the latest data for reads. The list keeps both the old and the new version of an entry, so newly inserted data does not affect the data that a running snapshot is writing out. The implementation is very simple. Please see this link for more information.

- KeeperStorage also provides other data structures, such as ephemerals, sessions_and_watchers, session_and_timeout, acl_map, and watches. Their implementations are straightforward, so this article does not cover them one by one.
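The map-plus-list idea behind SnapshotableHashTable can be sketched as follows. This is a reduced illustration under stated assumptions, not the ClickHouse class: SnapshotableTableSketch and firstValues are hypothetical names, erase is omitted, and the sketch is single-threaded.

```cpp
#include <cassert>
#include <cstddef>
#include <iterator>
#include <list>
#include <string>
#include <unordered_map>
#include <utility>
#include <vector>

// Hypothetical table: the map points at the newest list node for each key.
template <typename V>
class SnapshotableTableSketch
{
    struct ListNode { std::string key; V value; bool active_in_map; };
    using List = std::list<ListNode>;

public:
    void insertOrReplace(const std::string & key, V value)
    {
        auto it = map.find(key);
        if (it == map.end())
        {
            list.push_back(ListNode{key, std::move(value), true});
            map[key] = std::prev(list.end());
        }
        else if (snapshot_mode)
        {
            // Keep the old version for the running snapshot; append the new one.
            it->second->active_in_map = false;
            list.push_back(ListNode{key, std::move(value), true});
            it->second = std::prev(list.end());
        }
        else
        {
            it->second->value = std::move(value);
        }
        assert(map.size() <= list.size());
    }

    // Reads always go through the map and therefore see the newest value.
    const V * find(const std::string & key) const
    {
        auto it = map.find(key);
        return it == map.end() ? nullptr : &it->second->value;
    }

    // Returns how many list nodes the snapshot has to serialize.
    std::size_t enableSnapshotMode() { snapshot_mode = true; return list.size(); }

    // Leaves snapshot mode and drops versions that the map no longer points at.
    void disableSnapshotMode()
    {
        snapshot_mode = false;
        list.remove_if([](const ListNode & node) { return !node.active_in_map; });
    }

    // The first `count` list values, i.e. the frozen view a snapshot would write.
    std::vector<V> firstValues(std::size_t count) const
    {
        std::vector<V> values;
        for (auto it = list.begin(); it != list.end() && values.size() < count; ++it)
            values.push_back(it->value);
        return values;
    }

    std::size_t listSize() const { return list.size(); }

private:
    List list;
    std::unordered_map<std::string, typename List::iterator> map;
    bool snapshot_mode = false;
};
```

While snapshot mode is on, an update never overwrites a node the snapshot may still read. It appends a new node instead, and the stale one is removed once the snapshot finishes.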

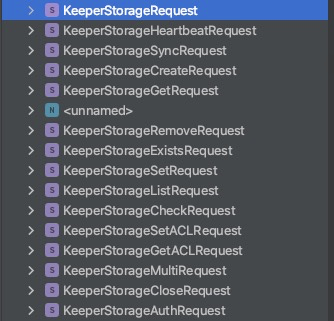

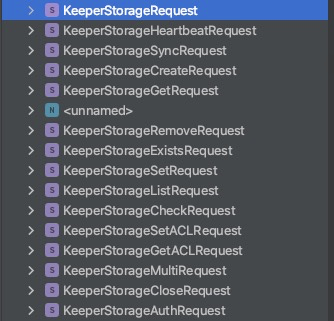

- All requests derive from the KeeperStorageRequest parent class, including all the subclasses in the following figure. Each request implements a pure virtual function that operates on the in-memory data structures of KeeperStorage.

virtual std::pair<Coordination::ZooKeeperResponsePtr, Undo> process(KeeperStorage & storage, int64_t zxid, int64_t session_id) const = 0;
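To show how a process function that returns a response together with an Undo value might be used, here is a minimal sketch. All type names ending in Sketch are hypothetical, Undo is assumed to be a callable that reverts the change, and rolling back a failed multi request is one plausible use of it rather than a description of the actual code.

```cpp
#include <cassert>
#include <cstdint>
#include <functional>
#include <map>
#include <string>
#include <utility>
#include <vector>

struct StorageSketch { std::map<std::string, std::string> container; };
struct ResponseSketch { bool ok; };
using Undo = std::function<void()>; // assumed shape: a callable that reverts one change

struct StorageRequestSketch
{
    virtual ~StorageRequestSketch() = default;
    virtual std::pair<ResponseSketch, Undo> process(
        StorageSketch & storage, std::int64_t zxid, std::int64_t session_id) const = 0;
};

// Hypothetical create request: fails if the path already exists.
struct CreateRequestSketch : StorageRequestSketch
{
    std::string path;
    std::string data;

    CreateRequestSketch(std::string path_, std::string data_)
        : path(std::move(path_)), data(std::move(data_)) {}

    std::pair<ResponseSketch, Undo> process(
        StorageSketch & storage, std::int64_t, std::int64_t) const override
    {
        if (storage.container.count(path) != 0)
            return std::make_pair(ResponseSketch{false}, Undo());
        storage.container[path] = data;
        const std::string created_path = path;
        Undo undo = [&storage, created_path]() { storage.container.erase(created_path); };
        return std::make_pair(ResponseSketch{true}, undo);
    }
};

// Applies requests in order; on the first failure, runs the collected
// undo actions in reverse so the storage returns to its previous state.
inline bool processMulti(StorageSketch & storage,
                         const std::vector<const StorageRequestSketch *> & requests,
                         std::int64_t zxid, std::int64_t session_id)
{
    std::vector<Undo> undo_actions;
    for (const StorageRequestSketch * request : requests)
    {
        assert(request != nullptr);
        std::pair<ResponseSketch, Undo> result = request->process(storage, zxid, session_id);
        if (!result.first.ok)
        {
            for (auto it = undo_actions.rbegin(); it != undo_actions.rend(); ++it)
                (*it)();
            return false;
        }
        undo_actions.push_back(std::move(result.second));
    }
    return true;
}
```

Returning the undo action from process keeps each request type responsible for reverting its own change, so the caller does not need to know what was modified.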

Troubleshooting of NuRaft Key Configuration

- The operating system on the Alibaba Cloud EMR ECS machines is relatively old and its IPv6 support is poor, so the server could not start. The workaround was to change the hard-coded TCP port binding in the NuRaft library to IPv4. Newer versions have resolved this problem.

- Over five rounds of ZooKeeper stress tests, memory usage kept rising, which looked like a memory leak. The conclusion is that it is not a memory leak: users need to adjust parameters so that the in-memory logs data structure does not occupy too much memory.

- In each round, five million Znodes are created, each with a data size of 256, and then all five million are deleted. Specifically, 5,000 requests are issued in each round, and each request creates 1,000 Znodes in one transaction using the multi mode of the ZooKeeper Client. After five million Znodes exist, another 5,000 requests are issued, each deleting 1,000 Znodes, so that all Znodes are deleted by the end of the round. Therefore, 10,000 logEntry entries are inserted in each round.

- Memory usage rises in each round. After five rounds, it exceeds 20 GB, which raised the suspicion of a memory leak.

- After profiling code was added to print showStatus, it became clear that the ChangeLog::logs data structure keeps growing in each round, while the KeeperStorage::Container data structure changes periodically with the number of Znodes and eventually returns to zero. The conclusion is that, because snapshot_distance defaults to 100,000, the 50,000 log entries written over five rounds never trigger create_snapshot, so the logs are never compacted and the memory usage of ChangeLog::logs keeps increasing. Therefore, the recommended values of snapshot_distance and reserved_log_items are 10,000 and 5,000, respectively.

- The auto_forwarding configuration lets a follower forward requests to the leader, which is transparent to the ZooKeeper Client. However, NuRaft does not recommend this configuration, and future versions will improve this practice.
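The arithmetic behind the stress-test conclusion above can be checked with a few helper functions. The names are hypothetical, and the snapshot count assumes one snapshot per snapshot_distance log entries, which simplifies the real trigger.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical description of one stress-test round.
struct StressRound
{
    std::uint64_t create_requests;
    std::uint64_t delete_requests;
    std::uint64_t znodes_per_request;
};

// Each multi request becomes one log entry, whether it creates or deletes.
constexpr std::uint64_t logEntriesPerRound(const StressRound & r)
{
    return r.create_requests + r.delete_requests;
}

// Number of Znodes that exist at the peak of a round.
constexpr std::uint64_t znodesCreatedPerRound(const StressRound & r)
{
    return r.create_requests * r.znodes_per_request;
}

// Assumed trigger: one snapshot per snapshot_distance log entries.
inline std::uint64_t snapshotsTriggered(std::uint64_t total_log_entries,
                                        std::uint64_t snapshot_distance)
{
    assert(snapshot_distance > 0);
    return total_log_entries / snapshot_distance;
}
```

With 5,000 create requests and 5,000 delete requests per round, five rounds produce 50,000 log entries. That yields no snapshot at a snapshot_distance of 100,000 and five snapshots at 10,000.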

Summary

- Removing the ZooKeeper dependency means ClickHouse no longer depends on external components. This is a big step forward in stability and performance and a prerequisite for gradually moving toward cloud-native.

- In the future, a Raft-based MetaServer will gradually be derived from this codebase, laying the groundwork for the separation of storage and compute and for an MPP architecture that supports distributed joins.

References

[1] https://github.com/eBay/NuRaft

[2] https://xzhu0027.gitbook.io/blog/misc/index/consistency-models-in-distributed-system

[3] https://zhuanlan.zhihu.com/p/425072031
