By Janmee

The "phantom resurgence" problem is essentially the "third state" problem of the distributed system. In the network system, three types of results are returned for a request: success, failure, and timeout unknown. If "timeout unknown" is returned, the processing result for the request from the server can be a success or a failure. Here, the point is that the result must be one of the two and must be constant.

As we know, there are many distributed consistency replication protocols in the industry, such as Paxos, Raft, Zab, and Paxos variants. These protocols are widely used to implement highly available (HA) data consistency. A Paxos group usually consists of three or five mutually redundant nodes. It allows the Paxos group to provide services even when downtime occurs on a small number of nodes, ensuring data consistency. As an optimization method, a protocol chooses one of its nodes as the leader to raise proposals. Under normal circumstances, the existence of the leader prevents the interference of parallel proposals. This greatly improves proposal processing efficiency.

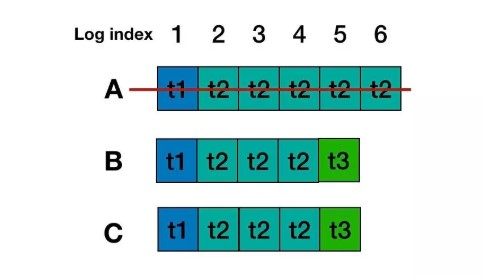

With some extreme exceptions, such as network isolation or machine failure, the leader may undergo switchover and data recovery multiple times. Using the Paxos protocol to back up and restore logs can ensure that logs which formed the majority are not lost, but cannot prevent a phenomenon that is referred to as "phantom resurgence". Consider the following situation:

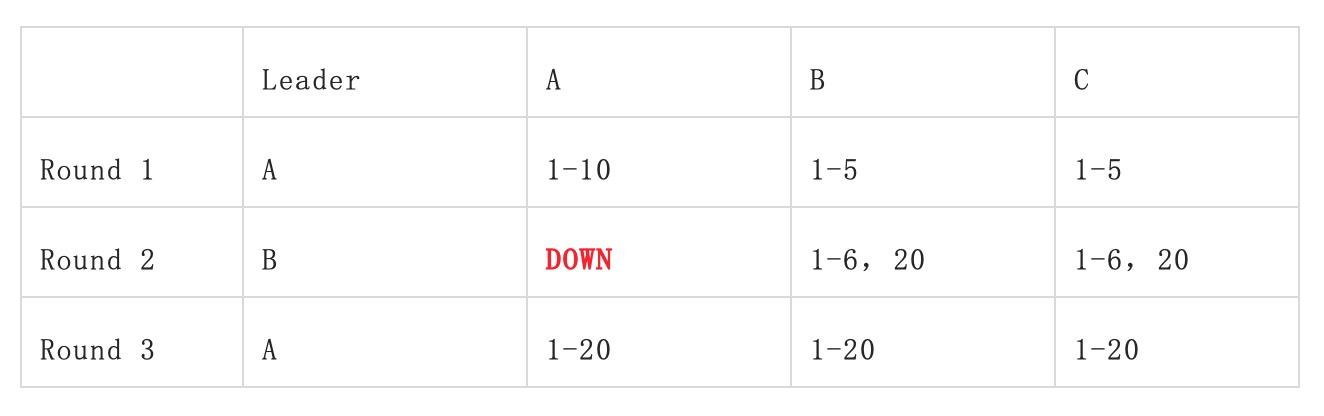

As shown in the preceding table, in the first round, A is specified as the leader. A sends logs 1 to 10 but the subsequent logs 6 to 10 do not form the majority. As a result, A breaks down. In the second round, B is specified as the leader. B continues to send logs 6 to 20 (B does not see logs 6 to 10 because they were not synchronized in the first round.) This time, logs 6 and 20 form the majority. After another random switchover, A resumes as the leader and finds that the greatest logID in the majority is 20. So, A tries to fill the gap resulted from the synchronization of logs. This time, it is very likely to start from log 6 and keep verifying until log 20. Let's see what happens next:

In the preceding four cases of situation analysis, Cases 1, 3, and 4 do not cause problems but the problem occurs in Case 2. Logs 7 to 10 that do not exist in the second round reappear in the third round. According to OceanBase, this problem is not acceptable in the scenarios of database log synchronization. A simple example is the transaction scenario. If the response times out during the transfer process, you need to check whether the transaction is successful or not and decide whether to retry the transaction. When you query the transaction result for the first time, the transaction is found to be unsuccessful. Then you retry it, and the transaction log reappears as a "phantom resurgence" log, leading to the repetition of the transaction.

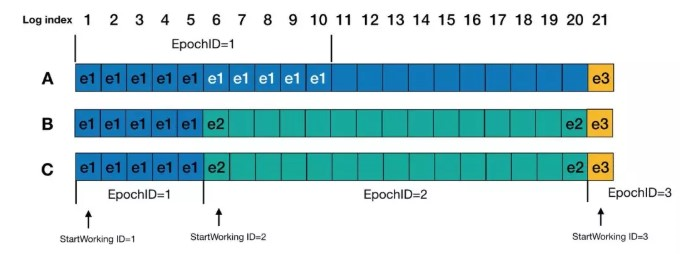

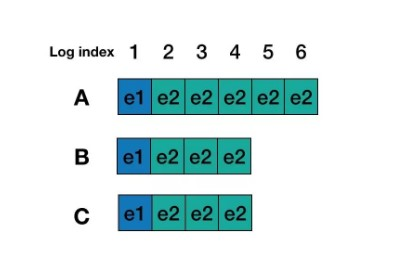

To solve the "phantom resurgence" problem, the consistency system based on Multi-Paxos can create and store an epochID for each log and specify the proposer to use the current proposalID as the epochID when the proposer generates this log. When the logs are played back in the order of logID, the leader always writes a StartWorking log before starting the service. If the epochID is smaller than that of the previous log, it indicates that this is a "phantom resurgence" log and can be ignored. In my opinion, the order here is first filling in the gap, then writing StartWorkingID, and then starting the service.

To further explain with the preceding example, in the third round, when A starts working as the leader, it needs to play back the logs to reconfirm them. For logs 1 to 5, epochID is 1. For logs whose epochID is 2, log 6 is the StartWorking log. For logs 7 to 10, their epochID is 1, which is smaller than the epochID of the last log. As a result, logs 7 to 10 will be ignored. For logs 11 to 19, their epochID remains the same with the StartWorkingID in the previous round, in which A was the leader. Meanwhile, the proposeID remains 3. Alternatively, these logs are noop logs and therefore are excluded from the epochID comparison. Then, log 20 is reconfirmed as well. Finally, after the StartWorking log is written to log 21 and is approved by the majority, A starts to receive requests as the leader.

First, let's talk about log recovery in Raft. In Raft, the leader of each round must contain data that is already committed. According to the drawer principle, the leader of each round contains the latest data among the majority, and it must contain data that is already committed on most nodes. With new leaders, they will overwrite inconsistent data from other nodes. Although the new leader must include the log entries that are already committed by the leader of the previous term, it may also include other log entries, which were not committed by the leader in the previous term. It is relatively complicated to convert these log entries to the committed state. We need to consider the fact that the leader was switched multiple times and the log recovery was not completed. We need to ensure that the final proposal is consistent and certain, otherwise, the phantom resurgence problem will occur.

As a result, a constraint is added to Raft. For the uncommitted data in the previous term, the data is restored to most nodes. Only when at least one new log entry in the new term is replicated or restored to the majority of nodes, can we deem that the log entries that were not committed are now committed. To commit the log entries that were not committed in the last term, Raft offers the following solution:

The Raft algorithm requires that a special internal log of noop be appended once the leader is elected, and be synchronized to other nodes immediately. This implements the implicit commitment of all the previously uncommitted logs. This solution ensures two things:

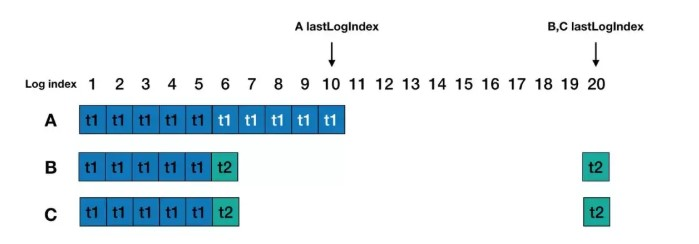

According to the scenario in section 1, in Raft, A cannot be elected as the leader in the third round. For the election, the lastLogTerm and lastLogIndex of the last log are compared among candidates. lastLogTerm(t2) and lastLogIndex(20) of B and C are greater than lastLogTerm(t1) and lastLogIndex(10) of A. Therefore, only B or C can be the leader. If C becomes the leader, it will restore the replica during runtime. For A, from log 6, C copies log entries of its own logs 6 to 10 and replicates them to A. Therefore, the original logs 6 to 10 in A are deleted and replaced by logs from C to keep consistency. Then, C sends the log entry of noop to its followers. If it is accepted by the majority, C starts to work normally. Therefore, logs 7 to 10 are empty and do not return any result to queries.

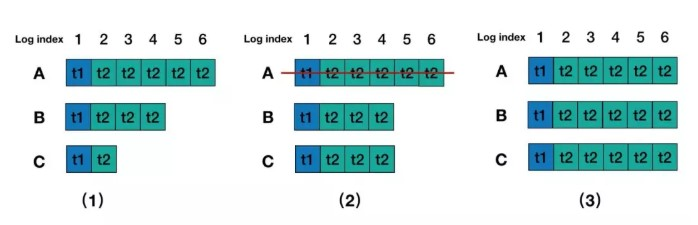

There is another more general "phantom resurgence" scenario. Consider the following log scenarios:

Round 1: A is the leader, log entries in log 5 and log 6 are not yet committed, and A breaks down. Logs 5 and 6 cannot return any value to queries.

Round 2: B is the leader, log entries in log 3 and log 4 are replicated to C, and no additional entries are written when B is the leader.

Round 3: A is restored and B and C are restarted, A is the leader again, and log entries in log 5 and log 6 are replicated to B and C. In this case, queries on log 5 and log 6 return results successfully.

When a new leader is elected in Raft, add a log entry of the current term to solve the "phantom resurgence" problem. This is a similar solution as adding a StartWorking log in Multi-Paxos. After B becomes the leader, it will add a noop log entry of term 3. This solves the two problems mentioned above.

Before the noop log of term 3 is committed, the log entries of log 3 and log 4 of B are replicated to C. This implements the principle of maximum commitment and ensures that no committed log entry is lost. Even if A restores from downtime, A cannot become the leader because the lastLogTerm of A is smaller than that of B and C. Therefore, the unfinished commitment in A is dropped, and subsequent read requests cannot read the entries of log 5 and log 6.

The work process of Zab includes 2 phases, atomic broadcast, and crash recovery. The work process of an atomic broadcast is similar to the process of submitting a transaction in Raft. Crash recovery can be further divided into two phases: leader election and data synchronization.

In the early Zab protocol, elected leaders meet the following conditions:

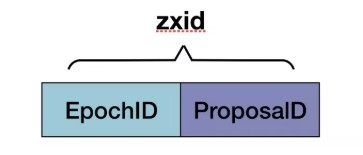

A zxID is a 64-bit number, and the higher 32 bits of it consist of the epoch number. Each time a new leader is elected, the epoch number is incremented by 1. The lower 32 bits of zxID is the message counter whose value is incremented by 1 each time a message is received. The message counter is reset to 0 after the new leader is elected. The advantage of this design is that the old leader will not be elected as a leader after it fails, so its zxID is smaller than the current new leader. When the old leader is connected to the new leader as a follower, the new leader allows it to clear all uncommitted proposals with old epoch numbers.

The log recovery phase starts after a leader is elected. The leader decides what data to send to each follower based on the zxID from that follower, so data can even out among the followers for the maximum commitment principle. All committed data is replicated to the followers. After data evens out among all followers, each follower sends ACK to the leader. After receiving ACK from more than half of the followers, the leader starts to work, and the entire Zab protocol enters the atomic broadcast phase.

As for the scenarios described in section 1, according to the election mechanism of Zab, after each election, the epoch number increments by 1 and becomes the highest 32 bits of zxID in the next round. If the epochID of the first round is 1, the epochID of the second round is 2. Every zxID generated based on this epoch must be greater than any zxID of A. Therefore, in the third round, because the zxID of either B or C is greater than the zxID of A, A will not be elected as the leader. After A is added as a follower, data on it is overwritten with the data from the new leader. In this way, Zab prevents Case 1 from occurring.

For the scenario described in section 3.2, no transaction is generated after B is elected as the leader in the second round. In the election of the third round, the zxID of the last log entry of A is greater than that of B and C because the latest log of A, B, and C has not changed, so A will be elected as the leader. After A replicates data to B and C, the "phantom resurgence" problem will occur.

To solve the "phantom resurgence" problem, in the latest Zab protocol, after each leader election is completed, a local file is saved to record the current epochID (as CurrentEpoch). In the election, CurrentEpoch is read first and added to the vote, and then sent to other candidates. If the received CurrentEpoch is smaller than that of the candidate, the vote is ignored. If the received CurrentEpoch is greater than that of the candidate, the vote is accepted. If the received CurrentEpoch is equal to that of the candidate, zxID will be compared. Therefore, for this problem, in the first round, the CurrentEpoch of A, B, and C is 2. In the second round, the CurrentEpoch of A is 2, and that of B and C is 3. In the third round, the CurrentEpoch of B and C is greater than that of A, so A cannot become the leader.

The approach of Apsara Name Service and Distributed Lock Synchronization System of Alibaba Cloud is similar to that of Raft and Zab. It ensures that roles that produce the "phantom resurgence" problem cannot be elected as leaders in a new round, preventing phantom logs from reappearing. If we look at the "phantom resurgence" problem from the perspective of the server, the new leader does not know the current committed index, and it cannot tell whether the log entry is committed or not. Therefore, we need to use certain log recovery measures to ensure that submitted logs are not lost (the maximum commitment principle) and that a dividing line (such as StartWorking for Multi-Paxos, noop for Raft, or CurrentEpoch for Zab) is available to determine whether logs are committed or dropped, to avoid ambiguity. The "phantom resurgence" problem is essentially the "third state" problem of the distributed system. In the network system, three types of results are returned for a request: success, failure, and timeout unknown. If "timeout unknown" is returned, the processing result for the request from the server can be a success or failure. However, the result must be one of the two and must be constant. On the client, if the request times out, the client does not know what the current underlying situation is and whether it is a success or a failure. In this case, the client often retries. Accordingly, business logic applied in the underlying layer must be idempotent, otherwise, the retries can cause data inconsistency.

Alibaba Clouder - February 3, 2019

Alibaba Cloud_Academy - September 16, 2022

Alibaba Cloud Community - October 17, 2022

Alibaba Cloud Native Community - March 21, 2024

Alibaba Cloud_Academy - September 9, 2022

Alibaba Clouder - February 5, 2019

ApsaraDB for OceanBase

ApsaraDB for OceanBase

A financial-grade distributed relational database that features high stability, high scalability, and high performance.

Learn More LedgerDB

LedgerDB

A ledger database that provides powerful data audit capabilities.

Learn More PolarDB for Xscale

PolarDB for Xscale

Alibaba Cloud PolarDB for Xscale (PolarDB-X) is a cloud-native high-performance distributed database service independently developed by Alibaba Cloud.

Learn More ChatAPP

ChatAPP

Reach global users more accurately and efficiently via IM Channel

Learn More