Data distribution has long been a problem in Big Data processing, and the Big Data processing systems that are popular today still do not solve it satisfactorily. In the all-new optimizer for MaxCompute 2.0, we introduced complex data distribution, along with new optimization measures such as partition pruning, distribution pull-up and push-down, and distribution alignment. This article begins with the principles and history of data distribution, then explains our thinking and solutions on the matter.

For many people, data distribution brings MPP DBMS to mind. In fact, it is often said that one only needs to think about data distribution when using an MPP DBMS. First, let's take a look at how databases are categorized:

Obviously, when deploying a Shared Nothing database, you need to consider data distribution carefully. Unlike a Shared Disk system, this kind of database does not place data into unified storage, so you first need to decide how to distribute data across the physical nodes in a way that reduces the cost of future operations. For example, in Greenplum, one defines a partition key (the distribution key, in Greenplum's own terms) when creating a table, after which the system distributes rows by hashing that key. If the two tables in a Join operation are both partitioned on the join key, then the Join operation will not require network IO. If one of the tables uses a different set of partition keys, then a re-partitioning operation may be necessary.
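The co-located join described above can be illustrated with a small Python sketch. It is not Greenplum code: `NUM_NODES`, `node_for`, `distribute`, and `colocated_join` are hypothetical names, and CRC32 merely stands in for whatever hash function a real system uses. The point is only that two tables hashed on the join key with the same function can be joined node by node.

```python
import zlib
from collections import defaultdict

NUM_NODES = 4  # hypothetical cluster size


def node_for(key, num_nodes=NUM_NODES):
    """Deterministically map a distribution key to a node id."""
    return zlib.crc32(str(key).encode()) % num_nodes


def distribute(rows, key_index, num_nodes=NUM_NODES):
    """Place each row on the node chosen by hashing its key column."""
    nodes = defaultdict(list)
    for row in rows:
        nodes[node_for(row[key_index], num_nodes)].append(row)
    return nodes


def colocated_join(left_nodes, right_nodes, left_key, right_key):
    """Join node by node: matching keys always live on the same node,
    so no row has to cross a node boundary."""
    result = []
    for node, left_rows in left_nodes.items():
        for left_row in left_rows:
            for right_row in right_nodes.get(node, []):
                if left_row[left_key] == right_row[right_key]:
                    result.append(left_row + right_row)
    return result


users = [(1, "ann"), (2, "bob"), (3, "cid")]
lines = [(1, "a"), (1, "b"), (3, "c")]

# Both tables are distributed on uid (column 0) with the same hash function.
joined = colocated_join(distribute(users, 0), distribute(lines, 0), 0, 0)
```

If `lines` were distributed on a different column, matching rows could sit on different nodes, and one side would first have to be re-partitioned on `uid`.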

This is precisely why we need to understand the principle behind data distribution, as it can be critical to application and system optimization. There is a significant amount of information available concerning data distribution on MPP DBMS. But why don't these kinds of optimizations exist for data processing systems like Hadoop? Simply put, it is because such systems need stronger expandability (and support for unstructured data, but we won't go into that here).

The difference is that MPP and Hadoop don’t place data and computing on the same node. Even if we were to do so, it would limit system expandability, and dynamic expandability would suffer in particular. Consider a running Greenplum cluster of 50 nodes: it would be nearly impossible to quickly add, say, two new nodes and still maintain efficient operation. Hadoop is very good at this. Its main solution is:

This is why, when you create a table in Hive, you don't need to define a partition key like in Greenplum. Also, this explains why Join operations are less efficient in Hive than they are in Greenplum.

As described above, distributed Big Data systems often trend toward random distribution in storage, gaining expandability at the cost of performance. However, on re-examining this trade-off, using random distribution in storage doesn't mean that queries can't take advantage of data distribution optimization. The goal of distribution optimization is to make use of existing distribution and satisfy future requirements to the greatest extent possible. This kind of optimization includes:

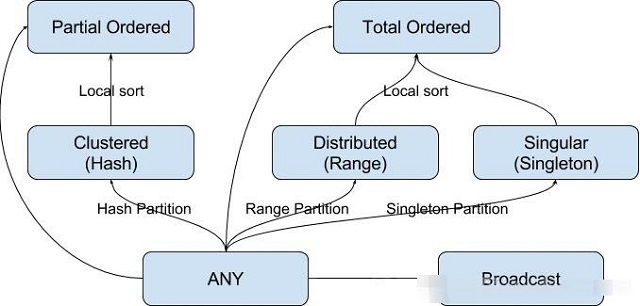

The following are examples of data distribution types and their meanings:

| Type | Meaning | Required variable | Optional variable | Example |
|---|---|---|---|---|
| ANY | Any distribution | - | - | ANY |
| HASH | Hash distribution | Keys | numBuckets | HASH(c1)[100] |
| RANGE | Range distribution | Keys | Boundaries | RNG(c1){(100, 200], (200, 300]} |
| BROADCAST | Broadcast distribution | - | - | BROADCAST |
| SINGLETON | Single node distribution | - | - | SINGLETON |
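To make the table concrete, here is one way these distribution types could be modeled in Python. This is only a sketch: the `Distribution` class and its field names are assumptions made for illustration, not MaxCompute's internal representation. Its `__str__` method reproduces the notation used in the Example column.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Distribution:
    """Hypothetical model of a distribution property."""
    kind: str                                        # ANY, HASH, RANGE, BROADCAST, SINGLETON
    keys: Tuple[str, ...] = ()                       # required for HASH and RANGE
    num_buckets: Optional[int] = None                # optional, HASH only
    boundaries: Tuple[Tuple[int, int], ...] = ()     # optional, RANGE only

    def __str__(self):
        cols = ", ".join(self.keys)
        if self.kind == "HASH":
            suffix = f"[{self.num_buckets}]" if self.num_buckets is not None else ""
            return f"HASH({cols}){suffix}"
        if self.kind == "RANGE":
            ranges = ", ".join(f"({lo}, {hi}]" for lo, hi in self.boundaries)
            return f"RNG({cols}){{{ranges}}}"
        return self.kind


hash_dist = Distribution("HASH", ("c1",), num_buckets=100)
range_dist = Distribution("RANGE", ("c1",), boundaries=((100, 200), (200, 300)))
```

Making the class frozen keeps instances hashable and comparable by value, which is convenient when an optimizer needs to check whether two properties are the same.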

Following the conventions of the Volcano optimizer, we can express data distribution as a kind of physical property called distribution. Like other physical properties, it comes in "required property" and "delivered property" pairs. For example, a sorted merge join imposes a Partial Ordered required property on all of its inputs. At the same time, it delivers a Partial Ordered property, which gives subsequent operations a chance to use this property and avoid a round of redistribution.

Consider the query below:

```sql
SELECT uid, count(*) FROM (
  SELECT user.uid FROM user JOIN line ON user.uid = line.uid
) t GROUP BY uid;
```

At this point, if the Join is implemented as a Sorted Merge Join, it may deliver a Hash[uid] property. Because Aggregate requires that same property, we can skip an unnecessary round of redistribution.
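The interplay between required and delivered properties in this query can be sketched as follows. The names `Hash`, `satisfies`, and `plan_aggregate` are hypothetical, and the matching rule is deliberately simplified to exact key equality; a real optimizer would accept more cases.

```python
from typing import NamedTuple, Optional, Tuple


class Hash(NamedTuple):
    """Hypothetical hash distribution property."""
    keys: Tuple[str, ...]
    buckets: Optional[int] = None  # None means "any bucket count"


def satisfies(delivered: Optional[Hash], required: Optional[Hash]) -> bool:
    """True if the delivered property already meets the required one."""
    if required is None:          # nothing required (ANY)
        return True
    if delivered is None:         # child gives no guarantee
        return False
    if delivered.keys != required.keys:
        return False
    return required.buckets is None or delivered.buckets == required.buckets


def plan_aggregate(child_delivers: Optional[Hash], group_keys):
    """Add an Exchange only when the child's distribution is not enough."""
    required = Hash(tuple(group_keys))
    cols = ", ".join(group_keys)
    steps = []
    if not satisfies(child_delivers, required):
        steps.append(f"Exchange(HASH({cols}))")
    steps.append(f"Aggregate({cols})")
    return steps


# Sorted Merge Join on uid delivers Hash[uid]: no redistribution needed.
after_merge_join = plan_aggregate(Hash(("uid",)), ["uid"])
# A child with no known distribution forces an Exchange first.
after_unknown_child = plan_aggregate(None, ["uid"])
```

Here `after_merge_join` contains only the aggregate step, while `after_unknown_child` has an exchange in front of it, which is the round of redistribution the optimization avoids.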

If we want to apply a similar optimization, then we need to take into consideration the below issues:

There are three ways to generate data distribution:

Several algorithms require a special data distribution:

Even if we have a series of required and delivered distribution properties, it's still not easy to determine the kind of distribution needed for each operation. Unlike ordering properties, which only cover row sequences and ascending or descending order, distribution properties vary significantly. The reason for this variance is:

This complexity enlarges the space that must be searched for the optimal solution. In fact, finding the optimal solution is an NP-complete problem with respect to the number of relational algebra operators. To reduce the search space, we use a heuristic branch selection algorithm. When compiling a relational algebra operator, we not only need to satisfy the requirements of subsequent operations, we also need to consider how likely prior operations are to produce a satisfactory distribution. To achieve the latter, we use a module called Pulled Up Property.

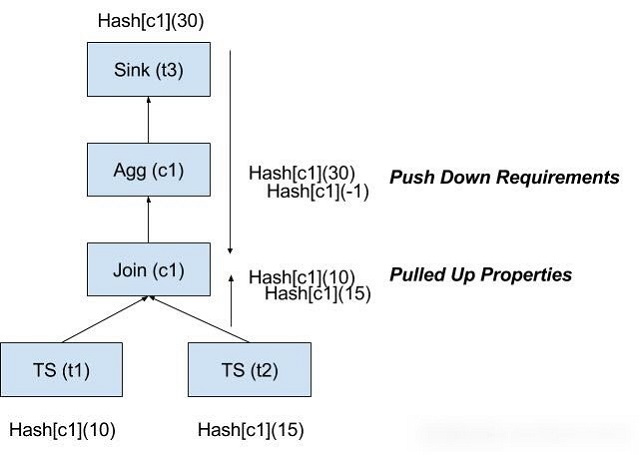

Pulled Up Property guesses and screens the properties that prior operations are likely to deliver, which we use to narrow down the search during compilation. Consider the query in the above image. When compiling Join, because Sink pushes its requirement down, Join needs to provide Hash[c1](30). Pulled Up Property then guesses that prior operations may provide Hash[c1](10) and Hash[c1](15). Because Join will directly require Hash[c1](30), the Hash[c1](10) and Hash[c1](15) branches are pruned.
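One possible reading of this pruning step is sketched below. `HashDist` and `branches_to_explore` are hypothetical names, and the rule shown (a hard requirement from above replaces all guessed candidates) is an assumption drawn from the example in the text rather than the actual MaxCompute heuristic.

```python
from typing import List, NamedTuple, Optional


class HashDist(NamedTuple):
    """Hypothetical hash distribution: key column and bucket count."""
    key: str
    buckets: int


def branches_to_explore(required: Optional[HashDist],
                        pulled_up: List[HashDist]) -> List[HashDist]:
    """Choose which input distributions the Join should try.

    With no requirement from above, every pulled-up guess is a candidate.
    With a hard requirement, only that distribution is explored and the
    guessed alternatives are dropped from the search.
    """
    if required is None:
        return list(pulled_up)
    return [required]


guesses = [HashDist("c1", 10), HashDist("c1", 15)]
sink_requirement = HashDist("c1", 30)

pruned = branches_to_explore(sink_requirement, guesses)
unconstrained = branches_to_explore(None, guesses)
```

In this sketch the search shrinks from two guessed branches to a single one once the requirement from Sink is known.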

Data skew occurs when the majority of the data ends up on a minority of nodes during data distribution. In the worst case, it reduces the entire algorithm to single-machine operation. Under Partitioning occurs when we specify too few nodes during distribution, which means that distributed resources cannot be used efficiently. Naturally, we want to avoid both situations.

To avoid these situations, we need better statistical information. When an optimization plan encounters Data Skew or Under Partitioning, we need to apply an appropriate penalty to its cost estimate, making it less likely to be selected. We define a "good" distribution as one where the amount of data processed by each node falls within a certain pre-defined range. If the amount of data processed by a single node is lower or higher than this range, the distribution should be penalized. Factors that go into estimating this data volume include:
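The penalty rule described above can be sketched in a few lines of Python. The function name `penalized_cost`, the thresholds, and the penalty multiplier of 10 are all assumptions chosen for illustration; the article does not state the actual values or formula.

```python
def penalized_cost(base_cost, rows_per_node, min_rows, max_rows, penalty=10.0):
    """Multiply the plan cost by a penalty if any node's estimated row
    count falls outside the pre-defined [min_rows, max_rows] range."""
    out_of_range = any(rows < min_rows or rows > max_rows
                       for rows in rows_per_node)
    return base_cost * penalty if out_of_range else base_cost


# Evenly spread data stays within range: cost is unchanged.
balanced = penalized_cost(100.0, [900, 1000, 1100], min_rows=500, max_rows=2000)

# Data skew: one node holds almost everything.
skewed = penalized_cost(100.0, [3900, 50, 50], min_rows=500, max_rows=2000)

# Under partitioning: too few nodes, so each one is overloaded.
under_partitioned = penalized_cost(100.0, [3000], min_rows=500, max_rows=2000)
```

Because the penalty is applied to the estimated cost rather than rejecting the plan outright, a skewed or under-partitioned plan can still be chosen when every alternative is worse.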

In this article, we have gone over the significance of data distribution optimization and explained how data distribution is optimized in MaxCompute. These optimizations are already included in the latest release of MaxCompute.

Looking at our tests, the effects of the optimization are clear. After applying appropriate partitioning to TPC-H, we see overall performance increase on the order of 20%. Even if the data in a table is not partitioned, partition optimization, which runs transparently to the user, is very effective. When running in an online environment, 14% of queries were able to skip a round of redistribution because of these optimizations.

