By Xiuji

When implementing and managing message queues, you may face some common challenges including:

This article describes how RocketMQ implements message reliability from three stages of the message transmission process: message sending, message storage, and message consumption.

A basic assumption in distributed systems is that network transmission is unreliable. Under this assumption, reliable message transmission can only be achieved through delivery retry. Commonly used message queues such as RocketMQ and RabbitMQ therefore guarantee that a message is delivered at least once, not exactly once.

Messages may be lost due to the unreliable network of the distributed system. How does RocketMQ prevent message loss as much as possible? The analysis of the entire process from message generation to final consumption could answer this. We can divide the complete message procedure into the following three stages:

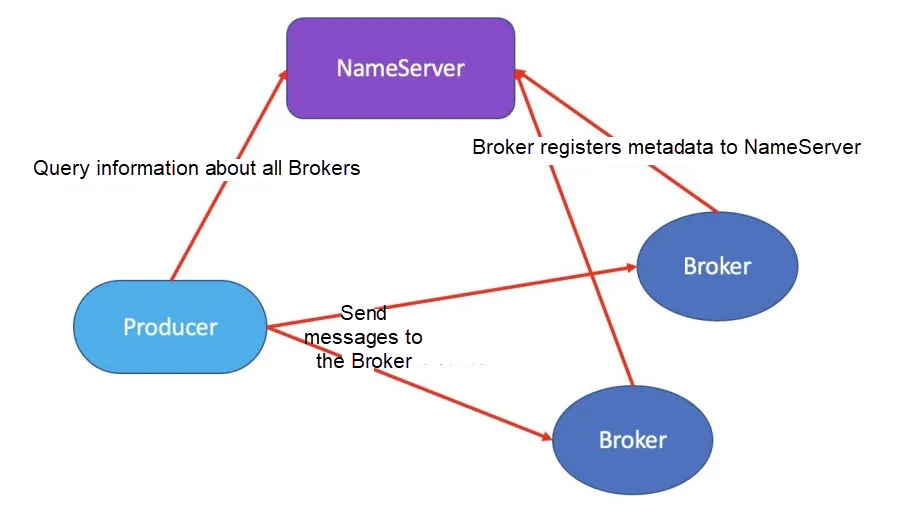

The following figure shows the core logic of message sending from Producer to Broker:

Message sending methods include synchronous sending, asynchronous sending, and one-way sending. Using a specific sending method depends on the actual conditions. The following section describes the message reliability guaranteed by different sending methods.

When the Producer sends a message in synchronous sending mode, it blocks the calling thread until the Broker returns the sending result. The Producer can then check the returned status to determine whether the message was persisted successfully. If the send times out or fails, two retries are attempted by default. RocketMQ uses the At Least Once message model, so duplicate deliveries may occur because network transmission is unreliable. See the fourth section for the detailed retry strategy.
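
The blocking send with retry described above can be sketched as follows. This is a minimal simulation in Python, not RocketMQ client code: `send_sync`, `SendTimeout`, and `FlakyBroker` are hypothetical names, and the transport is a stand-in for the network call. The `ack_lost` option shows why retrying gives At Least Once rather than exactly-once delivery.

```python
class SendTimeout(Exception):
    """Raised by the hypothetical transport when no reply arrives in time."""


def send_sync(transport, message, retries=2):
    """Block until the Broker confirms the message or all attempts are used.

    `transport` is any callable that returns "SEND_OK" or raises SendTimeout.
    Returns the number of attempts that were needed.
    """
    last_error = None
    for attempt in range(1, retries + 2):  # 1 initial attempt + `retries` retries
        try:
            if transport(message) == "SEND_OK":
                return attempt
        except SendTimeout as exc:
            last_error = exc
    raise RuntimeError(f"send failed after {retries + 1} attempts") from last_error


class FlakyBroker:
    """Test double: the first `failures` calls time out, later calls succeed."""

    def __init__(self, failures, ack_lost=False):
        self.failures = failures
        self.ack_lost = ack_lost  # True: message is stored but the reply is lost
        self.stored = []

    def __call__(self, message):
        if self.failures > 0:
            self.failures -= 1
            if self.ack_lost:
                self.stored.append(message)
            raise SendTimeout("no response from broker")
        self.stored.append(message)
        return "SEND_OK"
```

With `FlakyBroker(failures=1, ack_lost=True)`, the message ends up stored twice, which is why consumers need to tolerate duplicates.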

In asynchronous sending mode, the Producer passes an implementation of the callback interface together with the message. The send call does not block the thread and returns immediately. The callback is executed in another thread, and the sending result (or the exception) is delivered to the corresponding callback function. In your business implementation, you can decide whether to retry based on the sending result to ensure message reliability.
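
As an illustration of the callback pattern, the sketch below submits each send to a worker thread and reports the outcome through two callbacks. It is a simulation using Python's standard `concurrent.futures`, not the RocketMQ client API; `send_async`, `demo`, and the in-process `transport` are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def send_async(executor, transport, message, on_success, on_exception):
    """Hand the send to a worker thread and return to the caller immediately."""

    def task():
        try:
            result = transport(message)
        except Exception as exc:
            on_exception(message, exc)  # business code decides whether to retry
        else:
            on_success(message, result)

    return executor.submit(task)


def demo():
    delivered, failed = [], []

    def transport(message):
        # Stand-in for the network call to the Broker.
        if message == "bad":
            raise ConnectionError("broker unreachable")
        return "SEND_OK"

    with ThreadPoolExecutor(max_workers=2) as pool:
        futures = [
            send_async(
                pool,
                transport,
                m,
                lambda msg, result: delivered.append(msg),
                lambda msg, exc: failed.append(msg),
            )
            for m in ("a", "bad", "b")
        ]
        for future in futures:
            future.result()  # wait here only so the demo can inspect the outcome
    return sorted(delivered), failed
```

The messages collected in `failed` are the ones the business side would resend or log, depending on how strict its reliability requirements are.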

In one-way sending mode, the Producer calls the sending interface, which returns immediately without a sending result. The business side therefore cannot determine whether the message was sent successfully. One-way sending is less reliable than the previous two methods, so we don't recommend it when message reliability matters.

In the RocketMQ architecture model, multiple Brokers serve a given topic, and the messages under a topic are distributed across those Brokers. Topics and Brokers therefore have a many-to-many relationship. Each Broker reports the metadata of the topics it stores to the NameServer, and the peer-to-peer NameServer nodes, which together provide a highly available service, maintain the mapping between topics and Brokers. This many-to-many mapping is what allows a message that failed on one Broker to be resent to another.

When sending a message, the Producer first looks for the topic routing information, including the message queues, in its local cache and uses it directly if it is present. Otherwise, the Producer queries the topic routing information from the NameServer. It then selects a queue according to the specified queue selection policy, which is round-robin by default, and sends the message. If the send succeeds, the result is returned. If an exception is received, delivery is retried according to the corresponding policy. Several settings influence the level of reliability: the Broker latency detected by the Producer, which Broker failed on the previous attempt, whether to retry on another Broker, and the maximum timeout value set by the Producer. Delivery retry substantially raises the chance that a message is sent successfully and thereby improves message reliability.
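
The round-robin selection and the "avoid the Broker that just failed" idea can be sketched as below. This is a simplified simulation under stated assumptions, not the client's actual selection logic (which also takes detected latency into account); `QueueSelector` and `send_with_failover` are hypothetical names, and the route data is a plain list of `(broker_name, queue_id)` pairs.

```python
from itertools import count


class QueueSelector:
    """Round-robin over the queues of a topic, skipping a Broker that just failed."""

    def __init__(self, queues):
        if not queues:
            raise ValueError("route data contains no queues")
        self.queues = list(queues)  # (broker_name, queue_id) pairs
        self._counter = count()

    def _next(self):
        return self.queues[next(self._counter) % len(self.queues)]

    def select(self, last_failed_broker=None):
        for _ in range(len(self.queues)):
            broker, queue_id = self._next()
            if broker != last_failed_broker:
                return broker, queue_id
        # Every queue lives on the failed Broker: fall back to plain round-robin.
        return self._next()


def send_with_failover(selector, transport, message, retries=2):
    """Try the selected queue; on failure, retry on a queue of another Broker."""
    last_failed = None
    for _ in range(retries + 1):
        broker, queue_id = selector.select(last_failed)
        try:
            transport(broker, queue_id, message)
            return broker, queue_id
        except ConnectionError:
            last_failed = broker
    raise RuntimeError("all attempts failed")
```

Because the topic's queues are spread over several Brokers, a retry that skips the failed Broker has a good chance of succeeding even while that Broker is down.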

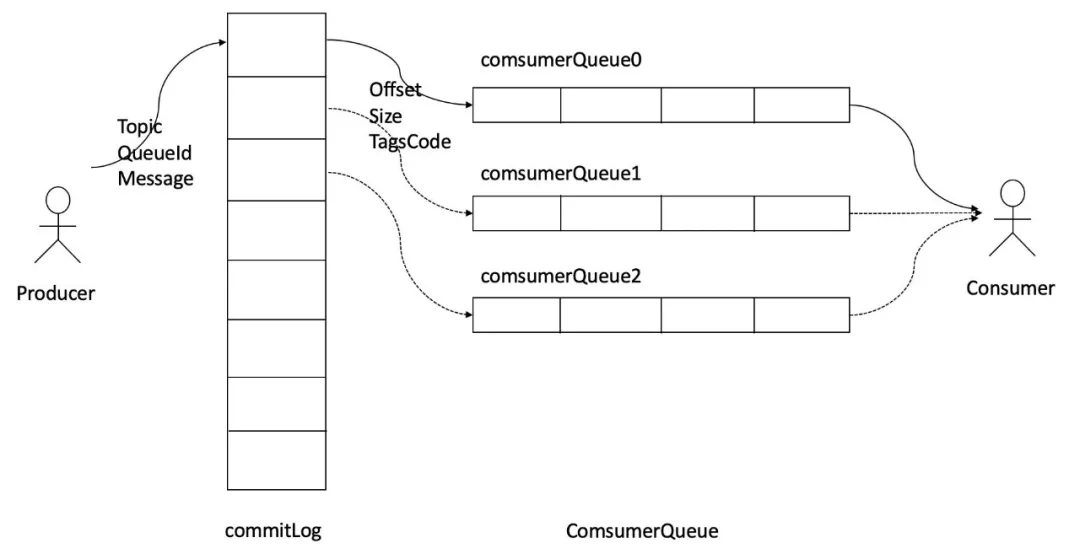

The following figure shows the message storage structure of RocketMQ:

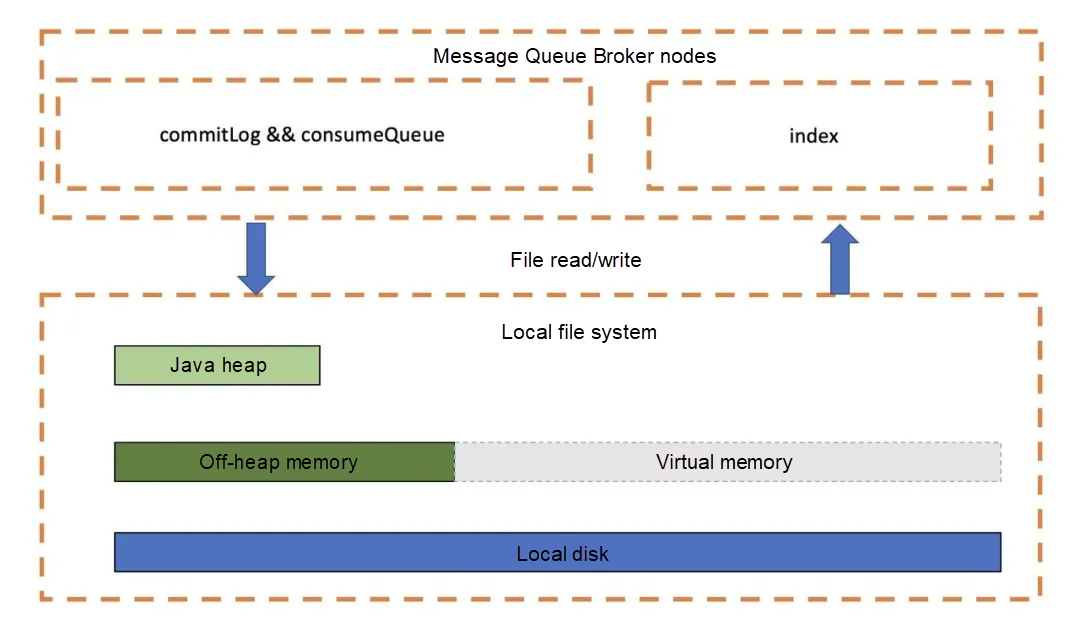

Currently, the RocketMQ storage model uses the local disk for storage. The data is written in the order of producer -> direct memory -> PageCache -> disk. Data is read from PageCache if it contains data. Otherwise, the data needs to be loaded from disk to PageCache first. The following figure shows the file storage mode on the Broker storage nodes:

The data is written into the CommitLog on the Broker sequentially, which significantly improves write efficiency. Different disk flushing modes then ensure data reliability to various degrees. In addition, the ConsumeQueue intermediate structure stores offset information to implement message distribution. Because ConsumeQueue entries are small and have a fixed structure, most ConsumeQueue files can be loaded fully into memory and read at memory speed. To keep the CommitLog and ConsumeQueue consistent, the CommitLog also stores all the other information, including the consume queue, message key, and tag. Even if the ConsumeQueue is lost, it can be completely recovered from the CommitLog. As such, the reliability of the ConsumeQueue is guaranteed as long as the reliability of the CommitLog data is guaranteed.
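
To make the recovery argument concrete, the sketch below rebuilds per-queue indexes by scanning a commit log from the start. It is a toy model and not RocketMQ's on-disk format: records are length-prefixed JSON, and `append_message`, `read_message`, and `rebuild_consume_queues` are hypothetical helpers. Each rebuilt index entry holds the physical offset, the record size, and the tag.

```python
import json
import struct
from collections import defaultdict


def append_message(commit_log, topic, queue_id, tag, body):
    """Append one length-prefixed record and return its physical offset."""
    offset = len(commit_log)
    record = json.dumps(
        {"topic": topic, "queue_id": queue_id, "tag": tag, "body": body}
    ).encode("utf-8")
    commit_log += struct.pack(">I", len(record)) + record  # in-place on bytearray
    return offset


def read_message(commit_log, offset):
    """Read the record stored at a physical offset."""
    (size,) = struct.unpack_from(">I", commit_log, offset)
    return json.loads(commit_log[offset + 4 : offset + 4 + size])


def rebuild_consume_queues(commit_log):
    """Scan the whole log and recreate (offset, size, tag) entries per queue."""
    queues = defaultdict(list)
    pos = 0
    while pos < len(commit_log):
        (size,) = struct.unpack_from(">I", commit_log, pos)
        record = read_message(commit_log, pos)
        key = (record["topic"], record["queue_id"])
        queues[key].append((pos, 4 + size, record["tag"]))
        pos += 4 + size
    return dict(queues)
```

Since every field needed for the index is carried in the log record itself, losing the index costs only a rescan, not data.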

The RocketMQ storage side uses local disks to store CommitLog message data, which inevitably brings the issue of storage reliability. RocketMQ has always been working on improving data reliability to prevent message loss.

In the following scenarios, the RocketMQ storage side - or the Broker - faces the challenges in storage reliability:

1) Normal Broker shutdown

2) Broker Crash

3) OS Crash

4) The machine is out of power, but the power supply can be restored immediately

5) Machine startup failure (possibly caused by the damage to critical devices, such as the CPU, mainboard, and memory)

6) Disk device damage

For a normal Broker shutdown, all data can be recovered by starting the Broker again. For a Broker crash, an OS crash, or a power outage, synchronous disk flushing prevents data loss, while asynchronous disk flushing may cause a small amount of data loss. Machine startup failure and disk device damage are single points of failure (SPOF), and the data on that machine cannot be recovered. You can address the single point of failure by adding a Slave node. Asynchronous master-slave replication may still cause a minimal amount of data loss, while synchronous replication avoids the single point of failure problem entirely.

In general, you can prioritize either performance or reliability. For RocketMQ, two factors mainly affect the reliability of the Broker:

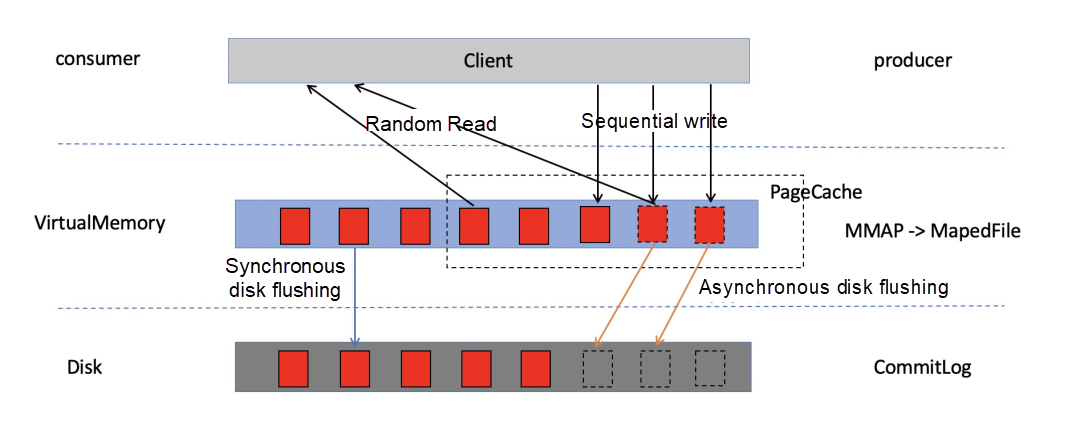

Flushing every message to disk synchronously and replicating it synchronously between master and slave will undoubtedly reduce performance. Without them, messages may be lost. Generally, RocketMQ first writes messages to PageCache and then persists the messages to disk. Data can be flushed from PageCache to disk synchronously or asynchronously. The following figure shows the process of reading and writing messages:

The storage reliability of the stand-alone broker depends on the flushing policy of a single machine. For replication between master and slave, see master-slave mode in the next section.

In synchronous flushing mode, after a message is written to the PageCache in memory, the flushing thread is immediately notified to flush the data to disk. When the flush is finished, the waiting thread is woken up and returns the status that the message was written successfully. This method provides the strongest data safety at the cost of lower throughput.

In asynchronous flushing mode, when a message is written to the PageCache in memory, the result of a successful write is immediately returned to the client. The messages are written to disk later, either when the messages in PageCache accumulate to a certain amount or on a timer. This mode features high throughput and performance. However, data still in PageCache may be lost, so absolute data safety cannot be guaranteed.
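
The trade-off between the two flushing modes can be shown with a small simulation. The `Broker` class below is hypothetical and models PageCache and disk as two Python lists; the mode names mirror the `SYNC_FLUSH` and `ASYNC_FLUSH` values of the Broker's `flushDiskType` setting, and the `flush_every` threshold is an assumption made for the example.

```python
class Broker:
    """Toy model: `page_cache` is volatile memory, `disk` survives a power loss."""

    def __init__(self, flush_mode, flush_every=4):
        self.flush_mode = flush_mode    # "SYNC_FLUSH" or "ASYNC_FLUSH"
        self.flush_every = flush_every  # async mode: flush once this many pile up
        self.page_cache = []
        self.disk = []

    def put(self, message):
        self.page_cache.append(message)
        if self.flush_mode == "SYNC_FLUSH":
            self.flush()  # acknowledge only after the data is on disk
        elif len(self.page_cache) >= self.flush_every:
            self.flush()  # async mode: flush in batches
        return "PUT_OK"

    def flush(self):
        self.disk.extend(self.page_cache)
        self.page_cache.clear()

    def power_loss(self):
        """Drop everything that was acknowledged but never reached the disk."""
        lost = list(self.page_cache)
        self.page_cache.clear()
        return lost
```

In this model a synchronous Broker never loses an acknowledged message, while an asynchronous one loses whatever accumulated since the last batch flush.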

In practice, you should properly configure the disk flushing methods, especially synchronous flushing, since frequent disk writes can reduce performance significantly.

RocketMQ operates CommitLog and ConsumeQueue files based on the file memory mapping mechanism, and it loads all files during startup. To avoid wasting memory and disk space, and to prevent writes from failing because the disk is full, RocketMQ provides an expired file deletion mechanism that reclaims disk space. This keeps disk usage at a certain level and ensures reliable storage of newly written messages.

By default, RocketMQ provides the At Least Once consumption semantics to ensure reliable message consumption.

Generally, the confirmation mechanism for message consumption is divided into two types:

1) Submit before consumption.

2) Consume first and submit after consumption succeeds.

The first mechanism avoids repeated consumption, but messages may be lost if consumption fails after the submission. Therefore, RocketMQ implements the second mechanism by default. Each business side is responsible for making its Consumers idempotent to handle the repeated consumption that this mechanism can cause.
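
One common way to make a Consumer idempotent is to deduplicate on a business key, as in the sketch below. `IdempotentConsumer` is a hypothetical class, and the in-memory set stands in for what would normally be a durable store (for example a database table with a unique key); that choice is an assumption of this example.

```python
class IdempotentConsumer:
    """Run the business handler at most once per message key."""

    def __init__(self, handler):
        self.handler = handler
        self.processed_keys = set()  # stand-in for a durable deduplication store

    def on_message(self, key, body):
        if key in self.processed_keys:
            # Duplicate delivery: acknowledge it without repeating the side effect.
            return "CONSUME_SUCCESS"
        self.handler(body)            # may raise; then the key is not recorded
        self.processed_keys.add(key)  # record only after the handler succeeded
        return "CONSUME_SUCCESS"
```

Recording the key only after the handler succeeds keeps the At Least Once guarantee: a message whose handler failed is processed again on redelivery instead of being silently skipped.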

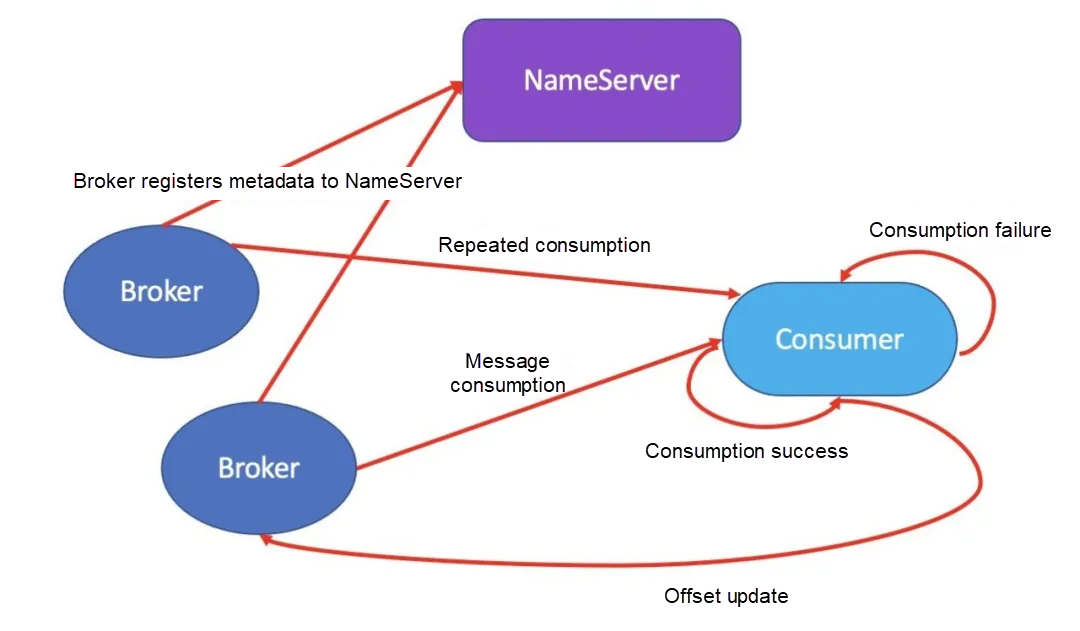

The following figure shows the core logic of message consumption on the Consumer:

After the Consumer pulls a message from RocketMQ, it should return a consumption success status to indicate that the business side completed the consumption normally. Consumption is therefore considered complete only when CONSUME_SUCCESS is returned. If RECONSUME_LATER is returned, the message is delivered again after a delay determined by the messageDelayLevel values, which range from seconds to hours. The longest retry interval is two hours. If consumption still fails after 16 retries, the message is no longer retried. Instead, it is sent to the dead-letter queue to guarantee message reliability.

RocketMQ does not immediately discard messages that fail to be consumed. Instead, it sends them to a dead-letter queue whose topic name is the consumer group name prefixed with %DLQ%. Messages that end up in the dead-letter queue can be retrieved through the corresponding API provided by RocketMQ, which preserves the reliability of message consumption.
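
The retry schedule and the hand-off to the dead-letter queue can be sketched as a small decision function. It assumes the default `messageDelayLevel` table, a default maximum of 16 retries, retries that start at the third delay level, and a dead-letter topic formed by prefixing `%DLQ%` to the consumer group name. `next_step` is a hypothetical helper, not part of the RocketMQ API.

```python
# Default delay-level table (assumed); level 1 is "1s", level 18 is "2h".
DELAY_LEVELS = "1s 5s 10s 30s 1m 2m 3m 4m 5m 6m 7m 8m 9m 10m 20m 30m 1h 2h".split()
MAX_RECONSUME_TIMES = 16  # assumed default retry limit


def next_step(consumer_group, reconsume_times):
    """Decide what happens to a message whose consumption just failed.

    `reconsume_times` is the number of retries already performed.
    Returns ("retry", delay) or ("dead_letter", topic_name).
    """
    if reconsume_times >= MAX_RECONSUME_TIMES:
        return "dead_letter", "%DLQ%" + consumer_group
    level = 3 + reconsume_times  # 1-based delay level: the first retry uses level 3
    return "retry", DELAY_LEVELS[level - 1]


def full_schedule(consumer_group):
    """List the delay before each retry, followed by the dead-letter topic."""
    steps = [next_step(consumer_group, n) for n in range(MAX_RECONSUME_TIMES + 1)]
    return [value for _, value in steps]
```

Under these assumptions the delays grow from ten seconds for the first retry to two hours for the sixteenth, after which the message moves to the dead-letter topic.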

Consumption backtracking means re-consuming messages that were already consumed successfully, for example because the consumption business logic was faulty. To support backtracking, the Broker must retain a message after it has been delivered to the Consumer successfully. Re-consumption is generally based on chronological order. For example, if the Consumer system fails, the data from one hour ago needs to be re-consumed after recovery. The RocketMQ Broker provides a mechanism to roll back the consumption progress by time, ensuring that a message can always be consumed as long as it was sent successfully and has not expired.
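
Rolling the consumption progress back by time amounts to finding the first retained message stored at or after a given timestamp and moving the consume offset there. The sketch below models this with a binary search; `QueueLog` and its methods are hypothetical, and it assumes messages are appended in ascending store-time order.

```python
from bisect import bisect_left


class QueueLog:
    """Toy queue that retains messages after delivery so they can be replayed."""

    def __init__(self):
        self.store_times = []  # store timestamps in ms, ascending
        self.messages = []
        self.consume_offset = 0

    def append(self, store_time_ms, body):
        self.store_times.append(store_time_ms)
        self.messages.append(body)

    def pull(self, max_count=32):
        """Return the next batch and advance the consume offset."""
        start = self.consume_offset
        batch = self.messages[start : start + max_count]
        self.consume_offset += len(batch)
        return batch

    def reset_offset_by_time(self, timestamp_ms):
        """Move the offset to the first message stored at or after the timestamp."""
        self.consume_offset = bisect_left(self.store_times, timestamp_ms)
        return self.consume_offset
```

After `reset_offset_by_time`, the next `pull` returns messages that were already consumed once, which is the reason consumers should be idempotent.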

This article analyzes how RocketMQ ensures message reliability through the entire message transmission process:

As such, RocketMQ ensures message reliability across the whole process and forms a closed loop, preventing message loss to the maximum extent.
