By He Ting from Alibaba Voice AI

Released by Alibaba Cloud Research Center

What is speech synthesis? As the name implies, it means transforming text into speech, which is why it is also called text-to-speech (TTS).

Today, self-media publishers need voiceovers for their videos, and virtual characters must be paired with 2D images (and even 3D models) to communicate with people. These emerging requirements call for TTS that not only turns text into the corresponding speech but also makes the synthesized speech more expressive, with rhythm, sound quality, and emotion closer to a real person's voice.

How can you customize a voice with human-like expression? Let's start with Dong Dong, the virtual host of the 2022 Beijing Winter Olympics.

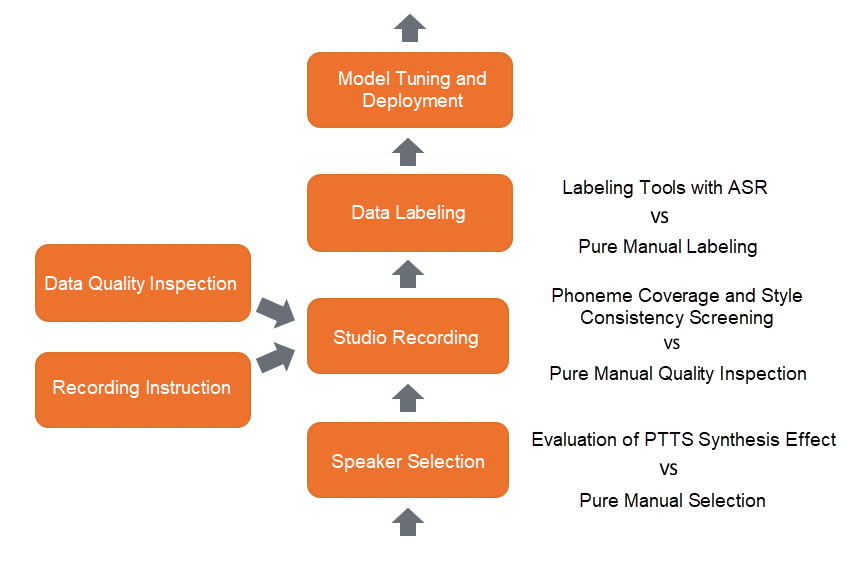

First, you need to be clear about what kind of voice you want. The DAMO Academy Speech Laboratory calls this description a voice portrait. For Dong Dong, the portrait read: “The voice sounds like an 18- or 19-year-old girl who speaks standard Mandarin with a sweet tone and is sporty, fashionable, and lively, like Zhang Zifeng, a Chinese actress.” Then, multiple candidate speakers are selected to audition by recording one or two specific sentences.

Unlike the traditional approach of selecting a speaker directly, the DAMO Academy Speech Laboratory uses personalized text-to-speech (PTTS) to evaluate how well each candidate's voice can be synthesized. Starting from the one or two audition recordings, audio for other specific texts is synthesized. A comprehensive evaluation of both the original audio and this preliminary synthesis then determines the target speaker.

To ensure stable, high-quality audio, the DAMO Academy Speech Laboratory invited the selected speaker, who met Dong Dong's recording requirements, to record in a studio. The recording script was designed from Winter Olympics hosting material and general-scenario content, with sentences chosen by calculating phoneme coverage. Because a speaker's recordings can vary significantly depending on their state, the speaker needs guidance throughout the recording sessions. Data quality inspection is also required afterward, because audio quality sets the upper limit of the customized voice. Instead of relying on traditional manual inspection, the DAMO Academy Speech Laboratory performs automated style-consistency screening based on audio features to keep the recorded audio highly consistent, while enough audio remains after filtering to preserve phoneme coverage.
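The article does not say how phoneme coverage is calculated. A common way to design such a script, shown here only as a hypothetical sketch, is greedy selection: repeatedly pick the candidate sentence that adds the most phonemes not yet covered. The function name `select_script`, the toy initial/final units, and the sentence budget are illustrative assumptions, not the laboratory's method; real systems may count diphones or prosodic contexts instead of single phonemes.

```python
from typing import Dict, List, Set


def select_script(candidates: Dict[str, List[str]], budget: int) -> List[str]:
    """Greedily pick up to `budget` sentences that add the most unseen phonemes.

    `candidates` maps a sentence ID to its phoneme sequence. In practice this
    would come from a grapheme-to-phoneme front end (assumed, not shown).
    """
    covered: Set[str] = set()
    chosen: List[str] = []
    remaining = dict(candidates)
    while remaining and len(chosen) < budget:
        # Score each sentence by how many new phonemes it would contribute;
        # on ties, the first sentence in iteration order wins.
        best_id, best_gain = None, 0
        for sid, phones in remaining.items():
            gain = len(set(phones) - covered)
            if gain > best_gain:
                best_id, best_gain = sid, gain
        if best_id is None:  # no remaining sentence adds anything new
            break
        chosen.append(best_id)
        covered |= set(remaining.pop(best_id))
    return chosen


# Hypothetical toy data using Mandarin initials/finals as units.
candidates = {
    "s1": ["b", "ei3", "j", "ing1"],
    "s2": ["b", "ei3"],
    "s3": ["d", "ong1", "ao4"],
    "s4": ["j", "ing1", "d", "ong1"],
}
print(select_script(candidates, budget=3))  # ['s1', 's3']
```

Greedy selection stops early once every unit is covered, so the budget acts as an upper bound rather than a target; "s2" and "s4" are skipped because they add nothing new.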

The filtered audio can be labeled automatically with automatic speech recognition (ASR), but the labels still need manual inspection and adjustment.
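The article does not describe how the ASR labels are checked. One plausible aid, sketched here purely as an assumption, is to compare each ASR transcript with the script the speaker was asked to read and send only the mismatches to a human reviewer. The function names and the 5% character error rate (CER) threshold are hypothetical.

```python
from typing import List, Tuple


def edit_distance(ref: str, hyp: str) -> int:
    """Levenshtein distance between two strings, computed character by character."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            curr[j] = min(
                prev[j] + 1,             # deletion
                curr[j - 1] + 1,         # insertion
                prev[j - 1] + (r != h),  # substitution (free if characters match)
            )
        prev = curr
    return prev[-1]


def flag_for_review(items: List[Tuple[str, str, str]], max_cer: float = 0.05) -> List[str]:
    """Return IDs whose ASR transcript differs from the script beyond `max_cer`.

    Each item is (utterance_id, script_text, asr_text). CER is measured per
    character, which suits Mandarin text without word boundaries.
    """
    flagged = []
    for uid, script, asr in items:
        cer = edit_distance(script, asr) / max(len(script), 1)
        if cer > max_cer:
            flagged.append(uid)
    return flagged


# Hypothetical example: u2's ASR output has two wrong characters.
items = [
    ("u1", "欢迎来到北京", "欢迎来到北京"),
    ("u2", "欢迎来到北京", "欢迎来到背景"),
]
print(flag_for_review(items))  # ['u2']
```

Only flagged utterances would then go to manual inspection, which keeps the human effort proportional to the number of likely errors.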

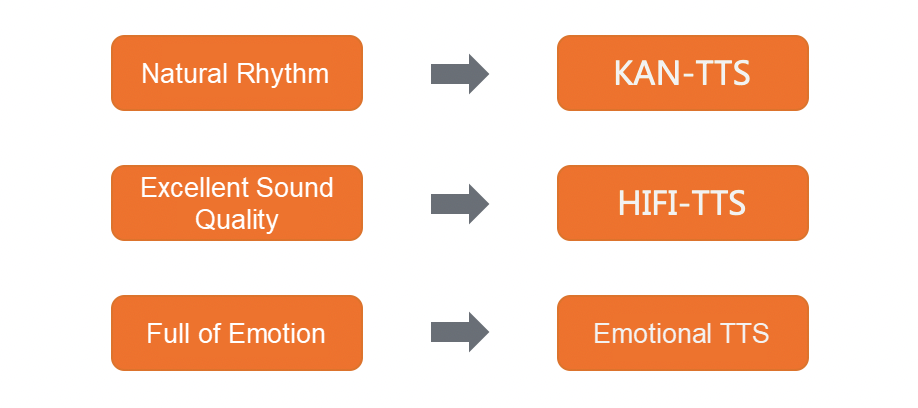

To achieve a customized voice with human-like expression, the DAMO Academy Speech Laboratory developed the KAN-TTS model to make the rhythm more natural. The HIFI-TTS model improves the sound quality of the synthesized audio, and emotional TTS enriches the speaker's emotion and speaking style. By combining the three models, Dong Dong can switch between the style of a formal host and that of a talk show host. In a comprehensive naturalness evaluation, Dong Dong was rated 98% close to the original target speaker. Once tuned, Dong Dong's TTS model runs on Alibaba Cloud and could be used for around-the-clock voice broadcasting during the Winter Olympics.

Any refined voice that users want can be customized through the same four steps used for Dong Dong.

The copyright to Dong Dong's voice belongs exclusively to the party that commissioned it. However, the DAMO Academy Speech Laboratory offers about 100 unrestricted synthesized voices on Alibaba Cloud, covering various scenarios, voice types, and accents. They include Ai Xia for customer service, Ai Yuan for literature, and Xiao Xian for live streaming. The ultra-high-definition category offers the same voice heard in A Bite of China, a documentary about Chinese cuisine.

The DAMO Academy Speech Laboratory also offers different levels of voice customization. Besides refined customization like Dong Dong's, which produces a distinctive, easily recognizable voice, another tier achieves good synthesis quality from only a small amount of audio. Ordinary users can also create their own TTS voice through personalized voice customization.

From standard to personalized customization, highly expressive synthesized speech will be one of the key directions for technical refinement at the DAMO Academy Speech Laboratory. Explicit and implicit prosody modeling have already improved prosodic variation and prosody restoration within single sentences. For long-text modeling, the laboratory especially expects to strengthen prosodic continuity and the interplay between the end of one sentence and the beginning of the next, and to improve rhythm across the context of a paragraph. Incorporating stumbles, repetitions, and modal particles can make synthesized speech more realistic, with the goal of stable, reliable synthesis that combines a high sample rate, good quality, and human-like expression.
