By Zhuodao

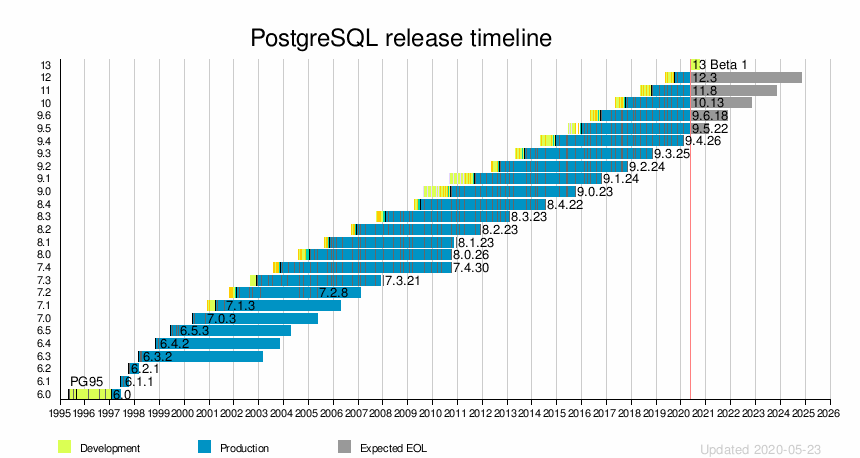

Starting from PostgreSQL 10, the community has been able to release a major revision every year, demonstrating their amazing vitality and creativity.

On May 21, 2020, the PostgreSQL Global Development Group announced that the first beta release of PostgreSQL 13 was available for download. This release contains previews of all features that will be available in the final release of PostgreSQL 13, though some details of the release could change before then. Let's look at the new features and changes found in PostgreSQL 13.

In the community's published document, we can see that the new features of PostgreSQL 13 are divided into the following sections:

We will introduce these sections and carry out realistic tests to look at specific effects.

There are many new features in PostgreSQL 13 that help improve the overall performance of PostgreSQL while making it easier to develop applications.

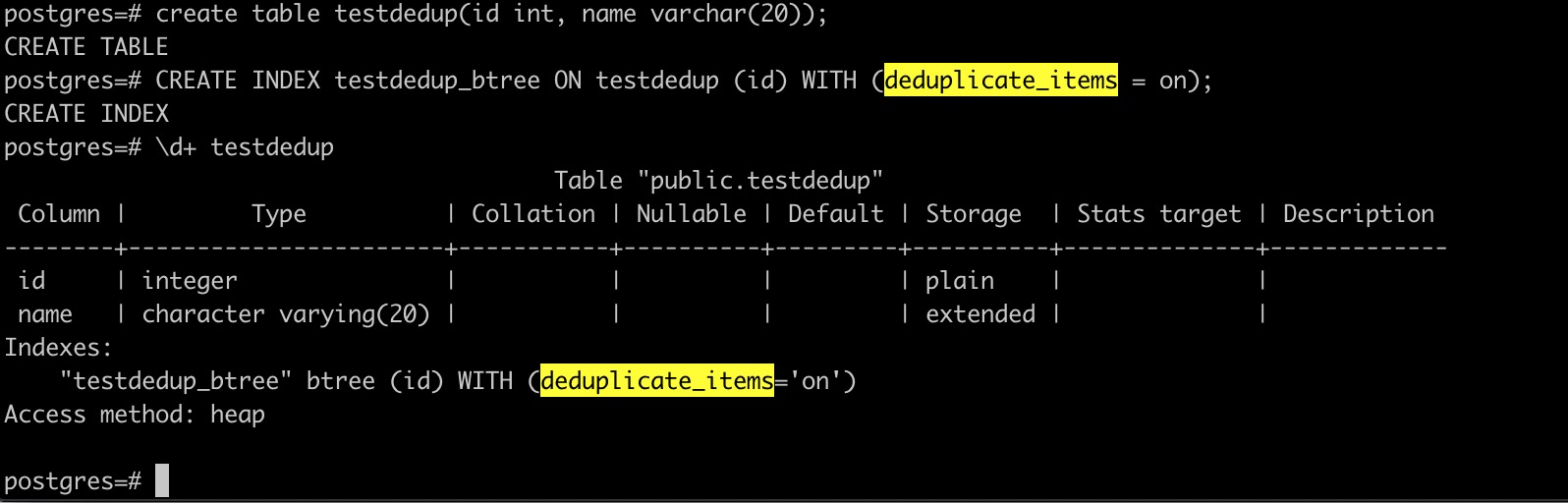

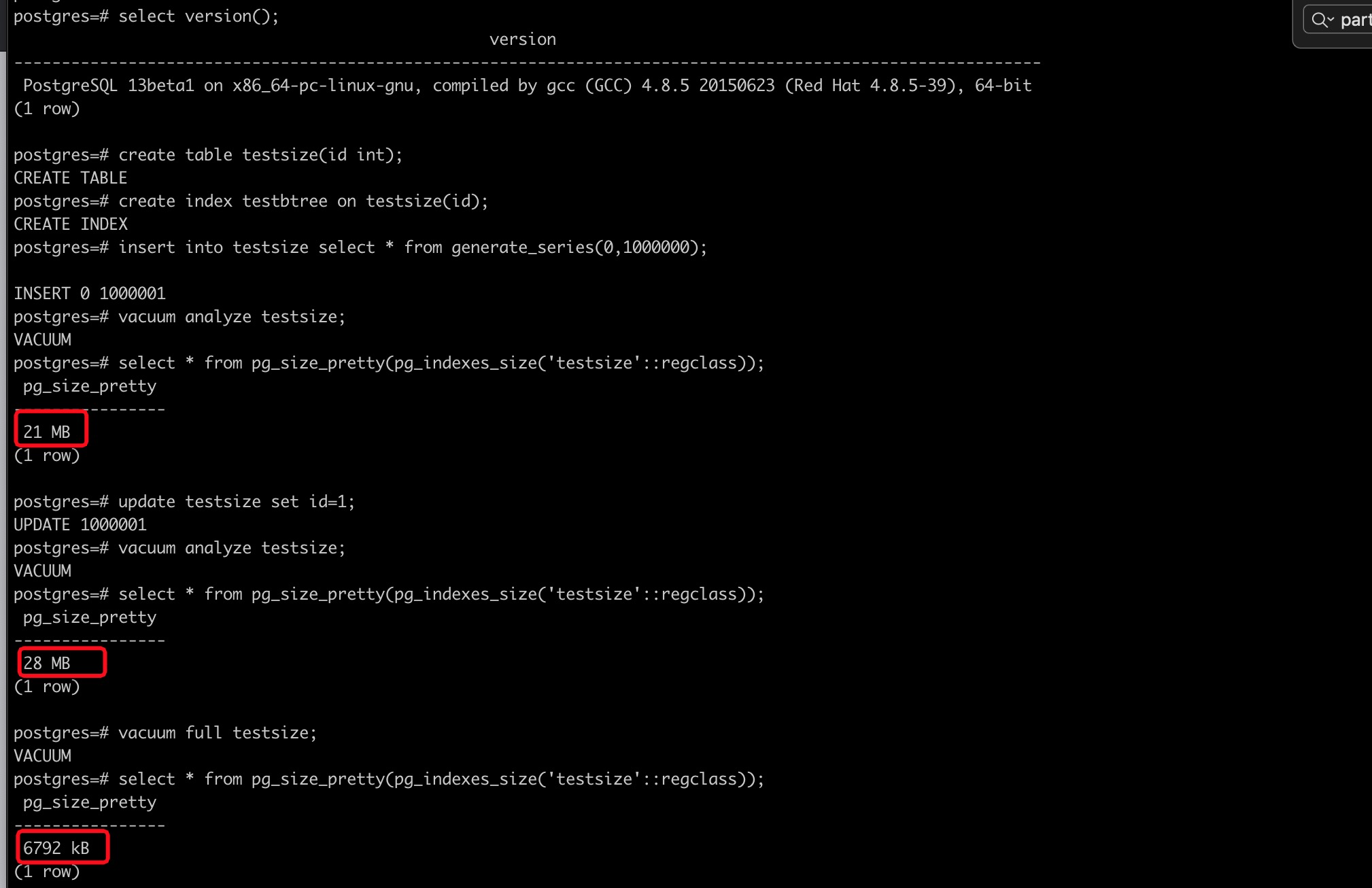

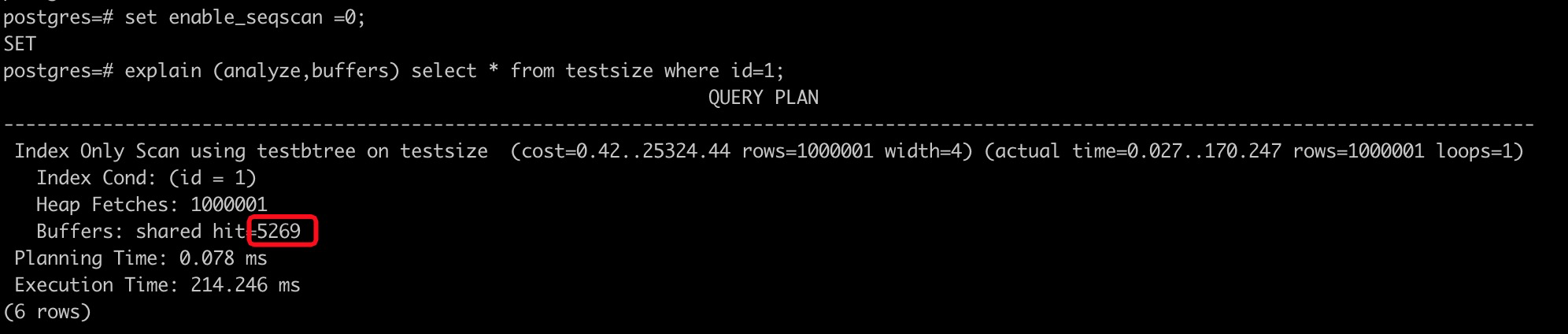

If table columns are not unique, many identical values may exist. The corresponding B-tree indexes may also have many duplicated index entries. In PostgreSQL 13, B-tree indexes refer to GIN indexes to link up ctids of the rows to which the same key points. This enhancement not only shrinks the index size, but it also reduces many unnecessary splits and improves the lookup speed. This enhancement is called deduplication for B-tree indexes. In addition, the feature is conducted asynchronously. This operation is performed only when a B-tree index needs to be split. This reduces the overhead of this feature.

The following section describes how to use deduplication for B-tree indexes:

As you can see, you must set the deduplicate_items storage parameter to "on" when you create a B-tree index to enable deduplication. In PostgreSQL 13, this storage parameter is on by default.

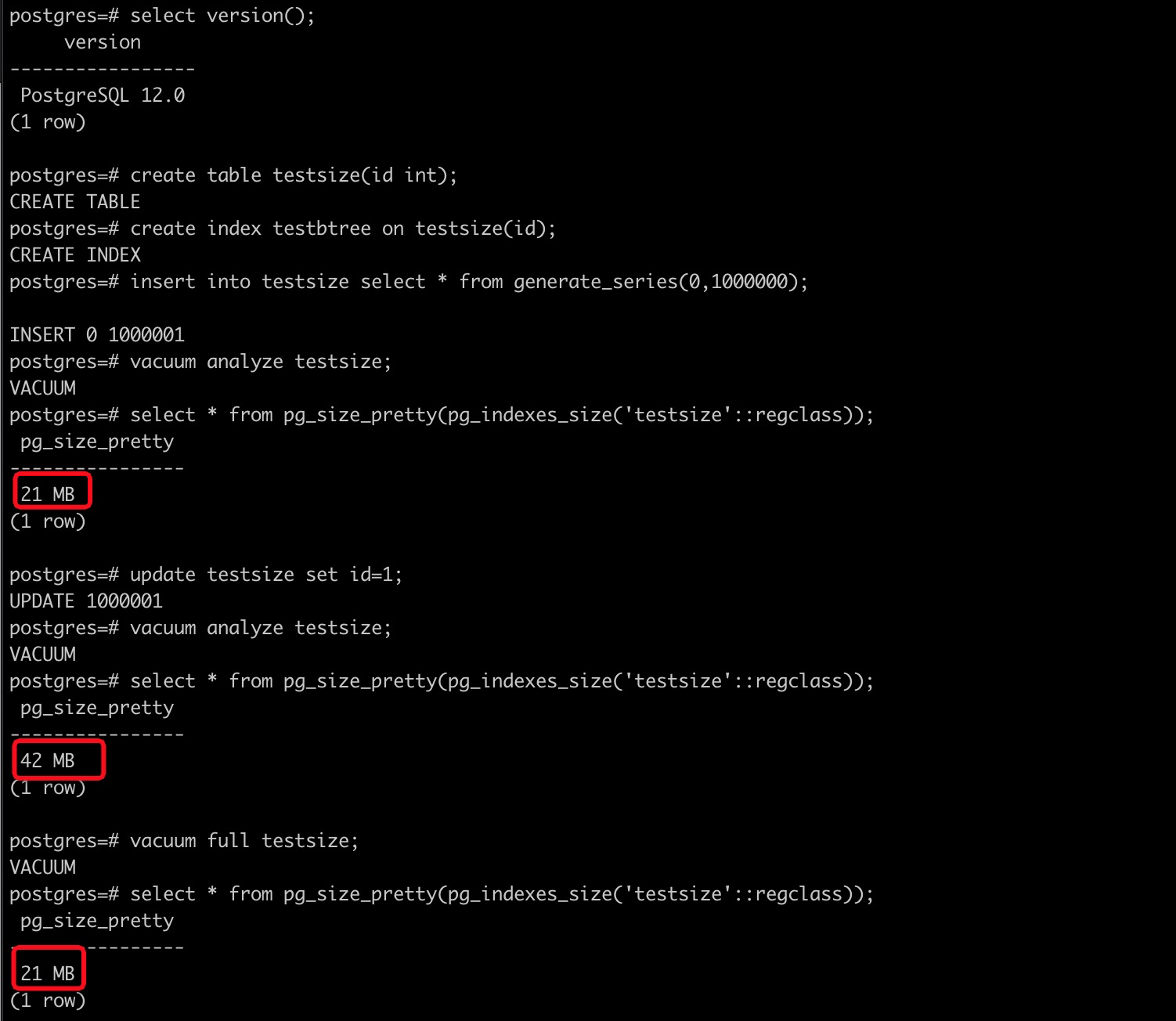

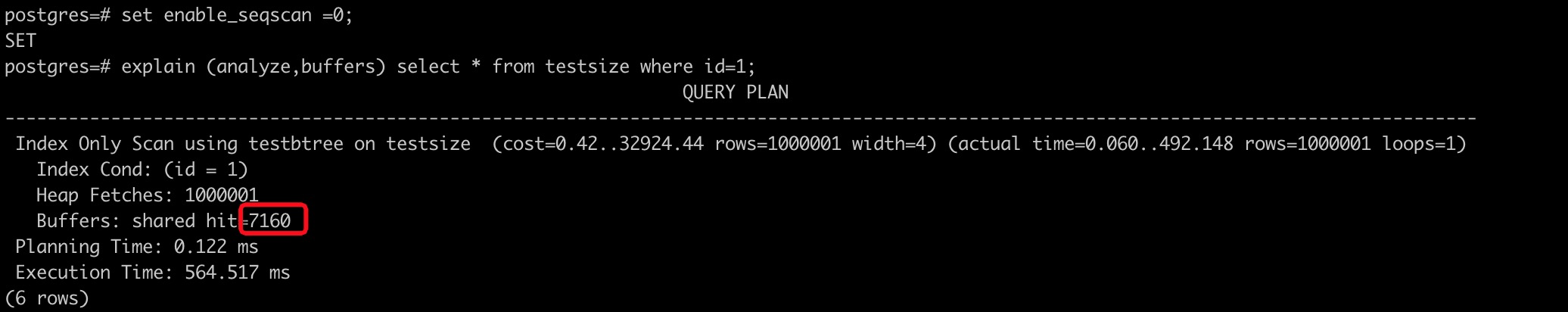

To test the effect of this feature, we compared PostgreSQL 12 and PostgreSQL 13.

PostgreSQL 12:

PostgreSQL 13:

Based on the images above, we can see the following aspects:

In addition to data-level duplication, deduplication for B-tree indexes in PostgreSQL 13 is also effective to deal with different snapshots of repeated keys in a B-tree index due to the MVCC implementation. However, deduplication cannot be used in the following cases:

Overall, these data types are unsupported primarily because extra conditions are required to determine whether keys of indexes are the same, in addition to values.

B-tree indexes are a default index type in PostgreSQL. In the community, several major versions of PostgreSQL have continuously optimized its occupied space and execution efficiency. A certain degree of deduplication was implemented to B-tree indexes in PostgreSQL 12.

PostgreSQL 13 adds incremental sorting. This improvement derives from a native algorithm. When a group of data sets (X,Y) are sorted as X and Y columns, if the current data set has been sorted by the X column, specific sorting is shown in the following figure:

(1, 5)

(1, 2)

(2, 9)

(2, 1)

(2, 5)

(3, 3)

(3, 7)At this time, you only need to group the data sets by the X column and sort Y columns in each group to obtain a result set based on sorting by X and Y columns, as shown in the following figure:

(1, 5) (1, 2)

(2, 9) (2, 1) (2, 5)

(3, 3) (3, 7)

=====================

(1, 2)

(1, 5)

(2, 1)

(2, 5)

(2, 9)

(3, 3)

(3, 7)The advantages of this algorithm are obvious, especially for large data sets, because it can reduce the volume of data sorted each time. You can use certain policy control to make the data volume in sorting better fit the current work_mem every time. Moreover, in PostgreSQL's waterfall model executor, we can obtain some result sets without sorting all data, which is very suitable for Top-N queries with the Limit keyword.

Of course, the optimizer in a database is much more complex than the preceding scenario. If each group is large and a small number of groups exist, the cost of incremental sorting is relatively high. If each group is small and a large number of groups exist, it takes less time and energy to use the previously sorted results based on the incremental sorting feature. To neutralize the effects of the two cases, two patterns are used in PostgreSQL 13:

In PostgreSQL, the optimizer prefers pattern 1 and uses pattern 2 heuristically.

The following part describes how to use incremental sorting and compares a query plan where this feature is enabled and a query plan where this feature is disabled.

postgres=# create table t (a int, b int, c int);

CREATE TABLE

postgres=# insert into t select mod(i,10),mod(i,10),i from generate_series(1,10000) s(i);

INSERT 0 10000

postgres=# create index on t (a);

CREATE INDEX

postgres=# analyze t;

ANALYZE

postgres=# set enable_incrementalsort = off;

SET

postgres=# explain analyze select a,b,sum(c) from t group by 1,2 order by 1,2,3 limit 1;

QUERY PLAN

------------------------------------------------------------------------------------------------------------------------

Limit (cost=231.50..231.50 rows=1 width=16) (actual time=2.814..2.815 rows=1 loops=1)

-> Sort (cost=231.50..231.75 rows=100 width=16) (actual time=2.813..2.813 rows=1 loops=1)

Sort Key: a, b, (sum(c))

Sort Method: top-N heapsort Memory: 25kB

-> HashAggregate (cost=230.00..231.00 rows=100 width=16) (actual time=2.801..2.804 rows=10 loops=1)

Group Key: a, b

Peak Memory Usage: 37 kB

-> Seq Scan on t (cost=0.00..155.00 rows=10000 width=12) (actual time=0.012..0.951 rows=10000 loops=1)

Planning Time: 0.169 ms

Execution Time: 2.858 ms

(10 rows)

postgres=# set enable_incrementalsort = on;

SET

postgres=# explain analyze select a,b,sum(c) from t group by 1,2 order by 1,2,3 limit 1;

QUERY PLAN

---------------------------------------------------------------------------------------------------------------------------------------------

Limit (cost=133.63..146.52 rows=1 width=16) (actual time=1.177..1.177 rows=1 loops=1)

-> Incremental Sort (cost=133.63..1422.16 rows=100 width=16) (actual time=1.176..1.176 rows=1 loops=1)

Sort Key: a, b, (sum(c))

Presorted Key: a, b

Full-sort Groups: 1 Sort Method: quicksort Average Memory: 25kB Peak Memory: 25kB

-> GroupAggregate (cost=120.65..1417.66 rows=100 width=16) (actual time=0.746..1.158 rows=2 loops=1)

Group Key: a, b

-> Incremental Sort (cost=120.65..1341.66 rows=10000 width=12) (actual time=0.329..0.944 rows=2001 loops=1)

Sort Key: a, b

Presorted Key: a

Full-sort Groups: 3 Sort Method: quicksort Average Memory: 28kB Peak Memory: 28kB

Pre-sorted Groups: 3 Sort Method: quicksort Average Memory: 71kB Peak Memory: 71kB

-> Index Scan using t_a_idx on t (cost=0.29..412.65 rows=10000 width=12) (actual time=0.011..0.504 rows=3001 loops=1)

Planning Time: 0.164 ms

Execution Time: 1.205 ms

(15 rows)There are more improvements added to PostgreSQL's partitioning functionality in this release, including an increased number of cases where a join directly between partitioned tables can occur, which can improve overall query execution time. Partitioned tables now support BEFORE row-level triggers, and a partitioned table can now be fully replicated through logical replication without having to publish individual partitions.

One of the most anticipated features of PostgreSQL 13 is the ability for the VACUUM command to process indexes in parallel. This feature was unavailable in earlier versions. Therefore, VACUUM processing time was very long for large tables. PostgreSQL 13 supports parallel VACUUM for indexes, but there are many restrictions.

We compared PostgreSQL 13 and PostgreSQL 12 in terms of VACUUM. The results are below:

=================================PG 13 parallel vacuum===============================

postgres=# create table testva(id int,info text);

CREATE TABLE

Time: 2.334 ms

postgres=# insert into testva select generate_series(1,1000000),md5(random()::text);

INSERT 0 1000000

Time: 1448.098 ms (00:01.448)

postgres=# create index idx_testva on testva(id);

CREATE INDEX

Time: 364.988 ms

postgres=# create index idx_testva_info on testva(info);

CREATE INDEX

Time: 873.416 ms

postgres=# vacuum (parallel 4) testva;

VACUUM

Time: 114.846 ms

=================================PG 12 normal vacuum===============================

postgres=# create table testva(id int,info text);

CREATE TABLE

Time: 5.817 ms

postgres=# insert into testva select generate_series(1,1000000),md5(random()::text);

INSERT 0 1000000

Time: 3023.958 ms (00:03.024)

postgres=# create index idx_testva on testva(id);

CREATE INDEX

Time: 631.632 ms

postgres=# create index idx_testva_info on testva(info);

CREATE INDEX

Time: 1374.849 ms (00:01.375)

postgres=# vacuum testva;

VACUUM

Time: 216.944 msAccording to this comparison, the VACUUM speed of PostgreSQL 13 is much higher than PostgreSQL 12. However, there is still room for improving parallel VACUUM. The good news is that the community is actively discussing block-level parallel VACUUM in emails. For more information, visit the link.

These features greatly improve PostgreSQL database administration, especially the pg_rewind, which is not detailed here. In another article, we will describe how this feature improves functionality.

PostgreSQL continues to improve on its security capabilities in this latest release, introducing several features to help further deploy PostgreSQL safely.

For more information about other features of PostgreSQL 13, visit the link.

Although PostgreSQL 13 did not introduce the planned TDE and zheap features, it still has many eye-catching features, including deduplication for B-tree indexes, parallel VACUUM, and disk usage by hash aggregation. Moreover, you can use a new tool called pg_verifybackup to verify the integrity of a backup and use pg_rewind to configure standby PostgreSQL instances. pg_rewind can also use the restore_command of the target instance to fetch needed write-ahead logs. If you are interested, you can download the source code, compile it, analyze the implementation of the features you are interested in. You may get better ideas.

Alibaba Clouder - February 13, 2021

digoal - February 5, 2020

digoal - May 28, 2019

Alibaba Clouder - July 2, 2019

Alibaba Cloud Native Community - February 9, 2023

Alibaba Clouder - July 1, 2020

PolarDB for PostgreSQL

PolarDB for PostgreSQL

Alibaba Cloud PolarDB for PostgreSQL is an in-house relational database service 100% compatible with PostgreSQL and highly compatible with the Oracle syntax.

Learn More Security Center

Security Center

A unified security management system that identifies, analyzes, and notifies you of security threats in real time

Learn More Database Security Solutions

Database Security Solutions

Protect, backup, and restore your data assets on the cloud with Alibaba Cloud database services.

Learn More AnalyticDB for PostgreSQL

AnalyticDB for PostgreSQL

An online MPP warehousing service based on the Greenplum Database open source program

Learn More