By Priyankaa Arunachalam, Alibaba Cloud Tech Share Author. Tech Share is Alibaba Cloud's incentive program to encourage the sharing of technical knowledge and best practices within the cloud community.

In the previous article, we discussed Spark for big data and showed you how to set it up on Alibaba Cloud.

In this blog series, we will walk you through the basics of Hive, including table creation and other underlying concepts for big data applications.

"Our ability to do great things with data will make a real difference in every aspect of our lives," Jennifer Pahlka

There are different ways of executing MapReduce operations. The first is the traditional approach, where we write a Java MapReduce program for all types of data. The second is the scripting approach, which uses Pig to process structured and semi-structured data. The third is the Hive Query Language (HiveQL or HQL), which Hive uses to process structured data with MapReduce.

As discussed in our previous article, Hadoop is a vast ecosystem of tools and technologies, and at this point it is convenient to deploy Hive and Pig. Hive has advantages over Pig, especially because its data warehousing features make reporting and analysis easier.

Hive is built on top of Hadoop and is used for querying and analyzing data stored in HDFS. It helps programmers analyze large data sets and access the data easily through a SQL-like query language called HiveQL. Internally, Hive converts HiveQL queries into MapReduce jobs that run on Hadoop.
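To see this translation in practice, the EXPLAIN statement prints the plan Hive builds for a query without running it. The sketch below assumes the tripadvisor_museum_USonly table that is created later in this article; the alias museum_count is only illustrative.

```sql
-- Print the execution plan instead of running the query.
-- With the MapReduce execution engine, the plan lists the map and reduce stages
-- that Hive would submit to Hadoop for this aggregation.
EXPLAIN
SELECT State, COUNT(*) AS museum_count
FROM tripadvisor_museum_USonly
GROUP BY State;
```

The exact stages shown depend on the Hive version and on which execution engine the cluster is configured to use.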

Impala is often mentioned alongside Hive. Hive, however, has its own place in the market and consequently has broader support. Impala is also a query engine built on top of Hadoop. It reuses the existing Hive setup, because many Hadoop users already have Hive in place for batch-oriented jobs.

The main goal of Impala is fast and efficient SQL operations. Integrating Hive with Impala lets users choose either Hive or Impala for processing data or creating tables. Impala uses a language called ImpalaQL, which is a subset of HiveQL. In this article, we will focus on Hive.

Relational databases follow "Schema on Write": the schema is enforced when data is written, and operations such as insertions, updates, and modifications can be performed. Hive, borrowing the concept of "write once read many (WORM)", was designed around "Schema on Read". A typical Hive query runs on multiple DataNodes, so updating and modifying data across nodes was difficult. Recent versions of Hive have addressed this.
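As a rough sketch of what row-level changes look like in newer Hive releases, the statements below use a transactional (ACID) table. The table name museum_fee_acid and its sample values are hypothetical, and the two SET lines assume the cluster has not already enabled the transaction manager; depending on the Hive version, further settings may be needed.

```sql
-- Assumption: transactions are not yet enabled in this session.
SET hive.support.concurrency=true;
SET hive.txn.manager=org.apache.hadoop.hive.ql.lockmgr.DbTxnManager;

-- Hypothetical table. Transactional tables are stored as ORC,
-- and Hive releases before 3.0 also require them to be bucketed.
CREATE TABLE museum_fee_acid (
  Museumname STRING,
  Fee STRING
)
CLUSTERED BY (Museumname) INTO 4 BUCKETS
STORED AS ORC
TBLPROPERTIES ('transactional'='true');

INSERT INTO TABLE museum_fee_acid VALUES ('Sample Museum', 'Yes');

-- Row-level update of a non-bucketing column.
UPDATE museum_fee_acid SET Fee = 'No' WHERE Museumname = 'Sample Museum';
```

On a plain text-backed table such as the ones used in the rest of this article, the UPDATE statement would be rejected.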

Hive supports various file formats, such as flat (text) files, SequenceFiles, RCFiles and ORC files, Avro files, Parquet, and custom input and output formats. Text file is the default file format in Hive.
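The file format is chosen per table with the STORED AS clause. The following is a minimal sketch; the table names are hypothetical and the two columns are borrowed from the museum data used later in this article.

```sql
-- Columnar ORC storage.
CREATE TABLE museum_ratings_orc (
  Museumname STRING,
  Rating FLOAT
)
STORED AS ORC;

-- Same layout stored as Parquet (the short form needs Hive 0.13 or later).
CREATE TABLE museum_ratings_parquet (
  Museumname STRING,
  Rating FLOAT
)
STORED AS PARQUET;

-- No STORED AS clause: Hive falls back to hive.default.fileformat,
-- which is TextFile unless the cluster overrides it.
CREATE TABLE museum_ratings_text (
  Museumname STRING,
  Rating FLOAT
);
```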

Storage options in Hive

A Serializer/Deserializer (SerDe) handles I/O in Hive, covering both serialization and deserialization. Tables can be created with either a native SerDe or a custom SerDe. If ROW FORMAT is not specified, Hive uses a native SerDe. Beyond the SerDes that are already available, we can also write our own SerDe for our own data formats. At this stage, we will just get familiar with the concept, as it is an important one in Hive.
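The difference between relying on a native SerDe and naming one explicitly can be sketched as follows. The table names users_native and users_csv are hypothetical; OpenCSVSerde is a SerDe bundled with Hive 0.14 and later, used here only as an illustration.

```sql
-- ROW FORMAT DELIMITED: handled by Hive's native LazySimpleSerDe.
CREATE TABLE users_native (
  userid INT,
  name STRING
)
ROW FORMAT DELIMITED
FIELDS TERMINATED BY ','
STORED AS TEXTFILE;

-- ROW FORMAT SERDE: the SerDe class is named explicitly.
-- OpenCSVSerde reads every column as STRING.
CREATE TABLE users_csv (
  userid STRING,
  name STRING
)
ROW FORMAT SERDE 'org.apache.hadoop.hive.serde2.OpenCSVSerde'
WITH SERDEPROPERTIES (
  "separatorChar" = ",",
  "quoteChar" = "\""
)
STORED AS TEXTFILE;

-- The "SerDe Library" row of the output shows which SerDe a table uses.
DESCRIBE FORMATTED users_csv;
```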

To recap, whatever file you write to HDFS is simply stored there as a file. This is where Hive comes in: it can impose structure on different data formats. The points below cover starting a Hive shell, its usage, and some basic queries to help you understand how Hive works.

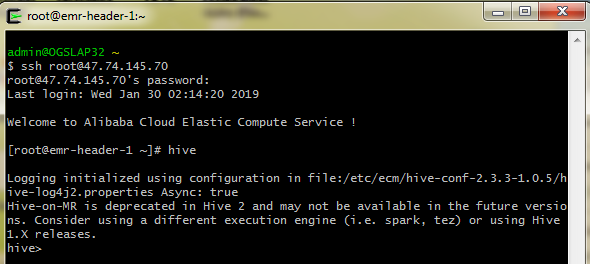

Log in to the Alibaba Cloud master Elastic Compute Service (ECS) instance, and type "hive", which leads to a screen as shown below. If you have configured Hive to run on a worker node rather than the master, log in to that host and open the Hive shell.

Let's look through some basic queries in HiveQL. The very first is creating a table in Hive.

Syntax

CREATE TABLE [IF NOT EXISTS] table_name

[(col_name data_type [COMMENT col_comment], ...)]

[COMMENT table_comment]

[ROW FORMAT row_format]

[STORED AS file_format]

Output

OK

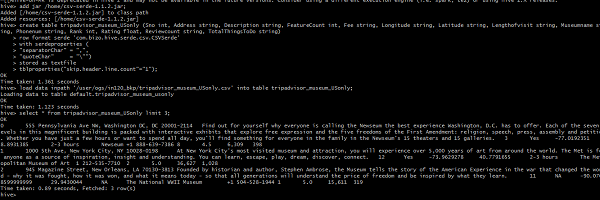

Time taken: 5.905 seconds

In our case, let's try creating a table with the columns present in the tripadvisor_merged sheet.

create table tripadvisor_museum_USonly (Sno string, Address string, Description string, Fee string, Longitude string, Latitude string, Lengthofvisit string, Museumname string, Phonenum string, Rank string, Rating float, Reviewcount string, TotalThingsToDo string, Country string, State string, Rankpercentage string, Art_Galleries string, Art_Museums string, Auto_Race_Tracks string, Families_Count string, Couple_Count string, Solo_Count string,

Friends_Count string)

row format serde 'com.bizo.hive.serde.csv.CSVSerde'

with serdeproperties (

"separatorChar" = ",",

"quoteChar" = "\"")

stored as textfile

tblproperties ("skip.header.line.count"="1");

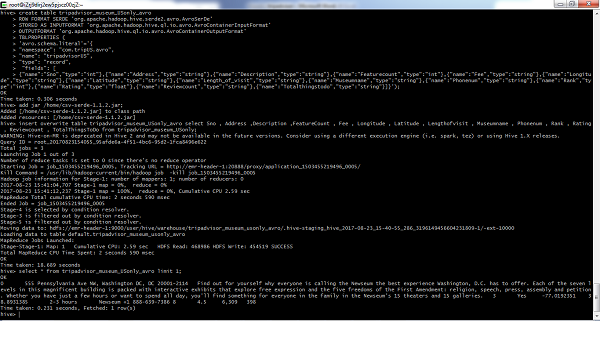
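The com.bizo.hive.serde.csv.CSVSerde class used above comes from a third-party library and is not bundled with Hive, so its jar has to be on the session's classpath before the CREATE TABLE statement runs. A minimal sketch, assuming the jar has been copied to the hypothetical path /tmp/csv-serde.jar:

```sql
-- Hypothetical jar location; adjust to wherever the SerDe jar actually lives.
ADD JAR /tmp/csv-serde.jar;

-- After the table has been created, confirm the columns Hive registered.
DESCRIBE tripadvisor_museum_USonly;
```

At this point the table holds only metadata; no data has been loaded yet.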

Using the same data, let's create an Avro table, since we have discussed this file format throughout the blog series for its performance.

create table tripadvisor_museum_USonly_avro

ROW FORMAT SERDE 'org.apache.hadoop.hive.serde2.avro.AvroSerDe'

STORED AS INPUTFORMAT 'org.apache.hadoop.hive.ql.io.avro.AvroContainerInputFormat'

OUTPUTFORMAT 'org.apache.hadoop.hive.ql.io.avro.AvroContainerOutputFormat'

TBLPROPERTIES (

'avro.schema.literal'='{

"namespace": "com.trip.avro",

"name": "tripadvisor",

"type": "record",

"fields": [

{"name":"Sno","type":"string"},{"name":"Address","type":"string"},{"name":"Description","type":"string"},{"name":"FeatureCount","type":"string"},{"name":"Fee","type":"string"},{"name":"Longitude","type":"string"},{"name":"Latitude","type":"string"},{"name":"Length_of_visit","type":"string"},{"name":"Museumname","type":"string"},{"name":"descri_sub","type":"string"}]}');

Now let's insert the data into the Avro table using an insert statement. Below is a sample insert query for the Avro table.

insert overwrite table tripadvisor_museum_USonly_avro select Sno, Address, Description, FeatureCount, Fee,

Longitude, Latitude, Lengthofvisit, Museumname, descri_sub from

tripadvisor_museum_USonly;

Similarly, define all the columns present in the file and write a corresponding insert statement to load the data into the created tables.

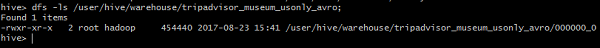

Once done, list the folder to view it
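Listing the folder can be done from within the Hive CLI. The sketch below assumes the table is a managed table under the default warehouse directory /user/hive/warehouse; on your cluster the path may differ, which is why the first statement looks it up.

```sql
-- The "Location" row of the output shows where the table's files are stored.
DESCRIBE FORMATTED tripadvisor_museum_USonly_avro;

-- List the files in that location from the Hive CLI.
-- Assumed path: default warehouse directory plus the lowercased table name.
dfs -ls /user/hive/warehouse/tripadvisor_museum_usonly_avro;
```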

Load Data

Generally, after creating a table in SQL, we insert data using the INSERT statement. In Hive, we can also load an entire dataset at once using the "LOAD DATA" statement.
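As an illustration, loading the museum CSV into the text table created earlier might look like the following. The file path /tmp/tripadvisor_merged.csv is a hypothetical location on the node where the Hive shell runs.

```sql
-- LOCAL: the file is read from the local file system and copied into the table's folder.
-- Without LOCAL, the path refers to HDFS and the file is moved rather than copied.
-- OVERWRITE replaces any data already in the table.
LOAD DATA LOCAL INPATH '/tmp/tripadvisor_merged.csv'
OVERWRITE INTO TABLE tripadvisor_museum_USonly;

-- Quick check that rows are now visible.
SELECT COUNT(*) FROM tripadvisor_museum_USonly;
```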

Syntax

LOAD DATA [LOCAL] INPATH 'filepath'

[OVERWRITE] INTO TABLE tablename;

To use Alibaba Cloud Object Storage Service (OSS) as storage in Hive, create an external table as follows:

CREATE EXTERNAL TABLE demo_table (

userid INT, name STRING)

LOCATION 'oss://demo1bucket/users';

For example, write a script for creating an external table and save it as hiveDemo.sql. Once done, upload it to OSS.

USE DEFAULT;

set hive.input.format=org.apache.hadoop.hive.ql.io.HiveInputFormat;

set hive.stats.autogather=false;

CREATE EXTERNAL TABLE demo_table (

userid INT,

name STRING)

ROW FORMAT DELIMITED

FIELDS TERMINATED BY '\t'

STORED AS TEXTFILE

LOCATION 'oss://demo1bucket/users';

Create a new job in E-MapReduce based on the following configuration:

-f ossref://${bucket}/yourpath/hiveDemo.sql

Specify the bucket name in "${bucket}" and set "yourpath" to the location where you saved the Hive script.
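Once the job has run, the external table can be queried like any other Hive table. The statements below are a small sketch that assumes tab-separated files already exist under oss://demo1bucket/users.

```sql
-- Read a few rows straight from the files in OSS.
SELECT userid, name FROM demo_table LIMIT 10;

-- The "Table Type" row of the output should read EXTERNAL_TABLE.
DESCRIBE FORMATTED demo_table;

-- Dropping an external table removes only the metadata in Hive;
-- the files under the OSS location are left in place.
DROP TABLE demo_table;
```

This is the main reason to choose an external table for data in OSS: the data outlives the table definition and can be shared with other jobs.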
