With the development of Internet of Things, more data is generated at an increasingly fast speed. This IoT data may include vehicle traffic volume on highways, users' residence data, water temperature sensor data, e-commerce feed data, and tracking data of online rideshare vehicles.

This massive amounts of data is also used for different purposes. Some data requires real-time streaming statistics, some requires time-series processing, and some requires full analysis.

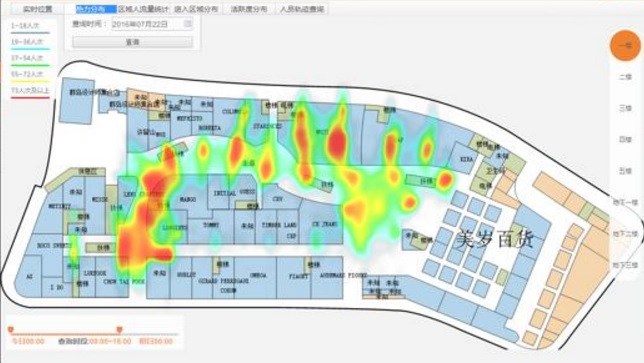

Take large screens at a command center for example. Some requirements are very interesting, such as the following:

Design the table structure - GID is a sensor ID, val is an uploaded value, and crt_time is time.

Consider 10,000 sensors and insert 10 million records.

create unlogged table sort_test(

id serial8 primary key, -- Auto-increment primary key

s_id int, -- Sensor ID

val numeric(10,2), -- Sensor value

crt_time timestamp default clock_timestamp() -- Report time

); Write 10 million pieces of sensor test data

postgres=# insert into sort_test (s_id,val) select random()*10000, random()*100 from generate_series(1,10000000);

INSERT 0 10000000 Create an index

postgres=# create index idx_test on sort_test using btree(s_id,id desc); Use a recursive call to obtain the latest values of all sensors (use the maximum auto-increment ID of each sensor as the latest report tag)

create type r as (s_id int, val numeric(10,2)); -- Composite type

with recursive skip as (

(

select (s_id,val)::r as r from sort_test where id in (select id from sort_test where s_id is not null order by s_id,id desc limit 1)

)

union all

(

select (

select (s_id,val)::r as r from sort_test where id in (select id from sort_test t where t.s_id>(s.r).s_id and t.s_id is not null order by s_id,id desc limit 1)

) from skip s where (s.r).s_id is not null

-- "where (s.r).s_id is not null" must be included. Otherwise it will create an endless loop.

)

select (t.r).s_id, (t.r).val from skip t where t.* is not null; It takes only 129 milliseconds to search 10 million records for the latest 10,000 records .

Why is it so fast? The reason is that the use of recursive queries reduces the amount of data to be scanned and computed.

s_id | val

-------+-------

0 | 83.55

1 | 91.62

2 | 72.70

3 | 45.46

4 | 99.97

5 | 17.04

6 | 8.96

7 | 25.83

8 | 28.10

9 | 26.19

10 | 83.03

11 | 1.30

......

Time: 128.779 ms Using a cursor to obtain 10 records at a time enables faster performance—it only takes 0.36 milliseconds to complete the same task.

postgres=# begin;

BEGIN

Time: 0.095 ms

postgres=# declare cur cursor for with recursive skip as (

(

select (s_id,val)::r as r from sort_test where id in (select id from sort_test where s_id is not null order by s_id,id desc limit 1)

)

union all

(

select (

select (s_id,val)::r as r from sort_test where id in (select id from sort_test t where t.s_id>(s.r).s_id and t.s_id is not null order by s_id,id desc limit 1)

) from skip s where (s.r).s_id is not null

-- "where (s.r).s_id is not null" must be included. Otherwise it will create an endless loop.

)

select (t.r).s_id, (t.r).val from skip t where t.* is not null;

DECLARE CURSOR

Time: 0.841 ms

postgres=# fetch 10 from cur;

s_id | val

------+-------

0 | 83.55

1 | 91.62

2 | 72.70

3 | 45.46

4 | 99.97

5 | 17.04

6 | 8.96

7 | 25.83

8 | 28.10

9 | 26.19

(10 rows)

Time: 0.364 ms

Compared with the first example, data in this example is converged and sorted by value, and the largest value is required.

Assume that a sensor at each intersection continuously uploads vehicle traffic data at that intersection. The large screen displays the 10 intersections with the largest vehicle traffic.

I will still use the data in the preceding example for convenience.

postgres=# with recursive skip as (

(

select (s_id,val)::r as r from sort_test where id in (select id from sort_test where s_id is not null order by s_id,id desc limit 1)

)

union all

(

select (

select (s_id,val)::r as r from sort_test where id in (select id from sort_test t where t.s_id>(s.r).s_id and t.s_id is not null order by s_id,id desc limit 1)

) from skip s where (s.r).s_id is not null

-- "where (s.r).s_id is not null" must be included. Otherwise it will create an endless loop.

)

select (t.r).s_id, (t.r).val from skip t where t.* is not null order by 2 desc limit 10;

s_id | val

------+-------

997 | 99.99

2233 | 99.97

610 | 99.97

4 | 99.97

6735 | 99.96

545 | 99.93

2992 | 99.91

4747 | 99.90

543 | 99.89

7229 | 99.88

(10 rows)

Time: 126.052 ms It takes only 126 milliseconds to search 10 million records for the latest 10,000 values and return the 10 largest values.

Compared with the two preceding examples, this example has two more dimensions:

One is the time dimension, which allows users to select a specific time period for analysis. The other is the region dimension, which allows users to specify a specific region to obtain data related to that region.

Think:

Unlike B-tree indexes, spatial indexes are not stored in a sequential manner. Spatial indexes are GiST indexes and use a structure similar to cluster partitioning. Therefore, it is impractical to combine spatial GiST indexes and index sorting to obtain the first record. This requires SORT on displayed records.

At the same time because the query criteria contains a time interval, it is also impractical to sort the non-driving columns (gid and VAL fields) of the index.

When can we combine querying and sorting of composite indexes?

Implicit sorting can only be used when all the columns before the column to be sorted are equivalent value queries and the order of the index is consistent with that of sorting. For example, index(a,b,c) supports where a=? and b=? order by c, but doesn't support where a> ? and b=? order by c.

For test convenience, re-plan test data and use points in place of longitudes and latitudes. In a realistic business scenario, you can use the geometry type.

create table test (

id serial8 primary key, -- Auto-increment series

gid int, -- Shop ID

val int, -- People flow at a shop

pos point, -- Shop location, indicated by points in place of longitudes and altitudes for test convenience

crt_time timestamp -- Time of uploading

); Insert 10 million pieces of test data, 10,000 shop IDs, and 100 million random points in a point matrix.

postgres=# insert into test (gid,val,pos,crt_time) select random()*10000, random()*100000, point(random()*10000, random()*10000), clock_timestamp() from generate_series(1,10000000);

postgres=# select min(crt_time),max(crt_time) from test;

min | max

----------------------------+----------------------------

2017-04-13 20:04:18.969268 | 2017-04-13 20:04:54.578339

(1 row) How can we speed up filtering and finding the largest value uploaded by spatio-temporal sensors?

Two optimization methods are available in different scenarios.

1. The total number of sensors (shops) is not too large (for example, 10,000 shops)

Use an index to search for the largest VAL of each GID and use partial indexes to avoid the long time problem; use CPU to complete the determination of points and surfaces.

Example

If the minimum time range that we allow users to select is two hours, we can create a partial index every two hours. (Using so many partial indexes is neither proper nor reasonable. It is advised to create a partition every two hours after the optimization of partitioned tables in PostgreSQL 10.)

create index idx_test_1 on test (gid, val desc) where crt_time between '2017-04-13 20:04:18.969268' and '2017-04-13 20:04:30.969268'; This interval includes a total of around 3,500,000 pieces of data.

postgres=# select count(*) from test where crt_time between '2017-04-13 20:04:18.969268' and '2017-04-13 20:04:30.969268';

count

---------

3461005

(1 row) Use this partial index and recursive calls to obtain the largest values among all shops in this interval. Then obtain data related to a specific region according to the point and surface determination and sort data to find the 10 largest values.

with recursive skip as (

(

select t0 from test t0 where id in

(select id from test where gid is not null and crt_time between '2017-04-13 20:04:18.969268' and '2017-04-13 20:04:30.969268' order by gid,val desc limit 1) -- The time parameter. Obtain the largest val of the smallest GID. Use it as a startup record.

)

union all

(

select (

select t1 from test t1 where id in (select id from test t where t.gid > (s.t0).gid and t.gid is not null

and crt_time between '2017-04-13 20:04:18.969268' and '2017-04-13 20:04:30.969268' -- The time parameter

order by gid,val desc limit 1)

) from skip s where (s.t0).gid is not null

) -- "where (s.t0).gid is not null" must be included. Otherwise, it will create an endless loop.

)

select (t.t0).* from skip t where t.* is not null

and circle '((5000,5000), 1000)' @> (t.t0).pos -- The region parameter

order by (t.t0).val desc limit 10; -- Find the top 10 shops Results are returned in 135 milliseconds

id | gid | val | pos | crt_time

---------+------+-------+-------------------------------------+----------------------------

1754353 | 4001 | 99997 | (4755.64117543399,5253.53815406561) | 2017-04-13 20:04:24.563999

600729 | 5874 | 99996 | (5507.96090625226,4394.04523000121) | 2017-04-13 20:04:20.851141

1137330 | 4248 | 99995 | (4332.14340358973,4383.84034205228) | 2017-04-13 20:04:22.575639

2609044 | 7209 | 99995 | (5809.22217573971,4967.18854177743) | 2017-04-13 20:04:27.328745

1330926 | 2834 | 99994 | (4153.9505450055,4986.64934188128) | 2017-04-13 20:04:23.197925

208578 | 3439 | 99994 | (4186.14753056318,5103.39797474444) | 2017-04-13 20:04:19.598547

703010 | 5736 | 99993 | (4913.89285307378,4628.21466382593) | 2017-04-13 20:04:21.178653

298380 | 7680 | 99992 | (4539.91844784468,4454.29485291243) | 2017-04-13 20:04:19.884725

996318 | 7658 | 99992 | (4462.14715018868,5504.16304729879) | 2017-04-13 20:04:22.122626

3120169 | 3261 | 99991 | (4814.33014851063,4505.81138487905) | 2017-04-13 20:04:28.98197

(10 rows)

Time: 135.480 ms The implementation code is as follows

------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Limit (cost=937.82..937.83 rows=1 width=40) (actual time=147.241..147.243 rows=10 loops=1)

Output: ((t.t0).id), ((t.t0).gid), ((t.t0).val), ((t.t0).pos), ((t.t0).crt_time)

Buffers: shared hit=80066

CTE skip

-> Recursive Union (cost=1.00..935.54 rows=101 width=64) (actual time=0.037..141.284 rows=10002 loops=1)

Buffers: shared hit=80066

-> Nested Loop (cost=1.00..9.03 rows=1 width=64) (actual time=0.036..0.036 rows=1 loops=1)

Output: t0.*

Inner Unique: true

Buffers: shared hit=8

-> HashAggregate (cost=0.57..0.58 rows=1 width=8) (actual time=0.022..0.023 rows=1 loops=1)

Output: test.id

Group Key: test.id

Buffers: shared hit=4

-> Limit (cost=0.43..0.55 rows=1 width=16) (actual time=0.017..0.018 rows=1 loops=1)

Output: test.id, test.gid, test.val

Buffers: shared hit=4

-> Index Scan using idx_test_1 on public.test (cost=0.43..431864.13 rows=3461209 width=16) (actual time=0.017..0.017 rows=1 loops=1)

Output: test.id, test.gid, test.val

Index Cond: (test.gid IS NOT NULL)

Buffers: shared hit=4

-> Index Scan using test_pkey on public.test t0 (cost=0.43..8.45 rows=1 width=72) (actual time=0.012..0.012 rows=1 loops=1)

Output: t0.*, t0.id

Index Cond: (t0.id = test.id)

Buffers: shared hit=4

-> WorkTable Scan on skip s (cost=0.00..92.45 rows=10 width=32) (actual time=0.014..0.014 rows=1 loops=10002)

Output: (SubPlan 1)

Filter: ((s.t0).gid IS NOT NULL)

Rows Removed by Filter: 0

Buffers: shared hit=80058

SubPlan 1

-> Nested Loop (cost=1.20..9.22 rows=1 width=64) (actual time=0.013..0.013 rows=1 loops=10001)

Output: t1.*

Inner Unique: true

Buffers: shared hit=80058

-> HashAggregate (cost=0.76..0.77 rows=1 width=8) (actual time=0.009..0.009 rows=1 loops=10001)

Output: t_1.id

Group Key: t_1.id

Buffers: shared hit=40033

-> Limit (cost=0.43..0.75 rows=1 width=16) (actual time=0.008..0.008 rows=1 loops=10001)

Output: t_1.id, t_1.gid, t_1.val

Buffers: shared hit=40033

-> Index Scan using idx_test_1 on public.test t_1 (cost=0.43..369056.35 rows=1153736 width=16) (actual time=0.008..0.008 rows=1 loops=10001)

Output: t_1.id, t_1.gid, t_1.val

Index Cond: ((t_1.gid > (s.t0).gid) AND (t_1.gid IS NOT NULL))

Buffers: shared hit=40033

-> Index Scan using test_pkey on public.test t1 (cost=0.43..8.45 rows=1 width=72) (actual time=0.003..0.003 rows=1 loops=10000)

Output: t1.*, t1.id

Index Cond: (t1.id = t_1.id)

Buffers: shared hit=40025

-> Sort (cost=2.28..2.29 rows=1 width=40) (actual time=147.240..147.241 rows=10 loops=1)

Output: ((t.t0).id), ((t.t0).gid), ((t.t0).val), ((t.t0).pos), ((t.t0).crt_time)

Sort Key: ((t.t0).val) DESC

Sort Method: top-N heapsort Memory: 26kB

Buffers: shared hit=80066

-> CTE Scan on skip t (cost=0.00..2.27 rows=1 width=40) (actual time=0.252..147.138 rows=317 loops=1)

Output: (t.t0).id, (t.t0).gid, (t.t0).val, (t.t0).pos, (t.t0).crt_time

Filter: ((t.* IS NOT NULL) AND ('<(5000,5000),1000>'::circle @> (t.t0).pos))

Rows Removed by Filter: 9685

Buffers: shared hit=80066

Planning time: 0.508 ms

Execution time: 147.505 ms

(62 rows) 2. The number of shops is too large, but after the spatio-temporal convergence, the number of records is relatively small (for example, millions of records)

In this case, consider using partition tables by time. Then construct spatial indexes.

Use a time condition to locate the specified partition and use spatial indexes to filter data. Use few CPU resources and compute the filtered data to obtain the top 10 shops.

Example

2.1. Partition a table by time (for example, a partition every two hours; the reason has been explained above, and will not be discussed here.)

Assume that around 10 million pieces of data are generated every two hours. 2.2. Create a spatial index

postgres=# create index idx_test_gist on test using gist(pos);

CREATE INDEX 2.3. Create data perspectives

When a time condition is entered in SQL, PostgreSQL automatically locates the target table partition. Here we directly write into the TEST table for convenience.

Use the window query to obtain the top SQL

select * from

(

select row_number() over(partition by gid order by val desc) as rn, * from test

where

circle '((5000,5000), 1000)' @> pos -- The region parameter

) t

where rn = 1 -- Find the largest value of each shop in this interval

order by val desc limit 10; -- Find the 10 largest shop values Efficiency

rn | id | gid | val | pos | crt_time

----+---------+------+-------+-------------------------------------+----------------------------

1 | 7859807 | 2311 | 99999 | (4900.04640072584,4950.79724118114) | 2017-04-13 20:04:46.013424

1 | 4658616 | 3699 | 99999 | (5625.03716442734,5338.90711143613) | 2017-04-13 20:04:35.467025

1 | 1754353 | 4001 | 99997 | (4755.64117543399,5253.53815406561) | 2017-04-13 20:04:24.563999

1 | 6076598 | 4610 | 99997 | (5679.03681658208,4793.08029171079) | 2017-04-13 20:04:40.09587

1 | 6139261 | 4069 | 99997 | (5225.87833926082,4101.83480009437) | 2017-04-13 20:04:40.301817

1 | 600729 | 5874 | 99996 | (5507.96090625226,4394.04523000121) | 2017-04-13 20:04:20.851141

1 | 4281282 | 9720 | 99996 | (5036.95292398334,4731.64941649884) | 2017-04-13 20:04:34.237957

1 | 5579952 | 1503 | 99996 | (4271.09604235739,5250.28191972524) | 2017-04-13 20:04:38.469311

1 | 5310205 | 1317 | 99995 | (4439.0160869807,4796.70224711299) | 2017-04-13 20:04:37.590451

1 | 1137330 | 4248 | 99995 | (4332.14340358973,4383.84034205228) | 2017-04-13 20:04:22.575639

(10 rows)

Time: 633.342 ms Implementation code

Limit (cost=39265.88..39265.91 rows=10 width=48) (actual time=730.704..730.706 rows=10 loops=1)

Output: t.rn, t.id, t.gid, t.val, t.pos, t.crt_time

Buffers: shared hit=317037, temp read=1921 written=1928

-> Sort (cost=39265.88..39266.01 rows=50 width=48) (actual time=730.702..730.703 rows=10 loops=1)

Output: t.rn, t.id, t.gid, t.val, t.pos, t.crt_time

Sort Key: t.val DESC

Sort Method: top-N heapsort Memory: 26kB

Buffers: shared hit=317037, temp read=1921 written=1928

-> Subquery Scan on t (cost=38939.80..39264.80 rows=50 width=48) (actual time=520.846..728.927 rows=10001 loops=1)

Output: t.rn, t.id, t.gid, t.val, t.pos, t.crt_time

Filter: (t.rn = 1)

Rows Removed by Filter: 303477

Buffers: shared hit=317037, temp read=1921 written=1928

-> WindowAgg (cost=38939.80..39139.80 rows=10000 width=48) (actual time=520.844..703.933 rows=313478 loops=1)

Output: row_number() OVER (?), test.id, test.gid, test.val, test.pos, test.crt_time

Buffers: shared hit=317037, temp read=1921 written=1928

-> Sort (cost=38939.80..38964.80 rows=10000 width=40) (actual time=520.837..594.505 rows=313478 loops=1)

Output: test.gid, test.val, test.id, test.pos, test.crt_time

Sort Key: test.gid, test.val DESC

Sort Method: external merge Disk: 15368kB

Buffers: shared hit=317037, temp read=1921 written=1928

-> Index Scan using idx_test_gist on public.test (cost=0.42..38275.42 rows=10000 width=40) (actual time=0.240..336.235 rows=313478 loops=1)

Output: test.gid, test.val, test.id, test.pos, test.crt_time

Index Cond: ('<(5000,5000),1000>'::circle @> test.pos)

Buffers: shared hit=317037

Planning time: 0.140 ms

Execution time: 734.226 ms

(27 rows) Allow PostgreSQL to support spatial GRID partitions (In fact, you can now use inheritance to implement this, and use grid+mod to determine which partition data should be inserted.)

For data perspective by the spatial and temporal dimensions, we can create spatial Grid partitions and sub-partitions of a time partition.

During the process of data retrieval, locate the target sub-partitions directly by using table partitions. Use B-tree indexes and recursive calls to filter peak data of each shop in intended intervals and use few CPU resources to calculate and find the top shops.

Querying can be implemented in no more than 100 milliseconds by using this method to create spatio-temporal four-dimensional data perspective.

1. In example 1 and example 2, since the application layer uses recent data, historical data is not involved. In addition to recursive optimization, another two optimization methods are available.

Method 1: Do not record historical data and replace insert with insert or update. By using this method, the results of the sort_test are always the latest values.

create unlogged table sort_test(

s_id int primary key, -- Sensor ID

val numeric(10,2), -- Sensor value

crt_time timestamp default clock_timestamp() -- Report time

);

insert into sort_test(s_id,val,crt_time) values (?,?,?) on conflict (s_id) do update set val=excluded.val,crt_time=excluded.crt_time; Method 2: Record both historical data and the latest status. Use a trigger to complete this job.

Decomposition:

When data is inserted, the last record is automatically updated. (The volume of written data equals that of updated data)

Example

Create a status table to record the latest status and create a trigger to automatically update the status table when historical data is written.

create unlogged table hist(

id serial8 primary key, -- Auto-increment primary key

s_id int, -- Sensor ID

val numeric(10,2), -- Sensor value

crt_time timestamp default clock_timestamp() -- Report time

);

create unlogged table hist_stat(

s_id int primary key, -- Sensor ID

val numeric(10,2), -- Sensor value

crt_time timestamp default clock_timestamp() -- Report time

);

create or replace function tg() returns trigger as

$$

declare

begin

insert into hist_stat (s_id,val,crt_time) values (NEW.s_id,NEW.val,NEW.crt_time) on conflict (s_id) do update set val=excluded.val,crt_time=excluded.crt_time;

return null;

end;

$$

language plpgsql strict;

create trigger tg after insert on hist for each row execute procedure tg(); When data is inserted, the status is automatically updated.

postgres=# insert into hist(s_id,val) values(1,1);

INSERT 0 1

postgres=# insert into hist(s_id,val) values(1,1);

INSERT 0 1

postgres=# insert into hist(s_id,val) values(1,1);

INSERT 0 1

postgres=# insert into hist(s_id,val) values(1,1);

INSERT 0 1

postgres=# insert into hist(s_id,val) values(1,1);

INSERT 0 1

postgres=# select * from hist;

id | s_id | val | crt_time

----+------+------+----------------------------

3 | 1 | 1.00 | 2017-04-13 22:23:25.165286

4 | 1 | 1.00 | 2017-04-13 22:23:26.23929

5 | 1 | 1.00 | 2017-04-13 22:23:26.646152

6 | 1 | 1.00 | 2017-04-13 22:23:26.991189

7 | 1 | 1.00 | 2017-04-13 22:23:27.376265

(5 rows)

postgres=# select * from hist_stat ;

s_id | val | crt_time

------+------+----------------------------

1 | 1.00 | 2017-04-13 22:23:27.376265

(1 row) When querying data, directly query the latest status table. Recursive calls are no longer required.

postgres=# select * from hist_stat ;

s_id | val | crt_time

------+------+----------------------------

1 | 1.00 | 2017-04-13 22:23:27.376265

(1 row) 2. In example 3, historical data is analyzed by two dimensions: time and space.

Therefore, we can use one of the two dimensions as a partition and scramble data to create an index by the other dimension in that partition.

This allows data to be converged as much as possible.

Partitions are supported for both space and time. (It is recommended to use grid expressions for space partitions in order to easily find and locate partitions.)

Efficient Search on Massive Data with Multidimensional Attributes in Time, Spatial, and Object

PostgreSQL Independent Event Correlation Analysis - User-Car Fitting

Alibaba Container Service - April 8, 2025

Alibaba Clouder - June 19, 2018

Alibaba Clouder - July 15, 2020

Alibaba Clouder - March 17, 2021

Alibaba Cloud Native Community - February 13, 2026

Hologres - July 16, 2021

IoT Platform

IoT Platform

Provides secure and reliable communication between devices and the IoT Platform which allows you to manage a large number of devices on a single IoT Platform.

Learn More IoT Solution

IoT Solution

A cloud solution for smart technology providers to quickly build stable, cost-efficient, and reliable ubiquitous platforms

Learn More PolarDB for PostgreSQL

PolarDB for PostgreSQL

Alibaba Cloud PolarDB for PostgreSQL is an in-house relational database service 100% compatible with PostgreSQL and highly compatible with the Oracle syntax.

Learn More AnalyticDB for PostgreSQL

AnalyticDB for PostgreSQL

An online MPP warehousing service based on the Greenplum Database open source program

Learn MoreMore Posts by digoal