Modern data-driven applications often face challenges in delivering real-time customer insights due to repeated processing of large raw datasets, resulting in increased latency, higher computational costs, and inefficient query performance. This is particularly critical in use cases such as customer segmentation, churn prediction, and behavioral analysis, where low-latency access to precomputed features is essential.

To address these challenges, this article proposes a lightweight analytics architecture that leverages Alibaba Cloud Object Storage Service (OSS) as a scalable and durable data lake, combined with Tair (Redis OSS-compatible) as a high-performance in-memory caching layer. The solution focuses on decoupling data storage from data serving, enabling efficient feature retrieval without repeated computation.

The primary objective of this implementation is to design a simple yet production-ready pipeline that supports real-time access to processed customer data. Specifically, the goals are to reduce query latency to milliseconds, optimize resource utilization by avoiding redundant processing, and enable fast and flexible access to analytics outputs such as customer segmentation, churn risk classification, and behavioral features.

In a typical retail analytics scenario, data is stored in raw format and must be preprocessed before it can be analyzed. Querying large datasets this way leads to high latency, repeated and inefficient computation, and slow segmentation or targeting, all of which hurt real-time applications that need instant access to insights. To overcome these limitations, the architecture decouples the system into a storage layer, which uses OSS for scalable and durable data persistence, and a serving layer, which uses Tair (Redis OSS-compatible) to provide low-latency access to precomputed analytical results.

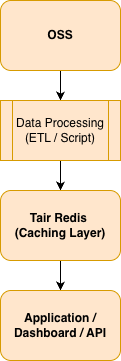

The solution follows a simple yet effective architecture that separates data storage, processing, and serving layers to optimize performance and scalability.

This design ensures a clear separation of responsibilities:

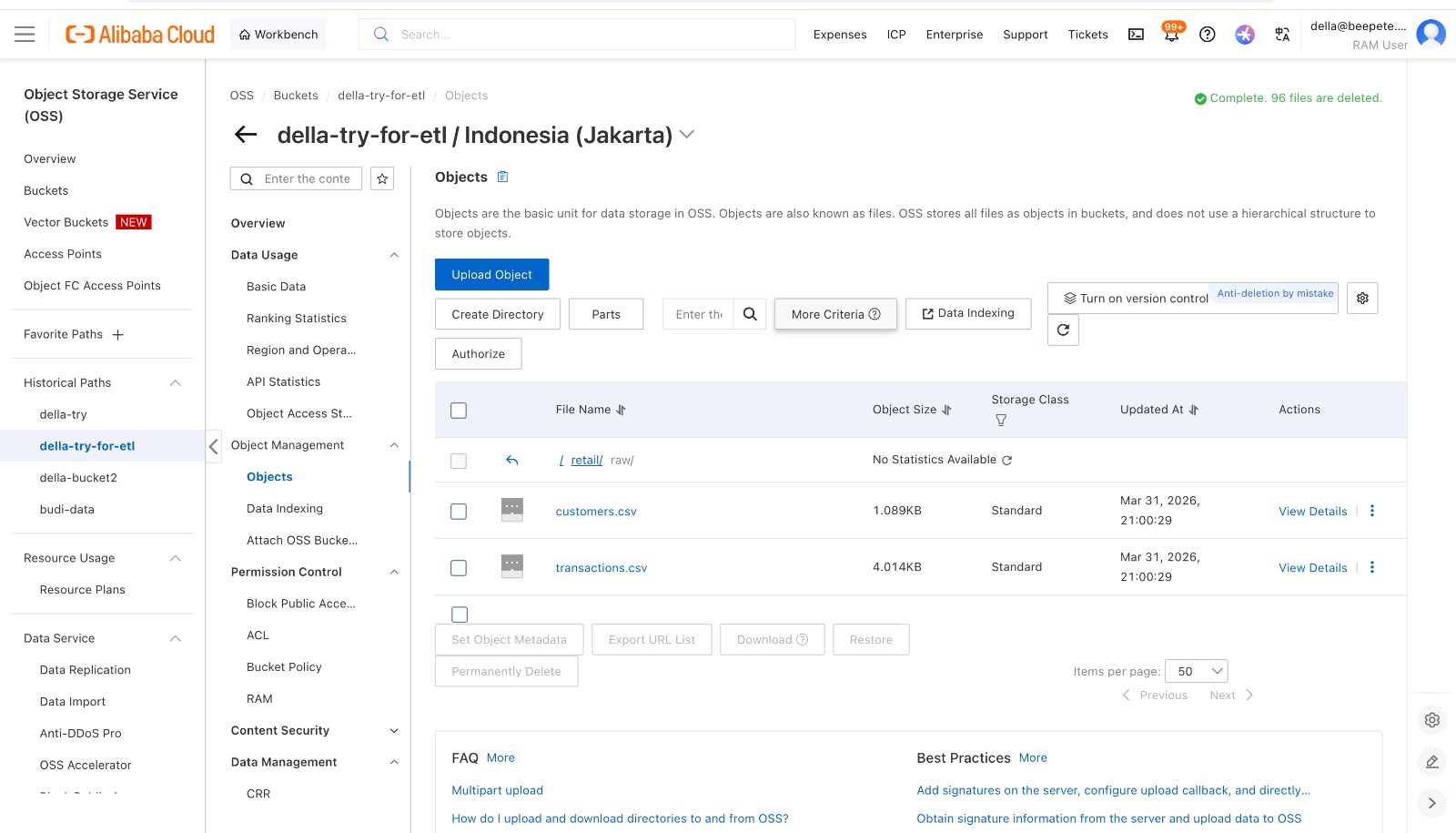

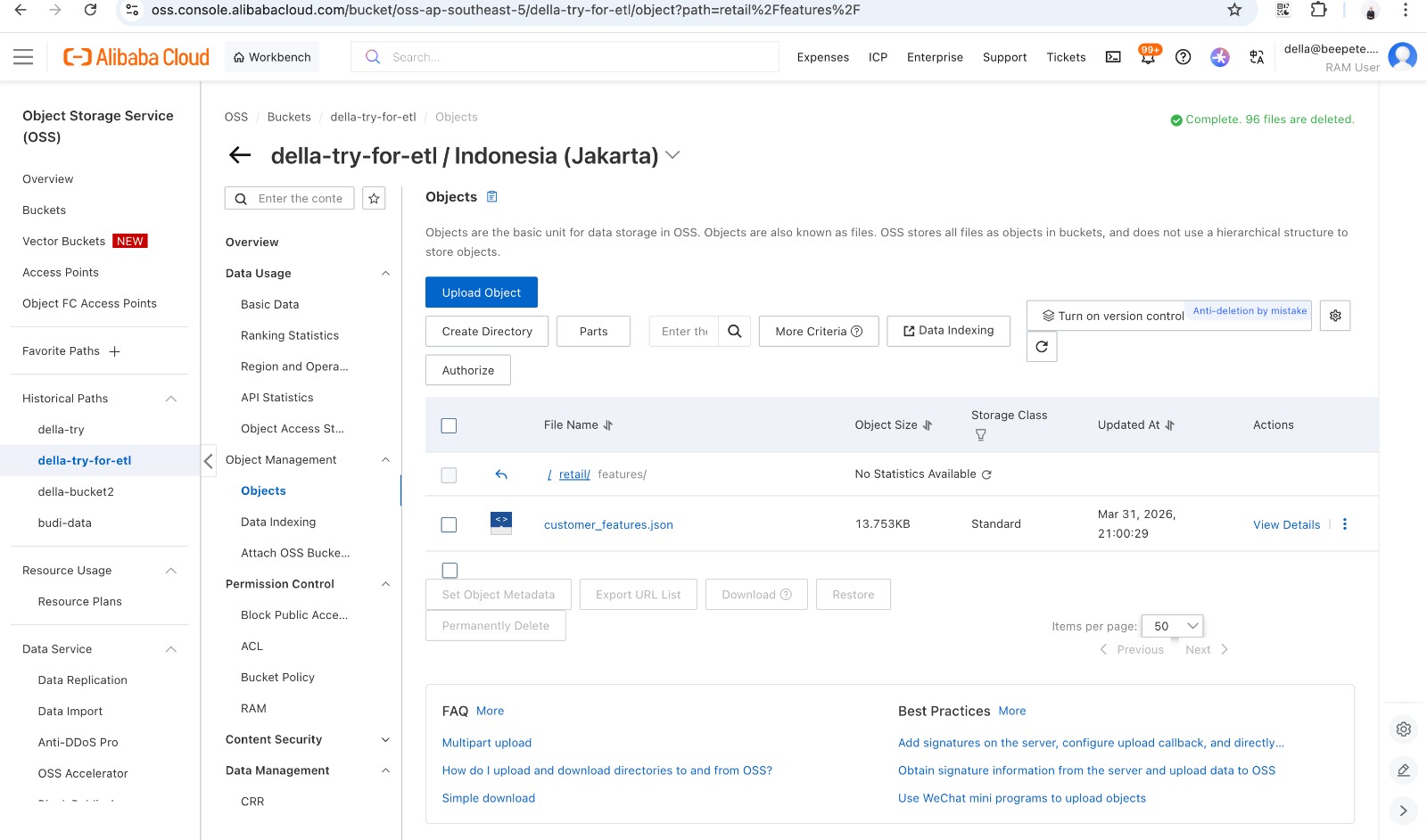

Upload Raw Data to OSS

First, upload your datasets into OSS:

These files contain:

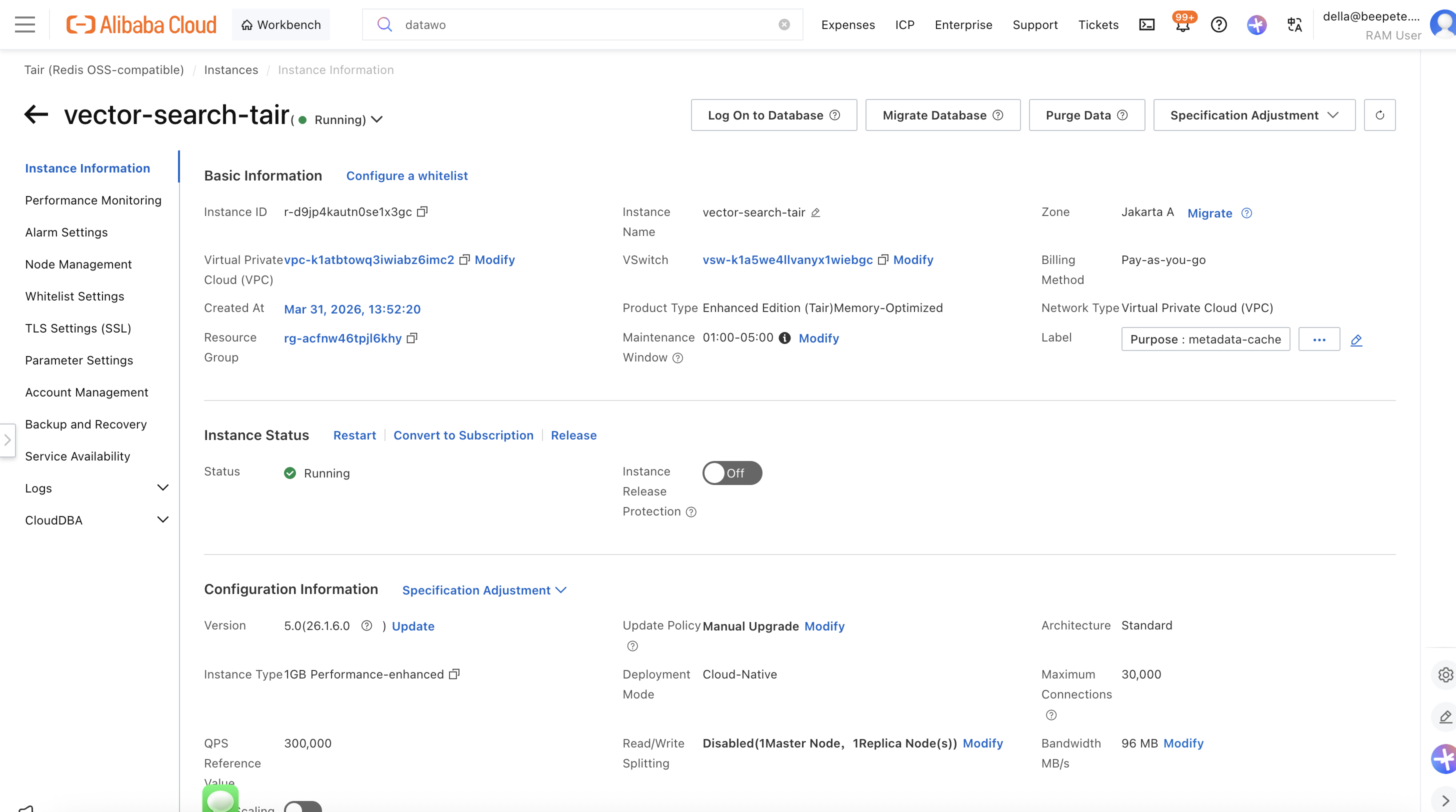
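The upload step can also be scripted. The following is a minimal sketch, assuming the official `oss2` Python SDK and a local folder of CSV files; the `upload_datasets` helper, the `raw/` prefix, and the placeholder credentials are illustrative and not taken from the original setup.

```python
from pathlib import Path


def upload_datasets(bucket, local_dir, prefix="raw/"):
    """Upload every CSV file in local_dir to OSS under the given key prefix.

    `bucket` is expected to be an oss2.Bucket, or any object that exposes
    put_object_from_file(key, filename).
    """
    uploaded = []
    for path in sorted(Path(local_dir).glob("*.csv")):
        key = f"{prefix}{path.name}"            # object key inside the bucket
        bucket.put_object_from_file(key, str(path))
        uploaded.append(key)
    return uploaded


# Hypothetical wiring (requires `pip install oss2` and real credentials):
# import oss2
# auth = oss2.Auth("<access-key-id>", "<access-key-secret>")
# bucket = oss2.Bucket(auth, "https://oss-<region>.aliyuncs.com", "<bucket-name>")
# upload_datasets(bucket, "./data", prefix="retail/raw/")
```

Keeping the bucket as a parameter makes the helper easy to dry-run against a stub before pointing it at a real bucket.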

Create a Tair Redis instance with the following configuration:

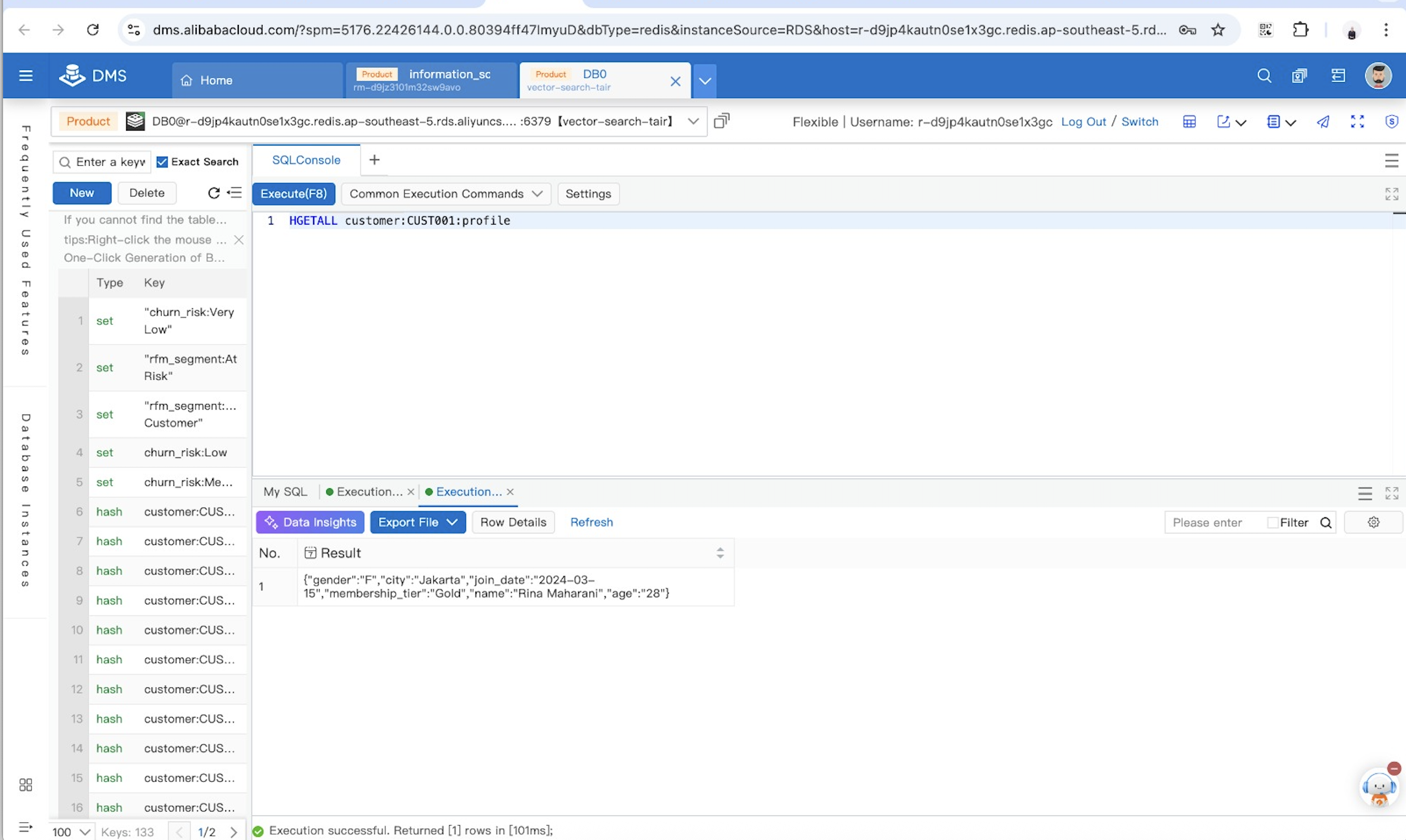

Store Customer Profile in Redis

Log on to the database, then use a Redis Hash to store structured data:

This allows efficient retrieval of customer attributes.

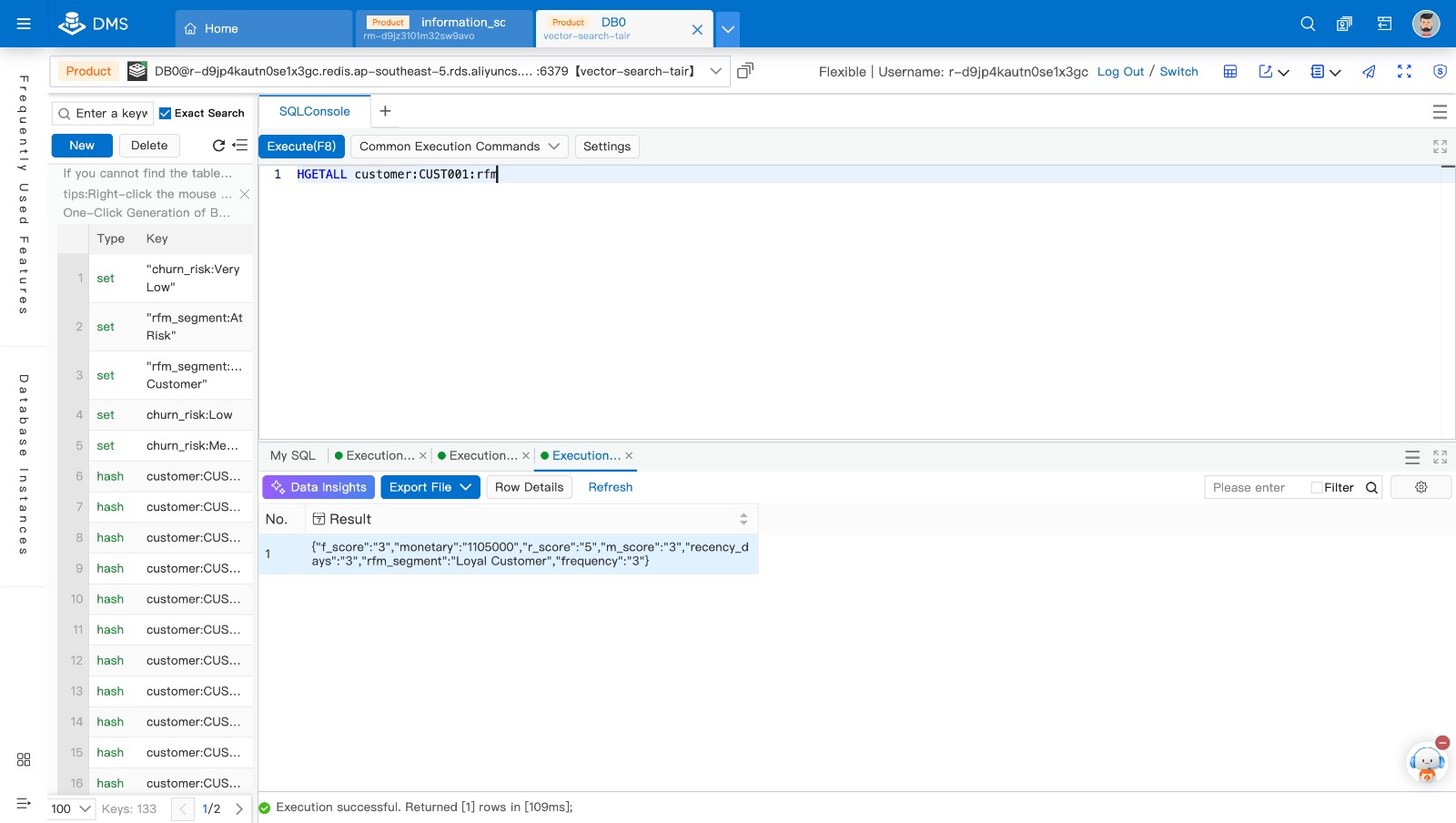
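For readers who prefer to load profiles from application code rather than the console, here is a small sketch using the `redis-py` client, which works with Redis-compatible endpoints. The key pattern `customer:<id>:profile` and the field names are assumptions made for illustration; adapt them to the actual schema.

```python
def profile_key(customer_id):
    """Build the hash key for one customer (naming scheme is an assumption)."""
    return f"customer:{customer_id}:profile"


def save_profile(r, customer_id, profile):
    """Write all profile fields with a single HSET call."""
    r.hset(profile_key(customer_id), mapping=profile)


def load_profile(r, customer_id):
    """Return every field of the profile hash (empty dict if it is missing)."""
    return r.hgetall(profile_key(customer_id))


# Hypothetical wiring (requires `pip install redis` and a reachable instance):
# import redis
# r = redis.Redis(host="<tair-endpoint>", port=6379,
#                 password="<password>", decode_responses=True)
# save_profile(r, "C001", {"name": "Alice", "city": "Jakarta"})
# print(load_profile(r, "C001"))
```

One hash per customer keeps related attributes under a single key, so a profile lookup is one `HGETALL` instead of several `GET` calls.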

Store RFM Analysis Results

This enables direct segmentation without recomputation.

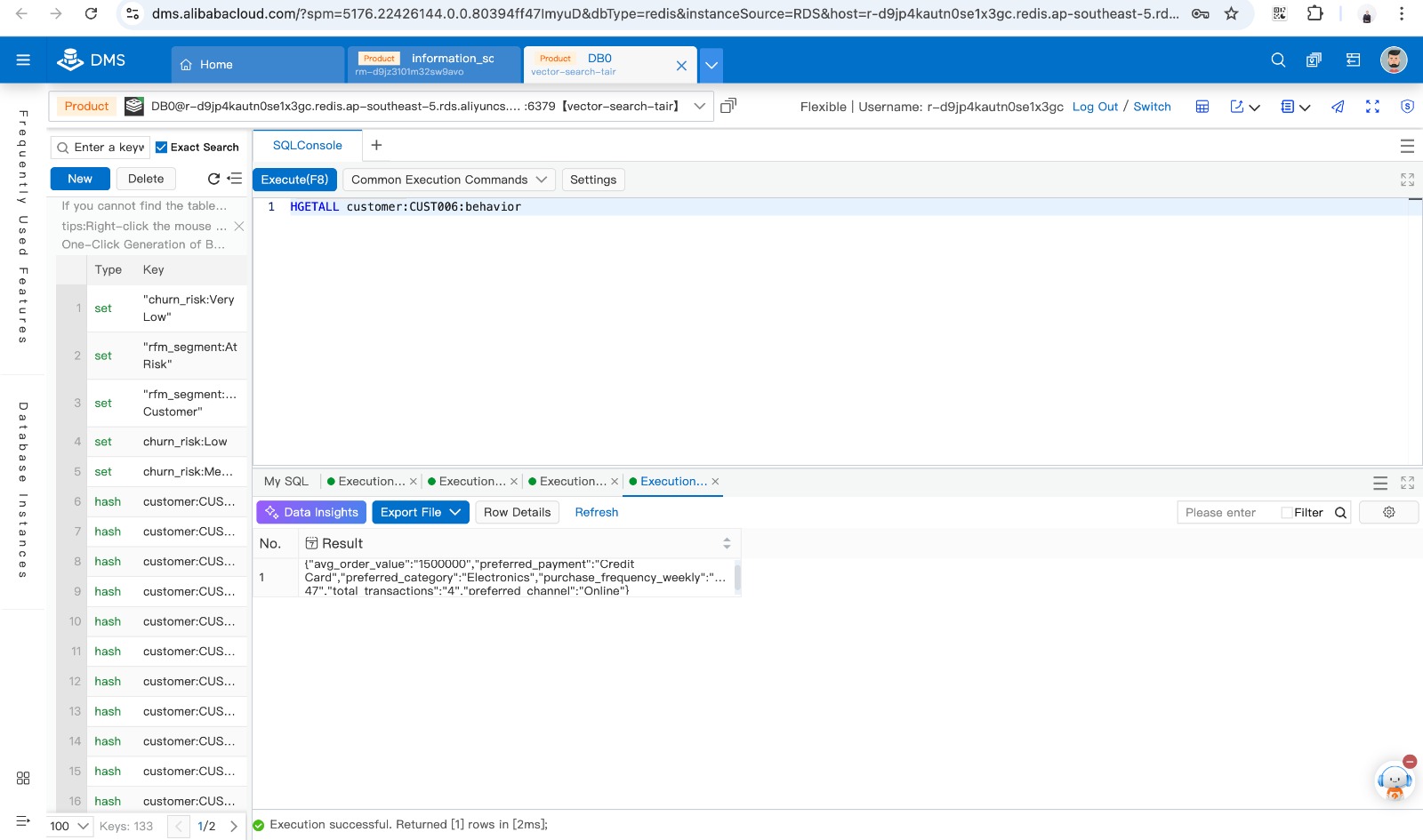
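When RFM results exist for many customers, writing them one command at a time adds a network round trip per customer. The sketch below batches the writes with a `redis-py` pipeline. The row layout (`customer_id`, `recency`, `frequency`, `monetary`, `segment`) and the key pattern `customer:<id>:rfm` are assumed here, not taken from the original dataset.

```python
def store_rfm(r, rows):
    """Queue one HSET per customer and send them in a single batch.

    `rows` is an iterable of dicts that each contain a 'customer_id'
    plus the RFM fields to store. Returns the number of customers written.
    """
    pipe = r.pipeline(transaction=False)   # batching only, no MULTI/EXEC
    count = 0
    for row in rows:
        fields = {k: v for k, v in row.items() if k != "customer_id"}
        pipe.hset(f"customer:{row['customer_id']}:rfm", mapping=fields)
        count += 1
    pipe.execute()                         # flush all queued commands
    return count


# Example call with made-up values:
# store_rfm(r, [
#     {"customer_id": "C001", "recency": 5, "frequency": 12,
#      "monetary": 840.0, "segment": "champions"},
# ])
```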

Store Customer Behavior Data

This data supports personalization and recommendations.

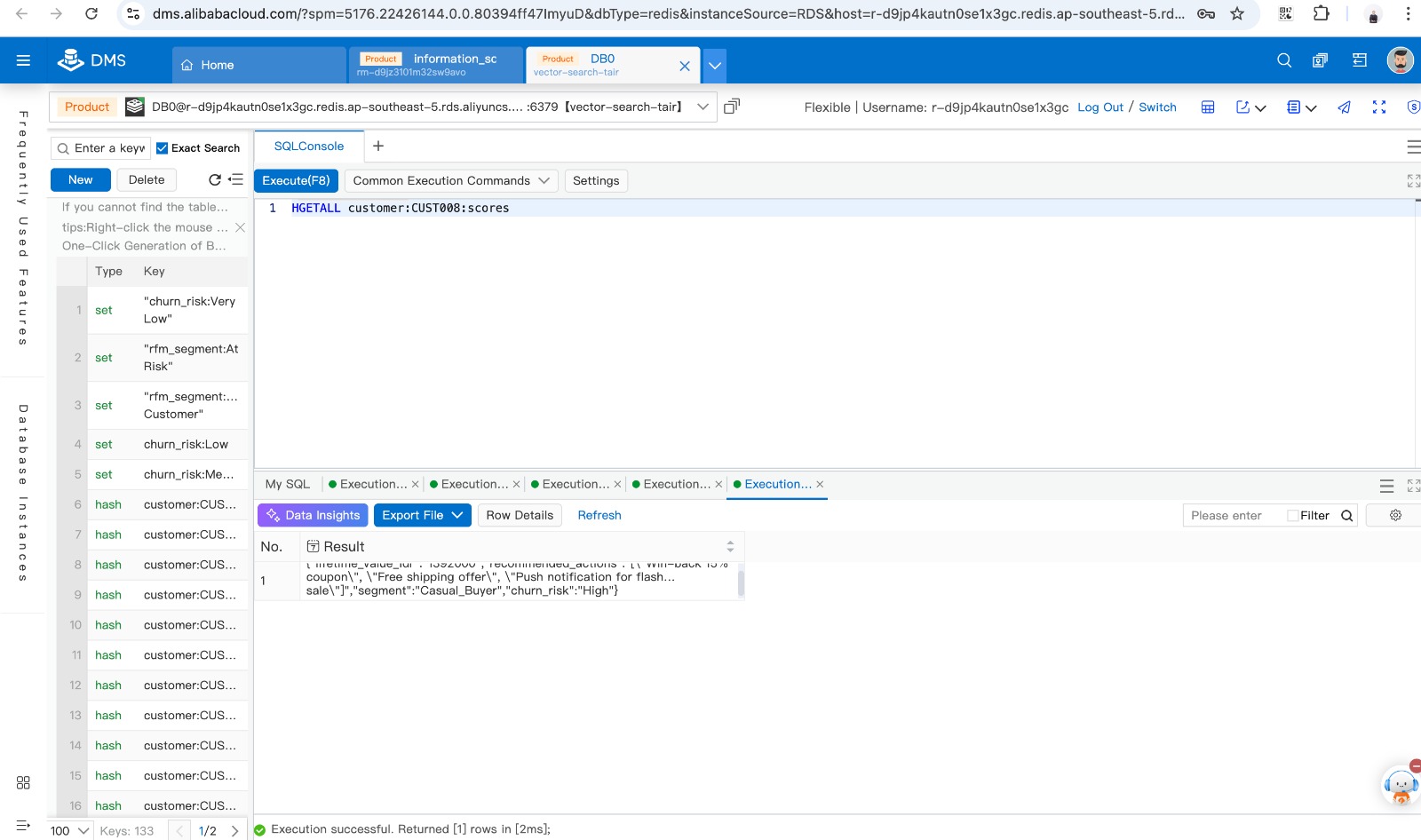

Store Churn Risk and Recommendations

This enables real-time decision making for marketing actions.
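To illustrate how a marketing service might consume this data, the sketch below reads two fields from a churn hash and turns them into an action. Everything specific here is hypothetical: the key pattern `customer:<id>:churn`, the `risk` and `recommendation` field names, and the simple rule itself. It also assumes a `redis-py` client created with `decode_responses=True`, so that values come back as strings.

```python
def marketing_action(r, customer_id):
    """Look up churn risk and recommendation, then pick an action.

    Returns "no-data" when the customer has no churn record, the stored
    recommendation (or a default) for high-risk customers, and "none"
    for everyone else. The rule is illustrative only.
    """
    risk, recommendation = r.hmget(
        f"customer:{customer_id}:churn", ["risk", "recommendation"]
    )
    if risk is None:
        return "no-data"
    if risk == "high":
        return recommendation or "retention-offer"
    return "none"
```

Because the decision needs only one `HMGET`, it can run inline in a request path without touching the raw data in OSS.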

Create Segmentation Index Using Redis Set

To enable fast querying, use Redis Set:

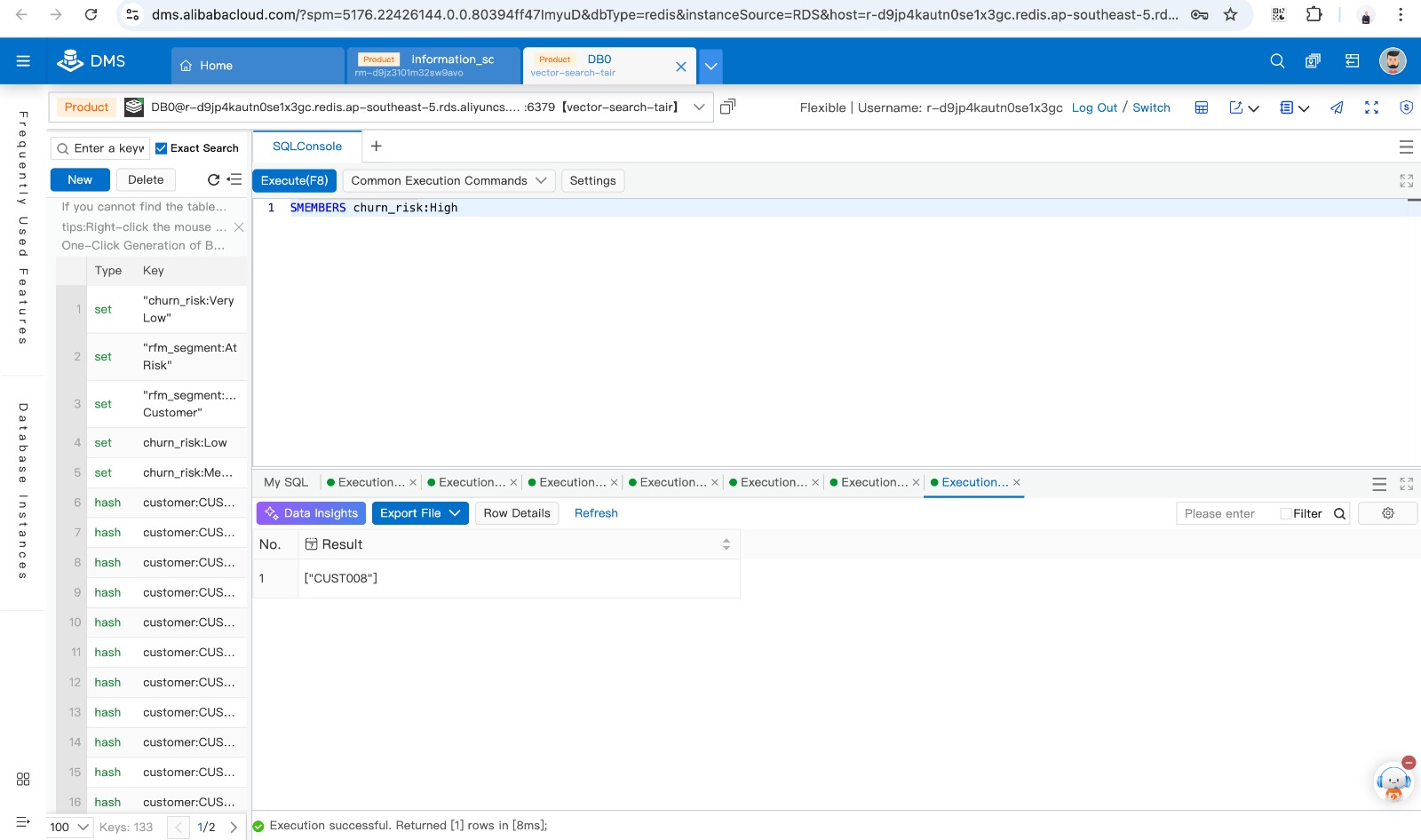

High churn customers:

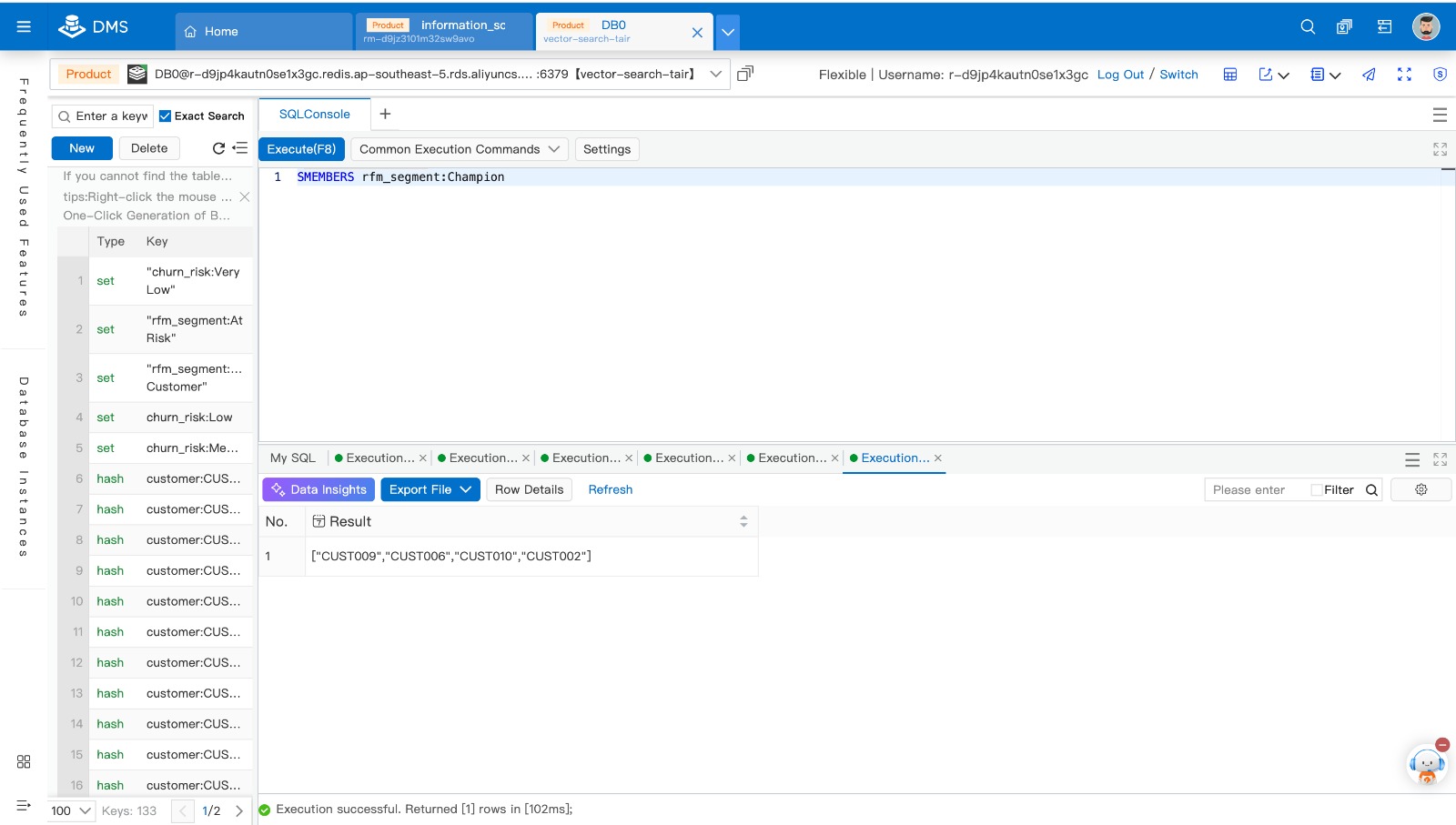

Champion segment:

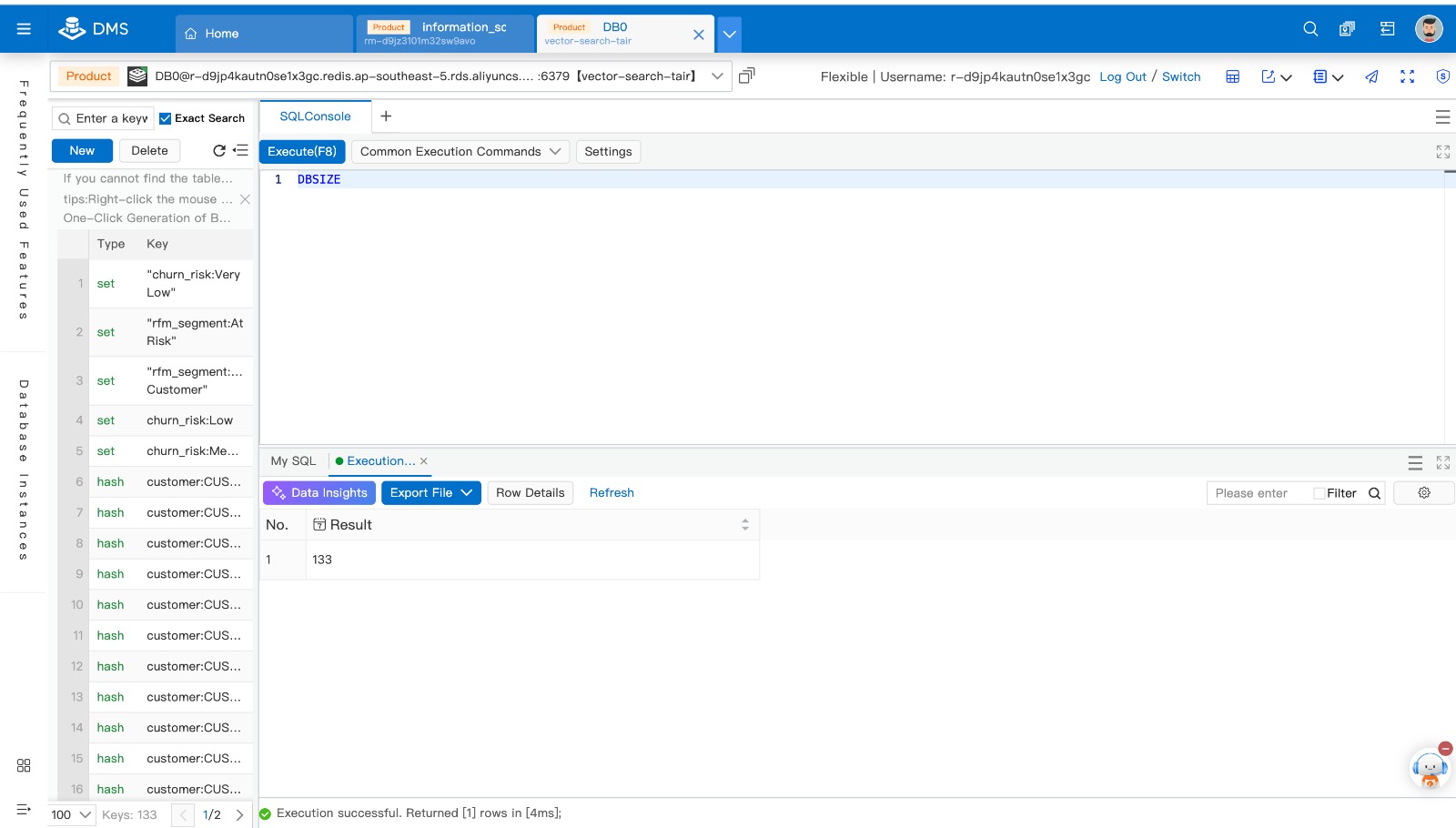
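The same index can be maintained and queried from code. This is a minimal sketch with `redis-py`; the set names `segment:<name>` and `churn:<level>` are assumed for illustration. Besides listing one set, it shows how `SINTER` could combine two indexes, for example to find champions who are also at high churn risk.

```python
def index_customer(r, customer_id, segment=None, churn_risk=None):
    """Add the customer ID to the relevant index sets."""
    if segment:
        r.sadd(f"segment:{segment}", customer_id)
    if churn_risk:
        r.sadd(f"churn:{churn_risk}", customer_id)


def customers_in(r, set_key):
    """Return all customer IDs stored in one index set."""
    return set(r.smembers(set_key))


def at_risk_champions(r):
    """Customers present in both the champions and the high-churn sets."""
    return set(r.sinter("segment:champions", "churn:high"))
```

Sets store each ID once, so re-running the indexing step after a refresh does not create duplicates.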

Verify Data Volume
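One way to check what was loaded is to compare the total key count with the size of each index set. The sketch below uses the `redis-py` methods `dbsize()` and `scard()`; the default set names are assumptions and should be replaced with the keys actually created.

```python
def data_volume(r, set_keys=("segment:champions", "churn:high")):
    """Report the total number of keys and the cardinality of each index set."""
    report = {"total_keys": r.dbsize()}   # DBSIZE: keys in the current database
    for key in set_keys:
        report[key] = r.scard(key)        # SCARD: members in the set (0 if absent)
    return report
```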

Result

With this architecture, raw and processed datasets are persistently stored in OSS as the system of record, while precomputed features are materialized and cached in Tair Redis using data structures such as Hashes and Sets. This design eliminates the need for on-the-fly computation and full dataset scans, enabling direct key-based access with millisecond-level latency. As a result, operations such as customer segmentation and churn-based targeting become simple key or set lookups rather than repeated processing jobs.

By integrating Object Storage Service (OSS) with Tair (Redis OSS-compatible), we can build an analytics pipeline that supports real-time applications. The architecture combines scalable, cost-efficient storage in OSS with low-latency data access through Tair, and keeps the system design simple enough to limit operational complexity. This makes the solution well suited for customer analytics, marketing automation, and recommendation systems, where fast and reliable access to processed data is essential.

This implementation demonstrates that a powerful real-time analytics solution does not always require complex infrastructure. By leveraging OSS as a data lake and Tair Redis as a caching layer, organizations can efficiently deliver fast, scalable, and production-ready data services with minimal overhead.
