By Shenlian

The fifth article in this series (Interpretation of the Source Code of OceanBase (5): Life of Tenant) covered the code for tenant creation and deletion and for resource isolation in the Community edition. This article gives a detailed explanation of the OceanBase storage engine.

This article answers the following questions about OceanBase:

Currently, two types of storage engines for databases exist in the industry:

Update-in-place: This type commonly adopts the B+Tree structure used in traditional relational databases (such as MySQL and Oracle).

Log-structured storage: This type adopts the LSMTree structure used in LevelDB, RocksDB, HBase, and BigTable.

To achieve the best possible database performance, scans need good spatial locality, and get/put operations need efficient indexes for locating rows. In addition, versioning, garbage collection, and compaction improve read performance but add overhead to the system as a whole. No existing storage engine meets all of these requirements at once.

So, OceanBase chooses to implement a self-developed storage engine without resorting to other existing open-source solutions.

In terms of architecture, the storage engine of OceanBase consists of two layers:

① The underlying distributed engine implements distributed features (such as linear expansion, Paxos replication, and distributed transactions) to achieve continuous availability.

② The top standalone engine combines some technologies of traditional relational databases and in-memory databases to achieve ultimate performance.

The sections below describe the standalone engine and distributed architecture.

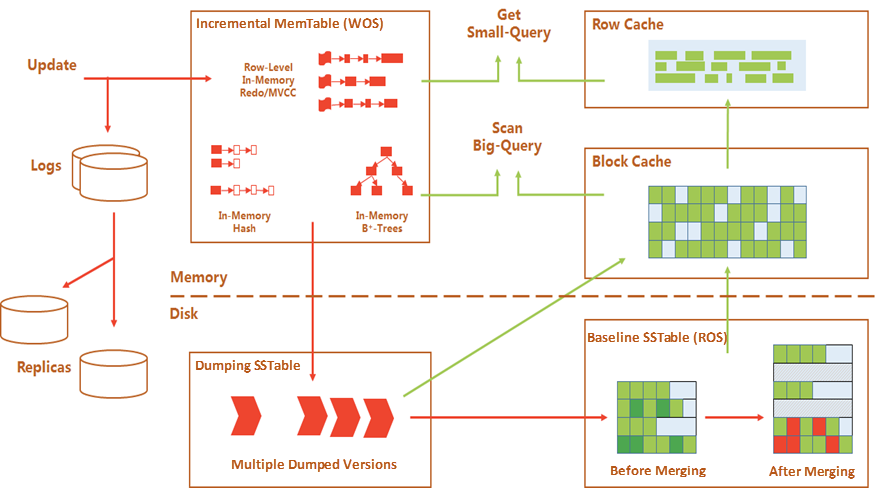

The storage engine of OceanBase features a hierarchical structure of LSMTree. Data is divided into two parts: baseline data and incremental data.

Baseline data is persisted on disk and is not modified after it is generated. It is called SSTable.

Incremental data lives in memory. User writes go to incremental data first, which is called MemTable. A redo log is used to guarantee the durability of transactions.

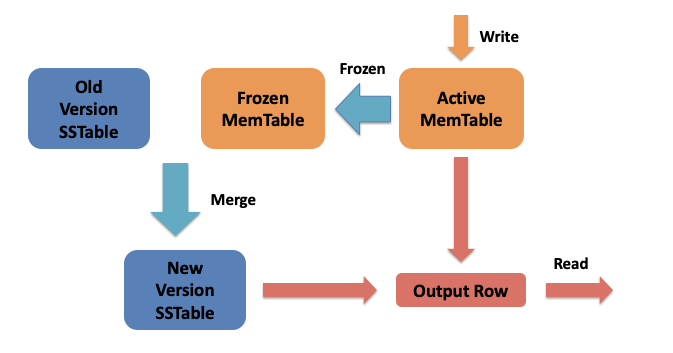

When MemTable reaches a certain threshold, the engine freezes it (Frozen MemTable) and opens a new MemTable (Active MemTable). The Frozen MemTable is dumped to an SSTable. Later, during merging (a compaction action specific to the LSMTree structure), the dumped SSTable is merged into the baseline SSTable to generate a new SSTable.

When searching, you need to merge the data in MemTable and SSTable to obtain the final search result.
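
The freeze, dump, merge, and read steps above can be sketched in a few lines. This is a minimal illustration, not OceanBase code: the class name `TinyLSM`, the row-count threshold, and the use of plain dictionaries in place of on-disk files are all assumptions, and deletes are not modeled.

```python
from typing import Optional


class TinyLSM:
    """Toy LSM-tree: one Active MemTable, Frozen MemTables, dumped SSTables, one baseline."""

    def __init__(self, freeze_threshold: int = 2):
        self.freeze_threshold = freeze_threshold  # hypothetical: counted in rows, not bytes
        self.active: dict = {}    # Active MemTable (in memory)
        self.frozen: list = []    # Frozen MemTables, newest last
        self.dumped: list = []    # dumped SSTables, newest last
        self.baseline: dict = {}  # baseline SSTable

    def put(self, key: str, value: str) -> None:
        self.active[key] = value
        if len(self.active) >= self.freeze_threshold:
            self.frozen.append(self.active)  # freeze the full MemTable
            self.active = {}                 # open a new Active MemTable

    def dump(self) -> None:
        """Turn every Frozen MemTable into an immutable, key-sorted SSTable."""
        for mem in self.frozen:
            self.dumped.append(dict(sorted(mem.items())))
        self.frozen = []

    def merge(self) -> None:
        """Fold dumped SSTables into a new baseline; the old baseline is not modified."""
        new_baseline = dict(self.baseline)
        for sstable in self.dumped:  # oldest first, so newer values win
            new_baseline.update(sstable)
        self.baseline = dict(sorted(new_baseline.items()))
        self.dumped = []

    def get(self, key: str) -> Optional[str]:
        """Read path: check the newest data first, then fall back to older layers."""
        layers = [self.active, *reversed(self.frozen), *reversed(self.dumped), self.baseline]
        for table in layers:
            if key in table:
                return table[key]
        return None
```

A read that finds the key in the Active MemTable never touches the older layers, which is why newer layers are checked first.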

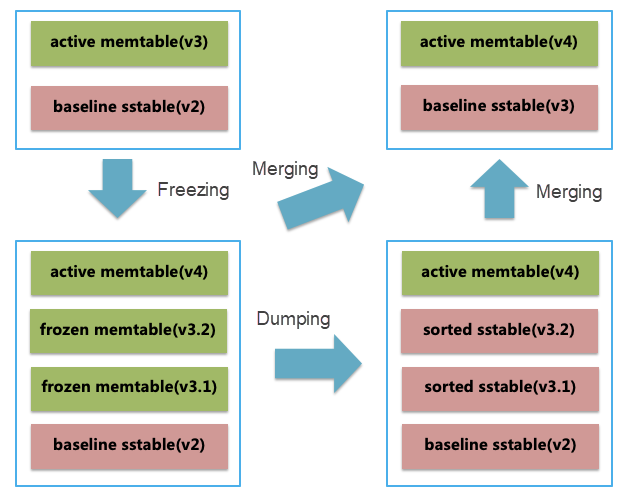

The system specifies different data versions for baseline data and incremental data. The data versions are continuously incremented.

Each time a new Active MemTable is generated, its version is set to the version of the previous MemTable plus 1. (In production, multiple dumps occur between two merges. All MemTables generated between two merges share one major version, and each MemTable is a minor version, such as v3.1 and v3.2 in the following figures.) After SSTable is merged with Frozen MemTable, the version of the new SSTable is set to the version of the Frozen MemTable it was merged with.

Advantages of the read/write splitting architecture: Baseline data is static and compresses easily, so storage costs are lower and query performance is higher. In addition, because baseline data is not modified by writes, the row-level cache does not suffer from write-induced invalidation.

Disadvantages of the read/write splitting architecture: The read path becomes longer. Data must be merged at read time, which can cost performance, so data files need to be merged periodically to reduce their number. We also introduce multi-layer caches to hold frequently accessed data.

Merging is an action unique to the storage engine based on the LSMTree architecture. It is used to merge baseline data and incremental data and is called major compaction in HBase and RocksDB.

OceanBase triggers merging during off-peak hours every day and releases memory through dumping during other hours. We call this daily merging. Data compression, data verification, materialized views, schema changes, and other things are finished within the merging window.

Merging brings many benefits but comes at a price because it puts pressure on the I/O and CPU of the system.

LSMTree databases in the industry generally have not solved this problem. OceanBase therefore adds several optimizations, including incremental merging, progressive merging, parallel merging, rotation merging, and I/O isolation.

Compared with a traditional relational database, daily merging can make better use of idle I/O during off-peak hours to provide better support for data compression, verification, and materialized views.

Dump means storing incremental data in the memory on disk. It is similar to the minor compaction of HBase and RocksDB. It can relieve memory pressure brought by the LSMTree architecture.

OceanBase implements a hierarchical dump strategy to balance reads and writes. This prevents the read slowdown caused by too many dumped SSTables and also avoids the write amplification during dumps caused by a large number of random writes from the business.

OceanBase also isolates resources (including CPU, memory, disk I/O, and network I/O) to avoid excessive consumption of resources caused by dumping and reduce the impact on user requests.

The optimization in these aspects helps offset the impact of dumping on performance, making the performance curve for OceanBase in TPC-C tests smooth.

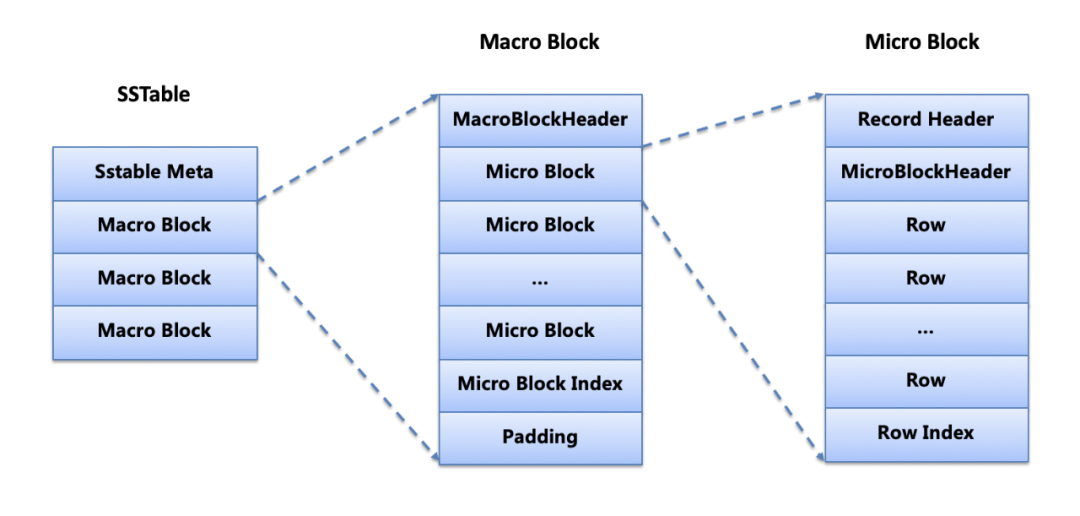

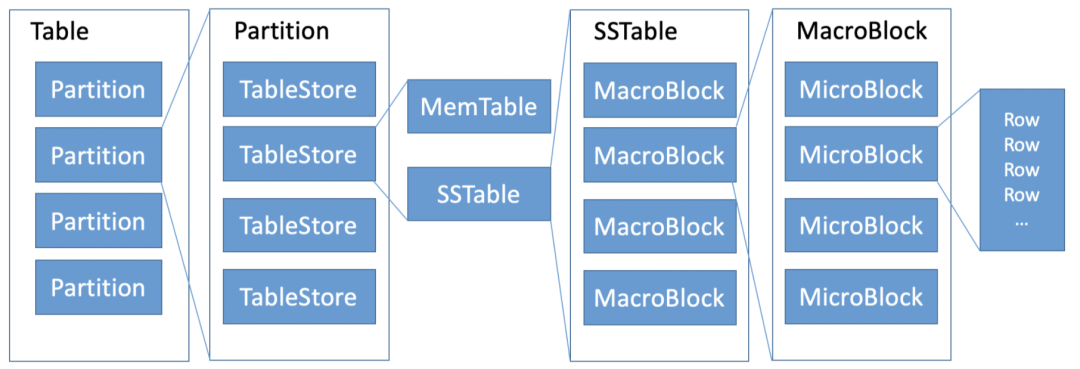

SSTable stores data in an order of primary keys. The data is divided into blocks of 2 MB, which are called macroblocks.

Each macroblock covers one range of primary keys. A macroblock is divided into multiple micro blocks so that reading a single row does not require loading the whole macroblock. The size of a micro block is generally 16 KB. When a micro block reaches 16 KB during merging, the encoding rules are chosen based on the characteristics of the row data. The data is then encoded, and the checksum is calculated according to the selected encoding rules. A micro block is the smallest unit of read I/O.
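
The two-level layout can be illustrated with a small lookup sketch. It assumes a hypothetical in-memory layout (an SSTable is a list of macroblocks, a macroblock is a list of micro blocks, a micro block is a sorted list of `(key, row)` pairs) and uses tiny block sizes counted in rows instead of 2 MB and 16 KB. The function names are illustrative only.

```python
import bisect


def build_sstable(rows, rows_per_micro=2, micros_per_macro=2):
    """Split key-sorted rows into micro blocks, then group micro blocks into macroblocks."""
    rows = sorted(rows)
    micros = [rows[i:i + rows_per_micro] for i in range(0, len(rows), rows_per_micro)]
    return [micros[i:i + micros_per_macro] for i in range(0, len(micros), micros_per_macro)]


def sstable_get(sstable, key):
    """Locate one row by narrowing to a macroblock, then to a single micro block."""
    # Level 1: the last key of each macroblock bounds its primary key range.
    macro_end_keys = [macro[-1][-1][0] for macro in sstable]
    m = bisect.bisect_left(macro_end_keys, key)
    if m == len(sstable):
        return None  # key is larger than every stored key
    macro = sstable[m]

    # Level 2: the micro block index inside the macroblock.
    micro_end_keys = [micro[-1][0] for micro in macro]
    micro = macro[bisect.bisect_left(micro_end_keys, key)]

    # Only this one micro block would be read from disk.
    keys = [k for k, _ in micro]
    j = bisect.bisect_left(keys, key)
    if j < len(keys) and keys[j] == key:
        return micro[j][1]
    return None
```

For example, with nine rows keyed 1 to 9 the sketch builds three macroblocks, and a lookup of key 5 inspects only the micro block holding keys 5 and 6.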

The micro block of OceanBase becomes variable-length data blocks after compression and causes little waste of space. In contrast, the micro block of a traditional relational database is a fixed-length data block. After compression, it inevitably leaves blanks. This means a waste of more space and an impact on the compression ratio.

With the same block size (16KB), compression algorithm, and data, it requires less space in OceanBase than in a traditional relational database.

Macroblocks are the basic unit for merging. SSTable iterates based on macroblocks while MemTable iterates based on rows. If the row of MemTable is not within the data range of a macroblock, the new version of SSTable directly references the macroblock. If updated data appears within the data range of a macroblock, you need to open the macroblock, parse the indexes for micro blocks, and iterate all micro blocks.

You need to load row indexes for each micro block, parse all rows, and merge them with MemTable. If the size of appended rows reaches 16KB, you need to construct, encode, and compress a new micro block and then calculate the checksum. You can directly copy unmodified micro blocks to a new macroblock without parsing their content.
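
The macroblock reuse described above can be sketched as follows. This is a simplified illustration under stated assumptions: a macroblock is a non-empty sorted list of `(key, value)` pairs, each macroblock is taken to cover the keys up to its last key (the final one also absorbs larger keys), and reuse is shown only at macroblock granularity, not at micro block granularity. The function name `merge_macroblocks` is hypothetical.

```python
import bisect


def merge_macroblocks(macroblocks, memtable):
    """Merge MemTable rows into an SSTable, reusing macroblocks that no update touches.

    Returns (new_macroblocks, reused_count).
    """
    end_keys = [block[-1][0] for block in macroblocks]

    # Route each updated row to the macroblock whose key range contains it.
    touched = {}
    for key, value in memtable.items():
        i = min(bisect.bisect_left(end_keys, key), len(macroblocks) - 1)
        touched.setdefault(i, {})[key] = value

    new_blocks, reused = [], 0
    for i, block in enumerate(macroblocks):
        if i not in touched:
            new_blocks.append(block)  # referenced as-is, never opened or rewritten
            reused += 1
        else:
            rows = dict(block)        # open the macroblock and parse its rows
            rows.update(touched[i])   # apply the newer MemTable rows
            new_blocks.append(sorted(rows.items()))
    return new_blocks, reused
```

The more localized the updates are, the more macroblocks are carried over by reference, which is what keeps the I/O cost of a merge below a full rewrite.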

OceanBase emphasizes data quality. When merging, it verifies data in two aspects:

① It verifies and ensures the consistency of business data among multiple copies of the same pieces of data.

② It verifies and ensures the consistency of data between the main table and index table.

OceanBase treats data verification as a firewall: it ensures the data delivered to the business is correct, and that any problem is detected before customers complain.

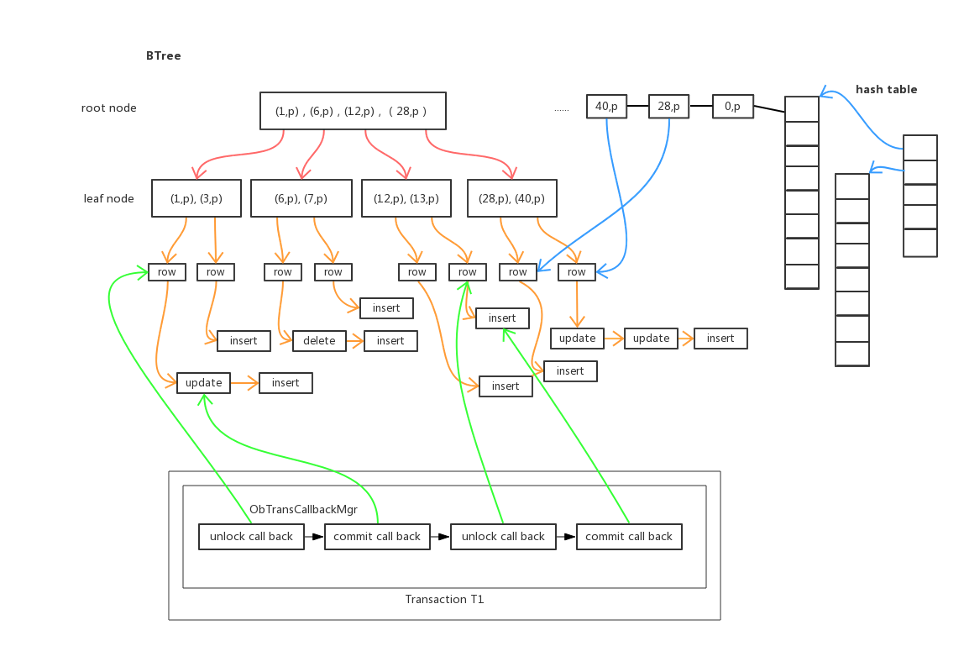

MemTable maintains historical transactions in the memory. The operations specified in historical transactions for each row are organized from new operations to old ones into operation chains for rows. When a new transaction is committed, new operations for rows are added to the top of the operation chains.

If too many historical transactions are stored in an operation chain, read performance suffers. In this case, a compaction operation is triggered to merge the historical transactions into a new operation chain for the row. The compaction operation does not delete the earlier operation chain of the row.
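
A row operation chain with compaction can be sketched like this. It is a minimal model, not the actual ObMvccRow implementation: the names `Node` and `RowChain`, the use of integer commit versions, and the `is_full` flag marking a compacted node are assumptions, and deletes are not modeled.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    version: int           # commit version of the transaction
    changes: dict          # columns written by that transaction
    is_full: bool = False  # True for a compacted node holding the whole row


@dataclass
class RowChain:
    ops: list = field(default_factory=list)  # newest node first

    def commit(self, version: int, changes: dict) -> None:
        self.ops.insert(0, Node(version, dict(changes)))  # add to the top of the chain

    def read(self, snapshot: int) -> dict:
        """Snapshot read: combine the nodes visible at `snapshot`."""
        visible = []
        for node in self.ops:  # walk from newest to oldest
            if node.version > snapshot:
                continue       # committed after the snapshot: invisible
            visible.append(node)
            if node.is_full:   # a compacted node already contains older changes
                break
        row = {}
        for node in reversed(visible):  # apply oldest first
            row.update(node.changes)
        return row

    def compact(self) -> None:
        """Put a full-row node on top of the chain; older nodes stay in place."""
        if self.ops:
            newest = self.ops[0].version
            self.ops.insert(0, Node(newest, self.read(newest), is_full=True))
```

After `compact()`, a read at the newest version stops at the first node, while a read at an older snapshot skips the compacted node and still walks the earlier nodes, which is why they must not be deleted.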

OceanBase implements concurrency control based on the MVCC mechanism above, so read transactions and write transactions do not affect each other:

① If the read request only involves one partition or multiple partitions of a single OBServer, perform a snapshot read.

② If multiple partitions of multiple OBServers are involved, perform distributed snapshot read.

MemTable features a dual-index structure. One index is a Hashtable, which is used for fast key-value (point) queries. The other is a BTree, which is used for range scans. When data is inserted, updated, or deleted, it is written into a memory block, and pointers to that data are stored in both the Hashtable and the BTree.

The consistency between two indexes is automatically maintained each time a transaction is executed. Both data structures have their advantages and disadvantages:

① When inserting a row of data, you need to check whether the row of data already exists. In terms of checking conflicts, Hashtable is faster than BTree.

② When a transaction inserts or updates a row of data, it needs to find this row and lock it to prevent other transactions from modifying it. For looking up the row lock in ObMvccRow, Hashtable is faster than BTree.

③ In range search, since the data in the nodes of BTree is in order, the locality of the search is better. However, the data in Hashtable is not in order, so the entire Hashtable needs to be searched.
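
The division of labor between the two indexes can be sketched as follows. This is an illustration only: a Python `dict` stands in for the Hashtable, and a pair of parallel sorted lists stands in for the BTree. The class name `DualIndexMemTable` and its methods are hypothetical.

```python
import bisect


class DualIndexMemTable:
    """Rows are stored once; both indexes hold references to the same row objects."""

    def __init__(self):
        self.hash_index = {}   # key -> row, for point lookups and conflict checks
        self.sorted_keys = []  # ordered keys, stand-in for the BTree
        self.sorted_rows = []  # row references, parallel to sorted_keys

    def put(self, key, value) -> None:
        row = self.hash_index.get(key)  # existence check via the hash index
        if row is None:
            row = {"value": value}
            self.hash_index[key] = row
            pos = bisect.bisect_left(self.sorted_keys, key)
            self.sorted_keys.insert(pos, key)  # keep the ordered index in sync
            self.sorted_rows.insert(pos, row)
        else:
            row["value"] = value  # both indexes see the update through the shared row

    def get(self, key):
        row = self.hash_index.get(key)
        return None if row is None else row["value"]

    def scan(self, start, end):
        """Range scan over [start, end] using only the ordered index."""
        lo = bisect.bisect_left(self.sorted_keys, start)
        hi = bisect.bisect_right(self.sorted_keys, end)
        return [(k, r["value"]) for k, r in zip(self.sorted_keys[lo:hi], self.sorted_rows[lo:hi])]
```

Point operations never touch the ordered structure on the read side, and range scans never touch the hash index, which mirrors the trade-offs listed above.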

OceanBase is a quasi-in-memory database. Most hot data is in memory, organized by row, and is written to disk in MB-sized units when memory runs short. This avoids the write amplification of a traditional relational database, which is organized in pages, and significantly improves performance at the architecture level.

OceanBase also introduces optimization technologies for in-memory databases (including multi-version concurrency and lock-free data structures) to achieve the lowest latency and highest performance.

On the same hardware, OceanBase significantly outperforms a traditional relational database. In addition, strong synchronization across multiple copies makes it possible to use ordinary PC servers, which significantly reduces the hardware cost of the database.

As mentioned above, due to the longer read path, a multi-layer cache mechanism is introduced.

The cache is mainly used to cache frequently accessed data in SSTable. It falls into several categories, such as block cache for caching SSTable data, block index cache for caching micro block indexes, row cache for caching data rows, and bloomfilter cache for caching bloomfilter to filter empty queries quickly.

SSTable is read-only outside of merging, so you do not need to worry about cache invalidation.

When a read request arrives, the cache is consulted first. On a cache miss, disk I/O is issued to read the micro block data. Logical reads are calculated from the number of cache hits and disk reads, and they are used to evaluate the SQL execution plan. For a single-row operation, the row cache serves the request if the row exists, and the bloomfilter cache filters the request out if the row does not exist.

So, cache search is only needed once in baseline data and causes no additional overheads for most of the operations in a single row.
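
The single-row read path through the caches can be sketched as below. This is a simplified model under stated assumptions: a `dict` stands in for micro blocks on disk, the block cache and block index cache are omitted, and the small `BloomFilter` class (built on SHA-256 from the standard library) is illustrative rather than OceanBase's implementation.

```python
import hashlib


class BloomFilter:
    """Small Bloom filter: may report false positives, never false negatives."""

    def __init__(self, bits: int = 1024, hashes: int = 3):
        self.bits, self.hashes = bits, hashes
        self.array = bytearray(bits)

    def _positions(self, key: str):
        for i in range(self.hashes):
            digest = hashlib.sha256(f"{i}:{key}".encode()).digest()
            yield int.from_bytes(digest[:8], "big") % self.bits

    def add(self, key: str) -> None:
        for pos in self._positions(key):
            self.array[pos] = 1

    def might_contain(self, key: str) -> bool:
        return all(self.array[pos] for pos in self._positions(key))


class CachedSSTable:
    def __init__(self, rows: dict):
        self.disk = dict(rows)  # stand-in for micro blocks on disk
        self.row_cache = {}
        self.bloom = BloomFilter()
        for key in self.disk:
            self.bloom.add(key)
        self.disk_reads = 0

    def get(self, key: str):
        if key in self.row_cache:              # existing hot row: row cache hit
            return self.row_cache[key]
        if not self.bloom.might_contain(key):  # row certainly absent: no disk I/O
            return None
        self.disk_reads += 1                   # cache miss: read from disk
        row = self.disk.get(key)
        if row is not None:
            self.row_cache[key] = row
        return row
```

A repeated read of an existing row costs one disk read in total, and most reads of missing rows are answered by the filter alone.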

Two modules in OceanBase occupy a large amount of memory:

① MemTable requires non-dynamically scalable memory.

② Cache requires dynamically scalable memory.

Like the Linux cache policy, the cache in OceanBase uses as much memory as possible, taking all the memory that MemTable does not use. Therefore, OceanBase designs a prioritized control strategy and an intelligent elimination mechanism for the cache.

Similar to Oracle's AMM, OceanBase designs a unified cache framework. All different types of caches for different tenants are managed by the framework. A set of priorities is configured for different types of caches. (For example, the block index cache has a higher priority. It stays in memory regularly and is rarely eliminated. Row cache is more efficient than block cache, so the priority is higher.)

Different types of caches compete with each other for memory according to their priorities and data access frequency. Priorities generally do not need to be configured, but in special scenarios they can be controlled through parameters. If the write speed is high and MemTable occupies a large amount of memory, cache memory is gradually released to make room for MemTable.

Cache memory is eliminated in units of 2 MB. A score is calculated for each 2 MB memory block based on the access frequency of the elements it holds, so more frequently accessed memory blocks get higher scores. A background thread periodically sorts the scores of all 2 MB memory blocks and eliminates the blocks with the lowest scores.
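
The score-based elimination can be sketched as follows. The source does not give the scoring formula, so summing per-element access counts is an assumption, as are the function names. Cache priorities and tenant limits are left out of this sketch.

```python
BLOCK_SIZE = 2 * 1024 * 1024  # elimination unit: one 2 MB memory block


def block_score(access_counts) -> int:
    """Assumed scoring rule: a block's score is the sum of its elements' access counts."""
    return sum(access_counts)


def pick_victims(block_scores: dict, bytes_to_free: int) -> list:
    """Return the ids of the lowest-scoring blocks needed to free `bytes_to_free`."""
    blocks_needed = -(-bytes_to_free // BLOCK_SIZE)  # ceiling division
    ranked = sorted(block_scores, key=lambda block_id: (block_scores[block_id], block_id))
    return ranked[:blocks_needed]
```

For instance, freeing 3 MB requires two whole blocks, so the two coldest blocks are chosen.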

During elimination, the upper and lower limits of memory of each tenant are considered to control the usage of memory of caches in each tenant.

As described above, OceanBase's storage engine closely resembles the standalone engine of a traditional relational database. All the data (baseline data + incremental data + cached data + transaction logs) in a data partition is placed in one OBServer.

Therefore, read and write operations for a data partition do not cross machines (except for transaction logs, which are synchronized to a majority of copies using the Paxos protocol). In addition, the quasi-in-memory design and an excellent cache mechanism provide ultimate OLTP performance.

OceanBase handles data partitioning in two ways:

① It introduces the concept of a data partition table (Partition) in traditional relational databases.

② It is compatible with the partition table syntax of a traditional relational database and supports hash partition and range partition.

It supports a two-level partition mechanism. For example, in a historical database scenario where a single table holds a large amount of data, the table can be partitioned at two levels: level 1 by user and level 2 by time. This solves the scalability problem posed by large users, and expired data can be deleted by dropping partitions.

It also supports partitioning by generated columns. A generated column is one whose value is computed from other columns. This meets the need to process certain fields before using them as partition keys.

Queries on partitioned tables are optimized as follows:

① For a query that contains partition keys or partition expressions, the partitions that the query touches can be calculated exactly. This is called partition pruning.

② For multi-partition queries, technologies (such as parallel query among partitions, sorting elimination among partitions, and partition-wise join) can be used to make query performance higher.
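
Partition pruning for a two-level scheme can be sketched like this. The layout is hypothetical: level 1 is a hash partition by user ID (plain modulo is used here as a stand-in for the hash function), and level 2 is a range partition by date with "less than" upper bounds plus a final catch-all partition. All constants and names are assumptions for illustration.

```python
import bisect

USER_PARTS = 4  # hypothetical number of level-1 hash partitions
# Hypothetical level-2 range bounds: partition i holds days < TIME_BOUNDS[i];
# one extra partition at the end holds everything else.
TIME_BOUNDS = [20220101, 20220401, 20220701]


def prune(user_id=None, day=None):
    """Return the (level-1, level-2) partitions that a query must scan."""
    if user_id is None:
        level1 = range(USER_PARTS)  # no predicate on the key: keep all
    else:
        level1 = [user_id % USER_PARTS]
    if day is None:
        level2 = range(len(TIME_BOUNDS) + 1)
    else:
        level2 = [bisect.bisect_right(TIME_BOUNDS, day)]
    return [(a, b) for a in level1 for b in level2]
```

A query that fixes both keys touches one of the 16 partitions, a query that fixes only the user touches 4, and a query with neither predicate must scan all 16.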

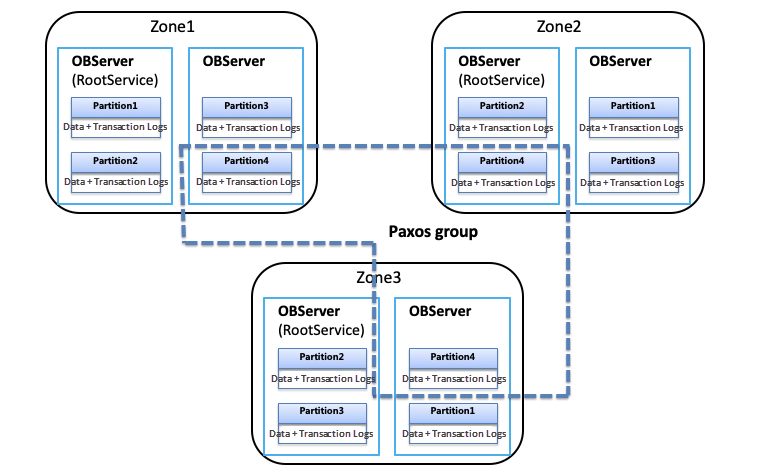

The copies of the same data partition constitute a Paxos Group. One of the copies is automatically selected as a leader, and the other copies become followers. All subsequent read and write requests for this data partition are automatically routed to the corresponding leader for service.

OceanBase adopts a shared-nothing distributed architecture. Under this architecture, each OBServer is equivalent and manages different data partitions.

① All read and write operations for a data partition are completed in the OBServer where it is located. This is a single partition transaction.

② Transactions for multiple data partitions are executed on multiple OBServers in a way of a two-phase commit. This is a distributed transaction.

Multi-partition transactions on a single server still require a two-phase commit and are optimized for the single server. Since distributed transactions increase transaction latency, table groups can be used to gather multiple tables that are frequently accessed together. Partitions of the same table group have the same OBServer distribution, and the leader is located on the same machine. This can help avoid cross-machine transactions.
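
The choice between the commit paths described above can be summarized in a short sketch. The function name, the string labels, and the `leader_server` mapping are hypothetical. The sketch only classifies a transaction and does not implement two-phase commit itself.

```python
def commit_path(partitions: set, leader_server: dict) -> str:
    """Classify a transaction by the partitions it touches and where their leaders live."""
    if len(partitions) == 1:
        return "single-partition"
    servers = {leader_server[p] for p in partitions}
    if len(servers) == 1:
        return "two-phase commit, single-server optimized"
    return "two-phase commit, distributed"


# Hypothetical placement: orders and order_items share a table group, so their
# partition leaders sit on the same OBServer; users does not.
leaders = {"orders_p0": "observer1", "order_items_p0": "observer1", "users_p0": "observer2"}
```

With this placement, a transaction over `orders_p0` and `order_items_p0` stays on one machine, while adding `users_p0` makes it a cross-machine distributed transaction.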

A traditional relational database also supports partition, but all partitions are still stored on the same machine.

OceanBase can distribute all partitions to different physical machines, utilizing the advantages of the distributed architecture and completely solving the problem with scalability.

When storage capacity or service capacity is insufficient, more data partitions can be added and distributed to more OBServers, improving overall read and write performance through online linear scaling. When the system has more capacity than it needs, machines can be taken offline to reduce costs.

Data partitions in OceanBase have multiple copies (such as three in the same-city three-copy deployment architecture and five in the three-place five-copy deployment architecture). These copies are distributed across multiple OBServers.

When a transaction commits, the Paxos protocol is used to achieve a majority commit among multiple copies, thus maintaining consistency among copies. When a single OBServer goes down, data integrity is preserved and data access is restored within a short period, meeting an SLA of RPO=0 and RTO<30s.
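
The majority rule behind this can be written down in a few lines. This sketch shows only the quorum arithmetic, not the Paxos protocol itself, and the function names are illustrative.

```python
def majority(replica_count: int) -> int:
    """Smallest number of copies that forms a majority."""
    return replica_count // 2 + 1


def is_committed(acks: dict) -> bool:
    """`acks` maps each copy to True if it has persisted the transaction log."""
    return sum(acks.values()) >= majority(len(acks))
```

With three copies, a commit survives one unavailable copy; with five copies, it survives two. Because every committed log entry is already on a majority, losing a single OBServer loses no committed data.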

Users do not need to care about where data is physically located. The client locates the data according to the user request and sends read and write requests to the leader for processing. To the user, the system still looks like a standalone database.

Based on the multi-copy architecture described above, rotation merging is introduced to separate user request traffic from the merge process.

For example, suppose each partition in an OceanBase cluster has three copies, distributed across three zones (1, 2, and 3). When RootService drives merging (for example, merging Zone 3 first), it moves user traffic to Zones 1 and 2 by switching the leaders of all partitions to those zones. After Zone 3 is merged, the traffic of Zone 2 is moved away and Zone 2 is merged, and then the same is done for Zone 1. After all three copies are merged, the initial leader distribution is restored.
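
The leader switching in this example can be simulated with a short sketch. It is not RootService logic: the function name, the round-robin choice of a target zone, and the dictionary of partition leaders are assumptions made for illustration.

```python
def rotation_merge(leaders: dict, zones: list, merge_order: list) -> list:
    """Simulate rotation merging and return (merged_zone, leader_map) for each step.

    `leaders` maps a partition to the zone holding its leader and is restored
    to its initial state once every zone has been merged.
    """
    initial = dict(leaders)
    steps = []
    for zone in merge_order:
        others = [z for z in zones if z != zone]
        # Move every leader out of the zone that is about to merge.
        for i, (partition, leader_zone) in enumerate(sorted(leaders.items())):
            if leader_zone == zone:
                leaders[partition] = others[i % len(others)]
        steps.append((zone, dict(leaders)))  # the zone merges with no user traffic
    leaders.update(initial)                  # restore the initial leader distribution
    return steps
```

At every step, the zone being merged holds no leaders, so user requests are served by the other zones while it carries the I/O and CPU load of the merge.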

The multi-copy architecture brings three relatively large enhancements in architecture:

A complete OceanBase storage engine combines a standalone engine based on LSMTree with a distributed architecture that strongly synchronizes multiple copies. This gives it both an upper layer that behaves like a relational database such as Oracle and a distributed bottom layer similar to Spanner.

This is the core feature of OceanBase. It can avoid shortcomings of LSMTree and bring high performance and a smooth experience. It also supports the core OLTP business. Meanwhile, it shares advantages of a distributed architecture, including continuous availability, linear scalability, automatic fault tolerance, and low cost.
