On March 24, 2026, a carefully planned supply-chain poisoning attack struck LiteLLM, one of the world’s most popular AI agent frameworks. Within just a few hours, this open-source project, with more than 95 million monthly downloads, became a tool for stealing enterprises’ core secrets. API keys, SSH private keys, cloud service credentials, database configurations: tens of thousands of sensitive records were encrypted and exfiltrated without users’ knowledge.

What makes this incident thought-provoking is not only the attack technique itself, but a fundamental question: why have so many companies entrusted the security fate of their large-model API keys to an open-source third-party proxy?

This attack was not an isolated poisoning incident. Security community tracking found that the threat actor known as TeamPCP carried out a chained series of supply-chain attacks: they first compromised the CI/CD pipeline of the open-source security scanning tool Trivy, then obtained the PyPI release credentials of LiteLLM maintainers from it, and finally bypassed the normal code review process to implant heavily obfuscated malicious code directly into LiteLLM release packages.

1. Silent execution via a .pth file

The attackers embedded a malicious file named litellm_init.pth in the release package. Python processes .pth files in site-packages every time the interpreter starts, and any line in them that begins with import is executed, with no explicit import of the package required. Once developers had installed the affected version via pip install, the malicious code ran quietly in the background on every Python startup; users did not even need to import litellm in their code. In v1.82.7, the attackers also embedded the malicious payload in proxy/proxy_server.py, making detection even harder.
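To make the .pth mechanism concrete, here is a minimal sketch that mirrors how Python's `site` module treats each line of a .pth file: comments and blank lines are skipped, lines beginning with `import` followed by a space or tab are executed, and everything else is treated as a directory to add to `sys.path`. The function name and the sample content are illustrative assumptions, not taken from the malicious package.

```python
def executable_pth_lines(pth_text: str) -> list[str]:
    """Return the lines of a .pth file that the site module would execute."""
    executed = []
    for line in pth_text.splitlines():
        # The site module skips comment lines and blank lines.
        if line.startswith("#") or line.strip() == "":
            continue
        # Lines starting with "import" plus a space or tab are passed to exec();
        # any other line is treated as a directory to append to sys.path.
        if line.startswith(("import ", "import\t")):
            executed.append(line)
    return executed


# Harmless sample content (hypothetical), showing one path line and one executed line.
sample = "# comment\n../vendored\nimport os; os.environ.setdefault('DEMO_FLAG', '1')\n"
print(executable_pth_lines(sample))
```

Running a check like this over the .pth files in a site-packages directory shows which of them carry executable lines and therefore deserve a closer look.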

2. Comprehensive theft of sensitive data

Once activated, the malicious code immediately scanned and stole the following critical information:

.gitconfig and credential information containing repository access credentials

3. Hybrid encryption exfiltration to evade detection

The stolen data was first symmetrically encrypted with AES-256-CBC (combined with PBKDF2 key derivation), then the session key was encrypted with an RSA-4096 public key, and finally everything was sent over HTTPS to models.litellm.cloud, a domain controlled by the attacker. This domain closely resembles the official LiteLLM domain and is highly deceptive. The hybrid encryption scheme also means that even if the traffic is intercepted, the stolen content cannot be read without the attacker’s private key.

To understand why this attack was so devastating, we must first understand the role LiteLLM plays in the AI stack.

LiteLLM is essentially a unified proxy layer for large-model APIs. It helps developers use a single interface to call services from OpenAI, Anthropic, Azure, Google, and other model providers, eliminating the tedious work of integrating them one by one. That is exactly why it has more than 95 million monthly downloads—almost every enterprise that needs to call multiple large-model APIs will consider using it.

But that is also where the problem lies. As a proxy layer, LiteLLM naturally needs access to all large-model API keys. Developers typically configure each provider’s API key in environment variables or .env files, and LiteLLM reads them and forwards requests on their behalf. Once malicious code is implanted in LiteLLM itself, those keys might as well be stored in an unlocked safe: the attacker can take everything with ease.
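The exposure described above follows from how process environments work: every package loaded into a Python process sees the same `os.environ` mapping as the application itself. The sketch below lists the variable names that look like credentials. The name patterns and the demo values are assumptions chosen for illustration.

```python
import os
import re

# Hypothetical name patterns; real deployments may name their secrets differently.
SECRET_NAME = re.compile(r"(API_KEY|TOKEN|SECRET|PASSWORD)", re.IGNORECASE)


def exposed_secret_names(environ=os.environ) -> list[str]:
    """List environment variable names that look like credentials.

    Any code running in this process can read the same mapping.
    """
    return sorted(name for name in environ if SECRET_NAME.search(name))


# Demo mapping with placeholder values (not real keys).
demo_env = {"OPENAI_API_KEY": "sk-demo", "ANTHROPIC_API_KEY": "demo", "PATH": "/usr/bin"}
print(exposed_secret_names(demo_env))
```

Calling `exposed_secret_names()` with no argument on a developer machine gives a rough inventory of what a compromised dependency could have read.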

This exposes a fatal architectural flaw: the responsibilities of key management and API proxying are handed to a locally deployed open-source component, whose security depends entirely on whether the upstream supply chain is trustworthy.

Alibaba Cloud AI Gateway also provides the core capabilities of LiteLLM—unified multi-model proxying, protocol adaptation, load balancing, and fallback for failures—but delivers them as a cloud service, fundamentally changing the security model. More importantly, AI Gateway is deeply integrated with Alibaba Cloud AI Security Guardrails, adding real-time security protection to every AI interaction flowing through the gateway.

This is the most fundamental difference between AI Gateway and LiteLLM.

When using LiteLLM, developers must configure the API keys of major model providers in local environment variables or on the server, and LiteLLM reads them and forwards requests on their behalf. If LiteLLM is poisoned, those keys are immediately exposed.

AI Gateway works very differently: backend model service API keys are securely stored in AI Gateway and can be integrated with KMS (Key Management Service) for encryption protection, completely transparent to the client. Clients access the service through the authentication mechanism provided by AI Gateway (such as JWT tokens), and never need to hold any static keys from large-model service providers. Even if the client environment is compromised, the attacker cannot obtain any valuable model service keys.
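As a rough illustration of the client side of this model, the sketch below builds (but does not send) a request that carries only a gateway-issued token. The endpoint URL, the OpenAI-style request body, and the bearer-token header are assumptions for illustration; the actual request format is defined by the AI Gateway documentation.

```python
import json
import urllib.request

# Hypothetical endpoint; substitute the address of your own gateway instance.
GATEWAY_URL = "https://gateway.example.com/v1/chat/completions"


def build_gateway_request(token: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat request authenticated with a gateway token."""
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return urllib.request.Request(
        GATEWAY_URL,
        data=body,
        method="POST",
        headers={
            # A token issued for the gateway, not a model provider's API key.
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
    )


req = build_gateway_request("example.jwt.token", "example-model", "Hello")
print(req.get_method(), req.full_url)
```

The point of the sketch is what is absent: no provider API key appears anywhere in the client's code or environment.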

In the LiteLLM model, keys are scattered across the environment variables of every developer machine and server; in the AI Gateway model, keys are centrally hosted in the cloud, with zero exposure to clients. This fundamentally eliminates the attack surface for API key theft in the LiteLLM incident.

The attack path in the LiteLLM incident was: developer pip install litellm → malicious litellm_init.pth file executes silently → data is exfiltrated. The prerequisite for this attack chain is that developers must install a third-party package locally.

With AI Gateway, developers call the AI Gateway API endpoint directly, just like calling any standard HTTPS API. There is no need to install extra proxy packages or run third-party proxy code locally. The supply-chain poisoning attack loses its delivery vehicle: you cannot poison a local dependency that does not exist.

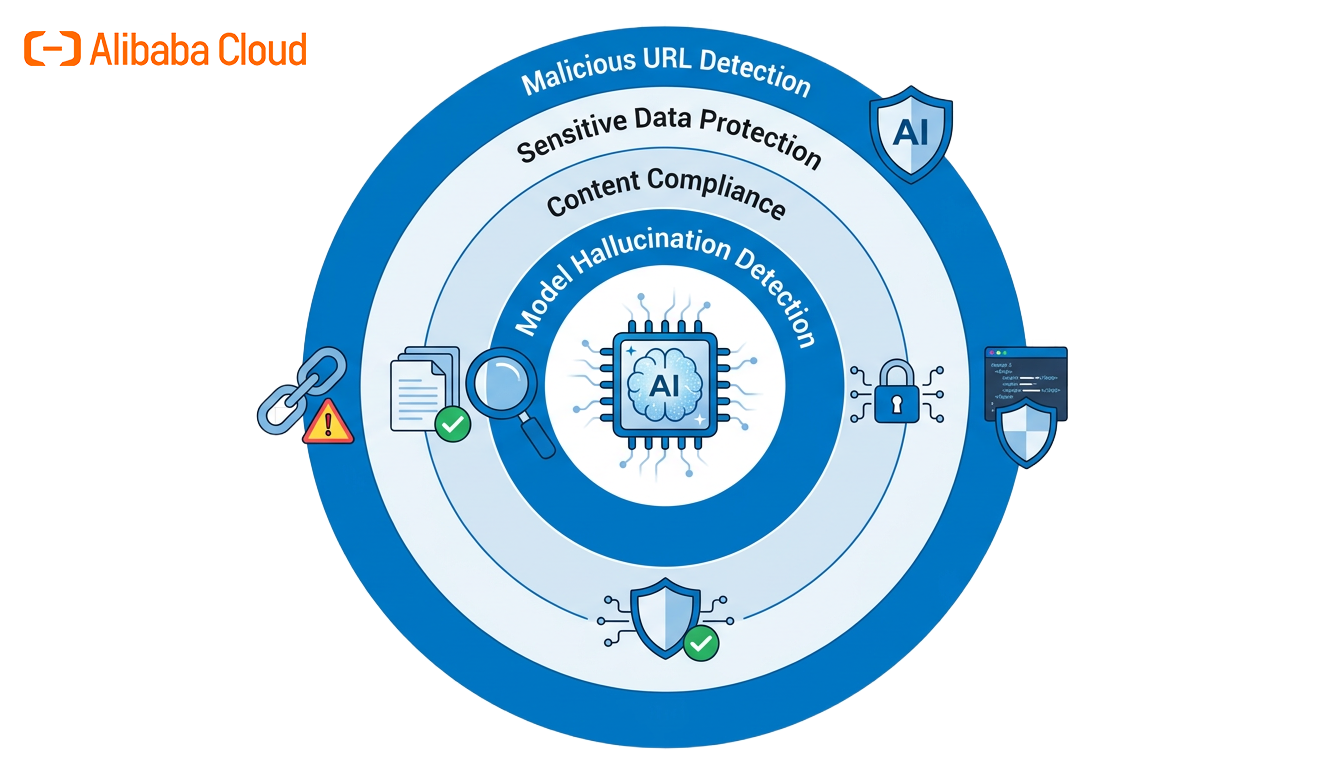

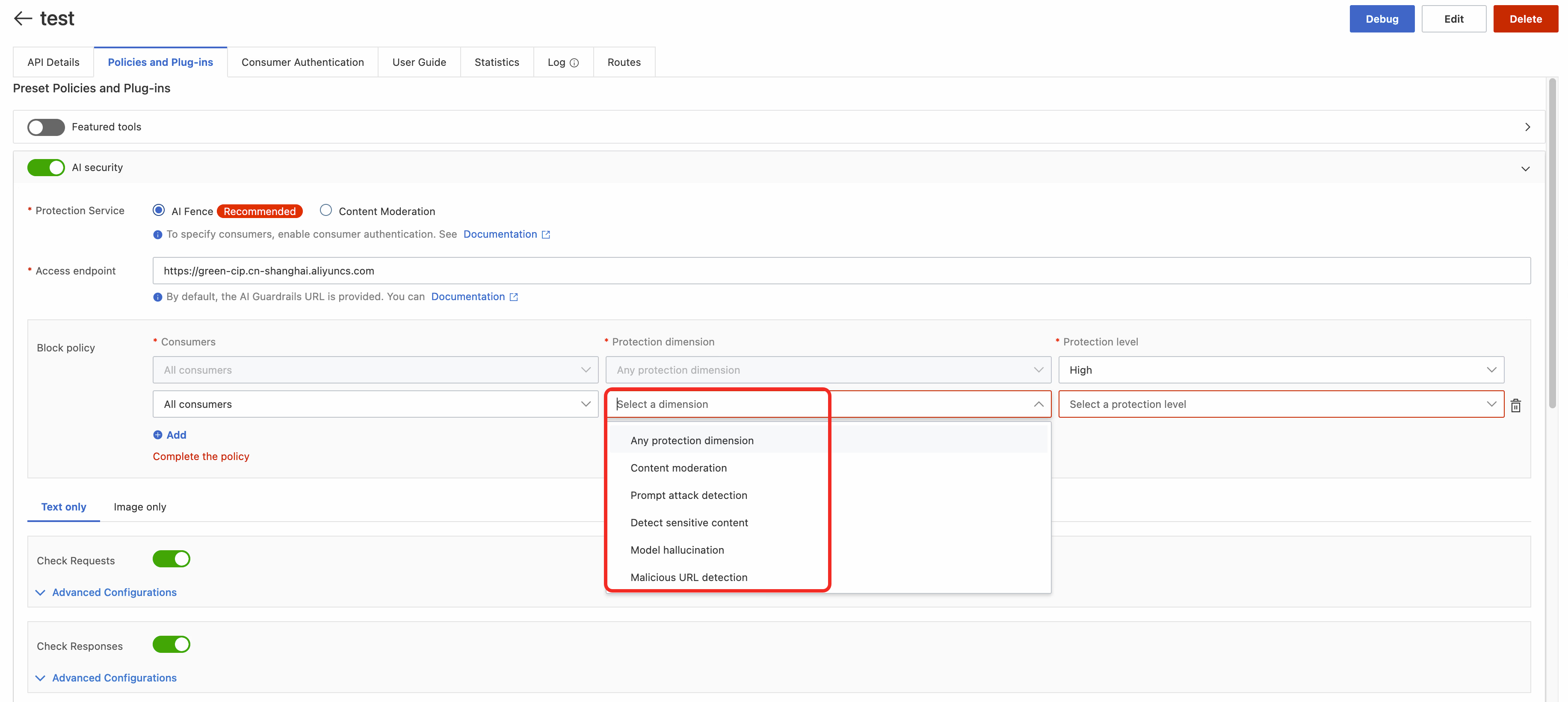

The two architectural advantages above already eliminate the possibility of LiteLLM-style supply chain attacks at the root. But the security threats facing AI applications extend far beyond supply-chain poisoning. Alibaba Cloud AI Gateway is deeply integrated with AI Security Guardrails—a professional AI security product built by the Alibaba Cloud Content Security team—to provide comprehensive defense in depth for AI applications.

After connecting to AI Gateway, users only need to enable AI Security Guardrails policies in the console with one click to automatically add the following security inspection capabilities to all AI traffic passing through the gateway, with no additional integration or deployment required:

Sensitive Data Protection

AI Security Guardrails scans every request and response flowing through the gateway in real time, automatically identifying and blocking abnormal transmission of sensitive data such as API keys, database credentials, and personally identifiable information (PII). Even if an insider or malicious code attempts to exfiltrate sensitive information through the AI request channel, the guardrails will intercept it at the exit.
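The general idea of scanning traffic for credential-shaped strings can be sketched with a few regular expressions. This is a simplified illustration, not how AI Security Guardrails is implemented; the pattern set and function name are assumptions, and a production detector would cover far more formats.

```python
import re

# Illustrative patterns only; real detection needs much broader coverage.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "sk_style_api_key": re.compile(r"\bsk-[A-Za-z0-9_-]{20,}"),
}


def find_sensitive(text: str) -> list[str]:
    """Return the names of all patterns that match somewhere in the text."""
    return sorted(name for name, pattern in PATTERNS.items() if pattern.search(text))


# AKIAIOSFODNN7EXAMPLE is the placeholder key ID used in AWS documentation.
print(find_sensitive("please debug this: key=AKIAIOSFODNN7EXAMPLE"))
print(find_sensitive("Summarize this paragraph about gateways."))
```

A gateway-side check of this kind could block or flag a request before it leaves the network, which is the role the guardrails play at the exit.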

Prompt Injection Defense

Using AI semantic understanding, it precisely identifies and blocks direct injection, indirect injection, jailbreak prompts, and other attack techniques, preventing attackers from inducing the model to perform unintended actions through malicious prompts and protecting business logic from being broken.

Malicious URL and Content Detection

URLs and content in model inputs and outputs are inspected in real time to identify phishing links, malware distribution addresses, and other threats, preventing AI applications from becoming channels for malicious content distribution.

Content Compliance Detection

Based on deep AI semantic understanding, this detects inappropriate, violent/extremist, and sensitive-information content with precision, including metaphorical expressions and highly adversarial risks, ensuring that AI application outputs remain compliant at all times.

Model Hallucination Detection and Digital Watermarking

Identifies and marks inaccurate information generated by models, helping enterprises control AI output quality; the digital watermark feature ensures the traceability of AI-generated content, meeting regulatory and audit requirements.

AI Security Guardrails is an independent professional security product that is also deeply integrated with AI Gateway. By connecting through AI Gateway, users do not need to deploy the guardrails service separately; it is ready to use out of the box. For scenarios that require more flexibility, AI Security Guardrails can also be integrated directly through its own SDK/API.

AI Gateway provides complete traffic monitoring and log auditing capabilities. The source, destination, content, and latency of every API call are fully recorded. When abnormal call patterns occur—such as a large number of unusual requests in a short period or anomalous data carried in request content—the operations team can receive alerts immediately and take action.
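One simple way to flag "a large number of unusual requests in a short period" is a sliding-window count over call timestamps. The sketch below is an illustration of that idea under assumed window and threshold values; it does not describe AI Gateway's actual alerting logic.

```python
from collections import deque


def burst_alerts(timestamps, window_seconds=60, threshold=100):
    """Return timestamps at which the call count in the trailing window exceeds threshold."""
    window = deque()
    alerts = []
    for ts in sorted(timestamps):
        window.append(ts)
        # Drop calls that have fallen out of the trailing window.
        while window[0] <= ts - window_seconds:
            window.popleft()
        if len(window) > threshold:
            alerts.append(ts)
    return alerts


steady = [i * 10 for i in range(30)]   # one call every 10 seconds
burst = list(range(1000, 1012))        # 12 calls within 12 seconds
print(burst_alerts(steady, window_seconds=60, threshold=10))
print(burst_alerts(burst, window_seconds=60, threshold=10))
```

Real thresholds would be derived from each caller's normal traffic rather than fixed constants.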

By contrast, with LiteLLM as a locally deployed open-source component, network observability depends entirely on whatever monitoring system the operations team has built. In this incident, the malicious code exfiltrated data to the disguised domain models.litellm.cloud, and many teams did not discover this abnormal connection at all before the incident became public.
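For teams that do build their own monitoring, an exact-match egress allowlist is one way a lookalike domain could be caught. The sketch below is a minimal illustration; the allowlist contents and function name are assumptions and would need to reflect the hosts your services are actually expected to call.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist; list the hosts your services are expected to reach.
ALLOWED_HOSTS = {"api.openai.com", "api.anthropic.com"}


def is_allowed(url: str, allowed_hosts=ALLOWED_HOSTS) -> bool:
    """Exact-match the hostname so that lookalike domains are rejected."""
    host = (urlsplit(url).hostname or "").lower()
    return host in allowed_hosts


print(is_allowed("https://api.openai.com/v1/chat/completions"))
print(is_allowed("https://models.litellm.cloud/"))
```

Exact matching matters here: substring or suffix checks are the kind of shortcut a deceptive domain name is designed to slip past.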

In addition to security advantages, AI Gateway also provides enterprise-grade capabilities that LiteLLM struggles to match:

| Dimension | LiteLLM (local deployment) | Alibaba Cloud AI Gateway |
|---|---|---|
| API Key Management | Scattered across environment variables/config files, readable locally | Centrally hosted in the cloud (supports KMS encryption), zero client exposure |
| Supply Chain Risk | Depends on pip installation and is affected by PyPI poisoning attacks | Cloud service access, no third-party package installation required |
| AI Security Protection | No built-in security inspection capabilities | Integrated AI Security Guardrails, one-click real-time detection |
| Traffic Monitoring | Must be built independently | End-to-end observability with anomaly alerts |
| High Availability | Requires self-managed operations | Serverless elastic scaling, automatic fallback |
| Compliance Assurance | None | Content compliance detection, sensitive data protection, digital watermarking |

If LiteLLM is deployed in your system, take the following actions immediately:

Check for the litellm_init.pth file in Python’s site-packages directory and delete it; check whether proxy/proxy_server.py has been tampered with

Check for connections to models.litellm.cloud
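The file check above can be scripted. The following is a minimal sketch that looks for a file named litellm_init.pth in the site-packages directories of the interpreter running it; the function names are illustrative, and each virtual environment or interpreter on a machine would need to be checked separately.

```python
import site
from pathlib import Path


def find_indicator_files(directories, filename="litellm_init.pth"):
    """Return paths of files named `filename` directly inside the given directories."""
    hits = []
    for directory in directories:
        candidate = Path(directory) / filename
        if candidate.is_file():
            hits.append(candidate)
    return hits


def default_site_dirs():
    """Site-packages directories of the interpreter running this script."""
    dirs = list(site.getsitepackages())
    dirs.append(site.getusersitepackages())
    return dirs


if __name__ == "__main__":
    for hit in find_indicator_files(default_site_dirs()):
        print(f"Found indicator file: {hit}")
```

Finding nothing with this script does not prove a machine was never affected, so credentials that were present on a host with an affected version installed should still be treated as exposed.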

The LiteLLM poisoning incident will not be the last attack targeting the AI supply chain. The TeamPCP organization demonstrated a worrying new trend through chain-style supply chain attacks (Trivy → LiteLLM)—attackers are no longer satisfied with poisoning a single project, but instead achieve "one catch, multiple gains" by compromising upstream dependencies. As large-model applications are rapidly rolled out, such attacks will only become more complex and more covert.

The biggest lesson from this incident is: AI application security cannot be guaranteed by simply "being careful with installation packages"; it must be treated as a core consideration from the architecture design stage. Hand key management over to professional cloud services, hand security inspection over to AI Security Guardrails, and hand traffic governance over to AI Gateway—let specialized infrastructure do specialized work.

Alibaba Cloud AI Gateway: https://www.alibabacloud.com/product/api-gateway

AI Security Guardrails: https://www.alibabacloud.com/product/ai_guardrails

AI security is not the destination, but a continuous journey. Choosing the right infrastructure means choosing the most solid starting point for that journey.

This article is based on public reports and analysis of the March 2026 LiteLLM supply-chain poisoning incident. To learn more about AI security solutions, please visit the Alibaba Cloud AI Gateway product page.

